Post Syndicated from Cristian Gavazzeni original https://aws.amazon.com/blogs/big-data/part-1-building-a-cost-efficient-petabyte-scale-lake-house-with-amazon-s3-lifecycle-rules-and-amazon-redshift-spectrum/

The continuous growth of data volumes combined with requirements to implement long-term retention (typically due to specific industry regulations) puts pressure on the storage costs of data warehouse solutions, even for cloud native data warehouse services such as Amazon Redshift. The introduction of the new Amazon Redshift RA3 node types helped in decoupling compute from storage growth. Integration points provided by Amazon Redshift Spectrum, Amazon Simple Storage Service (Amazon S3) storage classes, and other Amazon S3 features allow for compliance of retention policies while keeping costs under control.

An enterprise customer in Italy asked the AWS team to recommend best practices on how to implement a data journey solution for sales data; the objective of part 1 of this series is to provide step-by-step instructions and best practices on how to build an end-to-end data lifecycle management system integrated with a data lake house implemented on Amazon S3 with Amazon Redshift. In part 2, we show some additional best practices to operate the solution: implementing a sustainable monthly ageing process, using Amazon Redshift local tables to troubleshoot common issues, and using Amazon S3 access logs to analyze data access patterns.

Amazon Redshift and Redshift Spectrum

At re:Invent 2019, AWS announced new Amazon Redshift RA3 nodes. Even though this introduced new levels of cost efficiency in the cloud data warehouse, we faced customer cases where the data volume to be kept is an order of magnitude higher due to specific regulations that impose historical data to be kept for up to 10–12 years or more. In addition, this historical cold data must be accessed by other services and applications external to Amazon Redshift (such as Amazon SageMaker for AI and machine learning (ML) training jobs), and occasionally it needs to be queried jointly with Amazon Redshift hot data. In these situations, Redshift Spectrum is a great fit because, among other factors, you can use it in conjunction with Amazon S3 storage classes to further improve TCO.

Redshift Spectrum allows you to query data that resides in S3 buckets using already in place application code and logic used for data warehouse tables, and potentially performing joins and unions of Amazon Redshift local tables and data on Amazon S3.

Redshift Spectrum uses a fleet of compute nodes managed by AWS that increases system scalability. To use it, we need to define at least an external schema and an external table (unless an external schema and external database are already defined in the AWS Glue Data Catalog). Data definition language (DDL) statements used to define an external table include a location attribute to address S3 buckets and prefixes containing the dataset, which could be in common file formats like ORC, Parquet, AVRO, CSV, JSON, or plain text. Compressed and columnar file formats like Apache Parquet are preferred because they provide less storage usage and better performance.

For a data catalog, we could use AWS Glue or an external hive metastore. For this post, we use AWS Glue.

S3 Lifecycle rules

Amazon S3 storage classes include S3 Standard, S3-IA, S3 One-Zone, S3 Intelligent-Tiering, S3 Glacier, and S3 Glacier Deep Archive. For our use case, we need to keep data accessible for queries for 5 years and with high durability, so we consider only S3 Standard and S3-IA for this time frame, and S3 Glacier only for long term (5–12 years). Data access to S3 Glacier requires data retrieval in the range of minutes (if using expedited retrieval) and this can’t be matched with the ability to query data. We can adopt Glacier for very cold data if you implement a manual process to first restore the Glacier archive to a temporary S3 bucket, and then query this data defined via an external table.

S3 Glacier Select allows you to query on data directly in S3 Glacier, but it only supports uncompressed CSV files. Because the objective of this post is to propose a cost-efficient solution, we didn’t consider it. If for any reason you have constraints for storing in CSV file format (instead of compressed formats like Parquet), Glacier Select might also be a good fit.

Excluding retrieval costs, the cost for storage for S3-IA is typically around 45% cheaper than S3 Standard, and S3 Glacier is 68% cheaper than S3-IA. For updated pricing information, see Amazon S3 pricing.

We don’t use S3 Intelligent Tiering because it bases storage transition on the last access time, and this resets every time we need to query the data. We use the S3 Lifecycle rules that are based either on creation time or prefix or tag matching, which is consistent regardless of data access patterns.

Simulated use case and retention policy

For our use case, we need to implement the data retention strategy for trip records outlined in the following table.

| Corporate Rule |

Dataset Start |

Dataset end |

Data Storage |

Engine |

| Last 6 months in Redshift Spectrum |

December 2019 |

May 2020 |

Amazon Redshift local tables |

Amazon Redshift |

| Months 6–11 in Amazon S3 |

June 2019 |

November 2019 |

S3 Standard |

Redshift Spectrum |

| Months 12–14 in S3-IA |

March 2019 |

May 2019 |

S3-IA |

Redshift Spectrum |

| After month 15 |

January 2019 |

February 2019 |

Glacier |

N/A |

For this post, we create a new table in a new Amazon Redshift cluster and load a public dataset. We use the New York City Taxi and Limousine Commission (TLC) Trip Record Data because it provides the required historical depth.

We use the Green Taxi Trip Records, based on monthly CSV files containing 20 columns with fields like vendor ID, pickup time, drop-off time, fare, and other information.

Preparing the dataset and setting up the Amazon Redshift environment

As a first step, we create an AWS Identity and Access Management (IAM) role for Redshift Spectrum. This is required to allow access to Amazon Redshift to Amazon S3 for querying and loading data, and also to allow access to the AWS Glue Data Catalog whenever we create, modify, or delete a new external table.

- Create a role named

BlogSpectrumRole.

- Edit the following two JSON files, which have the IAM policies according to the bucket and prefix used in this post, as needed, and attach the policies to the role you created:

S3-Lakehouse-Policy.JSON

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "VisualEditor0",

"Effect": "Allow",

"Action": "s3:*",

"Resource": [

"arn:aws:s3:::rs-lakehouse-blog-post",

"arn:aws:s3:::rs-lakehouse-blog-post/extract_longterm/*",

"arn:aws:s3:::rs-lakehouse-blog-post/extract_midterm/*",

"arn:aws:s3:::rs-lakehouse-blog-post/extract_shortterm/*",

"arn:aws:s3:::rs-lakehouse-blog-post/accesslogs/*"

]

}

]

}

Glue-Lakehouse-Policy.JSON

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "VisualEditor0",

"Effect": "Allow",

"Action": [

"glue:CreateDatabase",

"glue:DeleteDatabase",

"glue:GetDatabase",

"glue:GetDatabases",

"glue:UpdateDatabase",

"glue:CreateTable",

"glue:DeleteTable",

"glue:BatchDeleteTable",

"glue:UpdateTable",

"glue:GetTable",

"glue:GetTables",

"glue:BatchCreatePartition",

"glue:CreatePartition",

"glue:DeletePartition",

"glue:BatchDeletePartition",

"glue:UpdatePartition",

"glue:GetPartition",

"glue:GetPartitions",

"glue:BatchGetPartition"

],

"Resource": [

"*"

]

}

]

}

Now you create a single-node Amazon Redshift cluster based on a DC2.large instance type, attaching the newly created IAM role BlogSpectrumRole.

- On the Amazon Redshift console, choose Create cluster.

- Keep the default cluster identifier

redshift-cluster-1.

- Choose the node type DC2.large.

- Set the configuration to single node.

- Keep the default

dbname and ports.

- Set a primary user password.

- Choose Create cluster.

- After the cluster is configured, check the attached IAM role on the Properties tab for the cluster.

- Take note of the IAM role ARN, because you use it to create external tables.

Copying data into Amazon Redshift

To connect to Amazon Redshift, you can use a free client like SQL Workbench/J or use the AWS console embedded query editor with the previously created credentials.

- Create a table according to the dataset schema using the following DDL statement:

create table greentaxi(

vendor_id integer,

pickup timestamp,

dropoff timestamp,

storeandfwd char(2),

ratecodeid integer,

pulocid integer,

dolocid integer,

passenger_count integer,

trip_dist real,

fare_amount real,

extra real,

mta_tax real,

tip_amount real,

toll_amount real,

ehail_fee real,

improve_surch real,

total_amount real,

pay_type integer,

trip_type integer,

congest_surch real

);

The most efficient method to load data into Amazon Redshift is using the COPY command, because it uses the distributed architecture (each slice can ingest one file at the same time).

- Load year 2020 data from January to June in the Amazon Redshift table with the following command (replace the IAM role value with the one you created earlier):

copy greentaxi from ‘s3://nyc-tlc/trip data/green_tripdata_2020’

iam_role ‘arn:aws:iam::123456789012:role/BlogSpectrumRole’

delimiter ‘,’

CSV

dateformat ‘auto’

region ‘us-east-1’

ignoreheader as 1;

Now the greentaxi table includes all records starting from January 2019 to June 2020. You’re now ready to leverage Redshift Spectrum and S3 storage classes to save costs.

Extracting data from Amazon Redshift

You perform the next steps using the AWS Command Line Interface (AWS CLI). For download and installation instructions, see Installing, updating, and uninstalling the AWS CLI version 2.

Use the AWS CONFIGURE command to set the access key and secret access key of your IAM user and your selected AWS Region (same as your S3 buckets) of your Amazon Redshift cluster.

In this section, we evaluate two different use cases:

- New customer – As a new customer, you don’t have much Amazon Redshift old data, and want to extract only the oldest monthly data and apply a lifecycle policy based on creation date. Storage tiering only affects future data and is fully automated.

- Old customer – In this use case, you come from a multi-year data growth, and need to move existing Amazon Redshift data to different storage classes. In addition, you want a fully automated solution but with the ability to override and decide what and when to transition data between S3 storage classes. This requirement is due to many factors, like the GDPR rule “right to be forgotten.” You may need to edit historical data to remove specific customer records, which changes the file creation date. For this reason, you need S3 Lifecycle rules based on tagging instead of creation date.

New customer use case

The UNLOAD command uses the result of an embedded SQL query to extract data from Amazon Redshift to Amazon S3, producing different file formats such as CSV, text, and Parquet. To extract data from January 2019, complete the following steps:

- Create a destination bucket like the following:

aws s3 mb s3://rs-lakehouse-blog-post

- Create a folder named archive in the destination bucket

rs-lakehouse-blog-post:

aws s3api put-object --bucket rs-lakehouse-blog-post -–key archive

- Use the following SQL code to implement the UNLOAD statement. The SELECT on Data data types requires quoting as well as a SELECT statement embedded in the UNLOAD command:

unload ('select * from greentaxi where pickup between ''2019-01-01 00:00:00'' and ''2019-01-30 23:59:59''')

to 's3://rs-lakehouse-blog-post/archive/5to12years_taxi'

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole';

- You can perform a check with the AWS CLI:

aws s3 ls s3://rs-lakehouse-blog-post/archive/

2020-10-12 14:49:51 0

2020-11-04 09:51:00 34792620 5to12years_taxi0000_part_00

2020-11-04 09:51:00 34792738 5to12years_taxi0001_part_00

The output shows that the UNLOAD statement generated two files of 33 MB each. By default, UNLOAD generates at least one file for each slice in the Amazon Redshift cluster. My cluster is a single node with DC2 type instances with two slices. This default file format is text, which is not storage optimized.

To simplify the process, you create a single file for each month so that you can later apply lifecycle rules to each file. In real-world scenarios, extracting data with a single file isn’t the best practice in terms of performance optimization. This is just to simplify the process for the purpose of this post.

- Create your files with the following code:

unload ('select * from greentaxi where pickup between ''2019-01-01 00:00:00'' and ''2019-01-31 23:59:59''')

to 's3://rs-lakehouse-blog-post/archive/green_tripdata_2019-01'

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole' parquet parallel off;

The output of the UNLOAD commands is a single file (per month) in Parquet format, which takes 80% less space than the previous unload. This is important to save costs related to both Amazon S3 and Glacier, but also for costs associated to Redshift Spectrum queries, which is billed by amount of data scanned.

- You can check how efficient Parquet is compared to text format:

aws s3 ls s3://rs-lakehouse-blog-post/archive/

2020-08-12 17:17:42 14523090 green_tripdata_2019-01000.parquet

- Clean up previous the text files:

aws s3 rm s3://rs-lakehouse-blog-post/ --recursive \

--exclude "*" --include "archive/5to12*"

The next step is creating a lifecycle rule based on creation date to automate the migration to S3-IA after 12 months and to Glacier after 15 months. The proposed policy name is 12IA-15Glacier and it’s filtered on the prefix archive/.

- Create a JSON file containing the lifecycle policy definition named json:

{

"Rules": [

{

"ID": "12IA-15Glacier",

"Filter": {

"Prefix": "archive"

},

"Status": "Enabled",

"Transitions": [

{

"Days": 365,

"StorageClass": "STANDARD_IA"

},

{

"Days": 548,

"StorageClass": "GLACIER"

}

]

}

- Run the following command to send the JSON file to Amazon S3:

aws s3api put-bucket-lifecycle-configuration \

--bucket rs-lakehouse-blog-post \

--lifecycle-configuration file://lifecycle.json

This lifecycle policy migrates all keys in the archive prefix from Amazon S3 to S3-IA after 12 months and from S3-IA to Glacier after 15 months. For example, if today were 2020-09-12, and you unload the 2020-03 data to Amazon S3, by 2021-09-12, this 2020-03 data is automatically migrated to S3-IA.

If using this basic use case, you can skip the partition steps in the section Defining the external schema and external tables.

Old customer use case

In this use case, you extract data with different ageing in the same time frame. You extract all data from January 2019 to February 2019 and, because we assume that you aren’t using this data, archive it to S3 Glacier.

Data from March 2019 to May 2019 is migrated as an external table on S3-IA, and data from June 2019 to November 2019 is migrated as an external table to S3 Standard. With this approach, you comply with customer long-term retention policies and regulations, and reduce TCO.

You implement the retention strategy described in the Simulated use case and retention policy section.

- Create a destination bucket (if you also walked through the first use case, use a different bucket):

aws s3 mb s3://rs-lakehouse-blog-post

- Create three folders named

extract_longterm, extract_midterm, and extract_shortterm in the destination bucket rs-lakehouse-blog-post. The following code is the syntax for creating the extract_longterm folder:

aws s3api put-object --bucket rs-lakehouse-blog-post –key

- Extract the data:

unload ('select * from greentaxi where pickup between ''2019-01-01 00:00:00'' and ''2019-01-31 23:59:59''')

to 's3://rs-lakehouse-blog-post/extract_longterm/green_tripdata_2019-01'

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole' parquet parallel off;

- Repeat these steps for the February 2019 time frame.

Managing data ageing with Amazon S3 storage classes and lifecycle policies

In this section, you manage your data with storage classes and lifecycle policies.

- Migrate your keys in Parquet format to Amazon Glacier:

aws s3api copy-object \

--copy-source rs-lakehouse-blog-post/extract_longterm/green_tripdata_2019-01000.parquet \

--storage-class GLACIER \

--bucket rs-lakehouse-blog-post \

--key extract_longterm/green_tripdata_2019-01000.parquet

aws s3api copy-object \

--copy-source rs-lakehouse-blog-post/extract_longterm/green_tripdata_2019-02000.parquet \

--storage-class GLACIER \

--bucket rs-lakehouse-blog-post \

--key extract_longterm/green_tripdata_2019-02000.parquet

- Extract the data from March 2019 to May 2019 (months 12–15) and migrate them to S3-IA. The following code is for March:

unload ('select * from greentaxi where pickup between ''2019-03-01 00:00:00'' and ''2019-03-31 23:59:59''')

to 's3://rs-lakehouse-blog-post/extract_midterm/03/green_tripdata_2019-03'

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole' parquet parallel off;

- Repeat the previous step for April and May.

- Migrate all three months to S3-IA using same process as before. The following code is for March:

aws s3api copy-object \

--copy-source rs-lakehouse-blog-post/extract_midterm/03/green_tripdata_2019-03000.parquet \

--storage-class STANDARD_IA \ 8. --bucket rs-lakehouse-blog-post \

--key extract_midterm/03/green_tripdata_2019-03000.parquet

- Do the same for other two months.

- Check the newly applied storage class with the following AWS CLI command:

aws s3api head-object \

--bucket rs-lakehouse-blog-post \

--key extract_midterm/03/green_tripdata_2019-03000.parquet

{

"AcceptRanges": "bytes",

"LastModified": "2020-10-12T13:47:32+00:00",

"ContentLength": 14087514,

"ETag": "\"15bf39e6b3f32b10ef589d75e0988ce6\"",

"ContentType": "application/x-www-form-urlencoded; charset=utf-8",

"Metadata": {},

"StorageClass": "STANDARD_IA"

}

In the next step, you tag every monthly file with a key value named ageing set to the number of months elapsed from the origin date.

- Set March to 14, April to 13, and May to 12:

aws s3api put-object-tagging \

--bucket rs-lakehouse-blog-post \

--key extract_midterm/03/green_tripdata_2019-03000.parquet \

--tagging '{"TagSet": [{ "Key": "ageing", "Value": "14"} ]}'

aws s3api put-object-tagging \

--bucket rs-lakehouse-blog-post \

--key extract_midterm/04/green_tripdata_2019-04000.parquet \

--tagging '{"TagSet": [{ "Key": "ageing", "Value": "13"} ]}'

aws s3api put-object-tagging \

--bucket rs-lakehouse-blog-post \

--key extract_midterm/05/green_tripdata_2019-05000.parquet \

--tagging '{"TagSet": [{ "Key": "ageing", "Value": "12"} ]}'

In this set of three objects, the oldest file has the tag ageing set to value 14, and the newest is set to 12. In the second post in this series, you discover how to manage the ageing tag as it increases month by month.

The next step is to create a lifecycle rule based on this specific tag in order to automate the migration to Glacier at month 15. The proposed policy name is 15IAtoGlacier and the definition is to limit the scope to only object with the tag ageing set to 15 in the specific bucket.

- Create a JSON file containing the lifecycle policy definition named json:

{

"Rules": [

{

"ID": "15IAtoGlacier",

"Filter": {

"Tag": {

"Key": "ageing",

"Value": "15"

}

},

"Status": "Enabled",

"Transitions": [

{

"Days": 1,

"StorageClass": "GLACIER"

}

]

}

]

}

- Run the following command to send the JSON file to Amazon S3:

aws s3api put-bucket-lifecycle-configuration \

--bucket rs-lakehouse-blog-post \

--lifecycle-configuration file://lifecycle.json

This lifecycle policy migrates all objects with the tag ageing set to 15 from S3-IA to Glacier.

Though I described this process as automating the migration, I actually want to control the process from the application level using the self-managed tag mechanism. I use this approach because otherwise, the transition is based on file creation date, and the objective is to be able to delete, update, or create a new file whenever needed (for example, to delete parts of records in order to comply to the GDPR “right to be forgotten” rule).

Now you extract all data from June 2019 to November 2019 (7–11 months old) and keep them in Amazon S3 with a lifecycle policy to automatically migrate to S3-IA after ageing 12 months, using same process as described. These six new objects also inherit the rule created previously to migrate to Glacier after 15 months. Finally, you set the ageing tag as described before.

Use the extract_shortterm prefix for these unload operations.

- Unload June 2019 with the following code:

unload ('select * from greentaxi where pickup between ''2019-06-01 00:00:00 '' and ''2019-06-30 23:59:59''')

to 's3://rs-lakehouse-blog-post/extract_shortterm/06/green_tripdata_2019-06'

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole' parquet parallel off;

- Use the same logic for the remaining months up to October.

- For November, see the following code:

unload ('select * from greentaxi where pickup between ''2019-11-01 00:00:00'' and ''2019-11-30 23:59:59''')

to 's3://rs-lakehouse-blog-post/extract_shortterm/11/green_tripdata_2019-11''

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole' parquet parallel off;

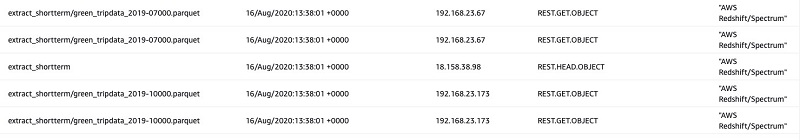

aws s3 ls –recursive s3://rs-lakehouse-blog-post/extract_shortterm/

2020-10-12 14:52:11 0 extract_shortterm/

2020-10-12 18:45:42 0 extract_shortterm/06/

2020-10-12 18:46:49 10889436 extract_shortterm/06/green_tripdata_2019-06000.parquet

2020-10-12 18:45:53 0 extract_shortterm/07/

2020-10-12 18:47:03 10759747 extract_shortterm/07/green_tripdata_2019-07000.parquet

2020-10-12 18:45:58 0 extract_shortterm/08/

2020-10-12 18:47:24 9947793 extract_shortterm/08/green_tripdata_2019-08000.parquet

2020-10-12 18:46:03 0 extract_shortterm/09/

2020-10-12 18:47:45 10302432 extract_shortterm/09/green_tripdata_2019-09000.parquet

2020-10-12 18:46:08 0 extract_shortterm/10/

2020-10-12 18:48:00 10659857 extract_shortterm/10/green_tripdata_2019-10000.parquet

2020-10-12 18:46:11 0 extract_shortterm/11/

2020-10-12 18:48:14 10247201 extract_shortterm/11/green_tripdata_2019-11000.parquet

aws s3 ls --recursive rs-lakehouse-blog-post/extract_longterm/

2020-10-12 14:49:51 0 extract_longterm/

2020-10-12 14:56:38 14403710 extract_longterm/green_tripdata_2019-01000.parquet

2020-10-12 15:30:14 13454341 extract_longterm/green_tripdata_2019-02000.parquet

- Apply the tag

ageing with range 11 to 6 (June 2019 to November 2019), using either the AWS CLI or console if you prefer.

- Create a new lifecycle rule named

12S3toS3IA, which transitions from Amazon S3 to S3-IA.

- With the AWS CLI, create a JSON file that includes the previously defined rule

15IAtoGlacier and new 12S3toS3IA, because the command s3api overwrites the current configuration (no incremental approach) with the new policy definition file (JSON). The following code is the new lifecycle.json:

{

"Rules": [

{

"ID": "12S3toS3IA",

"Filter": {

"Tag": {

"Key": "ageing",

"Value": "12"

}

},

"Status": "Enabled",

"Transitions": [

{

"Days": 30,

"StorageClass": "STANDARD_IA"

}

]

},

{

"ID": "15IAtoGlacier",

"Filter": {

"Tag": {

"Key": "ageing",

"Value": "15"

}

},

"Status": "Enabled",

"Transitions": [

{

"Days": 1,

"StorageClass": "GLACIER"

}

]

}

]

}

aws s3api get-bucket-lifecycle-configuration \

--bucket rs-lakehouse-blog-post

- Check the applied policies with the following command:

aws s3api get-bucket-lifecycle-configuration \

--bucket rs-lakehouse-blog-post

You get in stdout a single JSON with merge of 15IAtoGlacier and 12S3toS3IA.

Defining the external schema and external tables

Before deleting the records you extracted from Amazon Redshift with the UNLOAD command, we define the external schema and external tables to enable Redshift Spectrum queries for these Parquet files.

- Enter the following code to create your schema:

create external schema taxispectrum

from data catalog

database 'blogdb'

iam_role 'arn:aws:iam::123456789012:role/BlogSpectrumRole'

create external database if not exists;

- Create the external table

taxi_archive in the taxispectrum external schema. If you’re walking through the new customer use case, replace the prefix extract_midterm with archive:

create external table taxispectrum.taxi_archive(

vendor_id integer,

pickup timestamp,

dropoff timestamp,

storeandfwd char(2),

ratecodeid integer,

pulocid integer,

dolocid integer,

passenger_count integer,

trip_dist real,

fare_amount real,

extra real,

mta_tax real,

tip_amount real,

toll_amount real,

ehail_fee real,

improve_surch real,

total_amount real,

pay_type integer,

trip_type integer,

congest_surch real)

partitioned by (yearmonth char(7))

stored as parquet

location 's3://rs-lakehouse-blog-post/extract_midterm/'

- Add the six files stored in Amazon S3 and three files stored in S3-IA as partitions (if you’re walking through the new customer use case, you can skip the following partitioning steps). The following code shows March and April:

ALTER TABLE taxispectrum.taxi_archive

ADD PARTITION (yearmonth='2019-03')

LOCATION 's3://rs-lakehouse-blog-post/extract_midterm/03/';

ALTER TABLE taxispectrum.taxi_archive

ADD PARTITION (yearmonth='2019-04')

LOCATION 's3://rs-lakehouse-blog-post/extract_midterm/04/';

- Continue this process up to December 2019, using

extract_shortterm instead of extract_midterm.

- Check the table isn’t empty with the following SQL statement:

Select count (*) from taxispectrum.taxi_archive

You get the number of entries in this external table.

- Optionally, you can check the partitions mapped to this table with a query to the Amazon Redshift internal table:

select * from svv_external_partitions

Run a SELECT command using partitioning in order to optimize costs related to Redshift Spectrum scanning:

select * from taxispectrum.taxi_archive where yearmonth='2019-11' and fare_amount > 20

- Redshift Spectrum scans only specific partitions matching

yearmonth.

The final step is cleaning all the records extracted from the Amazon Redshift local tables:

delete from public.greentaxi where pickup between '2019-01-01 00:00:00' and '2019-11-30 23:59:59'

Conclusion

We demonstrated how to extract historical data from Amazon Redshift and implement an archive strategy with Redshift Spectrum and Amazon S3 storage classes. In addition, we showed how to optimize Redshift Spectrum scans with partitioning.

In the next post in this series, we show how to operate this solution day by day, especially for the old customer use case, and share some best practices.

About the Authors

Cristian Gavazzeni is a senior solution architect at Amazon Web Services. He has more than 20 years of experience as a pre-sales consultant focusing on Data Management, Infrastructure and Security. During his spare time he likes eating Japanese food and travelling abroad with only fly and drive bookings.

Cristian Gavazzeni is a senior solution architect at Amazon Web Services. He has more than 20 years of experience as a pre-sales consultant focusing on Data Management, Infrastructure and Security. During his spare time he likes eating Japanese food and travelling abroad with only fly and drive bookings.

Francesco Marelli is a senior solutions architect at Amazon Web Services. He has lived and worked in London for 10 years, after that he has worked in Italy, Switzerland and other countries in EMEA. He is specialized in the design and implementation of Analytics, Data Management and Big Data systems, mainly for Enterprise and FSI customers. Francesco also has a strong experience in systems integration and design and implementation of web applications. He loves sharing his professional knowledge, collecting vinyl records and playing bass.

Francesco Marelli is a senior solutions architect at Amazon Web Services. He has lived and worked in London for 10 years, after that he has worked in Italy, Switzerland and other countries in EMEA. He is specialized in the design and implementation of Analytics, Data Management and Big Data systems, mainly for Enterprise and FSI customers. Francesco also has a strong experience in systems integration and design and implementation of web applications. He loves sharing his professional knowledge, collecting vinyl records and playing bass.

-

-

Cristian Gavazzeni is a senior solution architect at Amazon Web Services. He has more than 20 years of experience as a pre-sales consultant focusing on Data Management, Infrastructure and Security. During his spare time he likes eating Japanese food and travelling abroad with only fly and drive bookings.

Cristian Gavazzeni is a senior solution architect at Amazon Web Services. He has more than 20 years of experience as a pre-sales consultant focusing on Data Management, Infrastructure and Security. During his spare time he likes eating Japanese food and travelling abroad with only fly and drive bookings. Francesco Marelli is a senior solutions architect at Amazon Web Services. He has lived and worked in London for 10 years, after that he has worked in Italy, Switzerland and other countries in EMEA. He is specialized in the design and implementation of Analytics, Data Management and Big Data systems, mainly for Enterprise and FSI customers. Francesco also has a strong experience in systems integration and design and implementation of web applications. He loves sharing his professional knowledge, collecting vinyl records and playing bass.

Francesco Marelli is a senior solutions architect at Amazon Web Services. He has lived and worked in London for 10 years, after that he has worked in Italy, Switzerland and other countries in EMEA. He is specialized in the design and implementation of Analytics, Data Management and Big Data systems, mainly for Enterprise and FSI customers. Francesco also has a strong experience in systems integration and design and implementation of web applications. He loves sharing his professional knowledge, collecting vinyl records and playing bass.