Post Syndicated from Amit Borulkar original https://aws.amazon.com/blogs/devops/factset-automation-at-scale/

This post is by FactSet’s Cloud Infrastructure team, Gaurav Jain, Nathan Goodman, Geoff Wang, Daniel Cordes, Sunu Joseph, and AWS Solution Architects Amit Borulkar and Tarik Makota. In their own words, “FactSet creates flexible, open data and software solutions for tens of thousands of investment professionals around the world, which provides instant access to financial data and analytics that investors use to make crucial decisions. At FactSet, we are always working to improve the value that our products provide.”

At FactSet, our operational goal to use the AWS Cloud is to have high developer velocity alongside enterprise governance. Assigning AWS accounts per project enables the agility and isolation boundary needed by each of the project teams to innovate faster. As existing workloads are migrated and new workloads are developed in the cloud, we realized that we were operating close to thousands of AWS accounts. To have a consistent and repeatable experience for diverse project teams, we automated the AWS account creation process, various service control policies (SCP) and AWS Identity and Access Management (IAM) policies and roles associated with the accounts, and enforced policies for ongoing configuration across the accounts. This post covers our automation workflows to enable governance for thousands of AWS accounts.

AWS account creation workflow

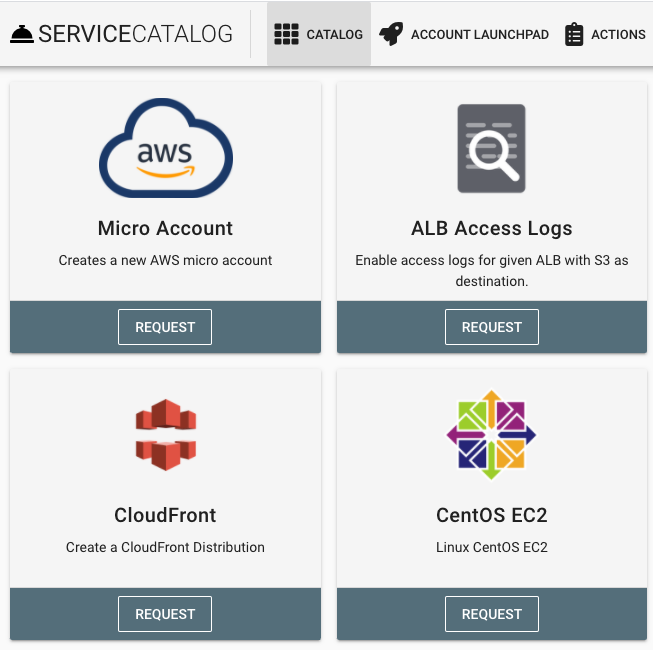

To empower our project teams to operate in the AWS Cloud in an agile manner, we developed a platform that enables AWS account creation with the default configuration customized to meet FactSet’s governance policies. These AWS accounts are provisioned with defaults such as a virtual private cloud (VPC), subnets, routing tables, IAM roles, SCP policies, add-ons for monitoring and load-balancing, and FactSet-specific governance. Developers and project team members can request a micro account for their product via this platform’s website, or do so programmatically using an API or wrap-around custom Terraform modules. The following screenshot shows a portion of the web interface that allows developers to request an AWS account.

Continue reading How FactSet automates thousands of AWS accounts at scale