Post Syndicated from Roland Odorfer original https://aws.amazon.com/blogs/security/governance-at-scale-enforce-permissions-and-compliance-by-using-policy-as-code/

AWS Identity and Access Management (IAM) policies are at the core of access control on AWS. They enable the bundling of permissions, helping to provide effective and modular access control for AWS services. Service control policies (SCPs) complement IAM policies by helping organizations enforce permission guardrails at scale across their AWS accounts.

The use of access control policies isn’t limited to AWS resources. Customer applications running on AWS infrastructure can also use policies to help control user access. This often involves implementing custom authorization logic in the program code itself, which can complicate audits and policy changes.

To address this, AWS developed Amazon Verified Permissions, which helps implement fine-grained authorizations and permissions management for customer applications. This service uses Cedar, an open-source policy language, to define permissions separately from application code.

In addition to access control, you can also use policies to help monitor your organization’s individual governance rules for security, operations, and compliance. One example of such a rule is the regular rotation of cryptographic keys to help reduce the impact in the event of a key leak.

However, manually checking and enforcing such rules is complex and doesn’t scale, particularly in fast-growing IT organizations. Therefore, organizations should aim for an automated implementation of such rules. In this blog post, I will show you how to use policy as code to help you govern your AWS landscape.

Policy as code

Similar to infrastructure as code (IaC), policy as code is an approach in which you treat policies like regular program code. You define policies in the form of structured text files (policy documents), which policy engines can automatically evaluate.

The main advantage of this approach is the ability to automate key governance tasks, such as policy deployment, enforcement, and auditing. By storing policy documents in a central repository, you can use versioning, simplify audits, and track policy changes. Furthermore, you can subject new policies to automated testing through integration into a continuous integration and continuous delivery (CI/CD) pipeline. Policy as code thus forms one of the key pillars of a modern automated IT governance strategy.

The following sections describe how you can combine different AWS services and functions to integrate policy as code into existing IT governance processes.

Access control – AWS resources

Every request to AWS control plane resources (specifically, AWS APIs), whether through the AWS Management Console, AWS Command Line Interface (AWS CLI), or an SDK, is authenticated and authorized by IAM. To determine whether to approve or deny a specific request, IAM evaluates both the applicable policies associated with the requesting principal (human user or workload) and the respective request context. These policies come in the form of JSON documents and follow a specific schema that allows for automated evaluation.
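To make the idea of automated evaluation more concrete, the following Python sketch models a heavily simplified version of the decision: an explicit deny wins, an allow is needed to grant access, and everything else is implicitly denied. It is a toy model and not how IAM is implemented. It ignores conditions, principals, `NotAction`, resource-based policies, permissions boundaries, and SCPs, and the function names and the example bucket are hypothetical.

```python
import fnmatch


def _matches(patterns, value, case_sensitive=True):
    """Return True if value matches any pattern (supports '*' wildcards)."""
    if isinstance(patterns, str):
        patterns = [patterns]
    if not case_sensitive:
        value = value.lower()
        patterns = [p.lower() for p in patterns]
    return any(fnmatch.fnmatchcase(value, p) for p in patterns)


def evaluate(policies, action, resource):
    """Toy evaluation: explicit deny > allow > implicit deny."""
    decision = "ImplicitDeny"
    for policy in policies:
        statements = policy["Statement"]
        if isinstance(statements, dict):  # a single statement is also valid JSON policy syntax
            statements = [statements]
        for stmt in statements:
            applies = _matches(stmt["Action"], action, case_sensitive=False) and _matches(
                stmt["Resource"], resource
            )
            if not applies:
                continue
            if stmt["Effect"] == "Deny":
                return "ExplicitDeny"  # a matching deny always overrides any allow
            decision = "Allow"
    return decision


# Hypothetical identity-based policy used for illustration only.
EXAMPLE_POLICIES = [
    {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": "s3:Get*", "Resource": "arn:aws:s3:::example-bucket/*"},
            {"Effect": "Deny", "Action": "s3:DeleteObject", "Resource": "*"},
        ],
    }
]
```

With these example policies, a call such as `evaluate(EXAMPLE_POLICIES, "s3:GetObject", "arn:aws:s3:::example-bucket/report.csv")` is allowed, while a request for an action that no statement mentions falls through to the implicit deny.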

IAM supports a range of different policy types that you can use to help protect your AWS resources and implement a least privilege approach. For an overview of the individual policy types and their purpose, see Policies and permissions in IAM. For some practical guidance on how and when to use them, see IAM policy types: How and when to use them. To learn more about the IAM policy evaluation process and the order in which IAM reviews individual policy types, see Policy evaluation logic.

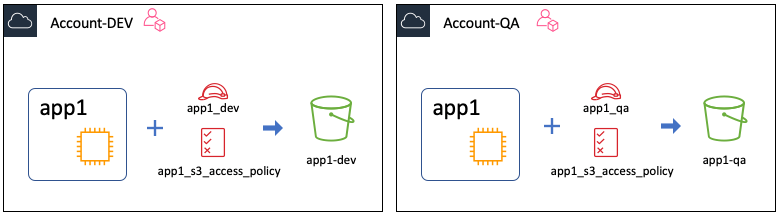

Traditionally, IAM relied on role-based access control (RBAC) for authorization. With RBAC, principals are assigned predefined roles that grant only the minimum permissions needed to perform their duties (also known as a least privilege approach). RBAC can seem intuitive initially, but it can become cumbersome at scale. Every new resource that you add to AWS requires the IAM administrator to manually update each role’s permissions, a tedious process that can hamper agility in dynamic environments.

In contrast, attribute-based access control (ABAC) bases permissions on the attributes assigned to users and resources. IAM administrators define a policy that allows access when certain tags match. ABAC is especially advantageous for dynamic, fast-growing organizations that have outgrown the RBAC model. To learn more about how to implement ABAC in an AWS environment, see Define permissions to access AWS resources based on tags.
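As a minimal sketch of what such a tag-matching policy might look like, the following Python snippet builds an identity-based policy document that allows a set of actions only when the resource carries the same tag value as the calling principal. The tag key `project`, the chosen Amazon EC2 actions, and the function name are assumptions for illustration. Whether a given action supports the `aws:ResourceTag` condition key depends on the service.

```python
import json


def build_abac_policy(tag_key, actions):
    """Build an ABAC-style policy: allow actions when resource and principal tags match."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowWhenTagsMatch",
                "Effect": "Allow",
                "Action": actions,
                "Resource": "*",
                "Condition": {
                    "StringEquals": {
                        # The policy variable is resolved by IAM at request time.
                        "aws:ResourceTag/" + tag_key: "${aws:PrincipalTag/" + tag_key + "}"
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    policy = build_abac_policy("project", ["ec2:StartInstances", "ec2:StopInstances"])
    print(json.dumps(policy, indent=2))
```

Because the condition compares tags rather than naming individual resources, a newly created and correctly tagged resource is covered without a policy update, which is the scaling advantage described above.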

For a list of AWS services that IAM supports and whether each service supports ABAC, see AWS services that work with IAM.

Access control – Customer applications

Customer applications that run on AWS resources often require an authorization mechanism that can control access to the application itself and its individual functions in a fine-grained manner.

Many customer applications come with custom authorization mechanisms in the application code itself, making it challenging to implement policy changes. This approach can also hinder monitoring and auditing because the implementation of authorization logic often differs between applications, and there is no uniform standard.

To address this challenge, AWS developed Amazon Verified Permissions and the associated open-source policy language Cedar. Amazon Verified Permissions replaces the custom authorization logic in the application code with a simple IsAuthorized API call, so that you can control and monitor authorization logic centrally by using Cedar-based policies. To learn how to integrate Amazon Verified Permissions into your applications and define custom access control policies with Cedar, see How to use Amazon Verified Permissions for authorization.
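The following Python sketch illustrates the shape of such an authorization request together with a Cedar policy it could be evaluated against. The `PhotoApp` namespace, the entity and action names, and the policy store ID are hypothetical. The snippet only builds the request parameters. The commented lines show how they might be passed to the `is_authorized` operation of the boto3 `verifiedpermissions` client, assuming boto3 is installed and credentials are configured.

```python
# A Cedar policy (hypothetical names) that would permit the request built below.
CEDAR_POLICY = """
permit (
    principal == PhotoApp::User::"alice",
    action == PhotoApp::Action::"viewPhoto",
    resource == PhotoApp::Photo::"vacation.jpg"
);
"""


def build_is_authorized_request(policy_store_id, user_id, action_id, photo_id):
    """Build the parameters for an IsAuthorized call (no network access here)."""
    return {
        "policyStoreId": policy_store_id,
        "principal": {"entityType": "PhotoApp::User", "entityId": user_id},
        "action": {"actionType": "PhotoApp::Action", "actionId": action_id},
        "resource": {"entityType": "PhotoApp::Photo", "entityId": photo_id},
    }


REQUEST = build_is_authorized_request(
    "EXAMPLE-POLICY-STORE-ID",  # placeholder, not a real policy store
    "alice",
    "viewPhoto",
    "vacation.jpg",
)

# Sending the request (requires boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("verifiedpermissions")
#   response = client.is_authorized(**REQUEST)
#   allowed = response["decision"] == "ALLOW"
```

The application code only asks the question and acts on the decision. The rule itself lives in the Cedar policy, so it can be reviewed and changed without touching the application.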

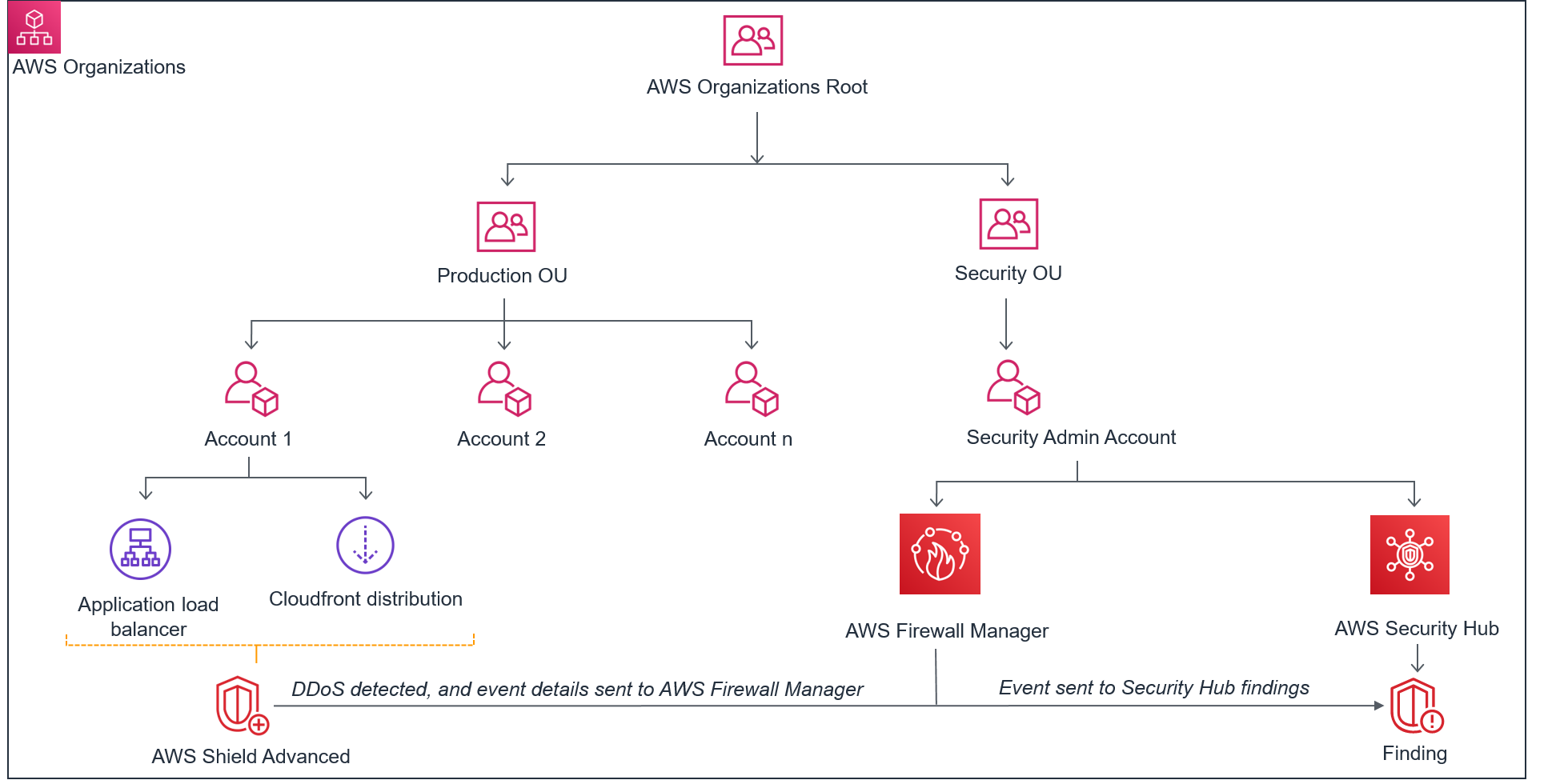

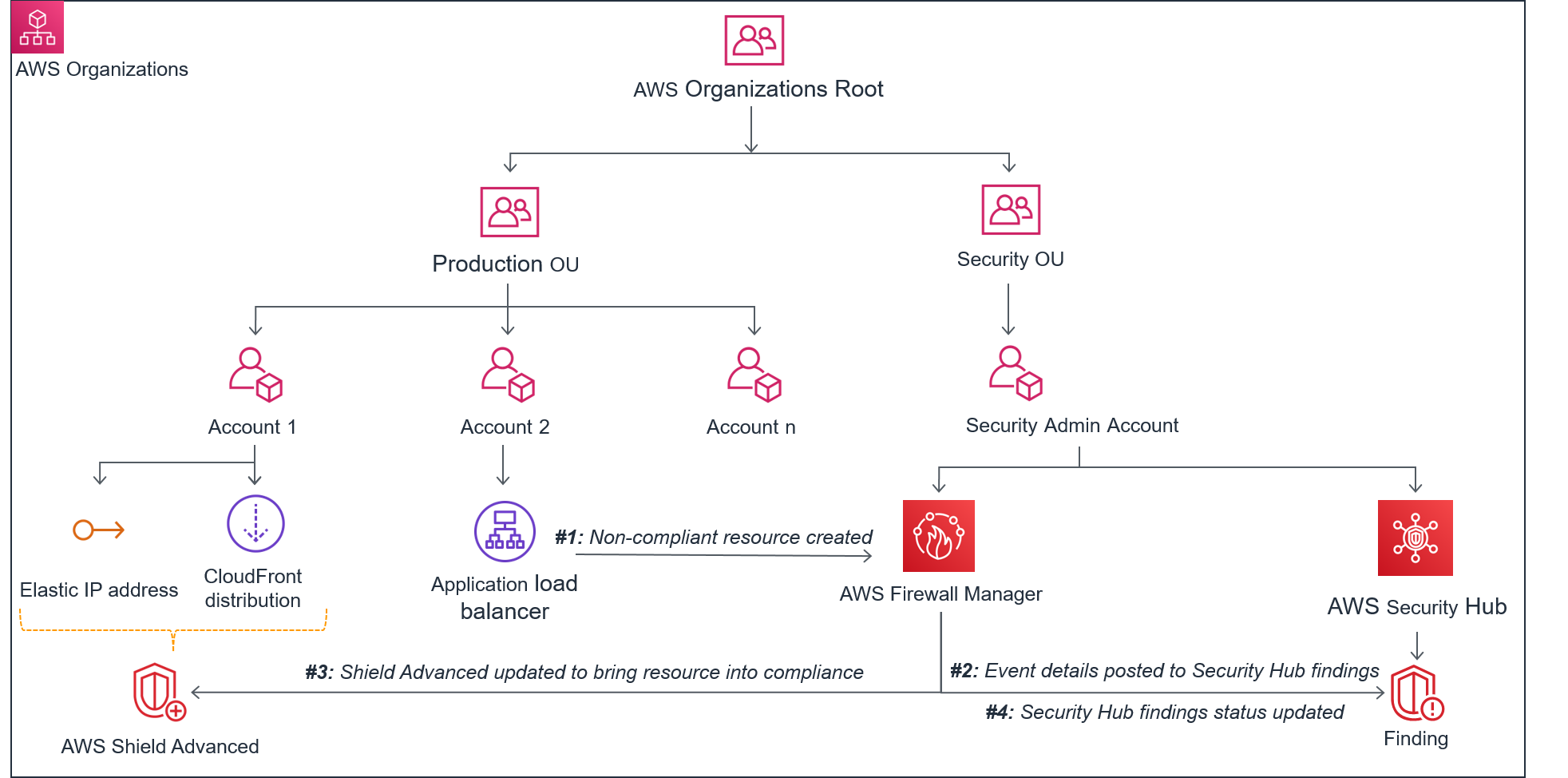

Compliance

In addition to access control, you can also use policies to help monitor and enforce your organization’s individual governance rules for security, operations, and compliance. AWS Config and AWS Security Hub play a central role in compliance because they enable the setup of multi-account environments that follow best practices (known as landing zones). AWS Config continuously tracks resource configurations and changes, while Security Hub aggregates and prioritizes security findings. With these services, you can create controls that enable automated audits and conformity checks. Alternatively, you can also choose from ready-to-use controls that cover individual compliance objectives such as encryption at rest, or entire frameworks, such as PCI-DSS and NIST 800-53.

AWS Control Tower builds on top of AWS Config and Security Hub to help simplify governance and compliance for multi-account environments. AWS Control Tower incorporates additional controls with the existing ones from AWS Config and Security Hub, presenting them together through a unified interface. These controls apply at different resource life cycle stages, as shown in Figure 1, and you define them through policies.

Figure 1: Resource life cycle

The controls can be categorized according to their behavior:

- Proactive controls scan IaC templates before deployment to help identify noncompliance issues early.

- Preventative controls restrict actions within an AWS environment to help prevent noncompliant actions. For example, these controls can help prevent deployment of large Amazon Elastic Compute Cloud (Amazon EC2) instances or restrict the available AWS Regions for some users.

- Detective controls monitor deployed resources to help identify noncompliant resources that proactive and preventative controls might have missed. They also detect when deployed resources are changed or drift out of compliance over time.

Categorizing controls this way allows for a more comprehensive compliance framework that encompasses the entire resource life cycle. The stage at which each control applies determines how it may help enforce policies and governance rules.

With AWS Control Tower, you can enable hundreds of preconfigured security, compliance, and operational controls through the console with a single click, without needing to write code. You can also implement your own custom controls beyond what AWS Control Tower provides out of the box. The process for implementing custom controls varies depending on the type of control. In the following sections, I will explain how to set up custom controls for each type.

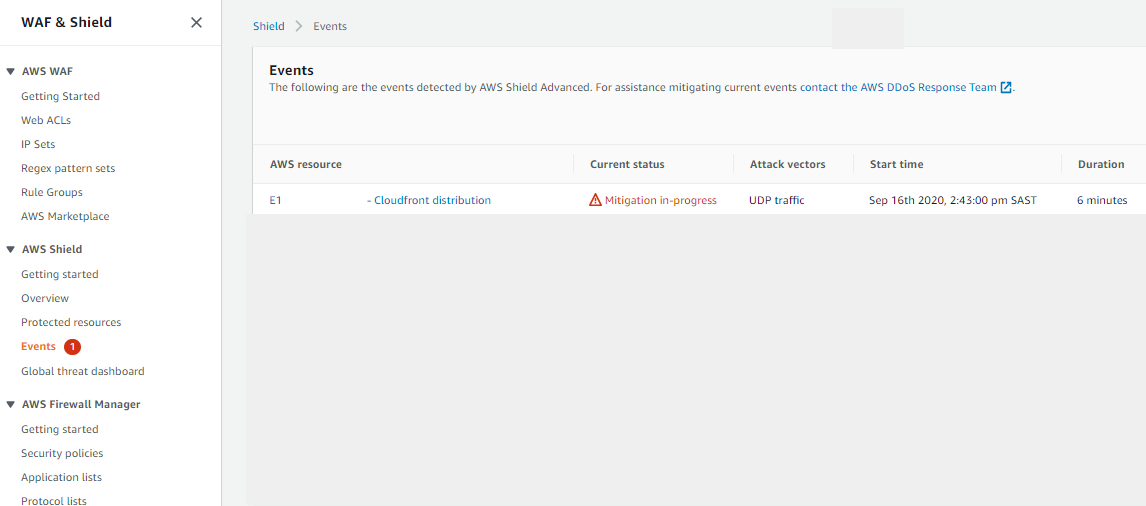

Proactive controls

Proactive controls are mechanisms that scan resources and their configuration to confirm that they adhere to compliance requirements before they are deployed. AWS provides a range of tools and services that you can use, both in isolation and in combination with each other, to implement proactive controls. The following diagram provides an overview of the available mechanisms and an example of their integration into a CI/CD pipeline for AWS Cloud Development Kit (AWS CDK) projects.

Figure 2: CI/CD pipeline in AWS CDK projects

As shown in Figure 2, you can use the following mechanisms as proactive controls:

- You can validate artifacts such as IaC templates locally on your machine by using the AWS CloudFormation Guard CLI, which facilitates a shift-left testing strategy. The advantage of this approach is the relatively early testing in the deployment cycle. This supports rapid iterative development and thus reduces waiting times.

Alternatively, you can use the CfnGuardValidator plugin for AWS CDK, which integrates CloudFormation Guard rules into the AWS CDK CLI. This streamlines local development by applying policies and best practices directly within the CDK project.

- To centrally enforce validation checks, integrate the CfnGuardValidator plugin into a CDK CI/CD pipeline.

- You can also invoke the CloudFormation Guard CLI from within AWS CodeBuild buildspecs to embed CloudFormation Guard scans in a CI/CD pipeline.

- With CloudFormation hooks, you can impose policies on resources before CloudFormation deploys them.

AWS CloudFormation Guard uses a policy-as-code approach to evaluate IaC documents such as AWS CloudFormation templates and Terraform configuration files. The tool defines validation rules in the Guard language to check that these JSON or YAML documents align with best practices and organizational policies around provisioning cloud resources. By codifying rules and scanning infrastructure definitions programmatically, CloudFormation Guard automates policy enforcement and helps promote consistency and security across infrastructure deployments.

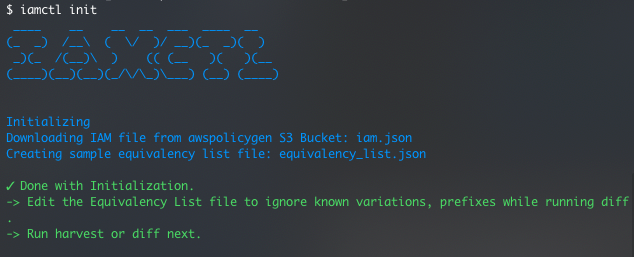

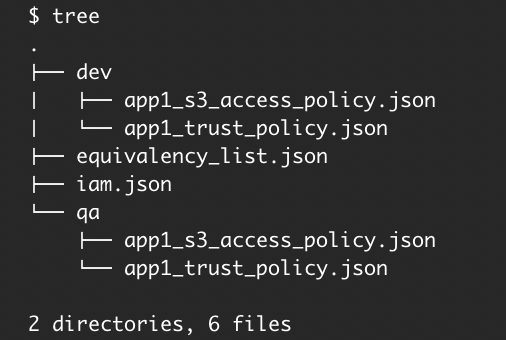

In the following example, you will use CloudFormation Guard to validate the name of an Amazon Simple Storage Service (Amazon S3) bucket in a CloudFormation template through a simple Guard rule:

To validate the S3 bucket

- Install CloudFormation Guard locally. For instructions, see Setting up AWS CloudFormation Guard.

- Create a YAML file named template.yaml with the following content and replace <DOC-EXAMPLE-BUCKET> with a bucket name of your choice (this file is a CloudFormation template, which creates an S3 bucket):

- Create a text file named rules.guard with the following content:

- To validate your CloudFormation template against your Guard rules, run the following command in your local terminal:

- If CloudFormation Guard successfully validates the template, the validate command produces an exit status of 0 ($? in bash). Otherwise, it returns a status report listing the rules that failed. You can test this yourself by changing the bucket name.
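The steps above can be sketched in Python as follows. This is one possible version rather than a definitive one: the template body, the rule name `checkBucketName`, the placeholder bucket name, and the helper function names are assumptions. The script writes `template.yaml` and `rules.guard` and then runs `cfn-guard validate` with the `--rules` and `--data` options, but only if the `cfn-guard` binary is available on the local `PATH`.

```python
import shutil
import subprocess
from pathlib import Path

BUCKET_NAME = "doc-example-bucket"  # placeholder standing in for <DOC-EXAMPLE-BUCKET>

# CloudFormation template that creates a single S3 bucket.
TEMPLATE = f"""\
Resources:
  S3Bucket:
    Type: AWS::S3::Bucket
    Properties:
      BucketName: {BUCKET_NAME}
"""

# Guard rule that passes only if the bucket has the expected name.
RULES = f"""\
rule checkBucketName {{
    Resources.S3Bucket.Properties.BucketName == "{BUCKET_NAME}"
}}
"""


def write_files(directory):
    """Write template.yaml and rules.guard into directory and return both paths."""
    directory = Path(directory)
    template_path = directory / "template.yaml"
    rules_path = directory / "rules.guard"
    template_path.write_text(TEMPLATE)
    rules_path.write_text(RULES)
    return template_path, rules_path


def validate(template_path, rules_path):
    """Run cfn-guard and return its exit code, or None if it is not installed."""
    if shutil.which("cfn-guard") is None:
        return None
    result = subprocess.run(
        ["cfn-guard", "validate", "--rules", str(rules_path), "--data", str(template_path)]
    )
    return result.returncode


if __name__ == "__main__":
    template, rules = write_files(".")
    print("cfn-guard exit code:", validate(template, rules))
```

Changing `BUCKET_NAME` in only one of the two files is a quick way to see a failing validation and the resulting status report.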

To accelerate the writing of Guard rules, use the CloudFormation Guard rulegen command, which takes a CloudFormation template file as an input and autogenerates Guard rules that match the properties of the template resources. To learn more about the structure of CloudFormation Guard rules and how to write them, see Writing AWS CloudFormation Guard rules.

The AWS Guard Rules Registry provides ready-to-use CloudFormation Guard rule files to accelerate your compliance journey, so that you don’t have to write them yourself.

Through the CDK plugin interface for policy validation, the CfnGuardValidator plugin integrates CloudFormation Guard rules into the AWS CDK and validates generated CloudFormation templates automatically during its synthesis step. For more details, see the plugin documentation and Accelerating development with AWS CDK plugin – CfnGuardValidator.

CloudFormation Guard alone can’t necessarily prevent the provisioning of noncompliant resources. This is because CloudFormation Guard can’t detect when templates or other documents change after validation. Therefore, I recommend that you combine CloudFormation Guard with a more authoritative mechanism.

One such mechanism is CloudFormation hooks, which you can use to validate AWS resources before you deploy them. You can configure hooks to cancel the deployment process with an alert if CloudFormation templates aren’t compliant, or just initiate an alert but complete the process. To learn more about CloudFormation hooks, see the following blog posts:

- Proactively keep resources secure and compliant with AWS CloudFormation Hooks

- How AWS Control Tower users can proactively verify compliance in AWS CloudFormation stacks

CloudFormation hooks provide a way to authoritatively enforce rules for resources deployed through CloudFormation. However, they don’t control resource creation that occurs outside of CloudFormation, such as through the console, CLI, SDK, or API. Terraform is one example that provisions resources directly through the AWS API rather than through CloudFormation templates. Because of this, I recommend that you implement additional detective controls by using AWS Config. AWS Config can continuously check resource configurations after deployment, regardless of the provisioning method. Using AWS Config rules complements the preventative capabilities of CloudFormation hooks.

Preventative controls

Preventative controls can help maintain compliance by applying guardrails that disallow policy-violating actions. AWS Control Tower integrates with AWS Organizations to implement preventative controls with SCPs. By using SCPs, you can restrict IAM permissions granted in a given organization or organizational unit (OU). One example of this is the selective activation of certain AWS Regions to meet data residency requirements.
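As a minimal sketch of the Region example, the following Python snippet builds an SCP document that denies requests outside a list of approved Regions by using the `aws:RequestedRegion` condition key. The approved Regions and the list of exempted global services are assumptions and are deliberately incomplete. A production policy needs a carefully reviewed exemption list, because some services are only reachable through a specific Region endpoint.

```python
import json


def build_region_scp(allowed_regions, exempt_actions):
    """Build an SCP that denies actions requested outside the allowed Regions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideAllowedRegions",
                "Effect": "Deny",
                # Actions listed here are not affected by the deny statement.
                "NotAction": exempt_actions,
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {"aws:RequestedRegion": allowed_regions}
                },
            }
        ],
    }


# Illustrative values only.
ALLOWED_REGIONS = ["eu-central-1", "eu-west-1"]
EXEMPT_ACTIONS = ["iam:*", "organizations:*", "sts:*", "support:*"]

if __name__ == "__main__":
    print(json.dumps(build_region_scp(ALLOWED_REGIONS, EXEMPT_ACTIONS), indent=2))
```

An SCP like this only sets an upper bound on permissions. It doesn't grant access by itself, so principals in the affected accounts still need IAM policies that allow the actions they use.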

SCPs are particularly valuable for managing IAM permissions across large environments with multiple AWS accounts. Organizations with many accounts might find it challenging to monitor and control IAM permissions. SCPs help address this challenge by applying centralized permission guardrails automatically to the accounts of an organization or organizational unit (OU). As new accounts are added, the SCPs are enforced without the need for extra configuration.

You can define SCPs through CloudFormation or CDK templates and deploy them through a CI/CD pipeline, similar to other AWS resources. Because misconfigured SCPs can negatively affect an organization’s operations, it’s vital that you test and simulate the effects of new policies in a sandbox environment before broader deployment. For an example of how to implement a pipeline for SCP testing, see the aws-service-control-policies-deployment GitHub repository.

To learn more about SCPs and how to implement them, see Service control policies (SCPs) and Best Practices for AWS Organizations Service Control Policies in a Multi-Account Environment.

Detective controls

Detective controls help detect noncompliance in existing resources. You can implement detective controls by using AWS Config rules, with both managed rules (provided by AWS) and custom rules available. You can implement custom rules either by using the domain-specific language Guard or by using AWS Lambda functions. To learn more about the Guard option, see Evaluate custom configurations using AWS Config Custom Policy rules and the open source sample repository. For guidance on creating custom rules using Lambda functions, see AWS Config Rule Development Kit library: Build and operate rules at scale and Deploying Custom AWS Config Rules in an AWS Organization Environment.
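To give an impression of the Lambda option, the following Python sketch shows the core of a custom rule that marks a resource as noncompliant when a required tag is missing. The tag key `owner` and the function names are hypothetical, and the sketch leaves out details such as handling deleted resources or oversized configuration items. The evaluation logic is kept as a pure function. The commented handler shows how the result might be reported with the `put_evaluations` operation of the boto3 `config` client.

```python
import json

REQUIRED_TAG = "owner"  # hypothetical governance rule: every resource needs an owner tag


def evaluate_compliance(configuration_item):
    """Return the compliance type for a single configuration item."""
    tags = configuration_item.get("tags") or {}
    return "COMPLIANT" if REQUIRED_TAG in tags else "NON_COMPLIANT"


def build_evaluation(invoking_event_json):
    """Turn the invokingEvent JSON string from AWS Config into an evaluation record."""
    item = json.loads(invoking_event_json)["configurationItem"]
    return {
        "ComplianceResourceType": item["resourceType"],
        "ComplianceResourceId": item["resourceId"],
        "ComplianceType": evaluate_compliance(item),
        "OrderingTimestamp": item["configurationItemCaptureTime"],
    }


# Possible Lambda handler (requires boto3 and runs inside AWS Lambda):
#   import boto3
#   def lambda_handler(event, context):
#       evaluation = build_evaluation(event["invokingEvent"])
#       boto3.client("config").put_evaluations(
#           Evaluations=[evaluation], ResultToken=event["resultToken"]
#       )
```

Keeping the compliance check separate from the AWS API call makes the rule easy to unit test in a CI/CD pipeline before it is deployed.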

To simplify audits for compliance frameworks such as PCI-DSS, HIPAA, and SOC 2, AWS Config also offers conformance packs that bundle rules and remediation actions. To learn more about conformance packs, see Conformance Packs and Introducing AWS Config Conformance Packs.

When a resource’s configuration shifts to a noncompliant state that preventative controls didn’t avert, detective controls can help remedy the noncompliant state by implementing predefined actions, such as alerting an operator or reconfiguring the resource. You can implement these controls with AWS Config, which integrates with AWS Systems Manager Automation to help enable the remediation of noncompliant resources.

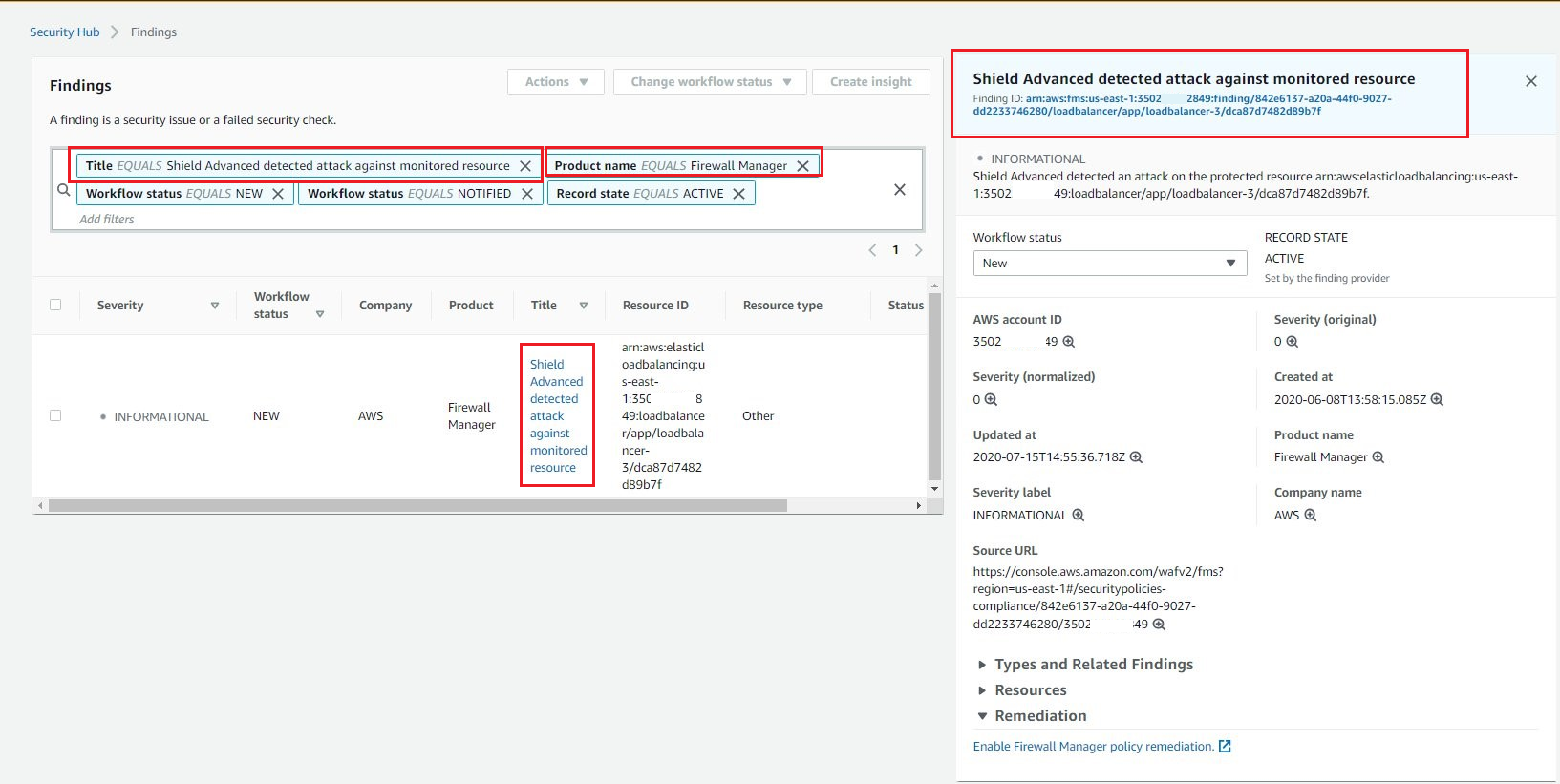

Security Hub can help centralize the detection of noncompliant resources across multiple AWS accounts. Using AWS Config and third-party tools for detection, Security Hub sends findings of noncompliance to Amazon EventBridge, which can then send notifications or launch automated remediations. You can also use the security controls and standards in Security Hub to monitor the configuration of your AWS infrastructure. This complements the conformance packs in AWS Config.
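As a rough sketch of how such findings might be selected in EventBridge, the following Python snippet builds an event pattern that matches imported Security Hub findings whose compliance status is `FAILED` and whose workflow status is `NEW`. The function name and the choice of filters are assumptions. The resulting JSON string could be supplied as the event pattern of an EventBridge rule whose target sends a notification or starts a remediation.

```python
import json


def build_failed_findings_pattern():
    """Build an EventBridge event pattern for new, failed Security Hub findings."""
    return {
        "source": ["aws.securityhub"],
        "detail-type": ["Security Hub Findings - Imported"],
        "detail": {
            "findings": {
                "Compliance": {"Status": ["FAILED"]},
                "Workflow": {"Status": ["NEW"]},
            }
        },
    }


if __name__ == "__main__":
    # EventBridge expects the pattern as a JSON string.
    print(json.dumps(build_failed_findings_pattern(), indent=2))
```

Filtering on the workflow status is a design choice that helps avoid repeated notifications for findings that someone is already working on.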

Conclusion

Many large and fast-growing organizations find that manual IT governance processes are difficult to scale and can hinder growth. Policy-as-code services help to manage permissions and resource configurations at scale by automating key IT governance processes and, at the same time, increasing the quality and transparency of those processes. This helps to reconcile large environments with key governance objectives such as compliance.

In this post, you learned how to use policy as code to enhance IT governance. A first step is to activate AWS Control Tower, which provides preconfigured guardrails (SCPs) for each AWS account within an organization. These guardrails help enforce baseline compliance across infrastructure. You can then layer on additional controls to further strengthen governance in line with your needs. As a second step, you can select AWS Config conformance packs and Security Hub standards to complement the controls that AWS Control Tower offers. Finally, you can secure applications built on AWS by using Amazon Verified Permissions and Cedar for fine-grained authorization.

Resources

- AWS Control Tower Controls Guide

- AWS Well-Architected Framework – Management and Governance Cloud Environment Guide

- CDK Pipelines: Continuous delivery for AWS CDK applications

- AWS Config Developer Guide

- AWS Security Hub User Guide
