Post Syndicated from Junho Choi original https://blog.cloudflare.com/delivering-http-2-upload-speed-improvements/

Cloudflare recently shipped improved upload speeds across our network for clients using HTTP/2. This post describes our journey from troubleshooting an issue to fixing it and delivering faster upload speeds to the global Internet.

We launched speed.cloudflare.com in May 2020 to give our users insight into how well their networks perform. The test provides download, upload and latency tests. Soon after release, we received reports from a small number of users that upload speeds were sometimes underreported. Our investigation determined that this seemed to happen with end users who had high upload bandwidth available (cable modem or fiber service in the several-hundred-Mbps class). Our speed tests are performed via browser JavaScript, and most browsers use HTTP/2 by default. We found that HTTP/2 upload speeds were sometimes much slower than HTTP/1.1 (both over TLS) when the user had high available upload bandwidth.

Upload speed is more important than ever, especially for people using home broadband connections. As many people have been forced to work from home, they’re using their broadband connections differently than before. Prior to the pandemic, broadband traffic was very asymmetric (you downloaded way more than you uploaded… think listening to music, or streaming a movie), but now we’re seeing an increase in uploading as people video conference from home or create content from their home office.

Initial Tests

User reports were focused on particularly fast home networks. I set up a dummynet network simulator to test upload speed in a controlled environment. I launched a Linux VM running our code inside my MacBook Pro and set up a dummynet between the VM and the Mac host. Measuring upload speed is simple: create a file and upload it using curl to an endpoint which accepts a request body. I ran the same test 20 times and took the median upload speed (Mbps).

% dd if=/dev/urandom of=test.dat bs=1M count=10

% curl --http1.1 -w '%{speed_upload}\n' -sf -o/dev/null --data-binary @test.dat https://edge/upload-endpoint

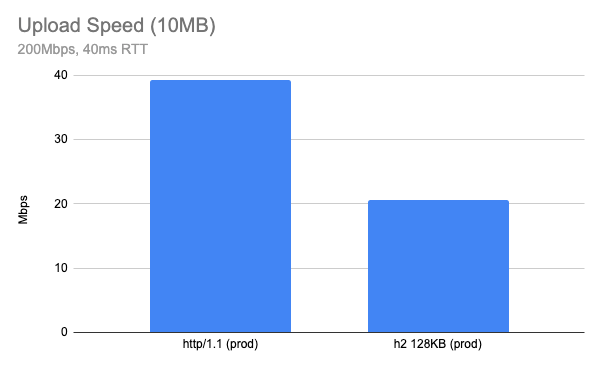

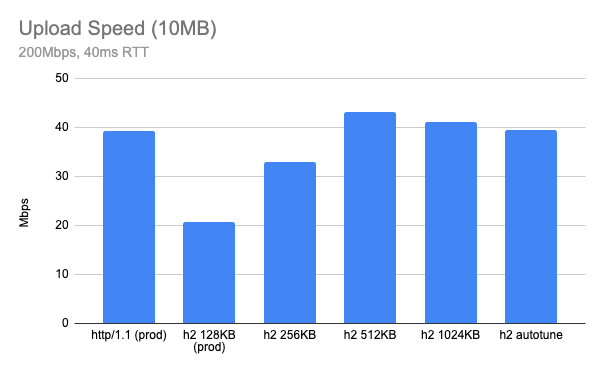

% curl --http2 -w '%{speed_upload}\n' -sf -o/dev/null --data-binary @test.dat https://edge/upload-endpoint

When uploading a 10MB object over a network with 200Mbps available bandwidth and 40ms RTT, the result was surprising. Using our production configuration, HTTP/2 upload speed was almost half that of HTTP/1.1 under the same test conditions (higher is better).

The result may differ depending on your network, but the gap is bigger when the network is fast. On a slow network, like my home cable connection (5Mbps upload and 20ms RTT), HTTP/2 upload speed was almost identical to the performance observed with HTTP/1.1.
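The repeat-and-take-the-median procedure described above could be scripted roughly as follows. This is a minimal sketch, not the harness used for the charts: the function names are made up for illustration, and the URL and file path are whatever you pass in. It relies on curl's `%{speed_upload}` write-out variable, which reports bytes per second, so the value is converted to Mbps.

```python
import statistics
import subprocess


def bytes_per_sec_to_mbps(bytes_per_sec: float) -> float:
    """Convert curl's speed_upload (bytes/second) to megabits/second."""
    return bytes_per_sec * 8 / 1_000_000


def measure_upload_mbps(url: str, path: str, http_flag: str = "--http2") -> float:
    """Run one curl upload and return the reported upload speed in Mbps."""
    result = subprocess.run(
        ["curl", http_flag, "-w", "%{speed_upload}\n", "-sf",
         "-o", "/dev/null", "--data-binary", f"@{path}", url],
        check=True, capture_output=True, text=True,
    )
    # The response body goes to /dev/null, so stdout holds only the -w output.
    return bytes_per_sec_to_mbps(float(result.stdout.strip()))


def median_upload_mbps(url: str, path: str,
                       http_flag: str = "--http2", runs: int = 20) -> float:
    """Repeat the upload `runs` times and return the median speed in Mbps."""
    return statistics.median(
        measure_upload_mbps(url, path, http_flag) for _ in range(runs)
    )
```

Calling `median_upload_mbps("https://edge/upload-endpoint", "test.dat", "--http1.1")` and then again with `"--http2"` would reproduce the comparison. The median is used rather than the mean so that a single unusually slow or fast run does not skew the result.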

Receiver Flow Control

Before getting into more detail, my intuition suggested the issue was related to receiver flow control. Usually the client (browser or any HTTP client) is the receiver of data, but when the client is uploading content to the server, the server is the receiver of data. And the receiver needs some type of flow control for its receive buffer.

How we handle receiver flow control differs between HTTP/1.1 and HTTP/2. HTTP/1.1 doesn’t define protocol-level receiver flow control, since there is no multiplexing of requests in the connection; flow control is left to the TCP layer, which handles receiving data. Note that most modern OS TCP stacks autotune the receive buffer (we will revisit that later), and they tune it based on the current BDP (bandwidth-delay product).

In the case of HTTP/2, there is a stream-level flow control mechanism because the protocol supports multiplexing of streams. Each HTTP/2 stream has its own flow control window, and there is also connection-level flow control covering all streams in the connection. If the window is too tight, the sender will be blocked by flow control. If it’s too loose, we may end up wasting memory on buffering. So keeping it well sized is important when implementing flow control, and the best strategy is to keep the receive buffer matched to the current BDP. BDP represents the maximum number of bytes in flight on a given network path and can be used as the optimal buffer size.
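To make the BDP idea concrete, here is a small worked calculation for the network conditions mentioned in this post. It is only arithmetic on the definition (bandwidth multiplied by round-trip time); the function name is made up for illustration.

```python
def bdp_bytes(bandwidth_mbps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product in bytes.

    Mbps * 1e6 gives bits/second and ms / 1e3 gives seconds, so
    bits in flight = Mbps * ms * 1000; dividing by 8 gives bytes,
    which simplifies to Mbps * ms * 125.
    """
    return bandwidth_mbps * rtt_ms * 125


# Network conditions taken from the tests described in this post.
for mbps, rtt in [(200, 40), (200, 10), (5, 20)]:
    bdp = bdp_bytes(mbps, rtt)
    print(f"{mbps} Mbps, {rtt} ms RTT: BDP = {bdp:,.0f} bytes ({bdp / 1024:.1f} KiB)")
```

For the 200Mbps, 40ms lab link this gives 1,000,000 bytes (roughly 977 KiB), for 200Mbps at 10ms it gives 250,000 bytes (roughly 244 KiB), and for the 5Mbps, 20ms home connection only 12,500 bytes (roughly 12 KiB). A receive window in the low hundreds of KB is therefore ample on the slow link but can be well short of the BDP on the fast one.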

How NGINX handles the request body buffer

Initially I tried to find a parameter which controls NGINX upload buffering and tried to see if tuning the values improved the result. There are a couple of parameters which are related to uploading a request body: proxy_buffering and client_body_buffer_size.

And this one is HTTP/2 specific: http2_body_preread_size.

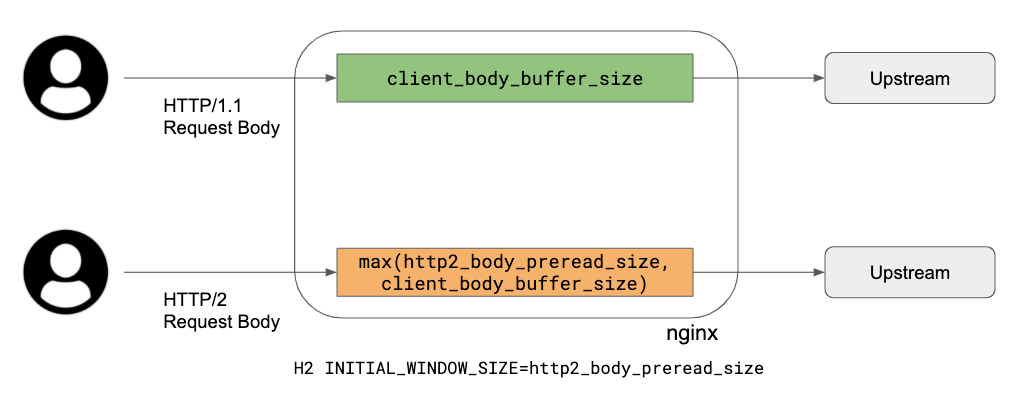

Cloudflare does not use the proxy_buffering directive, so it can be immediately discounted. client_body_buffer_size is the size of the request body buffer, which is used regardless of the protocol, so it applies to both HTTP/1.1 and HTTP/2.

When looking into the code, here is how it works:

- HTTP/1.1: use the client_body_buffer_size buffer as a buffer between upstream and the client, simply repeating reading and writing using this buffer.
- HTTP/2: since we need a flow control window update for the HTTP/2 DATA frame, there are two parameters:
  - http2_body_preread_size: it specifies the size of the initial request body read before it starts to send to the upstream.
  - client_body_buffer_size: it specifies the size of the request body buffer.
- Those two parameters are used for allocating a request body buffer during uploading. Here is a brief summary of how unbuffered upload works:
  - Allocate a single request body buffer whose size is the maximum of http2_body_preread_size and client_body_buffer_size. This means if http2_body_preread_size is 64KB and client_body_buffer_size is 128KB, then a 128KB buffer is allocated. We use 128KB for client_body_buffer_size.
  - The HTTP/2 Settings INITIAL_WINDOW_SIZE of the stream is set to http2_body_preread_size, and we use 64KB as a default (the RFC7540 default value).
  - The HTTP/2 module reads up to http2_body_preread_size before sending it to upstream.
  - After flushing the preread buffer, keep reading from the client and writing to upstream, sending a WINDOW_UPDATE frame back to the client when necessary, until the request body is fully received.

To summarise what this means: HTTP/1.1 simply uses a single buffer, so TCP socket buffers do the flow control. However with HTTP/2, the application layer also has receiver flow control and NGINX uses a fixed size buffer for the receiver. This limits upload speed when the current link has a BDP larger than the current request body buffer size. So the bottleneck is HTTP/2 flow control when the buffer size is too tight.
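The effect of a fixed-size buffer can be approximated with a back-of-the-envelope model. The sketch below is not NGINX code. It assumes, as a simplification, that the sender can have at most one buffer's worth of data in flight per round trip, so throughput is capped at window / RTT. Real behaviour depends on exactly when WINDOW_UPDATE frames are sent, so treat the numbers as a rough ceiling rather than a prediction of the measured results. The function names are made up for illustration.

```python
KIB = 1024


def request_body_buffer_size(http2_body_preread_size: int,
                             client_body_buffer_size: int) -> int:
    """Buffer size as described above: the larger of the two settings."""
    return max(http2_body_preread_size, client_body_buffer_size)


def window_limited_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Rough throughput ceiling in Mbps, assuming one window per round trip.

    window_bytes * 8 bits every rtt_ms milliseconds, expressed in Mbps.
    """
    return window_bytes * 8 / (rtt_ms * 1000)


buf = request_body_buffer_size(64 * KIB, 128 * KIB)
for rtt_ms in (40, 10):
    print(f"{buf // KIB} KiB buffer, {rtt_ms} ms RTT: "
          f"ceiling of about {window_limited_mbps(buf, rtt_ms):.1f} Mbps")
```

Under this simplified model, a 128KB buffer caps a 40ms path at roughly 26 Mbps and a 10ms path at roughly 105 Mbps, both below the 200Mbps available in the lab setup. That is the sense in which flow control, rather than the network, becomes the bottleneck.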

We’re going to need a bigger buffer?

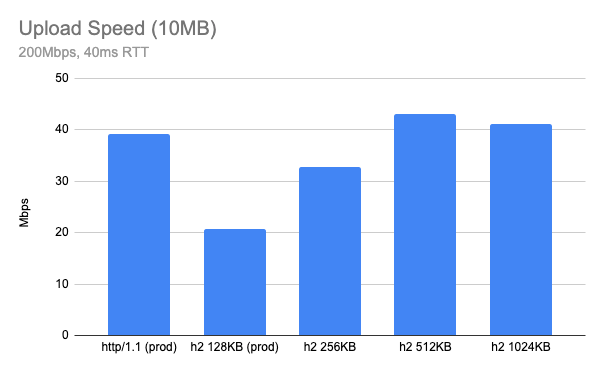

In theory, bigger buffer sizes should avoid upload bottlenecks, so I tried a few out by running my tests again. The previous chart result is now labelled “prod” and plotted alongside HTTP/2 tests with client_body_buffer_size set to 256KB, 512KB and 1024KB:

It appears 512KB is an optimal value for client_body_buffer_size.

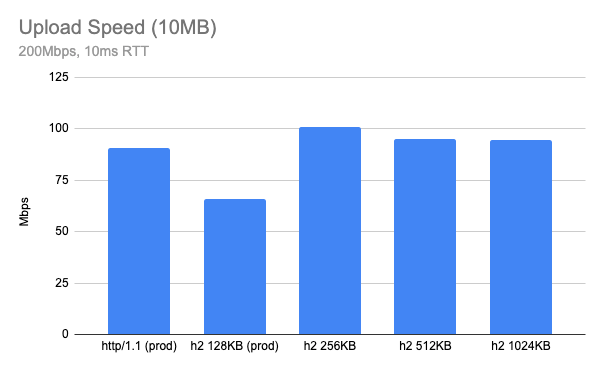

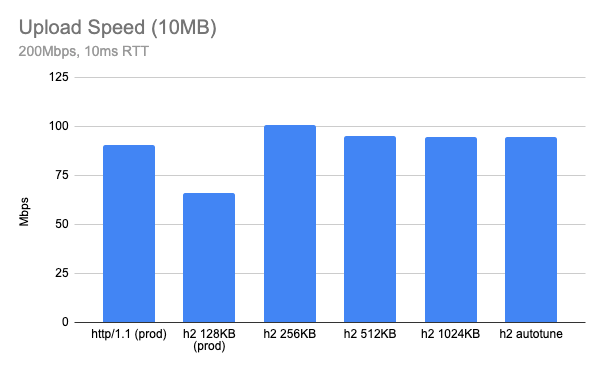

What if I test with a different network parameter? Here is the result when the RTT is 10ms. In this case, 256KB looks optimal.

Both cases look much better than the current 128KB and perform similarly to HTTP/1.1 or even better. However, it seems like the optimal buffer size is a moving target, and furthermore, having too large a buffer can hurt performance: we need a smart way to find the optimal buffer size.

Autotuning request body buffer size

One of the ideas that can help in this kind of situation is autotuning. For example, modern TCP stacks autotune their receive buffers. In production, our edge also has TCP receive buffer autotuning enabled by default.

net.ipv4.tcp_moderate_rcvbuf = 1

But in the case of HTTP/2, TCP buffer autotuning is not very effective because the HTTP/2 layer is doing its own flow control and the existing 128KB was too small for a high BDP link. At this point, I decided to pursue autotuning HTTP/2 receive buffer sizing as well, similar to what TCP does.

The basic idea is that NGINX doubles the HTTP/2 request body buffer size based on the connection’s BDP. Here is the algorithm currently implemented in our version of NGINX:

- Allocate a request body buffer as explained above.
- For every RTT (using Linux tcp_info), update the current BDP.
- Double the request body buffer size when the current BDP > (receiver window / 4).
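The three steps above can be sketched as a small simulation. This is an illustration of the doubling rule only, not the code running in our NGINX. Several things in it are assumptions: the BDP estimate is passed in directly rather than derived from tcp_info, the "receiver window" is taken to be the current buffer size, and the upper bound `max_size` is a hypothetical safety cap that the description above does not mention.

```python
from dataclasses import dataclass

KIB = 1024


@dataclass
class AutotunedBuffer:
    """Toy model of a request body buffer that grows with the measured BDP."""
    size: int = 128 * KIB             # initial size (client_body_buffer_size)
    max_size: int = 16 * 1024 * KIB   # hypothetical cap, not from the post

    def on_rtt(self, bdp_bytes: float) -> int:
        """Call once per RTT with the latest BDP estimate; returns the new size."""
        # Double when the BDP exceeds a quarter of the current window.
        if bdp_bytes > self.size / 4 and self.size < self.max_size:
            self.size = min(self.size * 2, self.max_size)
        return self.size


# Assume a constant BDP of 1,000,000 bytes (200 Mbps at 40 ms RTT).
buf = AutotunedBuffer()
history = [buf.on_rtt(1_000_000) for _ in range(8)]
print([size // KIB for size in history])
```

With a constant 1,000,000-byte BDP, the buffer in this model doubles once per RTT and settles at 4096 KiB after five round trips, the first size whose quarter exceeds the BDP. The measured BDP on a real connection changes over time, so actual growth will not follow this exact sequence.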

Test Result

Lab Test

Here is a test result when HTTP/2 autotune upload is enabled (still using a client_body_buffer_size of 128KB). You can see “h2 autotune” is doing pretty well: similar to or even slightly faster than HTTP/1.1 (which was the initial goal). It might be slightly worse than a hand-picked optimal buffer size for given conditions, but you can see that NGINX now picks a suitable buffer size automatically based on network conditions.

Production test

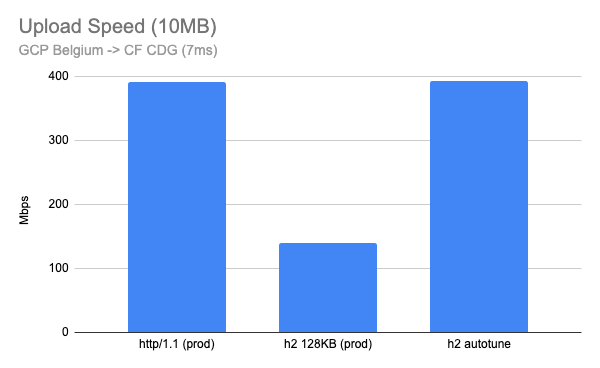

After we deployed this feature, I ran similar tests against our production edge, uploading a 10MB file from well connected client nodes to our edge. I created a Linux VM instance in Google Cloud and ran the upload test where the network is very fast (a few Gbps) and low latency (<10ms).

Here is the result when I ran the test from the Google Cloud Belgium region to our CDG (Paris) PoP, which has a 7ms RTT. This looks very good, with an almost 3x improvement.

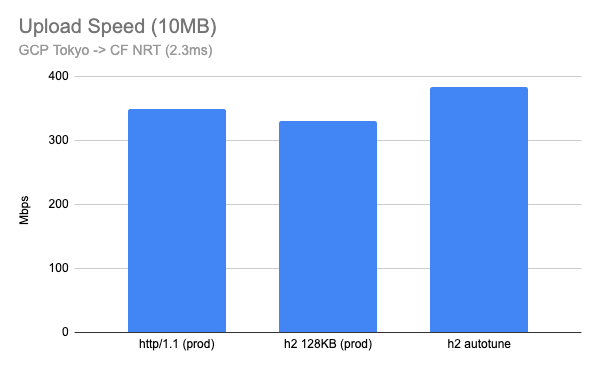

I also tested between the Google Cloud Tokyo region and our NRT (Tokyo) PoP, which had a 2.3ms RTT. Although this is not realistic for home users, the results are interesting. A 128KB fixed buffer performs well, but HTTP/2 with buffer autotune outperforms even HTTP/1.1.

Summary

HTTP/2 upload buffer autotuning is now fully deployed in the Cloudflare edge. Customers should now benefit from improved upload performance for all HTTP/2 connections, including speed tests on speed.cloudflare.com. The upload buffer autotuning logic works well for most cases, and HTTP/2 upload is now much faster than before! When we think about performance, we usually tend to think about download speed or latency reduction, but faster uploading can also help users working from home when they need to upload large amounts of data, such as with photo/video sharing apps, content creation, video conferencing or self broadcasting.

Many thanks to Lucas Pardue and Rustam Lalkaka for great feedback and suggestions on the article.