Post Syndicated from Sue Sentance original https://www.raspberrypi.org/blog/ai-ethics-lessons-education-children-research/

Between September 2021 and March 2022, we’re partnering with The Alan Turing Institute to host a series of free research seminars about AI and data science education for young people, with speakers from the UK, Finland, Germany, and the USA. These rapidly developing technologies have a huge and growing impact on our lives, so it’s important for young people to understand them from both a technical and a societal perspective, and for educators to learn how best to support them in gaining this understanding.

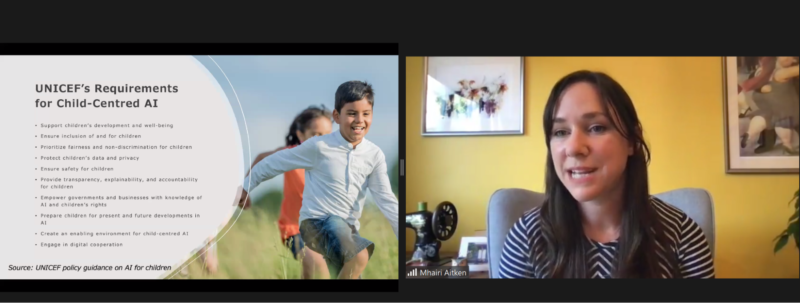

In our first seminar we were beyond delighted to hear from Dr Mhairi Aitken, Ethics Fellow at The Alan Turing Institute. Mhairi is a sociologist whose research examines social and ethical dimensions of digital innovation, particularly relating to uses of data and AI. You can catch up on her full presentation and the Q&A with her in the seminar video recording.

Why we need AI ethics

The increased use of AI in society and industry is bringing some amazing benefits. In healthcare, for example, AI can facilitate early diagnosis of life-threatening conditions and make surgery more accurate through robotics. AI technology is also already being used in housing, financial services, social services, retail, and marketing. Concerns have been raised about the ethical implications of some aspects of these technologies, and Mhairi introduced us to the topic with examples of several controversies.

“Ethics considers not what we can do but rather what we should do — and what we should not do.”

Mhairi Aitken

One such controversy took place in England during the coronavirus pandemic, when an AI system was used to make decisions about the school grades awarded to students. The system’s algorithm drew on the grades that other students at the same school had received in previous years to raise or lower the grades given by teachers. This was seen as deeply unfair, and it raised public awareness of the real-life impact that AI decision-making systems can have.

Another high-profile controversy, explored in Shalini Kantayya’s documentary Coded Bias, was caused by biased facial recognition systems based on machine learning. Such systems have been shown to be much better at recognising a white male face than a black female one, demonstrating the inequitable impact of the technology.

What should AI be used for?

There is a clear need to consider both the positive and negative impacts of AI in society. Mhairi stressed that using AI effectively and ethically is not just about mitigating negative impacts but also about maximising benefits. She told us that bringing ethics into the discussion means moving on from what AI applications can do to what they should and should not do. To show how ethics can be applied to AI, Mhairi first set out four key ethical principles:

- Beneficence (do good)

- Nonmaleficence (do no harm)

- Autonomy

- Justice

Mhairi shared a number of concrete questions that ethics raises about new technologies, including AI:

- How do we ensure the benefits of new technologies are experienced equitably across society?

- Do AI systems lead to discriminatory practices and outcomes?

- Do new forms of data collection and monitoring threaten individuals’ privacy?

- Do new forms of monitoring lead to a Big Brother society?

- To what extent are individuals in control of the ways they interact with AI technologies or how these technologies impact their lives?

- How can we protect against unjust outcomes, ensuring AI technologies do not exacerbate existing inequalities or reinforce prejudices?

- How do we ensure diverse perspectives and interests are reflected in the design, development, and deployment of AI systems?

Who gets to inform AI systems? The kangaroo metaphor

To mitigate negative impacts and maximise the benefits of an AI system in practice, it’s crucial to consider the context in which the system is developed and used. Mhairi illustrated this point with the story of a self-driving car developed in Sweden in 2017. The car had been thoroughly safety-tested in Sweden, including tests of its ability to recognise wild animals that may cross its path, such as elk and moose. However, when the car was used in Australia, it was not able to recognise kangaroos that hopped into the road! Because the system had not been tested with kangaroos during its development, it did not know what they were. As a result, the self-driving car’s safety and reliability decreased significantly when it was taken out of the context in which it had been developed, endangering both people and kangaroos.

Mhairi used the kangaroo example as a metaphor to illustrate ethical issues around AI: the creators of an AI system make certain assumptions about what the system needs to know and how it needs to operate, and these assumptions always reflect the positions, perspectives, and biases of the people and organisations that develop and train the system. AI creators therefore need to include metaphorical ‘kangaroos’ in the design and development of an AI system to ensure that the kangaroos’ perspectives inform the system. Mhairi highlighted children as an important group of ‘kangaroos’.

AI in children’s lives

AI may have far-reaching consequences in children’s lives, for example where it is used to make decisions about their access to resources and support. Mhairi explained the impact that AI systems are already having on young people’s lives through their use in education, in the apps children use, and in children’s lives as consumers.

Children can be taught not only that AI impacts their lives, but also that it can get things wrong and that it reflects human interests and biases. However, Mhairi was keen to emphasise that we need to find out what children know and want to know before we make assumptions about what they should be taught. Moreover, engaging children in discussions about AI is not only about them learning about AI; it’s also about ethical practice. What can people making decisions about AI learn from children by listening to their views and perspectives?

AI research that listens to children

UNICEF, the United Nations Children’s Fund, has expressed concerns about the impact of new AI technologies on children and young people. It has developed the UNICEF Requirements for Child-Centred AI.

Together with UNICEF, Mhairi and her colleagues working on the Ethics Theme in the Public Policy Programme at The Alan Turing Institute are engaged in new research to pilot the UNICEF Requirements for Child-Centred AI and to examine how these impact public sector uses of AI. A key aspect of this research is to hear from children themselves and to develop approaches to engage children to inform future ethical practices relating to AI in the public sector. The researchers hope to find out how we can best engage children and ensure that their voices are at the heart of the discussion about AI and ethics.

We all learned a tremendous amount from Mhairi and her work on this important topic. After her presentation, we had a lively discussion in which many participants relayed conversations they had had about AI ethics, shared their own concerns and experiences, and offered links to many resources. The Q&A with Mhairi is included in the video recording.

What we love about our research seminars is that everyone attending can share their thoughts, and as a result we learn so much from attendees as well as from our speakers!

It’s impossible to cover more than a tiny fraction of the seminar here, so I do urge you to take the time to watch the seminar recording. You can also catch up on our previous seminars through our blogs and videos.

Join our next seminar

We have six more seminars in our free series on AI, machine learning, and data science education, taking place on the first Tuesday of every month. At our next seminar on Tuesday 5 October at 17:00–18:30 BST / 12:00–13:30 EDT / 9:00–10:30 PDT / 18:00–19:30 CEST, we will welcome Professor Carsten Schulte, Yannik Fleischer, and Lukas Höper from the University of Paderborn, Germany, who will be presenting on the topic of teaching AI and machine learning (ML) from a data-centric perspective. Their talk will raise the question of whether, and how, AI and ML should be taught differently from other themes in the computer science curriculum at school.

Sign up now and we’ll send you the link to join on the day of the seminar — don’t forget to put the date in your diary.

I look forward to meeting you there!

In the meantime, we’re offering a brand-new, free online course that introduces machine learning with a practical focus — ideal for educators and anyone interested in exploring AI technology for the first time.

The post What’s a kangaroo?! AI ethics lessons for and from the younger generation appeared first on Raspberry Pi.