Post Syndicated from Janne Pikkarainen original https://blog.zabbix.com/whats-up-home-monitor-your-ad-blocker-with-zabbix/26912/

Can you monitor your ad blocker with Zabbix? Of course, you can!

API defines it all

My home Asus router runs the Asuswrt-Merlin firmware, and on top of that I run the AdGuard Home ad blocker.

As AdGuard Home has an API, monitoring it with Zabbix is trivial.

Communicate with the API

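Communicating with the AdGuard Home API is easy: pass it an Authorization: Basic XXXXXXXXXXXX header, where XXXXXXXXXXXX is simply the Base64 encoding of your AdGuard Home username and password joined by a colon. Base64 is an encoding, not a hash, so anyone who sees the string can decode it; treat it like the password itself. You can generate the Base64 string with, for example: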

echo -n "myuser:mypassword" | base64
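If you would rather build the header in a script, the following is a minimal Python sketch of the same step. The function name basic_auth_header is made up for illustration, and the credentials are the same placeholders as in the shell command.

```python
import base64


def basic_auth_header(username: str, password: str) -> str:
    """Return the value for an HTTP Basic Authorization header."""
    # Basic auth is "username:password", Base64-encoded.
    # This is a reversible encoding, not a hash.
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return f"Basic {token}"


if __name__ == "__main__":
    # Placeholder credentials, same as in the shell example.
    print("Authorization:", basic_auth_header("myuser", "mypassword"))
```

Decoding the token with base64.b64decode gives back the original username and password, which is why the header should be kept as secret as the password.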

Next, in Zabbix, create a new item of type HTTP agent, point its URL at your AdGuard Home instance, and add the Authorization header to the item’s headers.
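Before wiring this into Zabbix, it can help to send the same request by hand and look at what comes back. The sketch below is only an approximation of what the HTTP agent item does. The address http://192.168.1.1:3000 is hypothetical, and the /control/stats path is my assumption about where AdGuard Home serves its statistics, so check both against your own instance.

```python
import base64
import json
import urllib.request

# Assumptions for illustration: the address, port and endpoint path are not
# taken from the post. Adjust them to your own AdGuard Home instance.
ADGUARD_URL = "http://192.168.1.1:3000"
STATS_PATH = "/control/stats"


def build_stats_request(base_url: str, username: str, password: str) -> urllib.request.Request:
    """Build (but do not send) a GET request carrying the Basic auth header."""
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return urllib.request.Request(
        base_url.rstrip("/") + STATS_PATH,
        headers={"Authorization": f"Basic {token}"},
    )


def fetch_stats(base_url: str, username: str, password: str, timeout: float = 5.0) -> dict:
    """Send the request and parse the JSON body of the response."""
    request = build_stats_request(base_url, username, password)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


if __name__ == "__main__":
    # Placeholder credentials; replace with your own.
    print(json.dumps(fetch_stats(ADGUARD_URL, "myuser", "mypassword"), indent=2))
```

If the script prints a JSON document, the same URL and header should work in the HTTP agent item.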

Create some items

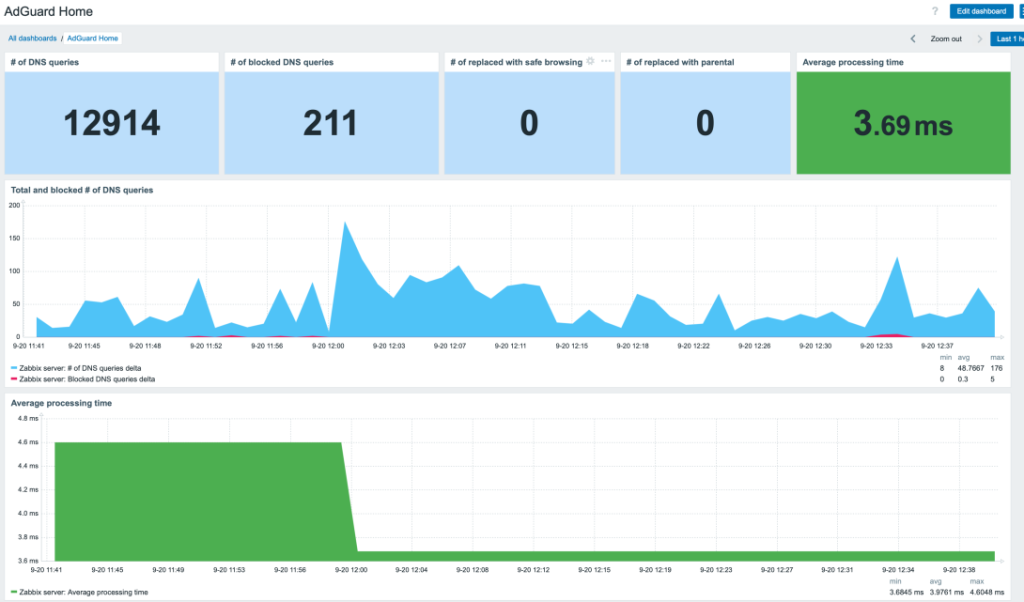

AdGuard Home returns its statistics as JSON, so next you can create dependent items on top of this master item and start monitoring. I only added:

- Total number of DNS requests

- Number of blocked DNS requests

- Redirects to safe search

- Requests blocked by parental control

- Average request processing time

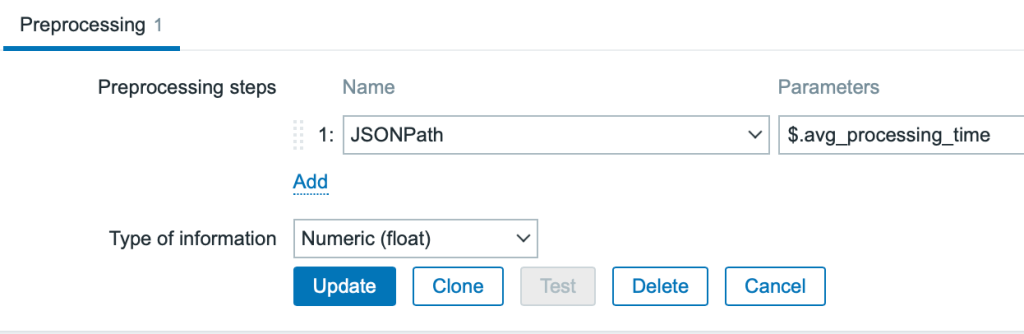

Each dependent item then needs just one JSONPath preprocessing step to pick its value out of the JSON.

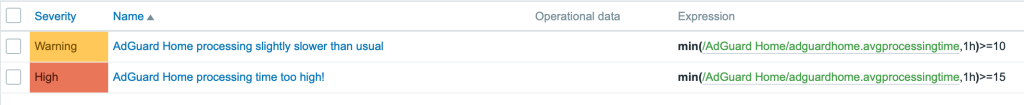
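To make the JSONPath step concrete, here is a small Python sketch that does what a preprocessing step such as $.num_dns_queries does: it takes the whole JSON document and returns one field. The sample response is made up, and the field names are my assumption of what the AdGuard Home statistics look like, so compare them with the JSON your own instance returns. The extract helper is hypothetical and handles only simple dotted paths, not full JSONPath.

```python
import json

# Made-up sample response. The field names are an assumption about the
# AdGuard Home statistics JSON; verify them against your own output.
SAMPLE = """
{
  "num_dns_queries": 12345,
  "num_blocked_filtering": 2345,
  "num_replaced_safesearch": 12,
  "num_replaced_parental": 3,
  "avg_processing_time": 0.0342
}
"""


def extract(document: dict, path: str):
    """Resolve a simple dotted JSONPath such as "$.num_dns_queries"."""
    if not path.startswith("$."):
        raise ValueError(f"unsupported path: {path}")
    value = document
    # Walk down one key at a time; a missing key raises KeyError.
    for key in path[2:].split("."):
        value = value[key]
    return value


if __name__ == "__main__":
    stats = json.loads(SAMPLE)
    for path in ("$.num_dns_queries", "$.num_blocked_filtering", "$.avg_processing_time"):
        print(path, "=", extract(stats, path))
```

In Zabbix each dependent item holds one such path, so a single HTTP request feeds all of the items.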

Add triggers

Next, I added a few triggers to alert me if AdGuard Home starts to run slower than usual.
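The post does not show the trigger expressions, so the following is only a sketch of one plausible rule: alert when the average of the most recent processing times goes above a fixed threshold. The function name is_slow, the sample values and the threshold are all hypothetical. In Zabbix the same idea would be written as a trigger expression that uses an averaging function over a time window.

```python
from statistics import mean
from typing import Sequence


def is_slow(samples: Sequence[float], threshold: float) -> bool:
    """Return True when the average of recent samples exceeds the threshold.

    samples:   recent average-processing-time values, in the item's own unit
    threshold: hypothetical limit in the same unit
    """
    if not samples:
        # No data yet: do not raise an alert.
        return False
    return mean(samples) > threshold


if __name__ == "__main__":
    # Made-up values for illustration only.
    recent = [0.03, 0.04, 0.25, 0.31]
    print("alert" if is_slow(recent, threshold=0.1) else "ok")
```

Averaging over a window rather than alerting on a single value keeps one slow DNS lookup from firing the trigger.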

Add service

Finally, I added AdGuard Home as a new business service, so I’ll get an SLA for it.

And that’s it! From now on I’ll know more about how well the ad blocker on my home router is working. (Well, the router also runs the Skynet firewall, which probably filters some traffic before it reaches AdGuard Home, but that’s another story...)

This post was originally published on the author’s page.

The post What’s Up, Home? – Monitor your ad blocker with Zabbix appeared first on Zabbix Blog.