Post Syndicated from Bobby Whyte original https://www.raspberrypi.org/blog/using-an-ai-code-generator-with-school-age-beginner-programmers/

AI models for general-purpose programming, such as OpenAI Codex, which powers the AI pair programming tool GitHub Copilot, have the potential to significantly impact how we teach and learn programming.

The basis of these tools is a ‘natural language to code’ approach, also called natural language programming. This allows users to generate code using a simple text-based prompt, such as “Write a simple Python script for a number guessing game”. Programming-specific AI models are trained on vast quantities of text data, including GitHub repositories, to enable users to quickly solve coding problems using natural language.
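To make the "natural language to code" idea concrete, here is the kind of Python a code generator might return for the example prompt above. It is a hand-written illustrative sketch, not actual output from OpenAI Codex, GitHub Copilot or Coding Steps. The function names `give_hint` and `play`, and the optional `ask` and `secret` parameters (added only so the game can be driven by a script instead of a keyboard), are our own hypothetical choices.

```python
import random
from typing import Callable, Optional


def give_hint(secret: int, guess: int) -> str:
    """Compare one guess with the secret number and return a hint."""
    if guess < secret:
        return "Too low!"
    if guess > secret:
        return "Too high!"
    return "Correct!"


def play(low: int = 1, high: int = 100,
         ask: Callable[[str], str] = input,
         secret: Optional[int] = None) -> int:
    """Play one round and return the number of valid guesses taken.

    Hypothetical parameters: `ask` defaults to input() and `secret`
    defaults to a random number; both can be overridden for testing.
    """
    if secret is None:
        secret = random.randint(low, high)  # both endpoints are included
    print(f"I'm thinking of a number between {low} and {high}.")
    attempts = 0
    while True:
        try:
            guess = int(ask("Your guess: "))
        except ValueError:
            print("Please enter a whole number.")
            continue  # invalid input does not count as an attempt
        attempts += 1
        hint = give_hint(secret, guess)
        print(hint)
        if hint == "Correct!":
            print(f"You got it in {attempts} attempts.")
            return attempts
```

To try it, call `play()` from a Python shell. Even a short program like this contains input validation, a loop and comparisons, which is exactly the code a beginner would need to read and understand before relying on it.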

As a computing educator, you might ask what the potential is for using these tools in your classroom. In our latest research seminar, Majeed Kazemitabaar (University of Toronto) shared his work in developing AI-assisted coding tools to support students during Python programming tasks.

Majeed argued that natural language programming can enable students to focus on the problem-solving aspects of computing, and can support them in fixing and debugging their code. However, he cautioned that students might become overdependent on 'AI assistants' and might not understand the code these tools generate. Nonetheless, Majeed and his colleagues were interested in exploring the impact of these code generators on students who are just starting to learn programming.

Using AI code generators to support novice programmers

In one study, Majeed's team investigated whether using an AI code generator affected students' task performance and learning. They split 69 students (aged 10–17) into two groups: one group used a code generator within Coding Steps, a programming environment that captured log data, and the other group worked without the code generator.

Learners who used the code generator completed significantly more authoring tasks (in which students write a program from scratch), finished them in less time, and produced significantly more correct solutions. In multiple-choice questions and modifying tasks (in which students were asked to modify a working program), students performed similarly whether or not they had access to the code generator.

A test was administered a week later to check the groups’ performance, and both groups did similarly well. However, the ‘code generator’ group made significantly more errors in authoring tasks where no starter code was given.

Majeed's team concluded that using the code generator significantly increased task completion rates and correctness when students authored code, and that it did not reduce their performance when they modified code manually.

Finally, students in the code generator group reported feeling less stressed and more eager to continue programming at the end of the study.

Understanding how novices use AI code generators

In a related study, Majeed and his colleagues investigated how novice programmers used the code generator and whether this usage impacted their learning. Working with data from 33 learners (aged 11–17), they analysed 45 tasks completed by students to understand:

- The context in which the code generator was used

- What learners asked for

- How prompts were written

- The nature of the outputted code

- How learners used the outputted code

Their analysis found that students used the code generator in the majority of task attempts (74%) and attempted far fewer tasks without it (26%). Of the attempts that used the code generator, 61% involved a single prompt, while only 8% involved decomposing the task into multiple prompts so that the code generator could solve each subgoal; 25% used a hybrid approach, with some subgoal solutions generated by AI and others written manually.

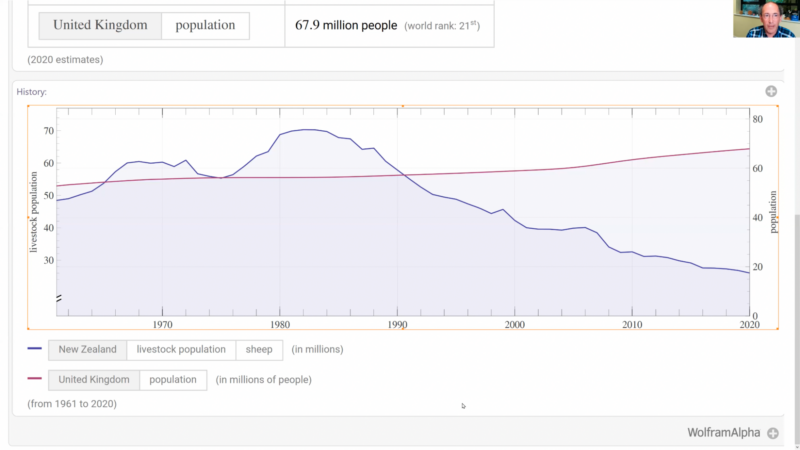

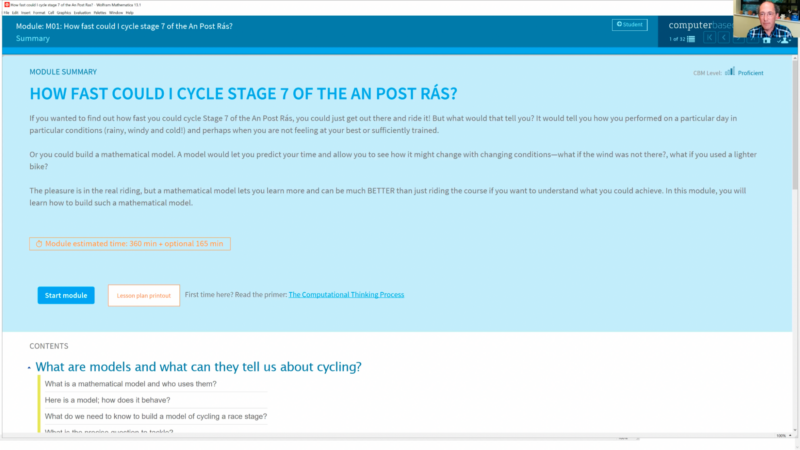

When the team compared students' approaches with their post-test scores, they found positive, though not statistically significant, trends for students who used a hybrid approach (see the images above). Conversely, they found negative, though also not statistically significant, trends for students who used a single-prompt approach.

Although these trends were not statistically significant, they hint that students who actively engaged with tasks (generating some subgoal solutions, manually writing others, and debugging their own code) performed better in coding tasks.

Majeed concluded that although the data showed evidence of self-regulation, such as students writing code manually or adding to AI-generated code, students frequently used the output of a single prompt in their solutions, which points to over-reliance on AI code generators.

He suggested that teachers should help novice programmers learn to write better-quality prompts, so that the code generator produces better code.

If you want to learn more, you can watch Majeed's seminar.

You can read more about Majeed’s work on his personal website. You can also download and use the code generator Coding Steps yourself.

Join our next seminar

The focus of our ongoing seminar series is on teaching programming with or without AI.

For our next seminar on Tuesday 16 April at 17:00–18:30 GMT, we're joined by Brett Becker (University College Dublin), who will discuss how generative AI can be used effectively in secondary school programming education and how it can help prepare students for whatever lies ahead. To take part in the seminar, sign up and we will send you information about joining. We hope to see you there.

The schedule of our upcoming seminars is online. You can catch up on past seminars on our previous seminars and recordings page.

The post Using an AI code generator with school-age beginner programmers appeared first on Raspberry Pi Foundation.