Post Syndicated from Igor Alekseev original https://aws.amazon.com/blogs/big-data/introducing-mongodb-atlas-metadata-collection-with-aws-glue-crawlers/

For data lake customers who need to discover petabytes of data, AWS Glue crawlers are a popular way to discover and catalog data in the background. This allows users to search and find relevant data from multiple data sources. Many customers also have data in managed operational databases such as MongoDB Atlas and need to combine it with data from Amazon Simple Storage Service (Amazon S3) data lakes to derive insights. AWS Glue crawlers now support MongoDB Atlas, making it simpler for you to understand MongoDB collections’ evolution and extract meaningful insights.

AWS Glue is a serverless data integration service that makes it simple to discover, prepare, move, and integrate data from multiple sources for analytics, machine learning (ML), and application development.

MongoDB Atlas is a developer data service from AWS technology partner MongoDB, Inc. The service combines transactional processing, relevance-based search, real-time analytics, and mobile-to-cloud data synchronization in an integrated architecture.

With today’s launch, you can create and schedule an AWS Glue crawler to crawl MongoDB Atlas. In the crawler setup, you can select MongoDB as a data source. You can then create an AWS Glue connection with MongoDB Atlas and provide the MongoDB Atlas cluster name and credentials. We walk you through this process in this post.

Solution overview

The following architecture illustrates how you can scan a MongoDB Atlas database and collections using AWS Glue.

With each run, the crawler inspects the specified collections and records their metadata in the AWS Glue Data Catalog, including updates or deletions to MongoDB Atlas collections, views, and materialized views. In AWS Glue Studio, you can then use the Data Catalog table as the source of a job that reads data from MongoDB Atlas and writes the results to an Amazon S3 target. With the data in Amazon S3, you can integrate with AWS services such as Amazon SageMaker, Amazon QuickSight, and more.

In the following sections, we describe how to create an AWS Glue crawler with MongoDB Atlas as a data source. We then create an AWS Glue connection and provide the MongoDB Atlas cluster information and credentials. Then we specify the MongoDB Atlas database and collections to crawl.

Prerequisites

To follow along with this post, you must have access to MongoDB Atlas and the AWS Management Console. We also assume you have access to a VPC with subnets preconfigured via Amazon Virtual Private Cloud (Amazon VPC). The crawler that we configure later in the post runs in the VPC and connects to MongoDB Atlas via an AWS PrivateLink endpoint.

Set up MongoDB Atlas

To configure MongoDB Atlas, complete the following steps:

- Configure a MongoDB cluster on AWS. For instructions, refer to How to Set Up a MongoDB Cluster.

- Configure PrivateLink by following the steps described in Connecting Applications Securely to a MongoDB Atlas Data Plane with AWS PrivateLink.

This allows us to simplify our networking architecture and make sure the traffic stays on the AWS network.
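If you prefer to script the AWS side of the PrivateLink setup, the following sketch builds the parameters for the EC2 `create_vpc_endpoint` call in boto3. It assumes you already have the endpoint service name that the Atlas private endpoint setup displays. The VPC, subnet, security group, and service name values are hypothetical placeholders. Atlas then asks for the resulting VPC endpoint ID to complete the connection.

```python
# Sketch: request parameters for an interface VPC endpoint that connects to the
# MongoDB Atlas PrivateLink endpoint service. All IDs and the service name used
# in the example are hypothetical placeholders.


def build_privatelink_endpoint_request(vpc_id, subnet_ids, security_group_ids, atlas_service_name):
    """Return keyword arguments for the EC2 CreateVpcEndpoint API."""
    if not subnet_ids:
        raise ValueError("at least one subnet ID is required")
    return {
        "VpcEndpointType": "Interface",  # PrivateLink endpoints are interface endpoints
        "VpcId": vpc_id,
        "ServiceName": atlas_service_name,  # copied from the Atlas private endpoint setup
        "SubnetIds": list(subnet_ids),
        "SecurityGroupIds": list(security_group_ids),
    }


def create_privatelink_endpoint(request, region_name):
    """Create the endpoint and return its ID (requires boto3 and AWS credentials)."""
    import boto3  # imported here so the request builder runs without boto3 installed

    ec2 = boto3.client("ec2", region_name=region_name)
    response = ec2.create_vpc_endpoint(**request)
    return response["VpcEndpoint"]["VpcEndpointId"]


request = build_privatelink_endpoint_request(
    vpc_id="vpc-0example",
    subnet_ids=["subnet-0example"],
    security_group_ids=["sg-0example"],
    atlas_service_name="com.amazonaws.vpce.us-east-1.vpce-svc-0example",
)
```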

Next, we obtain the MongoDB cluster connection string from the Connect UI on the MongoDB Atlas console.

- On the MongoDB Atlas console, choose Connect, Private Endpoint, and Connection Method.

- Copy the SRV connection string.

We use this SRV connection string in the subsequent steps.
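To see which parts of the SRV connection string matter, the following sketch splits it with Python's standard `urllib.parse`. The host name in the example is a hypothetical placeholder, and the `<username>:<password>` markers mirror the placeholders that Atlas shows. Rebuilding the URL without credentials assumes you enter the user name and password separately when you create the AWS Glue connection.

```python
from urllib.parse import parse_qs, urlsplit


def parse_srv_connection_string(uri):
    """Split a mongodb+srv:// URI into host, database, and options."""
    parts = urlsplit(uri)
    if parts.scheme != "mongodb+srv":
        raise ValueError(f"expected a mongodb+srv:// URI, got {parts.scheme!r}")
    if not parts.hostname:
        raise ValueError("the URI has no host")
    # DNS SRV records supply the ports, so an SRV URI must not specify one.
    if parts.port is not None:
        raise ValueError("mongodb+srv URIs must not include a port")
    database = parts.path.lstrip("/") or None
    # Keep the last value for each query option, e.g. retryWrites=true.
    options = {key: values[-1] for key, values in parse_qs(parts.query).items()}
    # Rebuild the URL without the user name and password.
    url = f"mongodb+srv://{parts.hostname}/{database or ''}"
    return {"host": parts.hostname, "database": database, "options": options, "url": url}


parsed = parse_srv_connection_string(
    "mongodb+srv://<username>:<password>@cluster0-pl-0.example.mongodb.net/?retryWrites=true&w=majority"
)
```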

The following screenshot shows that we have loaded a sample collection in MongoDB Atlas, which we crawl over in the next steps. Note that the records in this collection include several arrays as well as nested data.

Set up the MongoDB Atlas connection with AWS Glue

Before we can configure the AWS Glue crawler, we need to create the MongoDB Atlas connection in AWS Glue.

- On the AWS Glue Studio console, choose Connectors in the navigation pane.

- Choose Create connection.

- When filling out the connection details, use the SRV connection string we obtained earlier in MongoDB Atlas.

- In the Network options section, the VPC and subnets must correspond to the PrivateLink settings you configured earlier.
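The same connection can also be created with the AWS Glue `CreateConnection` API. The following sketch builds the `ConnectionInput` structure for a `MONGODB` connection type using the `CONNECTION_URL`, `USERNAME`, and `PASSWORD` connection properties. The connection name, credentials, subnet, security group, and Availability Zone are hypothetical placeholders that must match your PrivateLink setup, and the password is passed inline only to keep the example short.

```python
def build_mongodb_connection_input(name, srv_url, username, password,
                                   subnet_id, security_group_ids, availability_zone):
    """Return a ConnectionInput dict for the AWS Glue CreateConnection API."""
    return {
        "Name": name,
        "ConnectionType": "MONGODB",
        "ConnectionProperties": {
            "CONNECTION_URL": srv_url,  # the SRV connection string from Atlas
            "USERNAME": username,
            "PASSWORD": password,  # inline only for brevity
        },
        # Places the connection in a VPC subnet that can reach the PrivateLink endpoint.
        "PhysicalConnectionRequirements": {
            "SubnetId": subnet_id,
            "SecurityGroupIdList": list(security_group_ids),
            "AvailabilityZone": availability_zone,
        },
    }


def create_connection(connection_input, region_name):
    """Create the connection in AWS Glue (requires boto3 and AWS credentials)."""
    import boto3

    glue = boto3.client("glue", region_name=region_name)
    glue.create_connection(ConnectionInput=connection_input)


connection_input = build_mongodb_connection_input(
    name="mongodb-atlas-connection",
    srv_url="mongodb+srv://cluster0-pl-0.example.mongodb.net/",
    username="glue_reader",
    password="example-password",
    subnet_id="subnet-0example",
    security_group_ids=["sg-0example"],
    availability_zone="us-east-1a",
)
```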

Create a MongoDB crawler

After we create the connection, we can create an AWS Glue crawler.

- On the AWS Glue console, choose Crawlers in the navigation pane.

- Choose Create crawler.

- For Name, enter a name.

- For the data source, choose the MongoDB Atlas data source we configured earlier and supply the path that corresponds to the MongoDB Atlas database and collection, in the form database/collection.

- Configure your security settings, output, and scheduling.

- On the Crawlers page, choose Run crawler.
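Scripted, the crawler definition might look like the following sketch, which builds the parameters for the AWS Glue `CreateCrawler` API with a `MongoDBTargets` entry whose path takes the form `database/collection`. The crawler name, IAM role ARN, Data Catalog database, connection name, path, and daily schedule are hypothetical placeholders.

```python
def build_mongodb_crawler_request(name, role_arn, catalog_database, connection_name,
                                  paths, schedule=None, scan_all=True):
    """Return keyword arguments for the AWS Glue CreateCrawler API."""
    for path in paths:
        database, _, collection = path.partition("/")
        if not database or not collection or "/" in collection:
            raise ValueError(f"path must look like 'database/collection', got {path!r}")
    request = {
        "Name": name,
        "Role": role_arn,
        "DatabaseName": catalog_database,  # Data Catalog database that receives the tables
        "Targets": {
            "MongoDBTargets": [
                # ScanAll=False samples documents instead of reading every record.
                {"ConnectionName": connection_name, "Path": path, "ScanAll": scan_all}
                for path in paths
            ]
        },
    }
    if schedule:
        request["Schedule"] = schedule  # e.g. cron(0 2 * * ? *) runs daily at 02:00 UTC
    return request


def create_crawler(request, region_name):
    """Create the crawler in AWS Glue (requires boto3 and AWS credentials)."""
    import boto3

    glue = boto3.client("glue", region_name=region_name)
    glue.create_crawler(**request)


request = build_mongodb_crawler_request(
    name="mongodb-atlas-crawler",
    role_arn="arn:aws:iam::111122223333:role/ExampleGlueCrawlerRole",
    catalog_database="mongodb_catalog",
    connection_name="mongodb-atlas-connection",
    paths=["sample_db/sample_collection"],
    schedule="cron(0 2 * * ? *)",
)
```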

After the crawler finishes crawling the MongoDB collections, its status shows as Completed.

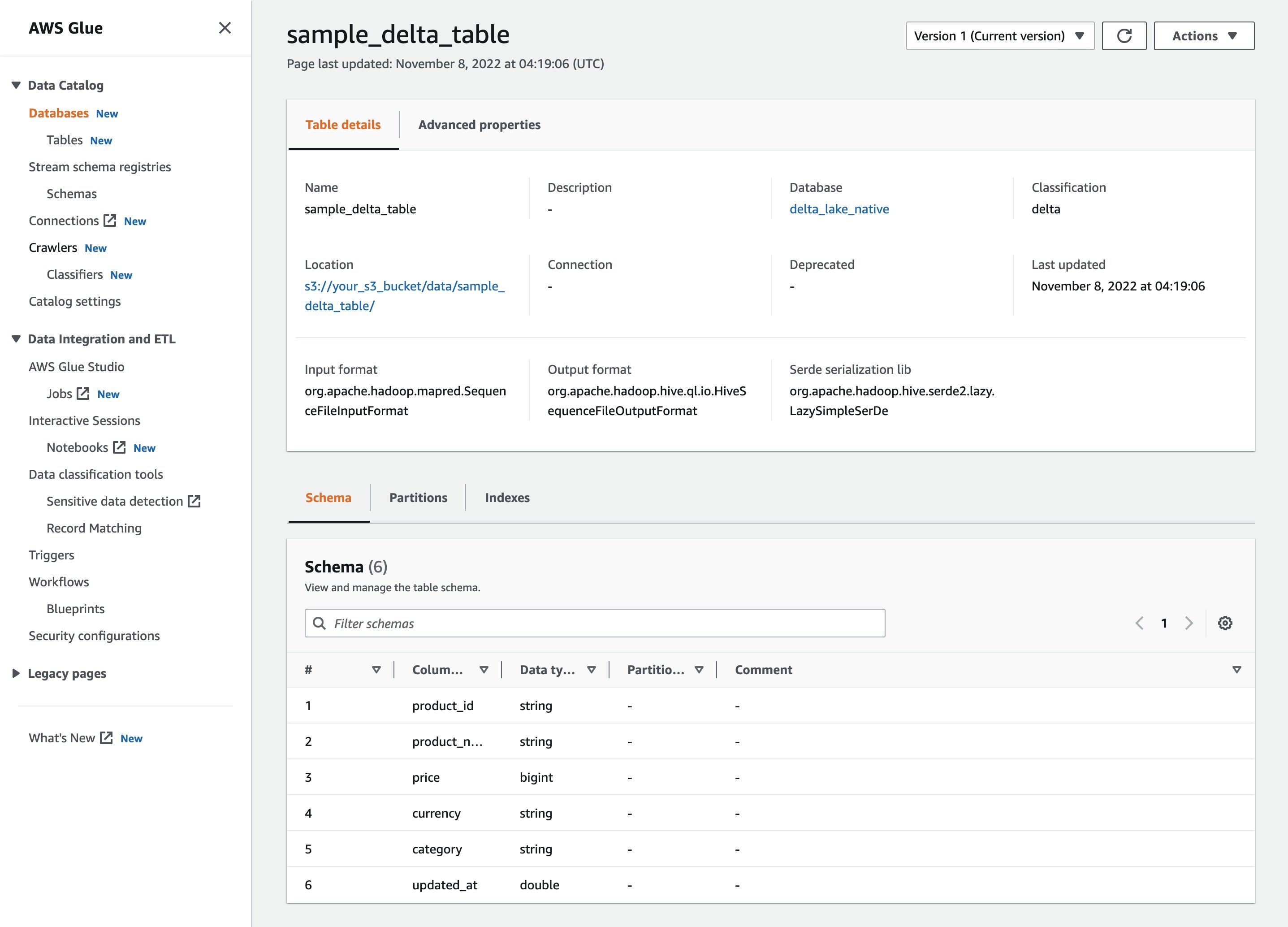
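To run the crawler and wait for it from a script instead of the console, you could poll the `GetCrawler` API as in the following sketch, which takes a boto3 Glue client. The crawler's `State` returns to `READY` when a run ends, and `LastCrawl.Status` then reports `SUCCEEDED`, `FAILED`, or `CANCELLED`; we assume `SUCCEEDED` corresponds to the Completed status shown in the console. The polling interval and timeout are arbitrary choices.

```python
import time


def last_crawl_outcome(get_crawler_response):
    """Return (state, last_status) from an AWS Glue GetCrawler response."""
    crawler = get_crawler_response["Crawler"]
    state = crawler["State"]  # READY, RUNNING, or STOPPING
    # LastCrawl is absent until the crawler has run at least once.
    last_status = crawler.get("LastCrawl", {}).get("Status")
    return state, last_status


def run_crawler_and_wait(glue, name, poll_seconds=30, timeout_seconds=3600):
    """Start the crawler, then poll until it is READY again; return the last run status."""
    glue.start_crawler(Name=name)
    deadline = time.monotonic() + timeout_seconds
    while time.monotonic() < deadline:
        # Sleep first so the crawler has time to leave the READY state.
        time.sleep(poll_seconds)
        state, last_status = last_crawl_outcome(glue.get_crawler(Name=name))
        if state == "READY":
            return last_status
    raise TimeoutError(f"crawler {name!r} did not finish within {timeout_seconds} seconds")
```

For example, `run_crawler_and_wait(boto3.client("glue"), "mongodb-atlas-crawler")` should return `"SUCCEEDED"` after a successful run of the hypothetical crawler from the previous sketch.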

Review the MongoDB AWS Glue database and table

We can navigate to the AWS Glue Data Catalog to examine the tables that were created by the crawler.

Choose the table to view the schema and other metadata.
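The same schema is available programmatically through the AWS Glue `GetTable` API. The following sketch reads the column names and types from a `GetTable` response and picks out the nested ones. The database and table names in the example response are hypothetical, and its type strings are illustrative rather than output from a real crawl.

```python
def table_columns(get_table_response):
    """Return (name, type) pairs from an AWS Glue GetTable response."""
    columns = get_table_response["Table"]["StorageDescriptor"]["Columns"]
    return [(column["Name"], column["Type"]) for column in columns]


def nested_columns(columns):
    """Return the names of STRUCT and ARRAY columns (compared case-insensitively)."""
    return [name for name, type_ in columns if type_.lower().startswith(("struct<", "array<"))]


def fetch_table(database, table, region_name):
    """Fetch the table definition from the Data Catalog (requires boto3)."""
    import boto3

    glue = boto3.client("glue", region_name=region_name)
    return glue.get_table(DatabaseName=database, Name=table)


example_response = {
    "Table": {
        "Name": "sample_collection",
        "DatabaseName": "mongodb_catalog",
        "StorageDescriptor": {
            "Columns": [
                {"Name": "_id", "Type": "string"},
                {"Name": "address", "Type": "struct<city:string,zip:string>"},
                {"Name": "tags", "Type": "array<string>"},
            ]
        },
    }
}
columns = table_columns(example_response)
```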

Note that the crawler captured nested data as a STRUCT and correctly listed the ARRAY fields.
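To make the STRUCT and ARRAY mapping concrete, the following simplified sketch derives a Hive-style type string, the notation the Data Catalog uses for column types, from a single sample document. It is only an illustration and does not reproduce the crawler's own inference logic. Typing an array by its first element and falling back to `string` for empty arrays and unknown values are our simplifying assumptions, and the sample document is hypothetical.

```python
def glue_type(value):
    """Return a Hive-style type string for a decoded JSON-like value."""
    if isinstance(value, bool):  # check bool before int: bool is a subclass of int
        return "boolean"
    if isinstance(value, int):
        return "bigint"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    if isinstance(value, dict):
        # Nested documents become STRUCT types with one field per key.
        fields = ",".join(f"{key}:{glue_type(item)}" for key, item in value.items())
        return f"struct<{fields}>"
    if isinstance(value, list):
        # Simplification: type the array by its first element.
        element_type = glue_type(value[0]) if value else "string"
        return f"array<{element_type}>"
    return "string"  # fallback for None and other types


sample_document = {
    "name": "Sample listing",
    "address": {"city": "Seattle", "location": {"lat": 47.6, "lon": -122.3}},
    "amenities": ["Wifi", "Kitchen"],
    "reviews": [{"rating": 5, "verified": True}],
}
schema = {key: glue_type(value) for key, value in sample_document.items()}
```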

Import MongoDB Atlas data to Amazon S3

Now we use the Data Catalog table that the crawler created from MongoDB Atlas to import data without writing code. We use AWS Glue Studio to generate the boilerplate script quickly; alternatively, you can write the script yourself in the script editor.

- On the AWS Glue Studio console, choose Jobs in the navigation pane.

- Choose Create job.

- Select Visual with a source and target.

- Choose the Data Catalog table as the source and Amazon S3 as the target.

- In the AWS Glue Studio UI, supply additional parameters such as the S3 bucket name and choose the database and table from the drop-down menus.

- Next, review the script that AWS Glue Studio generates. We now need to add the MongoDB Atlas database and collection to the script.
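The exact script that AWS Glue Studio generates depends on your choices, so the following is only a sketch of the shape it might take. It assumes the MongoDB Atlas database and collection are passed to `create_dynamic_frame.from_catalog` through `additional_options` with `database` and `collection` keys. The catalog names, MongoDB names, S3 path, and JSON output format are hypothetical. The `awsglue` modules exist only in the AWS Glue job runtime, so they are imported inside `run_job`.

```python
import sys


def mongo_read_options(database, collection):
    """Additional read options naming the MongoDB Atlas database and collection."""
    if not database or not collection:
        raise ValueError("both database and collection are required")
    return {"database": database, "collection": collection}


def run_job(catalog_database, catalog_table, mongo_database, mongo_collection, s3_path):
    # These modules are provided by the AWS Glue job runtime.
    from awsglue.context import GlueContext
    from awsglue.job import Job
    from awsglue.utils import getResolvedOptions
    from pyspark.context import SparkContext

    args = getResolvedOptions(sys.argv, ["JOB_NAME"])
    glue_context = GlueContext(SparkContext.getOrCreate())
    job = Job(glue_context)
    job.init(args["JOB_NAME"], args)

    # Source: the Data Catalog table created by the crawler, plus the MongoDB names.
    source = glue_context.create_dynamic_frame.from_catalog(
        database=catalog_database,
        table_name=catalog_table,
        additional_options=mongo_read_options(mongo_database, mongo_collection),
        transformation_ctx="MongoDBSource",
    )
    # Target: JSON files under the given S3 prefix.
    glue_context.write_dynamic_frame.from_options(
        frame=source,
        connection_type="s3",
        format="json",
        connection_options={"path": s3_path},
        transformation_ctx="S3Target",
    )
    job.commit()
```

In a Glue job, the entry point would then call something like `run_job("mongodb_catalog", "sample_collection", "sample_db", "sample_collection", "s3://amzn-s3-demo-bucket/mongodb-export/")`, with all names hypothetical.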

When the ETL job is complete, the extracted data is available on Amazon S3.

- On the Amazon S3 console, choose Buckets in the navigation pane.

- Choose the bucket and folder that contain the extracted files.

- Choose a file and on the Actions menu, choose Query with S3 Select to view the contents of the file.
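The same preview can be scripted with the S3 `SelectObjectContent` API. The following sketch assumes the job wrote newline-delimited JSON, as a JSON-format S3 target would. The bucket and key are hypothetical placeholders, and the `LIMIT` clause keeps the preview small.

```python
def build_s3_select_request(bucket, key, limit=10):
    """Return keyword arguments for the S3 SelectObjectContent API."""
    return {
        "Bucket": bucket,
        "Key": key,
        "ExpressionType": "SQL",
        "Expression": f"SELECT * FROM s3object s LIMIT {int(limit)}",
        # Each line of the file is one JSON record.
        "InputSerialization": {"JSON": {"Type": "LINES"}},
        "OutputSerialization": {"JSON": {}},
    }


def preview_records(request, region_name):
    """Run the query and return the matching records as text (requires boto3)."""
    import boto3

    s3 = boto3.client("s3", region_name=region_name)
    response = s3.select_object_content(**request)
    chunks = []
    # The payload is an event stream; only Records events carry data.
    for event in response["Payload"]:
        if "Records" in event:
            chunks.append(event["Records"]["Payload"].decode("utf-8"))
    return "".join(chunks)


request = build_s3_select_request("amzn-s3-demo-bucket", "mongodb-export/part-00000.json")
```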

Clean up

To avoid incurring charges for the services used in this walkthrough, complete the following steps to delete your resources:

- On the AWS Glue console, choose Crawlers in the navigation pane.

- Select your crawler and on the Action menu, choose Delete crawler.

- On the AWS Glue Studio console, choose View jobs.

- Select the job you created and on the Actions menu, choose Delete job(s).

- Return to the AWS Glue console and choose Tables in the navigation pane.

- Select your table and choose Delete.

- Choose Databases in the navigation pane.

- Select your database and choose Delete.

- On the Amazon VPC console, choose Endpoints in the navigation pane.

- Select the PrivateLink endpoint you created and on the Actions menu, choose Delete VPC endpoints.
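If you scripted the setup, the console cleanup steps map to the API calls in the following sketch, which builds them as an ordered plan and runs it with boto3. Every resource name and the VPC endpoint ID are hypothetical placeholders.

```python
def cleanup_plan(crawler_name, job_name, catalog_database, table_name, vpc_endpoint_id):
    """Return (service, operation, kwargs) tuples in the same order as the console steps."""
    return [
        ("glue", "delete_crawler", {"Name": crawler_name}),
        ("glue", "delete_job", {"JobName": job_name}),
        ("glue", "delete_table", {"DatabaseName": catalog_database, "Name": table_name}),
        ("glue", "delete_database", {"Name": catalog_database}),
        ("ec2", "delete_vpc_endpoints", {"VpcEndpointIds": [vpc_endpoint_id]}),
    ]


def run_cleanup(plan, region_name):
    """Execute each step with a boto3 client for its service (requires boto3)."""
    import boto3

    clients = {}
    for service, operation, kwargs in plan:
        if service not in clients:
            clients[service] = boto3.client(service, region_name=region_name)
        getattr(clients[service], operation)(**kwargs)


plan = cleanup_plan(
    crawler_name="mongodb-atlas-crawler",
    job_name="mongodb-to-s3-job",
    catalog_database="mongodb_catalog",
    table_name="sample_collection",
    vpc_endpoint_id="vpce-0example",
)
```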

Conclusion

In this post, we showed how to set up an AWS Glue crawler to crawl over a MongoDB Atlas collection, gathering metadata and creating table records in the AWS Glue Data Catalog. With the Data Catalog table, we created an ETL process using the AWS Glue Studio UI to extract data from the MongoDB Atlas collection to an S3 bucket without writing a single line of code.

You can try this yourself by configuring an AWS Glue crawler, creating an AWS Glue ETL job with AWS Glue Studio, and launching MongoDB Atlas from a QuickStart or from MongoDB Atlas on AWS Marketplace.

Special thanks to everyone who contributed to this crawler feature launch: Julio Montes de Oca, Mita Gavade, and Alex Prazma.

About the authors

Igor Alekseev is a Senior Partner Solution Architect at AWS in the Data and Analytics domain. In his role, Igor works with strategic partners, helping them build complex, AWS-optimized architectures. Prior to joining AWS, as a Data/Solution Architect, he implemented many projects in the big data domain, including several data lakes in the Hadoop ecosystem. As a Data Engineer, he was involved in applying AI/ML to fraud detection and office automation.

Sandeep Adwankar is a Senior Technical Product Manager at AWS. Based in the California Bay Area, he works with customers around the globe to translate business and technical requirements into products that enable customers to improve how they manage, secure, and access data.