Post Syndicated from César Prieto Ballester original https://aws.amazon.com/blogs/devops/govern-ci-cd-best-practices-via-aws-service-catalog/

Introduction

AWS Service Catalog enables organizations to create and manage catalogs of Information Technology (IT) services that are approved for use on AWS. These IT services can include everything from virtual machine images, servers, software, and databases to complete multi-tier application architectures. AWS Service Catalog lets you centrally manage deployed IT services and your applications, resources, and metadata, which helps you achieve consistent governance and meet your compliance requirements. In addition, this configuration enables users to quickly deploy only approved IT services.

In large organizations, as more products are created, Service Catalog management can become increasingly complex when different teams work on various products. The following solution simplifies the provisioning of Service Catalog products by treating elements such as shared accounts, the roles or users who can run portfolios, and tags as best practices that are applied through Continuous Integration and Continuous Deployment (CI/CD) patterns.

This post demonstrates how Service Catalog Products can be delivered by taking advantage of the main benefits of CI/CD principles while reducing the complexity required to keep services in sync. In this scenario, we have built a CI/CD Pipeline exclusively using AWS Services and the AWS Cloud Development Kit (CDK) Framework to provision the necessary infrastructure.

Customers need the capability to consume services in a self-service manner, with services built on patterns that follow best practices, including focus areas such as compliance and security. The key tenets for these customers are the use of infrastructure as code (IaC) and CI/CD. For these reasons, we built the scalable and automated deployment solution covered in this post. This post is also inspired by another post from the AWS community, Building a Continuous Delivery Pipeline for AWS Service Catalog.

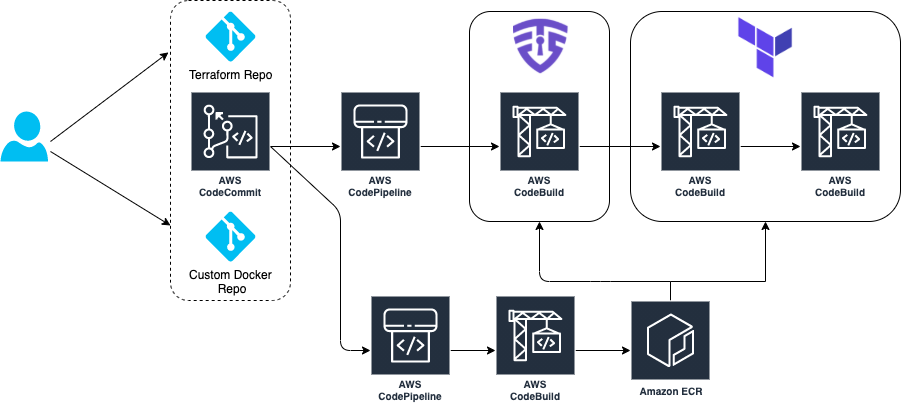

Solution Overview

The solution is built using a unified AWS CodeCommit repository with CDK v1 code, which manages and deploys the Service Catalog Product estate. The solution supports the following scenarios: 1) making Products available to accounts and 2) provisioning these Products directly into accounts. The configuration provides flexibility regarding which components must be deployed in accounts as opposed to making a collection of these components available to account owners/users who can in turn build upon and provision them via sharing.

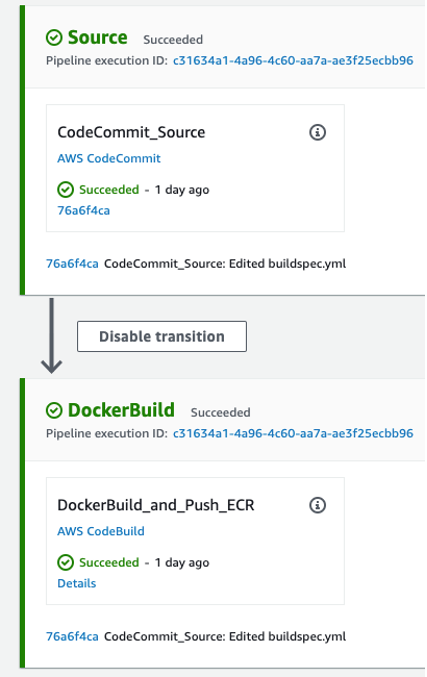

The pipeline created is comprised of the following stages:

- Retrieve the code from the repository

- Synthesize the CDK code to transform it into a CloudFormation template

- Ensure the pipeline is defined correctly

- Deploy and/or share the defined Portfolios and Products to a hub account or multiple accounts

Deploying and using the solution

Deploy the pipeline

We have created a Python AWS Cloud Development Kit (AWS CDK) v1 application hosted in a Git Repository. Deploying this application will create the required components described in this post. For a list of the deployment prerequisites, see the project README.

Clone the repository to your local machine. Then bootstrap your AWS environment and deploy the CDK stack.
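The exact commands depend on the project and are not reproduced here. As a rough sketch only, the bootstrap and deploy steps could be scripted from Python as shown below. `cdk bootstrap` and `cdk deploy` are standard AWS CDK CLI commands; the account ID, the Region, and the helper names `deployment_commands` and `run` are placeholders invented for this illustration. By default the script only prints the commands instead of executing them.

```python
import shlex
import subprocess
from typing import List, Optional


def deployment_commands(
    account_id: str, region: str, profile: Optional[str] = None
) -> List[List[str]]:
    """Build the CDK CLI invocations for bootstrapping and deploying.

    account_id, region, and profile are placeholders supplied by the caller.
    """
    profile_args = ["--profile", profile] if profile else []
    return [
        # Prepare the target account/Region for CDK deployments.
        ["cdk", "bootstrap", f"aws://{account_id}/{region}", *profile_args],
        # Deploy the stack defined by the CDK app in the current directory.
        ["cdk", "deploy", *profile_args],
    ]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print each command and, unless dry_run is set, execute it."""
    for command in commands:
        print(shlex.join(command))
        if not dry_run:
            subprocess.run(command, check=True)


if __name__ == "__main__":
    # Hypothetical account ID and Region; replace with your own values.
    run(deployment_commands("111111111111", "eu-west-1"))
```

Calling `run(..., dry_run=False)` from the cloned repository's root would execute the commands, assuming the CDK CLI is installed and AWS credentials are configured.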

Creating the infrastructure, which includes the AWS CodePipeline pipeline and the repository, takes around 3-5 minutes. Once CDK has deployed the components, you will have a new, empty repository in which to define the target Service Catalog estate. To do so, clone the new repository and push our sample code into it.

Review and update configuration

Our cdk.json file is used to manage context settings such as shared accounts, permissions, region to deploy, etc.
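As an illustration of how such context settings can be read and sanity-checked, here is a minimal sketch using only the Python standard library. The `context` key is the standard place for context values in cdk.json, and `shared_accounts_storage` is the name used by the sample; the `region` key, the sample values, and the helper names are assumptions made for this example. Inside a CDK app, the same values would typically be read with `self.node.try_get_context("key")`.

```python
import json
import re
from typing import Any, Dict, List

# Hypothetical cdk.json content: the keys other than "context" and
# "shared_accounts_storage" and all values are made up for illustration.
SAMPLE_CDK_JSON = """
{
  "app": "python3 app.py",
  "context": {
    "region": "eu-west-1",
    "shared_accounts_storage": ["111111111111", "222222222222"]
  }
}
"""


def load_context(cdk_json_text: str) -> Dict[str, Any]:
    """Return the "context" object of a cdk.json document (empty if absent)."""
    return json.loads(cdk_json_text).get("context", {})


def shared_accounts(
    context: Dict[str, Any], key: str = "shared_accounts_storage"
) -> List[str]:
    """Return the account IDs stored under `key`, rejecting malformed ones."""
    accounts = [str(account) for account in context.get(key, [])]
    # AWS account IDs are exactly 12 digits.
    invalid = [a for a in accounts if not re.fullmatch(r"[0-9]{12}", a)]
    if invalid:
        raise ValueError(f"Not 12-digit AWS account IDs: {invalid}")
    return accounts


if __name__ == "__main__":
    context = load_context(SAMPLE_CDK_JSON)
    print(context["region"], shared_accounts(context))
```

Validating account IDs before the pipeline runs surfaces typos early rather than as a failed share during deployment.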

There are two mechanisms that can be used to create Service Catalog Products in this solution: 1) providing a CloudFormation template or 2) declaring a CDK stack (that will be transformed as part of the pipeline). Our sample contains two Products, each demonstrating one of these options: an Amazon Elastic Container Service (Amazon ECS) deployment and an Amazon Simple Storage Service (Amazon S3) product.

These Products are automatically shared with accounts specified in the shared_accounts_storage variable. Each product is managed by a CDK Python file in the cdk_service_catalog folder.
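To make the first mechanism more concrete, the sketch below builds a deliberately small CloudFormation template for an Amazon ECS cluster as a Python dictionary and serializes it to JSON. It is not the template shipped with the sample; the function name, the `ClusterName` parameter, and the description text are assumptions chosen for this illustration.

```python
import json
from typing import Any, Dict


def ecs_cluster_template(
    description: str = "Minimal ECS cluster product (illustrative)",
) -> Dict[str, Any]:
    """Return a small CloudFormation template that creates one ECS cluster."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": description,
        # The end user supplies this value when provisioning the product.
        "Parameters": {
            "ClusterName": {
                "Type": "String",
                "Description": "Name of the ECS cluster to create",
            }
        },
        "Resources": {
            "Cluster": {
                "Type": "AWS::ECS::Cluster",
                "Properties": {"ClusterName": {"Ref": "ClusterName"}},
            }
        },
        "Outputs": {
            "ClusterArn": {"Value": {"Fn::GetAtt": ["Cluster", "Arn"]}}
        },
    }


if __name__ == "__main__":
    # Print the template as JSON, ready to be saved to a file.
    print(json.dumps(ecs_cluster_template(), indent=2))
```

A template like this could be saved to a file and referenced by a template-based Product, whereas the second mechanism lets the pipeline generate the template from a CDK stack.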

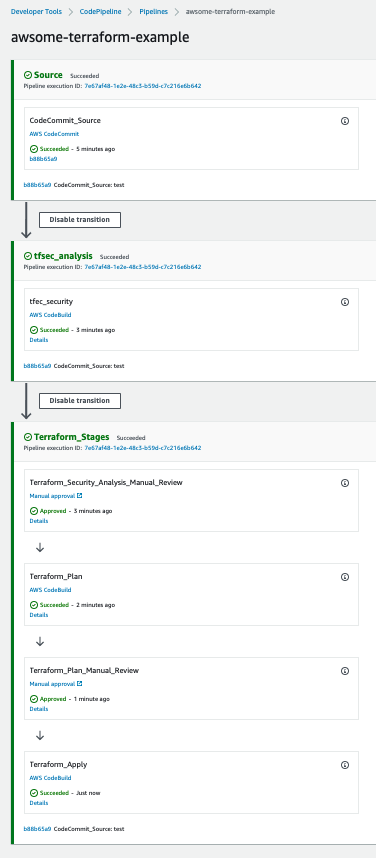

The Pipeline stages that AWS CodePipeline runs through are as follows:

- Download the AWS CodeCommit code

- Synthesize the CDK code to transform it into a CloudFormation template

- Auto-modify the Pipeline in case you have made manual changes to it

- Deploy the different Portfolios and associated Products in a Hub account in a Region or in multiple accounts

Adding new Portfolios and Products

To add a new Portfolio to the Pipeline, we recommend creating a new class under cdk_service_catalog similar to cdk_service_catalog_ecs_stack.py from our sample. Once the new class is created with the products you wish to associate, we instantiate the new class inside cdk_pipelines.py, and then add it inside the wave in the stage. There are two ways to create portfolio products. The first one is by creating a CloudFormation template, as can be seen in the Amazon Elastic Container Service (ECS) example. The second way is by creating a CDK stack that will be transformed into a template, as can be seen in the Storage example.

Product and Portfolio definition:
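The sample's own definition is not reproduced here. As a hedged illustration of what a Portfolio, one Product, and its account shares amount to at the CloudFormation level, the sketch below assembles the corresponding `AWS::ServiceCatalog::*` resources as a Python dictionary. In the CDK app these would normally be expressed with the `aws_servicecatalog` constructs instead; the function name, logical IDs, version name, and the template URL are all hypothetical.

```python
import json
from typing import Any, Dict, List


def service_catalog_resources(
    portfolio_name: str,
    provider_name: str,
    product_name: str,
    owner: str,
    template_url: str,
    shared_accounts: List[str],
) -> Dict[str, Any]:
    """Return CloudFormation resources for one portfolio, product, and shares."""
    resources: Dict[str, Any] = {
        "Portfolio": {
            "Type": "AWS::ServiceCatalog::Portfolio",
            "Properties": {
                "DisplayName": portfolio_name,
                "ProviderName": provider_name,
            },
        },
        "Product": {
            "Type": "AWS::ServiceCatalog::CloudFormationProduct",
            "Properties": {
                "Name": product_name,
                "Owner": owner,
                # One product version backed by a template stored at a URL.
                "ProvisioningArtifactParameters": [
                    {"Name": "v1", "Info": {"LoadTemplateFromURL": template_url}}
                ],
            },
        },
        # Attach the product to the portfolio.
        "Association": {
            "Type": "AWS::ServiceCatalog::PortfolioProductAssociation",
            "Properties": {
                "PortfolioId": {"Ref": "Portfolio"},
                "ProductId": {"Ref": "Product"},
            },
        },
    }
    # One share per target account, mirroring the shared accounts setting.
    for account_id in shared_accounts:
        resources[f"Share{account_id}"] = {
            "Type": "AWS::ServiceCatalog::PortfolioShare",
            "Properties": {
                "AccountId": account_id,
                "PortfolioId": {"Ref": "Portfolio"},
            },
        }
    return resources


if __name__ == "__main__":
    # All argument values below are placeholders.
    print(json.dumps(service_catalog_resources(
        "Containers", "Platform Team", "ECS Cluster", "Platform Team",
        "https://example-bucket.s3.amazonaws.com/ecs.json",
        ["111111111111"],
    ), indent=2))
```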

Clean up

After completing your demo, you can clean up the resources created in this post by deleting your stack with the CDK CLI.

Conclusion

In this post, we demonstrated how Service Catalog deployments can be accelerated by building a CI/CD pipeline using self-managed services. The Portfolio & Product estate is defined in its entirety by using Infrastructure-as-Code and automatically deployed based on your configuration. To learn more about AWS CDK Pipelines or AWS Service Catalog, visit the appropriate product documentation.
