Post Syndicated from Rodney Bozo original https://aws.amazon.com/blogs/devops/manage-application-security-and-compliance-with-the-aws-cloud-development-kit-and-cdk-nag/

Infrastructure as Code (IaC) is an important part of Cloud Applications. Developers rely on various Static Application Security Testing (SAST) tools to identify security/compliance issues and mitigate these issues early on, before releasing their applications to production. Additionally, SAST tools often provide reporting mechanisms that can help developers verify compliance during security reviews.

cdk-nag integrates directly into AWS Cloud Development Kit (AWS CDK) applications to provide identification and reporting mechanisms similar to SAST tooling.

This post demonstrates how to integrate cdk-nag into an AWS CDK application to provide continual feedback and help align your applications with best practices.

Overview of cdk-nag

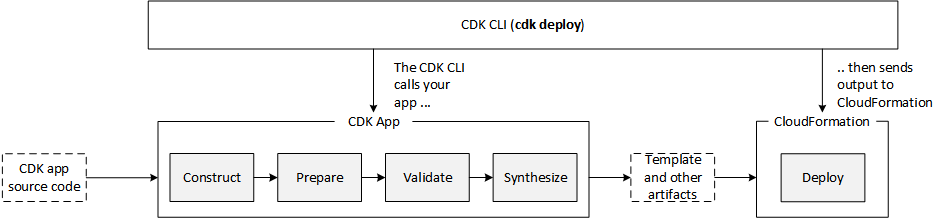

cdk-nag (inspired by cfn_nag) validates that the state of constructs within a given scope complies with a given set of rules. Additionally, cdk-nag provides a rule suppression and compliance reporting system. cdk-nag validates constructs by extending AWS CDK Aspects. If you’re interested in learning more about the AWS CDK Aspect system, then you should check out this post.
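
To make the Aspect mechanism concrete, the following is a minimal sketch of a hand-written Aspect, assuming `aws-cdk-lib` v2 and `constructs` are installed. The `BucketVersioningCheck` class and the `Example-S1` rule ID are hypothetical names made up for illustration; they are not part of cdk-nag, and cdk-nag's own rules are more elaborate than this.

```typescript
import { Annotations, App, Aspects, IAspect, Stack } from 'aws-cdk-lib';
import { Annotations as AssertAnnotations, Match } from 'aws-cdk-lib/assertions';
import { Bucket, CfnBucket } from 'aws-cdk-lib/aws-s3';
import { IConstruct } from 'constructs';

// Hypothetical rule for illustration only; not a cdk-nag rule.
class BucketVersioningCheck implements IAspect {
  // The AWS CDK calls visit() for every construct in the scope the Aspect is added to.
  public visit(node: IConstruct): void {
    // Only inspect the low-level (L1) S3 bucket resource.
    if (node instanceof CfnBucket && node.versioningConfiguration === undefined) {
      Annotations.of(node).addWarning(
        'Example-S1: The S3 bucket does not have versioning enabled.'
      );
    }
  }
}

const app = new App();
const stack = new Stack(app, 'AspectDemoStack');
new Bucket(stack, 'Bucket');

// Aspects run during synthesis, after the construct tree has been built.
Aspects.of(stack).add(new BucketVersioningCheck());

// Collect the warnings that the Aspect attached during synthesis.
const warnings = AssertAnnotations.fromStack(stack).findWarning(
  '*',
  Match.stringLikeRegexp('Example-S1.*')
);
console.log(`Warnings found: ${warnings.length}`);
```

A NagPack can be thought of as a collection of checks like this one, applied to every construct in scope, with suppression handling and reporting layered on top.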

cdk-nag includes several rule sets (NagPacks) to validate your application against. As of this post, cdk-nag includes the AWS Solutions, HIPAA Security, NIST 800-53 rev 4, NIST 800-53 rev 5, and PCI DSS 3.2.1 NagPacks. You can pick and choose different NagPacks and apply as many as you wish to a given scope.
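
As a rough sketch of combining NagPacks, the snippet below applies one pack to the whole application and a second pack to a single stack. It assumes `aws-cdk-lib` v2 and `cdk-nag` are installed; the stack name `MultiPackStack` is a placeholder chosen for this example.

```typescript
import { App, Aspects, Stack } from 'aws-cdk-lib';
import { AwsSolutionsChecks, HIPAASecurityChecks } from 'cdk-nag';

const app = new App();
// Placeholder stack; in a real application this would be your own Stack subclass.
const stack = new Stack(app, 'MultiPackStack');

// Applied at the App scope: every stack in the application is checked.
Aspects.of(app).add(new AwsSolutionsChecks());

// Applied at the Stack scope: only constructs in this stack are checked.
Aspects.of(stack).add(new HIPAASecurityChecks({ verbose: true }));
```

Scoping a pack to a single stack can be useful when only part of an application is subject to a particular compliance framework.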

cdk-nag rules can either be warnings or errors. Both warnings and errors will be displayed in the console and compliance reports. Only unsuppressed errors will prevent applications from deploying with the cdk deploy command.
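
The blocking behavior described above can be modeled in a few lines. This is a simplified, dependency-free model and not cdk-nag's implementation: the `Finding` type, the `blockingFindings` function, and the `Example-W1` warning rule are all assumptions introduced for illustration.

```typescript
type RuleLevel = 'Warning' | 'Error';

interface Finding {
  ruleId: string;
  level: RuleLevel;
  suppressed: boolean;
}

// Only errors that have not been suppressed prevent a deployment.
function blockingFindings(findings: Finding[]): Finding[] {
  return findings.filter((f) => f.level === 'Error' && !f.suppressed);
}

const findings: Finding[] = [
  { ruleId: 'AwsSolutions-S1', level: 'Error', suppressed: true },
  { ruleId: 'AwsSolutions-S3', level: 'Error', suppressed: false },
  // Hypothetical warning-level rule: reported, but never blocks a deployment.
  { ruleId: 'Example-W1', level: 'Warning', suppressed: false },
];

const blockers = blockingFindings(findings).map((f) => f.ruleId);
console.log(
  blockers.length > 0
    ? `Deployment blocked by: ${blockers.join(', ')}`
    : 'Deployment allowed'
);
```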

You can see which rules are implemented in each of the NagPacks in the Rules Documentation in the GitHub repository.

Walkthrough

This walkthrough will set up a minimal AWS CDK v2 application, as well as demonstrate how to apply a NagPack to the application, how to suppress rules, and how to view a report of the findings. Although cdk-nag has support for Python, TypeScript, Java, and .NET AWS CDK applications, we’ll use TypeScript for this walkthrough.

Prerequisites

For this walkthrough, you should have the following prerequisites:

- A local installation of and experience using the AWS CDK.

Create a baseline AWS CDK application

In this section, you will create and synthesize a small AWS CDK v2 application with an Amazon Simple Storage Service (Amazon S3) bucket. If you are unfamiliar with the AWS CDK, then learn how to install and set it up by looking at its open source GitHub repository.

- Run the following commands to create the AWS CDK application:

    mkdir CdkTest
    cd CdkTest
    cdk init app --language typescript

- Replace the contents of lib/cdk_test-stack.ts with the following:

    import { Stack, StackProps } from 'aws-cdk-lib';
    import { Construct } from 'constructs';
    import { Bucket } from 'aws-cdk-lib/aws-s3';

    export class CdkTestStack extends Stack {
      constructor(scope: Construct, id: string, props?: StackProps) {
        super(scope, id, props);
        const bucket = new Bucket(this, 'Bucket');
      }
    }

- Run the following commands to install dependencies and synthesize our sample app:

    npm install
    npx cdk synth

You should see an AWS CloudFormation template with an S3 bucket both in your terminal and in cdk.out/CdkTestStack.template.json.

Apply a NagPack in your application

In this section, you’ll install cdk-nag, include the AwsSolutions NagPack in your application, and view the results.

- Run the following command to install cdk-nag:

    npm install cdk-nag

- Replace the contents of bin/cdk_test.ts with the following:

    #!/usr/bin/env node
    import 'source-map-support/register';
    import * as cdk from 'aws-cdk-lib';
    import { CdkTestStack } from '../lib/cdk_test-stack';
    import { AwsSolutionsChecks } from 'cdk-nag';
    import { Aspects } from 'aws-cdk-lib';

    const app = new cdk.App();
    // Add the cdk-nag AwsSolutions Pack with extra verbose logging enabled.
    Aspects.of(app).add(new AwsSolutionsChecks({ verbose: true }));
    new CdkTestStack(app, 'CdkTestStack', {});

- Run the following command to view the output and generate the compliance report:

    npx cdk synth

The output should look similar to the following (Note: SSE stands for server-side encryption):

    [Error at /CdkTestStack/Bucket/Resource] AwsSolutions-S1: The S3 Bucket has server access logs disabled. The bucket should have server access logging enabled to provide detailed records for the requests that are made to the bucket.
    [Error at /CdkTestStack/Bucket/Resource] AwsSolutions-S2: The S3 Bucket does not have public access restricted and blocked. The bucket should have public access restricted and blocked to prevent unauthorized access.
    [Error at /CdkTestStack/Bucket/Resource] AwsSolutions-S3: The S3 Bucket does not default encryption enabled. The bucket should minimally have SSE enabled to help protect data-at-rest.
    [Error at /CdkTestStack/Bucket/Resource] AwsSolutions-S10: The S3 Bucket does not require requests to use SSL. You can use HTTPS (TLS) to help prevent potential attackers from eavesdropping on or manipulating network traffic using person-in-the-middle or similar attacks. You should allow only encrypted connections over HTTPS (TLS) using the aws:SecureTransport condition on Amazon S3 bucket policies.
    Found errors

Note that applying the AwsSolutions NagPack to the application rendered several errors in the console (AwsSolutions-S1, AwsSolutions-S2, AwsSolutions-S3, and AwsSolutions-S10). Furthermore, the cdk.out/AwsSolutions-CdkTestStack-NagReport.csv contains the errors as well:

    Rule ID,Resource ID,Compliance,Exception Reason,Rule Level,Rule Info
    "AwsSolutions-S1","CdkTestStack/Bucket/Resource","Non-Compliant","N/A","Error","The S3 Bucket has server access logs disabled."
    "AwsSolutions-S2","CdkTestStack/Bucket/Resource","Non-Compliant","N/A","Error","The S3 Bucket does not have public access restricted and blocked."
    "AwsSolutions-S3","CdkTestStack/Bucket/Resource","Non-Compliant","N/A","Error","The S3 Bucket does not default encryption enabled."
    "AwsSolutions-S5","CdkTestStack/Bucket/Resource","Compliant","N/A","Error","The S3 static website bucket either has an open world bucket policy or does not use a CloudFront Origin Access Identity (OAI) in the bucket policy for limited getObject and/or putObject permissions."
    "AwsSolutions-S10","CdkTestStack/Bucket/Resource","Non-Compliant","N/A","Error","The S3 Bucket does not require requests to use SSL."

Remediating and suppressing errors

In this section, you’ll remediate the AwsSolutions-S10 error, suppress the AwsSolutions-S1 error at the stack level, suppress the AwsSolutions-S2 error at the resource level, leave the AwsSolutions-S3 error unremediated, and view the results.

- Replace the contents of lib/cdk_test-stack.ts with the following:

    import { Stack, StackProps } from 'aws-cdk-lib';
    import { Construct } from 'constructs';
    import { Bucket } from 'aws-cdk-lib/aws-s3';
    import { NagSuppressions } from 'cdk-nag';

    export class CdkTestStack extends Stack {
      constructor(scope: Construct, id: string, props?: StackProps) {
        super(scope, id, props);
        // The local scope 'this' is the Stack.
        NagSuppressions.addStackSuppressions(this, [
          {
            id: 'AwsSolutions-S1',
            reason: 'Demonstrate a stack level suppression.'
          },
        ]);
        // Remediating AwsSolutions-S10 by enforcing SSL on the bucket.
        const bucket = new Bucket(this, 'Bucket', { enforceSSL: true });
        NagSuppressions.addResourceSuppressions(bucket, [
          {
            id: 'AwsSolutions-S2',
            reason: 'Demonstrate a resource level suppression.'
          },
        ]);
      }
    }

- Run the cdk synth command again:

    npx cdk synth

The output should look similar to the following:

    [Error at /CdkTestStack/Bucket/Resource] AwsSolutions-S3: The S3 Bucket does not default encryption enabled. The bucket should minimally have SSE enabled to help protect data-at-rest.
    Found errors

The cdk.out/AwsSolutions-CdkTestStack-NagReport.csv contains more details about rule compliance, non-compliance, and suppressions.

    Rule ID,Resource ID,Compliance,Exception Reason,Rule Level,Rule Info
    "AwsSolutions-S1","CdkTestStack/Bucket/Resource","Suppressed","Demonstrate a stack level suppression.","Error","The S3 Bucket has server access logs disabled."
    "AwsSolutions-S2","CdkTestStack/Bucket/Resource","Suppressed","Demonstrate a resource level suppression.","Error","The S3 Bucket does not have public access restricted and blocked."
    "AwsSolutions-S3","CdkTestStack/Bucket/Resource","Non-Compliant","N/A","Error","The S3 Bucket does not default encryption enabled."
    "AwsSolutions-S5","CdkTestStack/Bucket/Resource","Compliant","N/A","Error","The S3 static website bucket either has an open world bucket policy or does not use a CloudFront Origin Access Identity (OAI) in the bucket policy for limited getObject and/or putObject permissions."
    "AwsSolutions-S10","CdkTestStack/Bucket/Resource","Compliant","N/A","Error","The S3 Bucket does not require requests to use SSL."

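A report like the one above can also be checked by a script, for example in a CI step. The following is a minimal, dependency-free sketch that assumes the six-column layout shown in this walkthrough. The function names (`parseCsvLine`, `parseNagReport`, `unsuppressedErrors`) are made up for this example, and the sample rows are copied from the report above rather than read from disk.

```typescript
interface NagReportRow {
  ruleId: string;
  resourceId: string;
  compliance: string;
  exceptionReason: string;
  ruleLevel: string;
  ruleInfo: string;
}

// Split one CSV line into fields, honoring double-quoted fields.
function parseCsvLine(line: string): string[] {
  const fields: string[] = [];
  let current = '';
  let inQuotes = false;
  for (let i = 0; i < line.length; i++) {
    const ch = line[i];
    if (inQuotes) {
      if (ch === '"' && line[i + 1] === '"') {
        current += '"'; // a doubled quote is an escaped quote
        i++;
      } else if (ch === '"') {
        inQuotes = false;
      } else {
        current += ch;
      }
    } else if (ch === '"') {
      inQuotes = true;
    } else if (ch === ',') {
      fields.push(current);
      current = '';
    } else {
      current += ch;
    }
  }
  fields.push(current);
  return fields;
}

// Parse the report text, skipping the header row and blank lines.
function parseNagReport(csv: string): NagReportRow[] {
  const lines = csv.split(/\r?\n/).filter((line) => line.trim() !== '');
  return lines.slice(1).map((line) => {
    const [ruleId = '', resourceId = '', compliance = '', exceptionReason = '',
      ruleLevel = '', ruleInfo = ''] = parseCsvLine(line);
    return { ruleId, resourceId, compliance, exceptionReason, ruleLevel, ruleInfo };
  });
}

// Rows that are error-level and neither compliant nor suppressed.
function unsuppressedErrors(rows: NagReportRow[]): NagReportRow[] {
  return rows.filter((r) => r.compliance === 'Non-Compliant' && r.ruleLevel === 'Error');
}

const sampleReport = `Rule ID,Resource ID,Compliance,Exception Reason,Rule Level,Rule Info
"AwsSolutions-S1","CdkTestStack/Bucket/Resource","Suppressed","Demonstrate a stack level suppression.","Error","The S3 Bucket has server access logs disabled."
"AwsSolutions-S2","CdkTestStack/Bucket/Resource","Suppressed","Demonstrate a resource level suppression.","Error","The S3 Bucket does not have public access restricted and blocked."
"AwsSolutions-S3","CdkTestStack/Bucket/Resource","Non-Compliant","N/A","Error","The S3 Bucket does not default encryption enabled."
"AwsSolutions-S10","CdkTestStack/Bucket/Resource","Compliant","N/A","Error","The S3 Bucket does not require requests to use SSL."`;

const failingRuleIds = unsuppressedErrors(parseNagReport(sampleReport)).map((r) => r.ruleId);
console.log(failingRuleIds);
```

For the sample rows, only AwsSolutions-S3 is reported. In a real project you would read cdk.out/AwsSolutions-CdkTestStack-NagReport.csv from disk instead of using an inline string.
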
Moreover, note that the resultant cdk.out/CdkTestStack.template.json template contains the cdk-nag suppression data. Because the suppression data is included in the stack and resource metadata, this provides transparency into which rules weren’t applied to an application.

    {
      "Metadata": {
        "cdk_nag": {
          "rules_to_suppress": [
            {
              "id": "AwsSolutions-S1",
              "reason": "Demonstrate a stack level suppression."
            }
          ]
        }
      },
      "Resources": {
        "BucketDEB6E181": {
          "Type": "AWS::S3::Bucket",
          "UpdateReplacePolicy": "Retain",
          "DeletionPolicy": "Retain",
          "Metadata": {
            "aws:cdk:path": "CdkTestStack/Bucket/Resource",
            "cdk_nag": {
              "rules_to_suppress": [
                {
                  "id": "AwsSolutions-S2",
                  "reason": "Demonstrate a resource level suppression."
                }
              ]
            }
          }
        },
        ...
      },
      ...
    }

Reflecting on the Walkthrough

In this section, you learned how to apply a NagPack to your application, remediate/suppress warnings and errors, and review the compliance reports. The reporting and suppression systems provide mechanisms for the development and security teams within organizations to work together to identify and mitigate potential security/compliance issues. Security teams can choose which NagPacks developers should apply to their applications. Then, developers can use the feedback to quickly remediate issues. Security teams can use the reports to validate compliance. Furthermore, developers and security teams can work together to use suppressions to transparently document exceptions to rules that they’ve decided not to follow.
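
Because suppressions are recorded in the synthesized template, a reviewer can list them with a short script. The following is a minimal sketch that assumes the metadata layout shown in the walkthrough's template (a `cdk_nag.rules_to_suppress` array under `Metadata`). The interfaces and the `listSuppressions` function are hypothetical names for this example, and the inline object stands in for the parsed contents of cdk.out/CdkTestStack.template.json.

```typescript
interface Suppression {
  id: string;
  reason: string;
}

interface NagMetadata {
  'aws:cdk:path'?: string;
  cdk_nag?: { rules_to_suppress?: Suppression[] };
}

interface Template {
  Metadata?: NagMetadata;
  Resources?: Record<string, { Type: string; Metadata?: NagMetadata }>;
}

interface SuppressionRecord extends Suppression {
  scope: string; // 'Stack' or the construct path of the resource
}

// Collect stack level and resource level suppressions from a template.
function listSuppressions(template: Template): SuppressionRecord[] {
  const records: SuppressionRecord[] = [];
  for (const s of template.Metadata?.cdk_nag?.rules_to_suppress ?? []) {
    records.push({ scope: 'Stack', id: s.id, reason: s.reason });
  }
  for (const [logicalId, resource] of Object.entries(template.Resources ?? {})) {
    // Fall back to the logical ID when the construct path is not present.
    const scope = resource.Metadata?.['aws:cdk:path'] ?? logicalId;
    for (const s of resource.Metadata?.cdk_nag?.rules_to_suppress ?? []) {
      records.push({ scope, id: s.id, reason: s.reason });
    }
  }
  return records;
}

// Trimmed copy of the walkthrough's template, used here as sample input.
const sampleTemplate: Template = {
  Metadata: {
    cdk_nag: {
      rules_to_suppress: [
        { id: 'AwsSolutions-S1', reason: 'Demonstrate a stack level suppression.' },
      ],
    },
  },
  Resources: {
    BucketDEB6E181: {
      Type: 'AWS::S3::Bucket',
      Metadata: {
        'aws:cdk:path': 'CdkTestStack/Bucket/Resource',
        cdk_nag: {
          rules_to_suppress: [
            { id: 'AwsSolutions-S2', reason: 'Demonstrate a resource level suppression.' },
          ],
        },
      },
    },
  },
};

const suppressionRecords = listSuppressions(sampleTemplate);
for (const record of suppressionRecords) {
  console.log(`${record.scope}: ${record.id} (${record.reason})`);
}
```

A listing like this could be attached to a security review so that every exception and its stated reason is visible in one place.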

Advanced usage and further reading

This section briefly covers some advanced options for using cdk-nag.

Unit Testing with the AWS CDK Assertions Library

The Annotations submodule of the AWS CDK assertions library lets you check for cdk-nag warnings and errors without AWS credentials by integrating a NagPack into your application unit tests. Read this post for further information about the AWS CDK assertions module. The following is an example of using assertions with a TypeScript AWS CDK application and Jest for unit testing.

    import { Annotations, Match } from 'aws-cdk-lib/assertions';
    import { App, Aspects, Stack } from 'aws-cdk-lib';
    import { AwsSolutionsChecks } from 'cdk-nag';
    import { CdkTestStack } from '../lib/cdk_test-stack';

    describe('cdk-nag AwsSolutions Pack', () => {
      let stack: Stack;
      let app: App;
      // In this case we can use beforeAll() over beforeEach() since our tests
      // do not modify the state of the application
      beforeAll(() => {
        // GIVEN
        app = new App();
        stack = new CdkTestStack(app, 'test');

        // WHEN
        Aspects.of(stack).add(new AwsSolutionsChecks());
      });

      // THEN
      test('No unsuppressed Warnings', () => {
        const warnings = Annotations.fromStack(stack).findWarning(
          '*',
          Match.stringLikeRegexp('AwsSolutions-.*')
        );
        expect(warnings).toHaveLength(0);
      });

      test('No unsuppressed Errors', () => {
        const errors = Annotations.fromStack(stack).findError(
          '*',
          Match.stringLikeRegexp('AwsSolutions-.*')
        );
        expect(errors).toHaveLength(0);
      });
    });

Additionally, many testing frameworks include watch functionality. This is a background process that reruns tests when files in your project change, which gives fast feedback. For example, when using the AWS CDK in JavaScript/TypeScript, you can use the Jest CLI watch commands. When Jest watch detects a file change, it attempts to run unit tests related to the changed file. This can be used to automatically run cdk-nag-related tests when making changes to your AWS CDK application.

CDK Watch

When developing in non-production environments, consider using AWS CDK Watch with a NagPack for fast feedback. AWS CDK Watch attempts to synthesize and then deploy changes whenever you save changes to your files. Aspects are run during synthesis. Therefore, any NagPacks applied to your application will also run on save. As in the walkthrough, all of the unsuppressed errors will prevent deployments, all of the messages will be output to the console, and all of the compliance reports will be generated. Read this post for further information about AWS CDK Watch.

Conclusion

In this post, you learned how to use cdk-nag in your AWS CDK applications. To learn more about using cdk-nag in your applications, check out the README in the GitHub Repository. If you would like to learn how to create your own rules and NagPacks, then check out the developer documentation. The repository is open source and welcomes community contributions and feedback.
