Post Syndicated from Janne Pikkarainen original https://blog.zabbix.com/whats-up-home-ba-na-na-2/24126/

Can you monitor a banana with Zabbix? Of course you can! By day, I am a monitoring tech lead in a global cyber security company. By night, I monitor my home with Zabbix & Grafana and do some weird experiments with them. Welcome to my blog about this project.

BA-NA-NA!

All was as usual at the recent Zabbix Summit 2022 until Steve Destivelle gave his talk. He asked the audience to say BA-NA-NA every now and then, I don’t know, to keep them awake, to entertain them, or both.

From that moment, the rest of the summit went all bananas. So, what better way for me to contribute to this ba-na-na meme than to try to monitor a banana, since I am now known for monitoring weird things anyway? Here we go!

Apart from a monkey wrench and a gorilla leg for your camera to get perfectly steady photos or videos of your precious snacks, what do we need to monitor bananas with Zabbix? Not much:

- Some Python (I guess snakes might like bananas, too)

- OpenCV image recognition libraries (no, that’s not a tool to help you create resumes on LinkedIn, the CV stands for Computer Vision)

- zabbix_sender command

- Zabbix itself

Let’s get started!

This banana monitoring is just a simulation, so I downloaded a random picture of a vector graphics banana from our dear Internet. See, it’s beautiful!

Feed the snake

OK, I now have a nice picture of a banana, but how on earth would I monitor that with Zabbix? With OpenCV, that’s not too hard. No, I do not know anything about OpenCV, but with some lucky search engine hits and some copy-pasting, I managed to get my super intelligent image recognition script to work.

It’s tailored to check a pixel I know belongs to our banana and then check the hue value of that pixel. With hue, it’s easier and more reliable to check the actual color, no matter its brightness, or so I was told by the articles I found.

Anyway, here’s the script!
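The listing below is not the original script, just a minimal sketch of the approach described above: read the image with OpenCV, convert it to HSV, and look at the hue of one known pixel. The function names, the hue bands, and the state labels ("ripe", "unripe", "overripe") are assumptions made up for illustration.

```python
# Assumed hue bands on OpenCV's 0-179 hue scale for 8-bit images.
# These cut-offs are illustrative guesses, not values from the original post.
YELLOW_HUES = range(20, 36)  # roughly yellow
GREEN_HUES = range(36, 86)   # roughly green


def classify_hue(hue: int) -> str:
    """Map an OpenCV hue value (0-179) to a hypothetical banana state."""
    if hue in YELLOW_HUES:
        return "ripe"
    if hue in GREEN_HUES:
        return "unripe"
    # Everything else (brownish, reddish, and so on) is treated as overripe.
    return "overripe"


def pixel_hue(image_path: str, x: int, y: int) -> int:
    """Return the hue of the pixel at column x, row y of the image."""
    import cv2  # imported here so classify_hue can be used without OpenCV

    image = cv2.imread(image_path)  # BGR array, or None if unreadable
    if image is None:
        raise FileNotFoundError(f"could not read image: {image_path}")
    hsv = cv2.cvtColor(image, cv2.COLOR_BGR2HSV)
    hue, _saturation, _value = hsv[y, x]  # NumPy indexing is [row, column]
    return int(hue)
```

With a picture saved as, say, `banana.png` and a pixel at (200, 150) that is known to sit on the banana (both placeholders), `classify_hue(pixel_hue("banana.png", 200, 150))` would return one of the three labels.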

Really, most of this was just copy-paste, so I do not take any credit for this code. But it seems to do its job, as this is what happens when I run the script from the command line.

Fantastic! Or maybe, to honor Steve, I should say This was I-ZI.

Configuring Zabbix

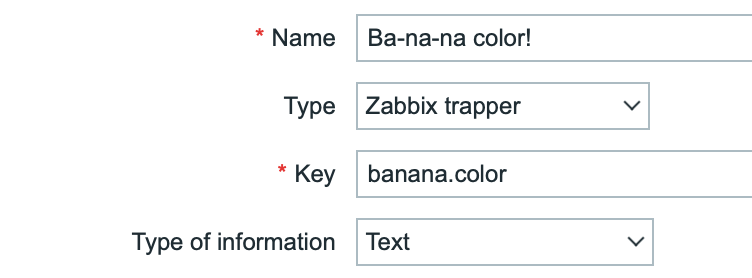

To send this data to Zabbix, I’m going to use the good old zabbix_sender command. For that to work, I needed to set up a new trapper-type item in Zabbix.

And, well… that’s it. Now if I run the following from the command line, it works:
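The exact command is not reproduced here, so what follows is only a sketch of how such a call could look when wrapped in Python, in keeping with the script above. The `-z`, `-s`, `-k`, and `-o` options are standard zabbix_sender flags (server, host name, item key, value); the server address, host name `Banana`, and item key `banana.state` are hypothetical and would need to match your own trapper item.

```python
import subprocess
from typing import List


def build_sender_command(server: str, host: str, key: str, value: str) -> List[str]:
    """Build the zabbix_sender argument list for a single value."""
    return [
        "zabbix_sender",
        "-z", server,  # Zabbix server or proxy address
        "-s", host,    # host name as configured in Zabbix
        "-k", key,     # key of the trapper item
        "-o", value,   # value to send
    ]


def send_to_zabbix(server: str, host: str, key: str, value: str) -> None:
    """Run zabbix_sender and raise CalledProcessError if it exits non-zero."""
    subprocess.run(build_sender_command(server, host, key, value), check=True)
```

Calling `send_to_zabbix("127.0.0.1", "Banana", "banana.state", "ripe")` would then be equivalent to running `zabbix_sender -z 127.0.0.1 -s Banana -k banana.state -o ripe` by hand, assuming zabbix_sender is installed and on the PATH.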

Let’s check from Latest data, too:

But it needs a dashboard!

Now that it’s working, it definitely needs a dashboard. This is what I created for it with just Item value and URL widgets.

Ain’t technology fantastic? If only Zabbix had dynamic colors for the Item value widget, but I think they told us at the Summit that it’s coming.

Of course, as with most of my blog posts, this kind of monitoring could have some real-world use cases, too: make OpenCV check pictures, video streams, photos, whatever, and if the color of something that should stay the same all the time has changed, make Zabbix go bananas about it.
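As a rough illustration of that "has the color changed?" idea, the sketch below compares a current hue against a known baseline. It assumes OpenCV's 0-179 hue scale for 8-bit images and treats hue as circular, so 175 and 5 count as close together. The function names and the default tolerance of 10 are made up for this example.

```python
def hue_distance(a: int, b: int, hue_range: int = 180) -> int:
    """Shortest distance between two hues on a circular scale."""
    diff = abs(a - b) % hue_range
    return min(diff, hue_range - diff)


def color_changed(baseline_hue: int, current_hue: int, tolerance: int = 10) -> bool:
    """Return True when the hue has drifted beyond the tolerance."""
    return hue_distance(baseline_hue, current_hue) > tolerance
```

The boolean result could be sent to Zabbix as 0 or 1, with a trigger on the item doing the actual going bananas.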

I have been working at Forcepoint since 2014 and writing these posts has never been tastier. — Janne Pikkarainen

This post was originally published on the author’s LinkedIn account.

The post What’s Up, Home? – BA-NA-NA! appeared first on Zabbix Blog.