Post Syndicated from Jesse Mack original https://blog.rapid7.com/2022/06/16/security-is-shifting-in-a-cloud-native-world-insights-from-rsac-2022/

The cloud has become the default for IT infrastructure and resource delivery, allowing an unprecedented level of speed and flexibility for development and production pipelines. This helps organizations compete and innovate in a fast-paced business environment. But as the cloud becomes more ingrained, the ephemeral nature of cloud infrastructure is presenting new challenges for security teams.

Several talks by our Rapid7 presenters at this year’s RSA Conference touched on this theme. Here’s a closer look at what our RSAC 2022 presenters had to say about adapting security processes to a cloud-native world.

A complex picture

As Lee Weiner, SVP Cloud Security and Chief Innovation Officer, pointed out in his RSA briefing, “Context Is King: The Future of Cloud Security,” cloud adoption is not only increasing — it’s growing more complex. Many organizations are bringing on multiple cloud vendors to meet a variety of different needs. One report estimates that a whopping 89% of companies that have adopted the cloud have chosen a multicloud approach.

This model is so popular because of the flexibility it offers organizations to utilize the right technology, in the right cloud environment, at the right cost, a key advantage in today’s marketplace.

“Over the last decade or so, many organizations have been going through a transformation to put themselves in a position to use the scale and speed of the cloud as a strategic business advantage,” Jane Man, Director of Product Management for VRM, said in her RSA Lounge presentation, “Adapting Your Vulnerability Management Program for Cloud-Native Environments.”

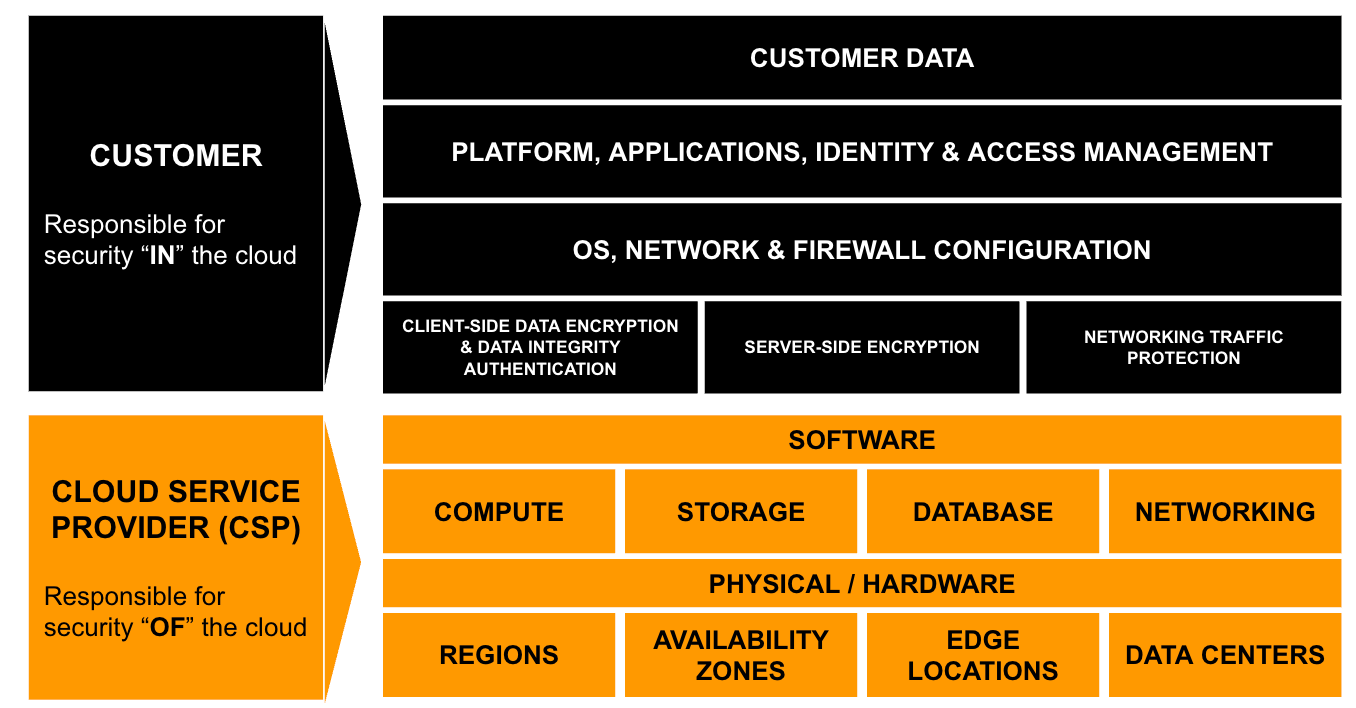

While DevOps teams can move more quickly than ever before with this model, security pros face a more complex set of questions than with traditional infrastructure, Lee noted. How many of our instances are exposed to known vulnerabilities? Do they have proper identity and access management (IAM) controls established? What levels of access do those permissions actually grant users in our key applications?

New infrastructure, new demands

The core components of vulnerability management remain the same in cloud environments, Jane said in her talk. Security teams must:

- Get visibility into all assets, resources, and services

- Assess, prioritize, and remediate risks

- Communicate the organization’s security and compliance posture to management

But because of the ephemeral nature of the cloud, the way teams go about meeting these requirements is shifting.

“Running a scheduled scan, waiting for it to complete and then handing a report to IT doesn’t work when instances may be spinning up and down on a daily or hourly basis,” she said.

In his presentation, Lee expressed optimism that the cloud itself may help provide the new methods we need for cloud-native security.

“Because of the way cloud infrastructure is built and deployed, there’s a real opportunity to answer these questions far faster, far more efficiently, far more effectively than we could with traditional infrastructure,” he said.

Calling for context

For Lee, the goal is to enable secure adoption of cloud technologies so companies can accelerate and innovate at scale. But there’s a key element needed to achieve this vision: context.

Teams often struggle to understand the context around their security data because that data is siloed. Without integration between disparate systems, putting the pieces together takes a high level of manual effort. To really get a clear picture of risk, security teams need to be able to bring their data together with context from each layer of the environment.

But what does context actually look like in practice, and how do you achieve it? Jane laid out a few key strategies for understanding the context around security data in your cloud environment.

- Broaden your scope: Set up your VM processes so that you can detect more than just vulnerabilities in the cloud — you want to be able to see misconfigurations and issues with IAM permissions, too.

- Understand the environment: When you identify a vulnerable instance, identify if it is publicly accessible and what its business application is — this will help you determine the scope of the vulnerability.

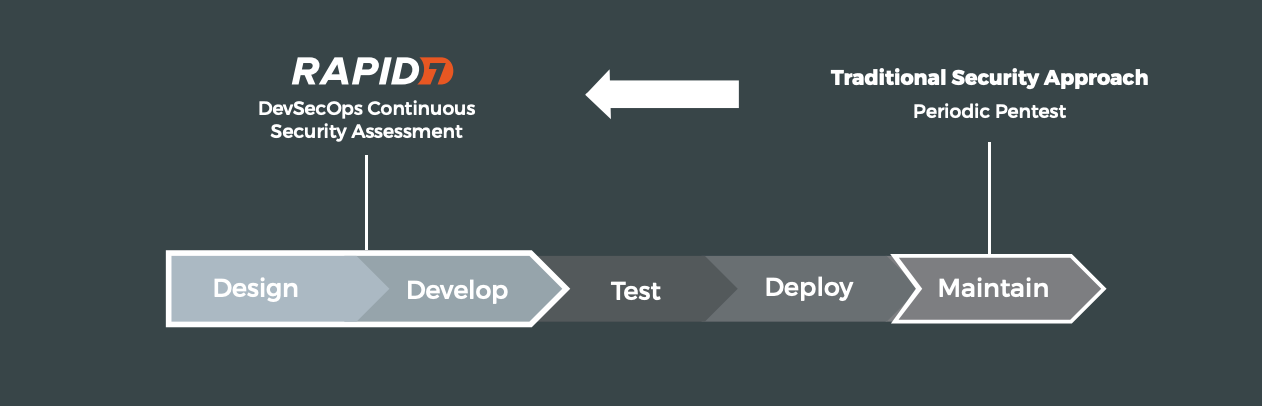

- Catch early: Aim to find and fix vulnerabilities in production or pre-production by shifting security left, earlier in the development cycle.

4 best practices for context-driven cloud security

Once you’re able to better understand the context around security data in your environment, how do you fit those insights into a holistic cloud security strategy? For Lee, this comes down to four key components that make up the framework for cloud-native security.

1. Visibility and findings

You can’t secure what you can’t see — so the first step in this process is to take a full inventory of your attack surface. With different kinds of cloud resources in place and providers releasing new services frequently, understanding the security posture of these pieces of your infrastructure is critical. This includes understanding not just vulnerabilities and misconfigurations but also access, permissions, and identities.

“Understanding the layer from the infrastructure to the workload to the identity can provide a lot of confidence,” Lee said.

2. Contextual prioritization

Not everything you discover in this inventory will be of equal importance, and treating it all the same way just isn’t practical or feasible. The vast amount of data that companies collect today can easily overwhelm security analysts — and this is where context really comes in.

With integrated visibility across your cloud infrastructure, you can make smarter decisions about what risks to prioritize. Then, you can assign ownership to resource owners and help them understand how those priorities were identified, improving transparency and promoting trust.
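As a rough illustration of contextual prioritization, the sketch below adjusts a base severity using the kinds of context discussed above (public exposure, business criticality, IAM permissions) and records the reason for each adjustment so resource owners can see how a priority was reached. The `Finding` fields, the `prioritize` function, the resource names, and the weights are all hypothetical, chosen only for this example; they are not taken from the talks or from any Rapid7 product.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Finding:
    resource_id: str
    severity: float              # base severity on a 0-10 scale
    publicly_accessible: bool
    business_critical: bool
    overly_permissive_iam: bool


def prioritize(finding: Finding) -> Tuple[float, List[str]]:
    """Return (score, reasons). The multipliers are illustrative assumptions."""
    score = finding.severity
    reasons = [f"base severity {finding.severity}"]
    if finding.publicly_accessible:
        score *= 1.5
        reasons.append("publicly accessible (x1.5)")
    if finding.business_critical:
        score *= 1.3
        reasons.append("business-critical application (x1.3)")
    if finding.overly_permissive_iam:
        score *= 1.2
        reasons.append("overly permissive IAM permissions (x1.2)")
    return round(score, 2), reasons


# Hypothetical inventory: a severe but isolated host, and a moderate but exposed one.
findings = [
    Finding("i-internal-batch", 9.0, False, False, False),
    Finding("i-public-checkout", 6.0, True, True, False),
]

# Highest contextual score first.
ranked = sorted(findings, key=lambda f: prioritize(f)[0], reverse=True)
```

Under these assumed weights, the publicly accessible, business-critical instance ranks above the instance with the higher raw severity, which is the kind of reordering that context is meant to produce.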

3. Prevent and automate

The cloud is built with automation in mind through Infrastructure as Code — and this plays a key role in security. Automation can help boost efficiency by minimizing the time it takes to detect, remediate, or contain threats. A shift-left strategy can also help with prevention by building security into deployment pipelines, so production teams can identify vulnerabilities earlier.

Jane echoed this sentiment in her talk, recommending that companies “automate to enable — but not force — remediation” and use tagging to drive remediation of vulnerabilities found running in production.
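One way to picture tag-driven remediation is sketched below: findings are grouped by an ownership tag on each resource, and a proposed fix is queued for the owning team rather than applied automatically. The `owner` tag key, the finding records, and the `route_by_owner` helper are hypothetical and exist only for this example.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical findings from resources running in production.
findings = [
    {"resource": "i-0a1", "issue": "outdated openssl", "tags": {"owner": "payments"}},
    {"resource": "i-0b2", "issue": "open SSH to 0.0.0.0/0", "tags": {"owner": "payments"}},
    {"resource": "i-0c3", "issue": "outdated kernel", "tags": {}},
]


def route_by_owner(items: List[dict], tag_key: str = "owner") -> Dict[str, List[str]]:
    """Group proposed fixes by the team named in each resource's tags."""
    queues: Dict[str, List[str]] = defaultdict(list)
    for item in items:
        # Untagged resources go to a catch-all queue for triage.
        owner = item["tags"].get(tag_key, "unassigned")
        # Only a proposal is recorded here; no resource is modified.
        queues[owner].append(f"{item['resource']}: {item['issue']}")
    return dict(queues)


queues = route_by_owner(findings)
```

Because the function only builds per-team queues, the owning team still decides when and how to remediate, in keeping with the "enable, but not force" recommendation.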

4. Runtime monitoring

The next step is to continually monitor the environment for vulnerabilities and threat activity — and as you might have guessed, monitoring looks a little different in the cloud. For Lee, it’s about leveraging the increased number of signals to understand if there’s any drift away from the way the service was originally configured.

He also recommended using behavioral analysis to detect threat activity and setting up purpose-built detections that are specific to cloud infrastructure. This will help ensure the security operations center (SOC) has the most relevant information possible, so they can perform more effective investigations.
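The drift check described above can be sketched as a comparison between the configuration a service was originally deployed with and the configuration observed at runtime. This is a minimal example under stated assumptions: the setting names, the two dictionaries, and the `detect_drift` helper are hypothetical, and a real implementation would read both sides from Infrastructure as Code definitions and the cloud provider's APIs.

```python
from typing import Any, Dict, Tuple


def detect_drift(baseline: Dict[str, Any], current: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (expected, actual)} for every setting that differs.

    A setting missing on one side is reported with None on that side.
    """
    drift = {}
    for key in sorted(baseline.keys() | current.keys()):
        expected = baseline.get(key)
        actual = current.get(key)
        if expected != actual:
            drift[key] = (expected, actual)
    return drift


# Hypothetical storage bucket settings: as originally configured vs. as observed now.
baseline = {"public_access": False, "encryption": "aws:kms", "versioning": True}
current = {"public_access": True, "encryption": "aws:kms", "versioning": True, "logging": False}

drift = detect_drift(baseline, current)
```

Here `drift` flags that `public_access` changed from `False` to `True` and that a `logging` setting appeared that was not in the baseline; either signal could be forwarded to the SOC as a cloud-specific detection.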

Lee stressed that in order to carry out the core components of cloud security and achieve the outcomes companies are looking for, having an integrated ecosystem is absolutely essential. This will help prevent data from becoming siloed, enable security pros to obtain that ever-important context around their data, and let teams collaborate with less friction.

Looking for more insights on how to adapt your security program to a cloud-native world? Check out Lee’s presentation on demand, or watch our replays of Rapid7 speakers’ sessions from RSAC 2022.
