Post Syndicated from Miklos Csecsi original https://blog.rapid7.com/2024/03/07/securing-the-next-level-automated-cloud-defense-in-game-development-with-insightcloudsec/

Imagine the following scenario: You’re about to enjoy a strategic duel on chess.com or dive into an intense battle in Fortnite, but as you log in, you find your hard-earned achievements, ranks, and reputation have vanished into thin air. This is not just a hypothetical scenario but a real possibility in today’s cloud gaming landscape, where a single security breach can undo years of dedication and achievement.

Cloud gaming, powered by giants like AWS, is transforming the gaming industry, offering unparalleled accessibility and dynamic gaming experiences. Yet with this technological leap forward comes an increase in cyber threats. The gaming world has already witnessed significant security breaches, such as the theft of GTA5 source code and Activision’s recurring security incidents, highlighting the lurking dangers in this digital arena.

In this landscape, securing cloud-based games isn’t just an additional feature; it’s an absolute necessity. As we examine the intricate world of cloud gaming, the role of comprehensive security solutions becomes increasingly vital. In the following sections, we will explore how Rapid7’s InsightCloudSec can help secure cloud infrastructure and CI/CD processes in game development, safeguarding the integrity and continuity of our virtual gaming experiences.

Challenges in Cloud-Based Game Development

Picture this: You’re a game developer, immersed in creating the next big title in cloud gaming. Your team is buzzing with creativity, coding, and testing. Then, out of the blue, you’re hit by a cyberattack much like the one that rocked CD Projekt Red in 2021. Imagine the chaos: systems encrypted by ransomware, source code for titles such as Cyberpunk 2077 and The Witcher 3 stolen, and confidential data falling into the wrong hands. This scenario is far from fiction in today’s digital gaming landscape.

What Does This Kind of Attack Really Mean for a Game Development Team?

The Network Weak Spot: It’s like leaving the back door open while you focus on the front; hackers can sneak in through network gaps we never knew existed. That’s what might have happened with CD Projekt Red. A more fortified network could have been their digital moat.

When Data Gets Held Hostage: It’s one thing to secure your castle, but what about safeguarding the treasures inside? The CD Projekt Red incident showed us how vital it is to keep our source code and internal documents under lock and key, digitally speaking.

The Safety Net: CD Projekt Red reported that its backups remained intact, which allowed it to begin restoring data instead of giving in to the attackers’ demands. A robust backup system is like having a safety net when you’re walking a tightrope: you hope you won’t need it, but you’ll be glad it’s there if you do.

This is where a solution like Rapid7’s InsightCloudSec comes into play. It’s not just about building higher walls; it’s about smarter, more responsive defense systems. Think of it as having a digital watchdog that’s always on guard, sniffing out threats, and barking alarms at the first sign of trouble.

With tools that watch over your cloud infrastructure, monitor every digital move in real time, and keep your compliance game strong, you’re not just creating games; you’re also playing the ultimate game of digital security – and winning.

Navigating Cloud Security in Game Development: An Artful Approach

In the realm of cloud-based game development, mastering AWS services’ security nuances transcends mere technical skill – it’s akin to painting a masterpiece. Let’s embark on a journey through the essential AWS services like EC2, S3, Lambda, CloudFront, and RDS, with a keen focus on their security features – our guardians in the digital expanse.

Consider Amazon EC2 as the infrastructure’s backbone, hosting the very servers that breathe life into games. Here, Security Groups act as discerning gatekeepers, meticulously managing which traffic gets in and out. They’re not just gatekeepers but wise ones: because security groups are stateful, they remember allowed connections and automatically let the return traffic through, ensuring a seamless yet secure flow.
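As a small illustration of the gatekeeper role, the Python sketch below flags ports from a hypothetical sensitive set that are opened to any IPv4 address. It assumes rule dictionaries shaped like the `IpPermissions` entries returned by the EC2 `DescribeSecurityGroups` API (`IpProtocol`, `FromPort`, `ToPort`, `IpRanges[].CidrIp`); `SENSITIVE_PORTS` and `find_open_ingress` are illustrative names, and IPv6 ranges are ignored for brevity.

```python
from typing import Any

# Hypothetical set of ports that should never be reachable from 0.0.0.0/0.
SENSITIVE_PORTS = {22, 3389, 3306, 5432}


def find_open_ingress(permissions: list[dict[str, Any]]) -> list[int]:
    """Return sensitive ports exposed to any IPv4 address.

    `permissions` mirrors the IpPermissions list from DescribeSecurityGroups.
    """
    exposed = set()
    for perm in permissions:
        open_to_world = any(
            r.get("CidrIp") == "0.0.0.0/0" for r in perm.get("IpRanges", [])
        )
        if not open_to_world:
            continue
        # IpProtocol "-1" means all protocols and therefore all ports.
        if perm.get("IpProtocol") == "-1":
            exposed.update(SENSITIVE_PORTS)
            continue
        lo, hi = perm.get("FromPort", 0), perm.get("ToPort", 65535)
        exposed.update(p for p in SENSITIVE_PORTS if lo <= p <= hi)
    return sorted(exposed)


rules = [
    {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
     "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},   # public HTTPS: acceptable
    {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
     "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},   # SSH open to the world
    {"IpProtocol": "tcp", "FromPort": 5432, "ToPort": 5432,
     "IpRanges": [{"CidrIp": "10.0.0.0/16"}]},  # database, VPC-internal only
]
print(find_open_ingress(rules))  # [22]
```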

Amazon S3 stands as our digital vault, safeguarding data with precision-crafted bucket policies. These policies aren’t just rules; they’re declarations of trust, dictating who can glimpse or alter the stored treasures. History is littered with tales of misconfigured, publicly readable buckets leaking sensitive data, so precision here is paramount.
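To show what that precision means in practice, this Python sketch flags bucket-policy statements that allow access to everyone (`"Principal": "*"` or `{"AWS": "*"}`) without a `Condition`. It is a deliberate simplification: it does not evaluate conditions, `Deny` statements, or S3 Block Public Access settings, and `public_statements` is a hypothetical helper.

```python
import json
from typing import Any


def _is_public_principal(principal: Any) -> bool:
    """Principal may be "*", {"AWS": "*"}, or {"AWS": [..., "*"]}."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        aws = principal.get("AWS", [])
        aws = [aws] if isinstance(aws, str) else aws
        return "*" in aws
    return False


def public_statements(policy_json: str) -> list[str]:
    """Return Sids of Allow statements open to everyone with no Condition.

    Simplification: conditions, Deny statements, and Block Public Access
    settings are not evaluated.
    """
    statements = json.loads(policy_json).get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    flagged = []
    for i, stmt in enumerate(statements):
        if (stmt.get("Effect") == "Allow"
                and _is_public_principal(stmt.get("Principal"))
                and "Condition" not in stmt):
            flagged.append(stmt.get("Sid", f"statement-{i}"))
    return flagged


policy = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "PublicRead", "Effect": "Allow", "Principal": "*",
         "Action": "s3:GetObject", "Resource": "arn:aws:s3:::game-assets/*"},
        {"Sid": "CloudFrontOnly", "Effect": "Allow",
         "Principal": {"Service": "cloudfront.amazonaws.com"},
         "Action": "s3:GetObject", "Resource": "arn:aws:s3:::game-assets/*",
         "Condition": {"StringEquals": {
             "AWS:SourceArn": "arn:aws:cloudfront::123456789012:distribution/EXAMPLE"}}},
    ],
})
print(public_statements(policy))  # ['PublicRead']
```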

Lambda functions emerge as the silent virtuosos of serverless architecture, empowering game backends with their scalable might. Yet that power must be wielded judiciously: each function should run under an execution role scoped by the principle of least privilege, granting only the permissions it actually needs and minimizing the shadow of vulnerability.
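Least privilege is easier to enforce when broad grants are caught early. The Python sketch below, assuming standard IAM policy JSON, flags `Allow` statements that use `"*"` or `"service:*"` actions or a `"*"` resource. The `overly_broad` helper and the example leaderboard policy are hypothetical, and the check ignores `NotAction`, `NotResource`, and conditions.

```python
from typing import Any


def _as_list(value: Any) -> list:
    """IAM accepts either a single value or a list in most fields."""
    return value if isinstance(value, list) else [value]


def overly_broad(policy: dict[str, Any]) -> list[str]:
    """Flag Allow statements with wildcard actions or resources."""
    findings = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        for action in _as_list(stmt.get("Action", [])):
            # "*" grants everything; "service:*" grants every action of a service.
            if action == "*" or action.endswith(":*"):
                findings.append(f"wildcard action: {action}")
        if "*" in _as_list(stmt.get("Resource", [])):
            findings.append("wildcard resource: *")
    return findings


# Hypothetical execution-role policy for a leaderboard function.
leaderboard_role_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow",
         "Action": ["dynamodb:GetItem", "dynamodb:PutItem"],
         "Resource": "arn:aws:dynamodb:eu-west-1:123456789012:table/Leaderboard"},
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},  # far too broad
    ],
}
print(overly_broad(leaderboard_role_policy))
# ['wildcard action: s3:*', 'wildcard resource: *']
```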

Amazon CloudFront, our swift courier, ensures game content flies across the globe securely and at breakneck speed. Every distribution is covered by AWS Shield Standard at no extra cost, and AWS Shield Advanced adds enhanced detection, mitigation, and response support against larger DDoS onslaughts, helping keep game delivery both rapid and resilient.
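Shield protection applies at the service level, but delivering content “securely” also depends on how each distribution treats plain HTTP. As a sketch, assuming a dictionary shaped like a CloudFront `DistributionConfig` (`DefaultCacheBehavior`, `CacheBehaviors.Items`, `ViewerProtocolPolicy`), the hypothetical helper below lists cache behaviors that still accept unencrypted HTTP (`allow-all`) instead of `redirect-to-https` or `https-only`.

```python
from typing import Any


def insecure_behaviors(distribution_config: dict[str, Any]) -> list[str]:
    """Return path patterns whose ViewerProtocolPolicy still allows plain HTTP."""
    flagged = []
    default = distribution_config.get("DefaultCacheBehavior", {})
    if default.get("ViewerProtocolPolicy") == "allow-all":
        flagged.append("(default)")
    for behavior in distribution_config.get("CacheBehaviors", {}).get("Items", []):
        if behavior.get("ViewerProtocolPolicy") == "allow-all":
            flagged.append(behavior.get("PathPattern", "?"))
    return flagged


config = {
    "DefaultCacheBehavior": {"ViewerProtocolPolicy": "redirect-to-https"},
    "CacheBehaviors": {
        "Quantity": 2,
        "Items": [
            {"PathPattern": "/patches/*", "ViewerProtocolPolicy": "allow-all"},
            {"PathPattern": "/assets/*", "ViewerProtocolPolicy": "https-only"},
        ],
    },
}
print(insecure_behaviors(config))  # ['/patches/*']
```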

Amazon RDS, the fortress for player data, automates mundane yet crucial tasks such as backups, patching, and storage scaling, freeing developers to craft experiences. It whispers secrets only to those meant to hear, supporting robust encryption both at rest and in transit.
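These safeguards can be audited with a few attribute checks. This Python sketch assumes instance dictionaries shaped like `DescribeDBInstances` output (`StorageEncrypted`, `PubliclyAccessible`, `BackupRetentionPeriod`, where a retention of `0` disables automated backups); the seven-day minimum is a hypothetical team policy. In-transit enforcement is configured through DB parameter groups and is not covered here.

```python
from typing import Any


def rds_findings(instance: dict[str, Any], min_retention_days: int = 7) -> list[str]:
    """Check one DescribeDBInstances entry against a few baseline settings."""
    name = instance.get("DBInstanceIdentifier", "?")
    findings = []
    if not instance.get("StorageEncrypted", False):
        findings.append(f"{name}: storage not encrypted at rest")
    if instance.get("PubliclyAccessible", False):
        findings.append(f"{name}: publicly accessible")
    retention = instance.get("BackupRetentionPeriod", 0)
    if retention == 0:
        findings.append(f"{name}: automated backups disabled")
    elif retention < min_retention_days:
        findings.append(f"{name}: backup retention {retention}d < {min_retention_days}d")
    return findings


player_db = {
    "DBInstanceIdentifier": "player-db",
    "StorageEncrypted": False,
    "PubliclyAccessible": False,
    "BackupRetentionPeriod": 1,
}
for finding in rds_findings(player_db):
    print(finding)
```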

Visibility and vigilance form the bedrock of our security ethos. With AWS CloudTrail recording API activity and Amazon CloudWatch collecting metrics, logs, and alarms, our gaze extends across every corner of our domain, ever watchful for anomalies, ready to act with precision and alacrity.
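As an illustration of that vigilance, the Python sketch below scans a CloudTrail log file (a JSON object with a `Records` array) for a hypothetical watch list of sensitive `eventName` values and reports who made each call. In production, CloudWatch metric filters or EventBridge rules would usually do this matching; the sketch only shows the logic.

```python
import json

# Hypothetical watch list of API calls that deserve an immediate alert.
WATCHED = {
    "StopLogging", "DeleteTrail",        # tampering with CloudTrail itself
    "AuthorizeSecurityGroupIngress",     # opening network access
    "PutBucketPolicy", "PutBucketAcl",   # changing S3 access rules
}


def alerts(log_file_json: str) -> list[tuple[str, str]]:
    """Return (eventName, caller ARN) pairs for watched events in one log file."""
    records = json.loads(log_file_json).get("Records", [])
    return [
        (r["eventName"], r.get("userIdentity", {}).get("arn", "unknown"))
        for r in records
        if r.get("eventName") in WATCHED
    ]


sample = json.dumps({"Records": [
    {"eventName": "GetObject",
     "userIdentity": {"arn": "arn:aws:iam::123456789012:user/player-svc"}},
    {"eventName": "StopLogging",
     "userIdentity": {"arn": "arn:aws:iam::123456789012:user/ci-bot"}},
    {"eventName": "AuthorizeSecurityGroupIngress",
     "userIdentity": {"arn": "arn:aws:iam::123456789012:user/dev"}},
]})
for name, who in alerts(sample):
    print(name, who)
```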

Encryption serves as our silent sentinel, a protective veil over data, whether nestled in S3’s embrace or traversing the vastness to and from EC2 and RDS. It’s our unwavering shield against the curious and the malevolent alike.

In weaving the security measures of these AWS services into the fabric of game development, we engage not in mere procedure but in the creation of a secure tapestry that envelops every facet of the development journey. In the vibrant, ever-evolving landscape of game creation, fortifying our cloud infrastructure with a security-first mindset is not just a technical endeavor – it’s a strategic masterpiece, ensuring our games are not only a source of joy but bastions of privacy and security in the cloud.

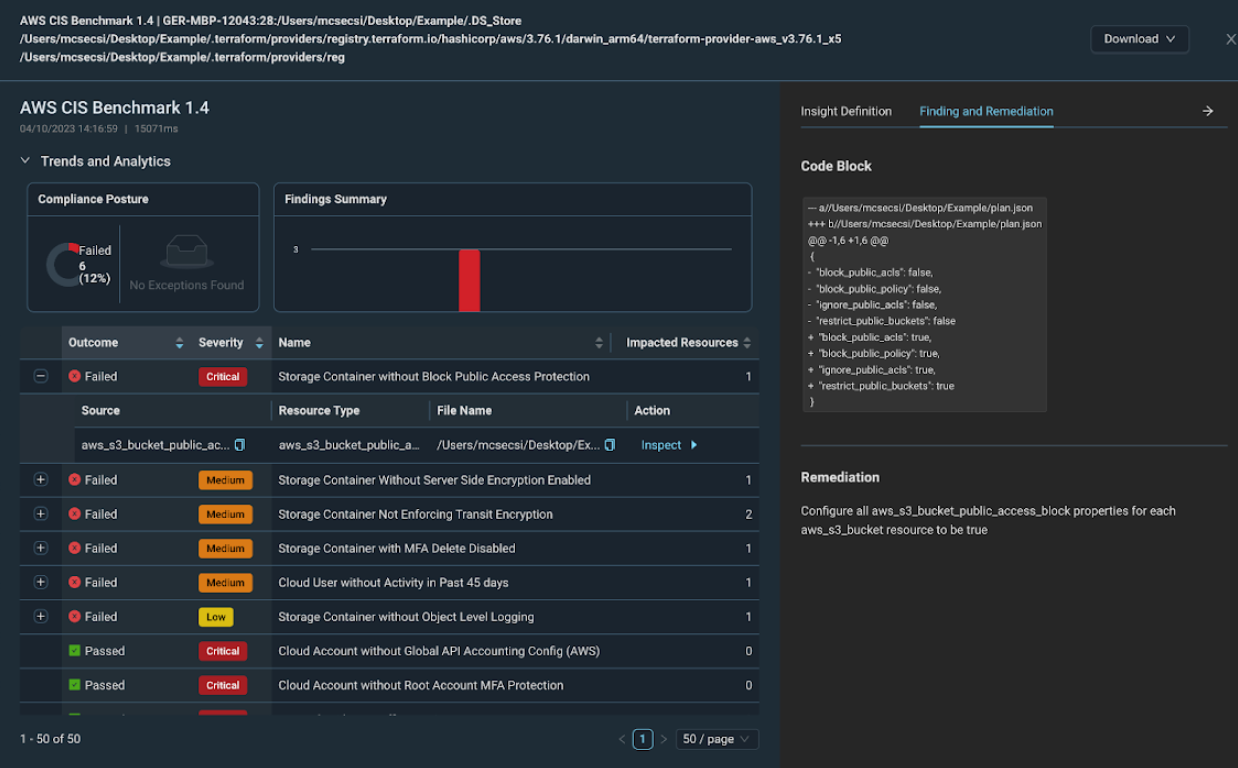

Automated Cloud Security with InsightCloudSec

When it comes to deploying a game in the cloud, understanding and implementing automated security is paramount. This is where Rapid7’s InsightCloudSec takes center stage, revolutionizing how game developers secure their cloud environments with a focus on automation and real-time monitoring.

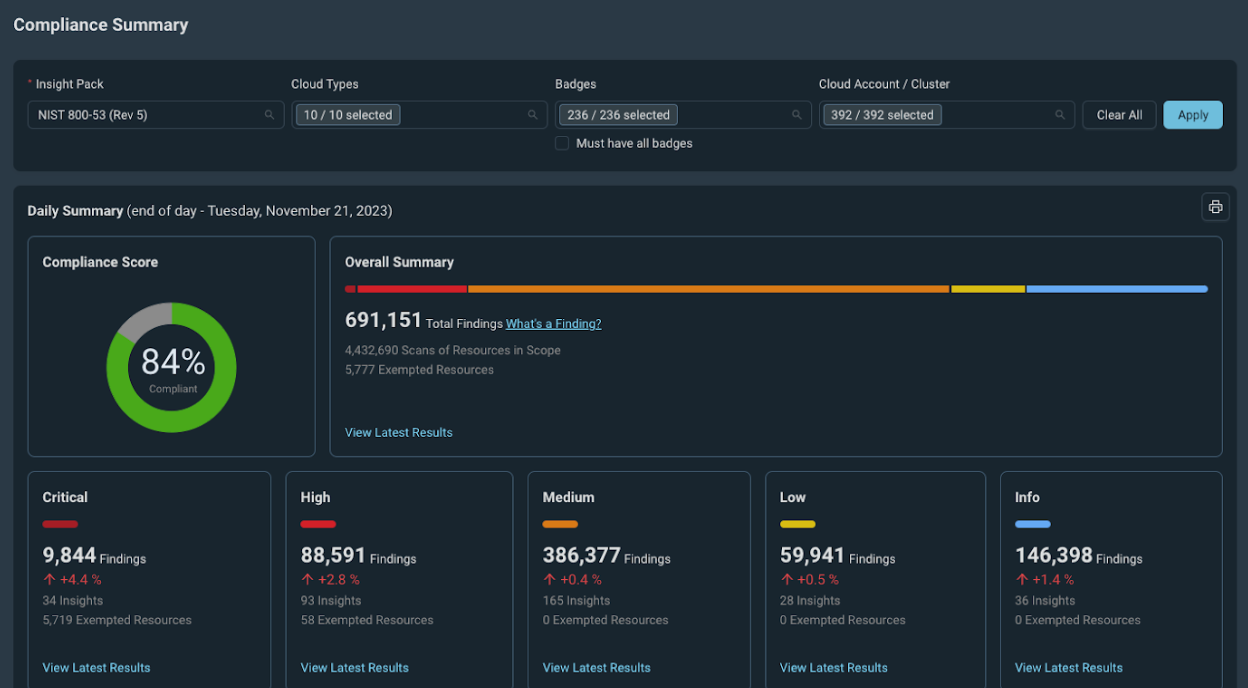

Data Harvesting Strategies

InsightCloudSec differentiates itself through its innovative approach to data collection and analysis, employing two primary methods: API harvesting and Event Driven Harvesting (EDH). Initially, InsightCloudSec utilizes the API method, where it directly calls cloud provider APIs to gather essential platform information. This process enables InsightCloudSec to populate its platform with critical data, which is then unified into a cohesive dataset. For example, disparate storage solutions from AWS, Azure, and GCP are consolidated under a generic “Storage” category, while compute instances are unified as “Instances.” This normalization allows for the creation of universal compliance packs that can be applied across multiple cloud environments, enhancing the platform’s efficiency and coverage.
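The normalization step can be pictured as a lookup from provider-native resource types to generic categories, so that one rule covers all three clouds. In this Python sketch the type strings are the providers’ own identifiers (CloudFormation, Azure Resource Manager, and Google Cloud Asset Inventory), but the `CATEGORY` map, the `Resource` fields, and the public-storage rule are hypothetical and do not describe InsightCloudSec’s internal data model.

```python
from dataclasses import dataclass

# Hypothetical mapping from provider-native type names to generic categories.
CATEGORY = {
    "AWS::S3::Bucket": "Storage",
    "Microsoft.Storage/storageAccounts": "Storage",
    "storage.googleapis.com/Bucket": "Storage",
    "AWS::EC2::Instance": "Instances",
    "Microsoft.Compute/virtualMachines": "Instances",
    "compute.googleapis.com/Instance": "Instances",
}


@dataclass
class Resource:
    cloud: str
    native_type: str
    name: str
    public: bool

    @property
    def category(self) -> str:
        return CATEGORY.get(self.native_type, "Other")


def public_storage(resources: list[Resource]) -> list[str]:
    """A single provider-agnostic rule evaluated against every cloud."""
    return [f"{r.cloud}:{r.name}" for r in resources
            if r.category == "Storage" and r.public]


inventory = [
    Resource("aws", "AWS::S3::Bucket", "game-assets", False),
    Resource("azure", "Microsoft.Storage/storageAccounts", "replays", True),
    Resource("gcp", "storage.googleapis.com/Bucket", "crash-dumps", True),
    Resource("aws", "AWS::EC2::Instance", "match-server-1", True),  # not storage
]
print(public_storage(inventory))  # ['azure:replays', 'gcp:crash-dumps']
```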

However, the real game-changer is Rapid7’s implementation of EDH. Unlike the traditional API pull method, EDH leverages serverless functions within the customer’s cloud environment to ingest security event data and configuration changes in real time. This data is then pushed to the InsightCloudSec platform, significantly reducing costs and increasing the speed of data acquisition. For AWS environments, this means event information can be updated in near real time, within 60 seconds, and within two to three minutes for Azure and GCP. This is a stark contrast to the hourly or daily update cycles of many other cloud security platforms, setting InsightCloudSec apart as a leader in real-time cloud security monitoring.
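The push model behind event-driven harvesting can be sketched as a small Lambda-style function triggered by Amazon EventBridge. Assuming the standard EventBridge envelope for CloudTrail API events (a `detail-type` of `AWS API Call via CloudTrail`, with `eventSource` and `eventName` under `detail`), the handler below reduces each event to a compact change record and hands it to a `push` callable. This is a hypothetical illustration, not Rapid7’s EDH code; `push` stands in for whatever transport ships data to the platform.

```python
import json
from typing import Any, Callable, Optional


def to_change_record(event: dict[str, Any]) -> Optional[dict[str, Any]]:
    """Reduce an EventBridge CloudTrail API event to a compact change record."""
    if event.get("detail-type") != "AWS API Call via CloudTrail":
        return None  # ignore scheduled events, health events, etc.
    detail = event.get("detail", {})
    return {
        "account": event.get("account"),
        "region": event.get("region"),
        "service": detail.get("eventSource"),
        "action": detail.get("eventName"),
        "time": event.get("time"),
    }


def make_handler(push: Callable[[str], None]) -> Callable[[dict, Any], dict]:
    """Build a Lambda-style handler(event, context).

    `push` is a hypothetical stand-in for the call that ships the record
    to the security platform (HTTP, queue, etc.).
    """
    def handler(event: dict, context: Any) -> dict:
        record = to_change_record(event)
        if record is not None:
            push(json.dumps(record))
        return {"forwarded": record is not None}
    return handler


# Usage: collect pushed records in a list instead of sending them anywhere.
sent: list[str] = []
handler = make_handler(sent.append)
event = {
    "detail-type": "AWS API Call via CloudTrail",
    "source": "aws.ec2",
    "account": "123456789012",
    "region": "eu-west-1",
    "time": "2024-03-07T10:15:00Z",
    "detail": {"eventSource": "ec2.amazonaws.com",
               "eventName": "AuthorizeSecurityGroupIngress"},
}
print(handler(event, None))  # {'forwarded': True}
```

Because the function runs only when a change occurs, data freshness is bounded by event delivery latency rather than by a polling interval.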

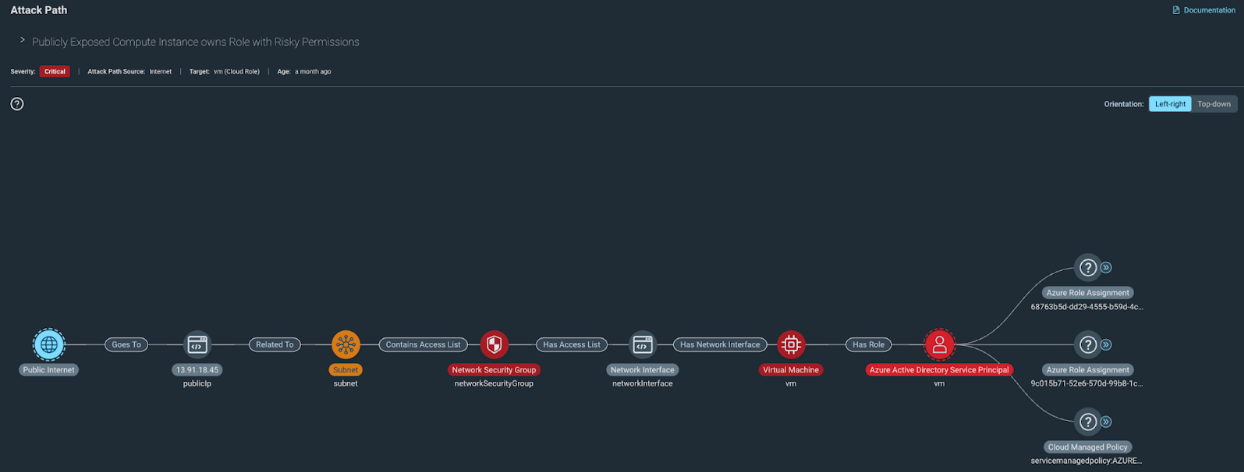

Automated Remediation with InsightCloudSec Bots

The integration of near-real-time event information from Event Driven Harvesting (EDH) with InsightCloudSec’s advanced bot automation features equips developers with a formidable toolset for safeguarding cloud environments. This combination not only flags vulnerable configurations but also facilitates automatic remediation within minutes, a critical capability for maintaining a pristine cloud ecosystem. InsightCloudSec’s bots go beyond mere detection; they proactively manage misconfigurations and vulnerabilities across virtual machines and containers, ensuring the cloud space is both secure and compliant.

The versatility of these bots is remarkable. Developers have the flexibility to define the scope of the bot’s actions, allowing changes to be applied across one or multiple accounts. This granular control ensures that automated security measures are aligned with the specific needs and architecture of the cloud environment.

Moreover, the timing of these interventions can be finely tuned. Whether responding to a set schedule or reacting to specific events – such as the creation, modification, or deletion of resources – the bots are adept at addressing security concerns at the most opportune moments. This responsiveness is especially beneficial in dynamic cloud environments where changes are frequent and the security landscape is constantly evolving.
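Scope and timing together decide when a bot fires. The Python sketch below models that decision with a hypothetical `Bot` dataclass holding the accounts in scope, the triggers it reacts to (resource creation, modification, deletion, or a schedule), and the resource type it watches. It is an illustrative model only, not InsightCloudSec’s bot configuration format.

```python
from dataclasses import dataclass


@dataclass
class Bot:
    """Hypothetical bot definition: where it acts, when, and on what."""
    name: str
    accounts: set[str]   # scope: one or many cloud accounts
    triggers: set[str]   # e.g. {"created", "modified", "deleted", "schedule"}
    resource_type: str

    def should_run(self, account: str, trigger: str, resource_type: str) -> bool:
        return (account in self.accounts
                and trigger in self.triggers
                and resource_type == self.resource_type)


def bots_to_run(bots: list[Bot], account: str, trigger: str,
                resource_type: str) -> list[str]:
    """Names of the bots that should react to this event."""
    return [b.name for b in bots if b.should_run(account, trigger, resource_type)]


bots = [
    # Event-driven: react immediately to security-group changes in two accounts.
    Bot("close-open-sg", {"111111111111", "222222222222"},
        {"created", "modified"}, "SecurityGroup"),
    # Scheduled: nightly tag audit of instances in a single account.
    Bot("nightly-tag-audit", {"111111111111"}, {"schedule"}, "Instance"),
]
print(bots_to_run(bots, "222222222222", "modified", "SecurityGroup"))  # ['close-open-sg']
```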

The actions undertaken by InsightCloudSec’s bots are diverse and impactful. According to the extensive list of sample bots provided by Rapid7, these automated guardians can, for example:

- Automatically tag resources lacking proper identification, ensuring that all elements within the cloud are categorized and easily manageable

- Enforce compliance by identifying and rectifying resources that do not adhere to established security policies, such as unencrypted databases or improperly configured networks

- Remediate exposed resources by adjusting security group settings to prevent unauthorized access, a crucial step in safeguarding sensitive data

- Monitor and manage excessive permissions, scaling back unnecessary access rights to adhere to the principle of least privilege, thereby reducing the risk of internal and external threats

- And much more…
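A dry-run version of two of the actions above, tagging and exposure remediation, might look like the following Python sketch. The resource dictionary layout, the `REQUIRED_TAGS` standard, and the rule of allowing only port 443 from `0.0.0.0/0` are hypothetical; the function returns planned steps rather than calling any cloud API.

```python
from typing import Any

REQUIRED_TAGS = {"owner", "game-title"}  # hypothetical tagging standard
ALLOWED_PUBLIC_PORTS = {443}             # hypothetical: only HTTPS may be public


def plan_actions(resource: dict[str, Any]) -> list[str]:
    """Return the remediation steps a bot would take (dry run, no API calls)."""
    rid = resource["id"]
    actions = []
    # 1. Tag resources lacking proper identification.
    missing = REQUIRED_TAGS - set(resource.get("tags", {}))
    for key in sorted(missing):
        actions.append(f"tag {rid} {key}=unassigned")
    # 2. Close ingress rules that expose non-HTTPS ports to the internet.
    for rule in resource.get("ingress", []):
        if rule["cidr"] == "0.0.0.0/0" and rule["port"] not in ALLOWED_PUBLIC_PORTS:
            actions.append(f"revoke {rid} port {rule['port']} from 0.0.0.0/0")
    return actions


sg = {
    "id": "sg-0abc",
    "tags": {"owner": "netops"},
    "ingress": [{"port": 443, "cidr": "0.0.0.0/0"},
                {"port": 22, "cidr": "0.0.0.0/0"}],
}
for step in plan_actions(sg):
    print(step)
```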

This automation, powered by InsightCloudSec, transforms cloud security from a reactive task to a proactive, streamlined process.

By harnessing the power of EDH for real-time data harvesting and leveraging the sophisticated capabilities of bots for immediate action, developers can ensure that their cloud environments are not just reactively protected but are also preemptively fortified against potential vulnerabilities and misconfigurations. This shift towards automated, intelligent cloud security management empowers developers to focus on innovation and development, confident in the knowledge that their infrastructure is secure, compliant, and optimized for the challenges of modern cloud computing.

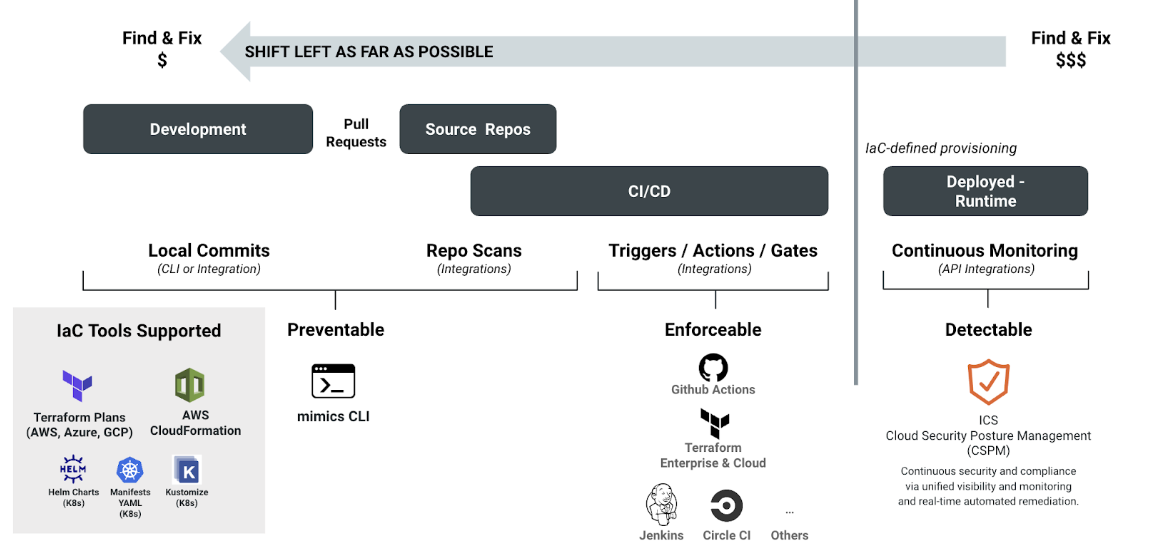

Infrastructure as Code (IaC) Enhanced: Introducing mimics

In the dynamic arena of cloud security, particularly in the bustling sphere of game development, the wisdom of “an ounce of prevention is worth a pound of cure” holds special significance. This is where Infrastructure as Code (IaC) becomes pivotal, and Rapid7’s IaC scanning tool, mimics, elevates this approach to new heights.

The mimics tool, a component of the InsightCloudSec platform, is designed to integrate into any development pipeline, whether you prefer working with executable binaries or thrive in a containerized ecosystem. Its versatility makes it a good fit for various deployment strategies and an indispensable tool for developers aiming to embed security directly into their infrastructure deployment process.

The primary goal of mimics is to identify vulnerabilities and misconfigurations before the infrastructure is even created, embodying a proactive stance on security. This foresight is crucial in game development, where the stakes are high and the digital landscape is constantly shifting. By catching issues at this early stage, developers can ensure that their games are built on a secure foundation, free of the common pitfalls that could jeopardize their work in the future.

Upon completing its analysis, mimics presents its findings in a variety of formats suited to different teams’ needs, including HTML, SARIF, and XML. This flexibility ensures that the results are not just comprehensive but also accessible, allowing teams to swiftly understand and address any identified issues. The results are also pushed to the InsightCloudSec platform, where they contribute to a broader overview of the security posture and offer actionable insights into potential misconfigurations.

But the capabilities of the InsightCloudSec platform extend even further. Within this environment, developers can craft custom configurations, tailoring the security checks to fit the unique requirements of their projects. This feature is particularly valuable for teams looking to go beyond standard security measures and aiming for a level of infrastructure hardening that is both rigorous and bespoke. These custom configurations let developers establish a robust, nuanced baseline of static security checks aligned with the specific needs of their game-development projects.

By leveraging mimics within the InsightCloudSec ecosystem, game developers can not only anticipate and mitigate vulnerabilities before they manifest but also refine their cloud infrastructure with precisely tailored security measures. This proactive, customized approach ensures that the gaming experiences they craft are not only immersive and engaging but also built on a secure, resilient digital foundation.
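To illustrate what a pre-deployment IaC check does, here is a minimal Python sketch, not Rapid7’s implementation, that reads the JSON form of a Terraform plan (as produced by `terraform show -json`), applies a small list of rules to each planned resource, and emits a minimal SARIF 2.1.0 log. The rule IDs, messages, the tool name `iac-sketch`, and the `RULES` list itself are hypothetical; appending your own tuples to `RULES` plays the role of the custom configurations described above.

```python
import json
from typing import Any, Callable

# A rule is (rule id, Terraform resource type, violation predicate, message).
Rule = tuple[str, str, Callable[[dict[str, Any]], bool], str]


def _ssh_open(attrs: dict[str, Any]) -> bool:
    """True if any ingress block exposes port 22 to 0.0.0.0/0."""
    return any(
        "0.0.0.0/0" in (rule.get("cidr_blocks") or [])
        and rule.get("from_port", 0) <= 22 <= rule.get("to_port", 0)
        for rule in attrs.get("ingress") or []
    )


# Hypothetical rule set; custom checks can simply be appended.
RULES: list[Rule] = [
    ("DB001", "aws_db_instance",
     lambda a: not a.get("storage_encrypted", False),
     "RDS instance is not encrypted at rest"),
    ("DB002", "aws_db_instance",
     lambda a: bool(a.get("publicly_accessible", False)),
     "RDS instance is publicly accessible"),
    ("SG001", "aws_security_group", _ssh_open, "SSH is open to the internet"),
]


def scan_plan(plan: dict[str, Any], rules: list[Rule] = RULES) -> dict[str, Any]:
    """Check planned resources and return a minimal SARIF 2.1.0 log."""
    results = []
    for change in plan.get("resource_changes", []):
        after = change.get("change", {}).get("after")
        if after is None:
            continue  # resource is being deleted; nothing to check
        for rule_id, rtype, violates, text in rules:
            if change.get("type") == rtype and violates(after):
                results.append({
                    "ruleId": rule_id,
                    "level": "error",
                    "message": {"text": f"{change.get('address')}: {text}"},
                })
    return {
        "version": "2.1.0",
        "runs": [{"tool": {"driver": {"name": "iac-sketch"}}, "results": results}],
    }


plan = {"resource_changes": [
    {"address": "aws_db_instance.players", "type": "aws_db_instance",
     "change": {"actions": ["create"],
                "after": {"storage_encrypted": False, "publicly_accessible": False}}},
    {"address": "aws_security_group.game_servers", "type": "aws_security_group",
     "change": {"actions": ["create"],
                "after": {"ingress": [{"from_port": 22, "to_port": 22,
                                       "cidr_blocks": ["0.0.0.0/0"]}]}}},
]}
sarif = scan_plan(plan)
print([r["ruleId"] for r in sarif["runs"][0]["results"]])  # ['DB001', 'SG001']
report = json.dumps(sarif, indent=2)  # e.g. write to results.sarif for CI upload
```

Failing the pipeline whenever `results` is non-empty is one simple way to stop a misconfigured resource before it is ever created.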

In summary, Rapid7’s InsightCloudSec offers a comprehensive and automated approach to cloud security, crucial for the dynamic environment of game development. By leveraging both API harvesting and innovative Event Driven Harvesting – along with robust support for Infrastructure as Code – InsightCloudSec ensures that game developers can focus on what they do best: creating engaging and immersive gaming experiences with the knowledge that their cloud infrastructure is secure, compliant, and optimized for performance.

In a forthcoming blog post, we’ll explore the unique security challenges that arise when operating a game in the cloud. We’ll also demonstrate how InsightCloudSec can offer automated solutions to effortlessly maintain a robust security posture.