Post Syndicated from Ryan Niksch original https://aws.amazon.com/blogs/architecture/architecture-patterns-for-red-hat-openshift-on-aws/

Editor’s note: Although this blog post and its accompanying code make use of the word “Master,” Red Hat is making open source code more inclusive by eradicating “problematic language.” Read more about this.

Introduction

Red Hat OpenShift is a turnkey application platform that provides customers with much more than simple Kubernetes orchestration.

OpenShift customers choose AWS as their cloud of choice because of the efficiency, security, reliability, scalability, and elasticity it provides. Customers seeking to modernize their business, process, and application stacks are drawn to the rich AWS service and feature sets.

As such, we see some customers migrate from on-premises to AWS, while others operate in a hybrid context with application workloads running in various locations. For OpenShift customers, this poses a few questions and considerations:

- What are the recommendations for the best way to deploy OpenShift on AWS?

- How is this different from what customers were used to on-premises?

- How does this ensure resilience and availability?

- Do customers need a multi-region, multi-account approach?

For hybrid customers, there are further questions, often rooted in assumptions and misconceptions:

- Where does the control plane exist?

- Is there replication, and if so, what are the considerations and ramifications?

In this post I will run through some of the more common questions and patterns for OpenShift on AWS, while looking at some of the terminology and conceptual differences of AWS. I’ll explore migration and hybrid use cases and address some misconceptions.

OpenShift building blocks

On AWS, OpenShift 4.x is the norm. For that reason, I will focus on OpenShift 4, but many of the considerations will apply to both OpenShift 3 and OpenShift 4.

Let’s unpack some of the OpenShift building blocks. An OpenShift cluster consists of Master, infrastructure, and worker nodes. The Master nodes form the control plane, and the infrastructure nodes cater to a routing layer and additional functions such as logging and monitoring. Worker nodes are the nodes on which customer application container workloads run.

When deployed on-premises, OpenShift nodes will be placed in separate network subnets. Depending on distance, latency, etc., a single OpenShift cluster may span two data centers that have some nodes in a subnet in one data center and other subnets in a different data center. This applies to customers with data centers within a few miles of each other with high-speed connectivity. An alternative would be an OpenShift cluster in each data center.

AWS concepts and terminology

At AWS, the concept of “region” is a geolocation, such as EMEA (Europe, Middle East, and Africa) or APAC (Asia Pacific), rather than a data center or specific building. An Availability Zone (AZ) is the closest construct on AWS that maps to a physical data center. Within each region you will find multiple (typically three or more) AZs. Note that a single AZ can contain multiple physical data centers, but we treat it as a single point of failure: an event that impacts an AZ would be expected to impact all the data centers within that AZ. For this reason, customers should deploy workloads spanning multiple AZs to protect against any event that would impact a single AZ.

Read more about Regions, Availability Zones, and Edge Locations.

Deploying OpenShift

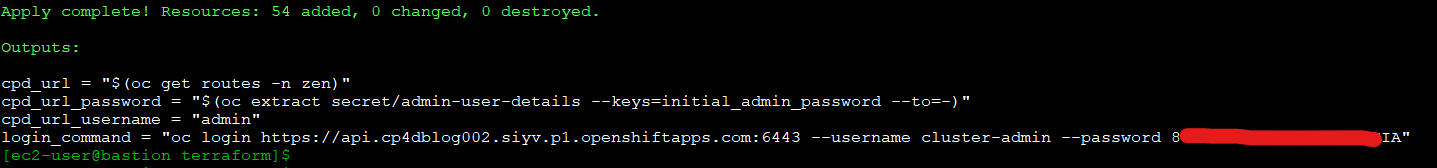

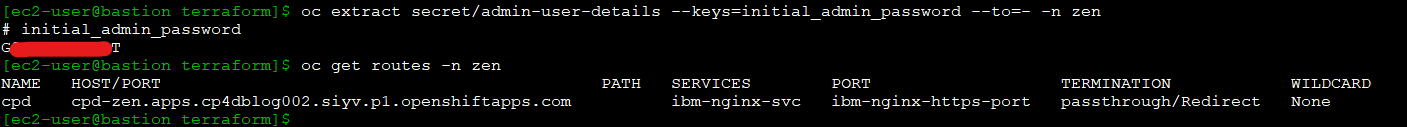

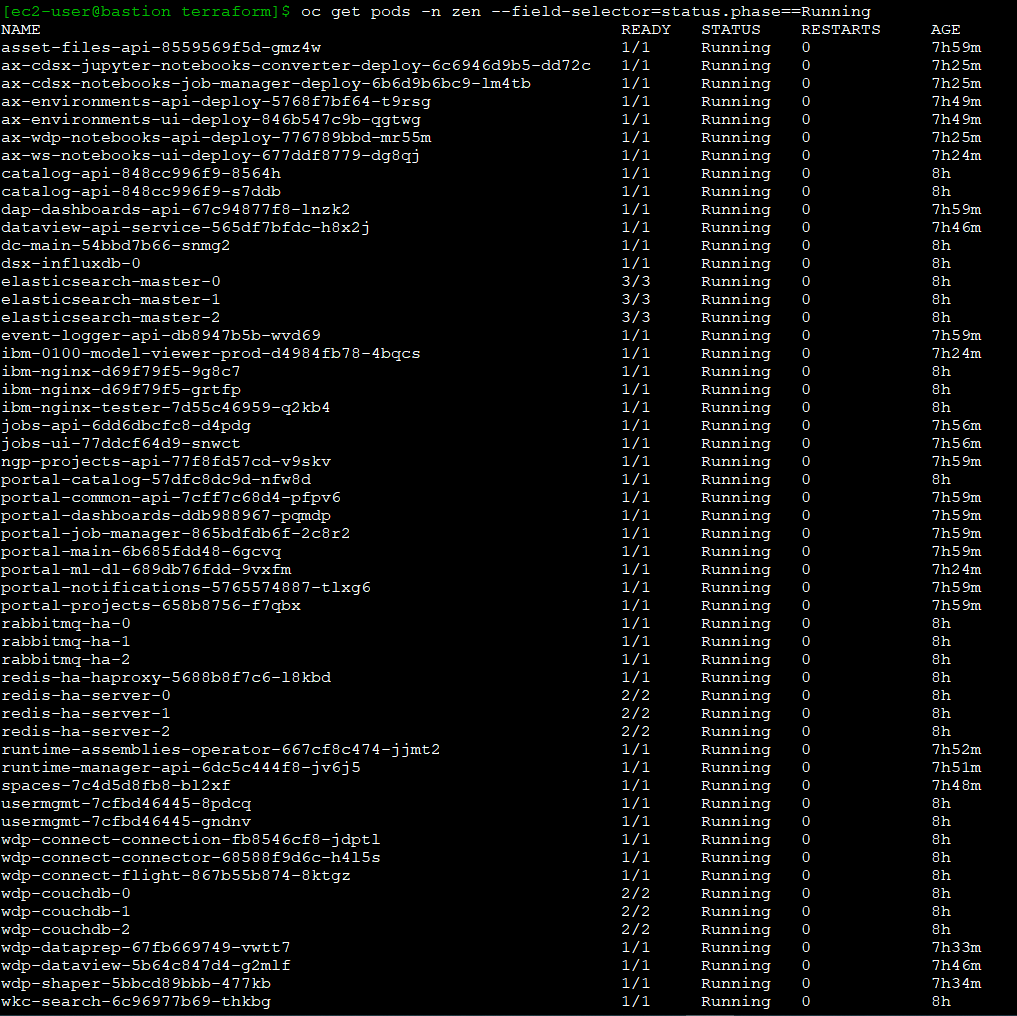

When deploying an OpenShift cluster on AWS, we recommend starting with three Master nodes spread across three AWS AZs and three worker nodes spread across three AZs. This combines the resilience and availability constructs provided by AWS with those of Red Hat OpenShift. The OpenShift installer supports two ways of deploying onto the underlying AWS infrastructure: installer-provisioned infrastructure (IPI) and user-provisioned infrastructure (UPI). Both Red Hat and AWS collect customer feedback and use this to drive recommended patterns that are then included in the OpenShift installer. As such, the OpenShift installer IPI mode becomes a living reference architecture for deploying OpenShift on AWS.
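The three-by-three starting layout can be sketched in a few lines of Python. This is only an illustration of the placement idea: the round-robin assignment, the `spread_across_zones` helper, and the node names are assumptions made for this example, not the installer's actual placement logic.

```python
from collections import Counter
from itertools import cycle, islice


def spread_across_zones(role: str, replicas: int, zones: list[str]) -> list[tuple[str, str]]:
    """Assign each node of a role to an AZ in round-robin order (hypothetical helper)."""
    if replicas < 0:
        raise ValueError("replicas must not be negative")
    if not zones:
        raise ValueError("at least one Availability Zone is required")
    placement = islice(cycle(zones), replicas)
    return [(f"{role}-{index}", zone) for index, zone in enumerate(placement)]


ZONES = ["us-west-2a", "us-west-2b", "us-west-2c"]
masters = spread_across_zones("master", 3, ZONES)
workers = spread_across_zones("worker", 3, ZONES)

# Count nodes per AZ to see how many would be affected if one AZ became unavailable.
nodes_per_zone = Counter(zone for _, zone in masters + workers)

if __name__ == "__main__":
    for name, zone in masters + workers:
        print(f"{name:10s} -> {zone}")
    print(dict(nodes_per_zone))
```

With three Masters and three workers over three AZs, each AZ holds one node of each role, so an event affecting a single AZ leaves two Masters and two workers running.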

The installer will require inputs for the environment on which it’s being deployed. In this case, since I am deploying on AWS, I will need to provide the AWS region, the AZs (or the subnets that relate to the AZs), and the EC2 instance type. The installer will then generate a set of Ignition files that will be used during the deployment of OpenShift. A sample install-config.yaml that captures these inputs follows:

    apiVersion: v1
    baseDomain: example.com
    controlPlane:
      hyperthreading: Enabled
      name: master
      platform:
        aws:
          zones:
          - us-west-2a
          - us-west-2b
          - us-west-2c
          rootVolume:
            iops: 4000
            size: 500
            type: io1
          type: m5.xlarge
      replicas: 3
    compute:
    - hyperthreading: Enabled
      name: worker
      platform:
        aws:
          rootVolume:
            iops: 2000
            size: 500
            type: io1
          type: m5.xlarge
          zones:
          - us-west-2a
          - us-west-2b
          - us-west-2c
      replicas: 3
    metadata:
      name: test-cluster
    networking:
      clusterNetwork:
      - cidr: 10.128.0.0/14
        hostPrefix: 23
      machineNetwork:
      - cidr: 10.0.0.0/16
      networkType: OpenShiftSDN
      serviceNetwork:
      - 172.30.0.0/16
    platform:
      aws:
        region: us-west-2
        userTags:
          adminContact: jdoe
          costCenter: 7536
    pullSecret: '{"auths": ...}'
    fips: false
    sshKey: ssh-ed25519 AAAA...
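Before handing a configuration like the sample above to the installer, a few sanity checks can catch layouts that work against the multi-AZ recommendation. The sketch below is a hypothetical pre-flight helper, not part of the OpenShift installer. It assumes the configuration has already been loaded into a Python dictionary with the same shape as the sample, and that standard AZ names are the region name followed by a single letter.

```python
def check_install_config(config: dict) -> list[str]:
    """Return human-readable findings; an empty list means no issues were found."""
    findings = []
    region = config["platform"]["aws"]["region"]
    pools = [config["controlPlane"], *config["compute"]]
    for pool in pools:
        name = pool["name"]
        zones = pool["platform"]["aws"].get("zones", [])
        # Assumption: a standard AZ name is the region name plus one letter, e.g. us-west-2a.
        outside = [z for z in zones if not (z.startswith(region) and len(z) == len(region) + 1)]
        if outside:
            findings.append(f"{name}: zones outside {region}: {outside}")
        if len(set(zones)) < 3:
            findings.append(f"{name}: fewer than three distinct AZs")
        if pool["replicas"] < len(set(zones)):
            findings.append(f"{name}: fewer replicas than AZs, so some AZs stay empty")
    if config["controlPlane"]["replicas"] % 2 == 0:
        findings.append("controlPlane: replica count should be odd")
    return findings


# Trimmed copy of the sample configuration; only the fields the checks read are kept.
SAMPLE = {
    "controlPlane": {
        "name": "master",
        "replicas": 3,
        "platform": {"aws": {"zones": ["us-west-2a", "us-west-2b", "us-west-2c"]}},
    },
    "compute": [
        {
            "name": "worker",
            "replicas": 3,
            "platform": {"aws": {"zones": ["us-west-2a", "us-west-2b", "us-west-2c"]}},
        }
    ],
    "platform": {"aws": {"region": "us-west-2"}},
}

if __name__ == "__main__":
    print(check_install_config(SAMPLE) or "no findings")
```

The thresholds (three AZs, odd Master count) mirror the recommendations in this post; adjust them if your requirements differ.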

What does this look like at scale?

For larger implementations, we would see additional worker nodes spread across three or more AZs. As more worker nodes are added, load on the control plane increases. Initially, scaling up to a larger Amazon Elastic Compute Cloud (EC2) instance type is an effective way of addressing this. It’s possible to add more Master nodes, and we recommend maintaining an odd number of them. It is more common to scale out the infrastructure nodes before there is a need to scale Masters. For large-scale implementations, infrastructure functions such as the router, monitoring, and logging can be moved to EC2 instances that are separate from the Master nodes, as well as from each other. Spreading the routing layer across multiple AZs is critical to maintaining availability and resilience.
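The recommendation for an odd number of Master nodes follows from majority-quorum arithmetic. The sketch below assumes the control plane's datastore (etcd in Kubernetes and OpenShift) needs a majority of its members to be reachable. The function names are illustrative only.

```python
def quorum(members: int) -> int:
    """Smallest majority of a group of the given size."""
    if members < 1:
        raise ValueError("members must be at least 1")
    return members // 2 + 1


def failures_tolerated(members: int) -> int:
    """How many members can be lost while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for members in range(3, 8):
        print(
            f"{members} members: quorum {quorum(members)}, "
            f"tolerates {failures_tolerated(members)} failure(s)"
        )
```

Going from three to four members raises the quorum from two to three without tolerating any additional failure, which is why an even count adds cost but no resilience.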

The process of resource separation is now controlled by infrastructure machine sets within OpenShift. An infrastructure machine set would need to be defined, then the infrastructure role edited to be moved from the default to this new infrastructure machine set. Read about this in greater detail.
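As a rough illustration, the sketch below builds one abbreviated infrastructure machine set definition per AZ as a Python dictionary. The field names follow the Machine API layout as I understand it. The `infra_machine_set` helper and the infrastructure ID `test-cluster-abc12` are hypothetical, and the provider section is deliberately incomplete (AMI, subnet, security groups, and IAM profile are omitted), so treat it as a starting point to check against the documentation for your OpenShift version.

```python
import json


def infra_machine_set(infrastructure_id: str, region: str, zone: str,
                      instance_type: str = "m5.xlarge", replicas: int = 1) -> dict:
    """Build an abbreviated MachineSet definition for infra nodes in one AZ."""
    name = f"{infrastructure_id}-infra-{zone}"
    cluster_label = "machine.openshift.io/cluster-api-cluster"
    set_label = "machine.openshift.io/cluster-api-machineset"
    return {
        "apiVersion": "machine.openshift.io/v1beta1",
        "kind": "MachineSet",
        "metadata": {
            "name": name,
            "namespace": "openshift-machine-api",
            "labels": {cluster_label: infrastructure_id},
        },
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": {cluster_label: infrastructure_id, set_label: name}},
            "template": {
                "metadata": {"labels": {
                    cluster_label: infrastructure_id,
                    "machine.openshift.io/cluster-api-machine-role": "infra",
                    "machine.openshift.io/cluster-api-machine-type": "infra",
                    set_label: name,
                }},
                "spec": {
                    # Nodes created from this set carry the infra role label.
                    "metadata": {"labels": {"node-role.kubernetes.io/infra": ""}},
                    # Abbreviated: a real providerSpec needs AMI, subnet, IAM profile, and more.
                    "providerSpec": {"value": {
                        "instanceType": instance_type,
                        "placement": {"availabilityZone": zone, "region": region},
                    }},
                },
            },
        },
    }


# One machine set per AZ keeps the routing layer spread across AZs.
MACHINE_SETS = [
    infra_machine_set("test-cluster-abc12", "us-west-2", zone)
    for zone in ("us-west-2a", "us-west-2b", "us-west-2c")
]

if __name__ == "__main__":
    print(json.dumps(MACHINE_SETS[0], indent=2))
```

Generating one definition per AZ, rather than one set with three replicas in a single AZ, reflects the earlier point that the routing layer should be spread across multiple AZs.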

OpenShift in a multi-account context

Using AWS accounts as a means of separation is a common well-architected pattern. AWS Organizations and AWS Control Tower are services that are commonly adopted as part of a multi-account strategy. This is very much the case when looking to enable teams to use their own accounts and when an account vending process is needed to cater for self-service account provisioning.

In this model, OpenShift clusters are deployed into multiple accounts. For example, an OpenShift dev cluster is deployed into an AWS dev account, which would typically have AWS Developer Support associated with it. A separate production OpenShift cluster would be provisioned into an AWS production account with AWS Enterprise Support. Enterprise Support provides faster support case response times, and you get the benefit of dedicated resources such as a technical account manager and solutions architect.

CI/CD pipelines and processes are then used to control the application life cycle from code to dev to production. The pipelines push the code to different OpenShift cluster endpoints at different stages of the life cycle.
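The stage-to-cluster mapping that such a pipeline relies on can be sketched as a small lookup table. Everything below is hypothetical: the account aliases, the endpoint URLs (which follow the usual `api.<cluster>.<base domain>:6443` pattern), and the two-stage promotion order are placeholders for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterTarget:
    aws_account: str   # hypothetical account alias
    api_endpoint: str  # hypothetical OpenShift API URL


# One cluster per AWS account; every value here is a placeholder.
TARGETS = {
    "dev": ClusterTarget("dev-account", "https://api.dev-cluster.example.com:6443"),
    "production": ClusterTarget("production-account", "https://api.prod-cluster.example.com:6443"),
}
PROMOTION_ORDER = ["dev", "production"]


def target_for(stage: str) -> ClusterTarget:
    """Look up the cluster that a pipeline stage deploys to."""
    try:
        return TARGETS[stage]
    except KeyError:
        raise ValueError(f"unknown stage: {stage!r}") from None


def next_stage(stage: str):
    """Return the stage a build is promoted to next, or None at the end of the line."""
    position = PROMOTION_ORDER.index(stage)
    following = PROMOTION_ORDER[position + 1:]
    return following[0] if following else None


if __name__ == "__main__":
    stage = "dev"
    while stage is not None:
        print(f"{stage}: deploy to {target_for(stage).api_endpoint}")
        stage = next_stage(stage)
```

Keeping the mapping in one place means the same pipeline definition can deploy to each account's cluster by changing only the stage name.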

Hybrid use case implementation

A common misconception about hybrid implementations is that there is a single cluster or control plane that has worker nodes in various locations. For example, there could be a cluster where the Master and infrastructure nodes are deployed in one location, with worker nodes registered to this cluster both on-premises and in the cloud.

Having a single customer control plane for a hybrid implementation, even if technically possible, introduces undesired risks.

There is the potential to take multiple environments with very different resilience characteristics and make them interdependent. This can result in performance and reliability issues, which increase not only the likelihood of the risk manifesting but also its impact, or blast radius.

Instead, hybrid implementations will see separate OpenShift clusters deployed into various locations. A customer may deploy clusters on-premises to cater for a workload that can’t be migrated to the cloud in the short term. Separate OpenShift clusters can then be deployed into accounts in AWS for workloads on the cloud. Customers can also deploy separate OpenShift clusters in different AWS regions to cater for proximity to the consuming customer.

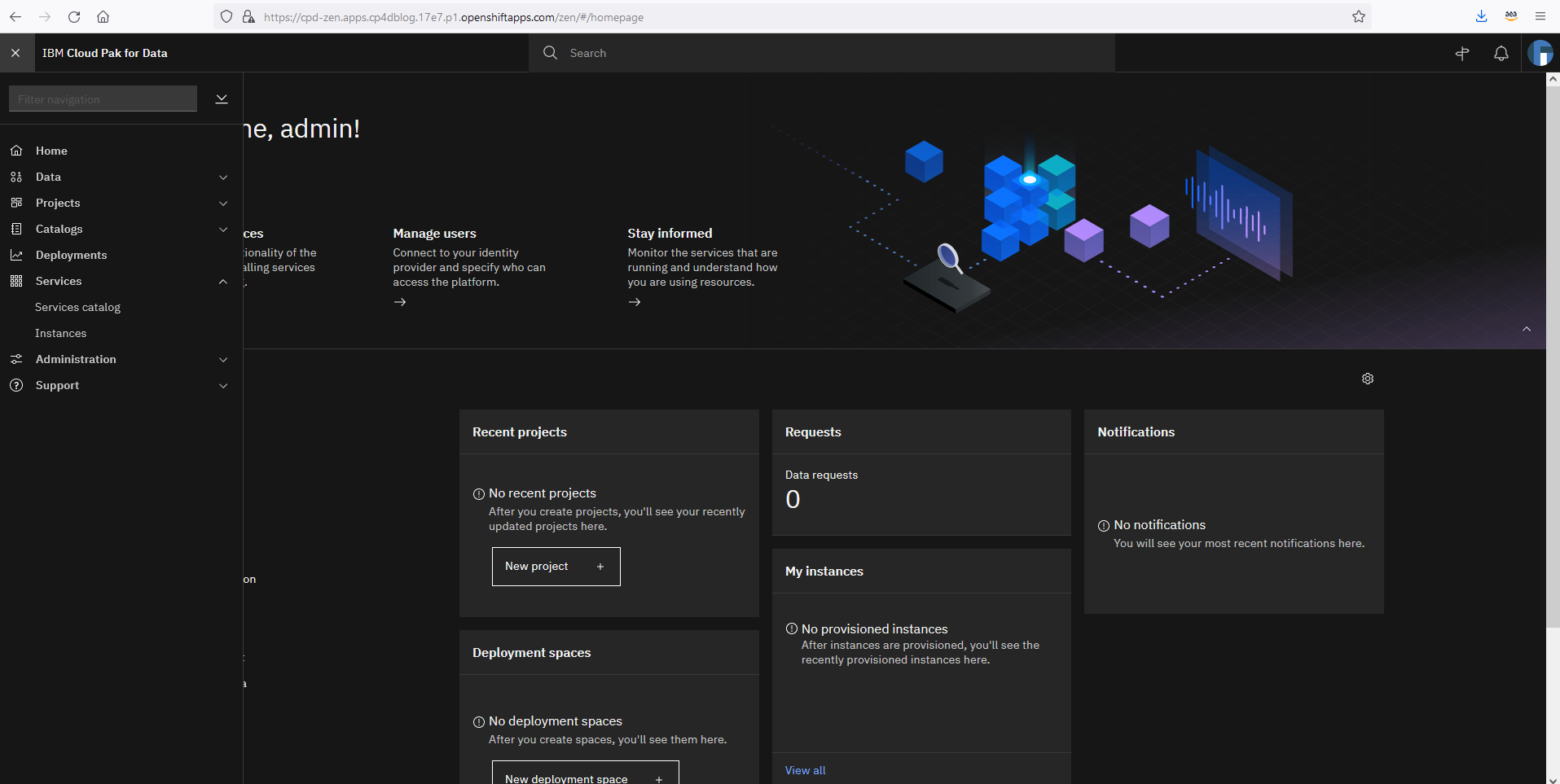
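The difference between the recommended hybrid shape and the single stretched cluster can be expressed as a simple check over an inventory of nodes. The data model, cluster names, and locations below are invented for illustration. Here a "location" means an AWS region or an on-premises site, so a cluster spread over several AZs in one region still counts as a single location.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    role: str      # "master", "infra", or "worker"
    location: str  # an AWS region or an on-premises site


@dataclass(frozen=True)
class Cluster:
    name: str
    nodes: tuple

    def locations(self) -> set:
        return {node.location for node in self.nodes}


def spans_locations(cluster: Cluster) -> bool:
    """True when one control plane would manage nodes in more than one location."""
    return len(cluster.locations()) > 1


def make_cluster(name: str, location: str, workers: int = 3) -> Cluster:
    nodes = [Node(f"{name}-master-{i}", "master", location) for i in range(3)]
    nodes += [Node(f"{name}-worker-{i}", "worker", location) for i in range(workers)]
    return Cluster(name, tuple(nodes))


# Recommended shape: one self-contained cluster per location.
FLEET = [
    make_cluster("onprem", "datacenter-1"),
    make_cluster("aws-us", "us-west-2"),
    make_cluster("aws-eu", "eu-west-1"),
]

# Anti-pattern: a cloud control plane with an on-premises worker attached.
stretched = Cluster(
    "stretched",
    make_cluster("aws-us", "us-west-2").nodes
    + (Node("remote-worker-0", "worker", "datacenter-1"),),
)

if __name__ == "__main__":
    for cluster in FLEET + [stretched]:
        verdict = "spans locations" if spans_locations(cluster) else "self-contained"
        print(f"{cluster.name}: {verdict}")
```

A check like this could run against a cluster inventory to flag any cluster whose nodes would tie the resilience of one location to another.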

Though adding multiple clusters doesn’t add significant administrative overhead, customers want visibility into, and telemetry from, all the deployed clusters from a central location. To achieve this, the OpenShift clusters can be registered with Red Hat Advanced Cluster Management for Kubernetes.

Summary

Take advantage of the IPI model, not only as a guide but also to save time. Make AWS Organizations, AWS Control Tower, and AWS Service Catalog part of your cloud and hybrid strategies. These will not only speed up migrations but also form building blocks for a modernized business with a focus on enabling prescriptive self-service. Consider Red Hat Advanced Cluster Management for Kubernetes for multicluster management.