Post Syndicated from Reid Tatoris original http://blog.cloudflare.com/ai-bots/

Today, we’re excited to announce that any Cloudflare user, on any plan, can choose specific categories of bots that they want to allow or block, including AI crawlers.

As the popularity of generative AI has grown, content creators and policymakers around the world have started to ask questions about what data AI companies are using to train their models without permission. As with any new technology, laws will likely need to evolve to address different parties' interests and what’s best for society at large. While we don’t know how it will shake out, we believe that website operators should have an easy way to block unwanted AI crawlers, and also to let AI bots know when they are permitted to crawl their websites.

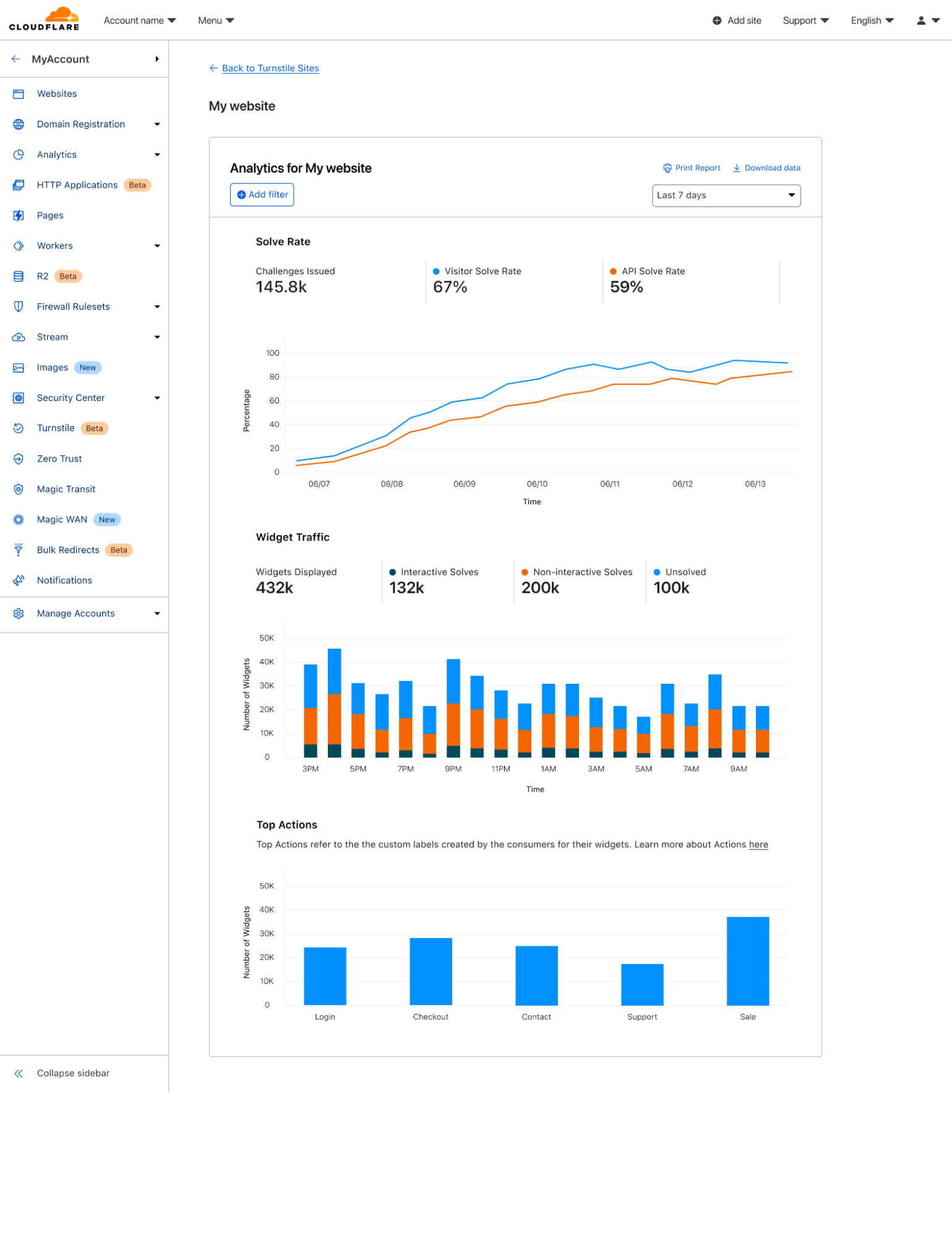

The good news is that Cloudflare already automatically stops scraper bots today. But we want to make it even easier for customers to be sure they are protected, see how frequently AI scrapers might be visiting their sites, and respond to them in more targeted ways. We also recognize that not all AI crawlers are the same and that some AI companies are looking for clear instructions for when they should not crawl a public website.

Crawler bots are nothing new. Cloudflare already protects you from scraping today.

Web crawlers have been around for a long time. The first, called World Wide Web Wanderer, was developed back in 1993 to measure the size of the web by counting the total number of accessible web pages. This technique led directly to the creation of the first popular search engine, WebCrawler, in 1994.

And still today, the most common use of a web crawler is for a search engine, such as Google’s GoogleBot. To provide the most relevant results for searches, crawlers like GoogleBot typically start by visiting web pages and retrieving the HTML content. Search engine operators predefine how much of each crawled HTML file is necessary for indexing, and the files are then parsed to extract components like text, images, metadata, and links. This extracted data is stored in a structured format on Google’s servers.

Extracted links (URLs) are the key to how crawlers discover new websites. The links present in the HTML files are added to a queue of URLs for the crawlers to visit and parse. URLs spread easily around the Internet, which makes it easy for crawlers to discover new sites: a URL can even come from a referrer header that another web server stored and published. This process of following links, parsing, and storing data is repeated recursively, allowing search engines to map out the web. All of the collected data is then indexed to allow efficient searching and retrieval of information.
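
The queue-driven loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how GoogleBot or any real crawler is implemented: the `crawl` function, the `LinkExtractor` class, and the in-memory `PAGES` dictionary (which stands in for real HTTP requests) are all hypothetical names made up for this example.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed_url, fetch, max_pages=100):
    """Visit pages breadth-first, starting from seed_url.

    `fetch` is a caller-supplied function (an assumption of this sketch)
    that returns the HTML for a URL, or None if the page is unavailable.
    """
    queue = deque([seed_url])  # URLs waiting to be visited
    seen = {seed_url}          # URLs already queued, to avoid loops
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)
        extractor = LinkExtractor()
        extractor.feed(html)
        for href in extractor.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


# A tiny in-memory "web" stands in for real HTTP requests.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/c">C</a>',
    "https://example.com/b": '<a href="/">home</a>',
    "https://example.com/c": "<p>no links</p>",
}

order = crawl("https://example.com/", PAGES.get)
```

The sketch uses an explicit queue rather than recursion, and the `seen` set is what keeps the crawler from revisiting pages that link back to each other. A real crawler would add indexing, politeness delays, and robots.txt checks on top of this loop.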

While search engine crawlers generally benefit site owners by helping their sites get discovered, some bot operators use similar techniques for malicious purposes, such as price scraping to undercut competitors or theft of copyrighted material such as images.

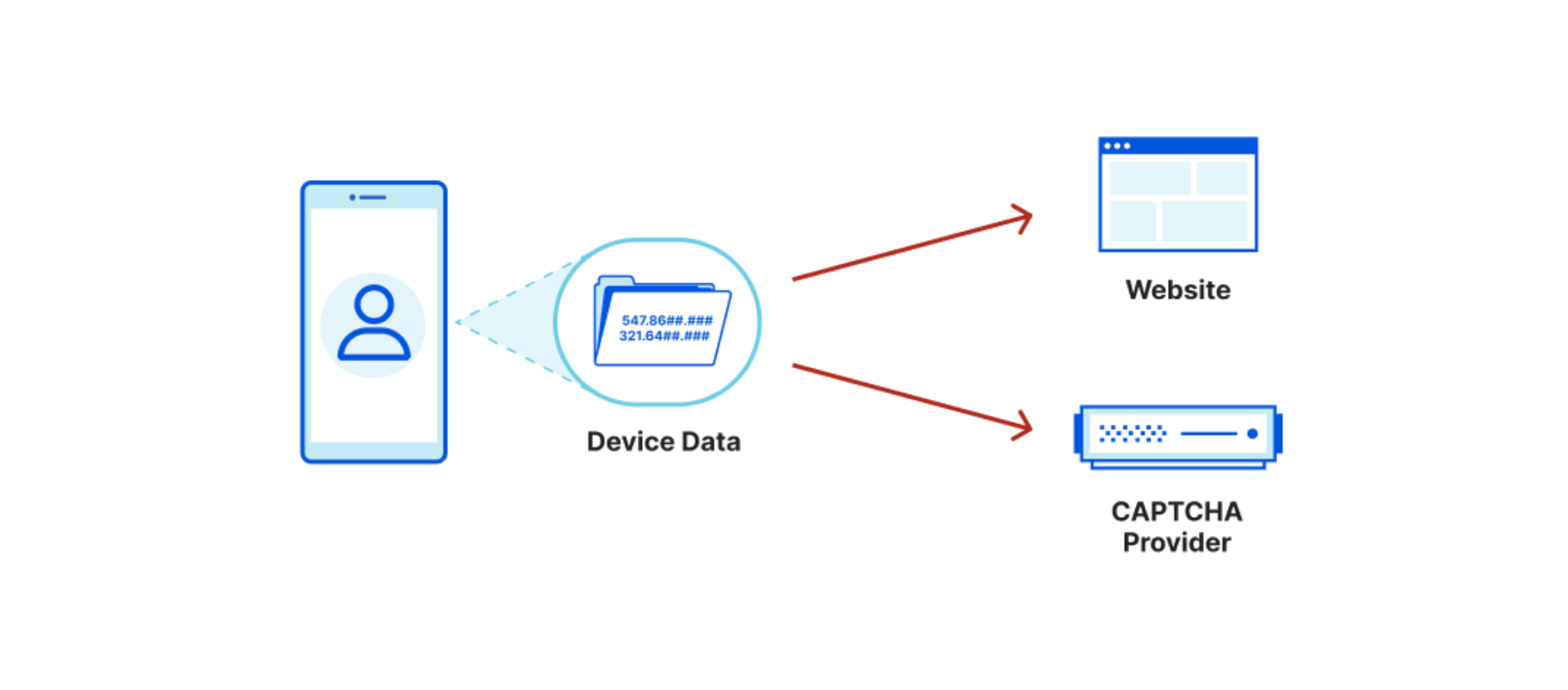

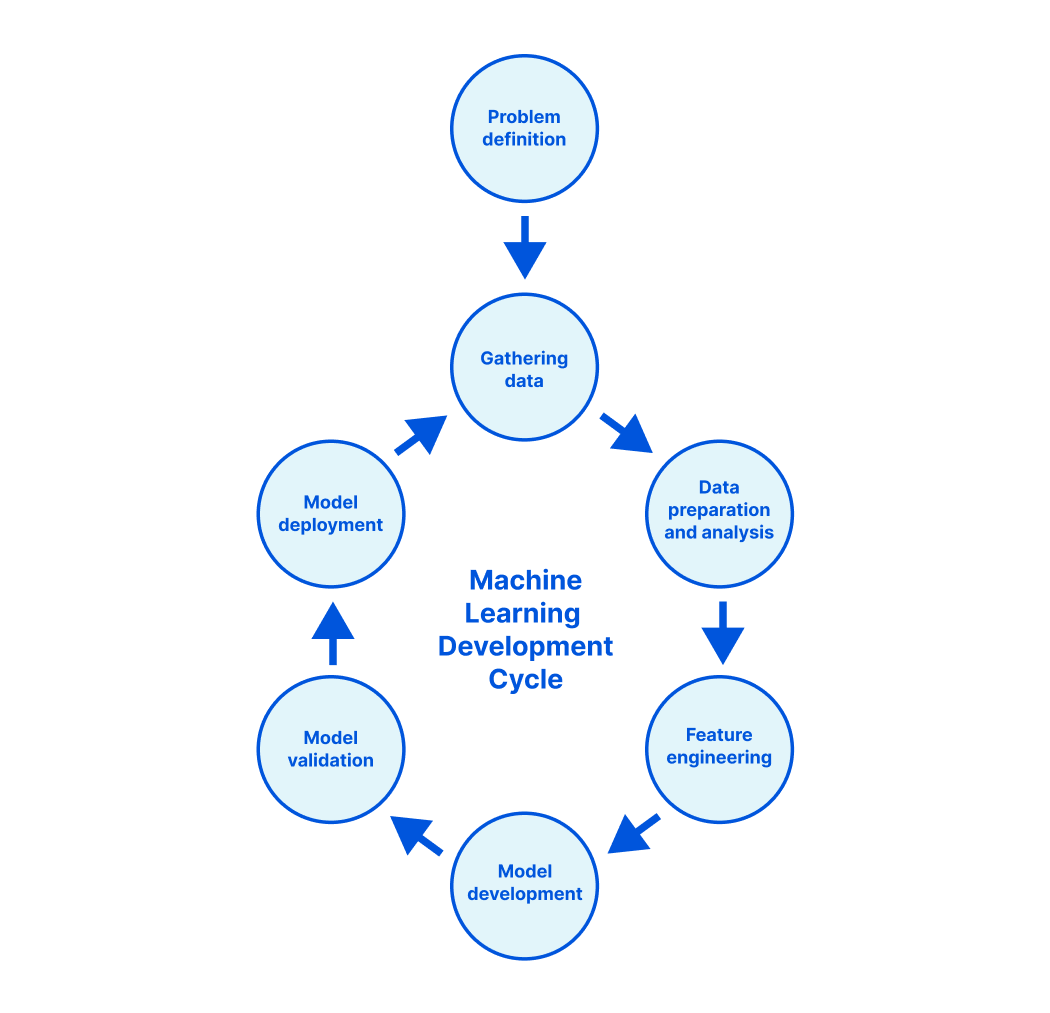

The techniques deployed by AI crawlers are no different. Just like a search engine crawler, they’ll parse HTML content and follow extracted URLs to gather available information. But instead of using it to index the web, they use this content as training data for their ML models.

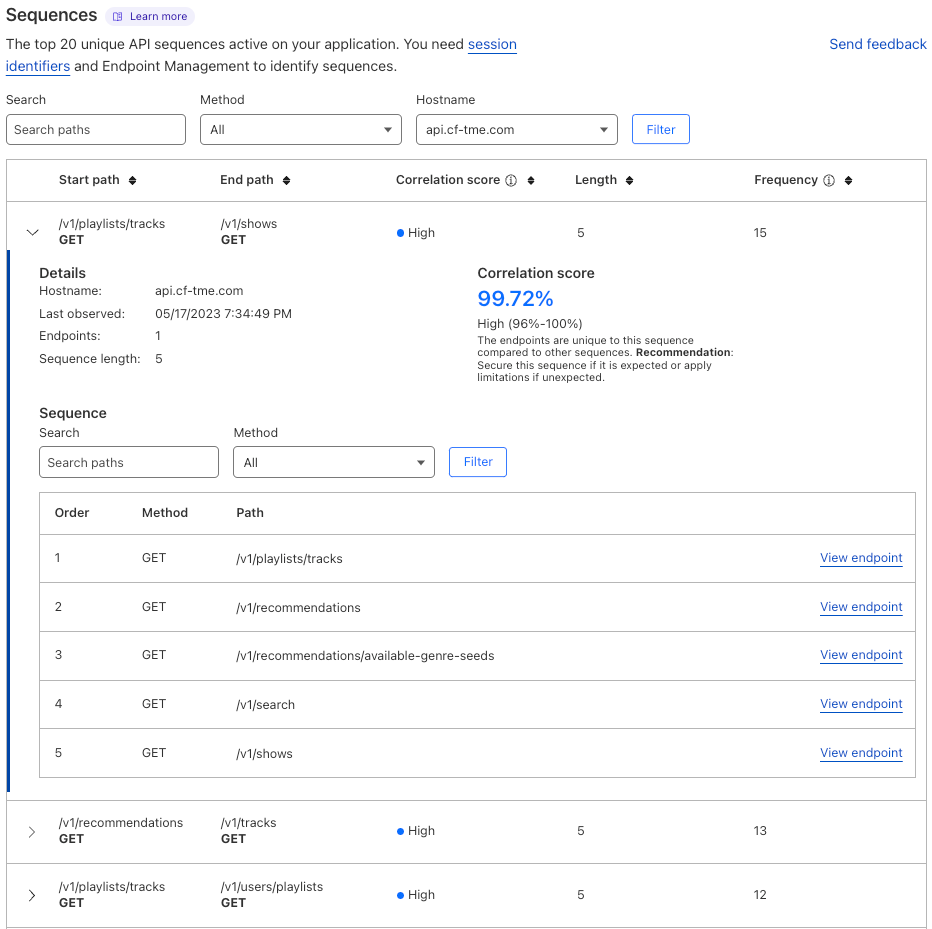

Cloudflare identifies both good and bad crawlers using various systems such as attack signature matching, heuristics, machine learning, and behavioral analysis. All Cloudflare customers using Bot Fight Mode, Super Bot Fight Mode, or Bot Management are already protected from malicious crawlers.

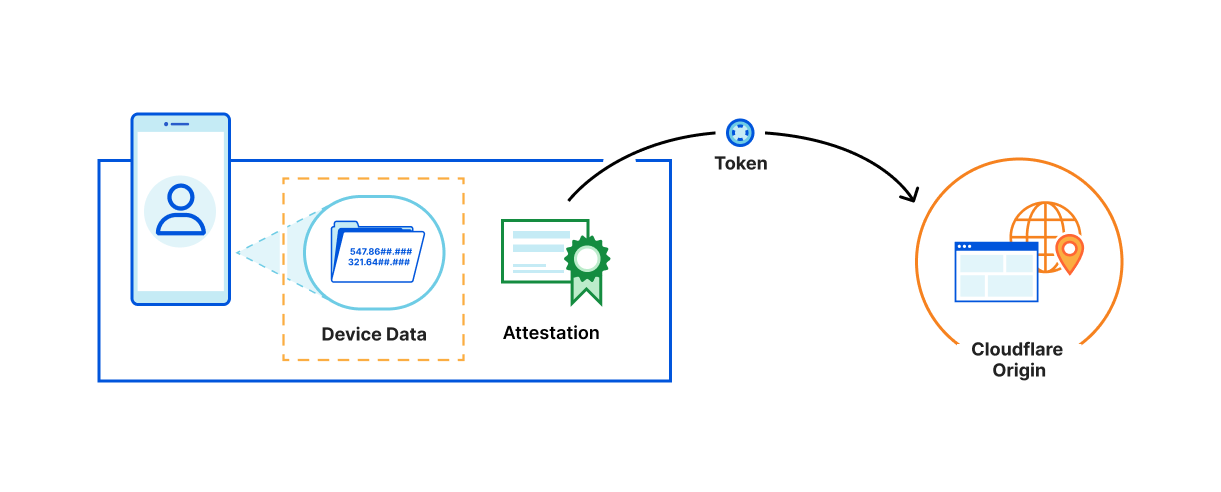

Along with our bot detection tools, we also have a Verified Bot directory that allows responsible and necessary bots, like GoogleBot, to register to be segmented into their own separate detections (fill out a request here if you have a bot you think should be added). We’ve added new functionality to that directory to give our customers more control.

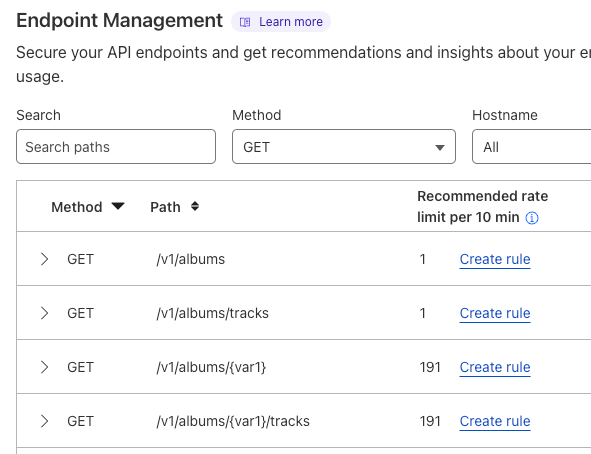

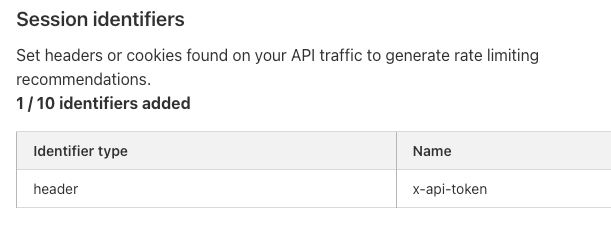

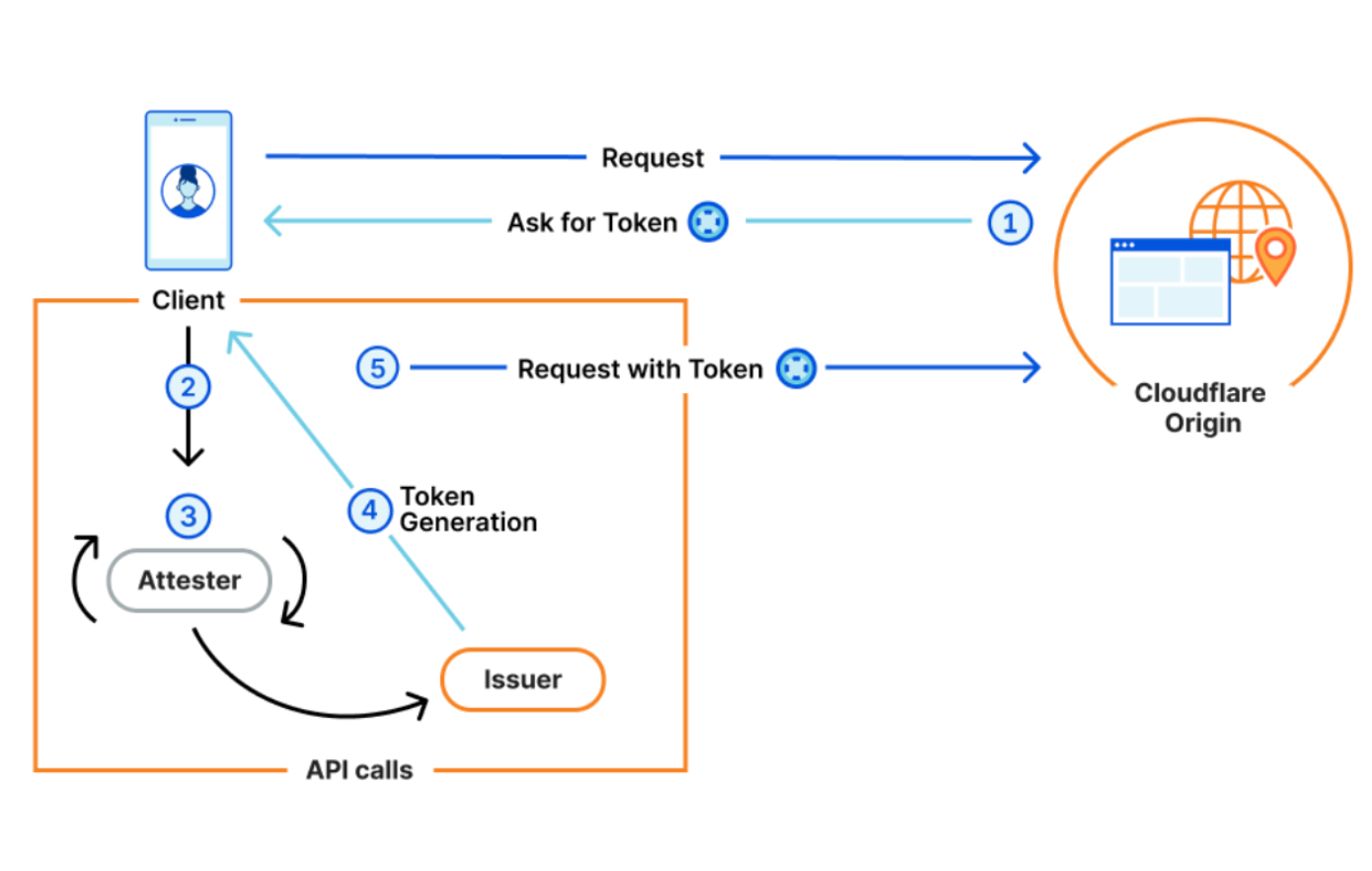

Available now: segment known bots with flexibility and precision

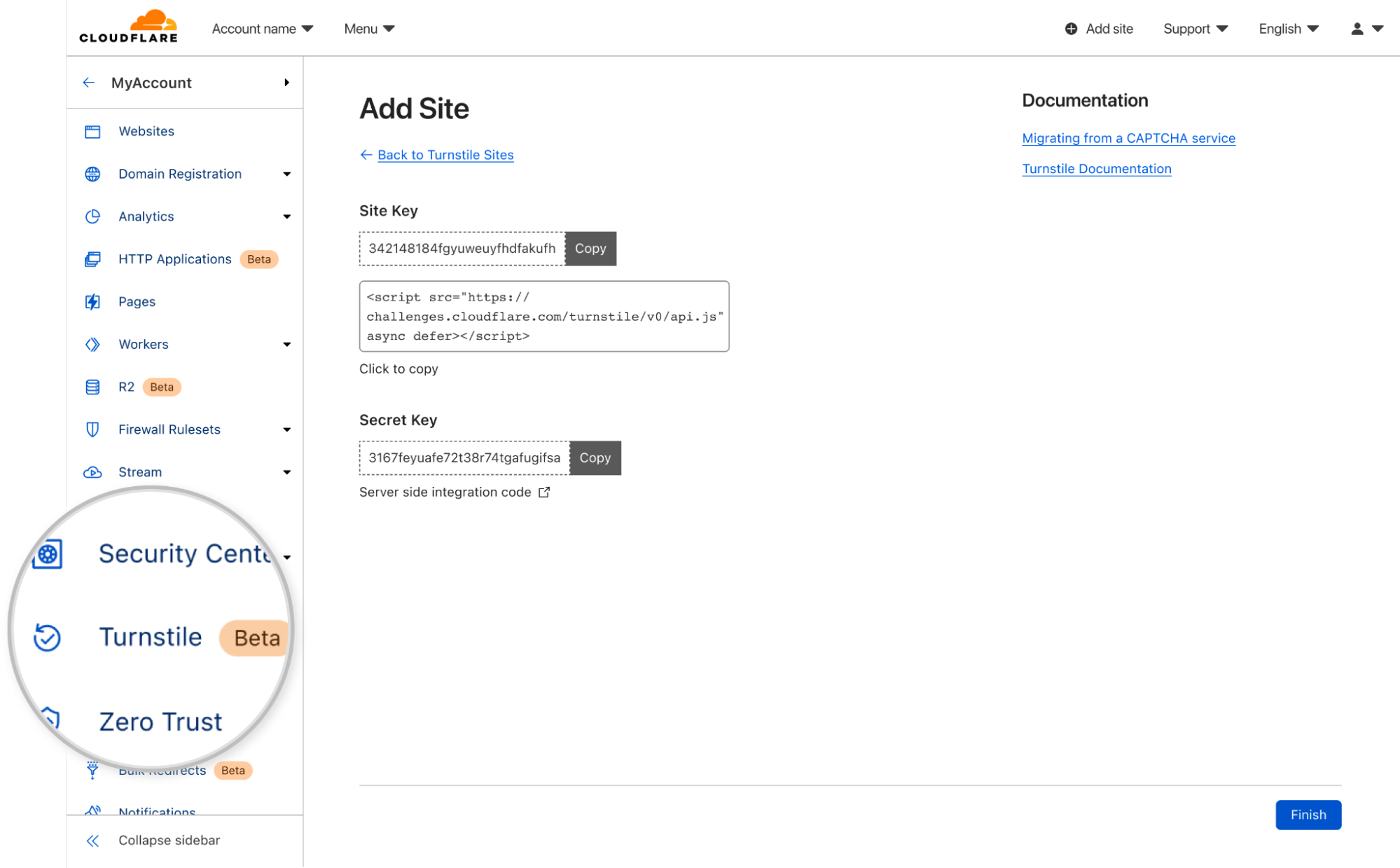

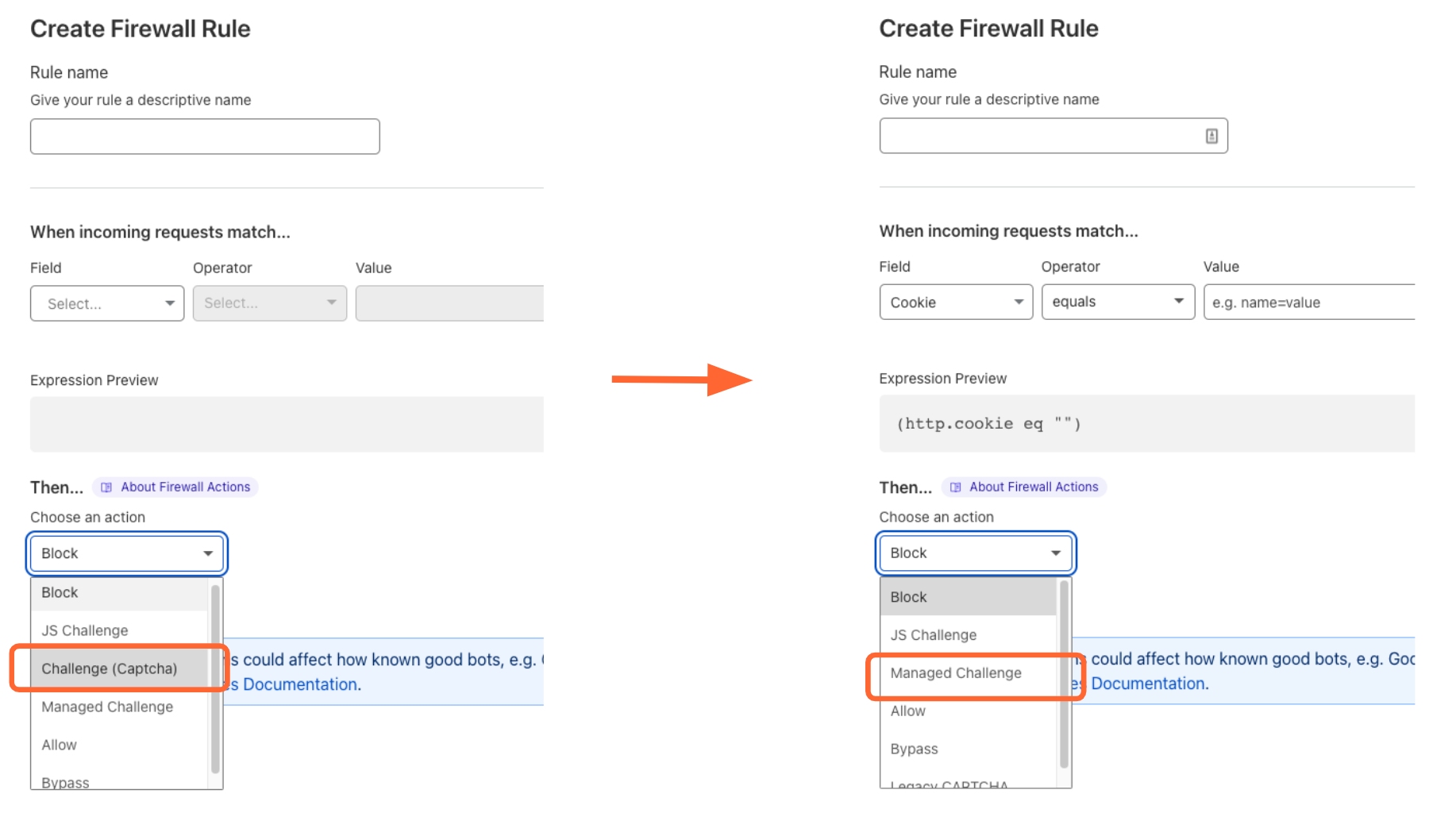

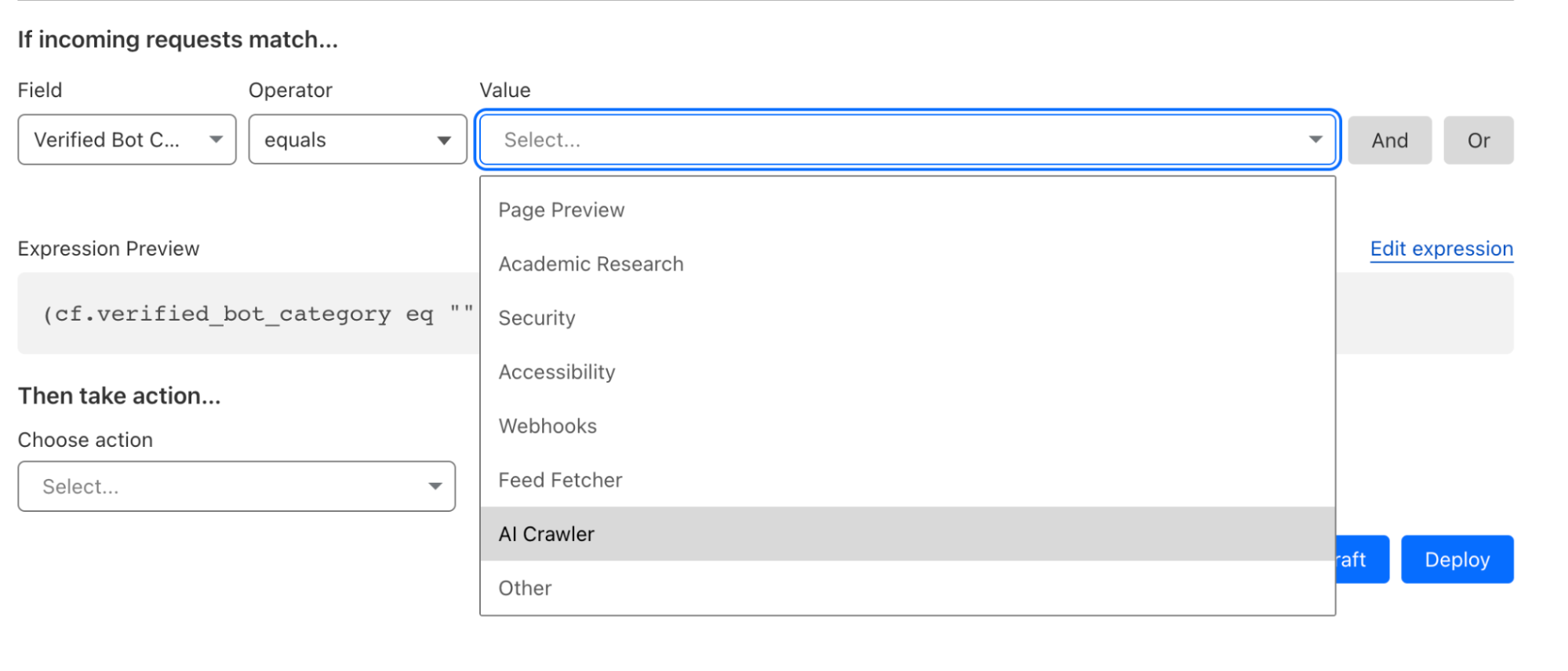

Our new Verified Bot categories are now available in the Cloudflare Rules Engine and Workers. With this granular bot categorization, Cloudflare users get better bot segmentation, and can choose specific responses to specific types of bots. To take advantage of these new bot categories, simply log in to the Cloudflare dash, go to the WAF tab, create a rule, and choose one of the Verified Bot sub categories as the Field.

The new categories include:

- Search Engine Crawler

- Aggregator

- AI Crawler

- Page Preview

- Advertising

- Academic Research

- Accessibility

- Feed Fetcher

- Security

- Webhooks

You can also view all the available categories using the Cloudflare API.

    curl --request GET 'https://api.cloudflare.com/client/v4/bots_directory/categories' \
      --header "X-Auth-Email: <EMAIL>" \
      --header "X-Auth-Key: <API_KEY>"
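
If you would rather call the API from a script, the same request can be sketched with Python's standard library. The URL and header names are taken from the curl command; the function names are hypothetical, and because the response format is not described here, the sketch returns the decoded JSON body without assuming anything about its structure.

```python
import json
import urllib.request

CATEGORIES_URL = "https://api.cloudflare.com/client/v4/bots_directory/categories"


def build_categories_request(email, api_key):
    """Build (but do not send) the GET request from the curl command."""
    return urllib.request.Request(
        CATEGORIES_URL,
        headers={"X-Auth-Email": email, "X-Auth-Key": api_key},
        method="GET",
    )


def fetch_categories(email, api_key):
    """Send the request and return the decoded JSON body as-is."""
    request = build_categories_request(email, api_key)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `fetch_categories("<EMAIL>", "<API_KEY>")` with your own credentials performs the network request; `build_categories_request` is split out so the request can be inspected without sending anything.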

More targeted responses can be useful in a variety of situations. A few examples include:

- If you are a content creator, and you’re concerned about your work being reproduced by AI services, you can block AI bots we have cataloged in a simple firewall rule, while still allowing search engine crawlers to index your site.

- If your content is frequently shared on social media, you may want to use Workers to serve a simplified version of the page to Page Preview services, like the ones that X (formerly Twitter), Discord, and Slack use to render a thumbnail version of a web page.

- If you run an online store that processes payments through a webhooks API, you can harden your site’s security by only allowing verified webhooks services to make requests to that API endpoint.

- If you are using Cloudflare’s Load Balancing service and have limited in-region capacity, you can use Custom Rules for Load Balancing to send all bots except Search Engine Crawlers to a backup pool, prioritizing critical visitors over non-critical automated services.
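
To make the examples above concrete, here is a small Python sketch of the decision logic they describe. It is a plain simulation, not Cloudflare rule syntax or a Workers API: the `choose_action` function, the action names, and the `/api/payments/webhook` path are all hypothetical, and a real site would configure only the rules that apply to it. Only the category names come from the list above.

```python
# Hypothetical path for the store's payment webhook endpoint.
WEBHOOK_PATH = "/api/payments/webhook"


def choose_action(category, path="/"):
    """Pick a response for a request, based on its Verified Bot category.

    `category` is one of the category names listed above, or None for
    traffic that is not a verified bot.
    """
    # Only verified webhook services may call the payment endpoint.
    if path.startswith(WEBHOOK_PATH):
        return "allow" if category == "Webhooks" else "block"
    # Block cataloged AI crawlers while leaving search engines alone.
    if category == "AI Crawler":
        return "block"
    # Serve a lighter page to link-preview services.
    if category == "Page Preview":
        return "serve_simplified_page"
    # Send every other verified bot, except search engine crawlers,
    # to a backup pool so regular visitors get the primary capacity.
    if category is not None and category != "Search Engine Crawler":
        return "route_to_backup_pool"
    return "allow"
```

For instance, `choose_action("AI Crawler")` yields `"block"`, while `choose_action("Search Engine Crawler")` yields `"allow"`. The order of the checks matters: the webhook rule runs first so that no other category can reach the payment endpoint.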

Above all, these new categories give you, the website owner, complete, granular control over not only whether bots can visit your site, but also what specific types of bots can and can’t do. For those of you who simply don’t want any bots, no problem: you don’t have to make any changes. Your existing rules that reference bot score or Verified Bots will not be affected at all.

More than just blocking, encouraging good behavior to make the Internet better

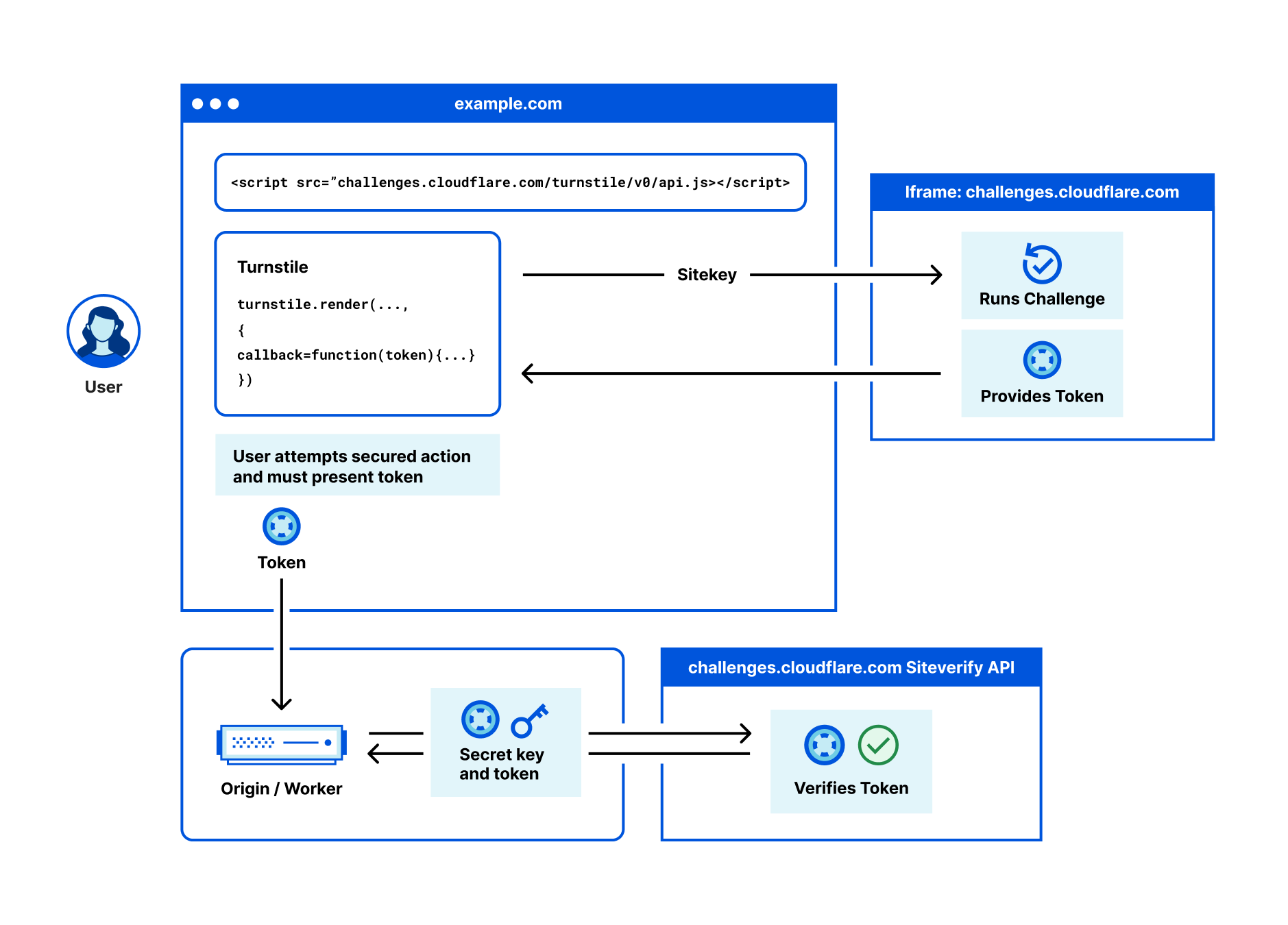

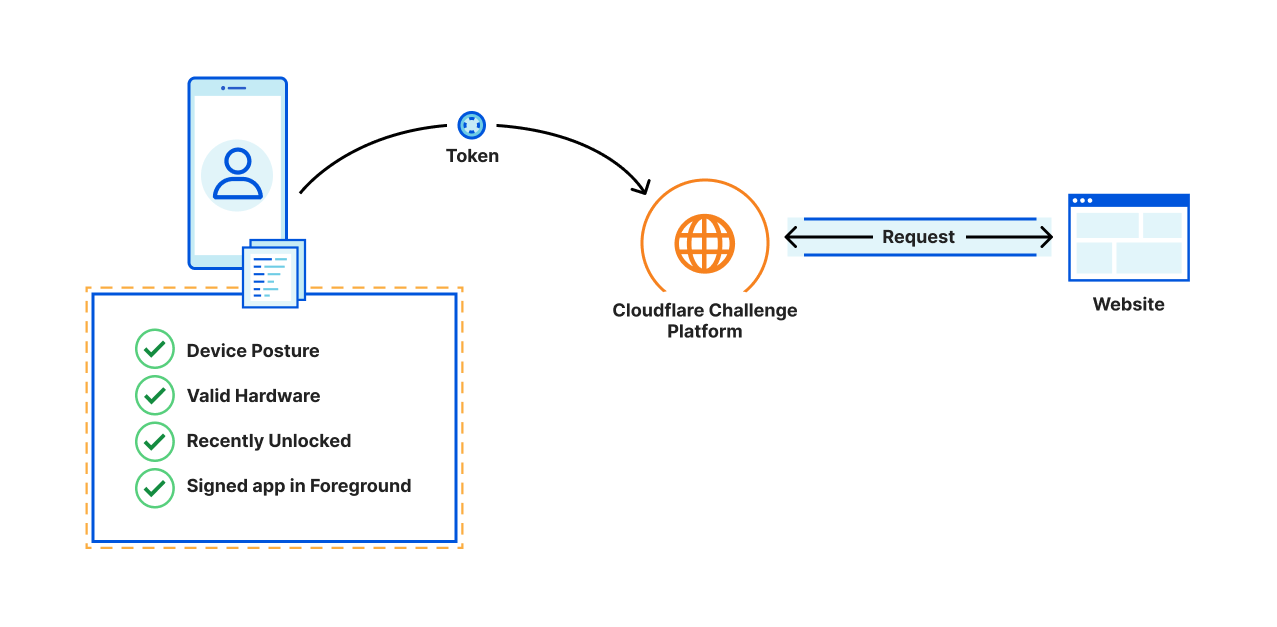

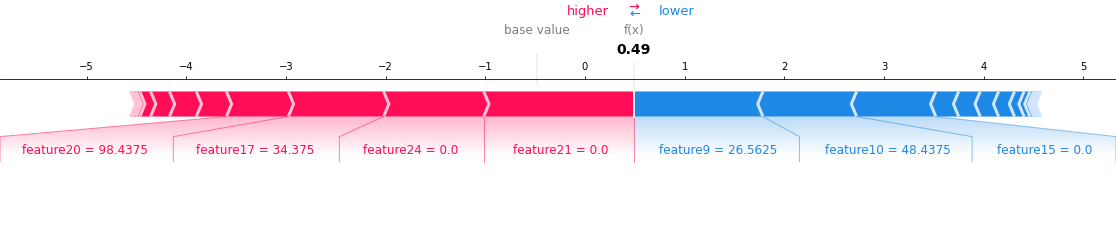

At Cloudflare, we have a history of working with good bot operators (like GoogleBot), who respect Internet norms and best practices, to access the websites that want to allow them. We want to encourage good behavior by AI crawlers as well, so we have developed a set of criteria that will allow us to tag respectful AI bots differently. To be tagged as a respectful AI bot, an AI crawler must take the following steps to show that it is acting in good faith:

- Maintain a public web page committing to respect robots.txt.

- Set IPs that are used solely by the bot and are verifiable via a public IP list, reverse DNS lookup, or ASN ownership.

- Maintain a unique and stable user-agent to represent the bot.

- Respect a robots.txt entry for the bot's user-agent as well as wild-card entries.

- Respect crawl-delay, which has previously been a nonstandard extension.
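
As a rough illustration of the robots.txt criteria above, the sketch below uses Python's standard `urllib.robotparser` module to check a user-agent-specific entry, a wild-card entry, and a crawl-delay. The bot name `ExampleAIBot`, the sample rules, and the `crawl_decision` helper are hypothetical. Note that this particular parser applies the wild-card group only to user-agents that have no group of their own.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt with one bot-specific group and one wild-card group.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /articles/
Crawl-delay: 10

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def crawl_decision(user_agent, url):
    """Return (allowed, delay_seconds) for this user-agent and URL."""
    allowed = parser.can_fetch(user_agent, url)
    # crawl_delay() returns None when no Crawl-delay applies.
    delay = parser.crawl_delay(user_agent) or 0
    return allowed, delay


blocked_for_ai_bot = crawl_decision("ExampleAIBot", "https://example.com/articles/1")
blocked_by_wildcard = crawl_decision("OtherBot", "https://example.com/private/x")
```

A respectful crawler would run a check like this before every request, skip any URL where `allowed` is false, and wait at least `delay_seconds` between requests to the same site.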

These steps are an expansion of our existing Verified Bots policy which you can see here. When a bot creator has performed the steps above, we perform additional evaluation to confirm we’ve seen no suspicious activity from the bot. We check the bot's documentation, check internal dashboards to ensure traffic is appropriately distributed across the sites we protect, and check whether the bot hits suspicious endpoints like logins, or has exhibited other malicious activity.

While new AI bots can be scary, this industry is evolving incredibly quickly, and you may want to handle different bots differently in the future. We think it's important to distinguish between bot operators that are being respectful and those that are trying to be deceptive.

It should be easy for everyone to deal with AI crawlers, not just Cloudflare customers

While we’re glad we’ve made it easy for Cloudflare customers to manage AI Crawlers, not everyone uses Cloudflare. We want the Internet to be better for everyone. So we think that the industry should adopt a new protocol specifically for handling AI crawlers.

In the long run, AI bots respecting a new exclusion protocol gives website operators the most flexibility to change how they want to handle them over time. We think the key is to make it easier for customers to block these bots, or to allow them if they choose, either across their entire website or only on specific pages.

You’ll be hearing more about this from us in the next few months, so stay tuned.

But we didn’t want to wait to make sure our customers are protected, so we're making our new bot categories available today!

What’s next?

The first and most important step for us was to make it clear to every Cloudflare customer that you are already protected from AI crawlers you don’t want. Second, we wanted to give you granular control and make it easy to allow those crawlers, or other bots, that you deem useful for your site.

We encourage everyone to try out our new Verified Bot categories today. Log in to the Cloudflare dash, go to the WAF tab, create a rule, and choose one of the Verified Bot sub categories as the `Field`. And remember, this functionality is available to all Cloudflare customers, even on free plans.

Having launched Verified Bot categories, in the next few months we’ll be adding more detailed reporting based on the bot category, to better help you visualize the frequency at which different categories of bots are visiting your site over time. As AI continues to evolve at a breakneck pace, AI Crawlers are only going to become a larger part of the Internet. As that evolution happens, Cloudflare will be there every step of the way to help you evolve the way you deal with them.