Post Syndicated from Nikita Cano original https://blog.cloudflare.com/birthday-week-2025-wrap-up/

Cloudflare launched fifteen years ago with a mission to help build a better Internet. Over that time the Internet has changed and so has what it needs from teams like ours. In this year’s Founders’ Letter, Matthew and Michelle discussed the role we have played in the evolution of the Internet, from helping encryption grow from 10% to 95% of Internet traffic to more recent challenges like how people consume content.

We spend Birthday Week every year releasing the products and capabilities we believe the Internet needs at this moment and around the corner. Previous Birthday Weeks saw the launch of IPv6 gateway in 2011, Universal SSL in 2014, Cloudflare Workers and unmetered DDoS protection in 2017, Cloudflare Radar in 2020, R2 Object Storage with zero egress fees in 2021, post-quantum upgrades for Cloudflare Tunnel in 2022, and Workers AI and Encrypted Client Hello in 2023. Those are just a sample of the launches.

This year’s themes focused on helping prepare the Internet for a new model of monetization that encourages great content to be published, fostering more opportunities to build community both inside and outside of Cloudflare, and evergreen missions like making more features available to everyone and constantly improving the speed and security of what we offer.

We shipped a lot of new things this year. In case you missed the dozens of blog posts, here is a breakdown of everything we announced during Birthday Week 2025.

Monday, September 22

| What | In a sentence … |
| --- | --- |
| Help build the future: announcing Cloudflare’s goal to hire 1,111 interns in 2026 | To invest in the next generation of builders, we announced our most ambitious intern program yet with a goal to hire 1,111 interns in 2026. |
| Supporting the future of the open web: Cloudflare is sponsoring Ladybird and Omarchy | To support a diverse and open Internet, we are now sponsoring Ladybird (an independent browser) and Omarchy (an open-source Linux distribution and developer environment). |
| | We are opening our office doors in four major cities (San Francisco, Austin, London, and Lisbon) as free hubs for startups to collaborate and connect with the builder community. |
| Free access to Cloudflare developer services for non-profit and civil society organizations | We extended our Cloudflare for Startups program to non-profits and public-interest organizations, offering free credits for our developer tools. |
| Introducing free access to Cloudflare developer features for students | We are removing cost as a barrier for the next generation by giving students with .edu emails 12 months of free access to our paid developer platform features. |
| | We open-sourced Cap’n Web, a new JavaScript-native RPC protocol that simplifies powerful, schema-free communication for web applications. |
| A lookback at Workers Launchpad and a warm welcome to Cohort #6 | We announced Cohort #6 of the Workers Launchpad, our accelerator program for startups building on Cloudflare. |

Tuesday, September 23

| What | In a sentence … |
| --- | --- |
| Building unique, per-customer defenses against advanced bot threats in the AI era | New anomaly detection system that uses machine learning trained on each zone to build defenses against AI-driven bot attacks. |
| Why Cloudflare, Netlify, and Webflow are collaborating to support Open Source tools | To support the open web, we joined forces with Webflow to sponsor Astro, and with Netlify to sponsor TanStack. |
| Launching the x402 Foundation with Coinbase, and support for x402 transactions | We are partnering with Coinbase to create the x402 Foundation, encouraging the adoption of the x402 protocol to allow clients and services to exchange value on the web using a common language. |
| Helping protect journalists and local news from AI crawlers with Project Galileo | We are extending our free Bot Management and AI Crawl Control services to journalists and news organizations through Project Galileo. |
| Cloudflare Confidence Scorecards – making AI safer for the Internet | Automated evaluation of AI and SaaS tools, helping organizations to embrace AI without compromising security. |

Wednesday, September 24

|

What |

In a sentence … |

|

Automatically Secure: how we upgraded 6,000,000 domains by default |

Our Automatic SSL/TLS system has upgraded over 6 million domains to more secure encryption modes by default and will soon automatically enable post-quantum connections. |

|

Giving users choice with Cloudflare’s new Content Signals Policy |

The Content Signals Policy is a new standard for robots.txt that lets creators express clear preferences for how AI can use their content. |

|

To build a better Internet in the age of AI, we need responsible AI bot principles |

A proposed set of responsible AI bot principles to start a conversation around transparency and respect for content creators’ preferences. |

|

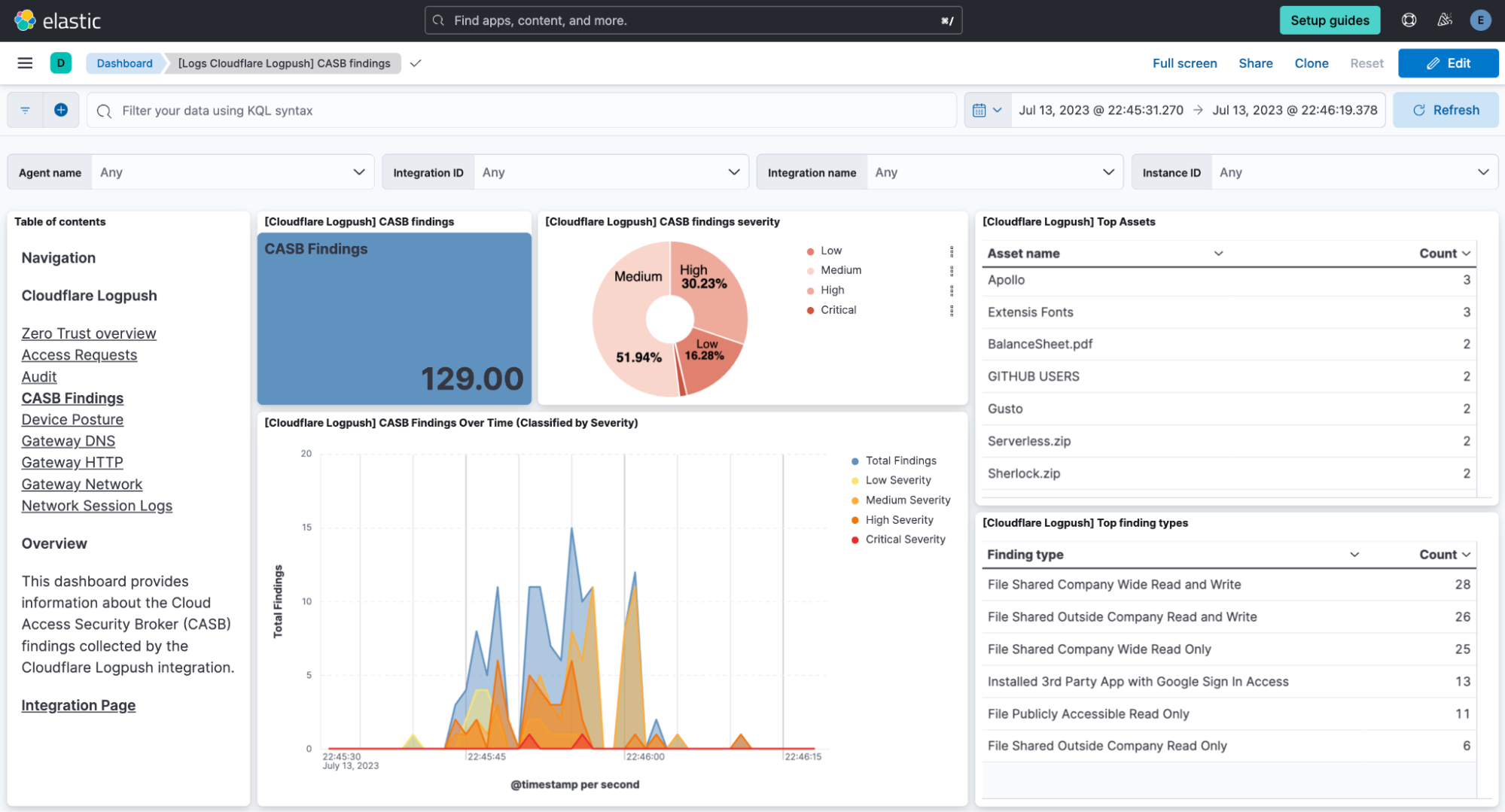

New security tools to give companies visibility and control over data flowing between SaaS applications. |

|

|

Securing today for the quantum future: WARP client now supports post-quantum cryptography (PQC) |

Cloudflare’s WARP client now supports post-quantum cryptography, providing quantum-resistant encryption for traffic. |

|

A simpler path to a safer Internet: an update to our CSAM scanning tool |

We made our CSAM Scanning Tool easier to adopt by removing the need to create and provide unique credentials, helping more site owners protect their platforms. |

Thursday, September 25

| What | In a sentence … |
| --- | --- |
| | We are making every Cloudflare feature, starting with Single Sign On (SSO), available for anyone to purchase on any plan. |
| Cloudflare’s developer platform keeps getting better, faster, and more powerful | Updates across Workers and beyond for a more powerful developer platform – such as support for larger and more concurrent Container images, support for external models from OpenAI and Anthropic in AI Search (previously AutoRAG), and more. |
| Partnering to make full-stack fast: deploy PlanetScale databases directly from Workers | You can now connect Cloudflare Workers to PlanetScale databases directly, with connections automatically optimized by Hyperdrive. |
| | A complete solution for ingesting, storing, and querying analytical data tables using open standards like Apache Iceberg. |
| | A technical deep dive on R2 SQL, a serverless query engine for petabyte-scale datasets in R2. |
| Safe in the sandbox: security hardening for Cloudflare Workers | A deep-dive into how we’ve hardened the Workers runtime with new defense-in-depth security measures, including V8 sandboxes and hardware-assisted memory protection keys. |
| | To champion AI sovereignty, we’ve added locally-developed open-source models from India, Japan, and Southeast Asia to our Workers AI platform. |
| | We announced the Cloudflare Email Service private beta, allowing developers to reliably send and receive transactional emails directly from Cloudflare Workers. |
| A year of improving Node.js compatibility in Cloudflare Workers | There are hundreds of new Node.js APIs now available that make it easier to run existing Node.js code on our platform. |

Friday, September 26

| What | In a sentence … |
| --- | --- |
| | We have re-engineered our core proxy with a new modular, Rust-based architecture, cutting median response time by 10 ms for millions. |
| | New monitoring tools in the Cloudflare dashboard that provide actionable recommendations and one-click fixes for performance issues. |
| | Cloudflare Radar now includes Internet Routing Registry (IRR) data, allowing network operators to monitor AS-SETs to help prevent route leaks. |
| | We announced the private beta of AI Index, a new service that creates an AI-optimized search index for your domain that you control and can monetize. |
| Introducing new regional Internet traffic and Certificate Transparency insights on Cloudflare Radar | Sub-national traffic insights and Certificate Transparency dashboards for TLS monitoring. |
| | We have reduced Workers cold starts by 10x by implementing a new “worker sharding” system that routes requests to already-loaded Workers. |
| | The TCP Connection Time (Trimean) graph shows that we have the fastest TCP connection time in 40% of measured ISPs – and the fastest across the top networks. |
| How Cloudflare uses performance data to make the world’s fastest global network even faster | We are using our network’s vast performance data to tune congestion control algorithms, improving speeds by an average of 10% for QUIC traffic. |

Helping build a better Internet has always been about more than just technology. As the announcements about interns and opening our offices show, the community of people helping build a better Internet matters to its future. This week, we rolled out our most ambitious set of initiatives ever to support the builders, founders, and students who are creating the future.

For founders and startups, we are thrilled to welcome Cohort #6 to the Workers Launchpad, our accelerator program that gives early-stage companies the resources they need to scale. But we’re not stopping there. We’re opening our doors, literally, by launching new physical hubs for startups in our San Francisco, Austin, London, and Lisbon offices. These spaces will provide access to mentorship, resources, and a community of fellow builders.

We’re also investing in the next generation of talent. We announced free access to the Cloudflare developer platform for all students, giving them the tools to learn and experiment without limits. To provide a path from the classroom to the industry, we also announced our goal to hire 1,111 interns in 2026 — our biggest commitment yet to fostering future tech leaders.

And because a better Internet is for everyone, we’re extending our support to non-profits and public-interest organizations, offering them free access to our production-grade developer tools, so they can focus on their missions.

Whether you’re a founder with a big idea, a student just getting started, or a team working for a cause you believe in, we want to help you succeed.

Thank you to our customers, our community, and the millions of developers who trust us to help them build, secure, and accelerate the Internet. Your curiosity and feedback drive our innovation.

It’s been an incredible 15 years. And as always, we’re just getting started!