Post Syndicated from Ziad Wali original https://aws.amazon.com/blogs/big-data/build-an-etl-process-for-amazon-redshift-using-amazon-s3-event-notifications-and-aws-step-functions/

Data warehousing provides a business with several benefits such as advanced business intelligence and data consistency. It plays a big role within an organization by helping to make the right strategic decision at the right moment which could have a huge impact in a competitive market. One of the major and essential parts in a data warehouse is the extract, transform, and load (ETL) process which extracts the data from different sources, applies business rules and aggregations and then makes the transformed data available for the business users.

This process is always evolving to reflect new business and technical requirements, especially when working in an ambitious market. Nowadays, more verification steps are applied to source data before processing them which so often add an administration overhead. Hence, automatic notifications are more often required in order to accelerate data ingestion, facilitate monitoring and provide accurate tracking about the process.

Amazon Redshift is a fast, fully managed, cloud data warehouse that allows you to process and run your complex SQL analytics workloads on structured and semi-structured data. It also helps you to securely access your data in operational databases, data lakes or third-party datasets with minimal movement or copying. AWS Step Functions is a fully managed service that gives you the ability to orchestrate and coordinate service components. Amazon S3 Event Notifications is an Amazon S3 feature that you can enable in order to receive notifications when specific events occur in your S3 bucket.

In this post we discuss how we can build and orchestrate in a few steps an ETL process for Amazon Redshift using Amazon S3 Event Notifications for automatic verification of source data upon arrival and notification in specific cases. And we show how to use AWS Step Functions for the orchestration of the data pipeline. It can be considered as a starting point for teams within organizations willing to create and build an event driven data pipeline from data source to data warehouse that will help in tracking each phase and in responding to failures quickly. Alternatively, you can also use Amazon Redshift auto-copy from Amazon S3 to simplify data loading from Amazon S3 into Amazon Redshift.

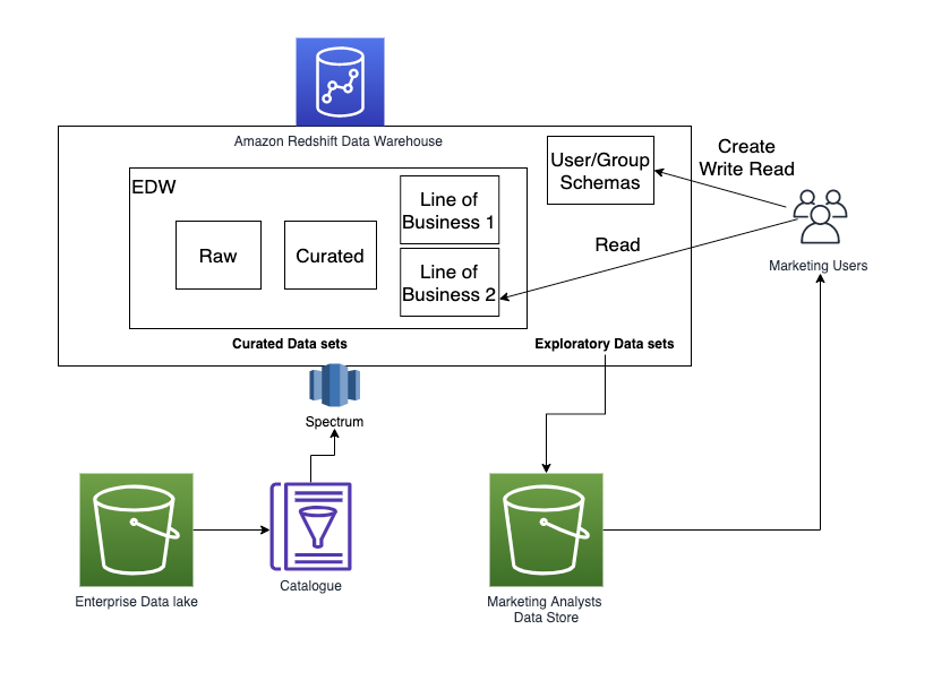

Solution overview

The workflow is composed of the following steps:

- A Lambda function is triggered by an S3 event whenever a source file arrives at the S3 bucket. It does the necessary verifications and then classifies the file before processing by sending it to the appropriate Amazon S3 prefix (accepted or rejected).

- There are two possibilities:

- If the file is moved to the rejected Amazon S3 prefix, an Amazon S3 event sends a message to Amazon SNS for further notification.

- If the file is moved to the accepted Amazon S3 prefix, an Amazon S3 event is triggered and sends a message with the file path to Amazon SQS.

- An Amazon EventBridge scheduled event triggers the AWS Step Functions workflow.

- The workflow executes a Lambda function that pulls out the messages from the Amazon SQS queue and generates a manifest file for the COPY command.

- Once the manifest file is generated, the workflow starts the ETL process using stored procedure.

The following image shows the workflow.

Prerequisites

Before configuring the previous solution, you can use the following AWS CloudFormation template to set up and create the infrastructure

- Give the stack a name, select a deployment VPC and define the master user for the Amazon Redshift cluster by filling in the two parameters

MasterUserNameandMasterUserPassword.

The template will create the following services:

- An S3 bucket

- An Amazon Redshift cluster composed of two ra3.xlplus nodes

- An empty AWS Step Functions workflow

- An Amazon SQS queue

- An Amazon SNS topic

- An Amazon EventBridge scheduled rule with a 5-minute rate

- Two empty AWS Lambda functions

- IAM roles and policies for the services to communicate with each other

The names of the created services are usually prefixed by the stack’s name or the word blogdemo. You can find the names of the created services in the stack’s resources tab.

Step 1: Configure Amazon S3 Event Notifications

Create the following four folders in the S3 bucket:

- received

- rejected

- accepted

- manifest

In this scenario, we will create the following three Amazon S3 event notifications:

- Trigger an AWS Lambda function on the received folder.

- Send a message to the Amazon SNS topic on the rejected folder.

- Send a message to Amazon SQS on the accepted folder.

To create an Amazon S3 event notification:

- Go to the bucket’s Properties tab.

- In the Event Notifications section, select Create Event Notification.

- Fill in the necessary properties:

- Give the event a name.

- Specify the appropriate prefix or folder (

accepted/,rejected/orreceived/). - Select All object create events as an event type.

- Select and choose the destination (AWS lambda, Amazon SNS or Amazon SQS).

Note: for an AWS Lambda destination, choose the function that starts with${stackname}-blogdemoVerify_%

At the end, you should have three Amazon S3 events:

- An event for the received prefix with an AWS Lambda function as a destination type.

- An event for the accepted prefix with an Amazon SQS queue as a destination type.

- An event for the rejected prefix with an Amazon SNS topic as a destination type.

The following image shows what you should have after creating the three Amazon S3 events:

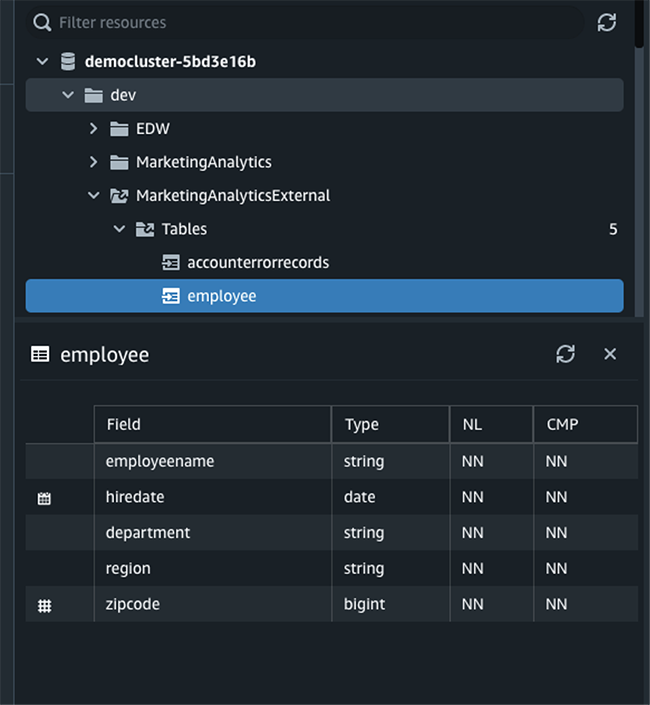

Step 2: Create objects in Amazon Redshift

Connect to the Amazon Redshift cluster and create the following objects:

- Three schemas:

- A table in the

blogdemo_stagingandblogdemo_coreschemas: - A stored procedure to extract and load data into the target schema:

- Set the role

${stackname}-blogdemoRoleRedshift_%as a default role:- In the Amazon Redshift console, go to clusters and click on the cluster

blogdemoRedshift%. - Go to the Properties tab.

- In the Cluster permissions section, select the role

${stackname}-blogdemoRoleRedshift%. - Click on Set default then Make default.

- In the Amazon Redshift console, go to clusters and click on the cluster

Step 3: Configure Amazon SQS queue

The Amazon SQS queue can be used as it is; this means with the default values. The only thing you need to do for this demo is to go to the created queue ${stackname}-blogdemoSQS% and purge the test messages generated (if any) by the Amazon S3 event configuration. Copy its URL in a text file for further use (more precisely, in one of the AWS Lambda functions).

Step 4: Setup Amazon SNS topic

- In the Amazon SNS console, go to the topic

${stackname}-blogdemoSNS% - Click on the Create subscription button.

- Choose the

blogdemotopic ARN, email protocol, type your email and then click on Create subscription. - Confirm your subscription in your email that you received.

Step 5: Customize the AWS Lambda functions

- The following code verifies the name of a file. If it respects the naming convention, it will move it to the accepted folder. If it does not respect the naming convention, it will move it to the rejected one. Copy it to the AWS Lambda function

${stackname}-blogdemoLambdaVerifyand then deploy it: - The second AWS Lambda function

${stackname}-blogdemonLambdaGenerate%retrieves the messages from the Amazon SQS queue and generates and stores a manifest file in the S3 bucket manifest folder. Copy the following content, replace the variable${sqs_url}by the value retrieved in Step 3 and then click on Deploy.

Step 6: Add tasks to the AWS Step Functions workflow

Create the following workflow in the state machine ${stackname}-blogdemoStepFunctions%.

If you would like to accelerate this step, you can drag and drop the content of the following JSON file in the definition part when you click on Edit. Make sure to replace the three variables:

${GenerateManifestFileFunctionName}by the${stackname}-blogdemoLambdaGenerate%arn.${RedshiftClusterIdentifier}by the Amazon Redshift cluster identifier.${MasterUserName}by the username that you defined while deploying the CloudFormation template.

Step 7: Enable Amazon EventBridge rule

Enable the rule and add the AWS Step Functions workflow as a rule target:

- Go to the Amazon EventBridge console.

- Select the rule created by the Amazon CloudFormation template and click on Edit.

- Enable the rule and click Next.

- You can change the rate if you want. Then select Next.

- Add the AWS Step Functions state machine created by the CloudFormation template

blogdemoStepFunctions%as a target and use an existing role created by the CloudFormation template${stackname}-blogdemoRoleEventBridge%

- Click on Next and then Update rule.

Test the solution

In order to test the solution, the only thing you should do is upload some csv files in the received prefix of the S3 bucket. Here are some sample data; each file contains 1000 rows of rideshare data.

If you upload them in one click, you should receive an email because the ridesharedata2022.csv does not respect the naming convention. The other three files will be loaded in the target table blogdemo_core.rideshare. You can check the Step Functions workflow to verify that the process finished successfully.

Clean up

- Go to the Amazon EventBridge console and delete the rule

${stackname}-blogdemoevenbridge%. - In the Amazon S3 console, select the bucket created by the CloudFormation template

${stackname}-blogdemobucket%and click on Empty. - Go to Subscriptions in the Amazon SNS console and delete the subscription created in Step 4.

- In the AWS CloudFormation console, select the stack and delete it.

Conclusion

In this post, we showed how different AWS services can be easily implemented together in order to create an event-driven architecture and automate its data pipeline, which targets the cloud data warehouse Amazon Redshift for business intelligence applications and complex queries.

About the Author

Ziad WALI is an Acceleration Lab Solutions Architect at Amazon Web Services. He has over 10 years of experience in databases and data warehousing where he enjoys building reliable, scalable and efficient solutions. Outside of work, he enjoys sports and spending time in nature.

Ziad WALI is an Acceleration Lab Solutions Architect at Amazon Web Services. He has over 10 years of experience in databases and data warehousing where he enjoys building reliable, scalable and efficient solutions. Outside of work, he enjoys sports and spending time in nature.

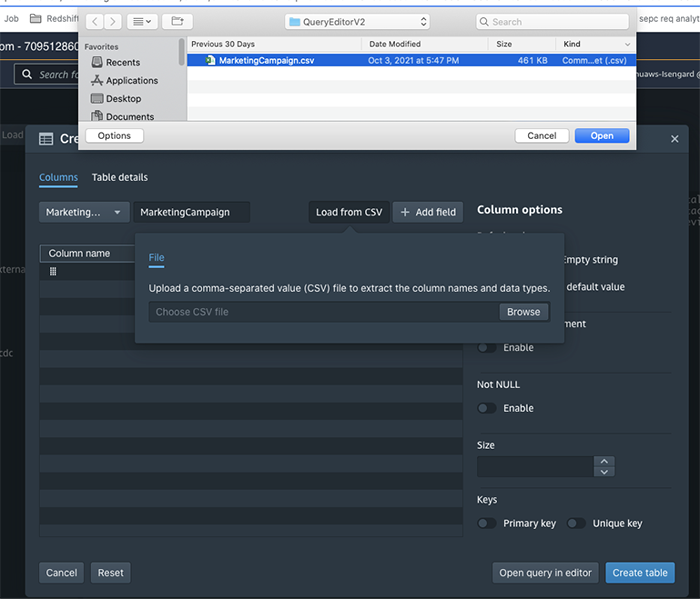

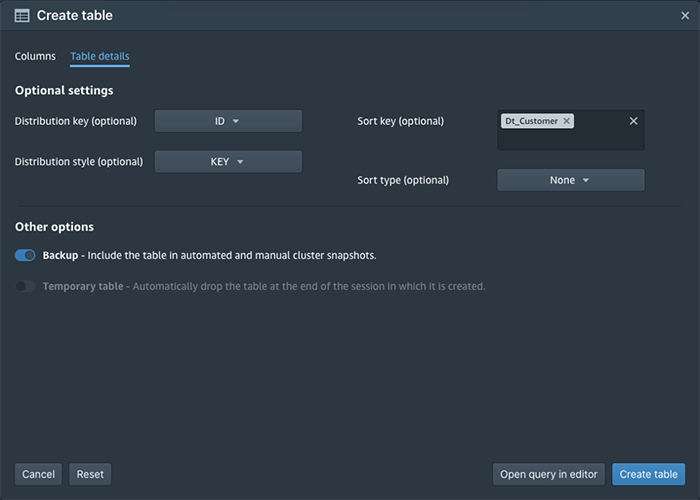

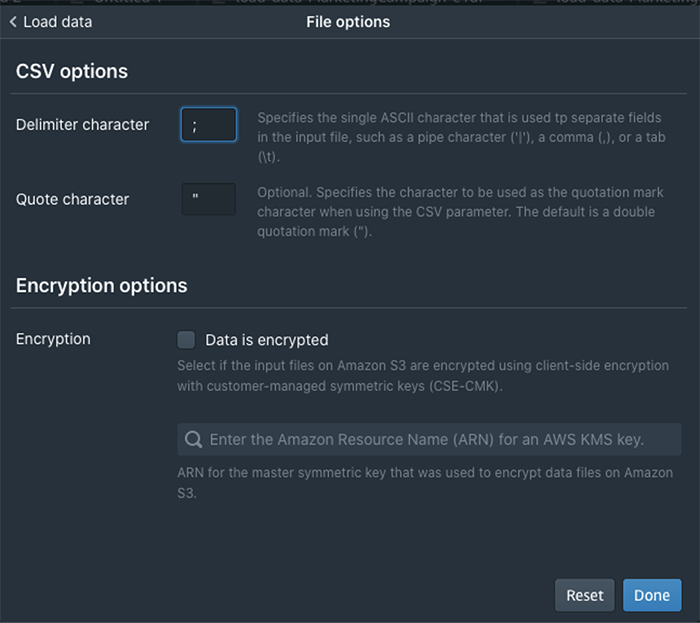

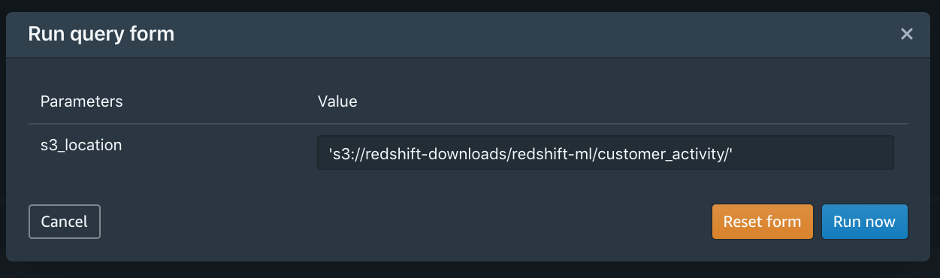

You would probably like to reuse the load query in the future to load data in from another S3 location. In that case, you can use the parameterized query by replacing the S3 URL of the as shown in the following screenshot:

You would probably like to reuse the load query in the future to load data in from another S3 location. In that case, you can use the parameterized query by replacing the S3 URL of the as shown in the following screenshot:

Debu Panda is a Principal Product Manager at AWS, is an industry leader in analytics, application platform, and database technologies, and has more than 25 years of experience in the IT world.

Debu Panda is a Principal Product Manager at AWS, is an industry leader in analytics, application platform, and database technologies, and has more than 25 years of experience in the IT world. Cansu Aksu is a Front End Engineer at AWS, has a several years of experience in developing user interfaces. She is detail oriented, eager to learn and passionate about delivering products and features that solve customer needs and problems

Cansu Aksu is a Front End Engineer at AWS, has a several years of experience in developing user interfaces. She is detail oriented, eager to learn and passionate about delivering products and features that solve customer needs and problems Chengyang Wang is a Frontend Engineer in Redshift Console Team. He worked on a number of new features delivered by redshift in the past 2 years. He thrives to deliver high quality products and aim to improve customer experience from UI

Chengyang Wang is a Frontend Engineer in Redshift Console Team. He worked on a number of new features delivered by redshift in the past 2 years. He thrives to deliver high quality products and aim to improve customer experience from UI