Post Syndicated from Mukul Sharma original https://aws.amazon.com/blogs/big-data/godaddy-benchmarking-results-in-up-to-24-better-price-performance-for-their-spark-workloads-with-aws-graviton2-on-amazon-emr-serverless/

This is a guest post co-written with Mukul Sharma, Software Development Engineer, and Ozcan Ilikhan, Director of Engineering, from GoDaddy.

GoDaddy empowers everyday entrepreneurs by providing all the help and tools to succeed online. With more than 22 million customers worldwide, GoDaddy is the place people come to name their ideas, build a professional website, attract customers, and manage their work.

GoDaddy is a data-driven company, and getting meaningful insights from data helps us drive business decisions that delight our customers. As part of our long-term vision for cost-effective intelligent computing, we set out to evaluate the efficiency gains promised by AWS Graviton2 on Amazon EMR Serverless.

In this post, we share the methodology and results of our benchmarking exercise comparing the cost-effectiveness of EMR Serverless on the arm64 (Graviton2) architecture against the traditional x86_64 architecture. EMR Serverless on Graviton2 demonstrated an advantage in cost-effectiveness, resulting in significant savings in total run costs. We achieved a 23.85% improvement in price-performance for sample production Spark workloads, an outcome that holds significant potential for businesses striving to maximize their computing efficiency.

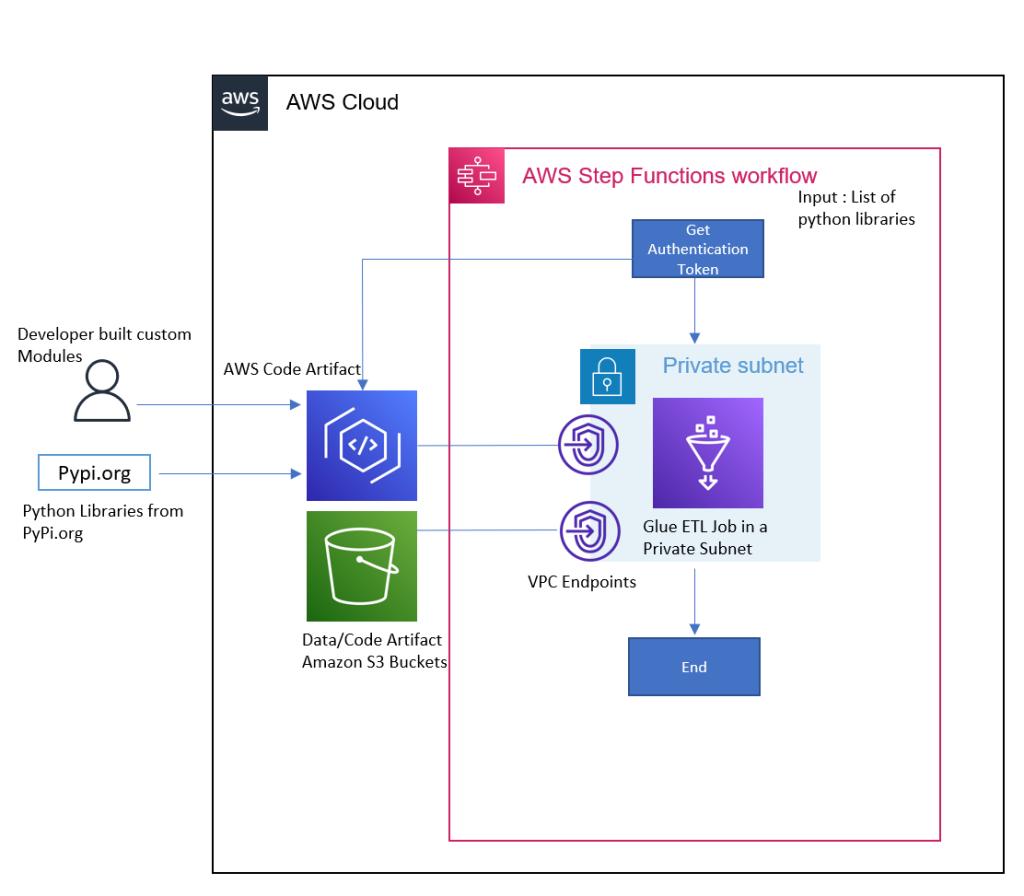

Solution overview

GoDaddy’s intelligent compute platform aims to simplify compute operations for all personas, without limiting power users, and to provide out-of-the-box cost and performance optimization for data and ML workloads. As part of this vision, GoDaddy’s Data & ML Platform team plans to use EMR Serverless as one of the compute solutions under the hood.

The following diagram shows a high-level illustration of the intelligent compute platform vision.

Benchmarking EMR Serverless for GoDaddy

EMR Serverless is a serverless option in Amazon EMR that eliminates the complexities of configuring, managing, and scaling clusters when running big data frameworks like Apache Spark and Apache Hive. With EMR Serverless, businesses can enjoy numerous benefits, including cost-effectiveness, faster provisioning, simplified developer experience, and improved resilience to Availability Zone failures.

At GoDaddy, we embarked on a comprehensive study to benchmark EMR Serverless using real production workflows at GoDaddy. The purpose of the study was to evaluate the performance and efficiency of EMR Serverless and develop a well-informed adoption plan. The results of the study have been extremely promising, showcasing the potential of EMR Serverless for our workloads.

Having achieved compelling results in favor of EMR Serverless for our workloads, we turned our attention to the Graviton2 (arm64) architecture on EMR Serverless. In this post, we focus on comparing the performance of Graviton2 (arm64) with the x86_64 architecture on EMR Serverless. Through this apples-to-apples comparison, we aim to understand the benefits and considerations of using Graviton2 for our big data workloads.

By using EMR Serverless and exploring the performance of Graviton2, we aim to optimize our big data workflows and make informed decisions about the most suitable architecture for our specific needs. The combination of EMR Serverless and Graviton2 presents an opportunity to enhance our data processing capabilities and drive efficiency in our operations.

AWS Graviton2

Graviton2 processors are custom-designed by AWS using 64-bit Arm Neoverse cores. This custom architecture delivers a substantial price-performance improvement for a wide variety of cloud workloads.

In terms of cost, Graviton2 offers a clear advantage. As the following table shows, the per-vCPU and per-GB prices for Graviton2 are about 20% lower than those of the x86_64 option.

| | x86_64 | arm64 (Graviton2) |
|---|---|---|
| per vCPU per hour | $0.052624 | $0.042094 |
| per GB per hour | $0.0057785 | $0.004628 |
| per storage GB per hour* | $0.000111 | $0.000111 |

*Ephemeral storage: 20 GB of ephemeral storage is available for all workers by default—you pay only for any additional storage that you configure per worker.

For specific pricing details and current information, refer to Amazon EMR pricing.
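Because EMR Serverless bills for the vCPU, memory, and additional storage a job consumes, a job's run cost is roughly a weighted sum of its aggregated resource usage. The following minimal sketch assumes the rates in the table above (rates vary by Region and over time), assumes the single storage rate applies to both architectures, and uses hypothetical usage numbers. It ignores billing details such as per-worker minimums, and shows why identical resource usage costs about 20% less on arm64.

```python
# Per-unit EMR Serverless rates from the pricing table above.
# Rates vary by Region and change over time; check Amazon EMR pricing before reuse.
RATES = {
    "x86_64": {"vcpu_hour": 0.052624, "memory_gb_hour": 0.0057785},
    "arm64": {"vcpu_hour": 0.042094, "memory_gb_hour": 0.004628},
}
# Assumption: the single storage rate in the table applies to both architectures
# and is charged only for storage configured beyond the 20 GB default per worker.
EXTRA_STORAGE_GB_HOUR = 0.000111


def job_cost(arch: str, vcpu_hours: float, memory_gb_hours: float,
             extra_storage_gb_hours: float = 0.0) -> float:
    """Estimate a job's run cost in USD from its aggregated resource usage."""
    rate = RATES[arch]
    return (vcpu_hours * rate["vcpu_hour"]
            + memory_gb_hours * rate["memory_gb_hour"]
            + extra_storage_gb_hours * EXTRA_STORAGE_GB_HOUR)


# Hypothetical job that consumes the same resources on both architectures.
x86 = job_cost("x86_64", vcpu_hours=100, memory_gb_hours=400)
arm = job_cost("arm64", vcpu_hours=100, memory_gb_hours=400)
print(f"x86_64: ${x86:.2f}, arm64: ${arm:.2f}, saving: {1 - arm / x86:.1%}")
# prints: x86_64: $7.57, arm64: $6.06, saving: 20.0%
```

In practice, resource usage also differs between architectures, so the real saving for a given job can be higher or lower than this 20% baseline.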

AWS benchmark

The AWS team ran benchmark tests of Spark workloads with Graviton2 on EMR Serverless using the 3 TB scale TPC-DS benchmark. Their findings are summarized as follows:

- On average, Graviton2 on EMR Serverless reduced the runtime of Spark workloads by about 10%.

- Although most queries ran faster, a small subset of queries regressed by up to 7% on Graviton2 compared to the x86_64 option.

- The AWS team also considered cost. Because Graviton2 is priced 20% lower than the x86_64 option, the benchmark yielded an overall 27% better price-performance; in other words, the same workloads cost about 27% less to run on Graviton2 than on x86_64.

These findings highlight the significant benefits of using Graviton2 on EMR Serverless for Spark workloads, with improved performance and cost-efficiency. It showcases the potential of Graviton2 in delivering enhanced price-performance ratios, making it an attractive choice for organizations seeking to optimize their big data workloads.
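A back-of-the-envelope way to see how the runtime and price advantages combine: for a fixed worker configuration, cost is roughly resource usage times unit price, so the two ratios multiply. The sketch below is an illustrative model, not the method AWS used, and it assumes resource usage scales linearly with runtime. A 10% runtime reduction with the roughly 20% lower price gives about 28%, close to the published 27%, which was measured across the benchmark queries rather than derived from this formula.

```python
def price_performance_gain(usage_ratio: float, price_ratio: float) -> float:
    """Fractional cost reduction on arm64 for the same work.

    usage_ratio: arm64 resource usage / x86_64 resource usage (e.g. vCPU-hours)
    price_ratio: arm64 unit price / x86_64 unit price
    """
    return 1.0 - usage_ratio * price_ratio


# vCPU price ratio from the pricing table (about 0.80, i.e. 20% lower).
PRICE_RATIO = 0.042094 / 0.052624

# Assumption: with the same worker configuration, resource usage scales with
# runtime, so a 10% shorter runtime consumes about 10% fewer vCPU-hours.
gain = price_performance_gain(usage_ratio=0.90, price_ratio=PRICE_RATIO)
print(f"estimated cost reduction: {gain:.1%}")  # prints: estimated cost reduction: 28.0%
```

The same model also shows why a job that uses somewhat more resources on arm64 can still come out cheaper: any usage ratio below 1 / 0.80 = 1.25 still yields a net saving.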

GoDaddy benchmark

During our initial experimentation, we observed that arm64 on EMR Serverless consistently outperformed or performed on par with x86_64. One of the jobs showed a 7.51% increase in resource usage on arm64 compared to x86_64, but due to the lower price of arm64, it still resulted in a 13.48% cost reduction. In another instance, we achieved an impressive 43.7% reduction in run cost, attributed to both the lower price and reduced resource utilization. Overall, our initial tests indicated that arm64 on EMR Serverless delivered superior price-performance compared to x86_64. These promising findings motivated us to conduct a more comprehensive and rigorous study.

Benchmark results

To gain a deeper understanding of the value of Graviton2 on EMR Serverless, we conducted our study using real production workloads from GoDaddy that are scheduled to run daily. Without exception, these jobs were significantly more cost-effective on EMR Serverless on arm64 (Graviton2) than on EMR Serverless on x86_64. Across the sample GoDaddy jobs, we recorded an average 23.85% improvement in price-performance using Graviton2.

As in the AWS benchmarks, we observed slight runtime regressions in some jobs: four of the six sample jobs ran between 1.79% and 6.08% slower on arm64. However, because these jobs will be migrated from Amazon EMR on EC2 to EMR Serverless, their overall total runtime will still be shorter thanks to the minimal provisioning time of EMR Serverless. Across all jobs, we also observed an average speedup of 2.1% on top of the cost savings.

These benchmarking results provide compelling evidence of the value of Graviton2 on EMR Serverless. The combination of improved price-performance, a slightly shorter average runtime, and overall cost savings makes Graviton2 a highly attractive option for optimizing big data workloads.

Benchmarking methodology

This work extends our larger study benchmarking EMR Serverless for GoDaddy, in which we divided Spark jobs into brackets based on total runtime (quick-run, medium-run, and long-run). Here, we measured the effect of architecture (arm64 vs. x86_64) on total cost and total runtime. All other parameters were kept the same to achieve an apples-to-apples comparison.
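Bracketing jobs by runtime and then sampling from each bracket can be sketched as follows. This is a minimal illustration only: the bracket boundaries, job names, and runtimes are hypothetical, since the post does not state the cut-offs used in the study.

```python
import random
from typing import Dict, List

# Hypothetical bracket boundaries in minutes; the actual cut-offs used in the
# study are not stated in this post.
BRACKETS = [("quick-run", 15.0), ("medium-run", 60.0), ("long-run", float("inf"))]


def bracket_for(runtime_minutes: float) -> str:
    """Return the first bracket whose upper bound exceeds the job's runtime."""
    for name, upper in BRACKETS:
        if runtime_minutes < upper:
            return name
    raise ValueError(f"runtime must be finite, got {runtime_minutes!r}")


def pick_jobs(runtimes: Dict[str, float], per_bracket: int = 2,
              seed: int = 0) -> Dict[str, List[str]]:
    """Group jobs by runtime bracket, then randomly sample up to `per_bracket` from each."""
    groups: Dict[str, List[str]] = {name: [] for name, _ in BRACKETS}
    for job, minutes in runtimes.items():
        groups[bracket_for(minutes)].append(job)
    rng = random.Random(seed)  # seeded so the selection is reproducible
    return {name: rng.sample(sorted(jobs), min(per_bracket, len(jobs)))
            for name, jobs in groups.items()}


# Hypothetical job names and typical runtimes in minutes.
runtimes = {"job_a": 6, "job_b": 9, "job_c": 12, "job_d": 28,
            "job_e": 45, "job_f": 80, "job_g": 130}
selection = pick_jobs(runtimes)
print(selection)
```

Seeding the random generator keeps the selection reproducible, which helps when the same jobs must later be rerun on the other architecture.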

The team followed these steps:

- Prepare the data and environment.

- Choose two random production jobs from each job bracket.

- Make the changes necessary to avoid interfering with actual production outputs.

- Run each job over multiple iterations to collect accurate and consistent data points.

- Validate input and output datasets, partitions, and row counts to ensure identical data processing.

- Gather relevant metrics from the tests.

- Analyze results to draw insights and conclusions.
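The "run over multiple iterations and gather metrics" steps could be automated roughly as in the following sketch. It assumes `client` is a boto3 EMR Serverless client (for example `boto3.client("emr-serverless")`), passed in so the helpers can be exercised without AWS credentials. The response field names (`jobRunId`, `state`, `totalExecutionDurationSeconds`, `totalResourceUtilization`) reflect our understanding of the GetJobRun API and should be checked against the API reference; the helper names are hypothetical, and this is not GoDaddy's actual harness.

```python
import time
from statistics import mean

# Assumption: `client` is a boto3 EMR Serverless client, for example
# boto3.client("emr-serverless"). It is passed in rather than created here so
# the helpers can be exercised without AWS credentials.
TERMINAL_STATES = {"SUCCESS", "FAILED", "CANCELLED"}


def create_app(client, name: str, architecture: str,
               release_label: str = "emr-6.9.0") -> str:
    """Create a Spark application; architecture is "ARM64" or "X86_64"."""
    response = client.create_application(
        name=name, releaseLabel=release_label, type="SPARK",
        architecture=architecture)
    return response["applicationId"]


def run_once(client, app_id: str, role_arn: str, entry_point: str,
             poll_seconds: float = 30.0) -> dict:
    """Submit one Spark job run and block until it reaches a terminal state."""
    run_id = client.start_job_run(
        applicationId=app_id, executionRoleArn=role_arn,
        jobDriver={"sparkSubmit": {"entryPoint": entry_point}})["jobRunId"]
    while True:
        job_run = client.get_job_run(applicationId=app_id, jobRunId=run_id)["jobRun"]
        if job_run["state"] in TERMINAL_STATES:
            break
        time.sleep(poll_seconds)
    if job_run["state"] != "SUCCESS":
        raise RuntimeError(f"job run {run_id} ended in state {job_run['state']}")
    return job_run


def benchmark(client, app_id: str, role_arn: str, entry_point: str,
              iterations: int = 3) -> dict:
    """Run the same job several times and average its runtime and resource usage."""
    runs = [run_once(client, app_id, role_arn, entry_point) for _ in range(iterations)]
    return {
        "runtime_seconds": mean(r["totalExecutionDurationSeconds"] for r in runs),
        "vcpu_hours": mean(r["totalResourceUtilization"]["vCPUHour"] for r in runs),
        "memory_gb_hours": mean(r["totalResourceUtilization"]["memoryGBHour"] for r in runs),
    }
```

A harness would create one application per architecture, for example `create_app(client, "bench-arm64", "ARM64")` and `create_app(client, "bench-x86", "X86_64")`, and then call `benchmark` on each with the same entry point, role, and inputs. Keeping the release label and Spark configuration identical is what keeps the comparison apples-to-apples.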

The following table summarizes an example Spark job (job 5 in the summary of results).

| Metric | EMR Serverless x86_64 (average) | EMR Serverless Graviton2 (average) | x86_64 vs. Graviton2 (% difference) |
|---|---|---|---|
| Total Run Cost | $2.76 | $1.85 | 32.97% |
| Total Runtime (hh:mm:ss) | 00:41:31 | 00:34:32 | 16.82% |
| EMR Release Label | emr-6.9.0 | emr-6.9.0 | |
| Job Type | Spark | Spark | |
| Spark Version | Spark 3.3.0 | Spark 3.3.0 | |
| Hadoop Distribution | Amazon 3.3.3 | Amazon 3.3.3 | |
| Hive/HCatalog Version | Hive 3.1.3, HCatalog 3.1.3 | Hive 3.1.3, HCatalog 3.1.3 | |

Summary of results

The following table compares job performance between EMR Serverless on arm64 (Graviton2) and EMR Serverless on x86_64. For each architecture, every job was run at least three times to obtain an accurate average cost and runtime.

| Job | Average x86_64 Cost | Average arm64 Cost | Average x86_64 Runtime (hh:mm:ss) | Average arm64 Runtime (hh:mm:ss) | Average Cost Savings % | Average Performance Gain % |
|---|---|---|---|---|---|---|
| 1 | $1.64 | $1.25 | 00:08:43 | 00:09:01 | 23.89% | -3.24% |
| 2 | $10.00 | $8.69 | 00:27:55 | 00:28:25 | 13.07% | -1.79% |
| 3 | $29.66 | $24.15 | 00:50:49 | 00:53:17 | 18.56% | -4.85% |
| 4 | $34.42 | $25.80 | 01:20:02 | 01:24:54 | 25.04% | -6.08% |
| 5 | $2.76 | $1.85 | 00:41:31 | 00:34:32 | 32.97% | 16.82% |
| 6 | $34.07 | $24.00 | 00:57:58 | 00:51:09 | 29.57% | 11.76% |
| Average | | | | | 23.85% | 2.10% |

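Note that the improvement calculations are based on higher-precision results for more accuracy. Cost savings and performance gain are both measured relative to x86_64; a negative performance gain means the job ran slower on arm64.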
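To make the Average row easy to check, the following sketch recomputes the per-job percentages and their unweighted means from the rounded values in the table. Because those inputs are rounded, it prints 23.84% and 2.07% rather than the reported 23.85% and 2.10%, a gap consistent with the note above that the published figures use higher-precision results.

```python
def to_seconds(hms: str) -> int:
    """Convert an hh:mm:ss string to seconds."""
    h, m, s = (int(part) for part in hms.split(":"))
    return h * 3600 + m * 60 + s


def pct_reduction(before: float, after: float) -> float:
    """Percentage reduction relative to the x86_64 value (negative = arm64 higher)."""
    return 100.0 * (before - after) / before


# (x86_64 cost, arm64 cost, x86_64 runtime, arm64 runtime) from the summary table
JOBS = [
    (1.64, 1.25, "00:08:43", "00:09:01"),
    (10.00, 8.69, "00:27:55", "00:28:25"),
    (29.66, 24.15, "00:50:49", "00:53:17"),
    (34.42, 25.80, "01:20:02", "01:24:54"),
    (2.76, 1.85, "00:41:31", "00:34:32"),
    (34.07, 24.00, "00:57:58", "00:51:09"),
]

cost_savings = [pct_reduction(x, a) for x, a, _, _ in JOBS]
perf_gains = [pct_reduction(to_seconds(x), to_seconds(a)) for _, _, x, a in JOBS]

# Unweighted mean across jobs, matching how the "Average" row is computed.
avg_cost_savings = sum(cost_savings) / len(cost_savings)
avg_perf_gain = sum(perf_gains) / len(perf_gains)
print(f"average cost savings: {avg_cost_savings:.2f}%")  # prints: average cost savings: 23.84%
print(f"average performance gain: {avg_perf_gain:.2f}%")  # prints: average performance gain: 2.07%
```

The Average row is a simple mean of per-job percentages, so each job counts equally regardless of its cost; weighting by spend would give a somewhat different figure.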

Conclusion

Based on this study, GoDaddy observed a significant 23.85% improvement in price-performance for sample production Spark jobs using the arm64 architecture compared to the x86_64 architecture. These compelling results have led us to strongly recommend that internal teams use arm64 (Graviton2) on EMR Serverless, except where third-party packages and libraries have compatibility issues. By adopting the arm64 architecture, organizations can achieve better cost-effectiveness and performance for their workloads, contributing to more efficient data processing and analytics.

About the Authors

Mukul Sharma is a Software Development Engineer in the Data & Analytics (DnA) organization at GoDaddy. He is a polyglot programmer with experience in a wide array of technologies to rapidly deliver scalable solutions. He enjoys singing karaoke, playing various board games, and working on personal programming projects in his spare time.

Ozcan Ilikhan is a Director of Engineering in the Data & Analytics (DnA) organization at GoDaddy. He is passionate about solving customer problems and increasing efficiency using data and ML/AI. In his spare time, he loves reading, hiking, gardening, and working on DIY projects.

Harsh Vardhan Singh Gaur is an AWS Solutions Architect, specializing in analytics. He has over 6 years of experience working in the field of big data and data science. He is passionate about helping customers adopt best practices and discover insights from their data.

Ramesh Kumar Venkatraman is a Senior Solutions Architect at AWS who is passionate about containers and databases. He works with AWS customers to design, deploy, and manage their AWS workloads and architectures. In his spare time, he loves to play with his two kids and follows cricket.

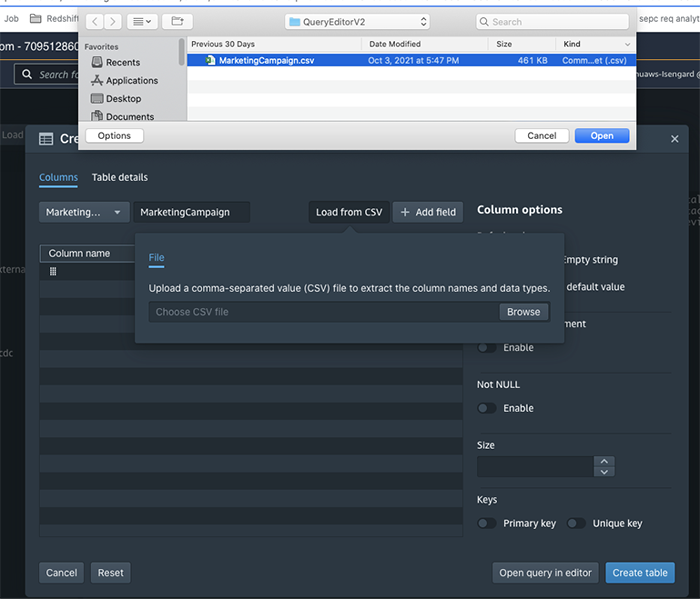

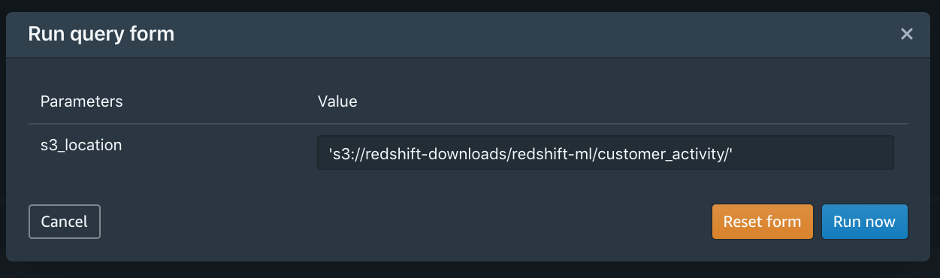
