Post Syndicated from Willi Geiger original http://blog.cloudflare.com/uncovering-the-hidden-webp-vulnerability-cve-2023-4863/

At Cloudflare, we're constantly vigilant when it comes to identifying vulnerabilities that could potentially affect the Internet ecosystem. Recently, on September 12, 2023, Google announced a security issue in Google Chrome, titled "Heap buffer overflow in WebP in Google Chrome," which caught our attention. Initially, it seemed like just another bug in the popular web browser. However, what we discovered was far more significant and had implications that extended well beyond Chrome.

Impact much wider than suggested

The vulnerability, tracked under CVE-2023-4863, was described as a heap buffer overflow in WebP within Google Chrome. While this description might lead one to believe that it's a problem confined solely to Chrome, the reality was quite different. It turned out to be a bug deeply rooted in the libwebp library, which is not only used by Chrome but by virtually every application that handles WebP images.

Digging deeper, this vulnerability was in fact first reported in an earlier CVE from Apple, CVE-2023-41064, although the connection was not immediately obvious. In early September, Citizen Lab, a research lab based out of the University of Toronto, reported on an apparent exploit that was being used to attempt to install spyware on the iPhone of "an individual employed by a Washington DC-based civil society organization." The advisory from Apple was also incomplete, stating that it was a “buffer overflow issue in ImageIO,” and that they were aware the issue may have been actively exploited. Only after Google released CVE-2023-4863 did it become clear that these two issues were linked, and there was a wider vulnerability in WebP.

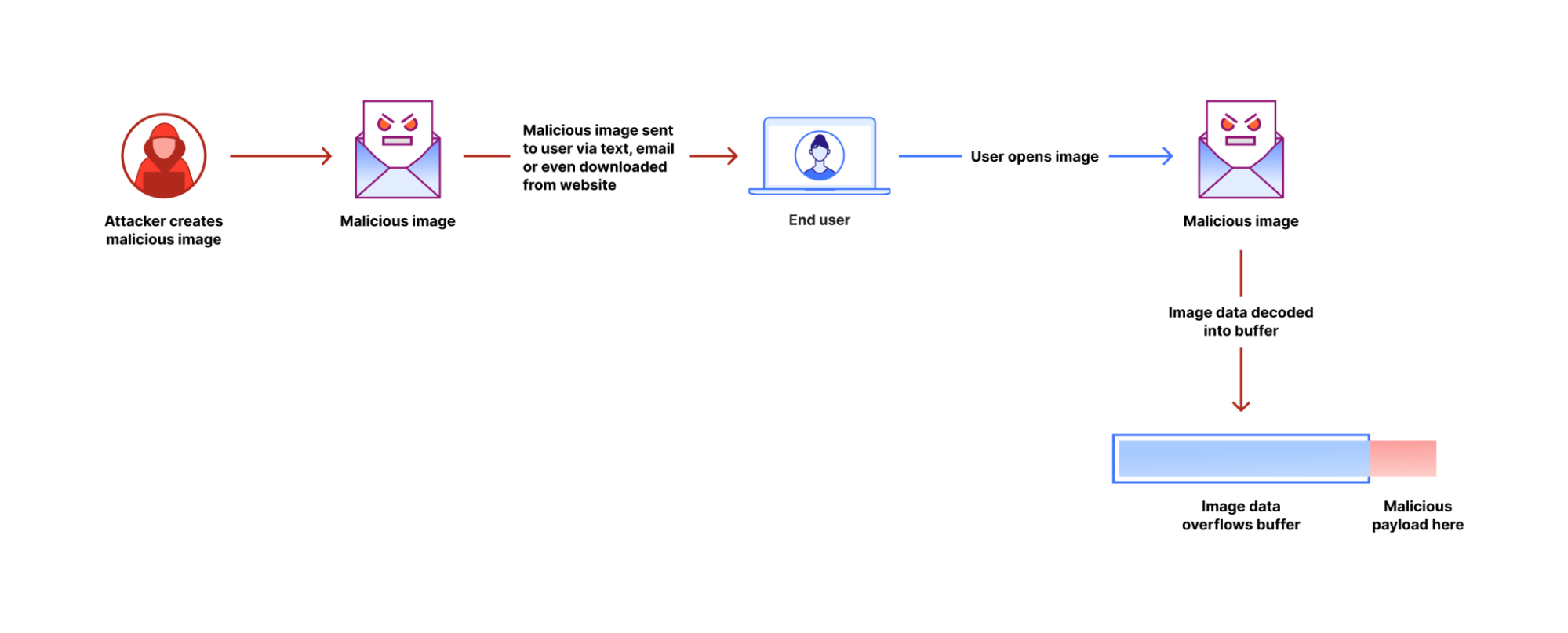

The vulnerability allows an attacker to create a malformed WebP image file that makes libwebp write data beyond the buffer memory allocated to the image decoder. By writing past the legal bounds of the buffer, it is possible to modify sensitive data in memory, eventually leading to execution of the attacker's code.

WebP, introduced over a decade ago, has gained widespread adoption in various applications, ranging from web browsers to email clients, chat apps, graphics programs, and even operating systems. This ubiquity meant that this vulnerability had far-reaching consequences, affecting a vast array of software and virtually all users of the WebP format.

Understanding the technical details

So what exactly was the issue, how could it be exploited, and how was it shut down? We can get our best clues by looking at the patch that was made to libwebp. This patch fixes a potential out-of-bounds (OOB) write in part of the image decoder – the Huffman tables – with two changes: additional validation of the input data, and a modified dynamic memory allocation model. A deeper dive into libwebp and the WebP image format it implements reveals what this means.

WebP is a combination of two different image formats: a lossy format similar to JPEG using the VP8 codec, and a lossless format using WebP's custom lossless codec. The bug was in the lossless codec's handling of Huffman coding.

The fundamental idea behind Huffman coding is that using a constant number of bits for every basic unit of information in a dataset – like a pixel color – is not the most efficient representation. We can use a variable number of bits, and assign the shortest sequences to the most frequently occurring values, and longer ones to the least common values. The sequences of ones and zeros can be represented as a binary tree, with the shorter, more common codes near the root, and longer, less common codes deeper in the tree. Looking up values in the tree bit by bit is relatively slow, so practical implementations build lookup tables that allow matching many bits at a time.
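To make the lookup-table idea concrete, here is a minimal Python sketch. It assigns codes from a list of per-symbol code lengths and fills a flat table indexed by the next few bits of input, so one table read decodes a whole symbol. The function names, the MSB-first bit order, and the single flat table are assumptions chosen for readability; this is not libwebp's code, and it assumes the code lengths describe a complete tree.

```python
def canonical_codes(lengths):
    """Map symbol -> (code, length) from per-symbol code lengths (0 = unused)."""
    codes = {}
    code = 0
    prev_len = 0
    # Visit symbols by increasing code length, then by symbol value.
    for length, symbol in sorted((l, s) for s, l in enumerate(lengths) if l > 0):
        code <<= length - prev_len  # widen the running code to the new length
        codes[symbol] = (code, length)
        code += 1
        prev_len = length
    return codes


def build_lookup_table(lengths):
    """Build a flat table with one entry per possible max_len-bit input window."""
    codes = canonical_codes(lengths)
    max_len = max(length for _, length in codes.values())
    table = [None] * (1 << max_len)
    for symbol, (code, length) in codes.items():
        pad = max_len - length
        start = code << pad
        # A short code matches every window that begins with it.
        for index in range(start, start + (1 << pad)):
            table[index] = (symbol, length)
    return table, max_len


def decode(bits, lengths, count):
    """Decode `count` symbols from a string of '0'/'1' characters."""
    table, max_len = build_lookup_table(lengths)
    padded = bits + "0" * max_len  # lets the last symbol peek a full window
    pos = 0
    out = []
    for _ in range(count):
        symbol, length = table[int(padded[pos:pos + max_len], 2)]
        out.append(symbol)
        pos += length  # consume only the bits the matched code used
    return out


# Symbols 0..3 with code lengths 1, 2, 3, 3 get the codes 0, 10, 110, 111.
print(decode("0101101110", [1, 2, 3, 3], 5))  # [0, 1, 2, 3, 0]
```

Note how the table size is driven entirely by the longest code: every extra bit of code length doubles the number of entries in this flat layout.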

Image files contain compact information about the shape of the Huffman tree, which the decoder uses to reconstruct the tree, and build lookup tables for the codes. The bug in libwebp was in the code building the lookup tables. A specially crafted WebP file can contain a very unbalanced Huffman tree that contains codes much longer than any normal WebP file would have, and this made the function generating lookup tables write data beyond the buffer allocated for the lookup tables. Libwebp had checks for validity of the Huffman tree, but it would write the invalid lookup tables before the consistency check.
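The consistency check itself does not require building any table. As a rough illustration, the sketch below classifies a set of code lengths using only arithmetic (the Kraft sum), which is the kind of test that can safely run before a single table entry is written. The function name and the default maximum length of 15 are assumptions for this example, and the sketch is not taken from libwebp.

```python
def classify_code_lengths(lengths, max_len=15):
    """Return 'complete', 'incomplete', or 'oversubscribed' for a set of code lengths.

    A length of 0 means the symbol is unused. `max_len` is an assumed upper
    bound on code length for this sketch.
    """
    if any(l < 0 or l > max_len for l in lengths):
        raise ValueError("code length out of range")
    # A code of length l occupies 2**(max_len - l) of the 2**max_len leaf slots
    # in a full binary tree of depth max_len.
    used = sum(1 << (max_len - l) for l in lengths if l > 0)
    total = 1 << max_len
    if used == total:
        return "complete"
    return "incomplete" if used < total else "oversubscribed"


print(classify_code_lengths([1, 2, 3, 3]))                     # complete
print(classify_code_lengths([1, 2, 3]))                        # incomplete
print(classify_code_lengths([1, 1, 1]))                        # oversubscribed
print(classify_code_lengths(list(range(1, 15)) + [15, 15]))    # complete
```

The last case shows that a very unbalanced tree can still be perfectly valid. What matters is the order of operations: run a check like this before writing tables whose size was chosen with only valid trees in mind.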

The buffer for lookup tables is allocated on the heap. The heap is an area of memory where most of the data of the application is stored. Code that writes data past its buffer allows attackers to modify and corrupt data that happens to be adjacent in memory to the buffer. This can be exploited to make the application misbehave, and eventually start executing code supplied by the attacker.
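Python itself bounds-checks every access, so the toy model below only simulates the effect. It treats one byte buffer as a tiny "heap" that holds an 8-byte lookup table directly followed by unrelated data, and compares a write that trusts its index with one that checks it. The `Arena` class, its layout, and the `role=usr` value are all hypothetical and exist only for this illustration.

```python
class Arena:
    """A toy 'heap': an 8-byte table immediately followed by unrelated data."""

    TABLE_SIZE = 8

    def __init__(self):
        self.memory = bytearray(16)
        self.memory[8:16] = b"role=usr"  # neighbor that happens to sit after the table

    def write_unchecked(self, index, data):
        # Trusts the caller: nothing stops the write from running past the table.
        self.memory[index:index + len(data)] = data

    def write_checked(self, index, data):
        # Refuses any write that does not fit entirely inside the table.
        if index < 0 or index + len(data) > self.TABLE_SIZE:
            raise IndexError("write would leave the table buffer")
        self.write_unchecked(index, data)

    def neighbor(self):
        return bytes(self.memory[8:16])


arena = Arena()
arena.write_unchecked(6, b"\xff\xff\xff\xff")  # 4 bytes at offset 6: 2 of them overflow
print(arena.neighbor())  # b'\xff\xffle=usr'
```

In C there is no equivalent of `write_checked` unless the programmer adds it, which is why an incorrect assumption about the maximum table size turns directly into corrupted neighboring memory.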

The fixed version of libwebp first checks that the input data will produce a valid internal structure, and only then builds the lookup tables, allocating more memory if necessary to ensure the buffer is always big enough.
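One way to picture the "validate first, then size the buffer" approach is a two-pass build. The sketch below is our own simplification, not the actual libwebp patch: the first pass rejects invalid code lengths and measures how many entries a two-level lookup table would need, and the second pass allocates exactly that many. The names `plan_table` and `allocate_table`, the 8-bit root table, and the 15-bit maximum code length are assumptions for this example.

```python
def plan_table(lengths, root_bits=8, max_len=15):
    """Pass 1: validate the code lengths and return the table size they need.

    Writes nothing. Raises ValueError unless the lengths form a complete code.
    """
    if any(l < 0 or l > max_len for l in lengths):
        raise ValueError("code length out of range")
    used = sum(1 << (max_len - l) for l in lengths if l > 0)
    if used != 1 << max_len:
        raise ValueError("code lengths do not form a complete Huffman tree")

    sub_bits = {}  # root-table prefix -> bit width of its second-level table
    code, prev_len = 0, 0
    for length in sorted(l for l in lengths if l > 0):
        code <<= length - prev_len  # canonical code assignment, MSB first
        if length > root_bits:
            prefix = code >> (length - root_bits)
            extra = length - root_bits
            sub_bits[prefix] = max(sub_bits.get(prefix, 0), extra)
        code += 1
        prev_len = length
    # Root table plus one second-level table per prefix that has long codes.
    return (1 << root_bits) + sum(1 << bits for bits in sub_bits.values())


def allocate_table(lengths, root_bits=8, max_len=15):
    """Pass 2: allocate only after pass 1 has accepted and measured the input."""
    size = plan_table(lengths, root_bits, max_len)
    return [None] * size  # filling the entries is omitted in this sketch


print(len(allocate_table([1, 2, 3, 3])))                   # 256
print(len(allocate_table(list(range(1, 15)) + [15, 15])))  # 384
```

Because the size comes from the same data that will later drive the writes, the buffer cannot end up smaller than what the table builder needs, and invalid input is rejected before any memory is touched.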

Libwebp is a mature library, maintained by seasoned professionals. But it's written in the C language, which has very few safeguards against programming errors, especially around memory use. Despite the care taken in the library's development, a single erroneous assumption led to a critical vulnerability.

Swift action

On the same day that Google's announcement caught our attention, we filed an internal security ticket to document and address the vulnerability.

Google was initially perplexed about the true source of the problem. They did not release a patched version of libwebp before announcing the vulnerability. We discovered the yet-unreleased patch for libwebp in its repository, and used it to update libwebp in our services. libwebp officially released the patch a day later.

Our image processing services are written in Rust. We've submitted patches to Rust packages that contained a copy of libwebp and filed RustSec advisories for them (RUSTSEC-2023-0061 and RUSTSEC-2023-0062). This ensured that the broader Rust ecosystem was informed and could take appropriate action.

In an interesting turn of events, GitHub's vulnerability scanner was quick to recognize our RustSec reports as the first case of CVE-2023-4863, even before the issue gained widespread attention. This highlights the importance of having robust security reporting mechanisms in place and the vital role that platforms like GitHub play in keeping the open-source community secure.

These quick actions demonstrate how seriously Cloudflare takes this kind of threat. We have a belt-and-suspenders approach to security that limits the binaries we run at our edge to those signed by us, and ensures that all vulnerabilities are identified and remedied as soon as possible. In this case, we have scrutinized our logs, and found no evidence that any attackers attempted to leverage this vulnerability against Cloudflare. We believe this exploit targeted individuals rather than the infrastructure of a company like Cloudflare, but we never take chances with our customers’ data, and so fixed this vulnerability as quickly as possible, before it became well known.

Conclusion

Google has now widened its description of this issue, correctly calling out that all uses of WebP are potentially affected. This widened description was originally filed as yet another new CVE – CVE-2023-5129 – but that was then flagged as a duplicate of the original CVE-2023-4863, and the description of the earlier filing was updated. This incident serves as a reminder of the complex and interconnected nature of the Internet ecosystem. What initially seemed like a Chrome-specific problem revealed a much deeper issue that touched nearly every corner of the digital world. The incident also showcased the importance of swift collaboration and the critical role that responsible disclosure plays in mitigating security risks.

For each and every user, it demonstrates the need to keep all browsers, apps and operating systems up to date, and to install recommended security patches. All applications supporting WebP images need to be updated. We've updated our services.

At Cloudflare, we remain committed to enhancing the security of the Internet, and incidents like these drive us to continually refine our processes and strengthen our partnerships within the global developer community. By working together, we can make the Internet a safer place for everyone.