Post Syndicated from Artem Lovan original https://aws.amazon.com/blogs/security/building-fine-grained-authorization-using-amazon-cognito-api-gateway-and-iam/

June 5, 2021: We’ve updated Figure 1: User request flow.

Authorizing functionality of an application based on group membership is a best practice. If you’re building APIs with Amazon API Gateway and you need fine-grained access control for your users, you can use Amazon Cognito. Amazon Cognito allows you to use groups to create a collection of users, which is often done to set the permissions for those users. In this post, I show you how to build fine-grained authorization to protect your APIs using Amazon Cognito, API Gateway, and AWS Identity and Access Management (IAM).

As a developer, you’re building a customer-facing application where your users log in to your web or mobile application, so you expose your APIs through API Gateway with upstream services behind it. The APIs could be deployed on Amazon Elastic Container Service (Amazon ECS), Amazon Elastic Kubernetes Service (Amazon EKS), or AWS Lambda, or they could sit behind Elastic Load Balancing, which forwards requests to your Amazon Elastic Compute Cloud (Amazon EC2) instances. Additionally, you can use on-premises services that are connected to your Amazon Web Services (AWS) environment over an AWS VPN or AWS Direct Connect. It’s important to have fine-grained controls for each API endpoint and HTTP method. For instance, a user might be allowed to make a GET request to an endpoint but not a POST request to the same endpoint. As a best practice, you should assign users to groups and use group membership to allow or deny access to your API services.

Solution overview

In this blog post, you learn how to use an Amazon Cognito user pool as a user directory and let users authenticate and acquire a JSON Web Token (JWT) to pass to API Gateway. The JWT identifies which group the user belongs to, and the IAM policy mapped to that group determines the access rights the group is granted.

Note: The solution works similarly if Amazon Cognito federates users with an external identity provider (IdP), such as Ping, Active Directory, or Okta, instead of being an IdP itself. To learn more, see Adding User Pool Sign-in Through a Third Party. Additionally, if you want to use groups from an external IdP to grant access, Role-based access control using Amazon Cognito and an external identity provider outlines how to do so.

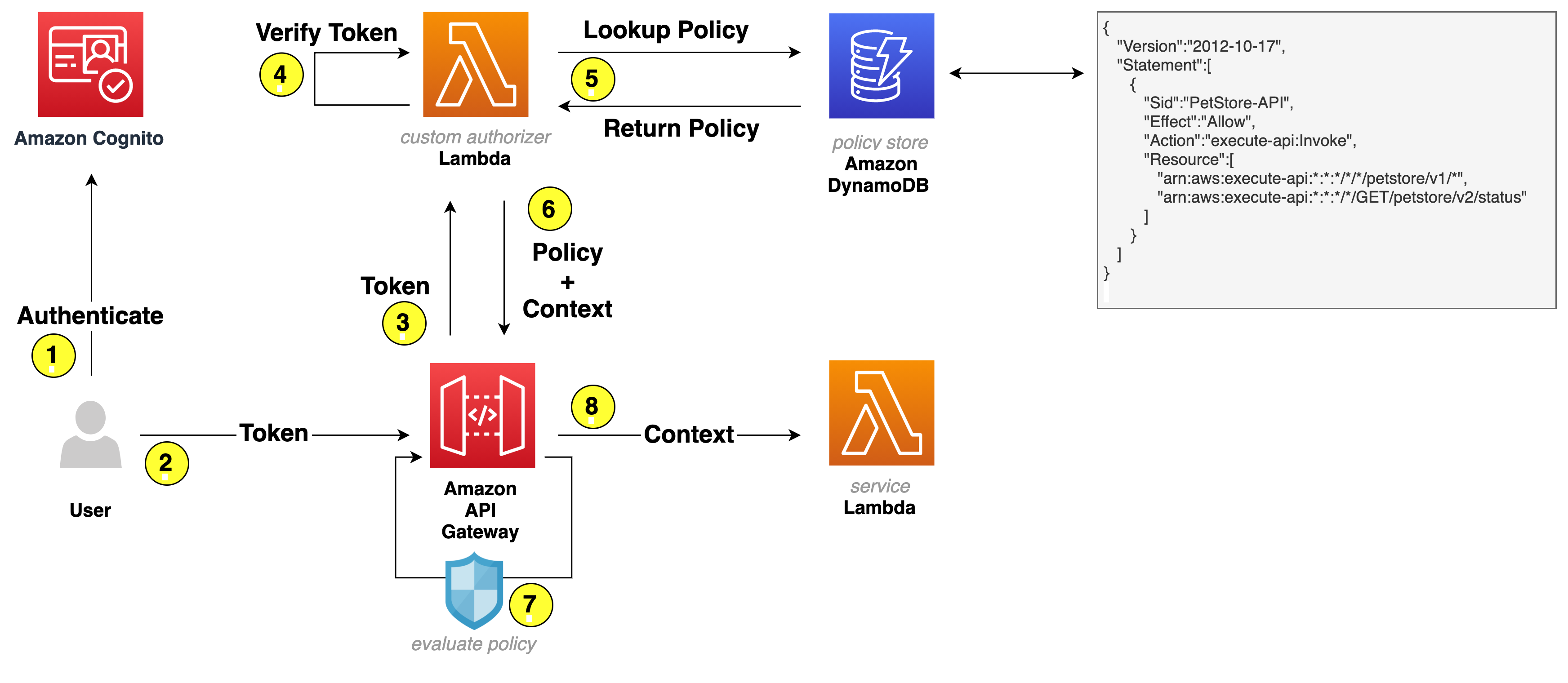

The following figure shows the basic architecture and information flow for user requests.

Figure 1: User request flow

Let’s go through the request flow to understand what happens at each step, as shown in Figure 1:

- A user logs in and acquires an Amazon Cognito JWT ID token, access token, and refresh token. To learn more about each token, see using tokens with user pools.

- A REST API request is made and a bearer token (in this solution, an access token) is passed in the headers.

- API Gateway forwards the request to a Lambda authorizer—also known as a custom authorizer.

- The Lambda authorizer verifies the Amazon Cognito JWT using the Amazon Cognito public key. On initial Lambda invocation, the public key is downloaded from Amazon Cognito and cached. Subsequent invocations will use the public key from the cache.

- The Lambda authorizer looks up the Amazon Cognito group that the user belongs to in the JWT and does a lookup in Amazon DynamoDB to get the policy that’s mapped to the group.

- Lambda returns the policy and—optionally—context to API Gateway. The context is a map containing key-value pairs that you can pass to the upstream service. It can be additional information about the user, the service, or anything that provides additional information to the upstream service.

- The API Gateway policy engine evaluates the policy.

Note: Lambda isn’t responsible for understanding and evaluating the policy. That responsibility falls on the native capabilities of API Gateway.

- The request is forwarded to the service.

Note: To further optimize the Lambda authorizer, you can enable or disable caching of the authorization policy, depending on your needs. With caching enabled, performance can improve because the authorization policy is returned from the cache whenever there is a cache key match. To learn more, see Configure a Lambda authorizer using the API Gateway console.

Let’s have a closer look at the following example policy that is stored as part of an item in DynamoDB.

Based on this example policy, the user is allowed to make calls to the petstore API. For version v1, the user can make requests to any verb and any path, which is expressed by an asterisk (*). For v2, the user is only allowed to make a GET request for path /status. To learn more about how the policies work, see Output from an Amazon API Gateway Lambda authorizer.
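As an illustration, a policy matching this description could look like the following sketch, written as a Python dictionary. The two resource ARNs follow the description above (the v2 ARN is the one quoted later in this post). The `Sid` value and the names `EXAMPLE_POLICY` and `policy_as_json` are hypothetical and are not taken from the sample repository.

```python
import json

# Hypothetical policy matching the description above. The Sid is an
# illustrative label, not a value taken from the sample repository.
EXAMPLE_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "PetStoreAPI",
            "Effect": "Allow",
            "Action": "execute-api:Invoke",
            "Resource": [
                # v1: any HTTP verb and any path under /petstore/v1/
                "arn:aws:execute-api:*:*:*/*/*/petstore/v1/*",
                # v2: GET on /petstore/v2/status only
                "arn:aws:execute-api:*:*:*/*/GET/petstore/v2/status",
            ],
        }
    ],
}


def policy_as_json(policy):
    """Serialize the policy, for example before storing it in an item."""
    return json.dumps(policy, indent=2)


if __name__ == "__main__":
    print(policy_as_json(EXAMPLE_POLICY))
```

Each resource ARN has the form `arn:aws:execute-api:region:account-id:api-id/stage/METHOD/path`, so the wildcards in the sketch leave the Region, account, API, and stage unconstrained.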

Getting started

For this solution, you need the following prerequisites:

- The AWS Command Line Interface (AWS CLI) installed and configured for use.

- Python 3.6 or later, to package Python code for Lambda.

Note: We recommend that you use a virtual environment or virtualenvwrapper to isolate the solution from the rest of your Python environment.

- An IAM role or user with enough permissions to create an Amazon Cognito user pool, IAM roles and policies, Lambda functions, an API Gateway API, and a DynamoDB table.

- The GitHub repository for the solution. You can download it, or you can use the following Git command to download it from your terminal.

Note: This sample code should be used to test out the solution and is not intended to be used in a production account.

Use the following command to package the Python code for deployment to Lambda.

To implement this reference architecture, you use the following services:

- Amazon Cognito to support a user pool for the user base.

- API Gateway to secure and publish the APIs.

- Lambda to serve the APIs.

Note: This solution was tested in the us-east-1, us-east-2, us-west-2, ap-southeast-1, and ap-southeast-2 Regions. Before selecting a Region, verify that the necessary services (Amazon Cognito, API Gateway, and Lambda) are available in that Region.

Let’s review each service, and how those will be used, before creating the resources for this solution.

Amazon Cognito user pool

A user pool is a user directory in Amazon Cognito. With a user pool, your users can log in to your web or mobile app through Amazon Cognito. You use the Amazon Cognito user directory directly, as this sample solution creates an Amazon Cognito user. However, your users can also log in through social IdPs, OpenID Connect (OIDC), and SAML IdPs.

Lambda as backing API service

Initially, you create a Lambda function that serves your APIs. API Gateway forwards all requests to the Lambda function to serve up the requests.

An API Gateway instance and integration with Lambda

Next, you create an API Gateway instance and integrate it with the Lambda function you created. This API Gateway instance serves as an entry point for the upstream service. The following bash command creates an Amazon Cognito user pool, a Lambda function, and an API Gateway instance. The command then configures proxy integration with Lambda and deploys an API Gateway stage.

Deploy the sample solution

From within the directory where you downloaded the sample code from GitHub, run the following command to generate a random Amazon Cognito user password and create the resources described in the previous section.

When the command is complete, it returns a message confirming successful stack creation.

Validate Amazon Cognito user creation

To validate that an Amazon Cognito user has been created successfully, run the following command to open the Amazon Cognito UI in your browser and then log in with your credentials.

Note: When you run this command, it returns the user name and password that you should use to log in.

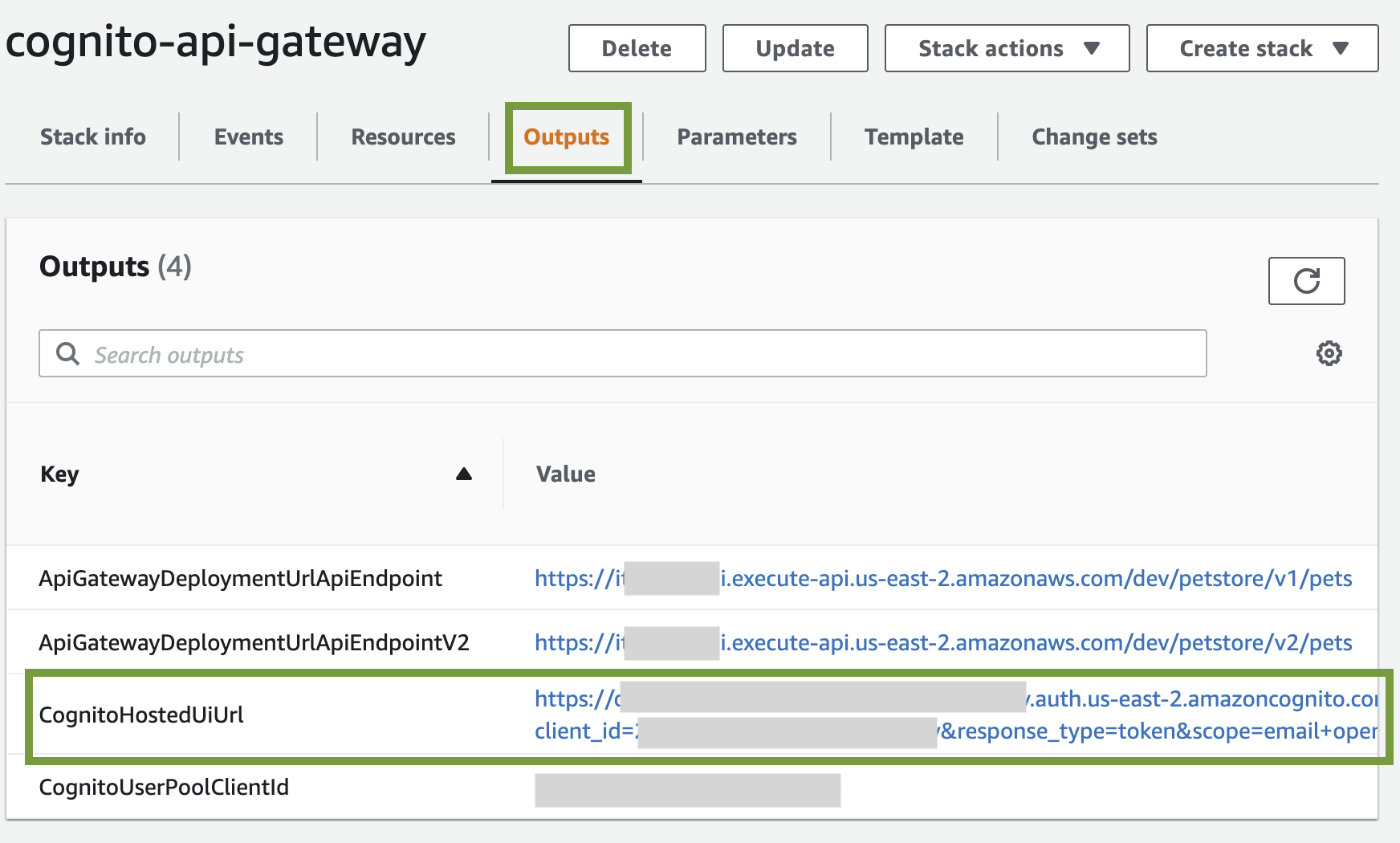

Alternatively, you can open the CloudFormation stack and get the Amazon Cognito hosted UI URL from the stack outputs. The URL is the value assigned to the CognitoHostedUiUrl variable.

Figure 2: CloudFormation Outputs – CognitoHostedUiUrl

Validate Amazon Cognito JWT upon login

Because we haven’t installed a web application that responds to the redirect request, Amazon Cognito redirects to localhost, which might look like an error. The key point is that after a successful login, a URL similar to the following appears in the address bar of your browser:
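If you want to inspect such a redirect URL programmatically, the following minimal sketch pulls the tokens out of it. It assumes the app client uses the implicit grant, in which case Amazon Cognito appends the tokens to the URL fragment (the part after `#`). The URL and token values in the usage comment are made-up placeholders, and `tokens_from_redirect` is a hypothetical helper name.

```python
from urllib.parse import parse_qs, urlparse


def tokens_from_redirect(url):
    """Return the parameters carried in the URL fragment as a dict.

    Assumes an implicit-grant redirect, where the fragment looks like
    id_token=...&access_token=...&expires_in=...&token_type=...
    """
    fragment = urlparse(url).fragment
    params = parse_qs(fragment)
    # parse_qs returns a list per key; keep the first value of each.
    return {name: values[0] for name, values in params.items()}


# Usage with placeholder values (not real tokens):
# tokens_from_redirect(
#     "http://localhost/#id_token=aaa.bbb.ccc&access_token=ddd.eee.fff"
#     "&expires_in=3600&token_type=Bearer"
# )
```

Because the fragment is never sent to a server, only code running on the client side can read these values.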

Test the API configuration

Before you protect the API with Amazon Cognito so that only authorized users can access it, let’s verify that the configuration is correct and the API is served by API Gateway. The following command makes a curl request to API Gateway to retrieve data from the API service.

The expected response is a list of pets. If you receive it, the setup is correct: API Gateway is serving the API.

Protect the API

To protect your API, the following is required:

- DynamoDB to store the policy that will be evaluated by the API Gateway to make an authorization decision.

- A Lambda function to verify the user’s access token and look up the policy in DynamoDB.

Let’s review all the services before creating the resources.

Lambda authorizer

A Lambda authorizer is an API Gateway feature that uses a Lambda function to control access to an API. You use a Lambda authorizer to implement a custom authorization scheme that uses a bearer token authentication strategy. When a client makes a request to one of the API operations, API Gateway calls the Lambda authorizer. The Lambda authorizer takes the identity of the caller as input and returns an IAM policy as the output. The output is the policy that is retrieved from DynamoDB and evaluated by API Gateway. If there is no policy mapped to the caller identity, Lambda generates a deny policy and the request is denied.
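To make the shape of that output concrete, here is a minimal sketch of a function that assembles an authorizer response with the `principalId`, `policyDocument`, and optional `context` fields that API Gateway expects. The function name and parameters are hypothetical, and the deny fallback is one plausible way to express "no policy mapped to the caller", not necessarily how the sample code does it.

```python
def build_authorizer_response(principal_id, method_arn, policy_document=None, context=None):
    """Assemble the response a Lambda authorizer returns to API Gateway.

    If no policy was found for the caller, fall back to an explicit deny
    for the method that was requested.
    """
    if policy_document is None:
        policy_document = {
            "Version": "2012-10-17",
            "Statement": [
                {
                    "Effect": "Deny",
                    "Action": "execute-api:Invoke",
                    "Resource": method_arn,
                }
            ],
        }
    response = {"principalId": principal_id, "policyDocument": policy_document}
    if context:
        # Context values should be flat strings, numbers, or booleans.
        response["context"] = context
    return response
```

For a token-based authorizer, `method_arn` would typically come from the `methodArn` field of the incoming event, and `principal_id` from a claim in the verified token.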

DynamoDB table

DynamoDB is a key-value and document database that delivers single-digit millisecond performance at any scale. This is ideal for this use case to ensure that the Lambda authorizer can quickly process the bearer token, look up the policy, and return it to API Gateway. To learn more, see Control access for invoking an API.

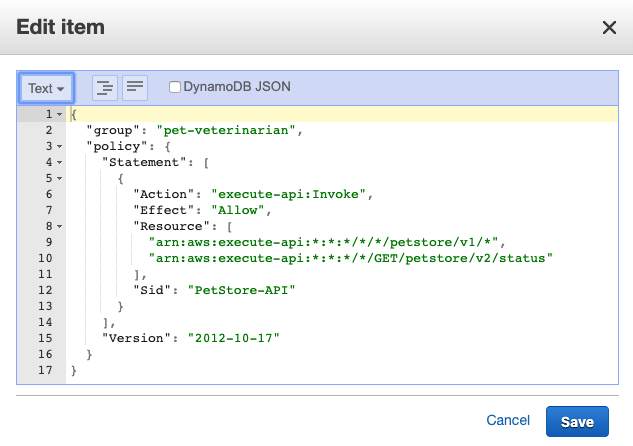

The final step is to create the DynamoDB table for the Lambda authorizer to look up the policy, which is mapped to an Amazon Cognito group.

Figure 3 illustrates an item in DynamoDB. Key attributes are:

- Group, which is used to look up the policy.

- Policy, which is returned to API Gateway to evaluate the policy.

Figure 3: DynamoDB item

Based on this policy, the user that is part of the Amazon Cognito group pet-veterinarian is allowed to make API requests to endpoints https://<domain>/<api-gateway-stage>/petstore/v1/* and https://<domain>/<api-gateway-stage>/petstore/v2/status for GET requests only.
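The lookup itself can be sketched as follows. This is an illustration under assumptions: the attribute names `group` and `policy` are lowercase guesses at the attributes shown in Figure 3, and `table` is any object that behaves like a boto3 DynamoDB `Table` resource, whose `get_item(Key=...)` returns a dictionary with an optional `Item` key. The `FakeTable` class is a hypothetical in-memory stand-in so the sketch runs without AWS access.

```python
import json


def get_policy_for_group(table, group_name):
    """Return the policy mapped to a Cognito group, or None if absent."""
    result = table.get_item(Key={"group": group_name})
    item = result.get("Item")
    if item is None:
        return None
    policy = item["policy"]
    # The policy may be stored as a JSON string or as a nested map.
    return json.loads(policy) if isinstance(policy, str) else policy


class FakeTable:
    """In-memory stand-in for a DynamoDB table, used only in this sketch."""

    def __init__(self, items):
        self._items = {item["group"]: item for item in items}

    def get_item(self, Key):
        item = self._items.get(Key["group"])
        return {"Item": item} if item is not None else {}


if __name__ == "__main__":
    table = FakeTable([{"group": "pet-veterinarian", "policy": '{"Statement": []}'}])
    print(get_policy_for_group(table, "pet-veterinarian"))
    print(get_policy_for_group(table, "unknown-group"))
```

Returning `None` for an unknown group lets the caller decide how to deny the request, which keeps the lookup separate from the authorization decision.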

Update and create resources

Run the following command to update existing resources and create a Lambda authorizer and DynamoDB table.

Test the custom authorizer setup

Begin your testing with the following request, which doesn’t include an access token.

The request is denied with the message Unauthorized. At this point, API Gateway expects a header named Authorization (case sensitive) in the request. If there’s no Authorization header, the request is denied before it reaches the Lambda authorizer. This is a way to filter out requests that don’t include required information.

Use the following command for the next test. In this test, you pass the required header, but the token is invalid: it wasn’t issued by Amazon Cognito and is only a simple JWT-format token stored in ./helper.sh. To learn more about how to decode and validate a JWT, see Decode and verify an Amazon Cognito JSON token.

This time the message is different. The Lambda authorizer received the request and identified the token as invalid and responded with the message User is not authorized to access this resource.

To make a successful request to the protected API, your code will need to perform the following steps:

- Use a user name and password to authenticate against your Amazon Cognito user pool.

- Acquire the tokens (ID token, access token, and refresh token).

- Make an HTTPS (TLS) request to API Gateway and pass the access token in the headers.
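These three steps can be sketched in Python as follows. Note that this swaps in a different technique from the hosted UI login used elsewhere in this post: it calls the Amazon Cognito `InitiateAuth` API through an object that behaves like `boto3.client("cognito-idp")`, and it assumes the app client has the `USER_PASSWORD_AUTH` flow enabled. The function names are hypothetical, and sending the token with a `Bearer ` prefix is an assumption about what the authorizer expects.

```python
import urllib.request


def acquire_tokens(cognito_client, client_id, username, password):
    """Authenticate against a user pool and return the issued tokens.

    `cognito_client` is expected to behave like boto3.client("cognito-idp").
    """
    response = cognito_client.initiate_auth(
        ClientId=client_id,
        AuthFlow="USER_PASSWORD_AUTH",
        AuthParameters={"USERNAME": username, "PASSWORD": password},
    )
    result = response.get("AuthenticationResult")
    if result is None:
        # Cognito answered with a challenge (for example, a forced
        # password change) instead of tokens.
        raise RuntimeError("Authentication did not return tokens")
    return {
        "id_token": result["IdToken"],
        "access_token": result["AccessToken"],
        "refresh_token": result["RefreshToken"],
    }


def build_api_request(url, access_token):
    """Prepare a GET request that carries the access token in the headers."""
    return urllib.request.Request(
        url, headers={"Authorization": f"Bearer {access_token}"}
    )
```

To send the prepared request, you would pass it to `urllib.request.urlopen`. Keeping request construction separate from sending makes the header handling easy to check without network access.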

Before the request is forwarded to the API service, API Gateway receives the request and passes it to the Lambda authorizer. The authorizer performs the following steps. If any of the steps fail, the request is denied.

- Retrieve the public keys from Amazon Cognito.

- Cache the public keys so the Lambda authorizer doesn’t have to make additional calls to Amazon Cognito as long as the Lambda execution environment isn’t shut down.

- Use public keys to verify the access token.

- Look up the policy in DynamoDB.

- Return the policy to API Gateway.
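The first two steps, retrieving and caching the public keys, can be sketched as follows. Amazon Cognito publishes a user pool’s keys as a JSON Web Key Set at a well-known URL. The module-level dictionary survives between invocations while the Lambda execution environment stays warm. The function names and the injectable `fetch` parameter are hypothetical conveniences, and signature verification itself is left out because it requires a JWT library.

```python
import json
import urllib.request

# Module-level cache: it persists across invocations for as long as the
# Lambda execution environment is kept alive.
_JWKS_CACHE = {}


def jwks_url(region, user_pool_id):
    """Build the well-known JWKS URL for a Cognito user pool."""
    return (
        f"https://cognito-idp.{region}.amazonaws.com/"
        f"{user_pool_id}/.well-known/jwks.json"
    )


def _download(url):
    """Download and parse a JSON document over HTTPS."""
    with urllib.request.urlopen(url) as response:
        return json.loads(response.read())


def get_public_keys(region, user_pool_id, fetch=_download):
    """Return the pool's public keys indexed by key ID, downloading once."""
    url = jwks_url(region, user_pool_id)
    if url not in _JWKS_CACHE:
        jwks = fetch(url)
        _JWKS_CACHE[url] = {key["kid"]: key for key in jwks["keys"]}
    return _JWKS_CACHE[url]
```

Indexing the keys by `kid` lets the authorizer pick the right key from the `kid` value in the token header before verifying the signature.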

The access token has claims such as Amazon Cognito assigned groups, user name, token use, and others, as shown in the following example (some fields removed).
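To see these claims for yourself, you can decode the middle segment of a token, as in the following sketch. It only decodes the payload and does not verify the signature, so it is suitable for inspection but not for authorization decisions. The claim values in `SAMPLE_CLAIMS` are made-up placeholders, and the helper names are hypothetical.

```python
import base64
import json


def decode_claims_unverified(token):
    """Decode a JWT payload WITHOUT verifying the signature."""
    payload_b64 = token.split(".")[1]
    # JWTs use unpadded base64url; restore the padding before decoding.
    padding = "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64 + padding))


def _b64url(data):
    """Encode a dict as unpadded base64url JSON (used to build the sample)."""
    raw = json.dumps(data).encode("utf-8")
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


# Placeholder claims for illustration only; not a real token.
SAMPLE_CLAIMS = {
    "cognito:groups": ["pet-veterinarian"],
    "token_use": "access",
    "username": "example-user",
}
SAMPLE_TOKEN = ".".join(
    [_b64url({"alg": "RS256", "kid": "example-key"}), _b64url(SAMPLE_CLAIMS), "signature"]
)

if __name__ == "__main__":
    claims = decode_claims_unverified(SAMPLE_TOKEN)
    print(claims["cognito:groups"], claims["token_use"])
```

The `cognito:groups` claim is the one the authorizer would use for the policy lookup, and `token_use` distinguishes an access token from an ID token.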

Finally, let’s programmatically log in to Amazon Cognito UI, acquire a valid access token, and make a request to API Gateway. Run the following command to call the protected API.

This time, you receive a response with data from the API service. Let’s examine the steps that the example code performed:

- Lambda authorizer validates the access token.

- Lambda authorizer looks up the policy in DynamoDB based on the group name that was retrieved from the access token.

- Lambda authorizer passes the IAM policy back to API Gateway.

- API Gateway evaluates the IAM policy and the final effect is an allow.

- API Gateway forwards the request to Lambda.

- Lambda returns the response.

Let’s continue to test our policy from Figure 3. In the policy document, arn:aws:execute-api:*:*:*/*/GET/petstore/v2/status is the only endpoint allowed for version v2, which means requests to endpoint /GET/petstore/v2/pets should be denied. Run the following command to test this.
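To build intuition for why that request is denied, the following sketch approximates the wildcard matching with Python’s `fnmatchcase`. This is only an approximation for illustration: the real decision is made by the API Gateway policy engine, not by code like this. The account ID, API ID, and stage name in the usage comments are made-up placeholders, and `is_allowed` is a hypothetical helper name.

```python
from fnmatch import fnmatchcase

# Resources allowed by the policy described in this post.
ALLOWED_RESOURCES = [
    "arn:aws:execute-api:*:*:*/*/*/petstore/v1/*",
    "arn:aws:execute-api:*:*:*/*/GET/petstore/v2/status",
]


def is_allowed(method_arn, resources=ALLOWED_RESOURCES):
    """Approximate whether any allowed resource pattern matches the ARN.

    With no matching allow statement, the request is implicitly denied.
    """
    return any(fnmatchcase(method_arn, pattern) for pattern in resources)


# Usage with placeholder identifiers:
# base = "arn:aws:execute-api:us-east-1:123456789012:abcdef1234/dev"
# is_allowed(base + "/GET/petstore/v2/status")  # matches the v2 pattern
# is_allowed(base + "/GET/petstore/v2/pets")    # matches no pattern
```

Because neither pattern covers `/petstore/v2/pets`, no allow statement applies to that request, which is why it ends in a deny.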

Note: Now that you understand fine-grained access control using an Amazon Cognito user pool, API Gateway, and a Lambda function, and you have finished testing it, you can run the following command to clean up all the resources associated with this solution:

Advanced IAM policies to further control your API

With IAM, you can create advanced policies to further refine access to your APIs. You can learn more about condition keys that can be used in API Gateway, their use in an IAM policy with conditions, and how policy evaluation logic determines whether to allow or deny a request.

Summary

In this post, you learned how IAM and Amazon Cognito can be used to provide fine-grained access control for your API behind API Gateway. You can use this approach to transparently apply fine-grained control to your API, without having to modify the code in your API, and create advanced policies by using IAM condition keys.

