Post Syndicated from Harun Abdi original https://aws.amazon.com/blogs/security/detecting-and-remediating-inactive-user-accounts-with-amazon-cognito/

For businesses, particularly those in highly regulated industries, managing user accounts isn’t just a matter of security but also a compliance necessity. In sectors such as finance, healthcare, and government, where regulations often mandate strict control over user access, disabling stale user accounts is a key compliance activity. In this post, we show you a solution that uses serverless technologies to track and disable inactive user accounts. While this process is particularly relevant for those in regulated industries, it can also be beneficial for other organizations looking to maintain a clean and secure user base.

The solution focuses on identifying inactive user accounts in Amazon Cognito and automatically disabling them. Disabling a user account in Cognito effectively restricts the user’s access to applications and services linked with the Amazon Cognito user pool. After their account is disabled, the user cannot sign in, access tokens are revoked for their account and they are unable to perform API operations that require user authentication. However, the user’s data and profile within the Cognito user pool remain intact. If necessary, the account can be re-enabled, allowing the user to regain access and functionality.

While the solution focuses on the example of a single Amazon Cognito user pool in a single account, you also learn considerations for multi-user pool and multi-account strategies.

Solution overview

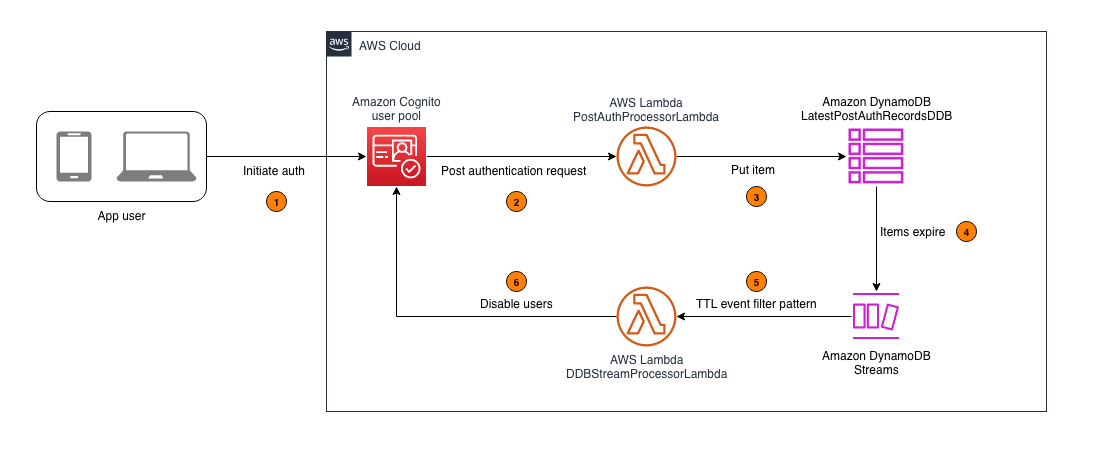

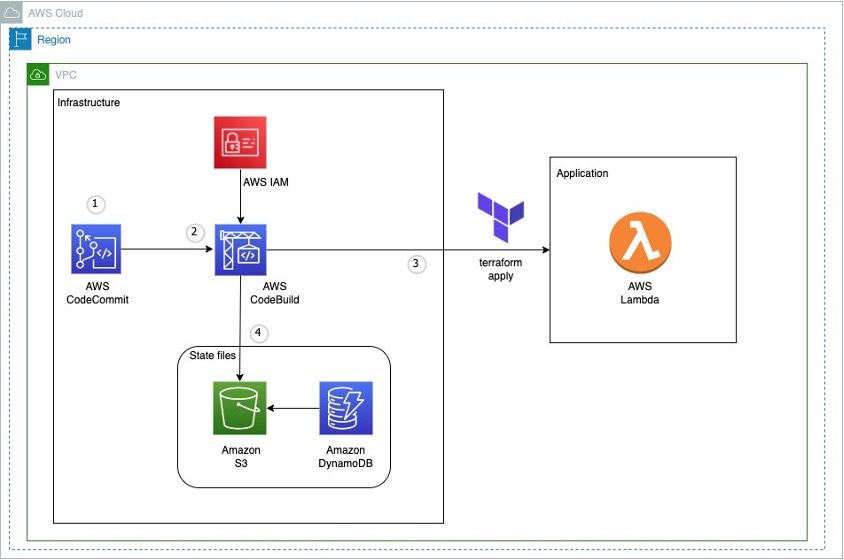

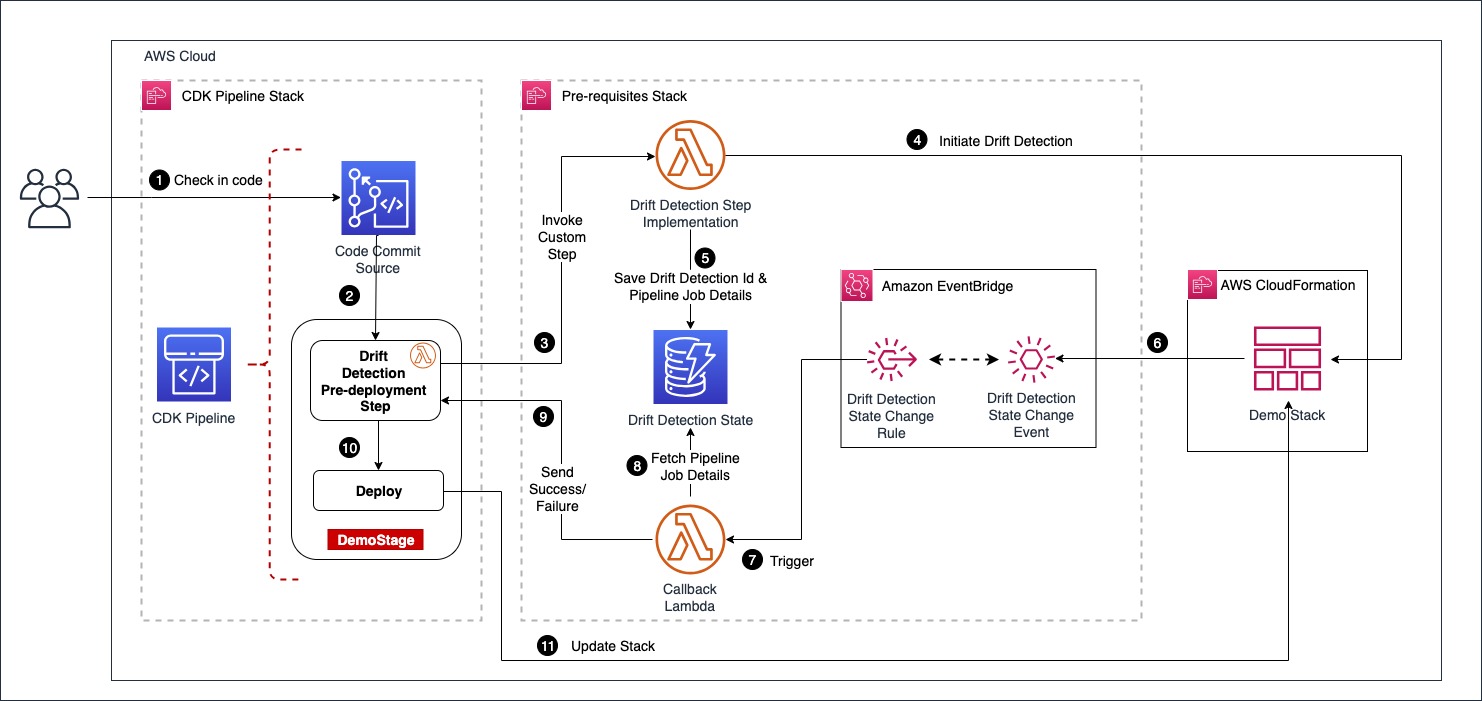

In this section, you learn how to configure an AWS Lambda function that captures the latest sign-in records of users authenticated by Amazon Cognito and write this data to an Amazon DynamoDB table. A time-to-live (TTL) indicator is set on each of these records based on the user inactivity threshold parameter defined when deploying the solution. This TTL represents the maximum period a user can go without signing in before their account is disabled. As these items reach their TTL expiry in DynamoDB, a second Lambda function is invoked to process the expired items and disable the corresponding user accounts in Cognito. For example, if the user inactivity threshold is configured to be 7 days, the accounts of users who don’t sign in within 7 days of their last sign-in will be disabled. Figure 1 shows an overview of the process.

Note: This solution functions as a background process and doesn’t disable user accounts in real time. This is because DynamoDB Time to Live (TTL) is designed for efficiency and to remain within the constraints of the Amazon Cognito quotas. Set your users’ and administrators’ expectations accordingly, acknowledging that there might be a delay in the reflection of changes and updates.

Figure 1: Architecture diagram for tracking user activity and disabling inactive Amazon Cognito users

As shown in Figure 1, this process involves the following steps:

- An application user signs in by authenticating to Amazon Cognito.

- Upon successful user authentication, Cognito initiates a post authentication Lambda trigger invoking the PostAuthProcessorLambda function.

- The PostAuthProcessorLambda function puts an item in the LatestPostAuthRecordsDDB DynamoDB table with the following attributes:

- sub: A unique identifier for the authenticated user within the Amazon Cognito user pool.

- timestamp: The time of the user’s latest sign-in, formatted in UTC ISO standard.

- username: The authenticated user’s Cognito username.

- userpool_id: The identifier of the user pool to which the user authenticated.

- ttl: The TTL value, in seconds, after which a user’s inactivity will initiate account deactivation.

- Items in the LatestPostAuthRecordsDDB DynamoDB table are automatically purged upon reaching their TTL expiry, launching events in DynamoDB Streams.

- DynamoDB Streams events are filtered to allow invocation of the DDBStreamProcessorLambda function only for TTL deleted items.

- The DDBStreamProcessorLambda function runs to disable the corresponding user accounts in Cognito.

Implementation details

In this section, you’re guided through deploying the solution, demonstrating how to integrate it with your existing Amazon Cognito user pool and exploring the solution in more detail.

Note: This solution begins tracking user activity from the moment of its deployment. It can’t retroactively track or manage user activities that occurred prior to its implementation. To make sure the solution disables currently inactive users in the first TTL period after deploying the solution, you should do a one-time preload of those users into the DynamoDB table. If this isn’t done, the currently inactive users won’t be detected because users are detected as they sign in. For the same reason, users who create accounts but never sign in won’t be detected either. To detect user accounts that sign up but never sign in, implement a post confirmation Lambda trigger to invoke a Lambda function that processes user sign-up records and writes them to the DynamoDB table.

Prerequisites

Before deploying this solution, you must have the following prerequisites in place:

- An existing Amazon Cognito user pool. This user pool is the foundation upon which the solution operates. If you don’t have a Cognito user pool set up, you must create one before proceeding. See Creating a user pool.

- The ability to launch a CloudFormation template. The second prerequisite is the capability to launch an AWS CloudFormation template in your AWS environment. The template provisions the necessary AWS services, including Lambda functions, a DynamoDB table, and AWS Identity and Access Management (IAM) roles that are integral to the solution. The template simplifies the deployment process, allowing you to set up the entire solution with minimal manual configuration. You must have the necessary permissions in your AWS account to launch CloudFormation stacks and provision these services.

To deploy the solution

- Choose the following Launch Stack button to deploy the solution’s CloudFormation template:

The solution deploys in the AWS US East (N. Virginia) Region (us-east-1) by default. To deploy the solution in a different Region, use the Region selector in the console navigation bar and make sure that the services required for this walkthrough are supported in your newly selected Region. For service availability by Region, see AWS Services by Region.

- On the Quick Create Stack screen, do the following:

- Specify the stack details.

- Stack name: The stack name is an identifier that helps you find a particular stack from a list of stacks. A stack name can contain only alphanumeric characters (case sensitive) and hyphens. It must start with an alphabetic character and can’t be longer than 128 characters.

- CognitoUserPoolARNs: A comma-separated list of Amazon Cognito user pool Amazon Resource Names (ARNs) to monitor for inactive users.

- UserInactiveThresholdDays: Time (in days) that the user account is allowed to be inactive before it’s disabled.

- Scroll to the bottom, and in the Capabilities section, select I acknowledge that AWS CloudFormation might create IAM resources with custom names.

- Choose Create Stack.

- Specify the stack details.

Integrate with your existing user pool

With the CloudFormation template deployed, you can set up Lambda triggers in your existing user pool. This is a key step for tracking user activity.

Note: This walkthrough is using the new AWS Management Console experience. Alternatively, These steps could also be done using CloudFormation.

To integrate with your existing user pool

- Navigate to the Amazon Cognito console and select your user pool.

- Navigate to User pool properties.

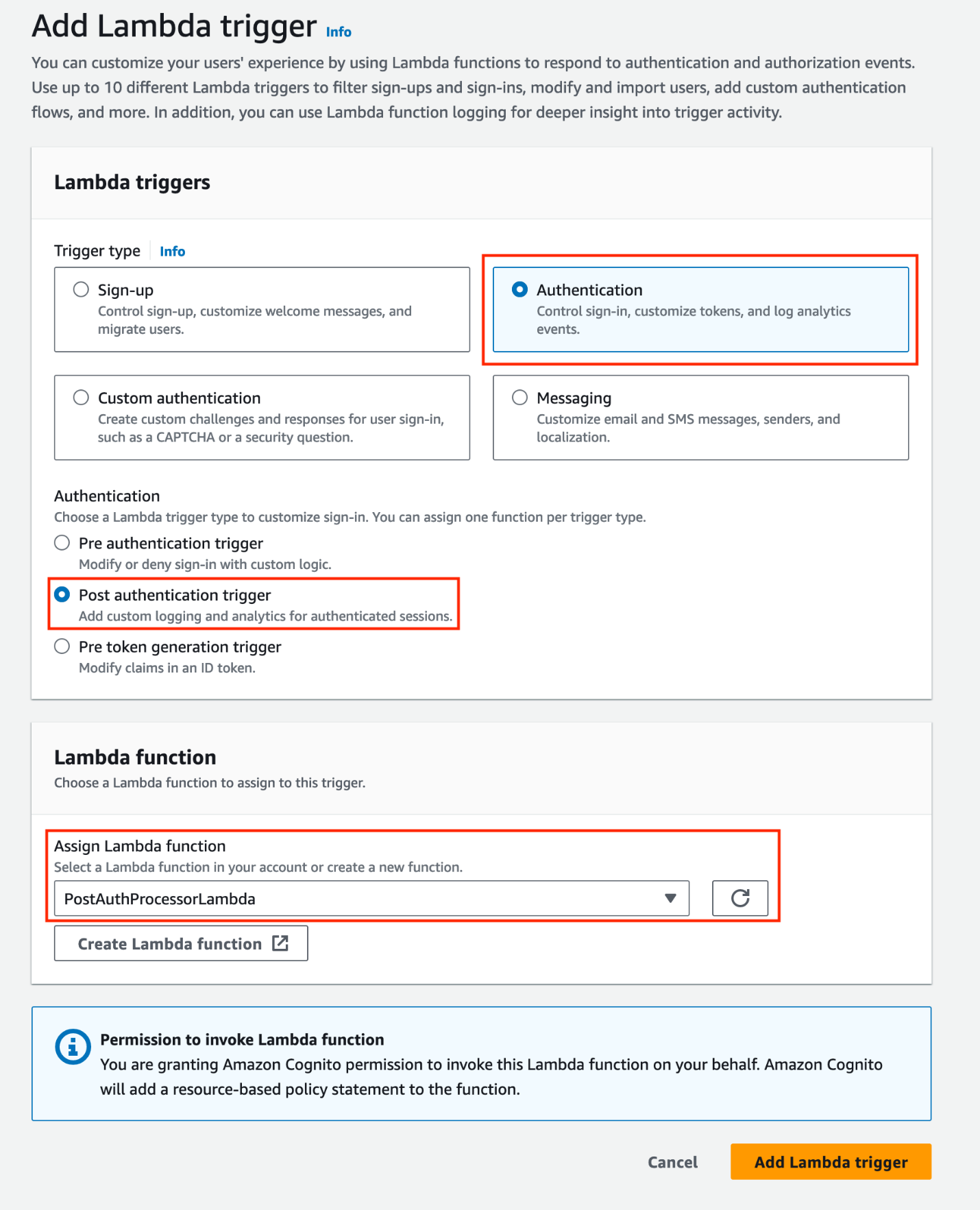

- Under Lambda triggers, choose Add Lambda trigger. Select the Authentication radio button, then add a Post authentication trigger and assign the PostAuthProcessorLambda function.

Note: Amazon Cognito allows you to set up one Lambda trigger per event. If you already have a configured post authentication Lambda trigger, you can refactor the existing Lambda function, adding new features directly to minimize the cold starts associated with invoking additional functions (for more information, see Anti-patterns in Lambda-based applications). Keep in mind that when Cognito calls your Lambda function, the function must respond within 5 seconds. If it doesn’t and if the call can be retried, Cognito retries the call. After three unsuccessful attempts, the function times out. You can’t change this 5-second timeout value.

Figure 2: Add a post-authentication Lambda trigger and assign a Lambda function

When you add a Lambda trigger in the Amazon Cognito console, Cognito adds a resource-based policy to your function that permits your user pool to invoke the function. When you create a Lambda trigger outside of the Cognito console, including a cross-account function, you must add permissions to the resource-based policy of the Lambda function. Your added permissions must allow Cognito to invoke the function on behalf of your user pool. You can add permissions from the Lambda console or use the Lambda AddPermission API operation. To configure this in CloudFormation, you can use the AWS::Lambda::Permission resource.

Explore the solution

The solution should now be operational. It’s configured to begin monitoring user sign-in activities and automatically disable inactive user accounts according to the user inactivity threshold. Use the following procedures to test the solution:

Note: When testing the solution, you can set the UserInactiveThresholdDays CloudFormation parameter to 0. This minimizes the time it takes for user accounts to be disabled.

Step 1: User authentication

- Create a user account (if one doesn’t exist) in the Amazon Cognito user pool integrated with the solution.

- Authenticate to the Cognito user pool integrated with the solution.

Figure 3: Example user signing in to the Amazon Cognito hosted UI

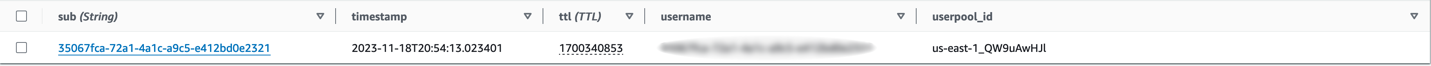

Step 2: Verify the sign-in record in DynamoDB

Confirm the sign-in record was successfully put in the LatestPostAuthRecordsDDB DynamoDB table.

- Navigate to the DynamoDB console.

- Select the LatestPostAuthRecordsDDB table.

- Select Explore Table Items.

- Locate the sign-in record associated with your user.

Figure 4: Locating the sign-in record associated with the signed-in user

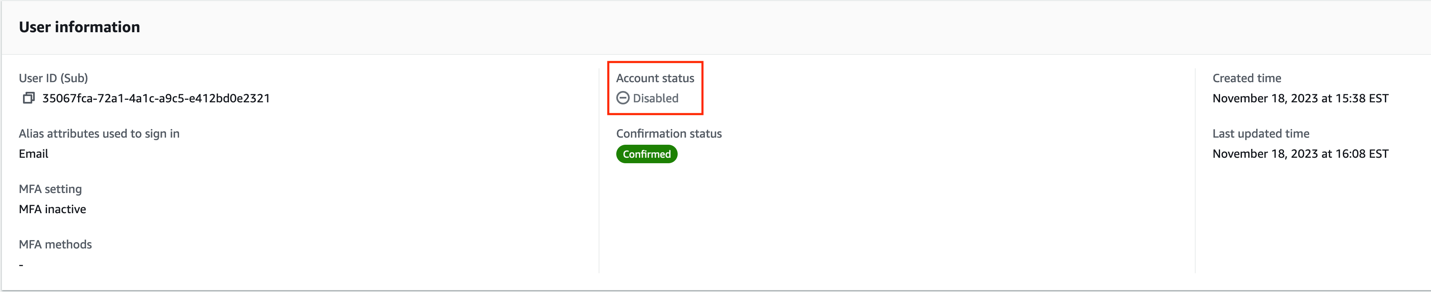

Step 3: Confirm user deactivation in Amazon Cognito

After the TTL expires, validate that the user account is disabled in Amazon Cognito.

- Navigate to the Amazon Cognito console.

- Select the relevant Cognito user pool.

- Under Users, select the specific user.

- Verify the Account status in the User information section.

Figure 5: Screenshot of the user that signed in with their account status set to disabled

Note: TTL typically deletes expired items within a few days. Depending on the size and activity level of a table, the actual delete operation of an expired item can vary. TTL deletes items on a best effort basis, and deletion might take longer in some cases.

The user’s account is now disabled. A disabled user account can’t be used to sign in, but still appears in the responses to GetUser and ListUsers API requests.

Design considerations

In this section, you dive deeper into the key components of this solution.

DynamoDB schema configuration:

The DynamoDB schema has the Amazon Cognito sub attribute as the partition key. The Cognito sub is a globally unique user identifier within Cognito user pools that cannot be changed. This configuration ensures each user has a single entry in the table, even if the solution is configured to track multiple user pools. See Other considerations for more about tracking multiple user pools.

Using DynamoDB Streams and Lambda to disable TTL deleted users

This solution uses DynamoDB TTL and DynamoDB Streams alongside Lambda to process user sign-in records. The TTL feature automatically deletes items past their expiration time without write throughput consumption. The deleted items are captured by DynamoDB Streams and processed using Lambda. You also apply event filtering within the Lambda event source mapping, ensuring that the DDBStreamProcessorLambda function is invoked exclusively for TTL-deleted items (see the following code example for the JSON filter pattern). This approach reduces invocations of the Lambda functions, simplifies code, and reduces overall cost.

Handling API quotas:

The DDBStreamProcessorLambda function is configured to comply with the AdminDisableUser API’s quota limits. It processes messages in batches of 25, with a parallelization factor of 1. This makes sure that the solution remains within the nonadjustable 25 requests per second (RPS) limit for AdminDisableUser, avoiding potential API throttling. For more details on these limits, see Quotas in Amazon Cognito.

Dead-letter queues:

Throughout the architecture, dead-letter queues (DLQs) are used to handle message processing failures gracefully. They make sure that unprocessed records aren’t lost but instead are queued for further inspection and retry.

Other considerations

The following considerations are important for scaling the solution in complex environments and maintaining its integrity. The ability to scale and manage the increased complexity is crucial for successful adoption of the solution.

Multi-user pool and multi-account deployment

While this solution discussed a single Amazon Cognito user pool in a single AWS account, this solution can also function in environments with multiple user pools. This involves deploying the solution and integrating with each user pool as described in Integrating with your existing user pool. Because of the AdminDisableUser API’s quota limit for the maximum volume of requests in one AWS Region in one AWS account, consider deploying the solution separately in each Region in each AWS account to stay within the API limits.

Efficient processing with Amazon SQS:

Consider using Amazon Simple Queue Service (Amazon SQS) to add a queue between the PostAuthProcessorLambda function and the LatestPostAuthRecordsDDB DynamoDB table to optimize processing. This approach decouples user sign-in actions from DynamoDB writes, and allows for batching writes to DynamoDB, reducing the number of write requests.

Clean up

Avoid unwanted charges by cleaning up the resources you’ve created. To decommission the solution, follow these steps:

- Remove the Lambda trigger from the Amazon Cognito user pool:

- Navigate to the Amazon Cognito console.

- Select the user pool you have been working with.

- Go to the Triggers section within the user pool settings.

- Manually remove the association of the Lambda function with the user pool events.

- Remove the CloudFormation stack:

- Open the CloudFormation console.

- Locate and select the CloudFormation stack that was used to deploy the solution.

- Delete the stack.

- CloudFormation will automatically remove the resources created by this stack, including Lambda functions, Amazon SQS queues, and DynamoDB tables.

Conclusion

In this post, we walked you through a solution to identify and disable stale user accounts based on periods of inactivity. While the example focuses on a single Amazon Cognito user pool, the approach can be adapted for more complex environments with multiple user pools across multiple accounts. For examples of Amazon Cognito architectures, see the AWS Architecture Blog.

Proper planning is essential for seamless integration with your existing infrastructure. Carefully consider factors such as your security environment, compliance needs, and user pool configurations. You can modify this solution to suit your specific use case.

Maintaining clean and active user pools is an ongoing journey. Continue monitoring your systems, optimizing configurations, and keeping up-to-date on new features. Combined with well-architected preventive measures, automated user management systems provide strong defenses for your applications and data.

For further reading, see the AWS Well-Architected Security Pillar and more posts like this one on the AWS Security Blog.

If you have feedback about this post, submit comments in the Comments section. If you have questions about this post, start a new thread on the Amazon Cognito re:Post forum or contact AWS Support.

Deepak Singh is a Senior Solutions Architect at Amazon Web Services with 20+ years of experience in Data & AIA. He enjoys working with AWS partners and customers on building scalable analytical solutions for their business outcomes. When not at work, he loves spending time with family or exploring new technologies in analytics and AI space.

Deepak Singh is a Senior Solutions Architect at Amazon Web Services with 20+ years of experience in Data & AIA. He enjoys working with AWS partners and customers on building scalable analytical solutions for their business outcomes. When not at work, he loves spending time with family or exploring new technologies in analytics and AI space. Piyush Patra is a Partner Solutions Architect at Amazon Web Services where he supports partners with their Analytics journeys and is the global lead for strategic Data Estate Modernization and Migration partner programs.

Piyush Patra is a Partner Solutions Architect at Amazon Web Services where he supports partners with their Analytics journeys and is the global lead for strategic Data Estate Modernization and Migration partner programs. Govind Mohan is an Associate Director with Cognizant with over 18 year of experience in data and analytics space, he has helped design and implement multiple large-scale data migration, application lift & shift and legacy modernization projects and works closely with customers in accelerating the cloud modernization journey leveraging Cognizant Data and Intelligence Toolkit (CDIT) platform.

Govind Mohan is an Associate Director with Cognizant with over 18 year of experience in data and analytics space, he has helped design and implement multiple large-scale data migration, application lift & shift and legacy modernization projects and works closely with customers in accelerating the cloud modernization journey leveraging Cognizant Data and Intelligence Toolkit (CDIT) platform. Kausik Dhar is a technology leader having more than 23 years of IT experience – primarily focused on Data & Analytics, Data Modernization, Application Development, Delivery Management, and Solution Architecture. He has played a pivotal role in guiding clients through the designing and executing large-scale data and process migrations, in addition to spearheading successful cloud implementations. Kausik possesses expertise in formulating migration strategies for complex programs and adeptly constructing data lake/Lakehouse architecture employing a wide array of tools and technologies.

Kausik Dhar is a technology leader having more than 23 years of IT experience – primarily focused on Data & Analytics, Data Modernization, Application Development, Delivery Management, and Solution Architecture. He has played a pivotal role in guiding clients through the designing and executing large-scale data and process migrations, in addition to spearheading successful cloud implementations. Kausik possesses expertise in formulating migration strategies for complex programs and adeptly constructing data lake/Lakehouse architecture employing a wide array of tools and technologies.

Matt Nispel is an Enterprise Solutions Architect at AWS. He has more than 10 years of experience building cloud architectures for large enterprise companies. At AWS, Matt helps customers rearchitect their applications to take full advantage of the cloud. Matt lives in Minneapolis, Minnesota, and in his free time enjoys spending time with friends and family.

Matt Nispel is an Enterprise Solutions Architect at AWS. He has more than 10 years of experience building cloud architectures for large enterprise companies. At AWS, Matt helps customers rearchitect their applications to take full advantage of the cloud. Matt lives in Minneapolis, Minnesota, and in his free time enjoys spending time with friends and family. Dan Dressel is a Senior Analytics Specialist Solutions Architect at AWS. He is passionate about databases, analytics, machine learning, and architecting solutions. In his spare time, he enjoys spending time with family, nature walking, and playing foosball.

Dan Dressel is a Senior Analytics Specialist Solutions Architect at AWS. He is passionate about databases, analytics, machine learning, and architecting solutions. In his spare time, he enjoys spending time with family, nature walking, and playing foosball.