Post Syndicated from Achraf Moussadek-Kabdani original https://aws.amazon.com/blogs/security/iam-access-analyzer-simplifies-inspection-of-unused-access-in-your-organization/

AWS Identity and Access Management (IAM) Access Analyzer offers tools that help you set, verify, and refine permissions. You can use IAM Access Analyzer external access findings to continuously monitor your AWS Organizations organization and Amazon Web Services (AWS) accounts for public and cross-account access to your resources, and verify that only intended external access is granted. Now, you can use IAM Access Analyzer unused access findings to identify unused access granted to IAM roles and users in your organization.

If you lead a security team, your goal is to manage security for your organization at scale and make sure that your team follows best practices, such as the principle of least privilege. When your developers build on AWS, they create IAM roles for applications and team members to interact with AWS services and resources. They might start with broad permissions while they explore AWS services for their use cases. To identify unused access, you can review the IAM last accessed information for a given IAM role or user and refine permissions gradually. If your company has a multi-account strategy, your roles and policies are created in multiple accounts. You then need visibility across your organization to make sure that teams are working with just the required access.

Now, IAM Access Analyzer simplifies inspection of unused access by reporting unused access findings across your IAM roles and users. IAM Access Analyzer continuously analyzes the accounts in your organization to identify unused access and creates a centralized dashboard with findings. From a delegated administrator account for IAM Access Analyzer, you can use the dashboard to review unused access findings across your organization and prioritize the accounts to inspect based on the volume and type of findings. The findings highlight unused roles, unused access keys for IAM users, and unused passwords for IAM users. For active IAM users and roles, the findings provide visibility into unused services and actions. With the IAM Access Analyzer integration with Amazon EventBridge and AWS Security Hub, you can automate and scale rightsizing of permissions by using event-driven workflows.

In this post, we’ll show you how to set up and use IAM Access Analyzer to identify and review unused access in your organization.

Generate unused access findings

To generate unused access findings, you need to create an analyzer. An analyzer is an IAM Access Analyzer resource that continuously monitors your accounts or organization for a given finding type. You can create an analyzer for the following findings:

An analyzer for unused access findings is a new analyzer that continuously monitors roles and users, looking for permissions that are granted but not actually used. This analyzer is different from an analyzer for external access findings; you need to create a new analyzer for unused access findings even if you already have an analyzer for external access findings.

You can centrally view unused access findings across your accounts by creating an analyzer at the organization level. If you operate a standalone account, you can get unused access findings by creating an analyzer at the account level. This post focuses on the organization-level analyzer setup and management by a central team.

Pricing

IAM Access Analyzer charges for unused access findings based on the number of IAM roles and users analyzed per analyzer per month. You can still use IAM Access Analyzer external access findings at no additional cost. For more details on pricing, see IAM Access Analyzer pricing.

Create an analyzer for unused access findings

To enable unused access findings for your organization, you need to create your analyzer by using the IAM Access Analyzer console or APIs in your management account or a delegated administrator account. A delegated administrator is a member account of the organization that you can delegate with administrator access for IAM Access Analyzer. A best practice is to use your management account only for tasks that require the management account and use a delegated administrator for other tasks. For steps on how to add a delegated administrator for IAM Access Analyzer, see Delegated administrator for IAM Access Analyzer.

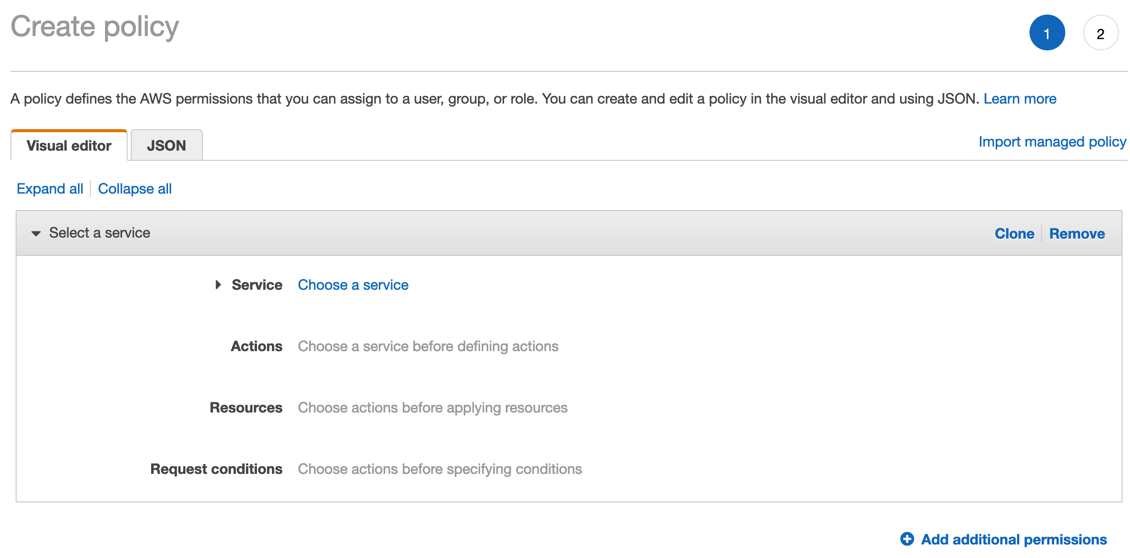

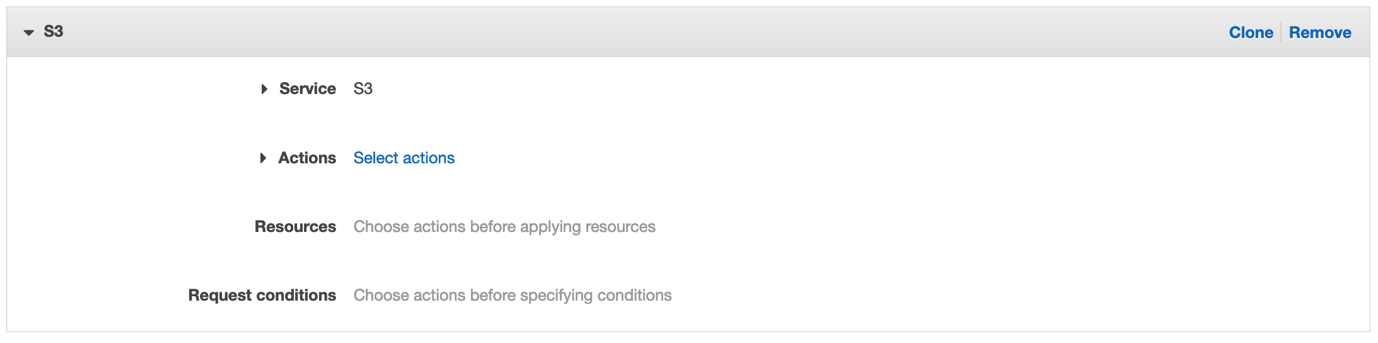

To create an analyzer for unused access findings (console)

- From the delegated administrator account, open the IAM Access Analyzer console, and in the left navigation pane, select Analyzer settings.

- Choose Create analyzer.

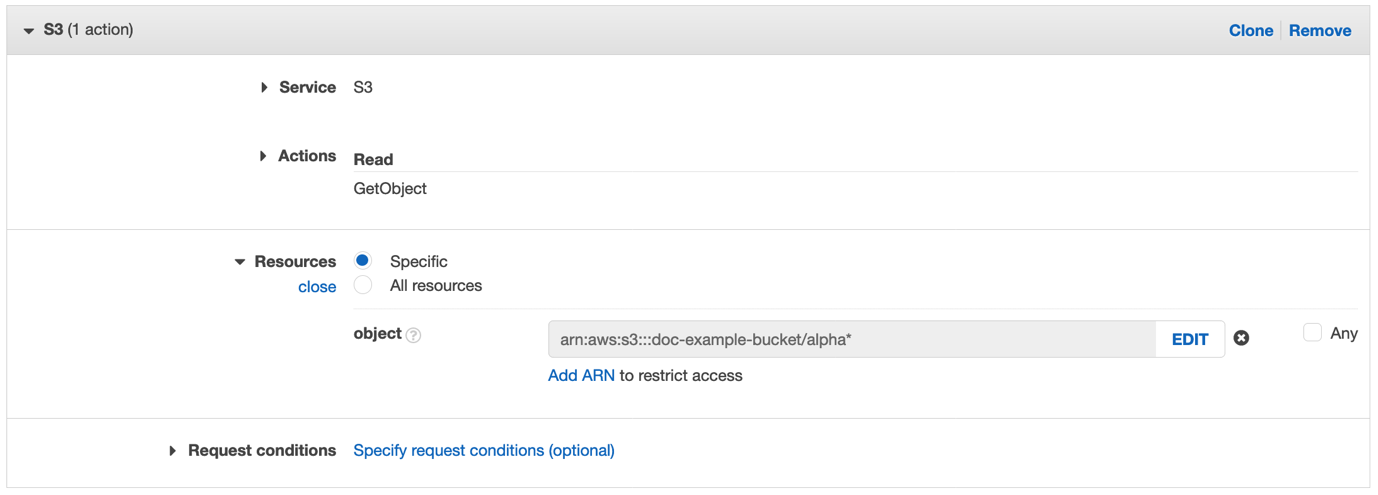

- On the Create analyzer page, do the following, as shown in Figure 1:

- For Findings type, select Unused access analysis.

- Provide a Name for the analyzer.

- Select a Tracking period. The tracking period is the threshold beyond which IAM Access Analyzer considers access to be unused. For example, if you select a tracking period of 90 days, IAM Access Analyzer highlights the roles that haven’t been used in the last 90 days.

- Set your Selected accounts. For this example, we select Current organization to review unused access across the organization.

- Select Create.

Figure 1: Create analyzer page

Now that you’ve created the analyzer, IAM Access Analyzer starts reporting findings for unused access across the IAM users and roles in your organization. IAM Access Analyzer will periodically scan your IAM roles and users to update unused access findings. Additionally, if one of your roles, users or policies is updated or deleted, IAM Access Analyzer automatically updates existing findings or creates new ones. IAM Access Analyzer uses a service-linked role to review last accessed information for all roles, user access keys, and user passwords in your organization. For active IAM roles and users, IAM Access Analyzer uses IAM service and action last accessed information to identify unused permissions.

Note: Although IAM Access Analyzer is a regional service (that is, you enable it for a specific AWS Region), unused access findings are linked to IAM resources that are global (that is, not tied to a Region). To avoid duplicate findings and costs, enable your analyzer for unused access in the single Region where you want to review and operate findings.

IAM Access Analyzer findings dashboard

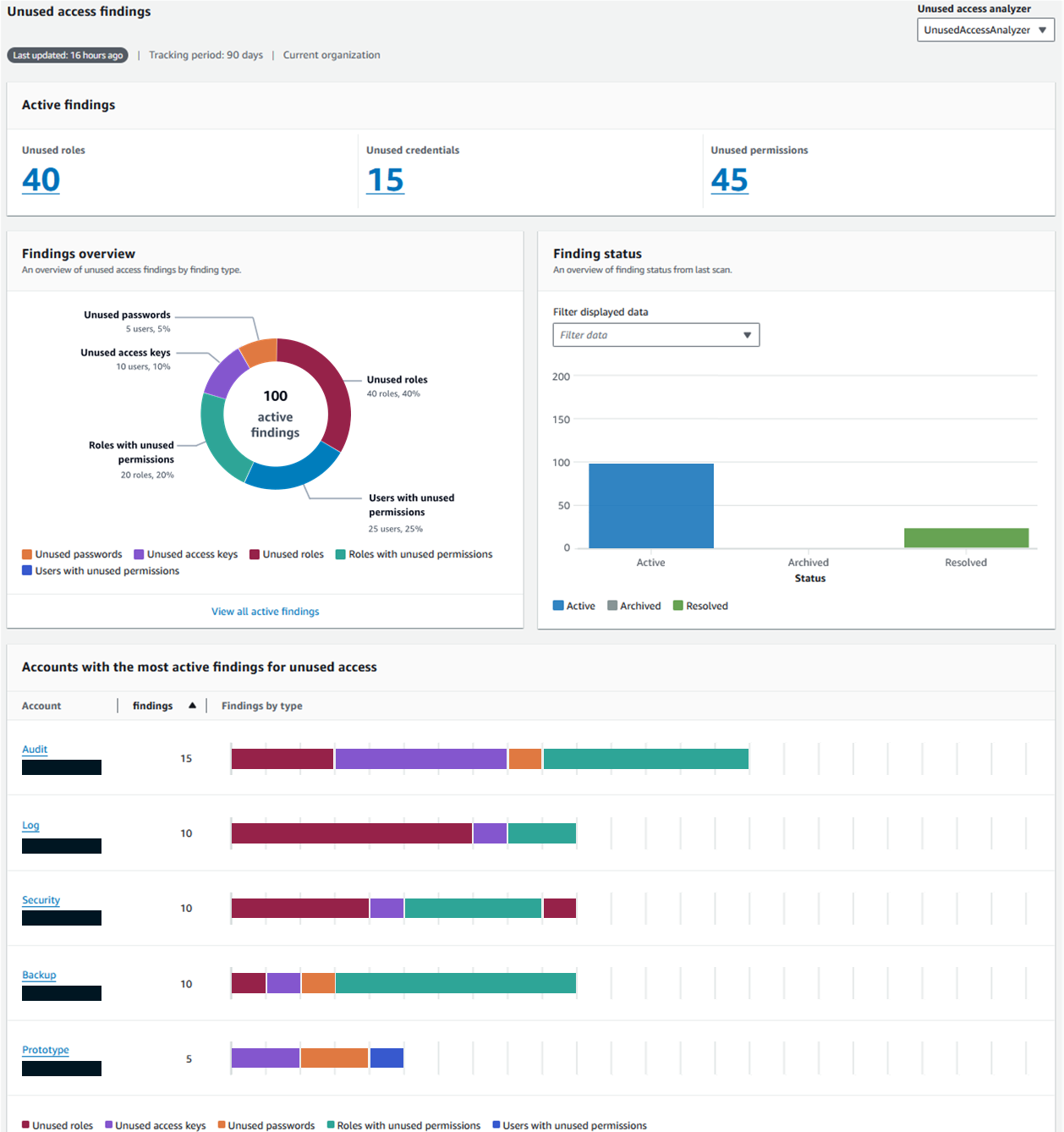

Your analyzer aggregates findings from across your organization and presents them on a dashboard. The dashboard aggregates, in the selected Region, findings for both external access and unused access—although this post focuses on unused access findings only. You can use the dashboard for unused access findings to centrally review the breakdown of findings by account or finding types to identify areas to prioritize for your inspection (for example, sensitive accounts, type of findings, type of environment, or confidence in refinement).

Unused access findings dashboard – Findings overview

Review the findings overview to identify the total findings for your organization and the breakdown by finding type. Figure 2 shows an example of an organization with 100 active findings. The finding type Unused access keys is present in each of the accounts, with the most findings for unused access. To move toward least privilege and to avoid long-term credentials, the security team should clean up the unused access keys.

Figure 2: Unused access finding dashboard

Unused access findings dashboard – Accounts with most findings

Review the dashboard to identify the accounts with the highest number of findings and the distribution per finding type. In Figure 2, the Audit account has the highest number of findings and might need attention. The account has five unused access keys and six roles with unused permissions. The security team should prioritize this account based on volume of findings and review the findings associated with the account.

Review unused access findings

In this section, we’ll show you how to review findings. We’ll share two examples of unused access findings, including unused access key findings and unused permissions findings.

Finding example: unused access keys

As shown previously in Figure 2, the IAM Access Analyzer dashboard showed that accounts with the most findings were primarily associated with unused access keys. Let’s review a finding linked to unused access keys.

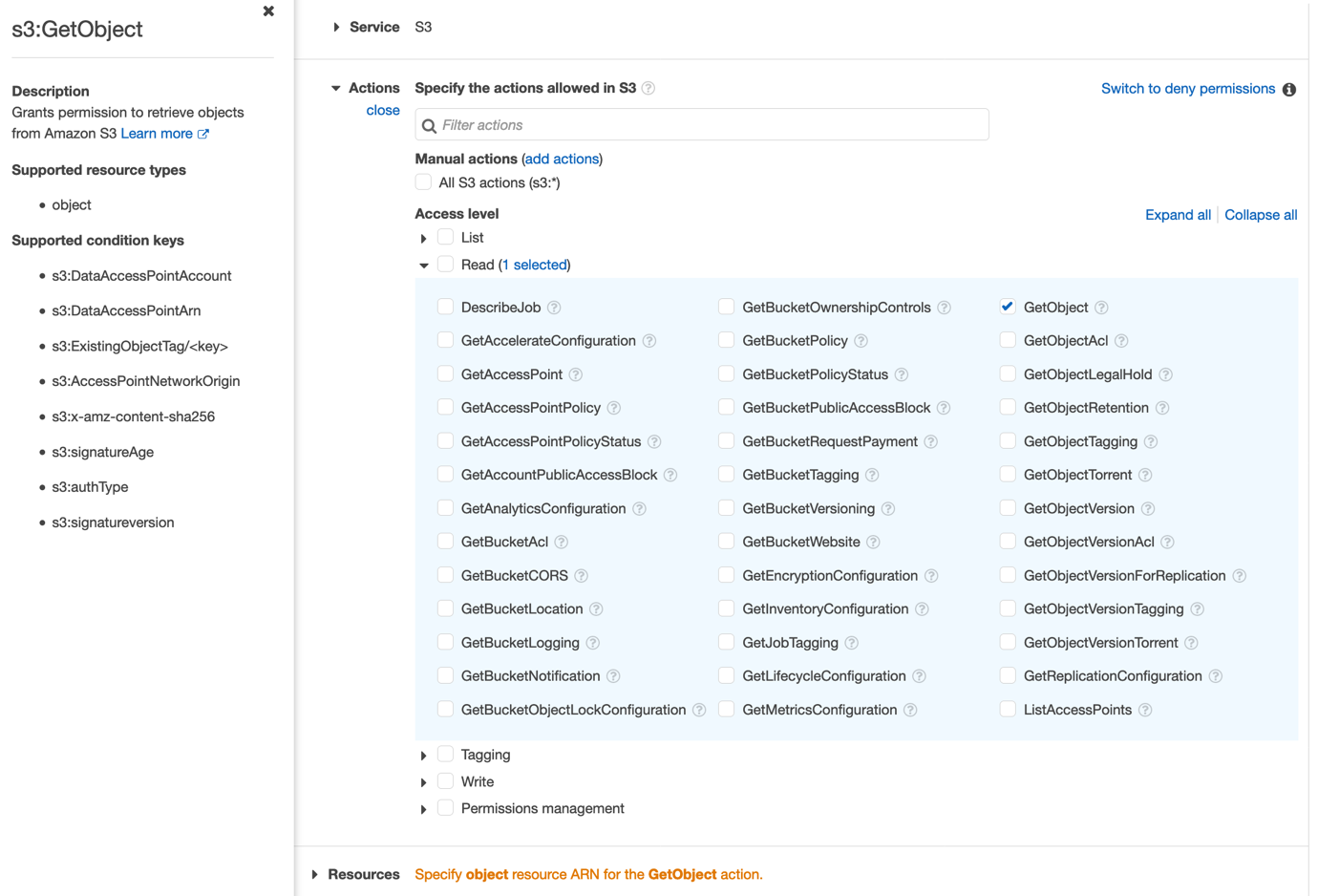

To review the finding for unused access keys

- Open the IAM Access Analyzer console, and in the left navigation pane, select Unused access.

- Select your analyzer to view the unused access findings.

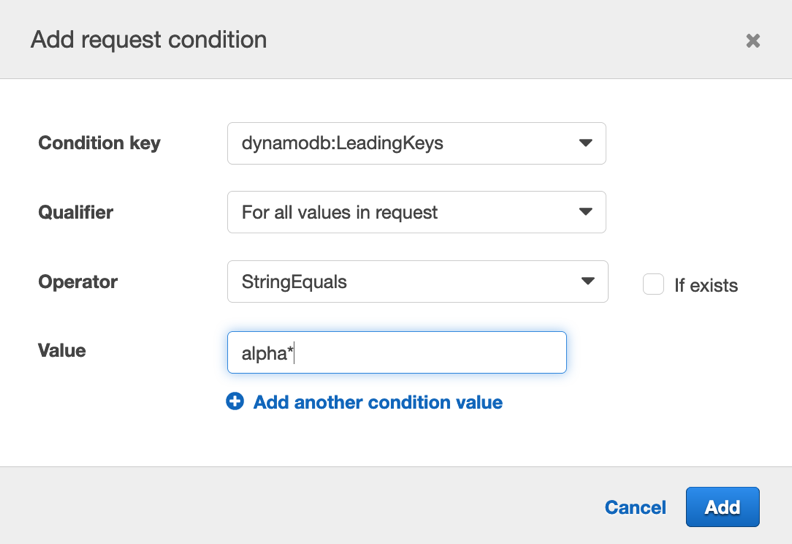

- In the search dropdown list, select the property Findings type, the Equals operator, and the value Unused access key to get only Findings type = Unused access key, as shown in Figure 3.

Figure 3: List of unused access findings

- Select one of the findings to get a view of the available access keys for an IAM user, their status, creation date, and last used date. Figure 4 shows an example in which one of the access keys has never been used, and the other was used 137 days ago.

Figure 4: Finding example – Unused IAM user access keys

From here, you can investigate further with the development teams to identify whether the access keys are still needed. If they aren’t needed, you should delete the access keys.

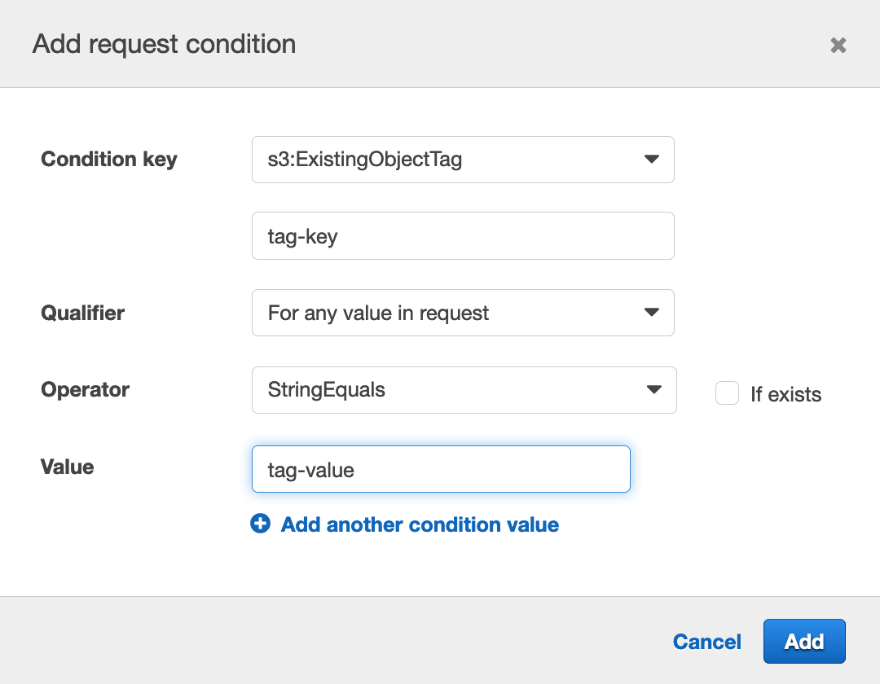

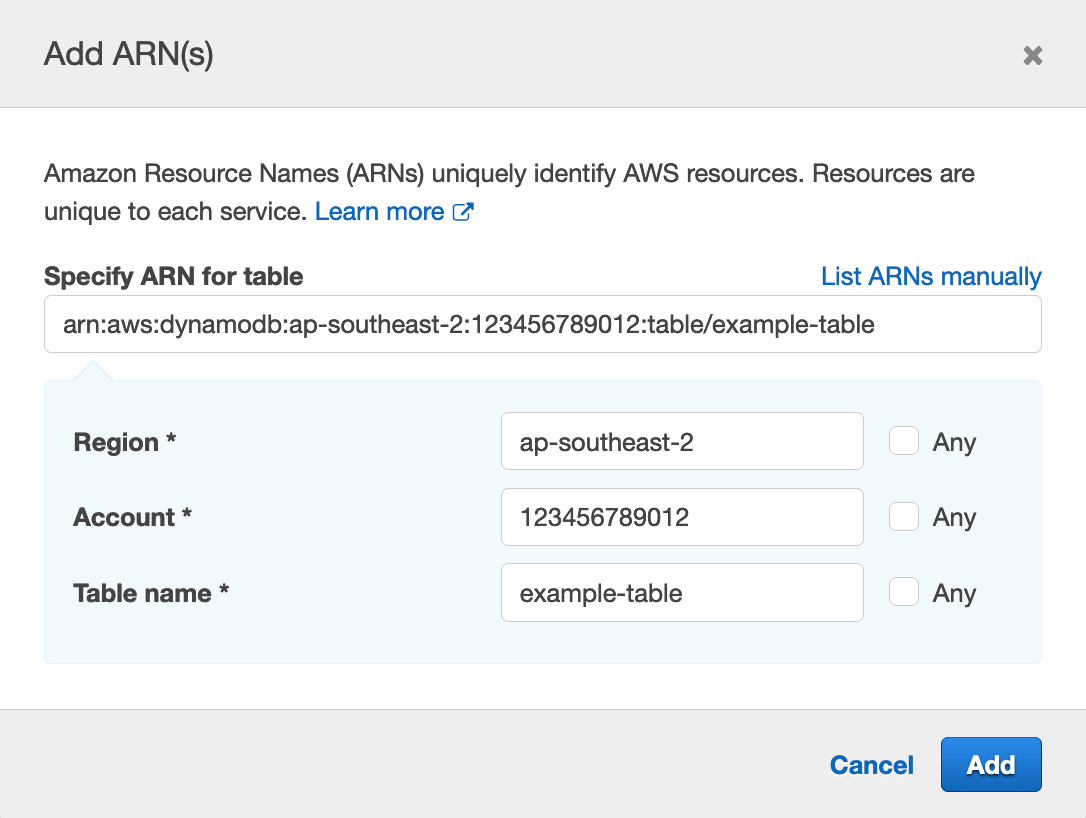

Finding example: unused permissions

Another goal that your security team might have is to make sure that the IAM roles and users across your organization are following the principle of least privilege. Let’s walk through an example with findings associated with unused permissions.

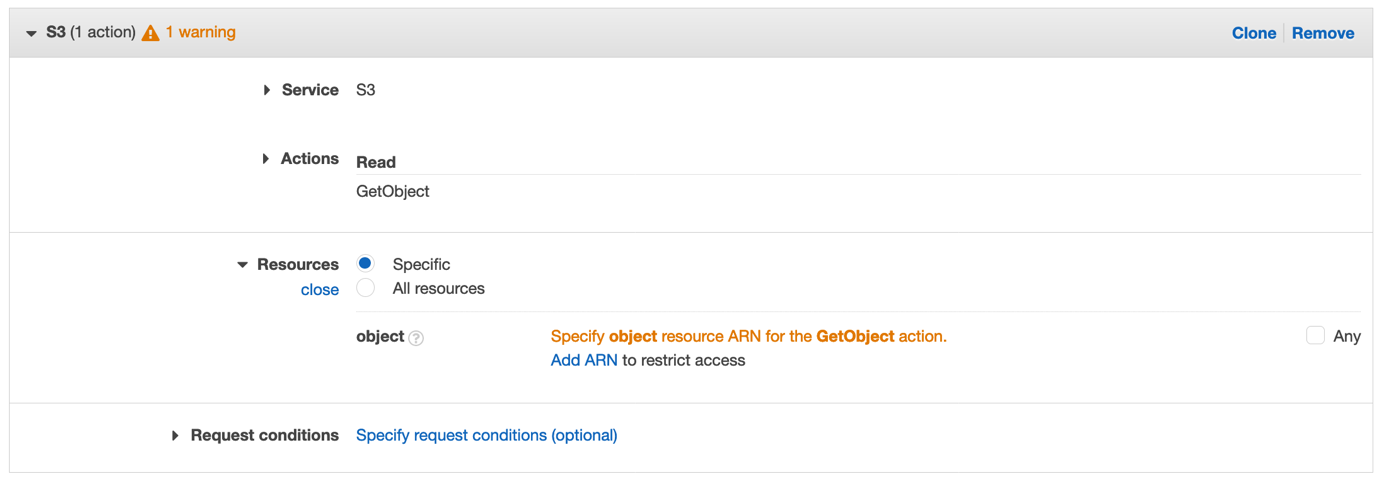

To review findings for unused permissions

- On the list of unused access findings, apply the filter on Findings type = Unused permissions.

- Select a finding, as shown in Figure 5. In this example, the IAM role has 148 unused actions on Amazon Relational Database Service (Amazon RDS) and has not used a service action for 200 days. Similarly, the role has unused actions for other services, including Amazon Elastic Compute Cloud (Amazon EC2), Amazon Simple Storage Service (Amazon S3), and Amazon DynamoDB.

Figure 5: Finding example – Unused permissions

The security team now has a view of the unused actions for this role and can investigate with the development teams to check if those permissions are still required.

The development team can then refine the permissions granted to the role to remove the unused permissions.

Unused access findings notify you about unused permissions for all service-level permissions and for 200 services at the action-level. For the list of supported actions, see IAM action last accessed information services and actions.

Take actions on findings

IAM Access Analyzer categorizes findings as active, resolved, and archived. In this section, we’ll show you how you can act on your findings.

Resolve findings

You can resolve unused access findings by deleting unused IAM roles, IAM users, IAM user credentials, or permissions. After you’ve completed this, IAM Access Analyzer automatically resolves the findings on your behalf.

To speed up the process of removing unused permissions, you can use IAM Access Analyzer policy generation to generate a fine-grained IAM policy based on your access analysis. For more information, see the blog post Use IAM Access Analyzer to generate IAM policies based on access activity found in your organization trail.

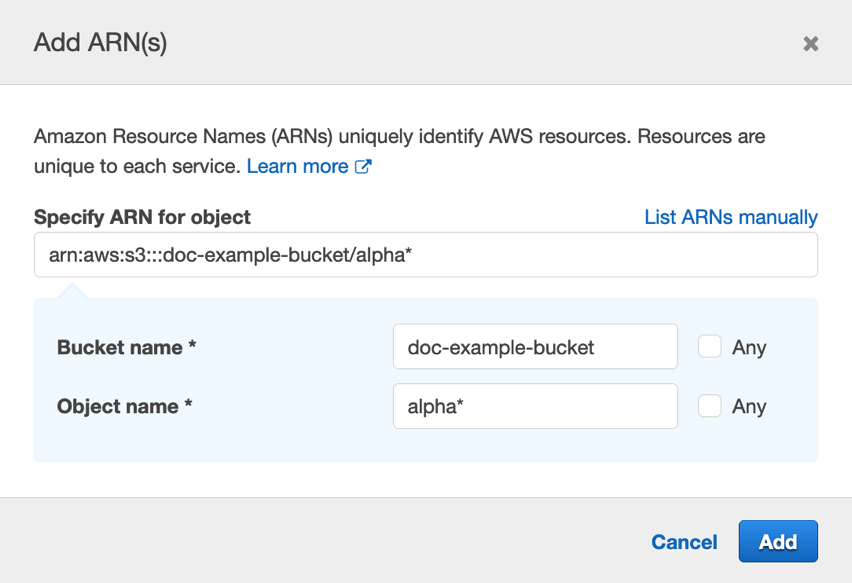

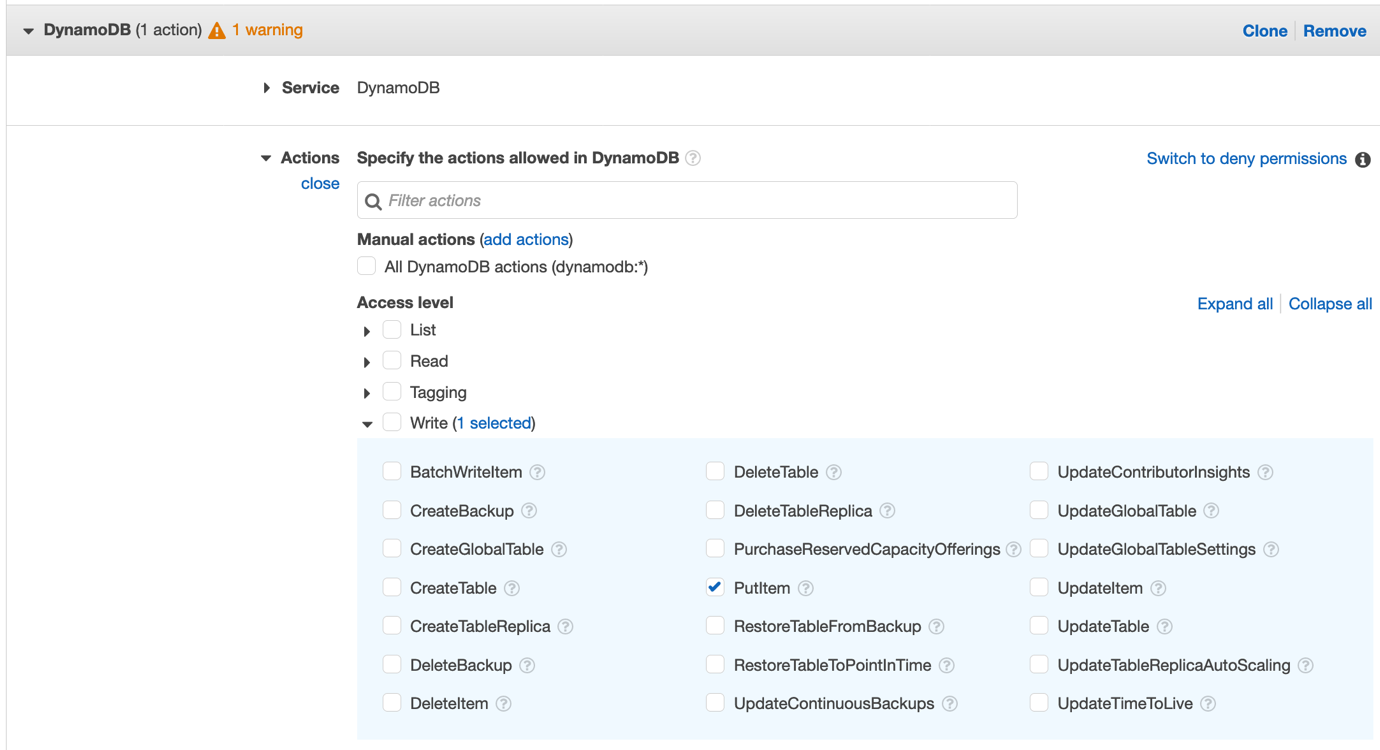

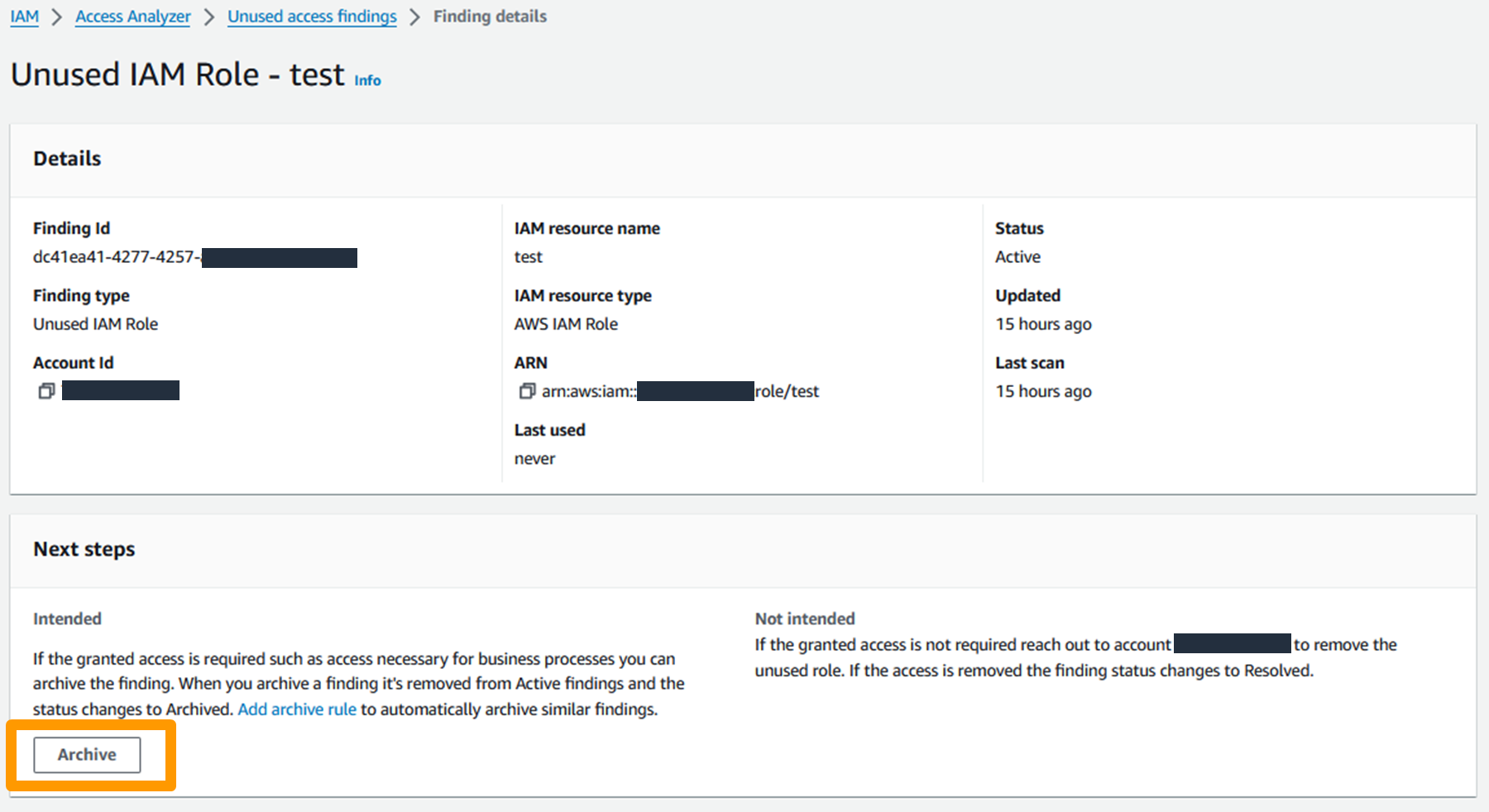

Archive findings

You can suppress a finding by archiving it, which moves the finding from the Active tab to the Archived tab in the IAM Access Analyzer console. To archive a finding, open the IAM Access Analyzer console, select a Finding ID, and in the Next steps section, select Archive, as shown in Figure 6.

Figure 6: Archive finding in the AWS management console

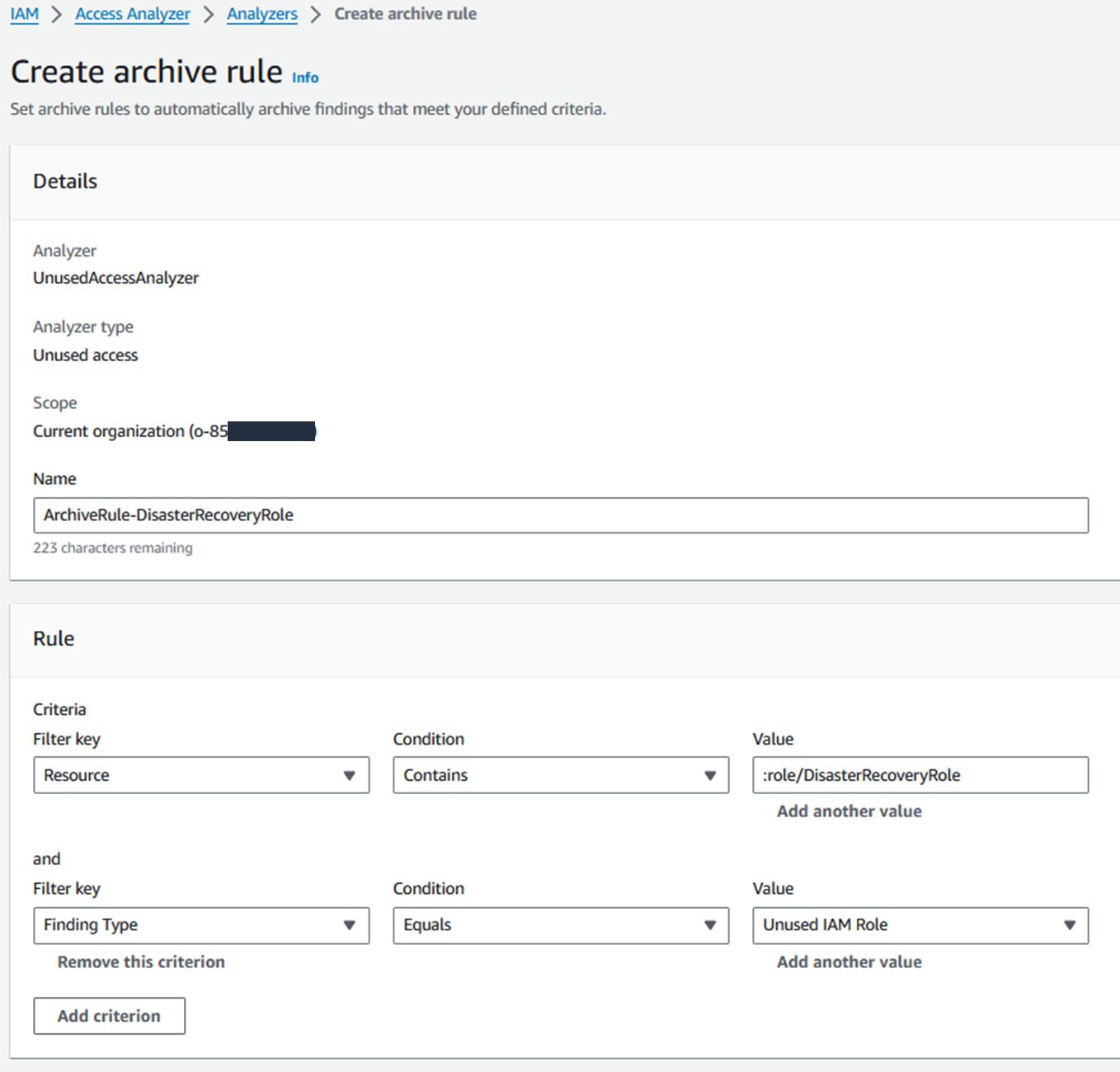

You can automate this process by creating archive rules that archive findings based on their attributes. An archive rule is linked to an analyzer, which means that you can have archive rules exclusively for unused access findings.

To illustrate this point, imagine that you have a subset of IAM roles that you don’t expect to use in your tracking period. For example, you might have an IAM role that is used exclusively for break glass access during your disaster recovery processes—you shouldn’t need to use this role frequently, so you can expect some unused access findings. For this example, let’s call the role DisasterRecoveryRole. You can create an archive rule to automatically archive unused access findings associated with roles named DisasterRecoveryRole, as shown in Figure 7.

Figure 7: Example of an archive rule

Automation

IAM Access Analyzer exports findings to both Amazon EventBridge and AWS Security Hub. Security Hub also forwards events to EventBridge.

Using an EventBridge rule, you can match the incoming events associated with IAM Access Analyzer unused access findings and send them to targets for processing. For example, you can notify the account owners so that they can investigate and remediate unused IAM roles, user credentials, or permissions.

For more information, see Monitoring AWS Identity and Access Management Access Analyzer with Amazon EventBridge.

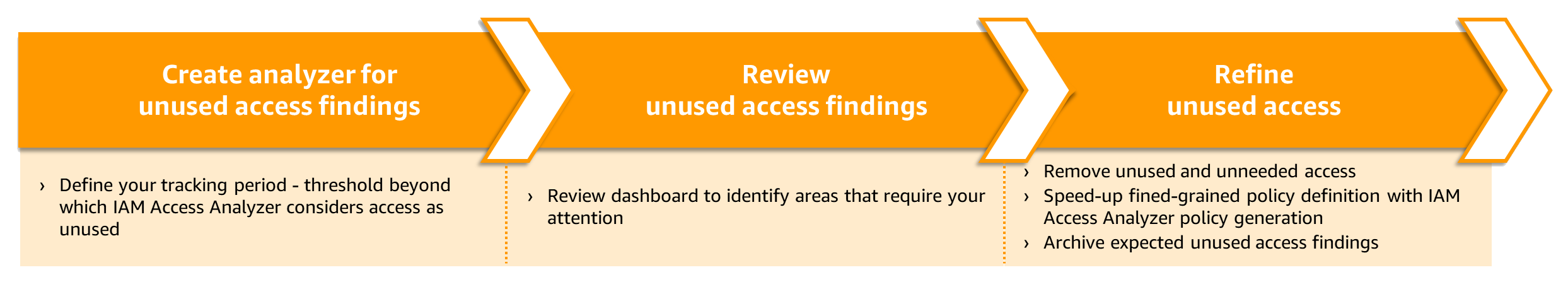

Conclusion

With IAM Access Analyzer, you can centrally identify, review, and refine unused access across your organization. As summarized in Figure 8, you can use the dashboard to review findings and prioritize which accounts to review based on the volume of findings. The findings highlight unused roles, unused access keys for IAM users, and unused passwords for IAM users. For active IAM roles and users, the findings provide visibility into unused services and actions. By reviewing and refining unused access, you can improve your security posture and get closer to the principle of least privilege at scale.

Figure 8: Process to address unused access findings

The new IAM Access Analyzer unused access findings and dashboard are available in AWS Regions, excluding the AWS GovCloud (US) Regions and AWS China Regions. To learn more about how to use IAM Access Analyzer to detect unused accesses, see the IAM Access Analyzer documentation.

If you have feedback about this post, submit comments in the Comments section below. If you have questions about this post, contact AWS Support.

Want more AWS Security news? Follow us on Twitter.