Post Syndicated from Kriti Heda original https://aws.amazon.com/blogs/security/control-access-to-amazon-elastic-container-service-resources-by-using-abac-policies/

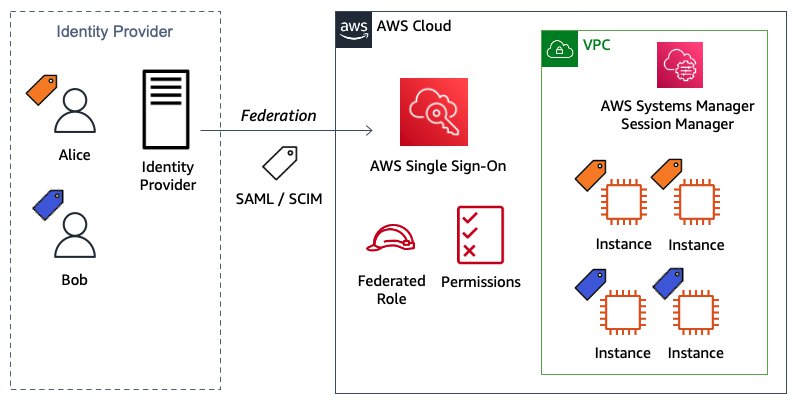

As an AWS customer, if you use multiple Amazon Elastic Container Service (Amazon ECS) services/tasks to achieve better isolation, you often face the challenge of managing access to these containers. In such cases, tags let you categorize these services in different ways, such as by owner or environment.

This blog post shows you how tags allow conditional access to Amazon ECS resources. You can use attribute-based access control (ABAC) policies to grant access rights to users through policies that combine attributes. ABAC can be helpful in rapidly growing environments, where policy management can become cumbersome. This blog post uses ECS resource tags (owner tag and environment tag) as the attributes that control access in the policies.

Amazon ECS resources have many attributes, such as tags, which can be used to control permissions. You can attach tags to AWS Identity and Access Management (IAM) principals, and create either a single ABAC policy, or a small set of policies for your IAM principals. These ABAC policies can be designed to allow operations when the principal tag (a tag that exists on the user or role making the call) matches the resource tag. They can be used to simplify permission management at scale. A single Amazon ECS policy can enforce permissions across a range of applications, without having to update the policy each time you create new Amazon ECS resources.
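The matching rule described above can be illustrated with a small, self-contained sketch. This is not how IAM is implemented; it only mirrors the idea that an operation is allowed when the principal's tags equal the resource's tags. The function name `tags_match` and the sample tag values are assumptions made for illustration.

```python
def tags_match(principal_tags, resource_tags, keys=("owner", "environment")):
    """Return True only if every key exists on both sides with equal values.

    Illustrative stand-in for an ABAC check; real evaluation is done by IAM.
    """
    return all(
        key in principal_tags
        and key in resource_tags
        and principal_tags[key] == resource_tags[key]
        for key in keys
    )


# Hypothetical tags on the calling role and on two ECS resources.
role_tags = {"owner": "mock_user", "environment": "development"}
own_cluster = {"owner": "mock_user", "environment": "development"}
other_cluster = {"owner": "test_user", "environment": "production"}

print(tags_match(role_tags, own_cluster))    # True
print(tags_match(role_tags, other_cluster))  # False
```

Because the check compares tags rather than resource names, a newly created resource that carries the right tags is covered without any change to the rule.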

This post provides a step-by-step procedure for creating ABAC policies for controlling access to Amazon ECS containers. As the team adds ECS resources to its projects, permissions are automatically applied based on the owner tag and the environment tag. As a result, no policy update is required for each new resource. Using this approach can save time and help improve security, because it relies on granular permissions rules.

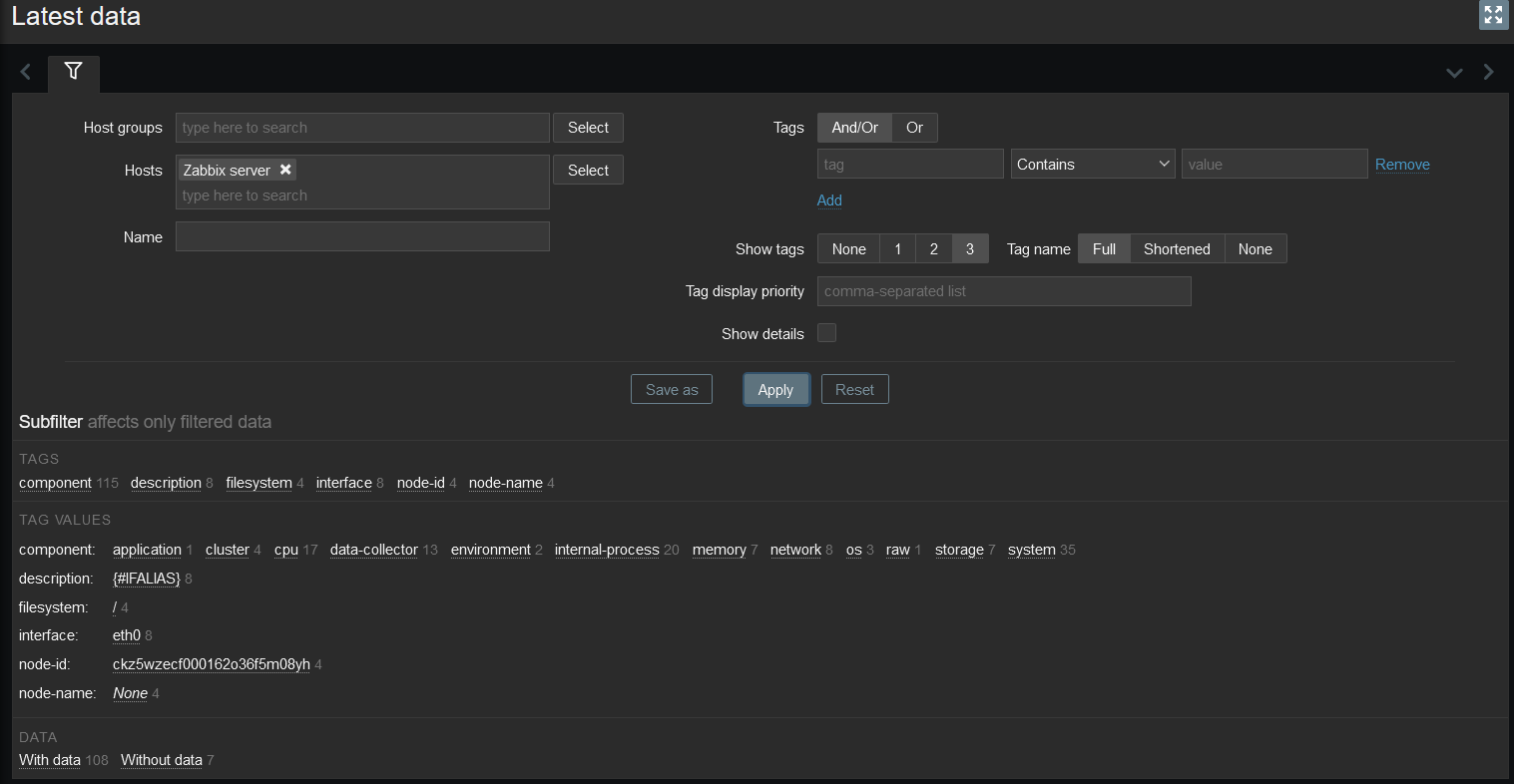

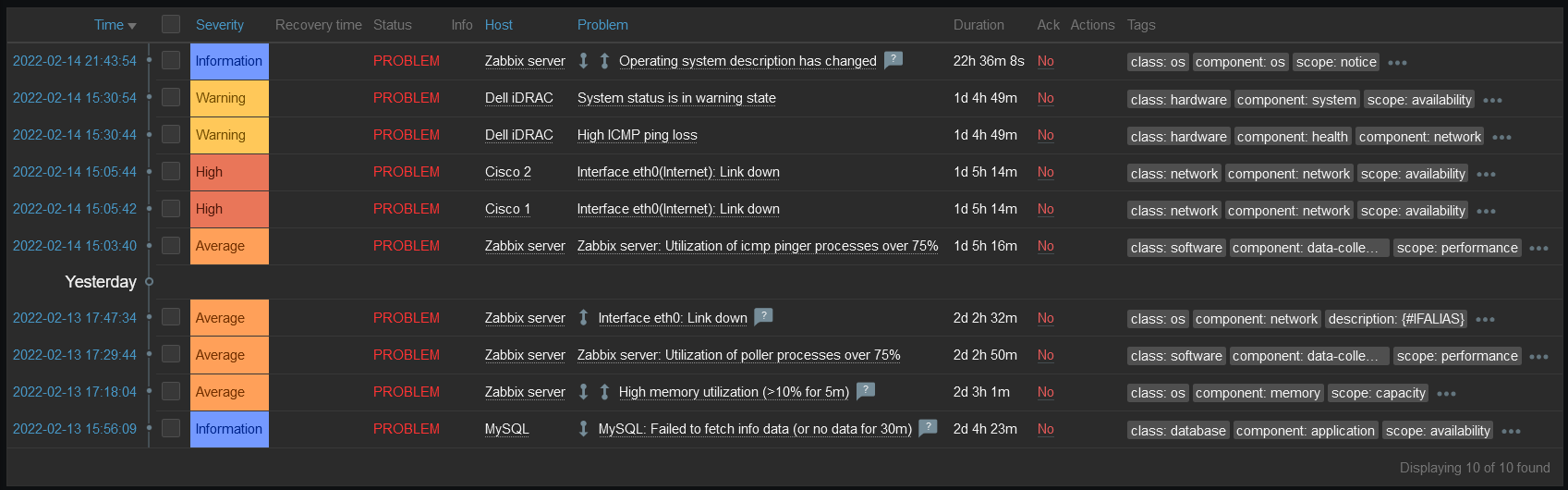

Condition key mappings

It’s important to note that each IAM permission in Amazon ECS supports different types of tagging condition keys. The following table maps each condition key to its ECS actions.

| Condition key | Description | ECS actions |
| --- | --- | --- |
| aws:RequestTag/${TagKey} | Set this tag value to require that a specific tag be used (or not used) when making an API request to create or modify a resource that allows tags. | ecs:CreateCluster, ecs:TagResource, ecs:CreateCapacityProvider |
| aws:ResourceTag/${TagKey} | Set this tag value to allow or deny user actions on resources with specific tags. | ecs:PutAttributes, ecs:StopTask, ecs:DeleteCluster, ecs:DeleteService, ecs:CreateTaskSet, ecs:DeleteAttributes, ecs:DeleteTaskSet, ecs:DeregisterContainerInstance |
| aws:RequestTag/${TagKey} and aws:ResourceTag/${TagKey} | Supports both RequestTag and ResourceTag | ecs:CreateService, ecs:RunTask, ecs:StartTask, ecs:RegisterContainerInstance |

For a detailed guide of Amazon ECS actions and the resource types and condition keys they support, see Actions, resources, and condition keys for Amazon Elastic Container Service.
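When drafting policy statements, it can help to keep the mapping in a form you can query. The sketch below encodes only the actions listed in the condition key mappings table in this post; the function name `supported_condition_keys` is an assumption, and the authoritative list is the service documentation referenced above.

```python
REQUEST_TAG = "aws:RequestTag/${TagKey}"
RESOURCE_TAG = "aws:ResourceTag/${TagKey}"

# Actions copied from the condition key mappings table in this post.
REQUEST_ONLY = (
    "ecs:CreateCluster",
    "ecs:TagResource",
    "ecs:CreateCapacityProvider",
)
RESOURCE_ONLY = (
    "ecs:PutAttributes",
    "ecs:StopTask",
    "ecs:DeleteCluster",
    "ecs:DeleteService",
    "ecs:CreateTaskSet",
    "ecs:DeleteAttributes",
    "ecs:DeleteTaskSet",
    "ecs:DeregisterContainerInstance",
)
BOTH = (
    "ecs:CreateService",
    "ecs:RunTask",
    "ecs:StartTask",
    "ecs:RegisterContainerInstance",
)


def supported_condition_keys(action):
    """Return the tagging condition keys the table lists for an ECS action."""
    if action in REQUEST_ONLY:
        return frozenset({REQUEST_TAG})
    if action in RESOURCE_ONLY:
        return frozenset({RESOURCE_TAG})
    if action in BOTH:
        return frozenset({REQUEST_TAG, RESOURCE_TAG})
    return frozenset()  # Action is not covered by the table.


for action in ("ecs:CreateCluster", "ecs:StopTask", "ecs:RunTask"):
    print(action, sorted(supported_condition_keys(action)))
```

An empty result means only that the action is absent from the table in this post, not that the action supports no condition keys.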

Tutorial overview

The following tutorial gives you a step-by-step process to create and test an Amazon ECS policy that allows IAM roles with principal tags to access resources with matching tags. When a principal makes a request to AWS, their permissions are granted based on whether the principal and resource tags match. This strategy allows individuals to view or edit only the ECS resources required for their jobs.

Scenario

Example Corp. has multiple Amazon ECS containers created for different applications. Each of these containers is created by a different owner within the company. The permissions for each of the Amazon ECS resources must be restricted based on the owner of the container, and also based on the environment where the action is performed.

Assume that you’re a lead developer at this company, and you’re an experienced IAM administrator. You’re familiar with creating and managing IAM users, roles, and policies. You want to ensure that the development engineering team members can access only the containers they own. You also need a strategy that will scale as your company grows.

For this scenario, you choose to use AWS resource tags and IAM role principal tags to implement an ABAC strategy for Amazon ECS resources. The condition key mappings table shows which tagging condition keys you can use in a policy for each Amazon ECS action and resource. You define the tags on the role that you create. For this scenario, you define two tags: owner and environment. These tags restrict the permissions of the role based on the tag values you defined.

Prerequisites

To perform the steps in this tutorial, you must already have the following:

- An IAM role or user with sufficient privileges for services such as IAM and Amazon ECS. Following security best practices, start with a minimum set of permissions and grant additional permissions as necessary. To get the permissions needed to create IAM and ECS resources, you can attach the AWS managed policies IAMFullAccess and AmazonECS_FullAccess to the role.

- An AWS account that you can sign in to as an IAM role or user.

- Experience creating and editing IAM users, roles, and policies in the AWS Management Console. For more information, see Tutorial to create IAM resources.

Create an ABAC policy for Amazon ECS resources

After you complete the prerequisites for the tutorial, you will need to define which Amazon ECS privileges and access controls you want in place for the users, and configure the tags needed for creating the ABAC policies. This tutorial focuses on providing step-by-step instructions for creating test users, defining the ABAC policies for the Amazon ECS resources, creating a role, and defining tags for the implementation.

To create the ABAC policy

You create an ABAC policy that defines permissions based on attributes. In AWS, these attributes are called tags.

The sample ABAC policy that follows provides ECS permissions to users when the principal’s tag matches the resource tag.

Sample ABAC policy for ECS resources

The sample ECS ABAC policy that follows allows the user to perform actions on ECS resources, but only when those resources are tagged with the same key-value pairs as the principal.

- Download the sample ECS policy. This policy allows principals to create, read, edit, and delete resources, but only when those resources are tagged with the same key-value pairs as the principal.

- Use the downloaded ECS policy to create the ECS ABAC policy, and name your new policy ECSABAC. For more information, see Creating IAM policies.

This sample policy grants permission for each ECS action based on the condition key that the action supports. See the condition key mappings table for a mapping of the ECS actions and the condition keys they support.

What does this policy do?

- The ECSCreateCluster statement allows users to create clusters and to create and tag resources. These ECS actions support only the RequestTag condition key. The condition block returns true if every tag passed in the request (owner and environment) is included in the specified list, which the policy checks with the StringEquals condition operator. If a tag key other than owner or environment is passed, or if an incorrect value is passed for either tag, the condition returns false. The ECS actions in this statement do not require a specific resource type.

- The ECSDeletion, ECSUpdate, and ECSDescribe statements allow users to update, delete or list/describe ECS resources. The ECS actions under these statements only support the ResourceTag condition key. Statements return true if the specified tag keys are present on the ECS resource and their values match the principal’s tags. These statements return false for mismatched tags (in this policy, the only acceptable tags are owner and environment), or for an incorrect value for the owner and environment tag passed to the ECS resources. They also return false for any ECS action that does not support resource tagging.

- The ECSCreateService, ECSTaskControl, and ECSRegistration statements contain ECS actions that allow users to create a service, start or run tasks and register container instances in ECS. The ECS actions within these statements support both Request and Resource tag condition keys.
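The downloadable sample policy is not reproduced in this post. As a rough sketch of the three kinds of statements described above, the following Python builds a policy document of a similar shape. The statement IDs are taken from the text, but the exact action lists, the `"Resource": "*"` element, and the use of `${aws:PrincipalTag/...}` policy variables are assumptions, so treat this as an illustration rather than the actual ECSABAC policy.

```python
import json

TAG_KEYS = ("owner", "environment")


def tag_condition(prefix):
    """Map each tag condition key to the matching principal tag variable."""
    # String concatenation keeps the literal ${...} policy variable intact.
    return {f"{prefix}/{key}": "${aws:PrincipalTag/" + key + "}" for key in TAG_KEYS}


def build_policy():
    request = tag_condition("aws:RequestTag")
    resource = tag_condition("aws:ResourceTag")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # Actions that support only the RequestTag condition key.
                "Sid": "ECSCreateCluster",
                "Effect": "Allow",
                "Action": ["ecs:CreateCluster", "ecs:TagResource"],
                "Resource": "*",
                "Condition": {"StringEquals": request},
            },
            {   # Actions that support only the ResourceTag condition key.
                "Sid": "ECSDeletion",
                "Effect": "Allow",
                "Action": ["ecs:DeleteCluster", "ecs:DeleteService"],
                "Resource": "*",
                "Condition": {"StringEquals": resource},
            },
            {   # Actions that support both condition keys.
                "Sid": "ECSTaskControl",
                "Effect": "Allow",
                "Action": ["ecs:RunTask", "ecs:StartTask"],
                "Resource": "*",
                "Condition": {"StringEquals": {**request, **resource}},
            },
        ],
    }


print(json.dumps(build_policy(), indent=2))
```

Comparing against `${aws:PrincipalTag/owner}` instead of a hard-coded value is what lets a single policy serve many roles: each role's own tags decide which resources it can act on.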

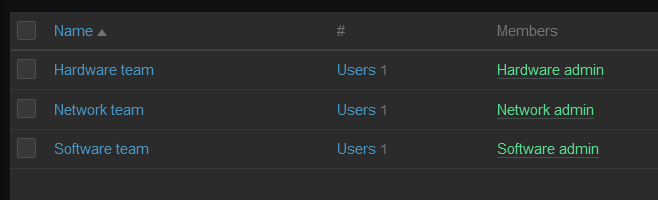

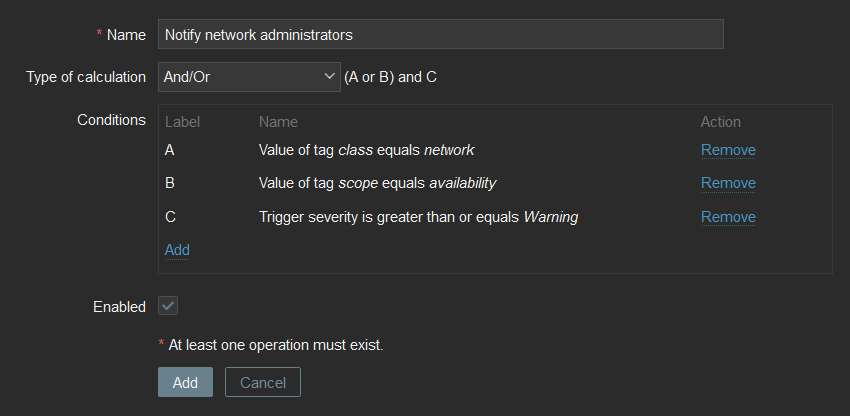

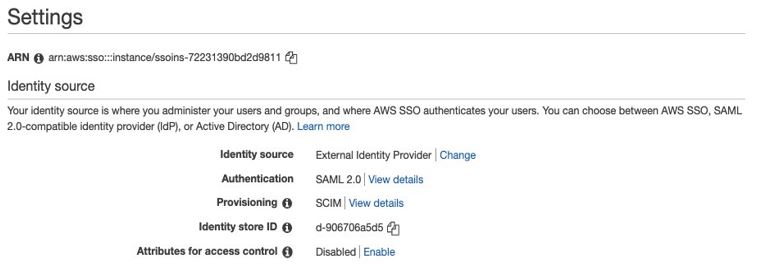

Create IAM roles

Create the following IAM roles and attach the ECSABAC policy you created in the previous procedure. You can create the roles and add tags to them using the AWS console, through the role creation flow, as shown in the following steps.

To create IAM roles

- Sign in to the AWS Management Console and navigate to the IAM console.

- In the left navigation pane, select Roles, and then select Create Role.

- Choose the Another AWS account role type.

- For Account ID, enter the ID of the AWS account from the prerequisites. This is the account to which you want to grant access to your resources.

- Choose Next: Permissions.

- IAM includes a list of the AWS managed and customer managed policies in your account. Select the ECSABAC policy you created previously from the dropdown menu to use for the permissions policy. Alternatively, you can choose Create policy to open a new browser tab and create a new policy, as shown in Figure 1.

Figure 1. Attach the ECS ABAC policy to the role

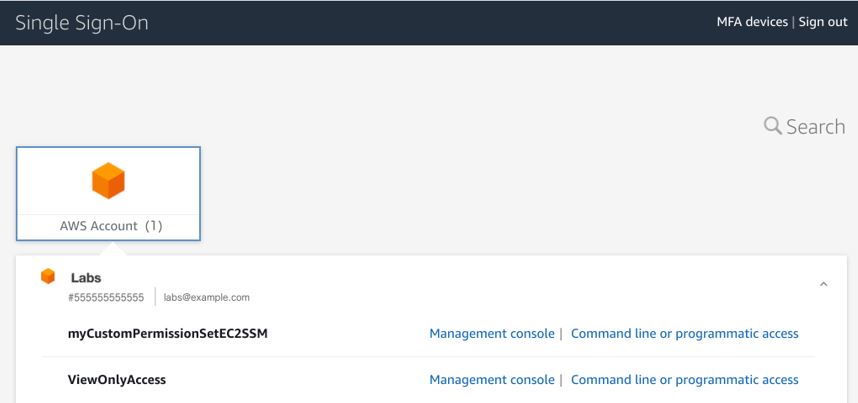

- Choose Next: Tags.

- Add metadata to the role by attaching tags as key-value pairs. Add the following tags to the role: for Key owner, enter Value mock_user; and for Key environment, enter Value development, as shown in Figure 2. These values must match the tag values used in the positive test cases later in this post.

Figure 2. Define the tags in the IAM role

- Choose Next: Review.

- For Role name, enter a name for your role. Role names must be unique within your AWS account.

- Review the role and then choose Create role.
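If you prefer to script the role creation instead of using the console, the request could be assembled along these lines. The sketch only builds the parameters and does not call AWS. The dictionary is shaped like the keyword arguments of the boto3 IAM client's `create_role` call (`RoleName`, `AssumeRolePolicyDocument`, `Tags`). The role name and account ID are placeholders, the trust policy is an assumption about what the Another AWS account option produces, and the owner value `mock_user` is the one that the test cases later apply to the cluster and task.

```python
import json


def build_create_role_request(role_name, trusted_account_id, owner, environment):
    """Assemble parameters for creating a tagged, cross-account-assumable role."""
    trust_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Lets principals in the trusted account assume this role.
                "Principal": {"AWS": f"arn:aws:iam::{trusted_account_id}:root"},
                "Action": "sts:AssumeRole",
            }
        ],
    }
    return {
        "RoleName": role_name,
        "AssumeRolePolicyDocument": json.dumps(trust_policy),
        # IAM tags use capitalized "Key" and "Value" field names.
        "Tags": [
            {"Key": "owner", "Value": owner},
            {"Key": "environment", "Value": environment},
        ],
    }


# Placeholder role name and account ID, for illustration only.
request = build_create_role_request(
    "ecs-abac-developer", "111122223333", "mock_user", "development"
)
print(json.dumps(request, indent=2))
```

With boto3 installed and credentials configured, the dictionary could be passed as `boto3.client("iam").create_role(**request)`, followed by `attach_role_policy` with the ARN of the ECSABAC policy.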

Test the solution

The following sections present some positive and negative test cases that show how tags can provide fine-grained permission to users through ABAC policies.

Prerequisites for the negative and positive testing

Before you can perform the positive and negative tests, you must first do these steps in the AWS Management Console:

- Follow the procedures above for creating the IAM role and the ABAC policy.

- Switch from the role assumed in the prerequisites to the role you created in To create IAM roles above, following the steps in the documentation Switching to a role.

Perform negative testing

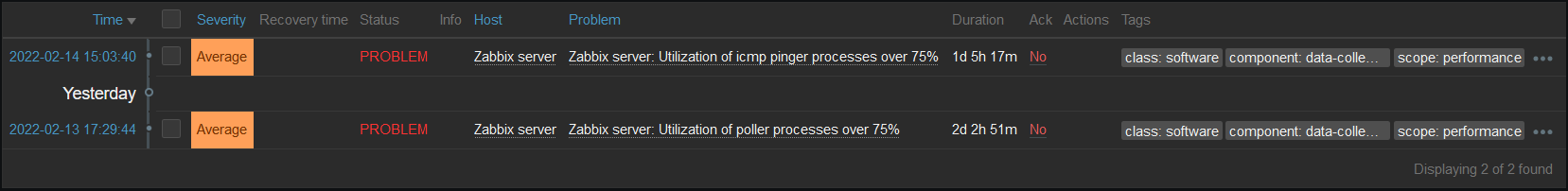

For the negative testing, three test cases are presented here that show how the ABAC policies prevent successful creation of the ECS resources if the owner or environment tags are missing, or if an incorrect tag is used for the creation of the ECS resource.

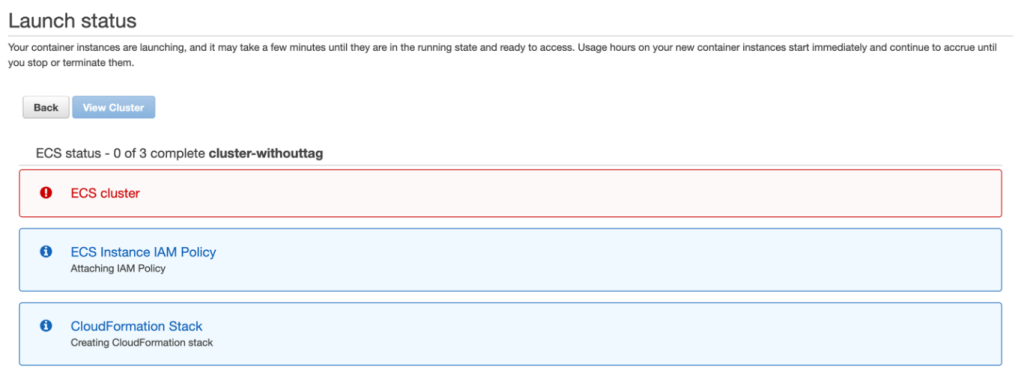

Negative test case 1: Create cluster without the required tags

In this test case, you check if an ECS cluster is successfully created without any tags. Create an Amazon ECS cluster without any tags (in other words, without adding the owner and environment tag).

To create a cluster without the required tags

- Sign in to the AWS Management Console and navigate to the Amazon ECS console.

- From the navigation bar, select the Region to use.

- In the navigation pane, choose Clusters.

- On the Clusters page, choose Create Cluster.

- For Select cluster compatibility, choose Networking only, then choose Next Step.

- On the Configure cluster page, enter a cluster name. For Provisioning Model, choose On-Demand Instance, as shown in Figure 3.

Figure 3. Create a cluster

- In the Networking section, configure the VPC for your cluster.

- Don’t add any tags in the Tags section, as shown in Figure 4.

Figure 4. No tags added to the cluster

- Choose Create.

Expected result of negative test case 1

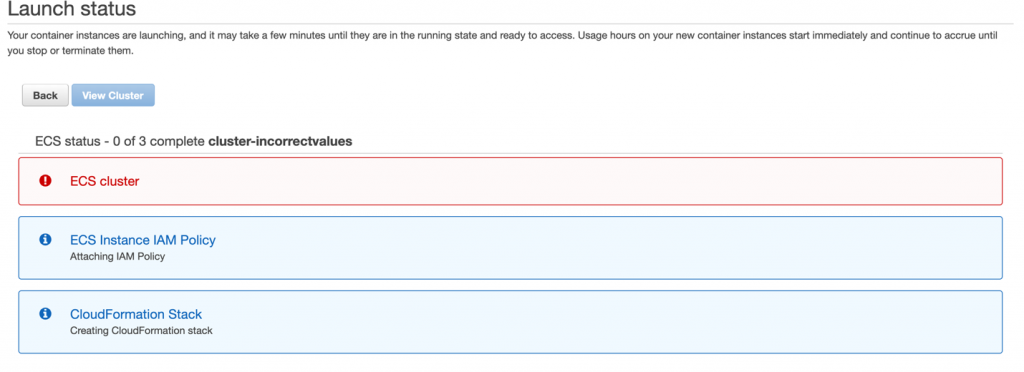

Because the owner and the environment tags are absent, the ABAC policy prevents the creation of the cluster and throws an error, as shown in Figure 5.

Figure 5. Unsuccessful creation of the ECS cluster due to missing tags

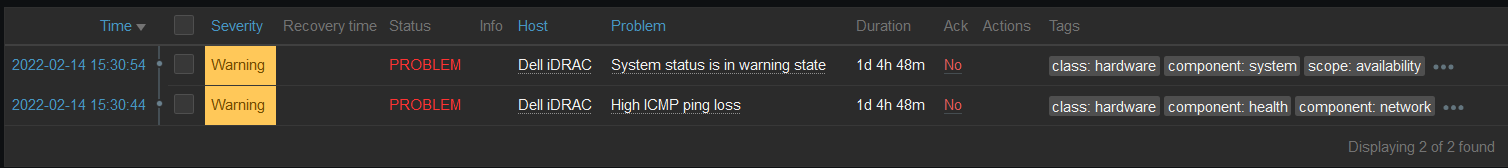

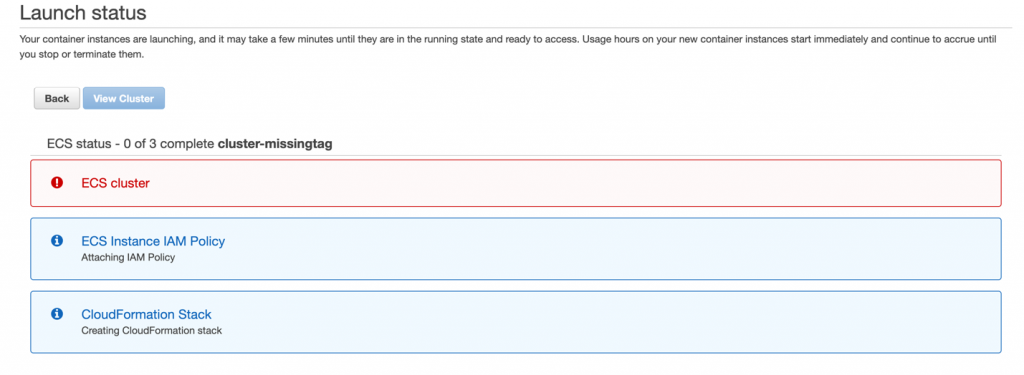

Negative test case 2: Create cluster with a missing tag

In this test case, you check whether an ECS cluster is successfully created when a single tag is missing. You create a cluster similar to the one created in Negative test case 1. However, in this test case, in the Tags section, you enter only the owner tag. The environment tag is missing, as shown in Figure 6.

To create a cluster with a missing tag

- Repeat steps 1-7 from the Negative test case 1 procedure.

- In the Tags section, add the owner tag and enter its value as mock_user.

Figure 6. Create a cluster with the environment tag missing

Expected result of negative test case 2

The ABAC policy prevents the creation of the cluster, due to the missing environment tag in the cluster. This results in an error, as shown in Figure 7.

Figure 7. Unsuccessful creation of the ECS cluster due to missing tag

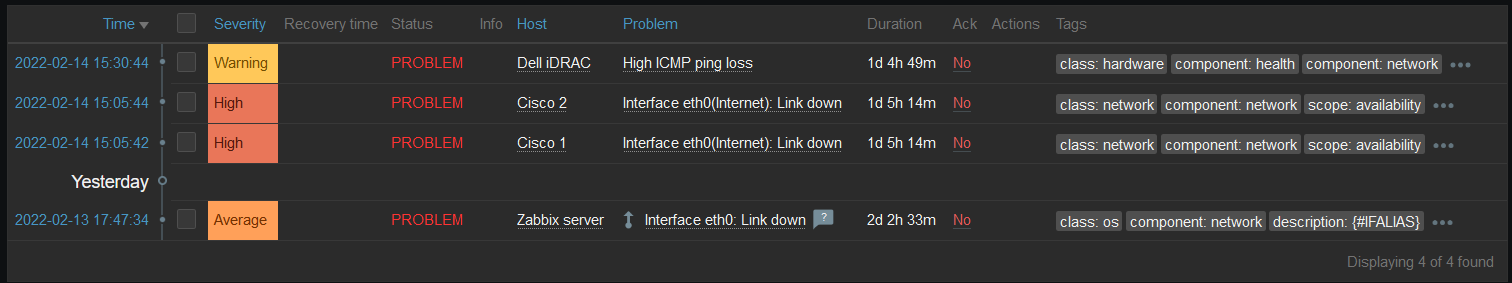

Negative test case 3: Create cluster with incorrect tag values

In this test case, you check whether an ECS cluster is successfully created with incorrect tag-value pairs. Create a cluster similar to the one in Negative test case 1. However, in this test case, in the Tags section, enter incorrect values for the owner and the environment tag keys, as shown in Figure 8.

To create a cluster with incorrect tag values

- Repeat steps 1-7 from the Negative test case 1 procedure.

- In the Tags section, add the owner tag and enter the value as test_user; add the environment tag and enter the value as production.

Figure 8. Create a cluster with the incorrect values for the tags

Expected result of negative test case 3

The ABAC policy prevents the creation of the cluster, due to incorrect values for the owner and environment tags in the cluster. This results in an error, as shown in Figure 9.

Figure 9. Unsuccessful creation of the ECS cluster due to incorrect value for the tags
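The three negative test cases can be summarized with a small local simulation. It does not call AWS or reproduce the real IAM evaluation logic; it only assumes that cluster creation is allowed when both request tags are present and equal to the role's tags. The function name `request_tags_allowed` is hypothetical.

```python
# Tags assumed to be on the role that makes the request.
PRINCIPAL_TAGS = {"owner": "mock_user", "environment": "development"}
REQUIRED_KEYS = ("owner", "environment")


def request_tags_allowed(request_tags, principal_tags=PRINCIPAL_TAGS):
    """Allow only if each required tag is present and equals the role's tag."""
    return all(
        key in request_tags and request_tags[key] == principal_tags.get(key)
        for key in REQUIRED_KEYS
    )


negative_cases = {
    "case 1: no tags": {},
    "case 2: environment tag missing": {"owner": "mock_user"},
    "case 3: incorrect values": {"owner": "test_user", "environment": "production"},
}

for name, tags in negative_cases.items():
    outcome = "allowed" if request_tags_allowed(tags) else "denied"
    print(f"{name} -> {outcome}")
```

Each case prints `denied`, which mirrors the errors shown in Figures 5, 7, and 9.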

Perform positive testing

For the positive testing, two test cases are provided here that show how the ABAC policies allow successful creation of ECS resources, such as ECS clusters and ECS tasks, if the correct tags with correct values are provided as input for the ECS resources.

Positive test case 1: Create cluster with all the correct tag-value pairs

This test case checks whether an ECS cluster is successfully created when you supply both the owner and environment tags with values that satisfy the ABAC policy you created earlier.

To create a cluster with all the correct tag-value pairs

- Repeat steps 1-7 from the Negative test case 1 procedure.

- In the Tags section, add the owner tag and enter the value as mock_user; add the environment tag and enter the value as development, as shown in Figure 10.

Figure 10. Add correct tags to the cluster

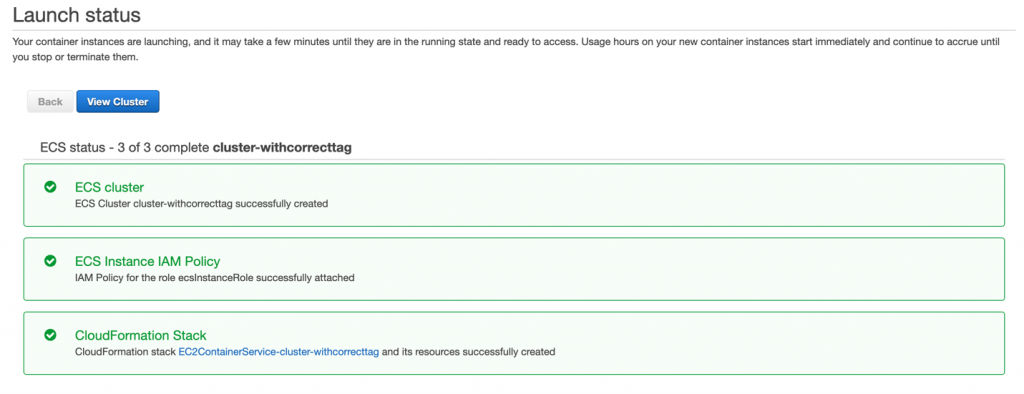

Expected result of positive test case 1

Because both the owner and the environment tags were input correctly, the ABAC policy allows the successful creation of the cluster without throwing an error, as shown in Figure 11.

Figure 11. Successful creation of the cluster

Positive test case 2: Create standalone task with all the correct tag-value pairs

Deploying your application as a standalone task can be ideal in certain situations. For example, suppose you’re developing an application, but you aren’t ready to deploy it with the service scheduler. Maybe your application is a one-time or periodic batch job, and it doesn’t make sense to keep running it, or to restart when it finishes.

For this test case, you run a standalone task with the correct owner and environment tags that match the ABAC policy.

To create a standalone task with all the correct tag-value pairs

- To run a standalone task, see Run a standalone task in the Amazon ECS Developer Guide. Figure 12 shows the beginning of the Run Task process.

Figure 12. Run a standalone task

- In the Task tagging configuration section, under Tags, add the owner tag and enter the value as mock_user; add the environment tag and enter the value as development, as shown in Figure 13.

Figure 13. Creation of the task with the correct tag

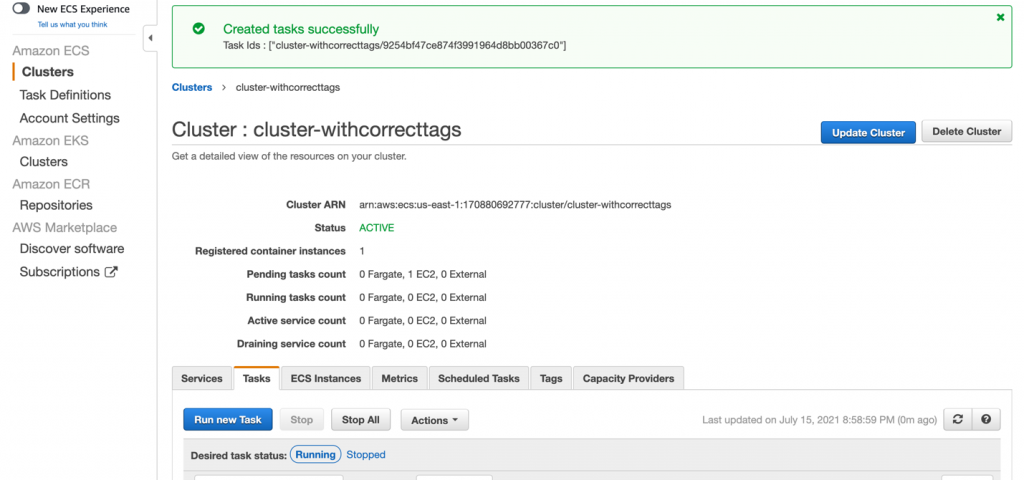

Expected result of positive test case 2

Because you applied the correct tags in the creation phase, the task is created successfully, as shown in Figure 14.

Figure 14. Successful creation of the task
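The same tagged request can also be prepared programmatically. The sketch below only builds the parameters and sends nothing to AWS. The dictionary follows the keyword arguments of the boto3 ECS client's `run_task` call (`cluster`, `taskDefinition`, `tags`), and the cluster and task definition names are placeholders. Note that ECS tag entries use lowercase `key` and `value` fields, unlike IAM tags.

```python
def ecs_tags(owner, environment):
    """Return tags in the lowercase key/value shape used by the ECS API."""
    return [
        {"key": "owner", "value": owner},
        {"key": "environment", "value": environment},
    ]


def build_run_task_request(cluster, task_definition, owner, environment):
    """Assemble run-task parameters that carry the two ABAC tags."""
    return {
        "cluster": cluster,
        "taskDefinition": task_definition,
        "tags": ecs_tags(owner, environment),
    }


# Placeholder cluster and task definition names, for illustration only.
run_task_request = build_run_task_request(
    "abac-demo-cluster", "abac-demo-task:1", "mock_user", "development"
)
print(run_task_request)
```

Because ecs:RunTask supports both condition keys, a policy that checks both would need the request tags above and the tags on the target cluster to match the role's tags.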

Cleanup

To avoid incurring future charges, after completing testing, delete any resources you created for this solution that are no longer needed. See the following links for step-by-step instructions for deleting the resources you created in this blog post.

- Deregistering an ECS Task Definition

- Deleting ECS Clusters

- Deleting IAM Policies

- Deleting IAM Roles and Instance Profiles

Conclusion

This post demonstrates the basics of how to use ABAC policies to provide fine-grained permissions to users based on attributes such as tags. You learned how to create ABAC policies to restrict permissions to users by associating tags with each ECS resource you create. You can use tags to manage and secure access to ECS resources, including ECS clusters, ECS tasks, ECS task definitions, and ECS services.

For more information about the ECS resources that support tagging, see the Amazon Elastic Container Service Guide.

If you have feedback about this blog post, submit comments in the Comments section below. If you have questions about this blog post, start a new thread on AWS Secrets Manager re:Post or contact AWS Support.

Want more AWS Security news? Follow us on Twitter.