Post Syndicated from Eric Johnson original https://aws.amazon.com/blogs/compute/serverless-icymi-q4-2023/

Welcome to the 24th edition of the AWS Serverless ICYMI (in case you missed it) quarterly recap. Every quarter, we share all the most recent product launches, feature enhancements, blog posts, webinars, live streams, and other interesting things that you might have missed!

In case you missed our last ICYMI, check out what happened last quarter here.

ServerlessVideo

ServerlessVideo is a demo application built by the AWS Serverless Developer Advocacy team to stream live videos and perform advanced post-video processing. It uses several AWS services including AWS Step Functions, Amazon EventBridge, AWS Lambda, Amazon ECS, and Amazon Bedrock in a serverless architecture that makes it fast, flexible, and cost-effective. Key features include an event-driven core with loosely coupled microservices that respond to events routed by EventBridge. Step Functions orchestrates video processing using both Lambda and ECS to balance speed, scale, and cost. A flexible plugin-based architecture uses Step Functions and EventBridge to integrate and manage multiple video processing workflows, including GenAI.

ServerlessVideo allows broadcasters to stream video to thousands of viewers using Amazon IVS. When a broadcast ends, a Step Functions workflow triggers a set of configured plugins to process the video, generating transcriptions, validating content, and more. The application incorporates various microservices to support live streaming, on-demand playback, transcoding, transcription, and events. Learn more about the project and watch videos from re:Invent 2023 at video.serverlessland.com.

AWS Lambda

AWS Lambda enabled outbound IPv6 connections from VPC-connected Lambda functions, providing virtually unlimited scale by removing IPv4 address constraints.

The AWS Lambda and AWS SAM teams also added support for sharing test events across teams using AWS SAM CLI to improve collaboration when testing locally.

AWS Lambda introduced integration with AWS Application Composer, allowing users to view and export Lambda function configuration details for infrastructure as code (IaC) workflows.

AWS added advanced logging controls enabling adjustable JSON-formatted logs, custom log levels, and configurable CloudWatch log destinations for easier debugging. AWS enabled monitoring of errors and timeouts occurring during initialization and restore phases in CloudWatch Logs as well, making troubleshooting easier.

For Kafka event sources, AWS enabled failed event destinations to prevent functions stalling on failing batches by rerouting events to SQS, SNS, or S3. AWS also enhanced Lambda auto scaling for Kafka event sources in November to reach maximum throughput faster, reducing latency for workloads prone to large bursts of messages.

AWS launched support for Python 3.12 and Java 21 Lambda runtimes, providing updated libraries, smaller deployment sizes, and better AWS service integration. AWS also introduced a simplified console workflow to automate complex network configuration when connecting functions to Amazon RDS and RDS Proxy.

Additionally in December, AWS enabled faster individual Lambda function scaling allowing each function to rapidly absorb traffic spikes by scaling up to 1000 concurrent executions every 10 seconds.
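To get a feel for what that scaling rate means in practice, here is a rough back-of-the-envelope sketch. It assumes a simplified stepwise model in which a function gains up to 1,000 additional concurrent executions per 10-second interval; the function name `seconds_to_reach` and the model itself are illustrative assumptions, and account-level concurrency limits are ignored.

```python
import math


def seconds_to_reach(target: int, current: int = 0,
                     step: int = 1000, interval_s: int = 10) -> int:
    """Estimate seconds needed to scale from `current` to `target` concurrency.

    Simplified assumption: each 10-second interval adds at most `step`
    concurrent executions. This is a model for estimation only, not a
    description of how the Lambda service schedules capacity.
    """
    if target <= current:
        return 0
    intervals = math.ceil((target - current) / step)
    return intervals * interval_s


if __name__ == "__main__":
    # Scaling from idle to 5,000 concurrent executions.
    print(seconds_to_reach(5000))
    # Scaling from 1,000 to 2,500 concurrent executions.
    print(seconds_to_reach(2500, current=1000))
```

Under this model, a spike that needs 5,000 concurrent executions from a cold start takes roughly five intervals to absorb, which can help when deciding whether provisioned concurrency is still worth considering for a given workload.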

Amazon ECS and AWS Fargate

In Q4 of 2023, AWS introduced several new capabilities across its serverless container services including Amazon ECS, AWS Fargate, AWS App Runner, and more. These features help improve application resilience, security, developer experience, and migration to modern containerized architectures.

In October, Amazon ECS enhanced its task scheduling to start healthy replacement tasks before terminating unhealthy ones during traffic spikes. This prevents going under capacity due to premature shutdowns. Additionally, App Runner launched support for IPv6 traffic via dual-stack endpoints to remove the need for address translation.

In November, AWS Fargate enabled ECS tasks to selectively use SOCI lazy loading for only large container images in a task instead of requiring it for all images. Amazon ECS also added idempotency support for task launches to prevent duplicate instances on retries. Amazon GuardDuty expanded threat detection to Amazon ECS and Fargate workloads which users can easily enable.

Also in November, the open source Finch container tool for macOS became generally available. Finch allows developers to build, run, and publish Linux containers locally. A new website provides tutorials and resources to help developers get started.

Finally in December, AWS Migration Hub Orchestrator added new capabilities for replatforming applications to Amazon ECS using guided workflows. App Runner also improved integration with Route 53 domains to automatically configure required records when associating custom domains.

AWS Step Functions

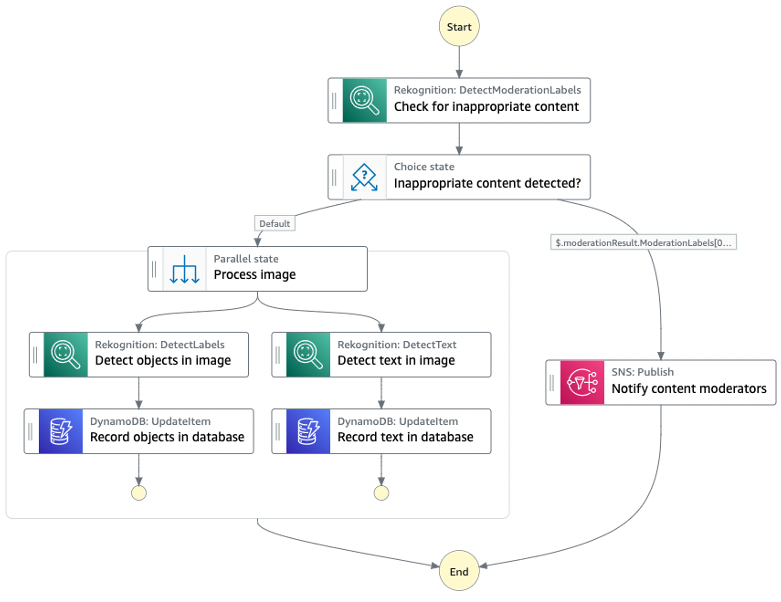

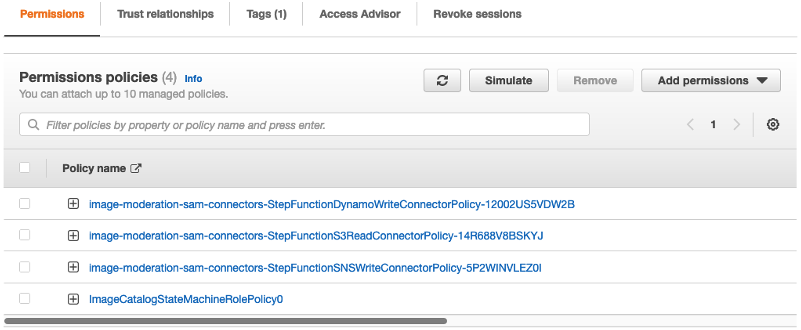

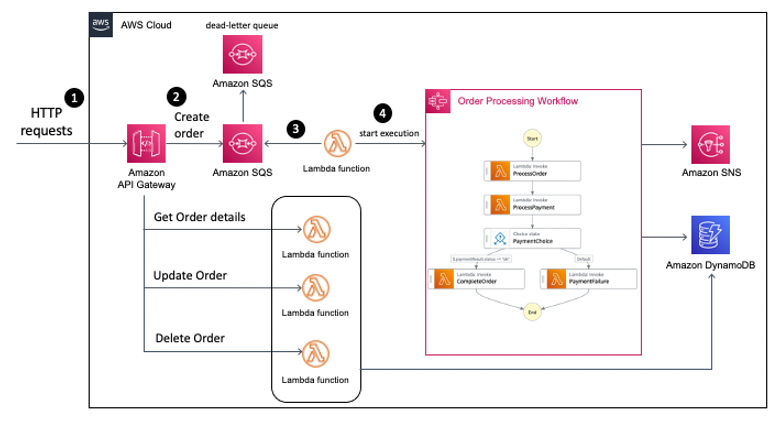

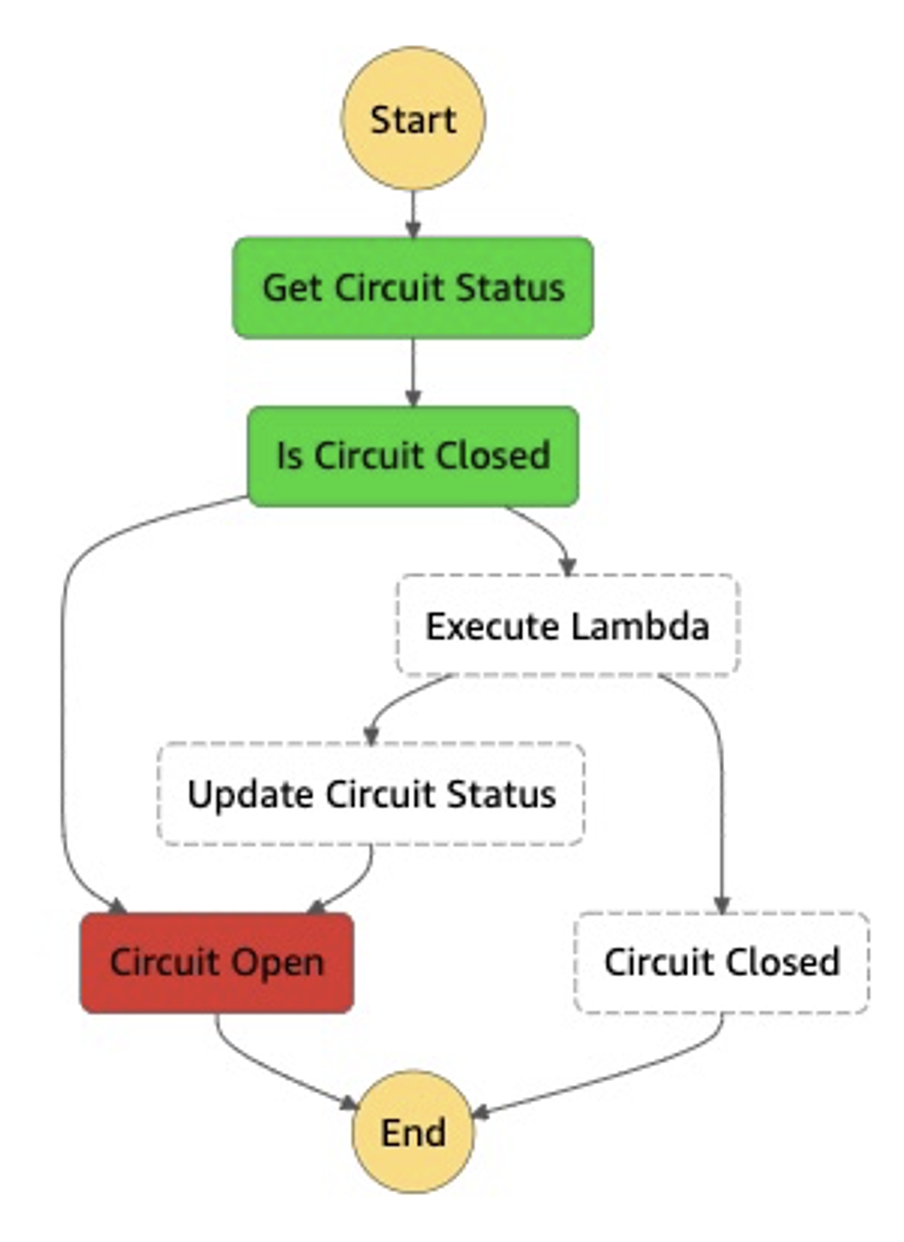

In Q4 2023, AWS Step Functions announced the redrive capability for Standard Workflows. This feature allows failed workflow executions to be redriven from the point of failure, skipping unnecessary steps and reducing costs. The redrive functionality provides an efficient way to handle errors that require longer investigation or external actions before resuming the workflow.

Step Functions also launched support for HTTPS endpoints, enabling easier integration with external APIs and SaaS applications without custom code. Developers can now connect to third-party HTTP services directly within workflows. Additionally, AWS released a new test state capability that allows testing individual workflow states before full deployment. This feature accelerates development by making it faster and simpler to validate data mappings and permissions configurations.
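As a minimal sketch of what an HTTPS endpoint call can look like, the snippet below builds an Amazon States Language definition containing a single HTTP Task. The endpoint URL, the EventBridge connection ARN, and the state name `CallExternalApi` are hypothetical placeholders; authentication is assumed to be handled through an EventBridge connection.

```python
import json

# Hypothetical values for illustration only; replace with your own.
API_ENDPOINT = "https://api.example.com/v1/orders"
CONNECTION_ARN = (
    "arn:aws:events:us-east-1:123456789012:"
    "connection/example-connection/abcd1234"
)


def http_task_state(endpoint: str, connection_arn: str,
                    method: str = "GET") -> dict:
    """Build a Task state that calls an HTTPS endpoint from a workflow."""
    return {
        "Type": "Task",
        "Resource": "arn:aws:states:::http:invoke",
        "Parameters": {
            "ApiEndpoint": endpoint,
            "Method": method,
            # Credentials come from an EventBridge connection (assumed here).
            "Authentication": {"ConnectionArn": connection_arn},
        },
        "End": True,
    }


state_machine = {
    "StartAt": "CallExternalApi",
    "States": {
        "CallExternalApi": http_task_state(API_ENDPOINT, CONNECTION_ARN),
    },
}

if __name__ == "__main__":
    print(json.dumps(state_machine, indent=2))
```

A single state like this is also a natural candidate for the test state capability, since its parameters and permissions can be checked in isolation before the full workflow is deployed.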

AWS announced optimized integrations between AWS Step Functions and Amazon Bedrock for orchestrating generative AI workloads. Two new API actions were added specifically for invoking Bedrock models and training jobs from workflows. These integrations simplify building prompt chaining and other techniques to create complex AI applications with foundation models.

Finally, the Step Functions Workflow Studio is now integrated in the AWS Application Composer. This unified builder allows developers to design workflows and define application resources across the full project lifecycle within a single interface.

Amazon EventBridge

Amazon EventBridge announced support for new partner integrations with Adobe and Stripe. These integrations enable routing events from the Adobe and Stripe platforms to over 20 AWS services. This makes it easier to build event-driven architectures to handle common use cases.

Amazon SNS

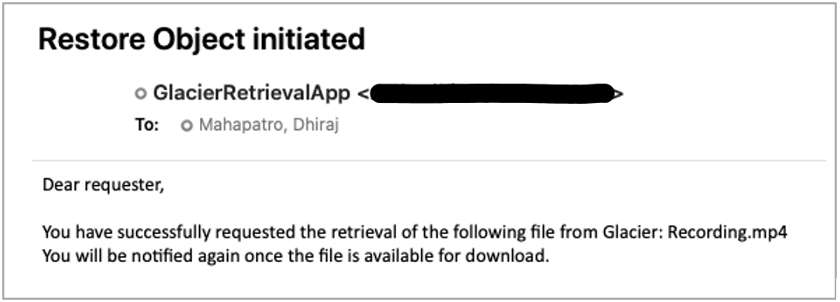

In Q4, Amazon SNS added native in-place message archiving for FIFO topics to improve event stream durability by allowing retention policies and selective replay of messages without provisioning separate resources. Additional message filtering operators were also introduced including suffix matching, case-insensitive equality checks, and OR logic for matching across properties to simplify routing logic implementation for publishers and subscribers. Finally, delivery status logging was enabled through AWS CloudFormation.
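The new filtering operators can be combined in a single subscription filter policy. The sketch below shows one possible policy using suffix matching, case-insensitive equality, and OR logic, together with a small local evaluator for experimenting with it. The attribute names (`source`, `file_name`, `status`) are hypothetical, and the `matches` function is a simplified illustration covering only these operators, not the SNS implementation.

```python
import json

# Example filter policy: source must be "uploads" AND
# (file_name ends with ".png" OR status equals "urgent", ignoring case).
filter_policy = {
    "source": ["uploads"],
    "$or": [
        {"file_name": [{"suffix": ".png"}]},
        {"status": [{"equals-ignore-case": "urgent"}]},
    ],
}


def _value_matches(condition, value: str) -> bool:
    """Check one attribute value against one policy condition."""
    if isinstance(condition, str):
        return value == condition
    if "suffix" in condition:
        return value.endswith(condition["suffix"])
    if "equals-ignore-case" in condition:
        return value.casefold() == condition["equals-ignore-case"].casefold()
    return False  # Operators outside this sketch are treated as no match.


def matches(policy: dict, attributes: dict) -> bool:
    """Return True if the message attributes satisfy the policy."""
    for key, conditions in policy.items():
        if key == "$or":
            if not any(matches(sub, attributes) for sub in conditions):
                return False
        else:
            value = attributes.get(key)
            if value is None:
                return False
            if not any(_value_matches(c, value) for c in conditions):
                return False
    return True


if __name__ == "__main__":
    print(json.dumps(filter_policy, indent=2))
    print(matches(filter_policy, {"source": "uploads", "status": "URGENT"}))
```

Expressing the OR branch in the policy itself means a subscriber no longer needs multiple subscriptions, or filtering code of its own, to receive messages that satisfy either condition.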

Amazon SQS

Amazon SQS introduced several new capabilities that improve visibility, throughput, and message handling. Amazon SQS enabled AWS CloudTrail logging of key SQS APIs, giving customers greater visibility into SQS activity. SQS also significantly increased the throughput quota for the high throughput mode of FIFO queues in certain Regions, and boosted throughput in Asia Pacific Regions. Furthermore, Amazon SQS added dead letter queue redrive support, which allows you to redrive messages that failed and were sent to a dead letter queue (DLQ).

Serverless at AWS re:Invent

Visit the Serverless Land YouTube channel to find a list of serverless and serverless container sessions from re:Invent 2023. Hear from experts like Chris Munns and Julian Wood in their popular session, Best practices for serverless developers, or Nathan Peck and Jessica Deen in Deploying multi-tenant SaaS applications on Amazon ECS and AWS Fargate.

EDA Day Nashville

The AWS Serverless Developer Advocacy team hosted an event-driven architecture (EDA) day conference on October 26, 2023 in Nashville, Tennessee. This inaugural GOTO EDA day convened over 200 attendees ranging from prominent EDA community members to AWS speakers and product managers. Attendees engaged in 13 sessions, two workshops, and panels covering EDA adoption best practices. The event built upon 2022 content by incorporating additional topics like messaging, containers, and machine learning. It also created opportunities for students and underrepresented groups in tech to participate. The full-day conference facilitated education, inspiration, and thoughtful discussion around event-driven architectural patterns and services on AWS.

Videos from EDA Day are now available on the Serverless Land YouTube channel.

Serverless blog posts

October

- Filtering events in Amazon EventBridge with wildcard pattern matching

- Enhancing runtime security and governance with the AWS Lambda Runtime API proxy extension

- Archiving and replaying messages with Amazon SNS FIFO

- Sending and receiving webhooks on AWS: Innovate with event notifications

November

- Orchestrating dependent file uploads with AWS Step Functions

- Introducing faster polling scale-up for AWS Lambda functions configured with Amazon SQS

- Scaling improvements when processing Apache Kafka with AWS Lambda

- Introducing the Amazon Linux 2023 runtime for AWS Lambda

- Enhanced Amazon CloudWatch metrics for Amazon EventBridge

- The serverless attendee’s guide to AWS re:Invent 2023

- Introducing logging support for Amazon EventBridge Pipes

- Converting Apache Kafka events from Avro to JSON using EventBridge Pipes

- Node.js 20.x runtime now available in AWS Lambda

- Managing AWS Lambda runtime upgrades

- Introducing AWS Step Functions redrive to recover from failures more easily

- Triggering AWS Lambda function from a cross-account Amazon Managed Streaming for Apache Kafka

- Introducing the AWS Integrated Application Test Kit (IATK)

- Introducing advanced logging controls for AWS Lambda functions

- Introducing support for read-only management events in Amazon EventBridge

December

- Python 3.12 runtime now available in AWS Lambda

- Introducing Amazon MQ cross-Region data replication for ActiveMQ brokers

Serverless container blog posts

October

- Start Spring Boot applications faster on AWS Fargate using SOCI

- PBS speeds deployment and reduces costs with AWS Fargate

- Scale to 15,000+ tasks in a single Amazon Elastic Container Service (ECS) cluster

- Run time sensitive workloads on ECS Fargate with clock accuracy tracking

November

- A deep dive into Amazon ECS task health and task replacement

- Securing API endpoints using Amazon API Gateway and Amazon VPC Lattice

- Serverless containers at AWS re:Invent 2023

- Migration considerations – Cloud Foundry to Amazon ECS with AWS Fargate

- Build Generative AI apps on Amazon ECS for SageMaker JumpStart

- How Smartsheet optimized cost and performance with AWS Graviton and AWS Fargate

December

- AWS App Runner improves performance for image-based deployments

- Use SMB storage with Windows containers on AWS Fargate

- A deep dive into resilience and availability on Amazon Elastic Container Service

- Run Monte Carlo simulations at scale with AWS Step Functions and AWS Fargate

- Effective use: Amazon ECS lifecycle events with Amazon CloudWatch Logs Insights

Serverless Office Hours

October

- Oct 3 – Governance in depth for serverless apps

- Oct 10 – Serverless observability

- Oct 17 – Super serverless tools with Lars Jacobsson

- Oct 24 – Building GenAI apps

- Oct 31 – Visually build AWS applications

November

- Nov 7 – Bring chaos into serverless

- Nov 14 – Ampt: Just write code!

- Nov 21 – pre:Invent 2023

December

- Dec 5 – Step Functions: what’s new

- Dec 19 – 2023 Year in review

Containers from the Couch

October

- Oct 12 – Introducing ContainersOnAWS.com

- Oct 26 – Amazon ECS Console v2 updates

November

- Nov 9 – ECS Builder Series – John Mille (Sainsbury’s)

- Nov 16 – Diving into Finch 1.0

December

- Dec 15 – Cost optimization on AWS Fargate

FooBar

October

- Oct 5 – Build Applications with Bedrock and Lambda

- Oct 12 – Kinesis Data Streams and Lambda in production – What to do when something fails

- Oct 26 – Build applications with generative AI and Serverless – Amazon Bedrock and AWS Lambda Node.js

November

- Nov 2 – Lambda response streaming | get faster responses from AWS Lambda

- Nov 9 – Stream responses back from Bedrock using Lambda response streaming

December

- Dec 7 – Build generative AI apps using AWS Step Functions and Amazon Bedrock

- Dec 14 – Test State API for Step Functions

- Dec 21 – Invoke external endpoints from AWS Step Functions

Still looking for more?

The Serverless landing page has more information. The Lambda resources page contains case studies, webinars, whitepapers, customer stories, reference architectures, and even more Getting Started tutorials.

You can also follow the Serverless Developer Advocacy team on Twitter to see the latest news, follow conversations, and interact with the team.

And finally, visit the Serverless Land and Containers on AWS websites for all your serverless and serverless container needs.