Post Syndicated from Julian Wood original https://aws.amazon.com/blogs/compute/serverless-icymi-q1-2024/

Welcome to the 25th edition of the AWS Serverless ICYMI (in case you missed it) quarterly recap. Every quarter, we share all the most recent product launches, feature enhancements, blog posts, webinars, live streams, and other interesting things that you might have missed!

In case you missed our last ICYMI, check out what happened last quarter here.

Adobe Summit

At the Adobe Summit, the AWS Serverless Developer Advocacy team showcased a solution developed for the NFL using AWS serverless technologies and Adobe Photoshop APIs. The system automates image processing tasks, including background removal and dynamic resizing, by integrating AWS Step Functions, AWS Lambda, Amazon EventBridge, and AI/ML capabilities via Amazon Rekognition. This solution reduced image processing time from weeks to minutes and saved the NFL significant costs. Combining cloud-based serverless architectures with advanced machine learning and API technologies can optimize digital workflows for cost-effective and agile digital asset management.

ServerlessVideo is a demo application to stream live videos and also perform advanced post-video processing. It uses several AWS services, including Step Functions, Lambda, EventBridge, Amazon ECS, and Amazon Bedrock in a serverless architecture that makes it fast, flexible, and cost-effective. The team used ServerlessVideo to interview attendees about the conference experience and Adobe and partners about how they use Adobe. Learn more about the project and watch videos from Adobe Summit 2024 at video.serverlessland.com.

AWS Lambda

AWS launched support for the latest long-term support release of .NET 8, which includes API enhancements, improved Native Ahead of Time (Native AOT) support, and improved performance.

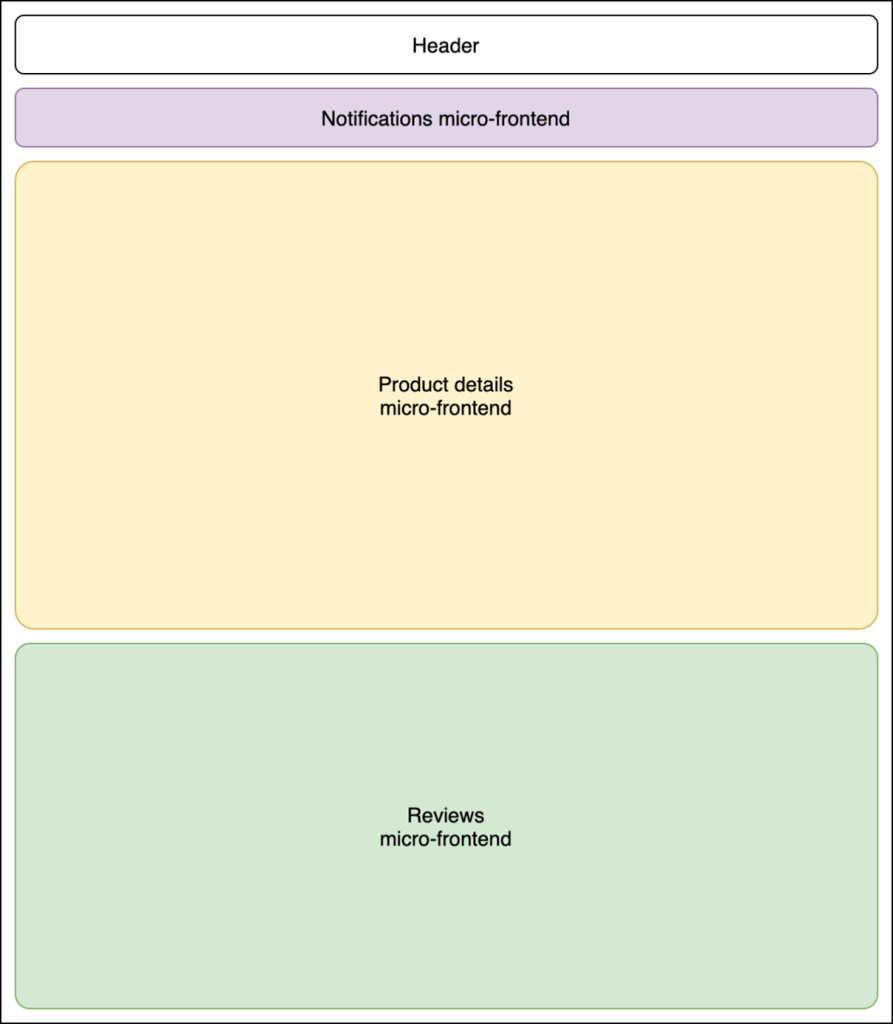

Learn how to compare design approaches for building serverless microservices. This post covers the trade-offs to consider with various application architectures. See how you can apply single-responsibility functions, a Lambda-lith, or separate read and write functions.
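To make the trade-off more concrete, here is a minimal sketch that contrasts single-responsibility functions with a Lambda-lith. It assumes an API Gateway REST proxy event with `httpMethod` and `path` keys. The handler names, the routes, and the in-memory `ORDERS` store are hypothetical and exist only for illustration; they are not taken from the referenced post.

```python
import json

# Hypothetical in-memory store standing in for a real database.
ORDERS = {"1": {"id": "1", "item": "book"}}


def _response(status, body):
    """Build an API Gateway proxy-style response."""
    return {"statusCode": status, "body": json.dumps(body)}


# Single-responsibility style: one function per operation, each of which
# could be deployed, secured, and scaled as its own Lambda function.
def get_order_handler(event, context):
    order_id = event["path"].rsplit("/", 1)[-1]
    order = ORDERS.get(order_id)
    if order is None:
        return _response(404, {"error": "not found"})
    return _response(200, order)


def create_order_handler(event, context):
    order = json.loads(event["body"])
    ORDERS[order["id"]] = order
    return _response(201, order)


# Lambda-lith style: a single function receives every request
# and routes it internally by HTTP method.
ROUTES = {
    "GET": get_order_handler,
    "POST": create_order_handler,
}


def lambdalith_handler(event, context):
    handler = ROUTES.get(event["httpMethod"])
    if handler is None:
        return _response(405, {"error": "method not allowed"})
    return handler(event, context)
```

In this sketch, the read and write approach would sit between the two: one function grouping the read routes and another grouping the write routes, so that each group can carry its own permissions and configuration.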

The AWS Serverless Java Container has been updated. This makes it easier to modernize a legacy Java application written with frameworks such as Spring, Spring Boot, or JAX-RS/Jersey in Lambda with minimal code changes.

Lambda has improved the responsiveness for configuring Event Source Mappings (ESMs) and Amazon EventBridge Pipes with event sources such as self-managed Apache Kafka, Amazon Managed Streaming for Apache Kafka (MSK), Amazon DocumentDB, and Amazon MQ.

Chaos engineering is a popular practice for building confidence in system resilience. However, many existing tools assume the ability to alter infrastructure configurations, and cannot be easily applied to the serverless application paradigm. You can use the AWS Fault Injection Service (FIS) to automate and manage chaos experiments across different Lambda functions to provide a reusable testing method.

Amazon ECS and AWS Fargate

Amazon Elastic Container Service (Amazon ECS) now provides managed instance draining as a built-in feature of Amazon ECS capacity providers. This allows Amazon ECS to safely and automatically drain tasks from Amazon Elastic Compute Cloud (Amazon EC2) instances that are part of an Amazon EC2 Auto Scaling Group associated with an Amazon ECS capacity provider. This simplification allows you to remove custom lifecycle hooks previously used to drain Amazon EC2 instances. You can now perform infrastructure updates such as rolling out a new version of the ECS agent by seamlessly using Auto Scaling Group instance refresh, with Amazon ECS ensuring workloads are not interrupted.
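As a rough illustration, the following sketch builds the request parameters for creating a capacity provider with managed instance draining turned on. It assumes the `managedDraining` field of `autoScalingGroupProvider` in the ECS `CreateCapacityProvider` API. The provider name, the Auto Scaling group ARN, and the managed scaling values are hypothetical placeholders.

```python
def capacity_provider_request(name, asg_arn):
    """Return keyword arguments for the ECS CreateCapacityProvider API."""
    return {
        "name": name,
        "autoScalingGroupProvider": {
            "autoScalingGroupArn": asg_arn,
            # Illustrative managed scaling settings (assumed values).
            "managedScaling": {"status": "ENABLED", "targetCapacity": 100},
            # Ask Amazon ECS to drain tasks before instances terminate.
            "managedDraining": "ENABLED",
        },
    }


# Hypothetical placeholder ARN; substitute your own Auto Scaling group.
params = capacity_provider_request(
    "demo-capacity-provider",
    "arn:aws:autoscaling:us-east-1:123456789012:autoScalingGroup:"
    "example-uuid:autoScalingGroupName/demo-asg",
)

# With boto3 installed and credentials configured, the call would be:
#   import boto3
#   boto3.client("ecs").create_capacity_provider(**params)
```

Keeping the request as a plain dictionary makes it easy to review or unit test before anything is sent to AWS.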

Credentials Fetcher makes it easier to run containers that depend on Windows authentication when using Amazon EC2. Credentials Fetcher now integrates with Amazon ECS, using either the Amazon EC2 launch type, or AWS Fargate serverless compute launch type.

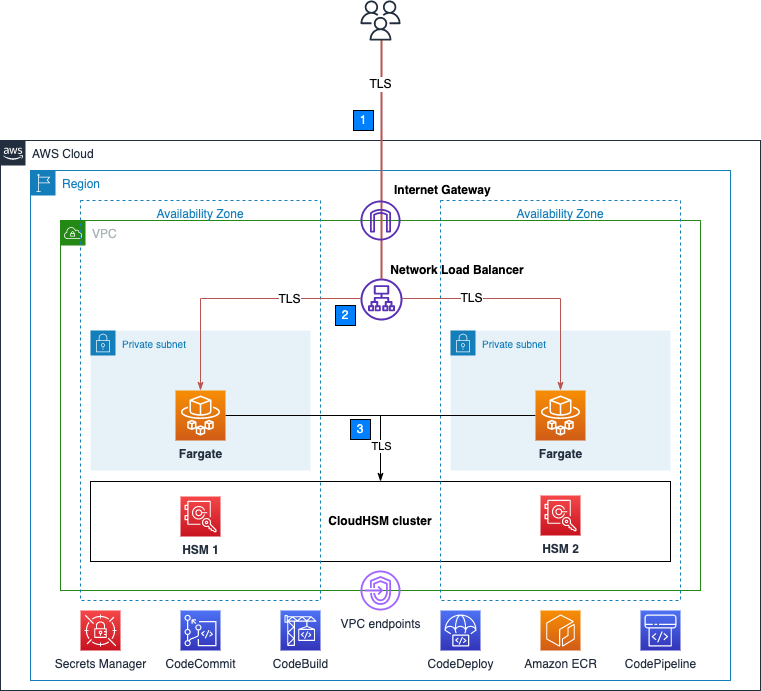

Amazon ECS Service Connect is a networking capability to simplify service discovery, connectivity, and traffic observability for Amazon ECS. You can now more easily integrate certificate management to encrypt service-to-service communication using Transport Layer Security (TLS). You do not need to modify your application code, add additional network infrastructure, or operate service mesh solutions.

Running distributed machine learning (ML) workloads on Amazon ECS allows ML teams to focus on creating, training and deploying models, rather than spending time managing the container orchestration engine. Amazon ECS provides a great environment to run ML projects as it supports workloads that use NVIDIA GPUs and provides optimized images with pre-installed NVIDIA Kernel drivers and Docker runtime.

See how to build preview environments for Amazon ECS applications with AWS Copilot. AWS Copilot is an open-source command line interface that makes it easier to build, release, and operate production-ready containerized applications.

Learn techniques for automatic scaling of your Amazon ECS container workloads to enhance the end user experience. This post explains how to use AWS Application Auto Scaling, which helps you configure automatic scaling of your Amazon ECS service. You can also use Amazon ECS Service Connect and AWS Distro for OpenTelemetry (ADOT) in Application Auto Scaling.

AWS Step Functions

AWS workloads sometimes require access to data stored in on-premises databases and storage locations. Traditional solutions to establish connectivity to the on-premises resources require inbound rules to firewalls, a VPN tunnel, or public endpoints. Discover how to use the MQTT protocol (AWS IoT Core) with AWS Step Functions to dispatch jobs to on-premises workers to access or retrieve data stored on-premises.

You can use Step Functions to orchestrate many business processes. Many industries are required to provide audit trails for decision and transactional systems. Learn how to build a serverless pipeline to create a reliable, performant, traceable, and durable pipeline for audit processing.

Amazon EventBridge

Amazon EventBridge now supports publishing events to AWS AppSync GraphQL APIs as native targets. The new integration allows you to publish events easily to a wider variety of consumers and simplifies updating clients with near real-time data.

Discover how to send and receive CloudEvents with EventBridge. CloudEvents is an open-source specification for describing event data in a common way. You can publish CloudEvents directly to EventBridge, filter and route them, and use input transformers and API Destinations to send CloudEvents to downstream AWS services and third-party APIs.
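One way to picture this is the sketch below, which builds a CloudEvents 1.0 envelope and wraps it in an EventBridge `PutEvents` entry. Carrying the whole CloudEvent in the `Detail` field is an assumption made for this example, not necessarily the only mapping. The source name, event type, and payload are hypothetical.

```python
import json
import uuid
from datetime import datetime, timezone


def make_cloudevent(source, event_type, data):
    """Build a CloudEvents 1.0 envelope (JSON format) as a dict."""
    return {
        # Required CloudEvents context attributes.
        "specversion": "1.0",
        "id": str(uuid.uuid4()),
        "source": source,
        "type": event_type,
        # Optional attributes.
        "time": datetime.now(timezone.utc).isoformat(),
        "datacontenttype": "application/json",
        "data": data,
    }


def to_put_events_entry(cloudevent, bus_name="default"):
    """Wrap a CloudEvent in an EventBridge PutEvents entry.

    Assumption: the full CloudEvent is serialized into Detail.
    """
    return {
        "EventBusName": bus_name,
        "Source": cloudevent["source"],
        "DetailType": cloudevent["type"],
        "Detail": json.dumps(cloudevent),
    }


# Hypothetical source, type, and payload.
event = make_cloudevent(
    "com.example.orders", "com.example.order.created", {"orderId": "1"}
)
entry = to_put_events_entry(event)

# With boto3 installed and credentials configured, the call would be:
#   import boto3
#   boto3.client("events").put_events(Entries=[entry])
```

Because the envelope attributes stay intact inside `Detail`, an EventBridge rule could in principle filter on fields such as `detail.type` before routing the event onward.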

AWS Application Composer

AWS Application Composer lets you create infrastructure as code templates by dragging and dropping cards on a virtual canvas. These represent CloudFormation resources, which you can wire together to create permissions and references. Application Composer has now expanded to the VS Code IDE as part of the AWS Toolkit. This now includes a generative AI partner that helps you write infrastructure as code (IaC) for all 1100+ AWS CloudFormation resources that Application Composer now supports.

Amazon API Gateway

Learn how to consume private Amazon API Gateway APIs using mutual TLS (mTLS). mTLS helps prevent man-in-the-middle attacks and protects against threats such as impersonation attempts, data interception, and tampering.

Serverless at AWS re:Invent

Visit the Serverless Land YouTube channel to find a list of serverless and serverless container sessions from re:Invent 2023. Hear from experts like Chris Munns and Julian Wood in their popular session, Best practices for serverless developers, or Nathan Peck and Jessica Deen in Deploying multi-tenant SaaS applications on Amazon ECS and AWS Fargate.

Serverless blog posts

January

- Using generative infrastructure as code with Application Composer

- Consuming private Amazon API Gateway APIs using mutual TLS

- Invoking on-premises resources interactively using AWS Step Functions and MQTT

- Build real-time applications with Amazon EventBridge and AWS AppSync

February

- Re-platforming Java applications using the updated AWS Serverless Java Container

- Introducing the .NET 8 runtime for AWS Lambda

March

- Comparing design approaches for building serverless microservices

- Sending and receiving CloudEvents with Amazon EventBridge

- Automating chaos experiments with AWS Fault Injection Service and AWS Lambda

Serverless container blog posts

January

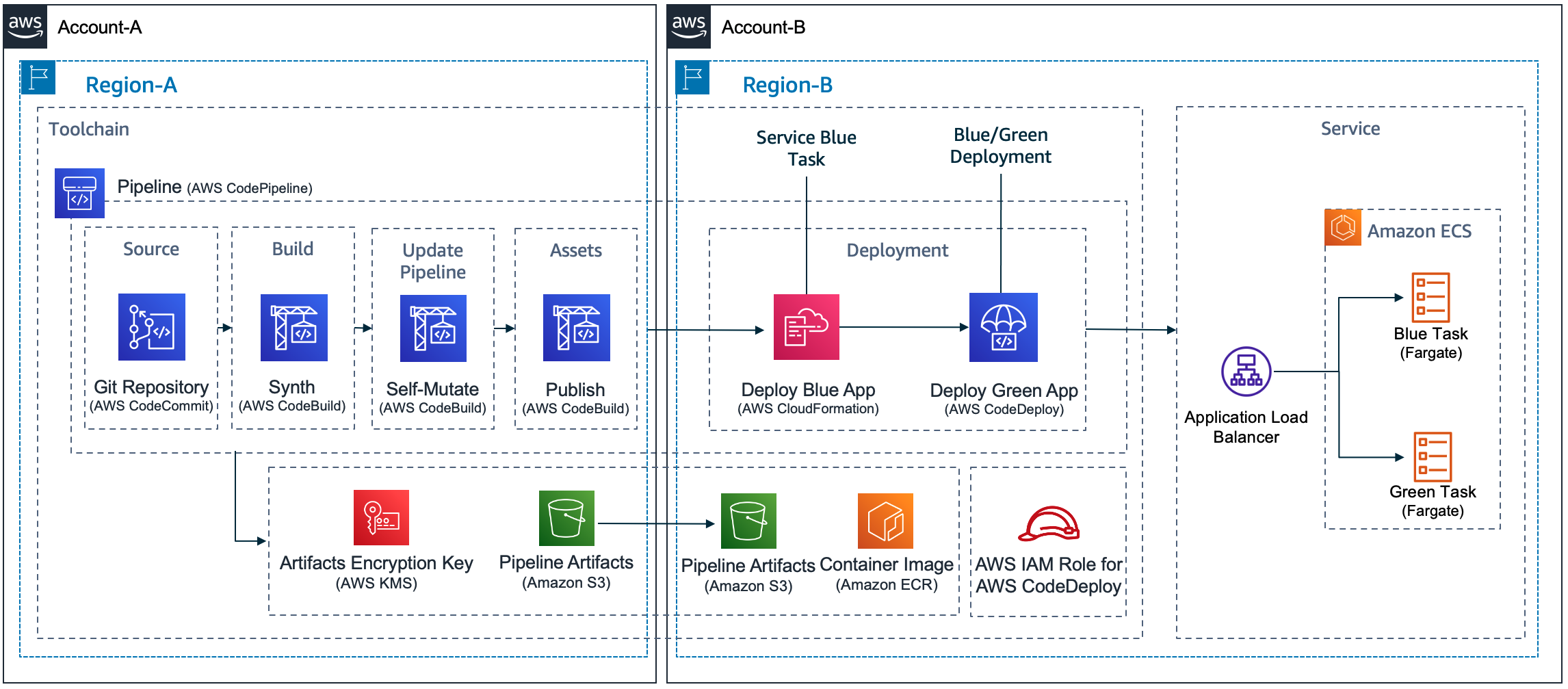

- Signing and Validating OCI Artifacts with AWS Signer

- Amazon ECS enables easier EC2 capacity management, with managed instance draining

- Secure Amazon Elastic Container Service workloads with Amazon ECS Service Connect

- Build preview environments for Amazon ECS applications with AWS Copilot

February

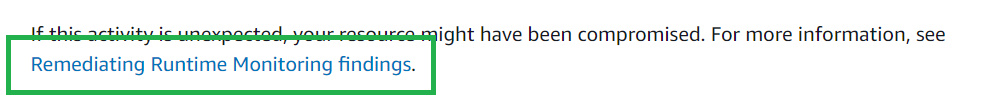

- How Perry Street Software Implemented Resilient Deployment Strategies with Amazon ECS

- Distributed machine learning with Amazon ECS

March

- Windows authentication with gMSA on Linux containers on Amazon ECS with AWS Fargate

- Scale your Amazon ECS using different AWS native services!

Serverless Office Hours

January

- Jan 9 – Introducing ServerlessVideo

- Jan 16 – Serverless Containers

- Jan 23 – API Gateway private integrations

- Jan 30 – Connecting to Salesforce using EventBridge

February

- Feb 6 – Comparing Apache Airflow and Step Functions

- Feb 13 – Refactoring Java applications to serverless

- Feb 20 – Lambda performance tuning

- Feb 27 – Building well architected API Gateway APIs

March

- Mar 5 – Using the new .NET 8 runtime in Lambda

- Mar 12 – Combining Kafka and EventBridge

- Mar 19 – Java AI/ML on Lambda with Human Graphics

- Mar 26 – Lambda low latency runtime

Containers from the Couch

January

February

- Feb 8 – Building your containers on Windows with Finch

- Feb 15 – ECS Builder Series with Autodesk

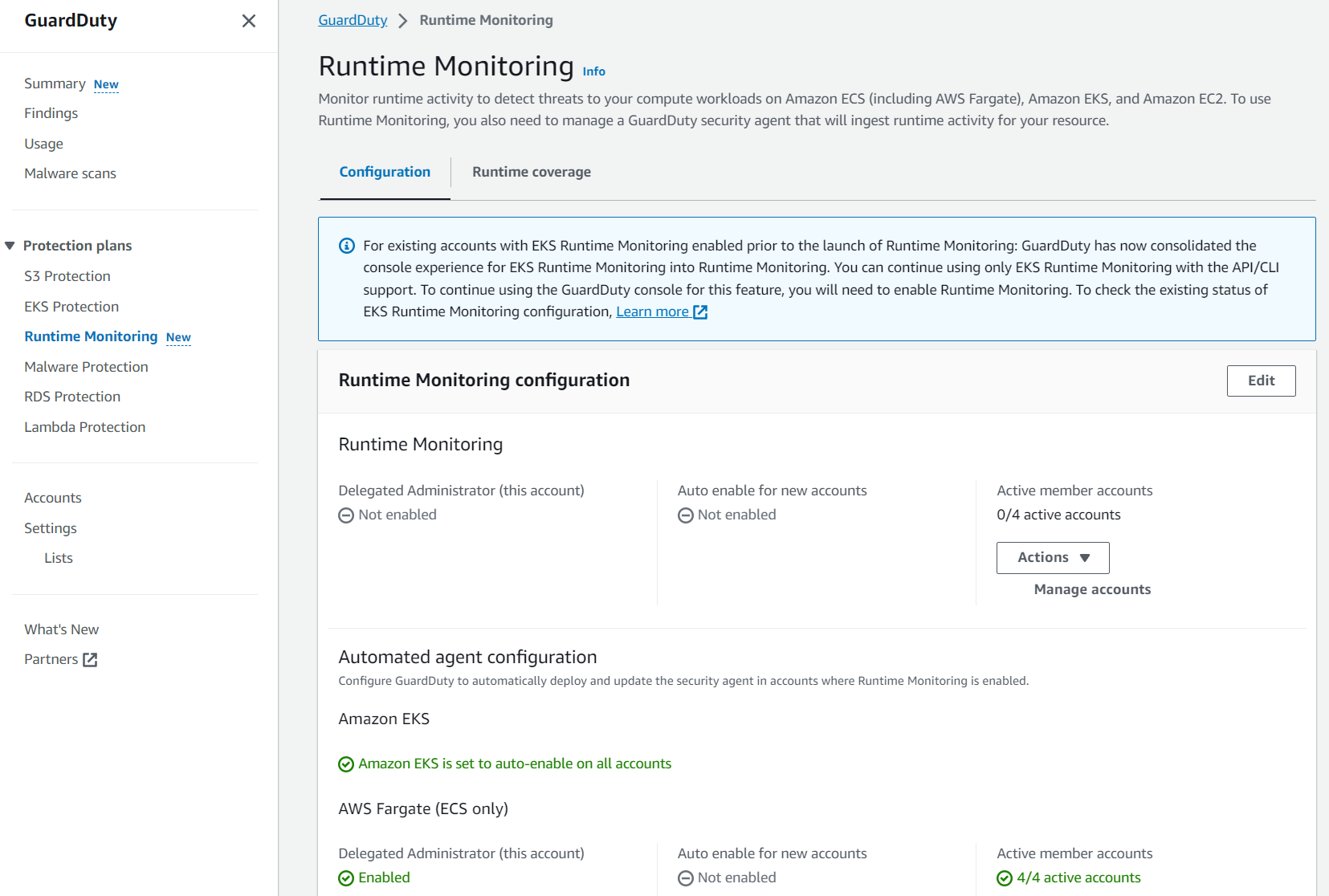

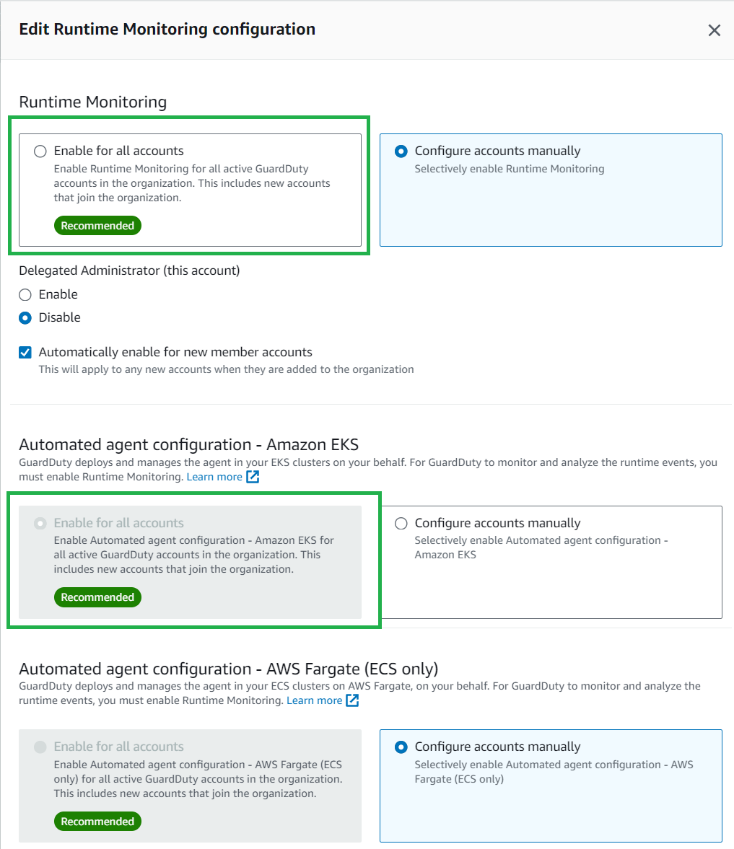

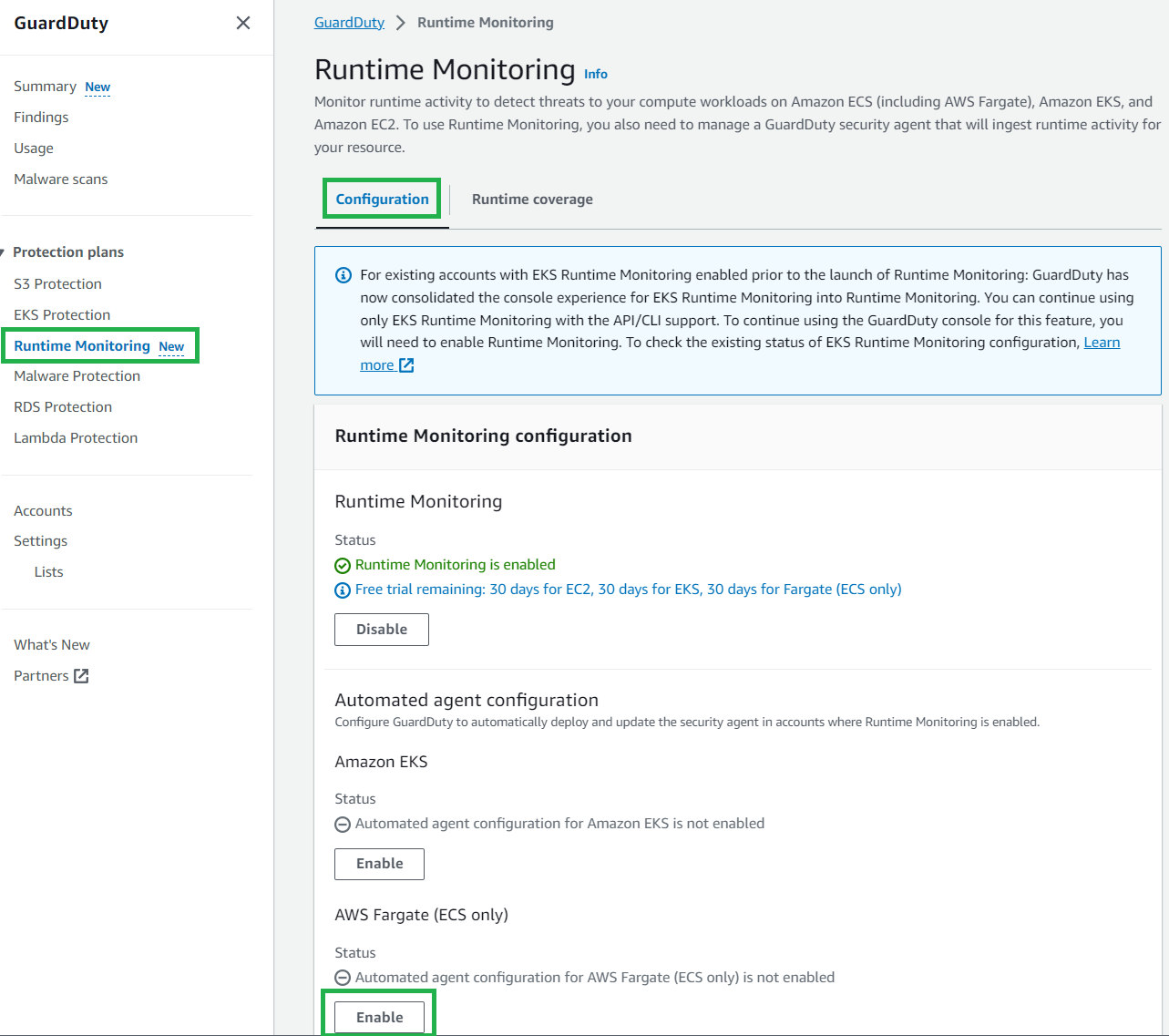

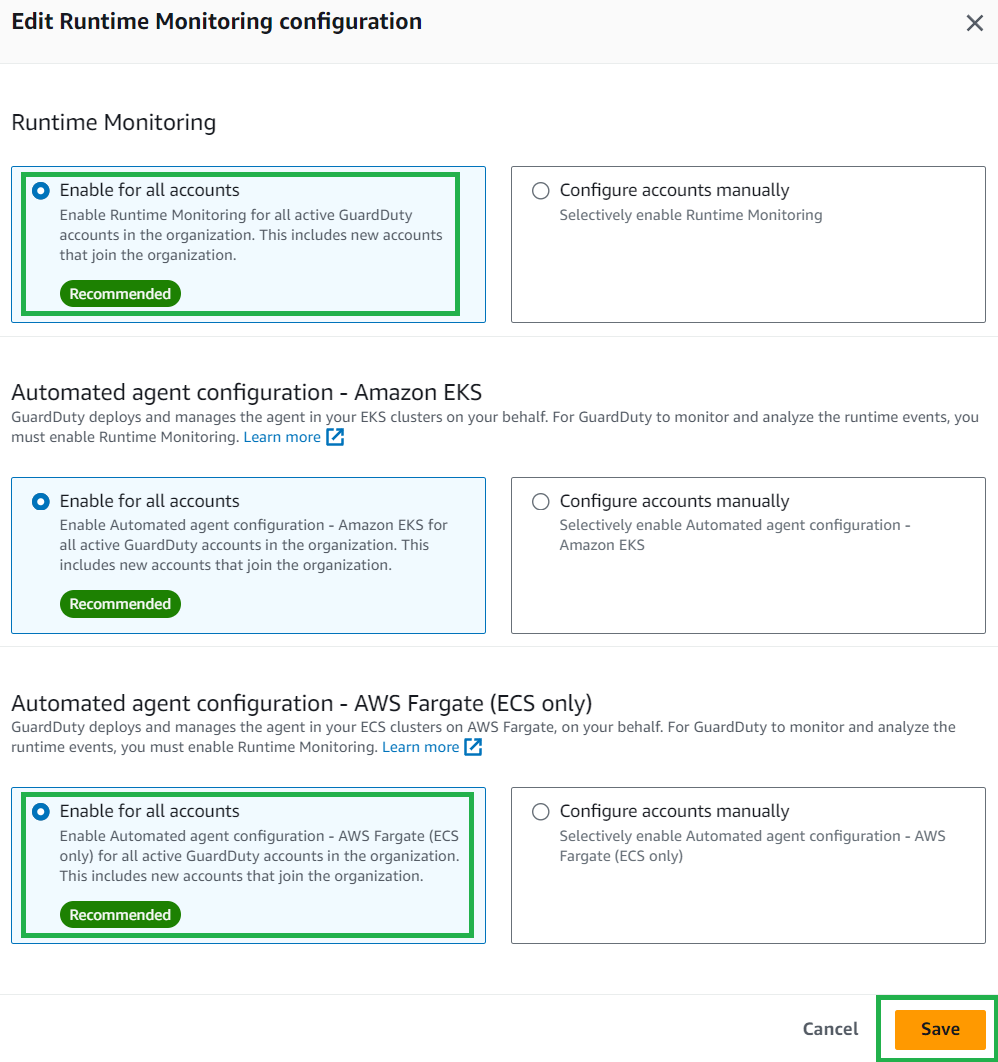

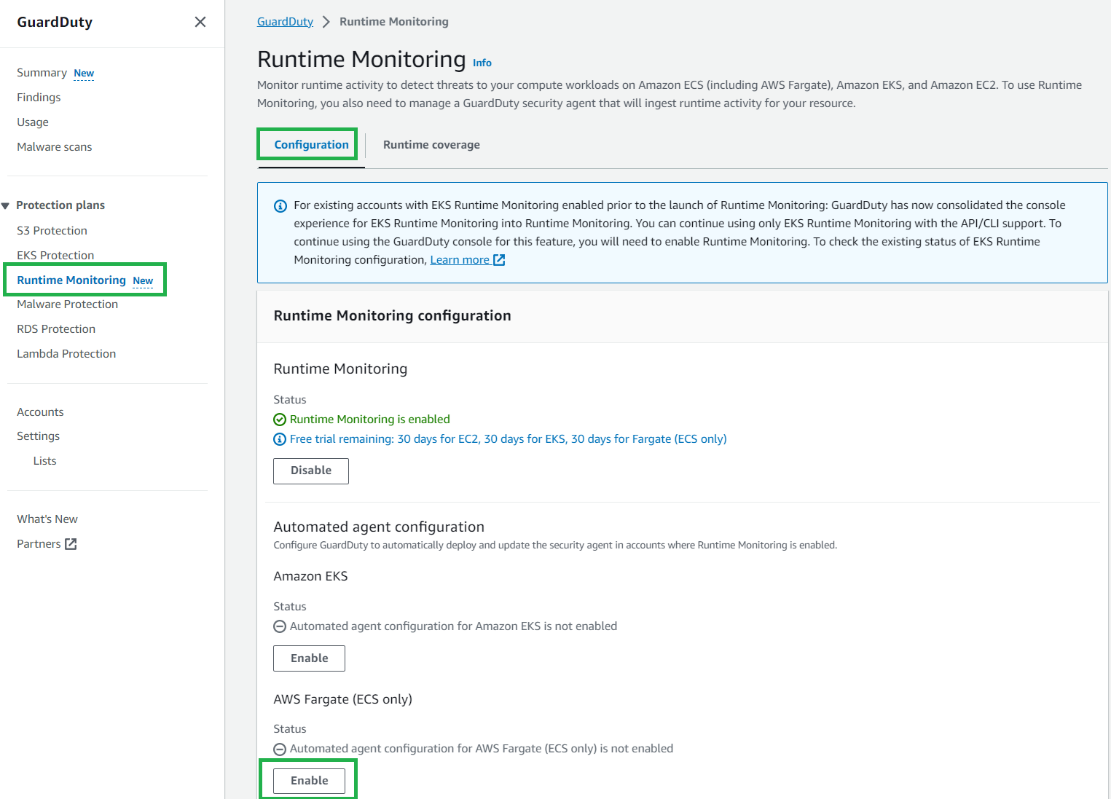

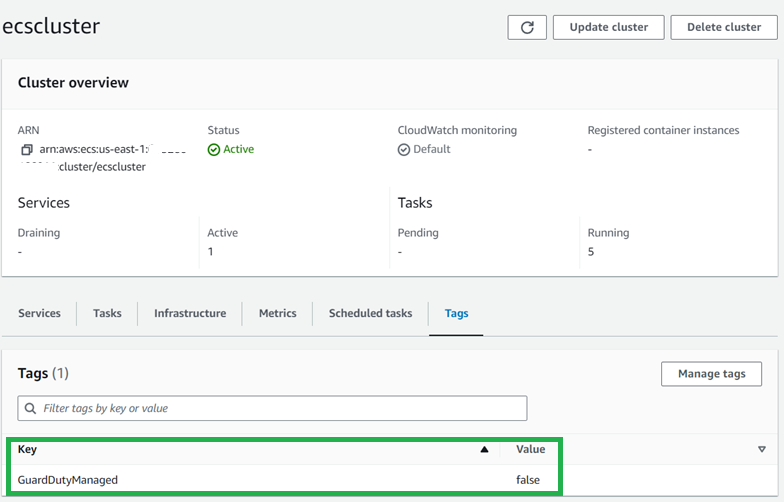

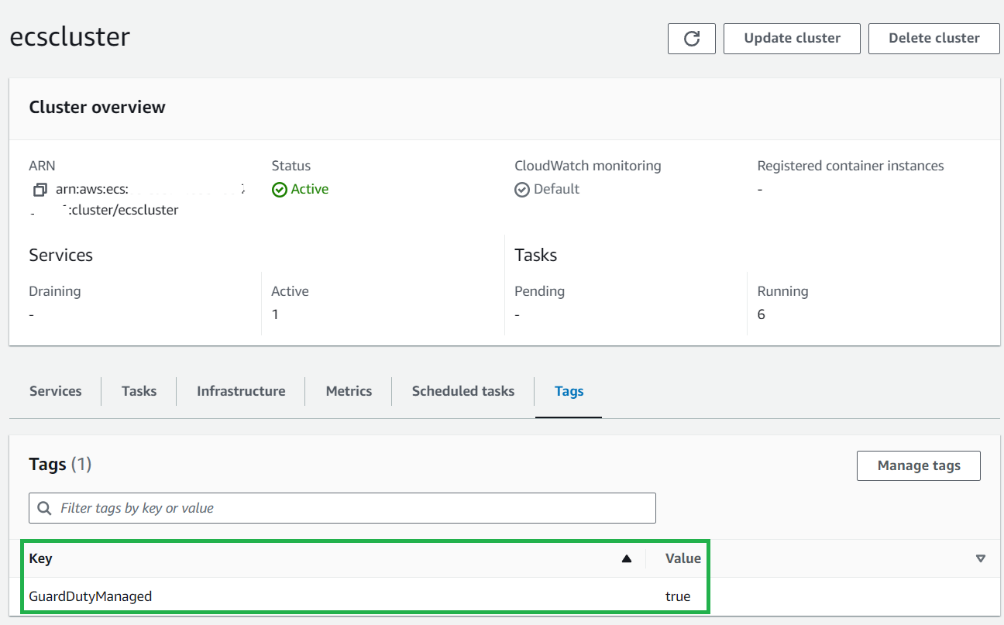

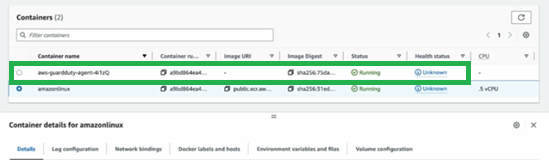

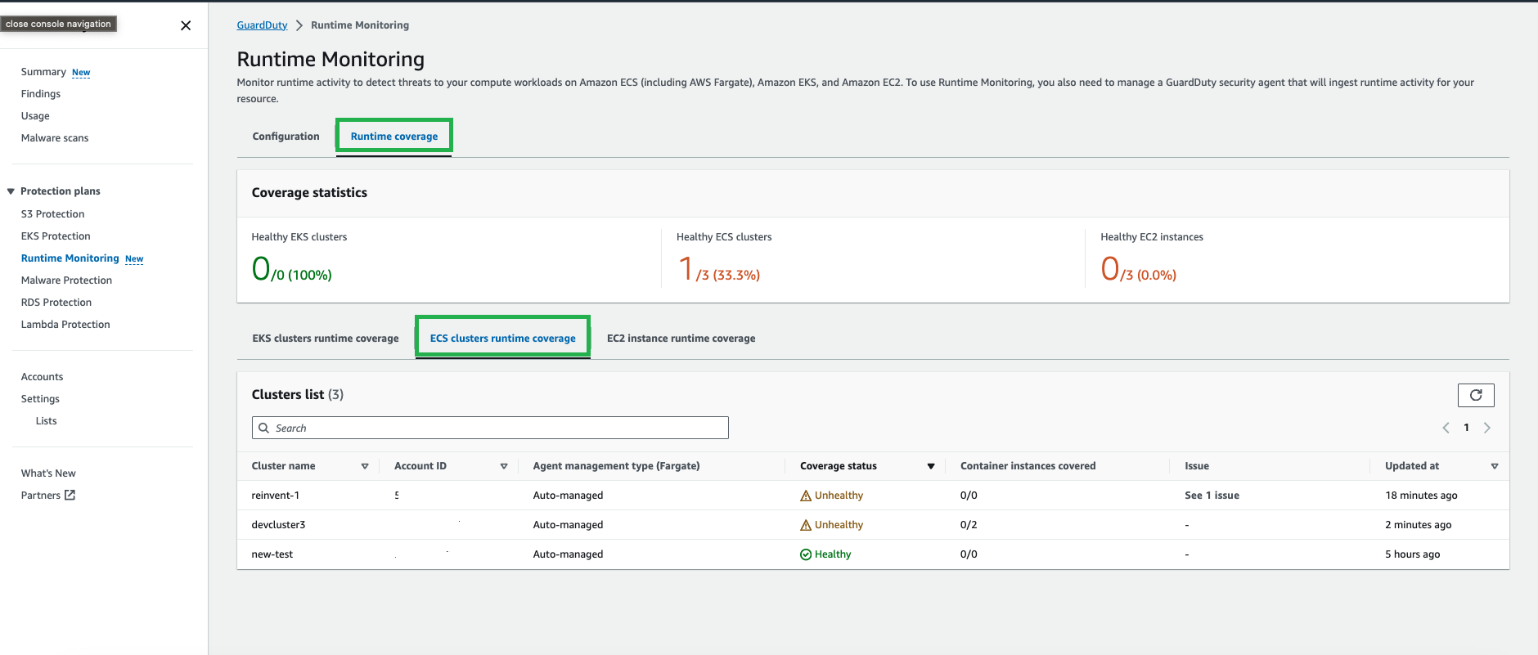

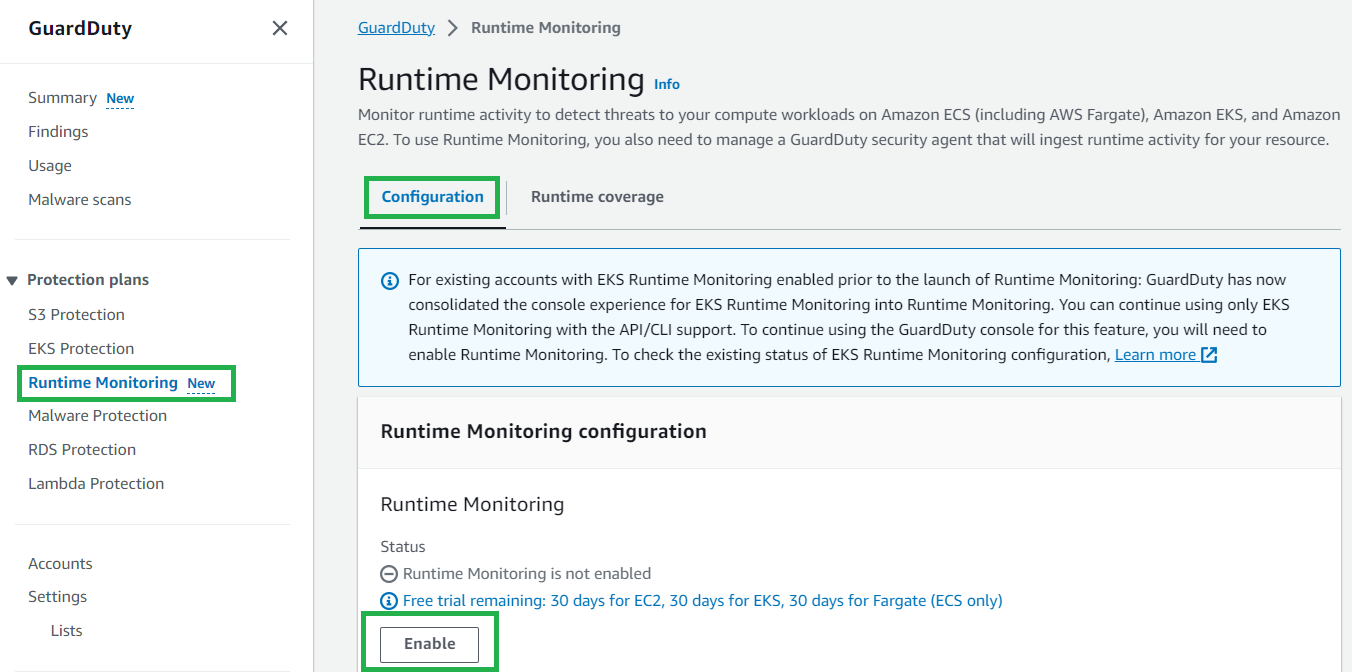

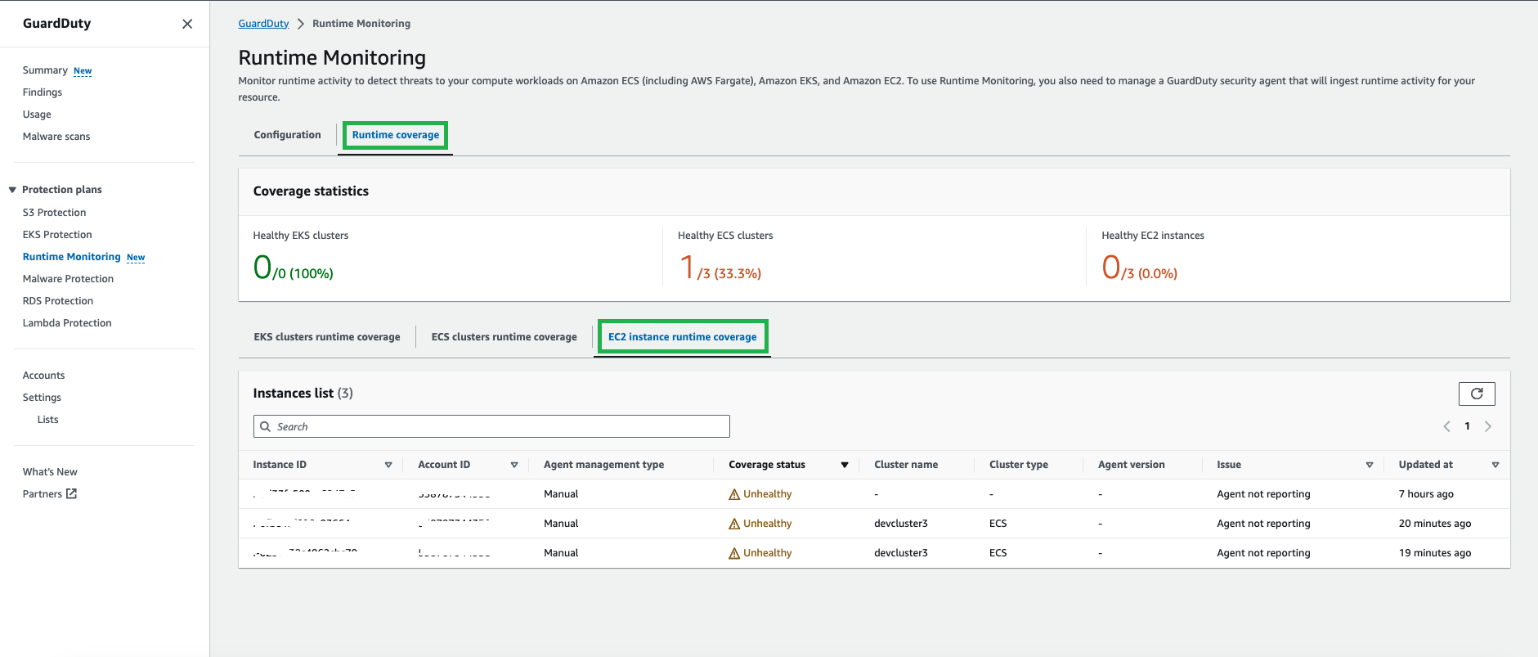

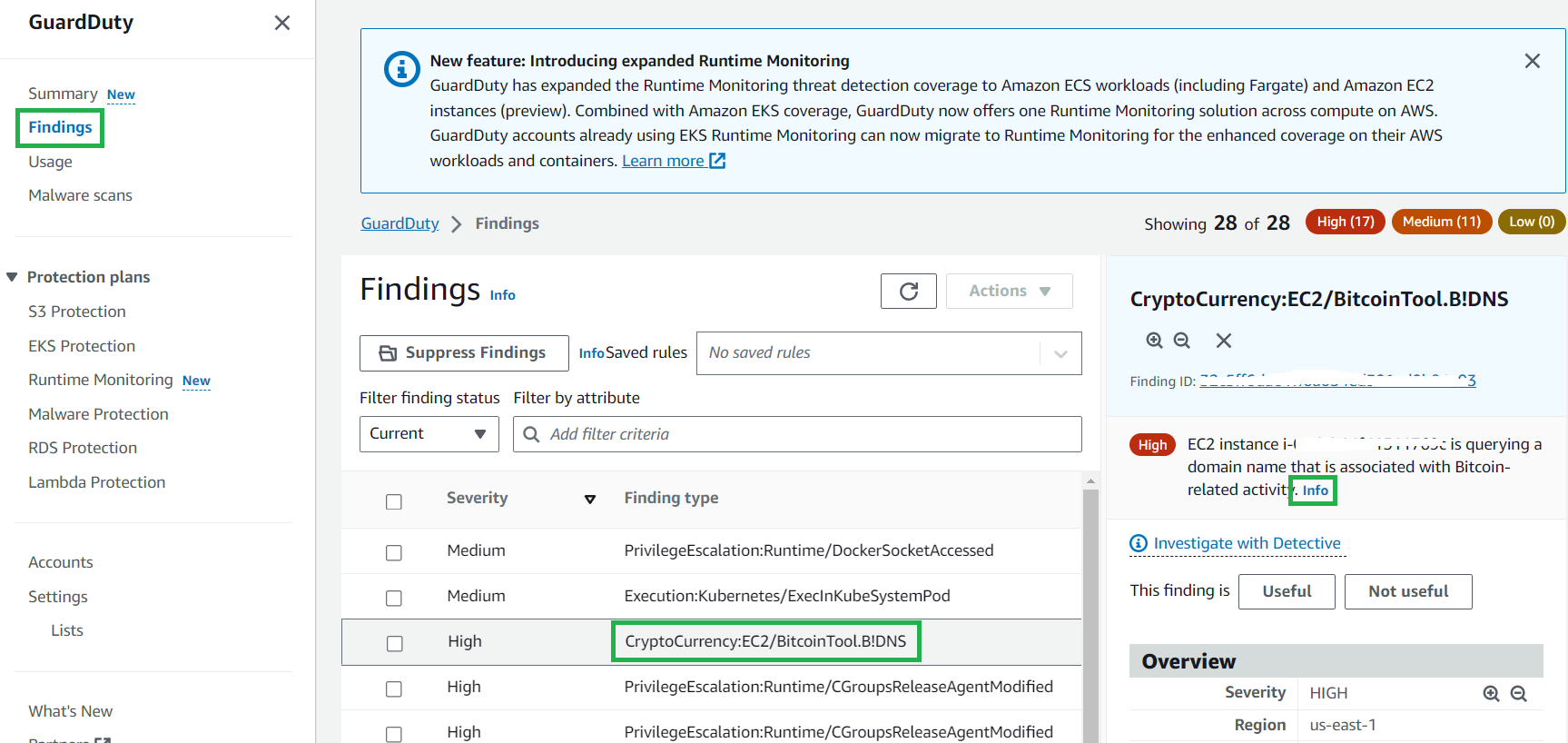

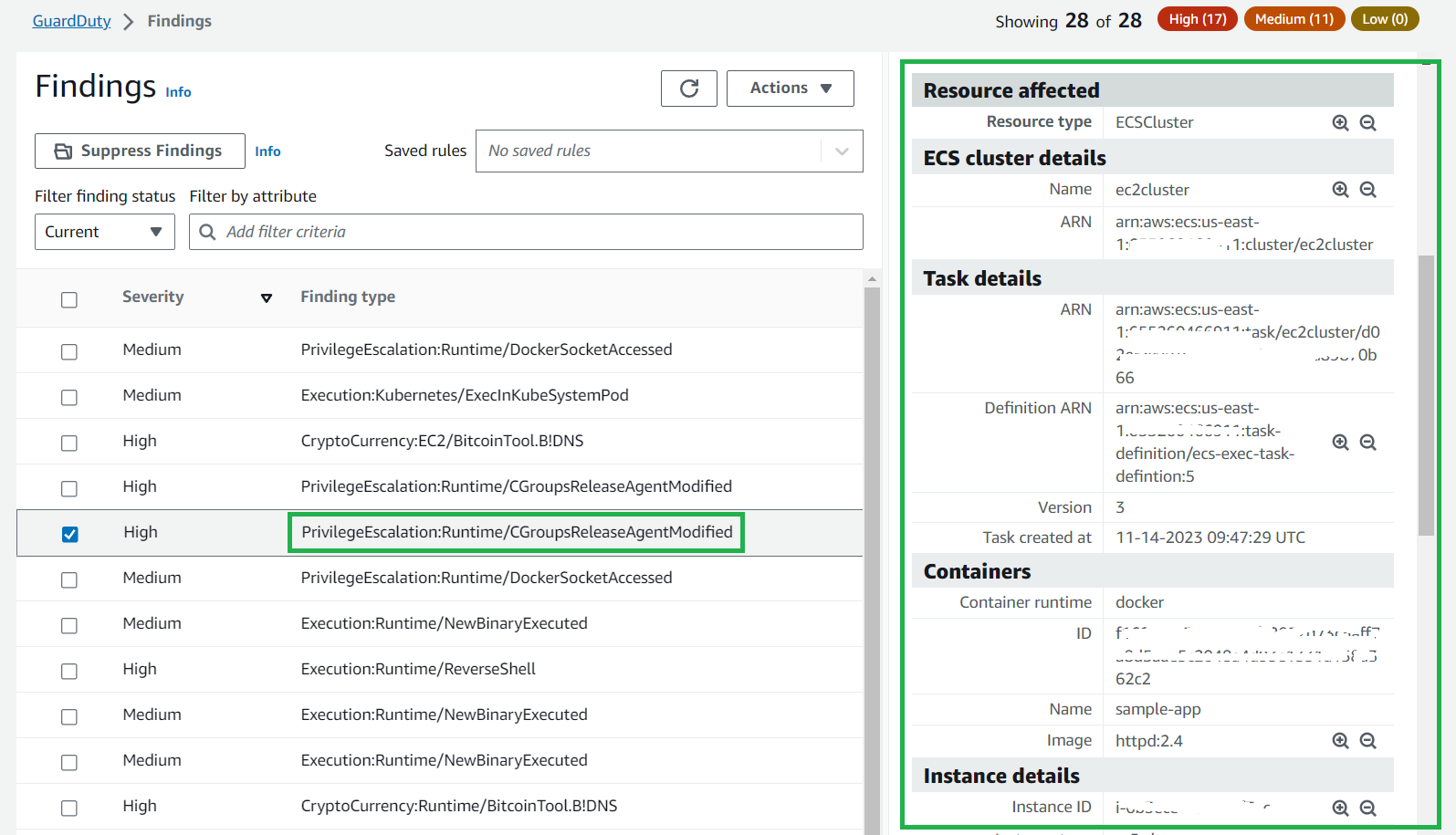

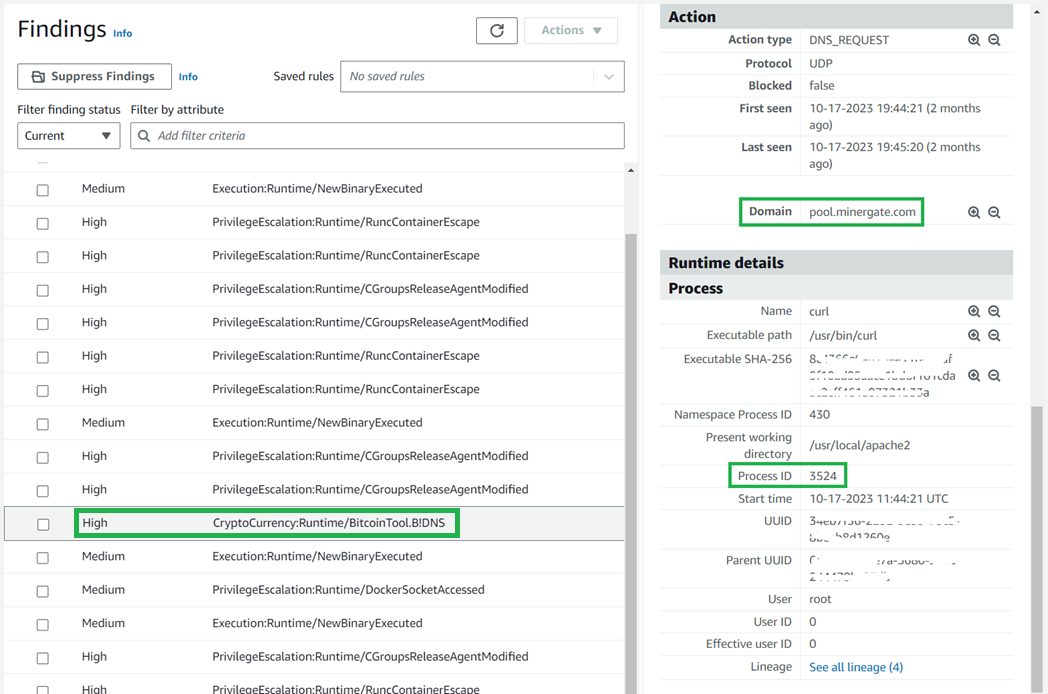

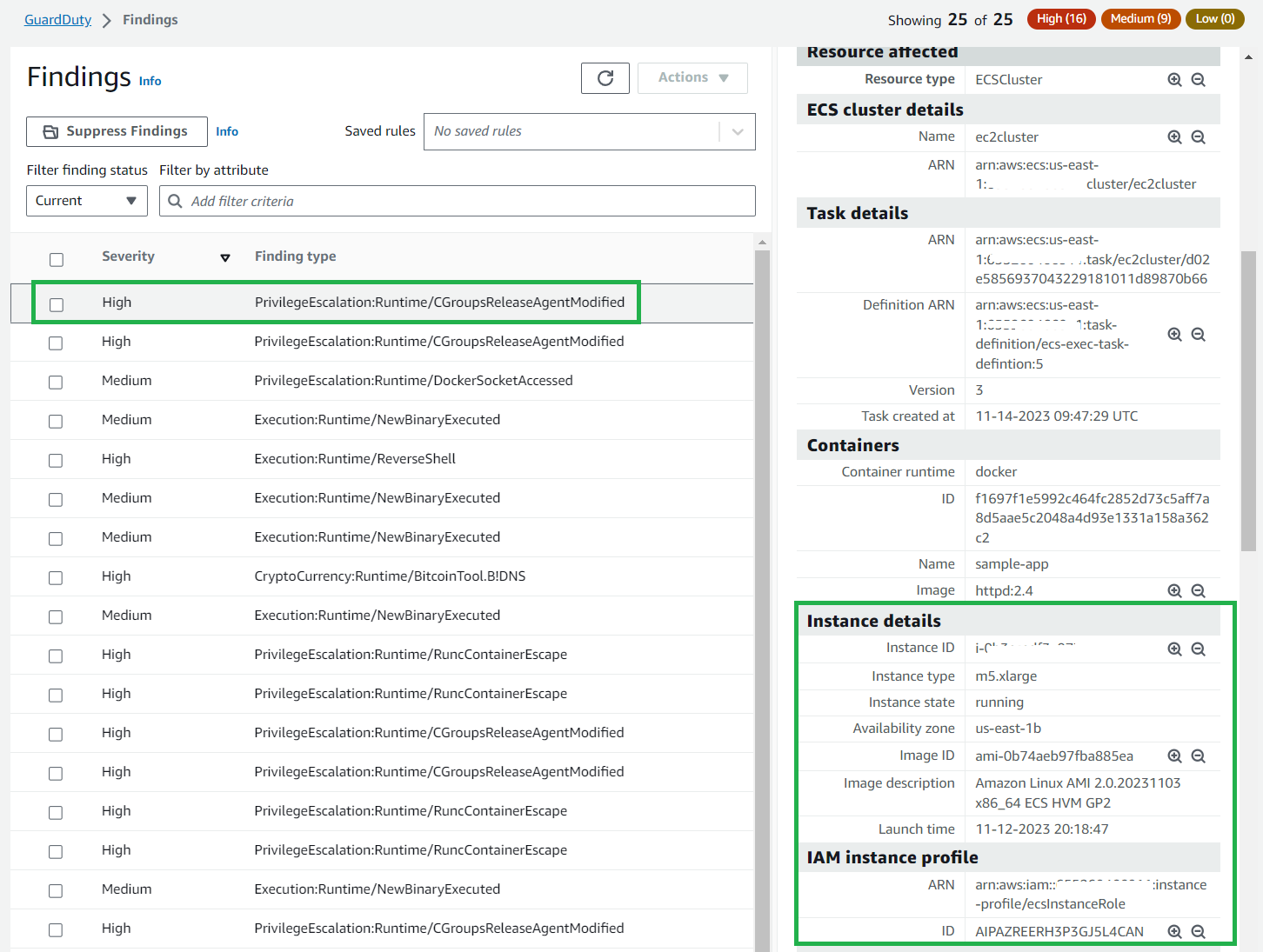

- Feb 29 – Amazon GuardDuty ECS Runtime Monitoring

March

FooBar Serverless

January

- Jan 11 – Bedrock Agents and Knowledge bases from a developer perspective with Demo!

- Jan 18 – What’s new in AppComposer? Integration with Visual Studio Code and Step Functions Workflow Studio!

- Jan 24 – Step Functions optimized integration with Amazon Bedrock

February

- Feb 1 – Introduction to AWS Step Functions – what is this service for? Use cases? Benefits?

- Feb 8 – Must know concepts to work with Step Functions | State types, data management, and workflow types

- Feb 15 – Create your AWS Step Functions workflows with AWS SAM

- Feb 22 – Create your AWS Step Functions workflows with AWS CDK

- Feb 29 – Step Functions Service Integration Patterns

March

- Mar 7 – Step Functions Error Handling Mechanisms

- Mar 14 – Mastering AWS Step Functions: Cost Analysis and Optimization Techniques with Ben Smith

- Mar 21 – Advanced Step Functions Patterns with Ben Smith

- Mar 28 – Run a long execution job with no hassle and for free with Step Functions

Still looking for more?

The Serverless landing page has more information. The Lambda resources page contains case studies, webinars, whitepapers, customer stories, reference architectures, and even more Getting Started tutorials.

You can also follow the Serverless Developer Advocacy team on Twitter to see the latest news, follow conversations, and interact with the team.

And finally, visit the Serverless Land and Containers on AWS websites for all your serverless and serverless container needs.

Happy Lunar New Year! Wishing you a year filled with joy, success, and endless opportunities! May the Year of the Dragon bring uninterrupted connections and limitless growth
