Post Syndicated from Tristan Nguyen original https://aws.amazon.com/blogs/messaging-and-targeting/build-better-engagement-using-the-aws-community-engagement-flywheel-part-1-of-3/

Introduction

Part 1 of 3: Extending the Cohort Modeler

Businesses are constantly looking for better ways to engage with customer communities, but it’s hard to do when profile data is limited to user-completed form input or messaging campaign interaction metrics. Neither of these data sources tells a business much about its customers’ interests or preferences when they’re engaging with that community.

To bridge this gap for their community of customers, AWS Game Tech created the Cohort Modeler: a deployable solution for developers to map out and classify player relationships and identify like behavior within a player base. Additionally, the Cohort Modeler allows customers to aggregate and categorize player metrics by leveraging behavioral science and customer data.

In this series of three blog posts, you’ll learn how to:

- Extend the Cohort Modeler to provide reporting functionality.

- Use Amazon Pinpoint, the Digital User Engagement Events Database (DUE Events Database), and the Cohort Modeler together to group your customers into cohorts based on that data.

- Use automation to send them meaningful messaging.

- Enrich their behavioral profiles via their interaction with your messaging.

In this blog post, we’ll show how to extend Cohort Modeler’s functionality to include cohort reporting and extraction.

Use Case Examples for The Cohort Modeler

For this example, we’re going to retrieve a cohort of individuals from our Cohort Modeler who we’ve identified as at risk:

- Maybe they’ve triggered internal alarms where they’ve shared potential PII with others over cleartext

- Maybe they’ve joined chat channels known to be frequented by some of the game’s less upstanding citizens.

Either way, we want to make sure they understand the risks of what they’re doing and who they’re dealing with.

Because the Cohort Modeler’s API automatically translates the data it’s provided into the graph data format, the request we’re making is an easy one: we’re simply asking CM to retrieve all of the player IDs where the player’s ea_atrisk attribute value is greater than 2.

In our case, that means either:

- They’ve shared PII at least twice, or

- They’ve shared PII at least once and joined the #give-me-your-credit-card chat channel, which is frequented by real-life scammers.

These are currently the only two activities which generate at-risk data in our example model.
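To make the threshold semantics concrete, here is a minimal in-memory sketch of the same filter. The player records and the at_risk_player_ids helper are hypothetical stand-ins of our own; the real query runs against the Neptune graph through Gremlin.

```python
# Hypothetical in-memory stand-in for the graph; the real data lives in Neptune.
players = [
    {"playerId": "p-001", "ea_atrisk": 3},
    {"playerId": "p-002", "ea_atrisk": 1},
    {"playerId": "p-003", "ea_atrisk": 5},
    {"playerId": "p-004"},  # no at-risk activity recorded
]


def at_risk_player_ids(records, attribute="ea_atrisk", threshold=2):
    """Return IDs of players whose attribute value is strictly greater than threshold."""
    return [
        record["playerId"]
        for record in records
        if attribute in record and record[attribute] > threshold
    ]


print(at_risk_player_ids(players))
```

Players without the attribute are simply skipped, which mirrors how a graph query only matches vertices that carry the property.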

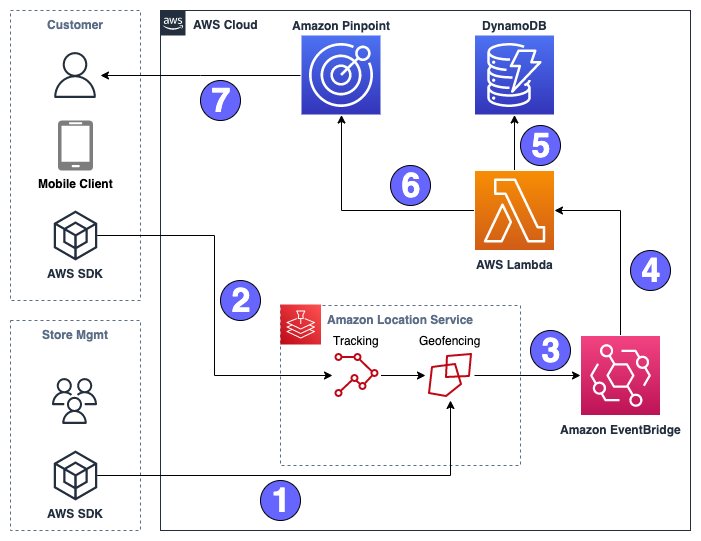

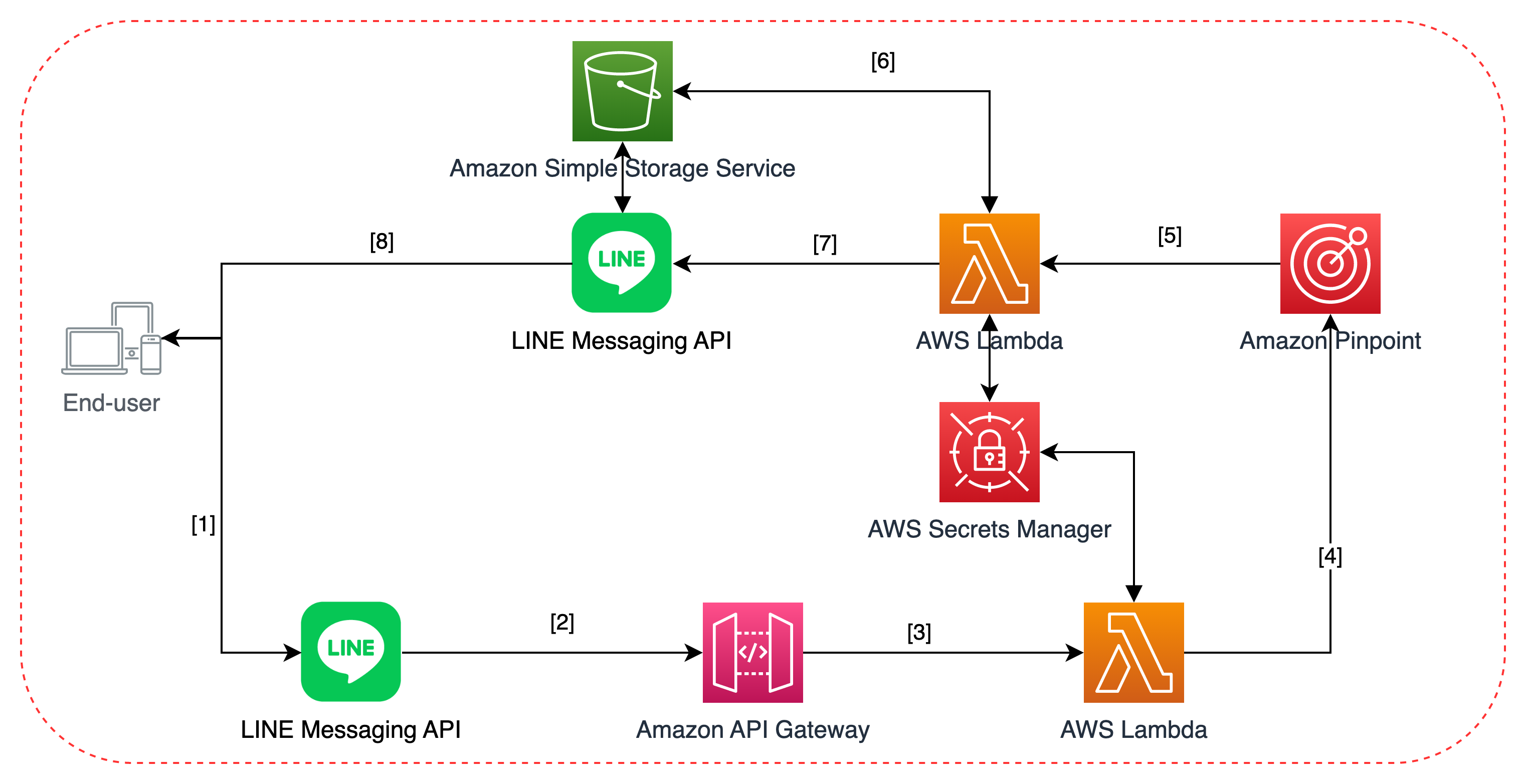

Architecture overview

In this example, you’ll extend Cohort Modeler’s functionality by creating a new API resource and method, and test that functional extension to verify it’s working. This supports our use case by providing a studio with a mechanism to identify the cohort of users who have engaged in activities that may put them at risk for fraud or malicious targeting.

Prerequisites

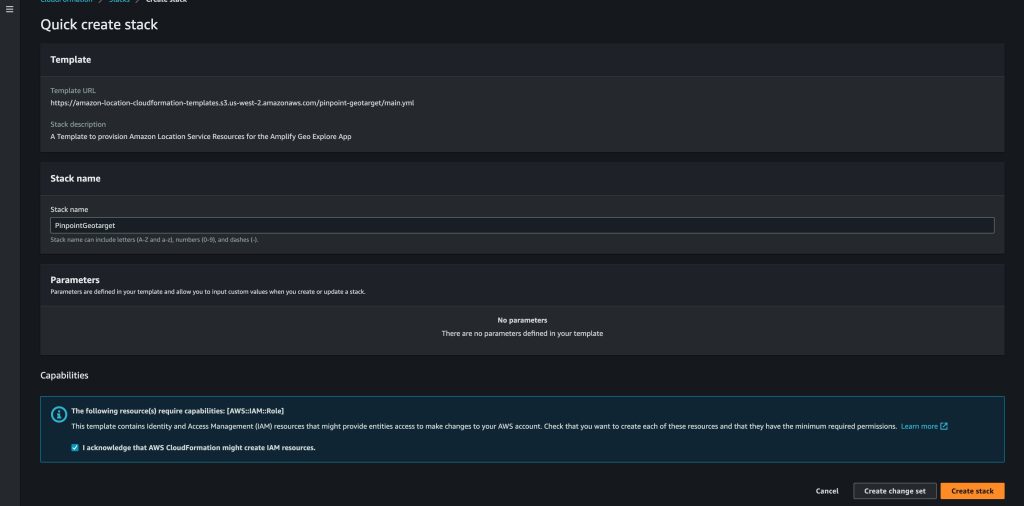

This blog post series integrates two tech stacks: the Cohort Modeler and the Digital User Engagement Events Database, both of which you’ll need to install. In addition to setting up your environment, you’ll need to clone the Community Engagement Flywheel repository, which contains the scripts you’ll need to use to integrate Cohort Modeler and Pinpoint.

You should have the following prerequisites:

- Create and activate a new AWS account for the procedure.

- Use either an Amazon Elastic Compute Cloud (Amazon EC2) instance or your local computer to follow the instructions on GitHub to install the Cohort Modeler using the AWS Serverless Application Model (AWS SAM) template.

- You should also have a working knowledge of Python and pip3, and basic OS-level operations.

- Deploy the respective solutions. Check out the Troubleshooting section if you run into any errors.

Walkthrough

Extending the Cohort Modeler

In order to meet our functional requirements, we’ll need to extend the Cohort Modeler API. This first part will walk you through the mechanisms to do so. In this walkthrough, you’ll:

- Create an Amazon Simple Storage Service (Amazon S3) bucket to accept exports from the Cohort Modeler

- Create an AWS Lambda Layer to support Python operations for Cohort Modeler’s Gremlin interface to the Amazon Neptune database

- Build a Lambda function to respond to API calls requesting cohort data, and

- Integrate the Lambda with the Amazon API Gateway.

The S3 Export Bucket

Normally it’d be enough to just create the S3 bucket, but because our Cohort Modeler operates inside an Amazon Virtual Private Cloud (VPC), we need to both create the bucket and create a gateway endpoint.

Create the Bucket

The size of a Cohort Modeler extract could be considerable depending on the size of a cohort, so it’s a best practice to deliver the extract to an S3 bucket. All you need to do in this step is create a new S3 bucket for Cohort Modeler exports.

- Navigate to the S3 Console page, and inside the main pane, choose Create Bucket.

- In the General configuration section, enter a bucket name, remembering that its name must be unique across all of AWS.

- You can leave all other settings at their default values, so scroll down to the bottom of the page and choose Create Bucket. Remember the name – I’ll be referring to it as your “CM export bucket” from here on out.
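If you prefer to script this step, the following is a minimal sketch using boto3. The bucket name is a hypothetical placeholder and the helper names are ours; the only AWS call used is the S3 create_bucket operation, which needs a location constraint in every Region except us-east-1.

```python
def bucket_request(bucket_name, region):
    """Build keyword arguments for the S3 CreateBucket call."""
    params = {"Bucket": bucket_name}
    # us-east-1 is the default location and must not be passed as a constraint.
    if region != "us-east-1":
        params["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return params


def create_export_bucket(bucket_name, region):
    """Create the bucket. Requires boto3 and valid AWS credentials."""
    import boto3  # imported lazily so bucket_request works without boto3

    s3 = boto3.client("s3", region_name=region)
    return s3.create_bucket(**bucket_request(bucket_name, region))


# Hypothetical bucket name; replace it with your own globally unique name.
print(bucket_request("my-cm-export-bucket", "us-west-2"))
```

Calling create_export_bucket("my-cm-export-bucket", "us-west-2") would perform the actual creation with the default settings, as in the console steps above.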

Create S3 Gateway endpoint

When accessing “global” services, like S3 (as opposed to VPC services, like EC2) from inside a private VPC, you need to create an Endpoint for that service inside the VPC. For more information on how Gateway Endpoints for Amazon S3 work, refer to this documentation.

- Open the Amazon VPC console.

- In the navigation pane, under Virtual private cloud, choose Endpoints.

- In the Endpoints pane, choose Create endpoint.

- In the Endpoint settings section, under Service category, select AWS services.

- In the Services section, under find resources by attribute, choose Type, and select the filter Type: Gateway and select com.amazonaws.region.s3.

- In the VPC section, select the VPC in which to create the endpoint.

- In the Route tables section, select the route tables to be used by the endpoint. We automatically add a route that points traffic destined for the service to the endpoint network interface.

- In the Policy section, select Full access to allow all operations by all principals on all resources over the VPC endpoint. Otherwise, select Custom to attach a VPC endpoint policy that controls the permissions that principals have to perform actions on resources over the VPC endpoint.

- (Optional) To add a tag, choose Add new tag in the Tags section and enter the tag key and the tag value.

- Choose Create endpoint.
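The console steps above can also be sketched with boto3. The VPC and route table IDs below are hypothetical placeholders, and the helper names are ours; the call itself is the EC2 create_vpc_endpoint operation. Omitting a policy document leaves the default full-access policy in place, matching the Full access option.

```python
def gateway_endpoint_request(vpc_id, route_table_ids, region):
    """Build keyword arguments for an S3 gateway endpoint."""
    return {
        "VpcEndpointType": "Gateway",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.s3",
        "RouteTableIds": list(route_table_ids),
    }


def create_s3_gateway_endpoint(vpc_id, route_table_ids, region):
    """Create the endpoint. Requires boto3 and valid AWS credentials."""
    import boto3  # imported lazily so the request builder works without boto3

    ec2 = boto3.client("ec2", region_name=region)
    return ec2.create_vpc_endpoint(
        **gateway_endpoint_request(vpc_id, route_table_ids, region)
    )


# Hypothetical IDs for illustration only.
print(gateway_endpoint_request("vpc-0123456789abcdef0", ["rtb-0123456789abcdef0"], "us-east-1"))
```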

Create the VPC Endpoint Security Group

One of the things the endpoint needs to know is which network interfaces to accept connections from, so we’ll need to create a security group to establish that trust.

- Navigate to the Amazon VPC console and, in the navigation pane, under Security, choose Security groups.

- In the Security Groups pane choose Create security group.

- Under the Basic details section, name your security group S3 Endpoint SG.

- Under the Inbound Rules section, choose Add Rule.

- Under Type, select All traffic.

- Under Source, leave Custom selected.

- For the Custom Source, open the dropdown and choose the S3 prefix list used by the gateway endpoint (for example, pl-63a5400a in us-east-1).

- Repeat the process for Outbound rules.

- When finished, choose Create security group
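As a rough scripted equivalent of the rules above, the sketch below adds an all-traffic inbound and outbound rule scoped to a prefix list. The helper names are ours and the IDs are placeholders; the calls are the EC2 authorize_security_group_ingress and authorize_security_group_egress operations. Prefix list IDs are Region-specific, so treat the one shown as an example only.

```python
def prefix_list_permission(prefix_list_id):
    """Build an 'all traffic' rule scoped to a single prefix list."""
    return [
        {
            "IpProtocol": "-1",  # -1 means all protocols and ports
            "PrefixListIds": [{"PrefixListId": prefix_list_id}],
        }
    ]


def allow_s3_prefix_list(group_id, prefix_list_id, region):
    """Add matching inbound and outbound rules. Requires boto3 and credentials."""
    import boto3  # imported lazily so the rule builder works without boto3

    ec2 = boto3.client("ec2", region_name=region)
    permissions = prefix_list_permission(prefix_list_id)
    ec2.authorize_security_group_ingress(GroupId=group_id, IpPermissions=permissions)
    ec2.authorize_security_group_egress(GroupId=group_id, IpPermissions=permissions)


# Example prefix list ID; look up the one for your own Region.
print(prefix_list_permission("pl-63a5400a"))
```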

Creating a Lambda Layer

You can use the code as provided in a Lambda, but the gremlin libraries required for it to run are another story: gremlin_python doesn’t come as part of the default Lambda libraries. There are two ways to address this:

- You can upload the libraries with the code in a .zip file; this will work, but it will mean the Lambda isn’t editable via the built-in editor, which isn’t a scalable technique (and makes debugging quick changes a real chore).

- You can create a Lambda Layer, upload those libraries separately, and then associate them with the Lambda you’re creating.

The Layer is a best practice, so that’s what we’re going to do here.

Creating the zip file

In Python, you’ll need to upload a .zip file to the Layer, and all of your libraries need to be included in paths within the /python directory (inside the zip file) to be accessible. Use pip to install the libraries you need into a blank directory so you can zip up only what you need, and no more.

- Create a new subdirectory in your user directory,

- Create a /python subdirectory,

- Invoke pip3 with the --target option:

pip3 install --target=./python gremlinpython

Ensure that you’re zipping the python folder: the resulting file should be named python.zip and should extract to a python folder.
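If you’d rather script the packaging step, the sketch below builds the archive with Python’s standard zipfile module and checks that every entry sits under a top-level python/ folder. The helper names (zip_layer, has_layer_layout) and the throwaway demo directory are our own illustrations, not part of the Cohort Modeler repository.

```python
import os
import tempfile
import zipfile


def zip_layer(source_dir, zip_path):
    """Zip source_dir so every entry is stored under a top-level python/ folder."""
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for root, _dirs, files in os.walk(source_dir):
            for name in files:
                full_path = os.path.join(root, name)
                relative = os.path.relpath(full_path, source_dir)
                archive.write(full_path, os.path.join("python", relative))
    return zip_path


def has_layer_layout(zip_path):
    """Return True if the archive is non-empty and every entry lives under python/."""
    with zipfile.ZipFile(zip_path) as archive:
        names = archive.namelist()
    return bool(names) and all(name.startswith("python/") for name in names)


# Demonstration with a throwaway directory standing in for ./python.
with tempfile.TemporaryDirectory() as work:
    source = os.path.join(work, "python")
    os.makedirs(os.path.join(source, "gremlin_python"))
    with open(os.path.join(source, "gremlin_python", "__init__.py"), "w") as handle:
        handle.write("")
    layout_ok = has_layer_layout(zip_layer(source, os.path.join(work, "python.zip")))

print(layout_ok)
```

Running has_layer_layout against your real python.zip before uploading is a quick way to catch an archive that was zipped from inside the folder rather than around it.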

Creating the Layer

Head to the Lambda console, and select the Layers menu option from the AWS Lambda navigation pane. From there:

- Choose Create layer in the Layers section.

- Give it a relevant name, like gremlinpython.

- Select Upload a .zip file and upload the zip file you just created.

- For Compatible architectures, select x86_64.

- Select Python 3.8 as your runtime.

- Choose Create.

Assuming all steps have been followed, you’ll receive a message that the layer has been successfully created.
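For reference, the same layer can be published programmatically. This is a sketch under the assumption that python.zip is small enough for a direct upload; the helper names are ours, and the call is the Lambda publish_layer_version operation with the runtime and architecture chosen above.

```python
def layer_request(layer_name, zip_bytes):
    """Build keyword arguments for publishing a Lambda layer version."""
    return {
        "LayerName": layer_name,
        "Content": {"ZipFile": zip_bytes},
        "CompatibleRuntimes": ["python3.8"],
        "CompatibleArchitectures": ["x86_64"],
    }


def publish_layer(layer_name, zip_path, region):
    """Upload the archive as a new layer version. Requires boto3 and credentials."""
    import boto3  # imported lazily so the request builder works without boto3

    with open(zip_path, "rb") as handle:
        zip_bytes = handle.read()
    client = boto3.client("lambda", region_name=region)
    return client.publish_layer_version(**layer_request(layer_name, zip_bytes))


# Placeholder bytes just to show the shape of the request.
print(sorted(layer_request("gremlinpython", b"").keys()))
```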

Building the Lambda

You’ll be extending the Cohort Modeler with new functionality, and the way CM manages its functionality is via microservice-based Lambdas. You’ll be building a new API: to query the CM and extract Cohort information to S3.

Create the Lambda

Head back to the Lambda service menu and, in the Resources for (your region) section, choose Create Function. From there:

- On the Create function page select Author from scratch.

- For Function Name enter ApiCohortGet for consistency.

- For Runtime choose Python 3.8.

- For Architectures, select x86_64.

- Under the Advanced Settings pane select Enable VPC – you’re going to need this Lambda to query Cohort Modeler’s Neptune database, which has VPC endpoints.

- Under VPC select the VPC created by the Cohort Modeler installation process.

- Select all subnets in the VPC.

- Select the security group labeled as the Security Group for API Lambda functions (also installed by CM)

- Also select the S3 Endpoint SG security group we created. This allows the Lambda function hosted inside the VPC to access the S3 bucket.

- Choose Create Function.

- In the Code tab, and within the Code source window, delete all of the sample code and replace it with the code below. This python script will allow you to query Cohort Modeler for cohort extracts.

import os
import json
import logging
from datetime import datetime

import boto3
from gremlin_python.driver.driver_remote_connection import DriverRemoteConnection
from gremlin_python.driver import serializer
from gremlin_python.process.anonymous_traversal import traversal
from gremlin_python.process.traversal import P

logger = logging.getLogger()
logger.setLevel(logging.INFO)

s3 = boto3.client('s3')


def query(g, cohort, thresh):
    # Return playerId and the cohort value for every player above the threshold.
    return (g.V().hasLabel('player')
            .has(cohort, P.gt(thresh))
            .valueMap("playerId", cohort)
            .toList())


def doQuery(g, cohort, thresh):
    return query(g, cohort, thresh)


# Lambda handler
def lambda_handler(event, context):
    # Connection instantiation
    conn = create_remote_connection()
    g = create_graph_traversal_source(conn)

    try:
        # Validate the cohort info here if needed.

        # Grab the event resource, method, and parameters.
        resource = event["resource"]
        method = event["httpMethod"]

        # API Gateway passes null (None) when a request has no path or query
        # parameters, so fall back to an empty dict in both cases.
        pathParameters = event.get("pathParameters") or {}
        queryParameters = event.get("queryStringParameters") or {}

        # We expect two values: the cohort (path) and the threshold (query).
        cohort_val = pathParameters.get("cohort")
        thresh_val = int(queryParameters.get("threshold", 0))

        result = doQuery(g, cohort_val, thresh_val)

        # Convert result to JSON
        result_json = json.dumps(result)

        # Generate the current timestamp in the format YYYY-MM-DD_HH-MM-SS
        current_timestamp = datetime.now().strftime('%Y-%m-%d_%H-%M-%S')

        # Create the S3 key with the timestamp
        s3_key = f"export/{cohort_val}_{thresh_val}_{current_timestamp}.json"

        # Upload to S3
        s3_result = s3.put_object(
            Bucket=os.environ['S3ExportBucket'],
            Key=s3_key,
            Body=result_json,
            ContentType="application/json"
        )

        response = {
            'statusCode': 200,
            'body': s3_key
        }
        return response

    except Exception as e:
        logger.error(f"Error occurred: {e}")
        return {
            'statusCode': 500,
            'body': str(e)
        }

    finally:
        conn.close()


# Connection management
def create_graph_traversal_source(conn):
    return traversal().withRemote(conn)


def create_remote_connection():
    database_url = 'wss://{}:{}/gremlin'.format(os.environ['NeptuneEndpoint'], 8182)
    return DriverRemoteConnection(
        database_url,
        'g',
        pool_size=1,
        message_serializer=serializer.GraphSONSerializersV2d0()
    )

Configure the Lambda

Head back to the Lambda service page, and from the navigation pane, select Functions. In the Functions section select ApiCohortGet from the list.

- In the Function overview section, select the Layers icon beneath your Lambda name.

- In the Layers section, choose Add a layer.

- In the Choose a layer section, set Layer source to Custom layers.

- From the dropdown menu below, select your recently created custom layer, gremlinpython.

- For Version, select the appropriate (probably the highest, or most recent) version.

- Once finished, choose Add.

Now, underneath the Function overview, navigate to the Configuration tab and choose Environment variables from the navigation pane.

- Now choose Edit to create a new variable. For the key, enter NeptuneEndpoint, and give it the value of the Cohort Modeler’s Neptune database endpoint. This value is available from the Neptune console under Databases. This should not be the read-only cluster endpoint, so select the ‘writer’ type. Once selected, the endpoint URL will be listed beneath the Connectivity & security tab.

- Create an additional key named S3ExportBucket, and for the value use the unique name of the S3 bucket you created earlier to receive extracts from Cohort Modeler. Once complete, choose Save.

- In a production build, you can store this information in AWS Systems Manager Parameter Store to ensure portability and resilience.
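One way the Parameter Store option could look is sketched below: read a setting from the environment first, and fall back to Parameter Store only when it is missing. The get_setting helper and the /cohort-modeler/ parameter prefix are hypothetical names of our own; the fallback uses the Systems Manager get_parameter operation. A Lambda function running inside the VPC would also need a network path to Systems Manager for the fallback to work.

```python
import os


def get_setting(name, ssm_prefix="/cohort-modeler/"):
    """Return a setting from the environment, else from Parameter Store."""
    value = os.environ.get(name)
    if value:
        return value

    import boto3  # only needed when falling back to Parameter Store

    ssm = boto3.client("ssm")
    response = ssm.get_parameter(Name=ssm_prefix + name)
    return response["Parameter"]["Value"]


# Hypothetical value set here for demonstration only.
os.environ["S3ExportBucket"] = "my-cm-export-bucket"
print(get_setting("S3ExportBucket"))
```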

While still in the Configuration tab, under the navigation pane choose Permissions.

- Note that AWS has created an IAM role for the Lambda. Select the role name to view it in the IAM console.

- Under the Permissions tab, in the Permissions policies section, there should be two policies attached to the role: AWSLambdaBasicExecutionRole and AWSLambdaVPCAccessExecutionRole.

- You’ll need to give the Lambda access to your CM export bucket

- Also in the Permissions policies section, choose the Add permissions dropdown and select Create Inline policy – we won’t be needing this role anywhere else.

- On the new page, choose the JSON tab.

- Delete all of the sample code within the Policy editor, and paste the inline policy below into the text area.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:*",
      "Resource": [
        "arn:aws:s3:::YOUR-S3-BUCKET-NAME-HERE",
        "arn:aws:s3:::YOUR-S3-BUCKET-NAME-HERE/*"
      ]
    }
  ]
}

- Replace the placeholder YOUR-S3-BUCKET-NAME-HERE with the name of your CM export bucket.

- Click Review Policy.

- Give the policy a name – I used ApiCohortGetS3Policy.

- Click Create Policy.
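If you generate the policy document from a script, building it from the bucket name avoids copy-and-paste slips in the ARNs. The sketch below is our own illustration: export_bucket_policy is a hypothetical helper, and its default of s3:PutObject is an assumption based on the fact that the function above only uploads objects. Pass different actions if your setup needs broader access.

```python
import json


def export_bucket_policy(bucket_name, actions=("s3:PutObject",)):
    """Build an inline policy document scoped to the export bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": list(actions),
                "Resource": [
                    f"arn:aws:s3:::{bucket_name}",
                    f"arn:aws:s3:::{bucket_name}/*",
                ],
            }
        ],
    }


# Hypothetical bucket name for illustration.
policy = export_bucket_policy("my-cm-export-bucket")
print(json.dumps(policy, indent=2))
```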

Integrating with API Gateway

Now you’ll need to integrate the API Gateway that the Cohort Modeler created with the new Lambda function that you just created. If you’re on the old console user interface, we strongly recommend switching over to the new console UI, because the previous UI was scheduled for deprecation on October 30, 2023. Consequently, the following instructions apply to the new console UI.

- Navigate to the main service page for API Gateway.

- From the navigation pane, choose Use the new console.

Create the Resource

- From the new console UI, select the name of the API Gateway from the APIs Section that corresponds to the name given when you launched the SAM template.

- On the Resources navigation pane, choose /data, followed by selecting Create resource.

- Under Resource name, enter cohort, followed by Create resource.

We’re not quite finished. We want to be able to ask the Cohort Modeler to give us a cohort based on a path parameter – so that way when we go to /data/cohort/COHORT-NAME/ we receive back information about the cohort name that we provided. Therefore…

Create the Method

Now we’ll create the GET Method we’ll use to request cohort data from Cohort Modeler.

- From the same menu, choose the /data/cohort/{cohort} Resource, followed by selecting Get from the Methods dropdown section, and finally choosing Create Method.

- From the Create method page, select GET under Method type, and select Lambda function under the Integration type.

- For the Lambda proxy integration, turn the toggle switch on.

- Under Lambda function, choose the function ApiCohortGet, created previously.

- Finally, choose Create method.

- API Gateway will prompt and ask for permissions to access the Lambda – this is fine, choose OK.
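With Lambda proxy integration turned on, API Gateway hands the whole request to the function as a single event, including the path and query parameters. The sketch below shows how those two values can be pulled out of such an event. The sample event is a trimmed, hand-written illustration rather than a captured payload, and parse_cohort_request is a hypothetical helper.

```python
def parse_cohort_request(event):
    """Extract (cohort, threshold) from an API Gateway Lambda proxy event."""
    # API Gateway sends null (None in Python) when no parameters are present.
    path_params = event.get("pathParameters") or {}
    query_params = event.get("queryStringParameters") or {}

    cohort = path_params.get("cohort")
    if not cohort:
        raise ValueError("missing cohort path parameter")

    # Query string values arrive as strings, so convert explicitly.
    threshold = int(query_params.get("threshold", 0))
    return cohort, threshold


# Trimmed sample event for GET /data/cohort/ea_atrisk?threshold=2
sample_event = {
    "resource": "/data/cohort/{cohort}",
    "httpMethod": "GET",
    "pathParameters": {"cohort": "ea_atrisk"},
    "queryStringParameters": {"threshold": "2"},
}

print(parse_cohort_request(sample_event))
```

Feeding a few hand-built events like this through the parsing logic is a cheap way to check edge cases, such as a request with no query string, before deploying.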

Create the API Key

You’ll want to access your API securely, so at a minimum you should create an API Key and require it as part of your access model.

- Under the API Gateway navigation pane, choose APIs. From there, select API Keys, also under the navigation pane.

- In the API keys section, choose Create API key.

- On the Create API key page, enter your API Key name, while leaving the remaining fields at their default values. Choose Save to complete.

- Returning to the API keys section, select and copy the link for the API key which was generated.

- Once again, select APIs from the navigation menu, and continue again by selecting the link to your CM API from the list.

- From the navigation pane, choose API settings, folded under your API name, and not the Settings option at the bottom of the tab.

- In the API details section, choose Edit under API details. Once on the Edit API settings page, ensure the Header option is selected under API key source.

Deploy the API

Now that you’ve made your changes, you’ll want to deploy the API with the new endpoint enabled.

- Back in the navigation pane, under your CM API’s dropdown menu, choose Resources.

- On the Resources page for your CM API, choose Deploy API.

- Select the Prod stage (or create a new stage name for testing) and click Deploy.

Test the API

When the API has deployed, the system will display your API’s URL. You should now be able to test your new Cohort Modeler API:

- Using your favorite tool (curl, Postman, etc.) create a new request to your API’s URL.

- The URL should look like https://randchars.execute-api.us-east-1.amazonaws.com/Stagename. You can retrieve your APIGateway endpoint URL by selecting API Settings, in the navigation pane of your CM API’s dropdown menu.

- From the API settings page, under Default endpoint, you will see your active API Gateway endpoint URL. Remember to add the stage name (for example, “Prod”) at the end of the URL.

- Be sure you’re adding a header named X-API-Key to the request, and give it the value of the API key you created earlier.

- Add the /data/cohort resource to the end of the URL to access the new endpoint.

- Add /ea_atrisk after /data/cohort – you’re querying for the cohort of players who belong to the at-risk cohort.

- Finally, add ?threshold=2 so that we’re only looking at players whose cohort value (in this case, the number of times they’ve shared personally identifiable information) is greater than 2. The final URL should look something like: https://randchars.execute-api.us-east-1.amazonaws.com/Stagename/data/cohort/ea_atrisk?threshold=2

- Once you’ve submitted the query, your response should look like this:

{'statusCode': 200, 'body': 'export/ea_atrisk_2_2023-09-12_13-57-06.json'}

The status code indicates a successful query, and the body indicates the name of the JSON file in your extract S3 bucket which contains the cohort information. The name consists of the attribute, the threshold level, and the time the export was made. Go ahead and navigate to the S3 bucket, find the file, and download it to see what Cohort Modeler has found for you.
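If you want to script the test instead of using curl or Postman, the request can be assembled with Python’s standard library. This is a sketch: the base URL is the placeholder from the steps above, YOUR-API-KEY stands in for your real key, and the helper names are ours.

```python
import urllib.parse
import urllib.request


def cohort_url(base_url, cohort, threshold):
    """Compose the full endpoint URL for a cohort query."""
    safe_cohort = urllib.parse.quote(cohort, safe="")
    return f"{base_url.rstrip('/')}/data/cohort/{safe_cohort}?threshold={threshold}"


def build_request(base_url, cohort, threshold, api_key):
    """Build a GET request that carries the API key header."""
    return urllib.request.Request(
        cohort_url(base_url, cohort, threshold),
        headers={"X-API-Key": api_key},
        method="GET",
    )


def fetch(request):
    """Send the request and return the response body as text."""
    with urllib.request.urlopen(request) as response:
        return response.read().decode("utf-8")


# Placeholder URL and key; substitute your own values before calling fetch().
request = build_request(
    "https://randchars.execute-api.us-east-1.amazonaws.com/Stagename",
    "ea_atrisk",
    2,
    "YOUR-API-KEY",
)
print(request.full_url)
```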

Troubleshooting

Installing the Game Tech Cohort Modeler

- Error: Could not find public.ecr.aws/sam/build-python3.8:latest-x86_64 image locally and failed to pull it from docker

- Try: docker logout public.ecr.aws.

- Attempt to pull the docker image locally first: docker pull public.ecr.aws/sam/build-python3.8:latest-x86_64

- Error: RDS does not support creating a DB instance with the following combination:DBInstanceClass=db.r4.large, Engine=neptune, EngineVersion=1.2.0.2, LicenseModel=amazon-license.

- The default option r4 family was offered when Neptune was launched in 2018, but now newer instance types offer much better price/performance. As of engine version 1.1.0.0, Neptune no longer supports r4 instance types.

- Therefore, we recommend choosing another Neptune instance based on your needs, as detailed on this page.

- For testing and development, you can consider the t3.medium and t4g.medium instances, which are eligible for the Neptune free-tier offer.

- Remember to add the instance type that you want to use to the AllowedValues attribute of DBInstanceClass, and rebuild using sam build --use-container.

Using the data gen script (for automated data generation)

- By default, the Cohort Modeler deployment does not deploy CohortModelerGraphGenerator.ipynb, which is required for dummy data generation.

- You will need to log in to your SageMaker instance, upload the CohortModelerGraphGenerator.ipynb file, and run through the cells to generate the dummy data into your S3 bucket.

- Finally, you’ll need to follow the instructions in this page to load the dummy data from Amazon S3 into your Neptune instance.

- For the IAM role for Amazon Neptune to load data from Amazon S3, the stack should have created a role with the name Cohort-neptune-iam-role-gametech-modeler.

- You can run the requests script from your Jupyter notebook instance, since it already has access to the Amazon Neptune endpoint. The Python script should look like the following:

import json

import requests

url = 'https://<NeptuneEndpointURL>:8182/loader'

headers = {
    'Content-Type': 'application/json'
}

data = {
    "source": "<S3FileURI>",
    "format": "csv",
    "iamRoleArn": "<NeptuneIAMRoleARN>",
    "region": "us-east-1",
    "failOnError": "FALSE",
    "parallelism": "MEDIUM",
    "updateSingleCardinalityProperties": "FALSE",
    "queueRequest": "TRUE"
}

response = requests.post(url, headers=headers, data=json.dumps(data))
print(response.text)

- Remember to replace the NeptuneEndpointURL, S3FileURI, and NeptuneIAMRoleARN.

- Remember to load user_vertices.csv, campaign_vertices.csv, action_vertices.csv, interaction_edges.csv, engagement_edges.csv, campaign_edges.csv, and campaign_bidirectional_edges.csv in that order.
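Because the load order matters, it can help to generate the loader requests from a single ordered list rather than editing the script by hand for each file. The sketch below only builds the payloads; the S3 prefix and role ARN are hypothetical placeholders, and loader_payloads is a helper name of our own. Each payload could then be posted to the loader endpoint in sequence, as in the script above.

```python
# Files listed in the order they should be loaded.
LOAD_ORDER = [
    "user_vertices.csv",
    "campaign_vertices.csv",
    "action_vertices.csv",
    "interaction_edges.csv",
    "engagement_edges.csv",
    "campaign_edges.csv",
    "campaign_bidirectional_edges.csv",
]


def loader_payloads(s3_prefix, iam_role_arn, region="us-east-1"):
    """Return one loader request body per file, preserving LOAD_ORDER."""
    prefix = s3_prefix.rstrip("/")
    return [
        {
            "source": f"{prefix}/{name}",
            "format": "csv",
            "iamRoleArn": iam_role_arn,
            "region": region,
            "failOnError": "FALSE",
            "parallelism": "MEDIUM",
            "updateSingleCardinalityProperties": "FALSE",
            "queueRequest": "TRUE",
        }
        for name in LOAD_ORDER
    ]


# Hypothetical bucket prefix and role ARN for illustration.
payloads = loader_payloads(
    "s3://my-cm-data-bucket/generated",
    "arn:aws:iam::123456789012:role/example-neptune-load-role",
)
for payload in payloads:
    print(payload["source"])
```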

Conclusion

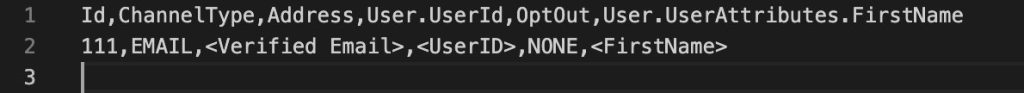

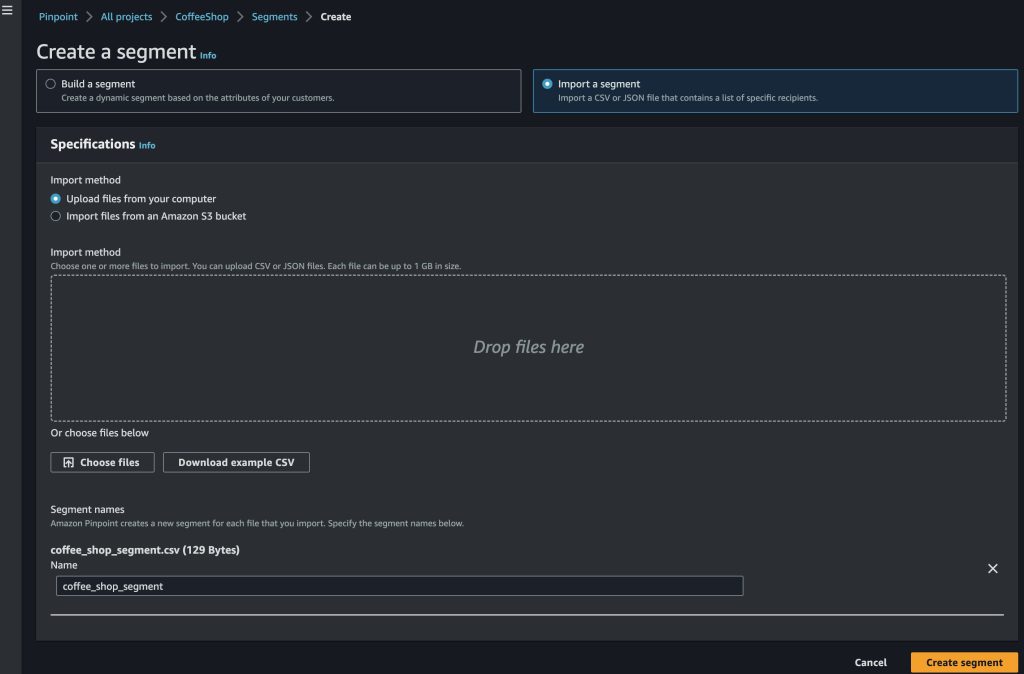

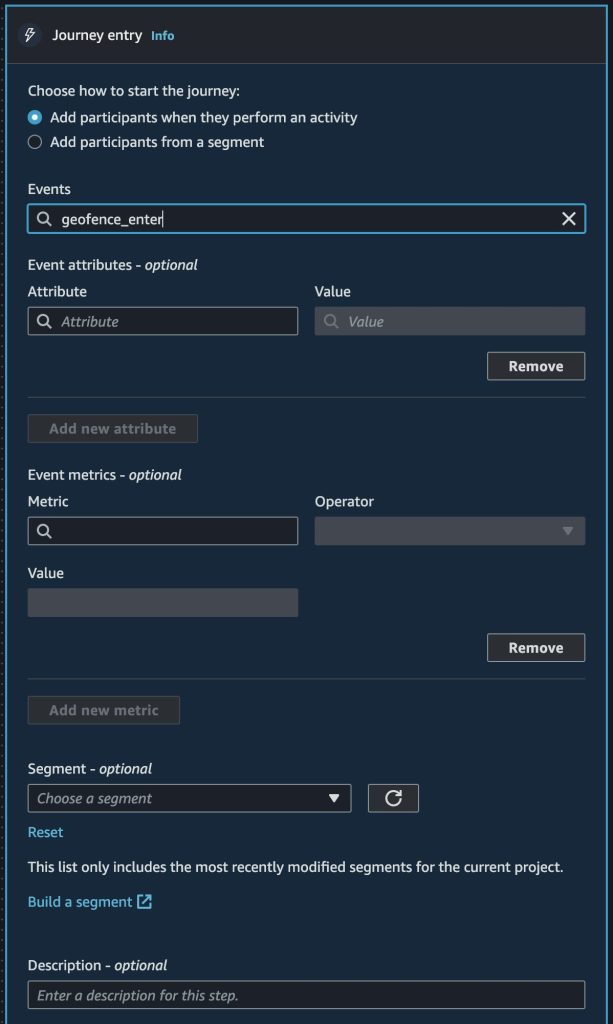

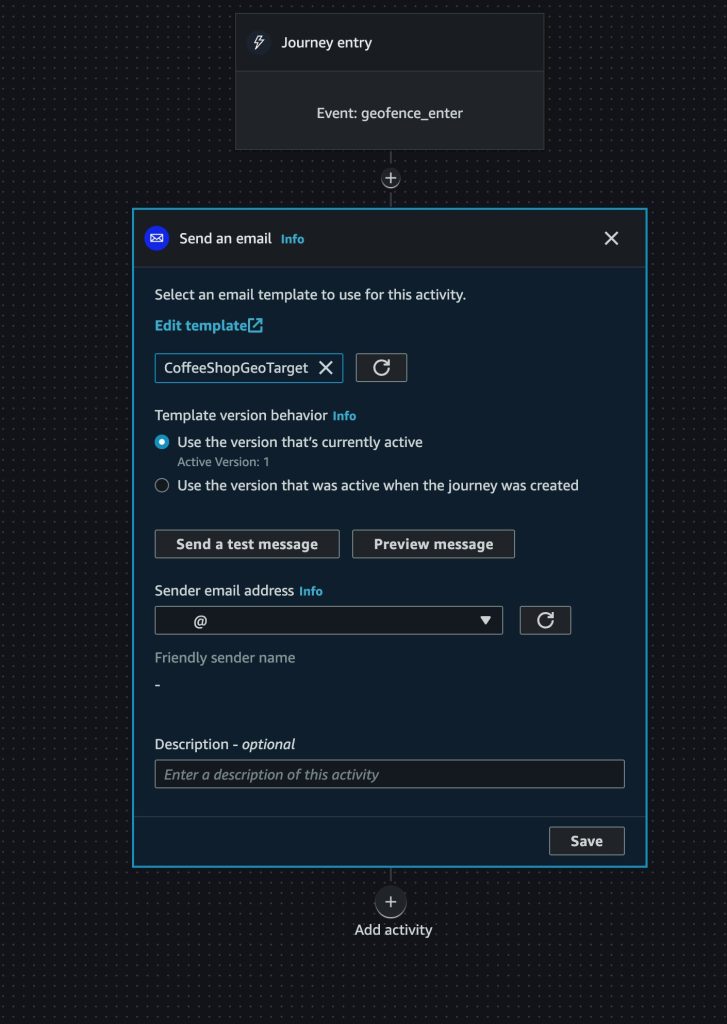

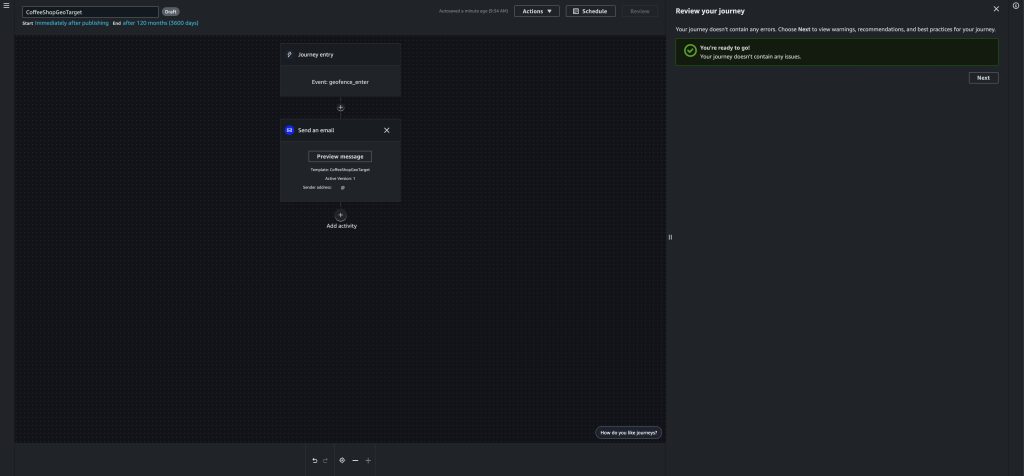

In this post, you’ve extended the Cohort Modeler to respond to requests for cohort data by both querying the cohort database and providing an extract in an S3 bucket for future use. In the next post, we’ll demonstrate how creating this file triggers an automated process. This process will identify the players from the cohort in the studio’s database, extract their contact and other personalization data, compile that data into a CSV file, and import that file into Pinpoint for targeted messaging.

Related Content

- Gain Insights Into Your Player Base Using The AWS for Games Cohort Modeler

- AWS for Games Cohort Modeler: Graph Data Model

- Amazon Pinpoint

- Digital User Engagement Events Database