Post Syndicated from Brijesh Pati original https://aws.amazon.com/blogs/messaging-and-targeting/message-delivery-status-tracking-with-amazon-pinpoint/

In the vast landscape of digital communication, reaching your audience effectively is key to building successful customer relationships. Amazon Pinpoint, the flexible, user-focused messaging and targeting solution from Amazon Web Services (AWS), goes beyond mere messaging; it allows businesses to engage customers through email, SMS, push notifications, and more.

What sets Amazon Pinpoint apart is its scalability and deliverability. Amazon Pinpoint supports a multitude of business use cases, from promotional campaigns and transactional messages to customer engagement journeys. It provides insights and analytics that help tailor and measure the effectiveness of communication strategies.

For businesses, the power of this platform extends into areas such as marketing automation, customer retention campaigns, and transactional messaging for updates like order confirmations and shipping alerts. The versatility of Amazon Pinpoint can be a significant asset in crafting personalized user experiences at scale.

Use Case & Solution overview – Tracking SMS & Email Delivery Status

In a business setting, understanding whether a time-sensitive email or SMS was received can greatly impact customer experience as well as operational efficiency. For instance, consider an e-commerce platform sending out shipping notifications. By quickly verifying that the message was delivered, businesses can preemptively address any potential issues, ensuring customer satisfaction.

Amazon Pinpoint tracks email and SMS delivery and engagement events, which can be streamed using Amazon Kinesis Data Firehose for storage or further processing. However, third-party applications don’t have a direct API to query and obtain the latest status of a message.

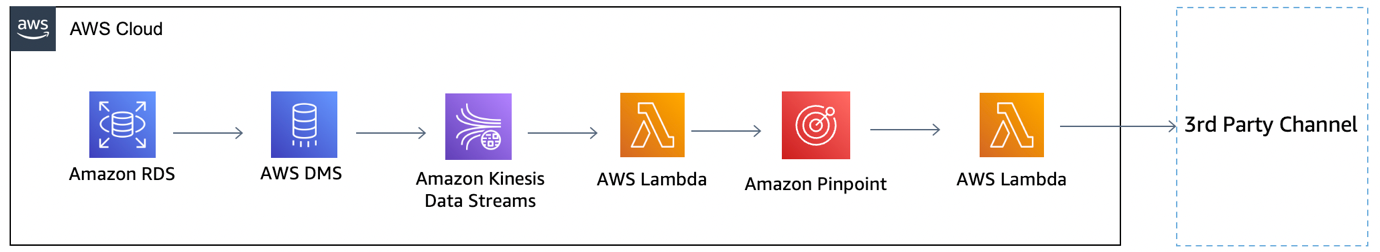

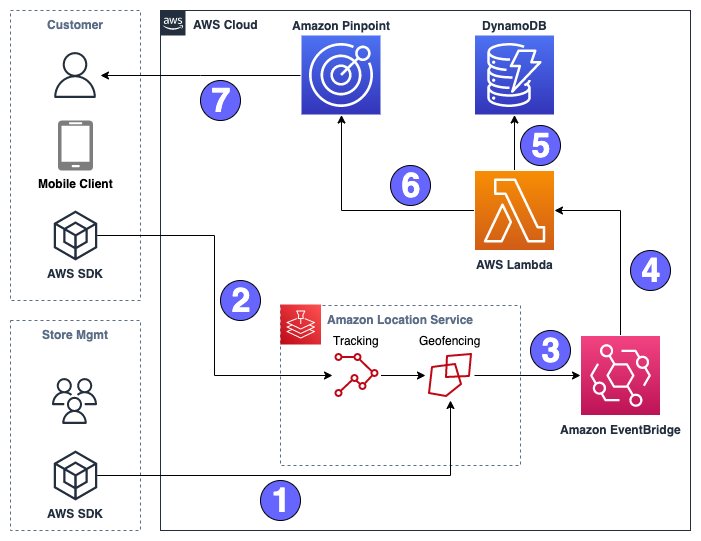

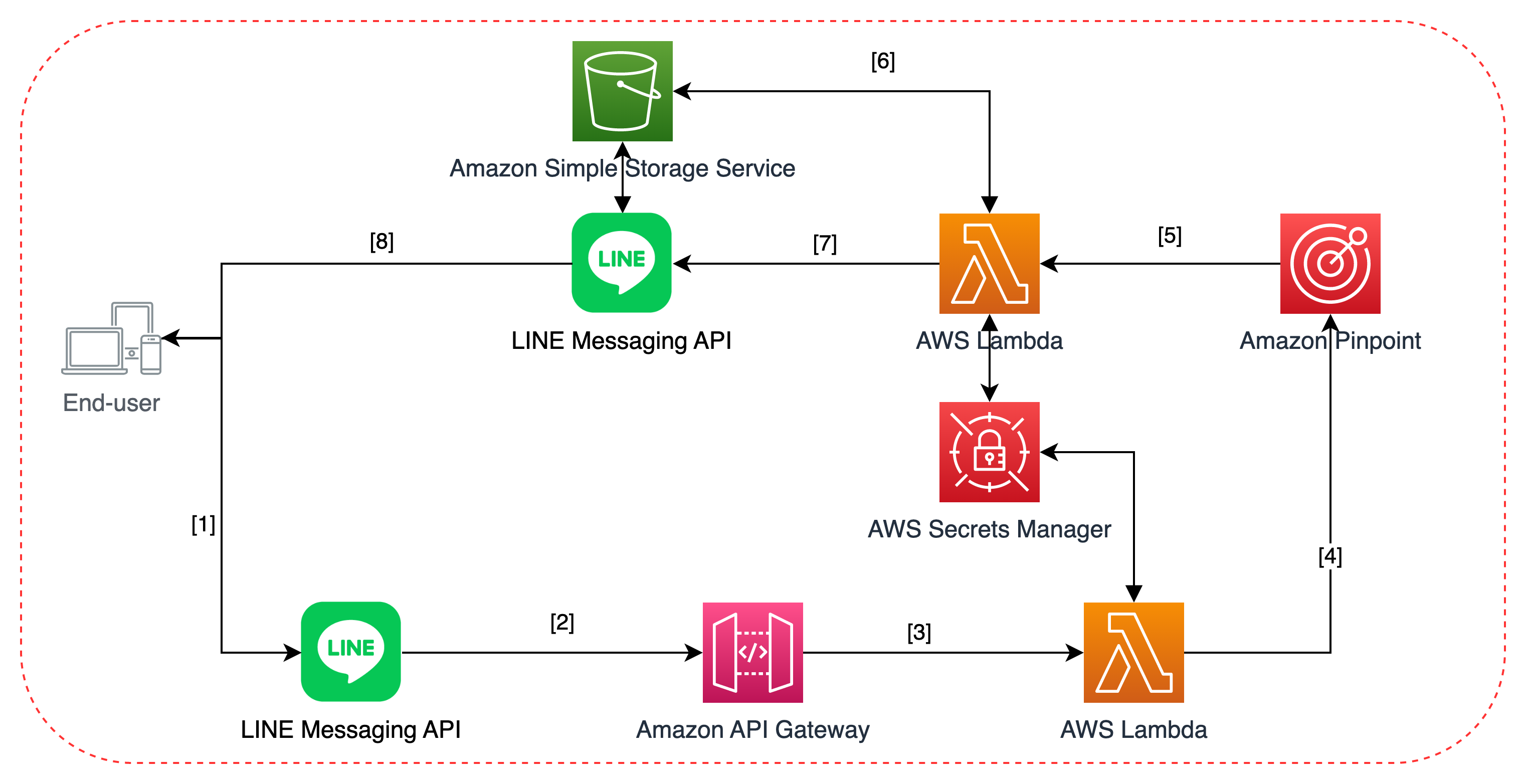

To address this challenge, this blog post presents a solution that uses AWS services to stream, store, and retrieve Amazon Pinpoint events with a simple API call. At the core of the solution is the Amazon Pinpoint event stream capability, which uses Amazon Kinesis services for data streaming.

The architecture for message delivery status tracking with Amazon Pinpoint consists of several AWS services that work in concert. To streamline the deployment of these components, they have been encapsulated into an AWS CloudFormation template. This template automates the provisioning and configuration of the necessary AWS resources, which makes the deployment repeatable and consistent.

The key components of the solution are as follows:

- Event Generation: An event is generated within Amazon Pinpoint when a user interacts with an application, or when a message is sent from a campaign, journey, or as a transactional communication. The event name and metadata depend on the channel (SMS or email).

- Amazon Pinpoint Event Data Streaming: The generated event data is streamed to Amazon Kinesis Data Firehose. Kinesis Data Firehose is configured to collect the event information in near real-time, enabling the subsequent processing and analysis of the data.

- Pinpoint Event Data Processing: Amazon Kinesis Data Firehose is configured to invoke an AWS Lambda function that transforms the incoming source data. This transformation step is set up when the Kinesis Data Firehose delivery stream is created, so the data is in the correct format before it is stored. The Lambda function decodes the base64-encoded event data, deserializes the JSON payload, and processes the data according to the event type (email or SMS): it parses the raw data, extracts the attributes relevant to that event type, and formats them to match the DynamoDB table schema before ingesting them into Amazon DynamoDB.

- Data Ingestion into DynamoDB: Once processed, the data is stored in Amazon DynamoDB. DynamoDB provides a fast and flexible NoSQL database service, which facilitates the efficient storage and retrieval of event data for analysis.

- Data Storage: Amazon DynamoDB stores the event data after it’s been processed by AWS Lambda. Amazon DynamoDB is a highly scalable NoSQL database that enables fast queries, which is essential for retrieving the status of messages quickly and efficiently, thereby facilitating timely decision-making based on customer interactions.

- Customer application/interface: Users or integrated systems engage with the messaging status through either a frontend customer application or directly via an API. This interface or API acts as the conduit through which message delivery statuses are queried, monitored, and managed, providing a versatile gateway for both user interaction and programmatic access.

- API Management: The customer application communicates with the backend systems through Amazon API Gateway. This service acts as a fully managed gateway, handling all the API calls, data transformation, and transfer between the frontend application and backend services.

- Event Status Retrieval API: When the API Gateway receives a delivery status request, it invokes another AWS Lambda function that is responsible for querying the DynamoDB table. It retrieves the latest status of the message delivery, which is then presented to the user via the API.
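
The processing step described above can be sketched as a Kinesis Data Firehose transformation handler. This is a minimal sketch, not the function shipped in the CloudFormation template: `classify`, `process_records`, and the `store` callback are hypothetical names, and the DynamoDB write is left to the caller. It assumes Pinpoint email and SMS event types start with `_email.` and `_SMS.`, and it returns the `recordId`/`result`/`data` entries that Firehose expects back from a transformation function.

```python
import base64
import json


def classify(event_type):
    """Map a Pinpoint event type such as '_email.delivered' to a channel."""
    if event_type.startswith("_email."):
        return "email"
    if event_type.startswith("_SMS."):
        return "sms"
    return None


def process_records(records, store):
    """Decode each Firehose record, hand email/SMS events to `store`,
    and build the per-record result list that Firehose expects."""
    results = []
    for record in records:
        result = "Ok"
        try:
            payload = json.loads(base64.b64decode(record["data"]))
            if not isinstance(payload, dict):
                raise ValueError("expected a JSON object")
            channel = classify(str(payload.get("event_type", "")))
            if channel is None:
                result = "Dropped"  # not an email or SMS event
            else:
                store({"channel": channel, "event": payload})
        except ValueError:
            # Covers invalid base64 and invalid JSON alike.
            result = "ProcessingFailed"
        results.append(
            {"recordId": record["recordId"], "result": result, "data": record["data"]}
        )
    return results


def lambda_handler(event, context):
    # Hypothetical wiring: replace the no-op callback with a DynamoDB write.
    return {"records": process_records(event["records"], store=lambda item: None)}
```

Passing the storage step in as a callback keeps the decoding and classification logic testable without AWS credentials.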

DynamoDB Table Design for Message Tracking:

The tables below outline the DynamoDB schema designed for the efficient storage and retrieval of message statuses, detailing the distinct event statuses and attributes for each message type. The first table covers email messages and the second covers SMS messages:

| Attribute | Data type | Description |
|---|---|---|
| message_id | String | The unique message ID generated by Amazon Pinpoint. |
| event_type | String | The value is ‘email’. |
| aws_account_id | String | The AWS account ID used to send the email. |
| from_address | String | The sending identity used to send the email. |
| destination | String | The recipient’s email address. |
| client | String | The client ID, if applicable. |
| campaign_id | String | The campaign ID, if the message is part of a campaign. |
| journey_id | String | The journey ID, if the message is part of a journey. |
| send | Timestamp | The timestamp when Amazon Pinpoint accepted the message and attempted to deliver it to the recipient. |
| delivered | Timestamp | The timestamp when the email was delivered, or ‘NA’ if not delivered. |
| rejected | Timestamp | The timestamp when the email was rejected. (Amazon Pinpoint determined that the message contained malware and didn’t attempt to send it.) |
| hardbounce | Timestamp | The timestamp when a hard bounce occurred. (A permanent issue prevented Amazon Pinpoint from delivering the message. Amazon Pinpoint won’t attempt to deliver the message again.) |
| softbounce | Timestamp | The timestamp when a soft bounce occurred. (A temporary issue prevented Amazon Pinpoint from delivering the message. Amazon Pinpoint will attempt to deliver the message again for a certain amount of time. If the message still can’t be delivered, no more retries will be attempted. The final state of the email will then be SOFTBOUNCE.) |
| complaint | Timestamp | The timestamp when a complaint was received. (The recipient received the message and then reported it to their email provider as spam, for example by using the “Report Spam” feature of their email client.) |
| open | Timestamp | The timestamp when the email was opened. (The recipient received the message and opened it.) |
| click | Timestamp | The timestamp when a link in the email was clicked. (The recipient received the message and clicked a link in it.) |
| unsubscribe | Timestamp | The timestamp when the recipient unsubscribed. (The recipient received the message and clicked an unsubscribe link in it.) |
| rendering_failure | Timestamp | The timestamp when a rendering failure occurred. (The email was not sent due to a rendering failure. This can occur when template data is missing or when there is a mismatch between template parameters and data.) |
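
As a rough illustration of how one email event could be mapped onto this table, the sketch below turns a single event into a key and a set of attributes. The function name `email_event_to_row` is hypothetical, and the field paths (`facets.email_channel.mail_event.mail`, `awsAccountId`, `attributes.campaign_id`) are assumptions based on the Pinpoint email event stream format; verify them against the events your project actually emits.

```python
# Pinpoint email event types mapped to the timestamp columns in the table above.
EMAIL_STATUS_COLUMNS = {
    "_email.send": "send",
    "_email.delivered": "delivered",
    "_email.rejected": "rejected",
    "_email.hardbounce": "hardbounce",
    "_email.softbounce": "softbounce",
    "_email.complaint": "complaint",
    "_email.open": "open",
    "_email.click": "click",
    "_email.unsubscribe": "unsubscribe",
    "_email.rendering_failure": "rendering_failure",
}


def email_event_to_row(event):
    """Return (key, attributes) for one Pinpoint email event.

    Field paths are assumptions about the event layout, not confirmed
    by the original post.
    """
    column = EMAIL_STATUS_COLUMNS[event["event_type"]]
    mail = event["facets"]["email_channel"]["mail_event"]["mail"]
    attrs = event.get("attributes", {})
    key = {"message_id": mail["message_id"]}
    attributes = {
        "event_type": "email",
        "aws_account_id": event.get("awsAccountId", "NA"),
        "from_address": mail.get("from_address", "NA"),
        "destination": ", ".join(mail.get("destination", [])) or "NA",
        "campaign_id": attrs.get("campaign_id", "NA"),
        "journey_id": attrs.get("journey_id", "NA"),
        # The status column receives the event's own timestamp.
        column: event["event_timestamp"],
    }
    return key, attributes
```

Because every event for a message shares the same `message_id`, each new event only adds or updates one timestamp column on the same item.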

| Attribute | Data type | Description |
|---|---|---|
| message_id | String | The unique message ID generated by Amazon Pinpoint. |
| event_type | String | The value is ‘sms’. |
| aws_account_id | String | The AWS account ID used to send the SMS. |
| origination_phone_number | String | The phone number from which the SMS was sent. |
| destination_phone_number | String | The phone number to which the SMS was sent. |
| record_status | String | Additional information about the status of the message. Possible values include: SUCCESSFUL/DELIVERED: Successfully delivered; PENDING: Not yet delivered; INVALID: Invalid destination phone number; UNREACHABLE: Recipient’s device unreachable; UNKNOWN: Error preventing delivery; BLOCKED: Device blocking SMS; CARRIER_UNREACHABLE: Carrier issue preventing delivery; SPAM: Message identified as spam; INVALID_MESSAGE: Invalid SMS message body; CARRIER_BLOCKED: Carrier blocked message; TTL_EXPIRED: Message not delivered in time; MAX_PRICE_EXCEEDED: Exceeded SMS spending quota; OPTED_OUT: Recipient opted out; NO_QUOTA_LEFT_ON_ACCOUNT: Insufficient spending quota; NO_ORIGINATION_IDENTITY_AVAILABLE_TO_SEND: No suitable origination identity; DESTINATION_COUNTRY_NOT_SUPPORTED: Destination country blocked; ACCOUNT_IN_SANDBOX: Account in sandbox mode; RATE_EXCEEDED: Message sending rate exceeded; INVALID_ORIGINATION_IDENTITY: Invalid origination identity; ORIGINATION_IDENTITY_DOES_NOT_EXIST: Non-existent origination identity; INVALID_DLT_PARAMETERS: Invalid DLT parameters; INVALID_PARAMETERS: Invalid parameters; ACCESS_DENIED: Account blocked from sending messages; INVALID_KEYWORD: Invalid keyword; INVALID_SENDER_ID: Invalid Sender ID; INVALID_POOL_ID: Invalid Pool ID; SENDER_ID_NOT_SUPPORTED_FOR_DESTINATION: Sender ID not supported; INVALID_PHONE_NUMBER: Invalid origination phone number. |
| iso_country_code | String | The ISO country code associated with the destination phone number. |
| message_type | String | The type of SMS message sent. |
| campaign_id | String | The campaign ID if part of a campaign, otherwise N/A. |
| journey_id | String | The journey ID if part of a journey, otherwise N/A. |
| success | Timestamp | The timestamp when the SMS was successfully accepted by the carrier or delivered to the recipient, or ‘NA’ if not applicable. |
| buffered | Timestamp | The timestamp when the SMS was reported as still in the process of being delivered to the recipient, or ‘NA’ if not applicable. |
| failure | Timestamp | The timestamp when the SMS delivery failed, or ‘NA’ if not applicable. |
| complaint | Timestamp | The timestamp when a complaint was received (the recipient received the message and then reported it as spam), or ‘NA’ if not applicable. |
| optout | Timestamp | The timestamp when the customer received the message and replied by sending the opt-out keyword (usually “STOP”), or ‘NA’ if not applicable. |
| price_in_millicents_usd | Number | The amount that was charged to send the message. |
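
To show how a retrieval function might interpret an item from this table, the sketch below picks the most recent status and converts the price to US dollars. The function names are hypothetical, and the code assumes that timestamps are stored as comparable numbers (for example, epoch milliseconds) with ‘NA’ marking an unset column; the actual storage format used by the solution may differ.

```python
from decimal import Decimal

# Timestamp columns from the SMS table above.
SMS_STATUS_COLUMNS = ("success", "buffered", "failure", "complaint", "optout")


def latest_status(item, columns=SMS_STATUS_COLUMNS):
    """Return the name of the status column with the newest timestamp, or None."""
    stamped = [
        (item[name], name)
        for name in columns
        if item.get(name) not in (None, "NA")
    ]
    return max(stamped)[1] if stamped else None


def price_in_usd(price_in_millicents_usd):
    """Convert millicents to dollars: 1 USD = 100 cents = 100,000 millicents."""
    return Decimal(str(price_in_millicents_usd)) / Decimal(100000)
```

For an item whose `buffered` timestamp is earlier than its `success` timestamp, `latest_status` returns `"success"`, and a price of 645 millicents corresponds to 0.00645 USD.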

Prerequisites

- AWS Account Access (setup) with admin-level permission.

- AWS CLI version 2 with named profile setup. If a locally configured IDE is not convenient, you can use the AWS CLI from the AWS CloudShell in your browser.

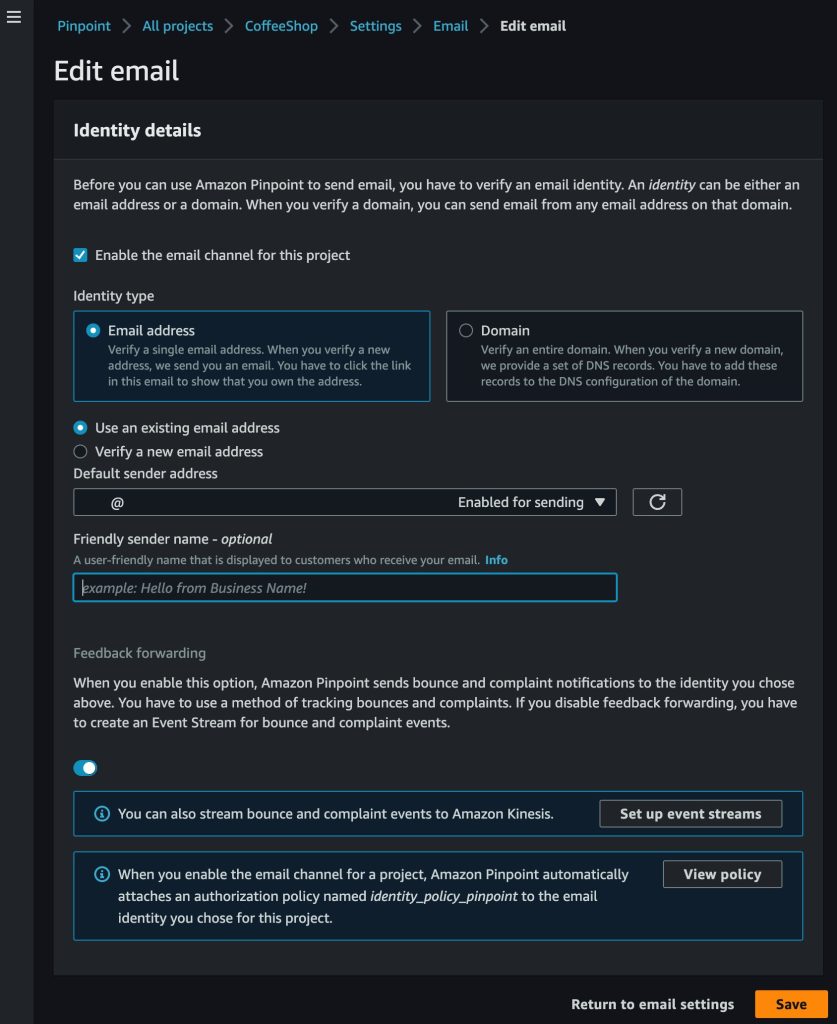

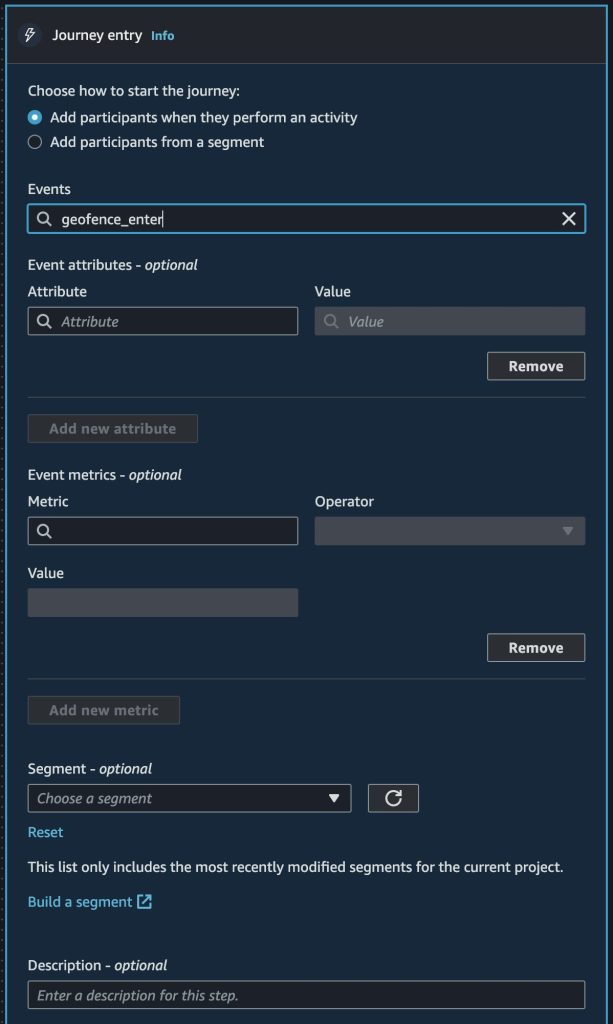

- A Pinpoint project that has never been configured with an event stream (PinpointEventStream).

- The Pinpoint ID from the project you want to monitor. This ID can be found in the Amazon Pinpoint console on the project’s main page (it will look something like “79788ecad55555513b71752a4e3ea1111”). Copy this ID to a text file, as you will need it shortly.

- Note, you must use the ID from a Pinpoint project that has never been configured with the PinpointEventStream option.

Solution Deployment & Testing

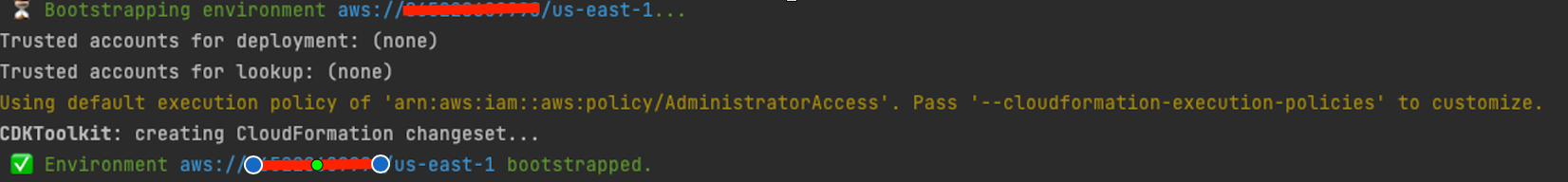

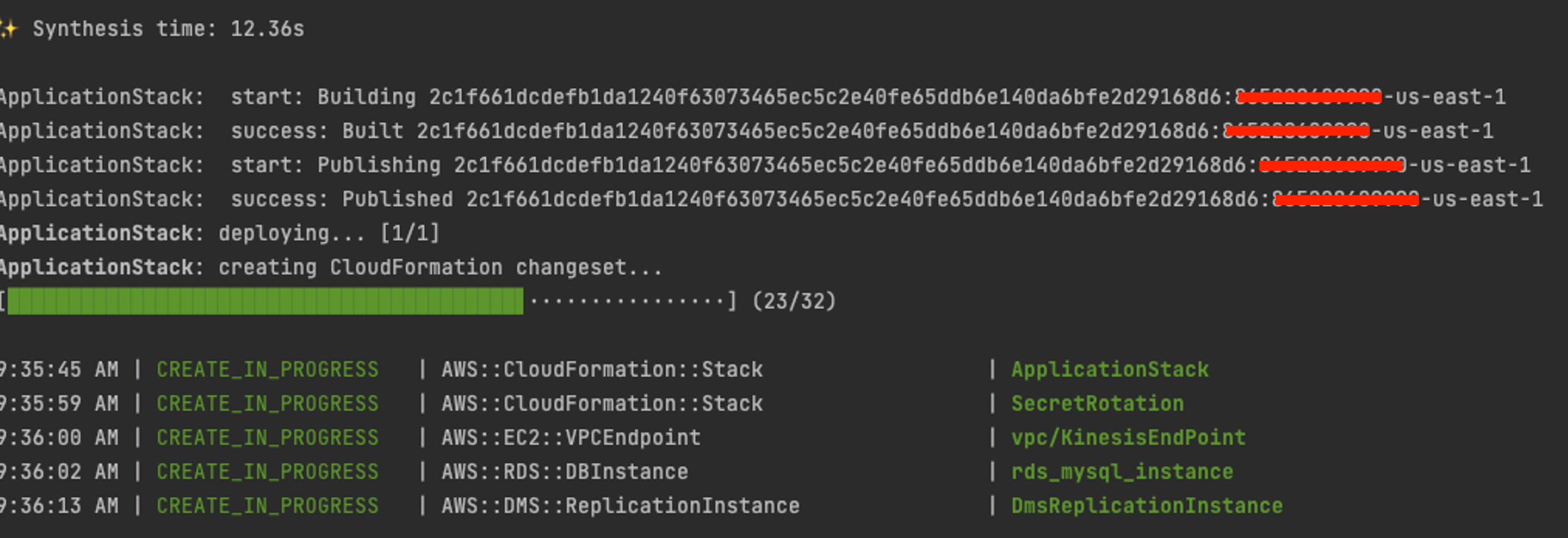

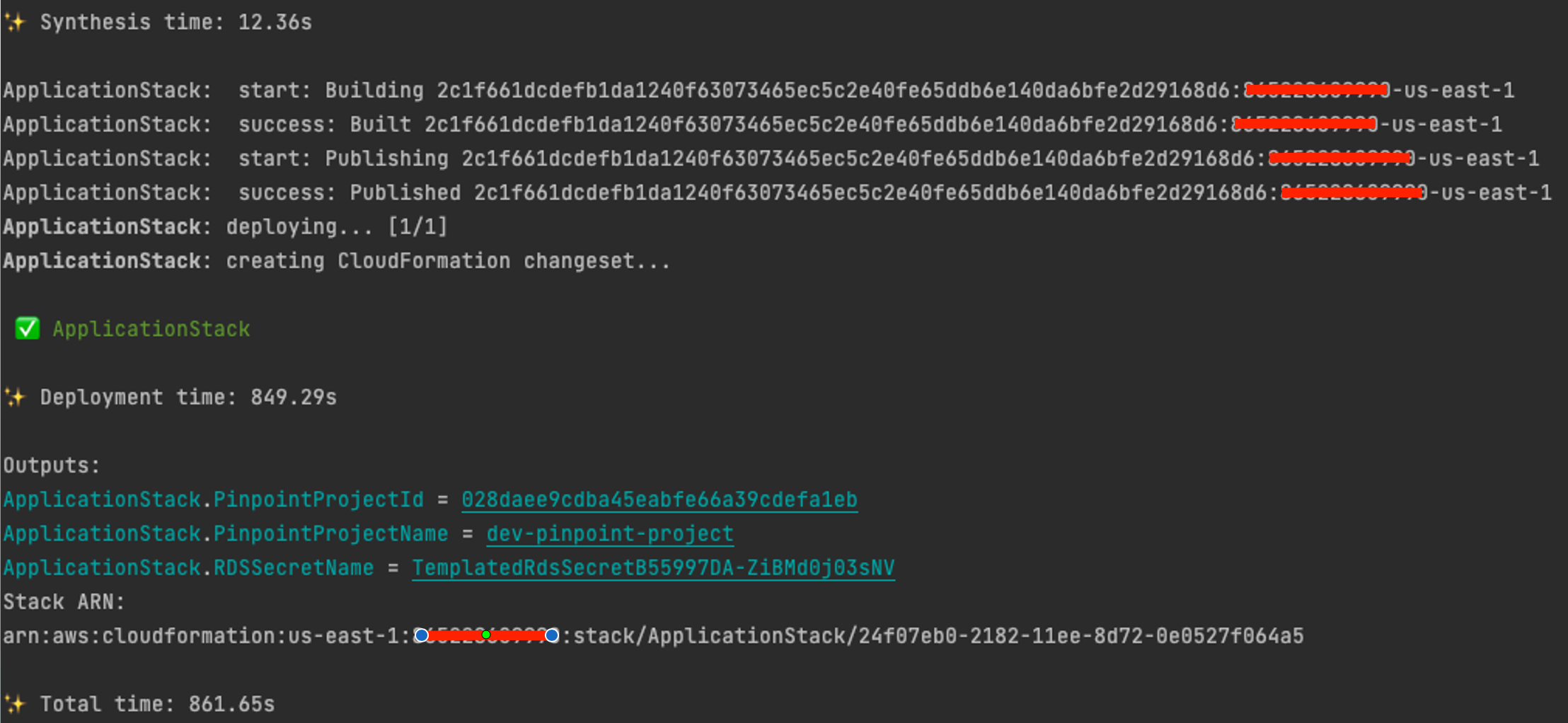

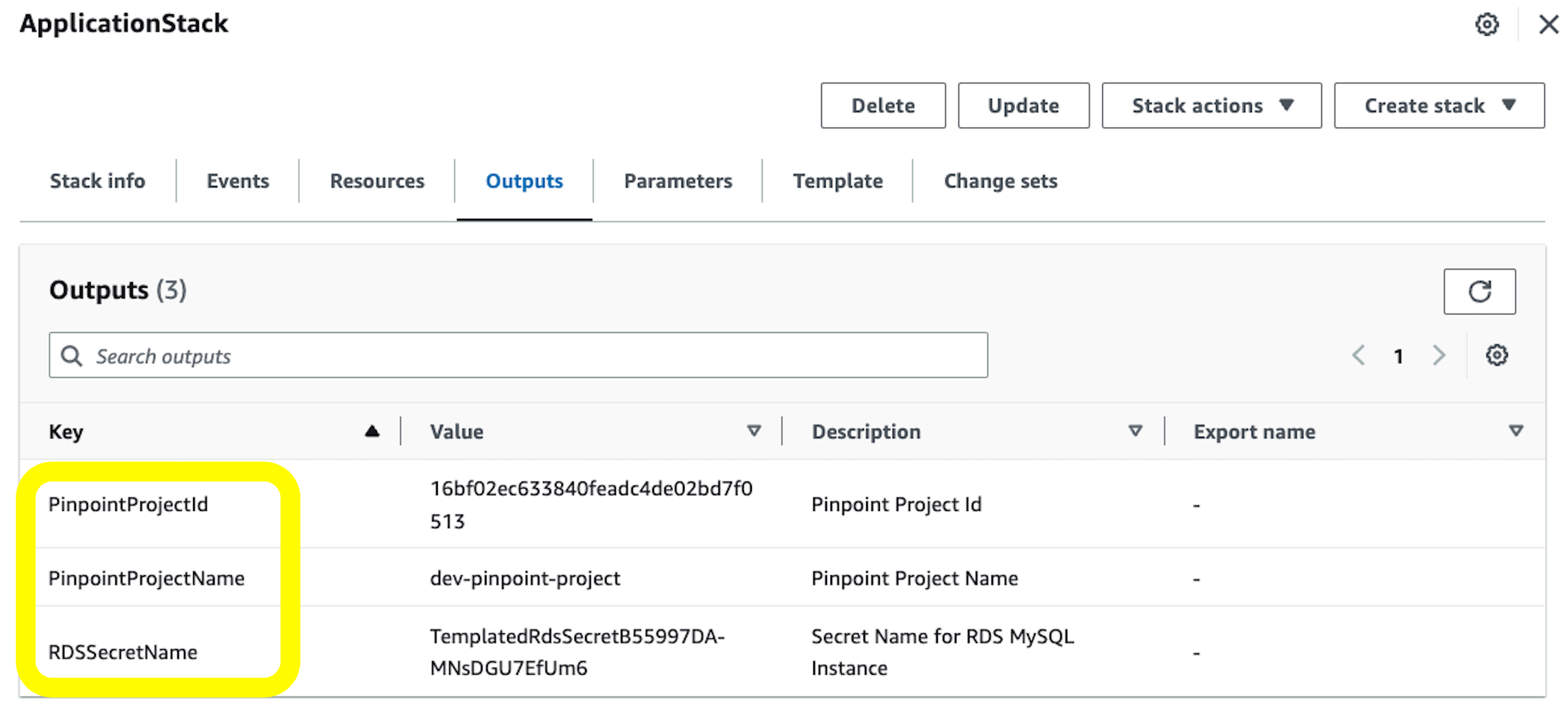

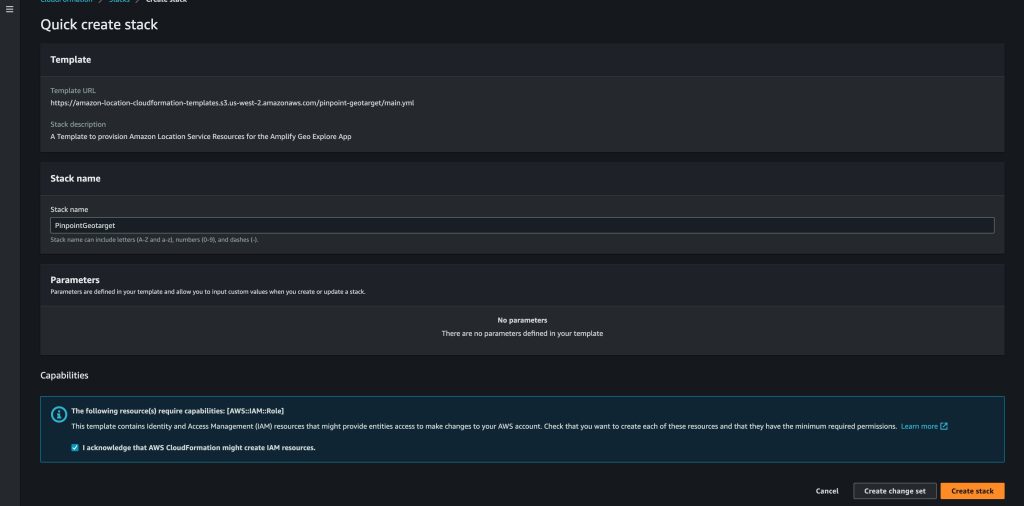

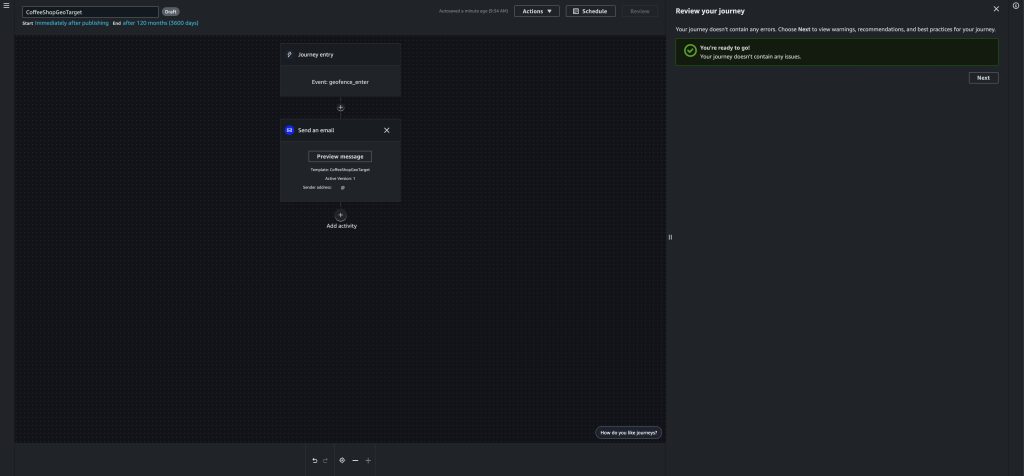

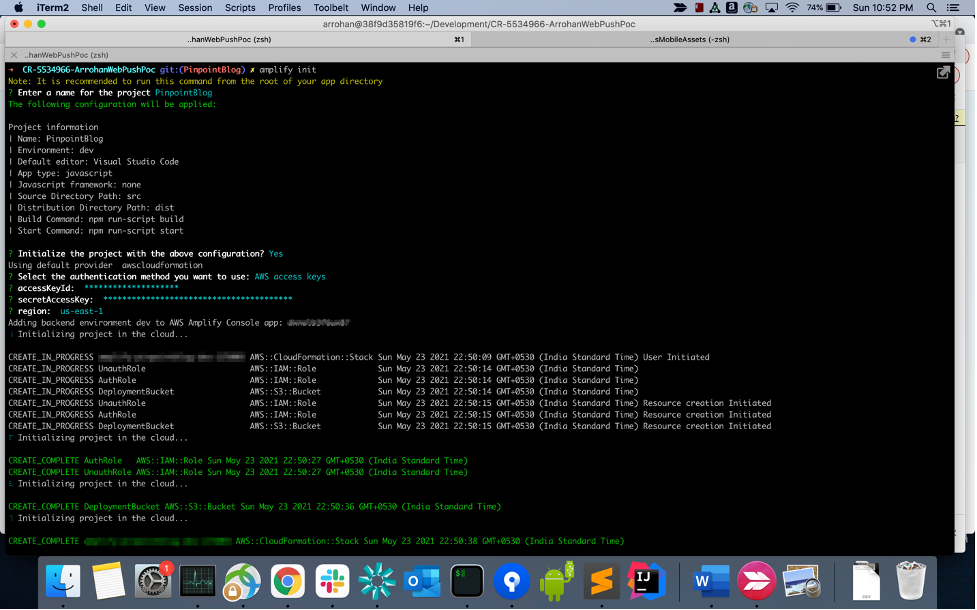

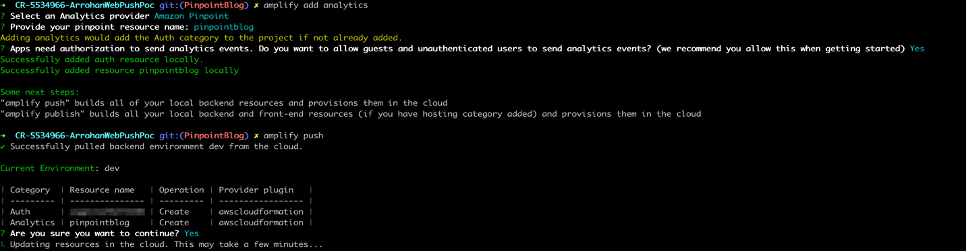

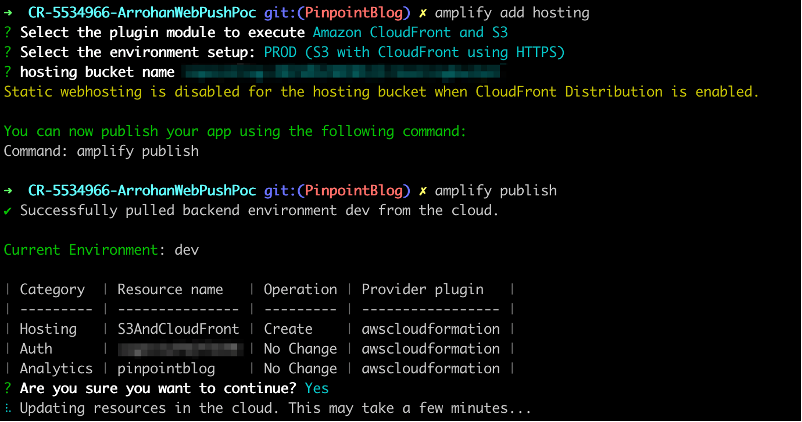

Deploying this solution is a straightforward process, thanks to the AWS CloudFormation template we’ve created. This template automates the creation and configuration of the necessary AWS resources into an AWS stack. The CloudFormation template ensures that the components such as Kinesis Data Firehose, AWS Lambda, Amazon DynamoDB, and Amazon API Gateway are set up consistently and correctly.

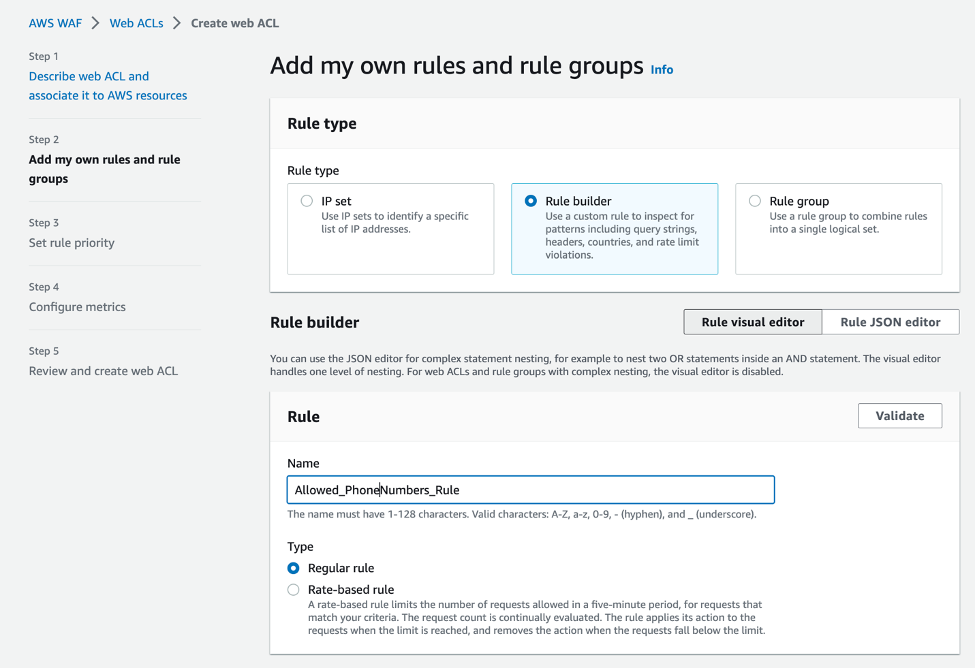

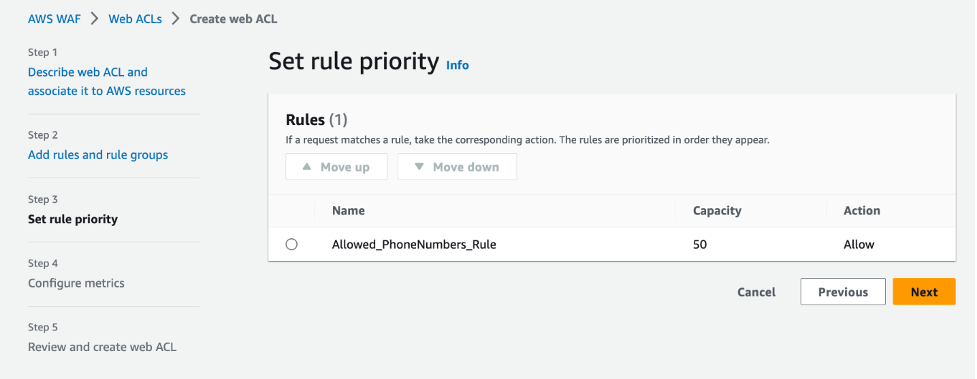

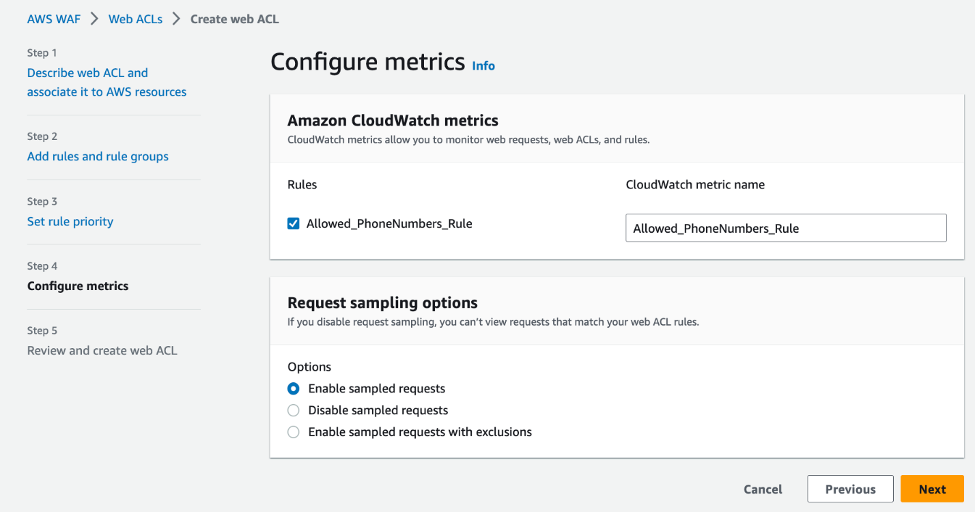

Deployment Steps:

- Download the CloudFormation template from this GitHub sample repository. The CloudFormation template is authored in YAML and named PinpointAPIBlog.yaml.

- Access the CloudFormation Console: Sign into the AWS Management Console and open the AWS CloudFormation console.

- Create a New Stack:

  - Choose Create Stack and select With new resources (standard) to start the stack creation process.

  - Under Prerequisite – Prepare template, select Template is ready.

  - Under Specify template, choose Upload a template file, and then upload the CloudFormation template file you downloaded in Step 1.

- Configure the Stack:

  - Provide a stack name, such as “pinpoint-yourprojectname-monitoring”, and paste the Pinpoint project (application) ID. Choose Next.

  - Review the stack settings, and make any changes your requirements call for. Choose Next.

- Initiate the Stack Creation: Once you’ve configured all options, acknowledge that AWS CloudFormation might create IAM resources with custom names, and then choose Create stack.

- AWS CloudFormation will now provision and configure the resources as defined in the template. This will take about 20 minutes to fully deploy. You can view the status in the AWS CloudFormation console.
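
If you prefer to script the console steps above, a sketch along the following lines could work with the AWS SDK for Python. The function name is hypothetical, and the parameter key `PinpointProjectId` is an assumption: use whatever parameter name the template actually declares. The `cfn` argument is expected to be a CloudFormation client such as `boto3.client("cloudformation")`. If the template is too large to pass inline as `TemplateBody`, upload it to Amazon S3 and pass `TemplateURL` instead.

```python
def create_monitoring_stack(cfn, stack_name, template_body, pinpoint_project_id):
    """Create the stack and block until CloudFormation reports completion."""
    cfn.create_stack(
        StackName=stack_name,
        TemplateBody=template_body,
        Parameters=[
            # Hypothetical parameter key; check the template's Parameters section.
            {
                "ParameterKey": "PinpointProjectId",
                "ParameterValue": pinpoint_project_id,
            },
        ],
        # Programmatic counterpart of acknowledging that CloudFormation
        # might create IAM resources with custom names.
        Capabilities=["CAPABILITY_NAMED_IAM"],
    )
    cfn.get_waiter("stack_create_complete").wait(StackName=stack_name)


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   with open("PinpointAPIBlog.yaml") as f:
#       create_monitoring_stack(
#           boto3.client("cloudformation"),
#           "pinpoint-yourprojectname-monitoring",
#           f.read(),
#           "<your Pinpoint project ID>",
#       )
```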

Testing the Solution:

After deployment is complete you can test (and use) the solution.

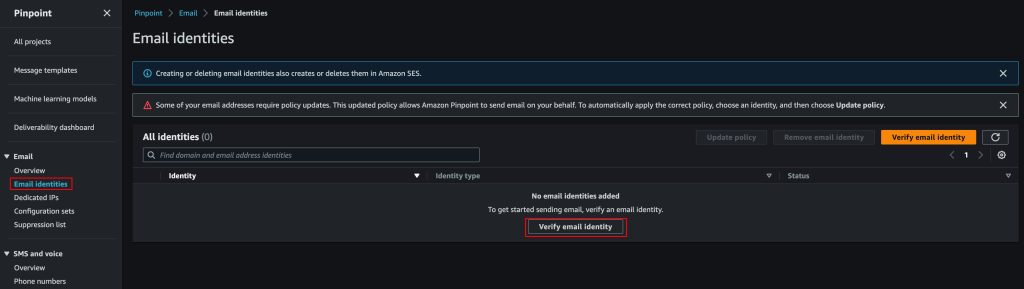

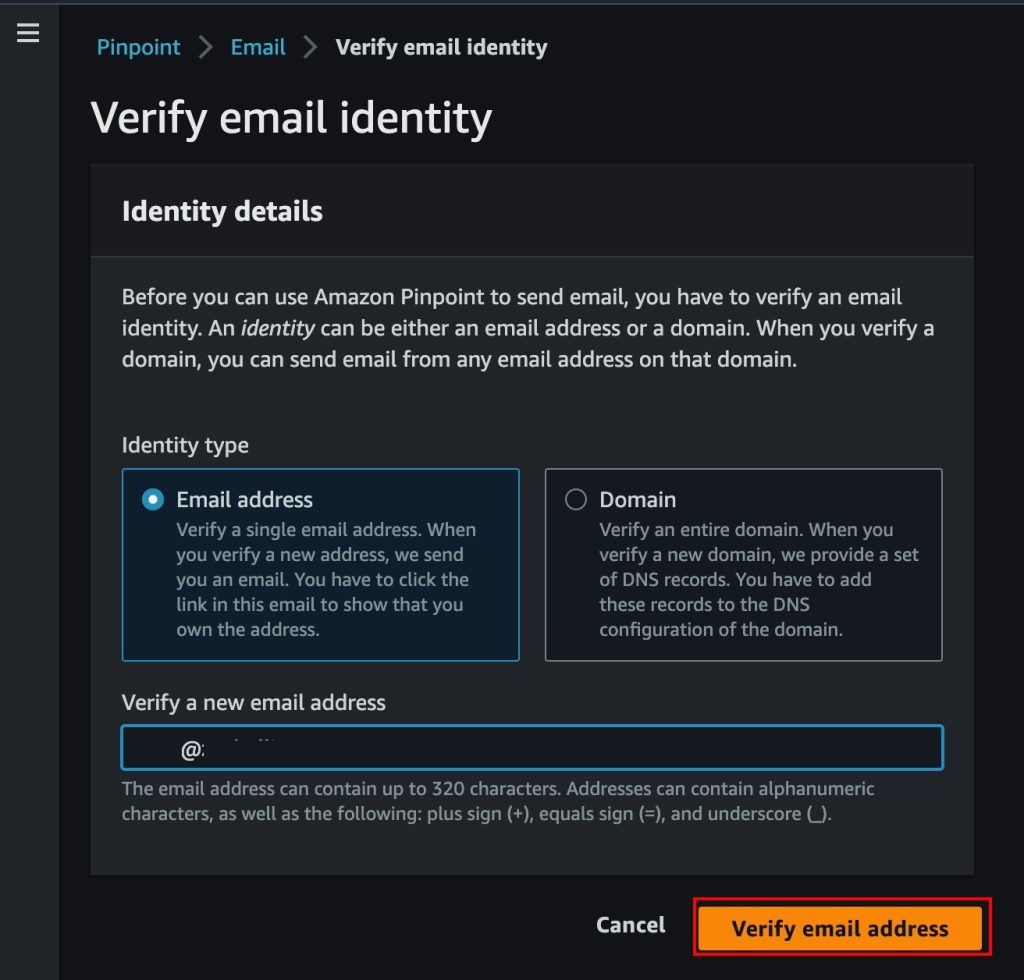

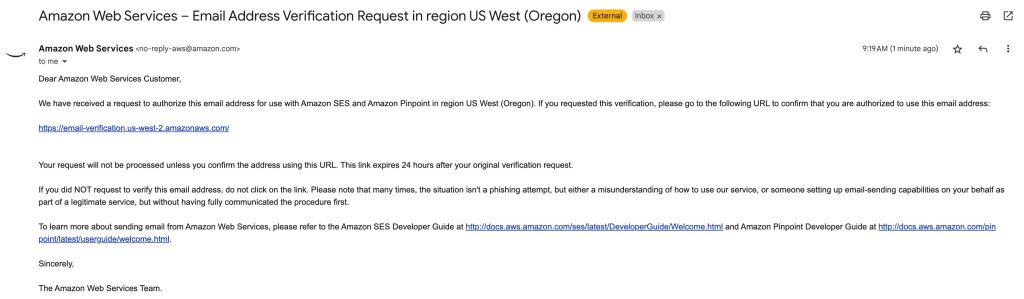

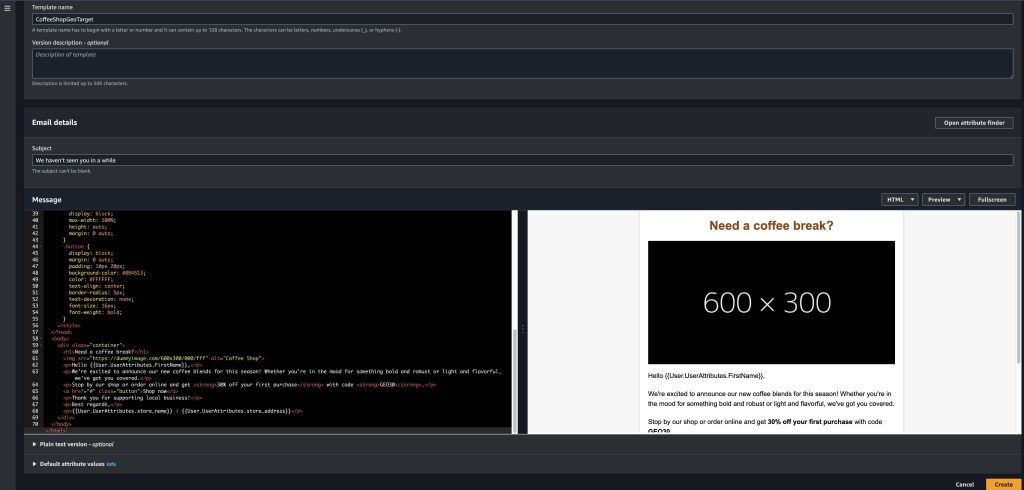

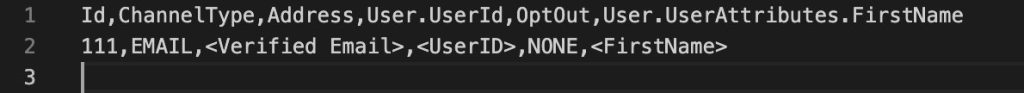

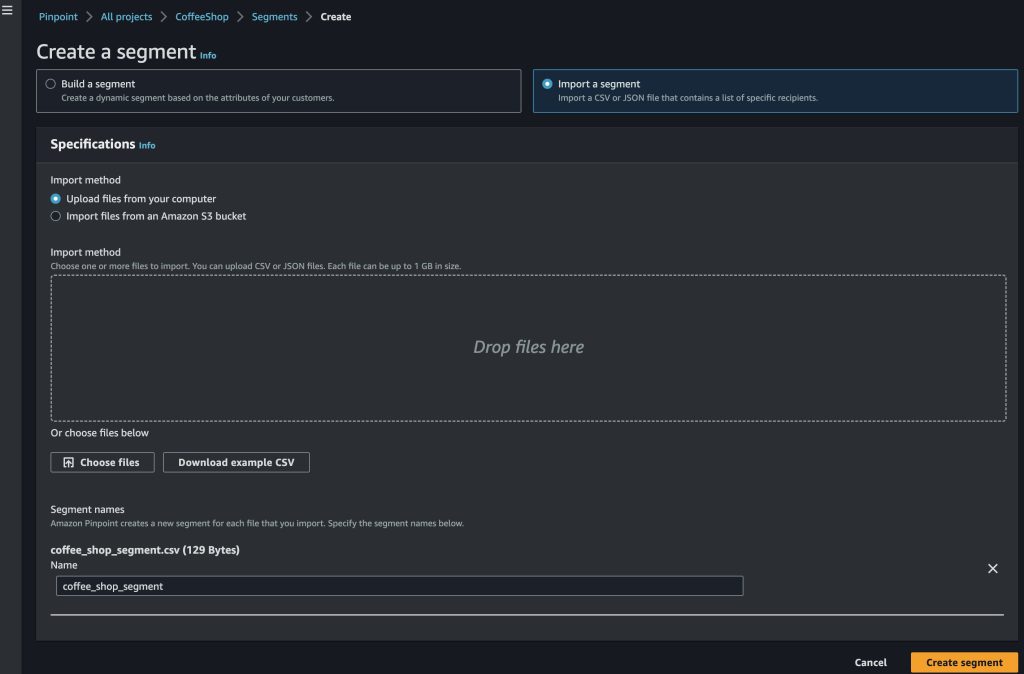

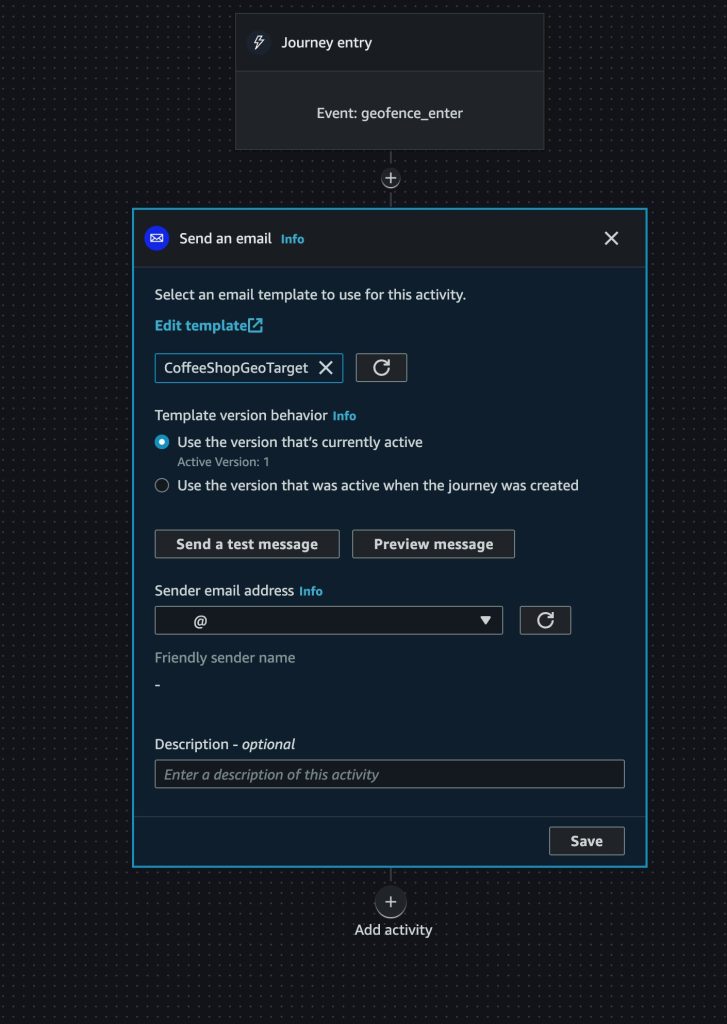

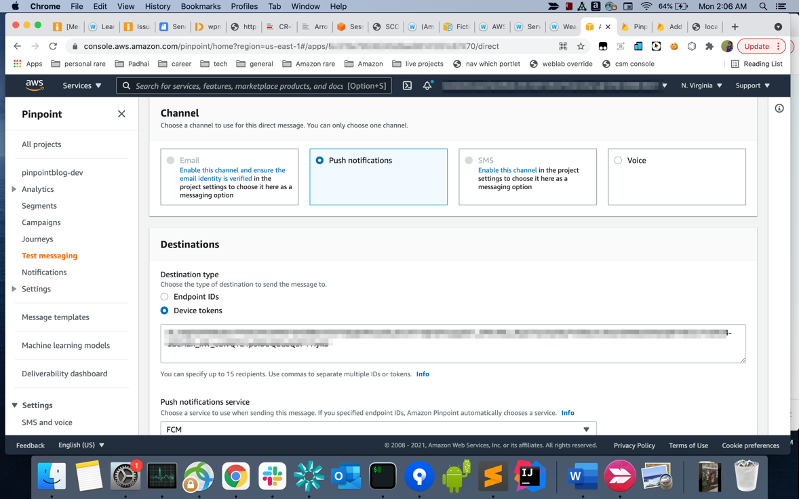

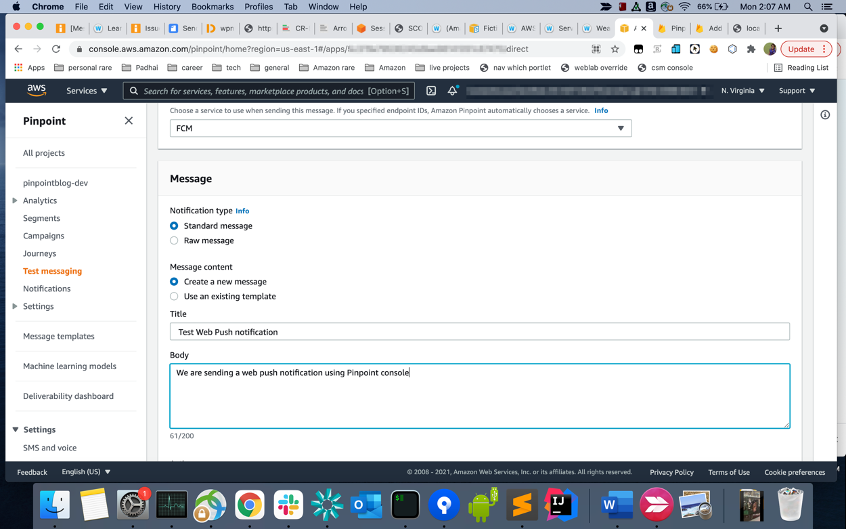

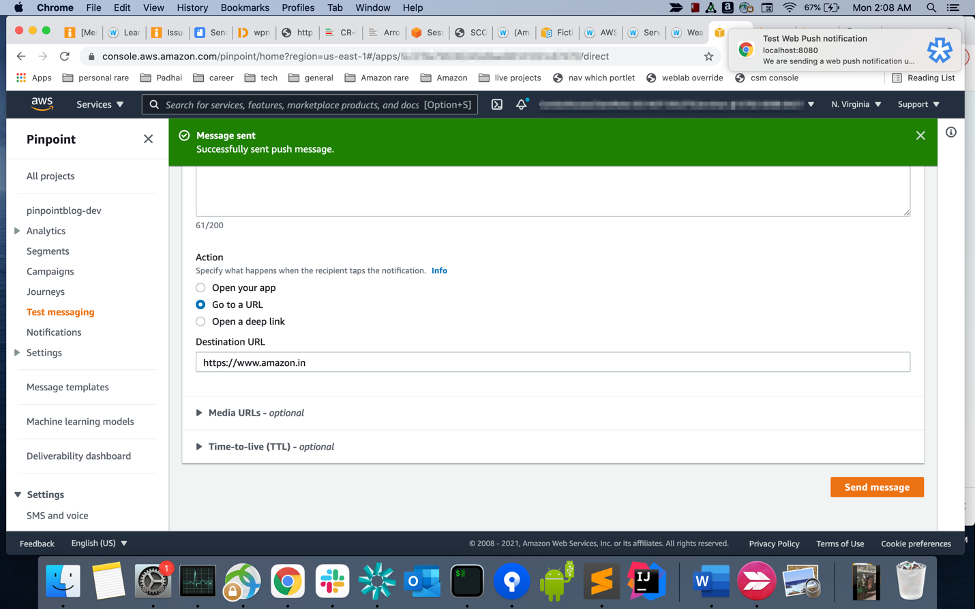

- Send Test Messages: Use the Amazon Pinpoint console to send test email and SMS messages. The Amazon Pinpoint documentation describes how to send test messages.

- Verify Lambda Execution:

- Navigate to the Amazon CloudWatch console.

- Locate and review the logs for the Lambda functions specified in the solution (`/aws/lambda/{functionName}`) to confirm that the Kinesis Data Firehose records are being processed successfully. In the log events you should see messages including INIT_START, Raw Kinesis Data Firehouse Record, etc.

- Check Amazon DynamoDB Data:

- Navigate to Amazon DynamoDB in the AWS Console.

- Select the table created by the CloudFormation template and choose ‘Explore Table Items’.

- Confirm the presence of the event data by checking if the message IDs appear in the table.

- The table should have one or more message_id entries from the test message(s) you sent above.

- Click on a message_id to review the data, and copy the message_id to a text editor on your computer. It will look like “0201123456gs3nroo-clv5s8pf-8cq2-he0a-ji96-59nr4tgva0g0-343434”

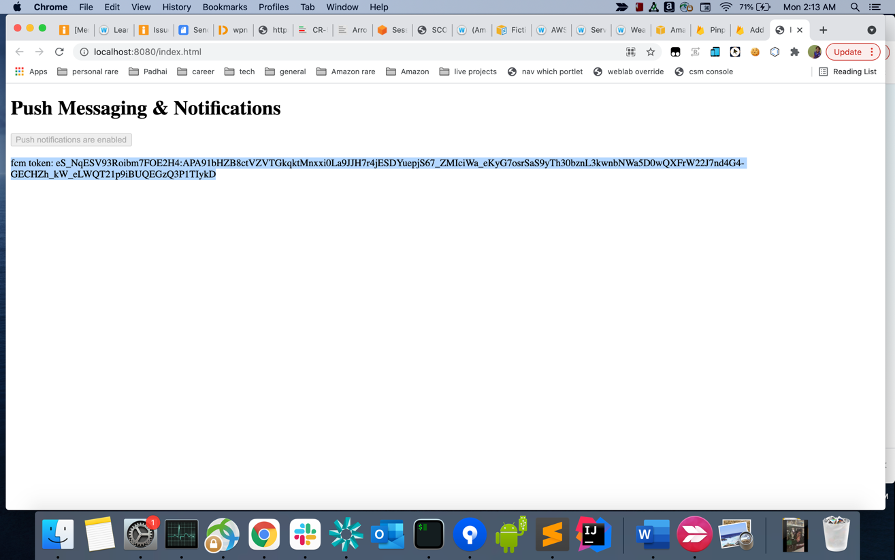

- API Gateway Testing:

- In the API Gateway console, find the MessageIdAPI.

- Navigate to Stages and copy the Invoke URL provided.

- Open the text editor on your computer and paste the APIGateway invoke URL.

- Build the request URL by appending ?message_id= and the message ID you copied to your API Gateway invoke URL. It should look like this: “https://txxxxxx0.execute-api.us-west-2.amazonaws.com/call?message_id=020100000xx3xxoo-clvxxxxf-8cq2-he0a-ji96-59nr4tgva0g0-000000”

- Paste the full URL into your browser’s address bar and press Enter. You can also pass the same URL to curl.

- The results should look like this (macOS, Chrome):

By following these deployment and testing steps, you’ll have a functioning solution for tracking message delivery status using Amazon Pinpoint, Kinesis Data Firehose, Lambda, DynamoDB, API Gateway, and CloudWatch.
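
The same API check can be scripted. The sketch below builds the request URL and fetches the status using only the Python standard library. The function names are hypothetical, and it assumes that the endpoint returns a JSON body and needs no additional authentication headers, as the browser test above suggests.

```python
import json
import urllib.parse
import urllib.request


def status_url(invoke_url, message_id):
    """Append the message_id query parameter to the stage's invoke URL."""
    query = urllib.parse.urlencode({"message_id": message_id})
    return f"{invoke_url}?{query}"


def fetch_status(invoke_url, message_id, timeout=10):
    """GET the status record; assumes the API responds with a JSON body."""
    url = status_url(invoke_url, message_id)
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Using `urlencode` rather than plain string concatenation keeps the URL valid if a message ID ever contains characters that need escaping.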

Clean Up

To help prevent unwanted charges to your AWS account, you can delete the AWS resources that you used for this walkthrough.

To delete the stack, follow these instructions:

- Open the AWS CloudFormation console.

- In the AWS CloudFormation console dashboard, select the stack you created (pinpoint-yourprojectname-monitoring).

- On the Actions menu, choose Delete Stack.

- When you are prompted to confirm, choose Yes, Delete.

- Wait for DELETE_COMPLETE to appear in the Status column for the stack.
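
The clean-up steps above can also be scripted. This is a minimal sketch with a hypothetical function name; `cfn` is expected to be a CloudFormation client such as `boto3.client("cloudformation")`, and the waiter blocks until the stack reaches DELETE_COMPLETE or raises an error if deletion fails.

```python
def delete_stack_and_wait(cfn, stack_name):
    """Delete the stack, then wait for CloudFormation to finish removing it."""
    cfn.delete_stack(StackName=stack_name)
    cfn.get_waiter("stack_delete_complete").wait(StackName=stack_name)


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   delete_stack_and_wait(
#       boto3.client("cloudformation"), "pinpoint-yourprojectname-monitoring"
#   )
```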

Next steps

The solution in this blog post gives you an API endpoint for querying message status. The next step is to store and analyze the raw data based on your business requirements. The Amazon Kinesis Data Firehose delivery stream used in this post can stream the Pinpoint events to an AWS database or to object storage such as Amazon S3. Once the data is stored, you can catalog it using AWS Glue, query it with SQL using Amazon Athena, and create custom dashboards using Amazon QuickSight, a cloud-native, serverless business intelligence (BI) service with native machine learning (ML) integrations.

Conclusion

The integration of AWS services such as Kinesis, Lambda, DynamoDB, and API Gateway with Amazon Pinpoint transforms your ability to connect with customers through precise event data retrieval and analysis. This solution provides a stream of real-time data, versatile storage options, and a secure method for accessing detailed information, all of which are critical for optimizing your communication strategies.

By leveraging these insights, you can fine-tune your email and SMS campaigns for maximum impact, ensuring every message counts in the broader narrative of customer engagement and satisfaction. Harness the power of AWS and Amazon Pinpoint to not just reach out but truly connect with your audience, elevating your customer relationships to new heights.

Considerations/Troubleshooting

When implementing a solution involving AWS Lambda, Kinesis Data Streams, Kinesis Data Firehose, and DynamoDB, keep the following considerations in mind:

- Scalability and Performance: Assess the scalability needs of your system. Lambda functions scale automatically, but it’s important to configure concurrency settings and memory allocation based on expected load. Similarly, for Kinesis Streams and Firehose, consider the volume of data and the throughput rate. For DynamoDB, ensure that the table’s read and write capacity settings align with your data processing requirements.

- Error Handling and Retries: Implement robust error handling within the Lambda functions to manage processing failures. Kinesis Data Streams and Firehose have different retry behaviors and mechanisms. Understand and configure these settings to handle failed data processing attempts effectively. In DynamoDB, consider the use of conditional writes to handle potential data inconsistencies.

- Security and IAM Permissions: Secure your AWS resources by adhering to the principle of least privilege. Define IAM roles and policies that grant the Lambda function only the necessary permissions to interact with Kinesis and DynamoDB. Ensure that data in transit and at rest is encrypted as required, using AWS KMS or other encryption mechanisms.

- Monitoring and Logging: Use Amazon CloudWatch to monitor and log the performance and execution of Lambda functions, as well as Kinesis and DynamoDB operations. Set up alerts for any anomalies or thresholds that indicate issues in data processing or performance bottlenecks.
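
As one illustration of the conditional writes mentioned under error handling, the sketch below records a status timestamp only if that column has not been set, so a record that Firehose retries does not overwrite an earlier write. The function name is hypothetical, and the code assumes `message_id` is the table’s only key attribute. The `table` argument is expected to behave like a boto3 DynamoDB `Table` resource, which raises an error carrying the code `ConditionalCheckFailedException` when the condition is not met.

```python
def record_status_once(table, message_id, column, timestamp):
    """Write a status timestamp only if that column is not set yet.

    Returns True if the write happened and False if the column already
    held a value.
    """
    try:
        table.update_item(
            Key={"message_id": message_id},  # assumed key schema
            UpdateExpression="SET #col = :ts",
            ConditionExpression="attribute_not_exists(#col)",
            # A name placeholder avoids clashes with DynamoDB reserved words.
            ExpressionAttributeNames={"#col": column},
            ExpressionAttributeValues={":ts": timestamp},
        )
        return True
    except Exception as exc:
        # botocore's ClientError exposes the service error code on `.response`.
        error = getattr(exc, "response", {}).get("Error", {})
        if error.get("Code") == "ConditionalCheckFailedException":
            return False
        raise
```

With this first-write-wins policy, a status that can legitimately occur more than once keeps its earliest timestamp; if you need the latest one instead, a different condition expression would be required.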

About the Authors