Post Syndicated from Tony Stricker original https://aws.amazon.com/blogs/big-data/enrich-your-customer-data-with-geospatial-insights-using-amazon-redshift-aws-data-exchange-and-amazon-quicksight/

It always pays to know more about your customers, and AWS Data Exchange makes it straightforward to use publicly available census data to enrich your customer dataset.

The United States Census Bureau conducts the US census every 10 years and gathers household survey data. This data is anonymized, aggregated, and made available for public use. The smallest geographic area for which the Census Bureau collects and aggregates data is the census block. Census blocks are formed by streets, roads, railroads, streams and other bodies of water, other visible physical and cultural features, and the legal boundaries shown on Census Bureau maps.

If you know the census block in which a customer lives, you are able to make general inferences about their demographic characteristics. With these new attributes, you are able to build a segmentation model to identify distinct groups of customers that you can target with personalized messaging. This data is available to subscribe to on AWS Data Exchange—and with data sharing, you don’t need to pay to store a copy of it in your account in order to query it.

In this post, we show how to use customer addresses to enrich a dataset with additional demographic details from the US Census Bureau dataset.

Solution overview

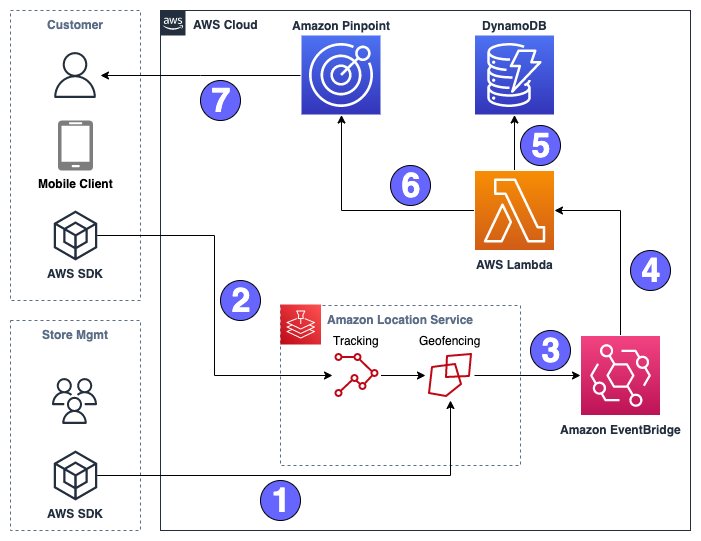

The solution includes the following high-level steps:

- Set up an Amazon Redshift Serverless endpoint and load customer data.

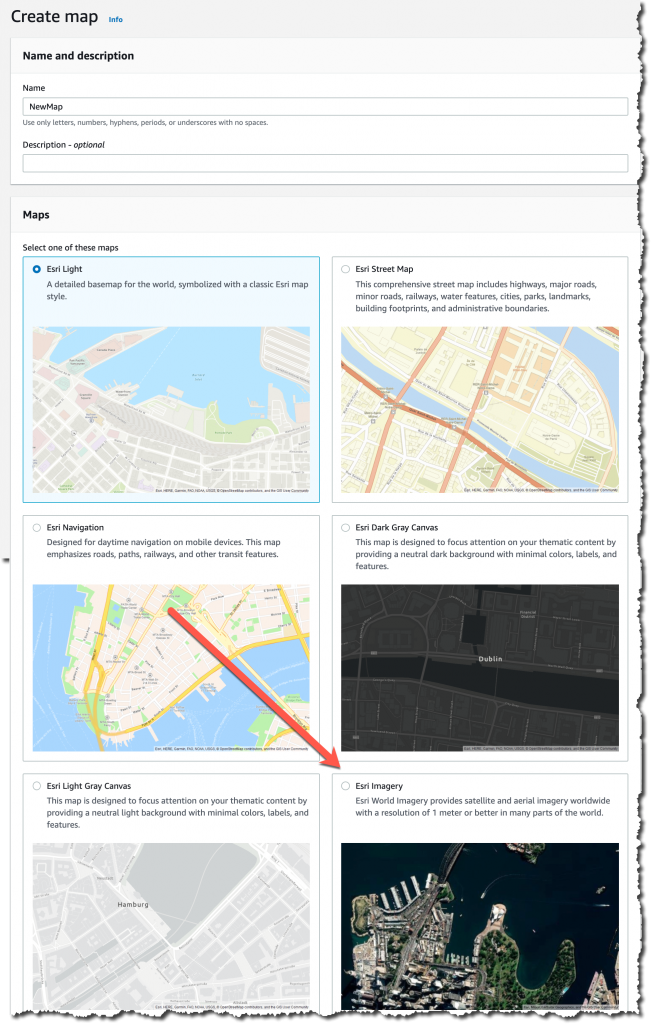

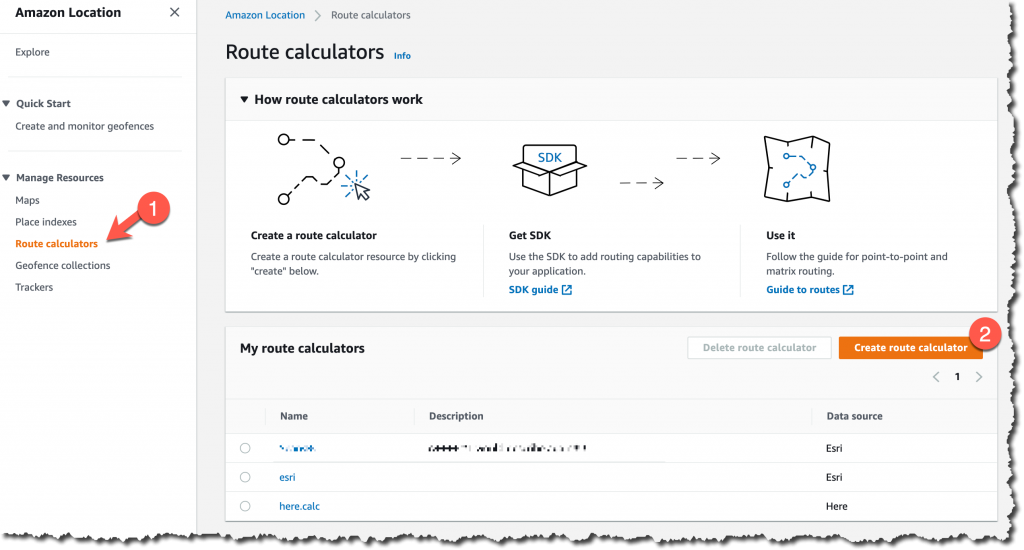

- Set up a place index in Amazon Location Service.

- Write an AWS Lambda user-defined function (UDF) to call Location Service from Amazon Redshift.

- Subscribe to census data on AWS Data Exchange.

- Use geospatial queries to tag addresses to census blocks.

- Create a new customer dataset in Amazon Redshift.

- Evaluate new customer data in Amazon QuickSight.

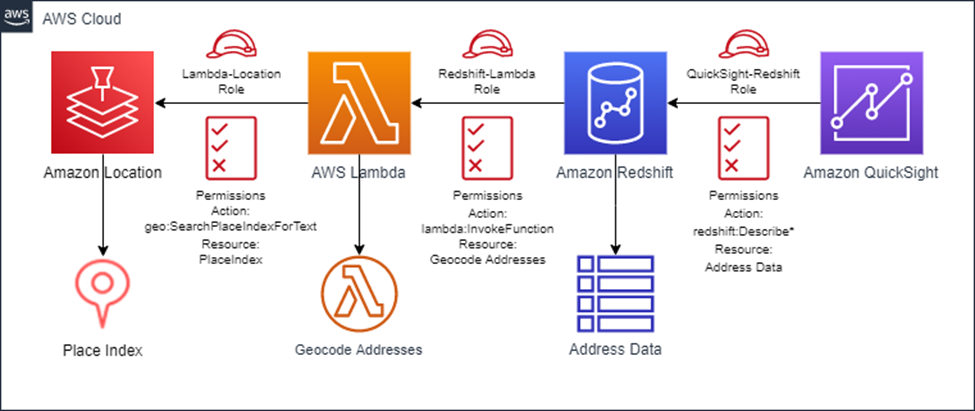

The following diagram illustrates the solution architecture.

Prerequisites

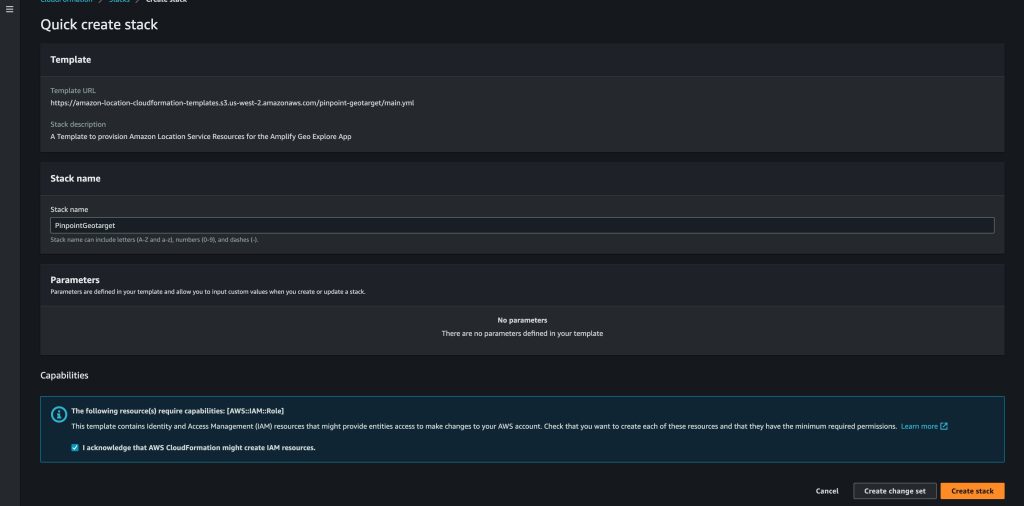

You can use the following AWS CloudFormation template to deploy the required infrastructure. Before deployment, you need to sign up for QuickSight access through the AWS Management Console.

Load generic address data to Amazon Redshift

Amazon Redshift is a fully managed, petabyte-scale data warehouse service in the cloud. Redshift Serverless makes it straightforward to run analytics workloads of any size without having to manage data warehouse infrastructure.

To load our address data, we first create a Redshift Serverless workgroup. Then we use Amazon Redshift Query Editor v2 to load customer data from Amazon Simple Storage Service (Amazon S3).

Create a Redshift Serverless workgroup

There are two primary components of the Redshift Serverless architecture:

- Namespace – A collection of database objects and users. Namespaces group together all of the resources you use in Redshift Serverless, such as schemas, tables, users, datashares, and snapshots.

- Workgroup – A collection of compute resources. Workgroups have network and security settings that you can configure using the Redshift Serverless console, the AWS Command Line Interface (AWS CLI), or the Redshift Serverless APIs.

To create your namespace and workgroup, refer to Creating a data warehouse with Amazon Redshift Serverless. For this exercise, name your workgroup sandbox and your namespace adx-demo.

Use Query Editor v2 to load customer data from Amazon S3

You can use Query Editor v2 to submit queries and load data to your data warehouse through a web interface. To configure Query Editor v2 for your AWS account, refer to Data load made easy and secure in Amazon Redshift using Query Editor V2. After it’s configured, complete the following steps:

- Use the following SQL to create the customer_data schema within the dev database in your data warehouse:

- Use the following SQL DDL to create your target table into which you’ll load your customer address data:

- Load the address_list.csv file to the table you just created. For instructions, refer to Data load made easy and secure in Amazon Redshift using Query Editor V2.

The file has no column headers and is pipe delimited (|). For information on how to load data from either Amazon S3 or your local desktop, refer to Loading data into a database.
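Before loading, it can help to confirm that the file parses the way you expect. The following is a minimal sketch, not part of the original walkthrough, that reads headerless, pipe-delimited rows with Python's standard csv module and checks the field count on every line. The column names and sample rows are hypothetical placeholders; substitute the columns of your own target table.

```python
import csv
import io

# Hypothetical column names; the real ones come from your target table DDL.
COLUMNS = ["customer_id", "street", "city", "state", "zip"]


def read_pipe_delimited(text, columns=COLUMNS):
    """Parse headerless, pipe-delimited text into a list of dicts."""
    reader = csv.reader(io.StringIO(text), delimiter="|")
    rows = []
    for line_number, fields in enumerate(reader, start=1):
        if not fields:
            continue  # skip blank lines
        if len(fields) != len(columns):
            raise ValueError(
                f"line {line_number}: expected {len(columns)} fields, got {len(fields)}"
            )
        rows.append(dict(zip(columns, fields)))
    return rows


# Made-up sample rows, for illustration only.
SAMPLE = (
    "1|1600 Pennsylvania Ave NW|Washington|DC|20500\n"
    "2|1 Main St|Columbus|OH|43215\n"
)

if __name__ == "__main__":
    for row in read_pipe_delimited(SAMPLE):
        print(row)
```

A line with the wrong number of fields raises a ValueError that names the offending line, which is usually easier to act on than a load error reported by the warehouse.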

Use Location Service to geocode and enrich address data

Location Service lets you add location data and functionality to applications, which includes capabilities such as maps, points of interest, geocoding, routing, geofences, and tracking.

Our data is in Amazon Redshift, so we need to access the Location Service APIs using SQL statements. Each row of data contains an address that we want to enrich and geotag using the Location Service APIs. Amazon Redshift allows developers to create UDFs using a SQL SELECT clause, Python, or Lambda.

Lambda is a compute service that lets you run code without provisioning or managing servers. With Lambda UDFs, you can write custom functions with complex logic and integrate with third-party components. Scalar Lambda UDFs return one result for each input row. Amazon Redshift sends rows to the function in batches, so a single invocation can receive many rows and must return one result for each of them.

For this post, we write a Lambda function that uses the Location Service API to geotag and validate our customer addresses. Then we register this Lambda function as a UDF with our Redshift instance, allowing us to call the function from a SQL command.
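As a rough sketch of what such a function can look like (the referenced post has the authors' actual implementation), the handler below follows the Lambda UDF contract: Amazon Redshift passes the input rows in an `arguments` array and expects a JSON response with one entry in `results` per row. The assumptions here are that the UDF takes a single full-address string, that the output field names (`Label`, `Longitude`, `Latitude`) are illustrative, and that the helper names `geocode_with_location_service` and `handle_batch` are hypothetical.

```python
import json

PLACE_INDEX = "placeindex.redshift"  # place index name used in this post

_client = None  # created on first use and reused across invocations


def geocode_with_location_service(address):
    """Geocode one address with Amazon Location Service (needs boto3 and AWS credentials)."""
    global _client
    if _client is None:
        import boto3  # imported lazily so the batch logic can be tested without AWS access
        _client = boto3.client("location")
    response = _client.search_place_index_for_text(
        IndexName=PLACE_INDEX, Text=address, MaxResults=1
    )
    results = response.get("Results", [])
    if not results:
        return None
    place = results[0]["Place"]
    longitude, latitude = place["Geometry"]["Point"]  # returned as [longitude, latitude]
    return {"Label": place.get("Label"), "Longitude": longitude, "Latitude": latitude}


def handle_batch(event, geocode):
    """Build the JSON response Redshift expects for one batch of input rows."""
    try:
        results = []
        for arguments in event["arguments"]:  # one inner list per input row
            address = arguments[0]  # assumption: the UDF takes a single address argument
            place = geocode(address) if address else None
            # Each result is a JSON string, or None (read by Redshift as NULL).
            results.append(json.dumps(place) if place is not None else None)
        return json.dumps(
            {"success": True, "num_records": len(results), "results": results}
        )
    except Exception as error:
        return json.dumps({"success": False, "error_msg": str(error)})


def lambda_handler(event, context):
    return handle_batch(event, geocode_with_location_service)
```

Passing the geocoder into `handle_batch` keeps the batching logic separate from the AWS call, so you can exercise it locally with a stub before deploying.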

For instructions to create a Location Service place index and create your Lambda function and scalar UDF, refer to Access Amazon Location Service from Amazon Redshift. For this post, we use Esri as a provider and name the place index placeindex.redshift.

Test your new function with the following code, which returns the coordinates of the White House in Washington, DC:

Subscribe to demographic data from AWS Data Exchange

AWS Data Exchange is a data marketplace with more than 3,500 products from over 300 providers delivered—through files, APIs, or Amazon Redshift queries—directly to the data lakes, applications, analytics, and machine learning models that use it.

First, we need to give our Redshift namespace permission via AWS Identity and Access Management (IAM) to access subscriptions on AWS Data Exchange. Then we can subscribe to our sample demographic data. Complete the following steps:

- On the IAM console, add the AWSDataExchangeSubscriberFullAccess managed policy to the Amazon Redshift commands access role you assigned when creating the namespace.

- On the AWS Data Exchange console, navigate to the dataset ACS – Sociodemographics (USA, Census Block Groups, 2019), provided by CARTO.

- Choose Continue to subscribe, then choose Subscribe.

The subscription may take a few minutes to configure.

- When your subscription is in place, navigate back to the Redshift Serverless console.

- In the navigation pane, choose Datashares.

- On the Subscriptions tab, choose the datashare that you just subscribed to.

- On the datashare details page, choose Create database from datashare.

- Choose the namespace you created earlier and provide a name for the new database that will hold the shared objects from the dataset you subscribed to.

In Query Editor v2, you should see the new database you just created and two new tables: one that holds the block group polygons and another that holds the demographic information for each block group.

Join geocoded customer data to census data with geospatial queries

There are two primary types of spatial data: raster and vector data. Raster data is represented as a grid of pixels and is beyond the scope of this post. Vector data consists of vertices, edges, and polygons. With geospatial data, vertices are represented as latitude and longitude points, and edges are the connections between pairs of vertices, like the road connecting two intersections on a map. A polygon is a set of vertices with a series of connecting edges that form a closed shape. A simple rectangle is a polygon, just as the state border of Ohio can be represented as a polygon. The geography_usa_blockgroup_2019 dataset that you subscribed to has 220,134 rows, each representing a single census block group and its geographic shape.

Amazon Redshift supports the storage and querying of vector-based spatial data with the GEOMETRY and GEOGRAPHY data types. You can use Redshift SQL functions to perform queries such as a point in polygon operation to determine if a given latitude/longitude point falls within the boundaries of a given polygon (such as state or county boundary). In this dataset, you can observe that the geom column in geography_usa_blockgroup_2019 is of type GEOMETRY.

Our goal is to determine which census block group (polygon) each of our geotagged addresses falls within so we can enrich our customer records with details that we know about that block group. Complete the following steps:

- Build a new table with the geocoding results from our UDF:

- Use the following code to extract the different address fields and latitude/longitude coordinates from the JSON column and create a new table with the results:

This code uses the ST_POINT function to create a new column from the latitude/longitude coordinates called address_point of type GEOMETRY and subtype POINT. It uses the ST_SetSRID geospatial function to set the spatial reference identifier (SRID) of the new column to 4326.

The SRID defines the spatial reference system to be used when evaluating the geometry data. It’s important when joining or comparing geospatial data that they have matching SRIDs. You can check the SRID of an existing geometry column by using the ST_SRID function. For more information on SRIDs and GEOMETRY data types, refer to Querying spatial data in Amazon Redshift.

- Now that your customer addresses are geocoded as latitude/longitude points in a geometry column, you can use a join to identify which census block group shape each point falls within:

The preceding code creates a new table called customer_addresses_with_census, which joins each customer address to the census block group it belongs to as well as the demographic data associated with that block group.

To do this, you used the ST_CONTAINS function, which accepts two geometry values as input and returns TRUE if the 2D projection of the first geometry contains the second. In our case, census block groups are represented as polygons and addresses are represented as points. The join condition is satisfied when the point falls within the boundaries of the polygon.
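To make the point-in-polygon idea concrete, here is a small, self-contained sketch of the classic ray-casting test in plain Python. It only illustrates the concept and is not how Amazon Redshift implements ST_CONTAINS; the function names, the two square "block groups," and the customer points are all made up, and the coordinates are treated as simple planar x/y values.

```python
def point_in_polygon(point, polygon):
    """Return True if point (x, y) lies inside polygon, a list of (x, y) vertices."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]  # wrap around to close the shape
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside  # each crossing to the right flips the state
    return inside


def tag_points(points, polygons):
    """Map each point id to the id of the first polygon that contains it, or None."""
    tagged = {}
    for point_id, point in points.items():
        tagged[point_id] = next(
            (pid for pid, poly in polygons.items() if point_in_polygon(point, poly)),
            None,
        )
    return tagged


# Made-up shapes and points, for illustration only.
POLYGONS = {
    "bg_a": [(0, 0), (2, 0), (2, 2), (0, 2)],
    "bg_b": [(2, 0), (4, 0), (4, 2), (2, 2)],
}
POINTS = {"c1": (1, 1), "c2": (3, 1), "c3": (5, 5)}

if __name__ == "__main__":
    print(tag_points(POINTS, POLYGONS))
```

The nested loop in `tag_points` plays the role of the spatial join: every point is compared against the polygons until one contains it. In the warehouse, the join on ST_CONTAINS expresses the same relationship declaratively.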

Visualize the new demographic data with QuickSight

QuickSight is a cloud-scale business intelligence (BI) service that you can use to deliver easy-to-understand insights to the people who you work with, wherever they are. QuickSight connects to your data in the cloud and combines data from many different sources.

First, let’s build some new calculated fields that will help us better understand the demographics of our customer base. We can do this in QuickSight, or we can use SQL to build the columns in a Redshift view. The following is the code for a Redshift view:

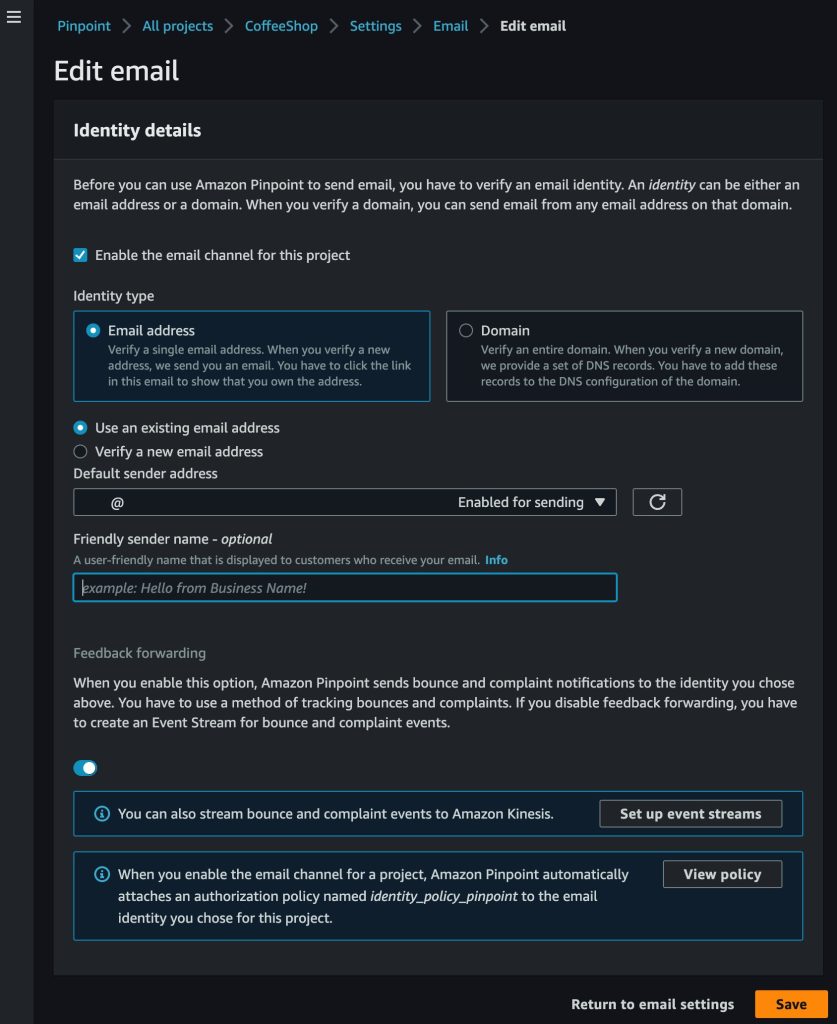

To get QuickSight to talk to our Redshift Serverless endpoint, complete the following steps:

- Manually authorize connections from QuickSight to Redshift clusters. For instructions, refer to Authorizing connections from Amazon QuickSight to Amazon Redshift clusters (stop after Step 19).

- Configure the VPC connection between QuickSight and the Redshift Serverless endpoint.

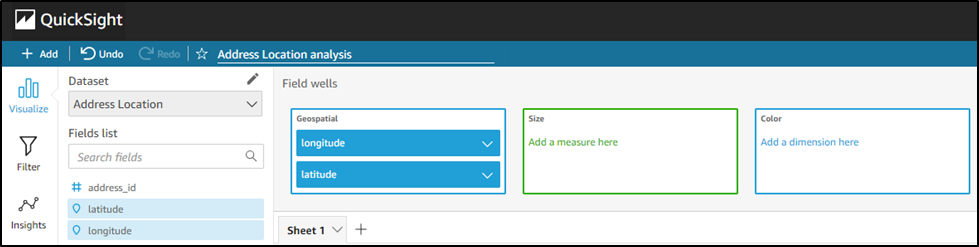

Now you can create a new dataset in QuickSight.

- On the QuickSight console, choose Datasets in the navigation pane.

- Choose New dataset.

- Create a dataset from a new data source and choose the Redshift: Manual connect option.

- Provide the connection information for your Redshift Serverless workgroup.

You need the endpoint for your workgroup and the user name and password that you created when you set up your workgroup. You can find your workgroup’s endpoint on the Redshift Serverless console by navigating to your workgroup configuration. The following screenshot is an example of the connection settings needed. Notice that the connection type is the name of the VPC connection that you previously configured in QuickSight. When you copy the endpoint from the Redshift console, be sure to remove the port number and database name from the end of the URL before entering it in the field.

- Save the new data source configuration.

You’ll be prompted to choose the table you want to use for your dataset.

- Choose the new view that you created that has your new derived fields.

- Select Directly query your data.

This will connect your visualizations directly to the data in the database rather than ingesting data into the QuickSight in-memory data store.

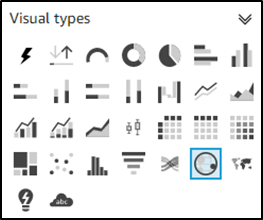

- To create a histogram of median income level, choose the blank visual on Sheet1 and then choose the histogram visual icon under Visual types.

- Choose median_income under Fields list and drag it to the Value field well.

This builds a histogram showing the distribution of median_income for our customers based on the census block group in which they live.
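The connection step earlier in this section asks you to remove the port number and database name from the copied endpoint. As a small sketch of that cleanup, assuming the copied value has the shape host:port/database, the hypothetical helper below keeps only the host. The example endpoint is a made-up placeholder, not a real workgroup.

```python
def endpoint_host(endpoint):
    """Return only the host part of a 'host:port/database' endpoint string."""
    host_and_port = endpoint.strip().split("/", 1)[0]  # drop the database suffix
    return host_and_port.split(":", 1)[0]  # drop the port


# Placeholder endpoint for illustration; yours will differ.
EXAMPLE = "sandbox.123456789012.us-east-1.redshift-serverless.amazonaws.com:5439/dev"

if __name__ == "__main__":
    print(endpoint_host(EXAMPLE))
```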

Conclusion

In this post, we demonstrated how companies can use open census data available on AWS Data Exchange to effortlessly gain a high-level understanding of their customer base from a demographic standpoint. This basic understanding of customers based on where they live can serve as the foundation for more targeted marketing campaigns and even influence product development and service offerings.

As always, AWS welcomes your feedback. Please leave your thoughts and questions in the comments section.

About the Authors

Tony Stricker is a Principal Technologist on the Data Strategy team at AWS, where he helps senior executives adopt a data-driven mindset and align their people/process/technology in ways that foster innovation and drive towards specific, tangible business outcomes. He has a background as a data warehouse architect and data scientist and has delivered solutions into production across multiple industries including oil and gas, financial services, public sector, and manufacturing. In his spare time, Tony likes to hang out with his dog and cat, work on home improvement projects, and restore vintage Airstream campers.

Alan Peaty is a Senior Partner Solutions Architect at AWS. Alan helps Global Systems Integrators (GSIs) and Global Independent Software Vendors (GISVs) solve complex customer challenges using AWS services. Prior to joining AWS, Alan worked as an architect at systems integrators to translate business requirements into technical solutions. Outside of work, Alan is an IoT enthusiast and a keen runner who loves to hit the muddy trails of the English countryside.

Parag Srivastava is a Solutions Architect at AWS, helping enterprise customers with successful cloud adoption and migration. During his professional career, he has been extensively involved in complex digital transformation projects. He is also passionate about building innovative solutions around geospatial aspects of addresses.