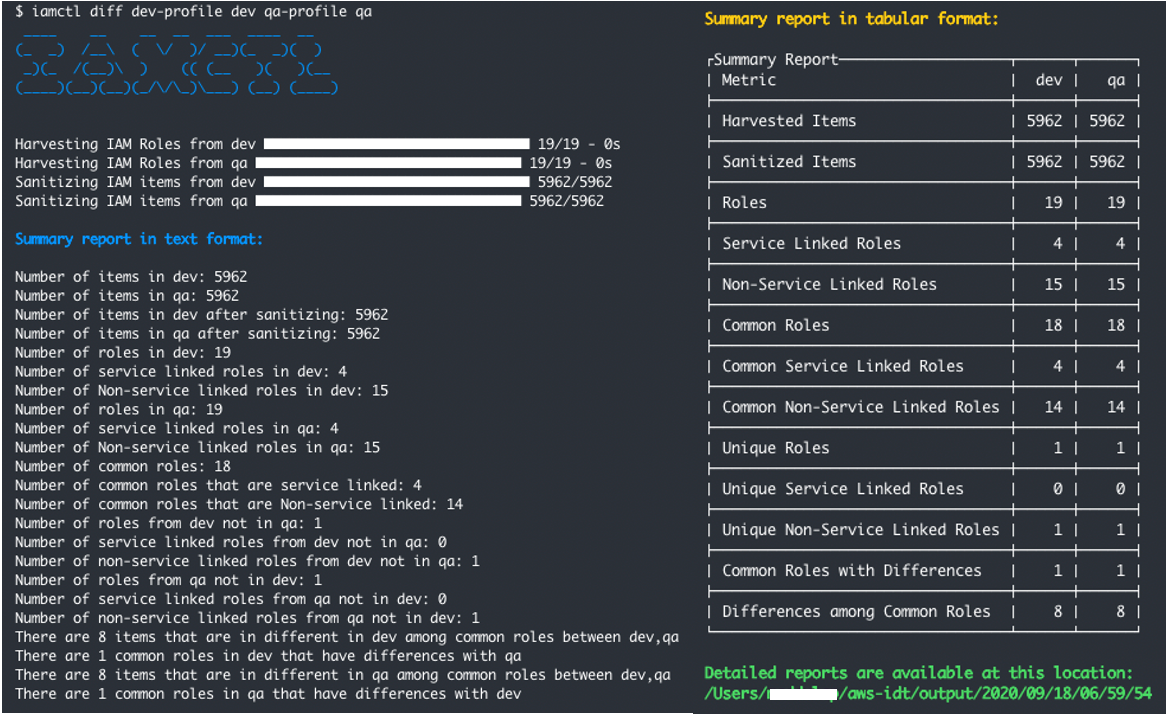

Post Syndicated from Nini Ren original https://aws.amazon.com/blogs/security/refine-permissions-for-externally-accessible-roles-using-iam-access-analyzer-and-iam-action-last-accessed/

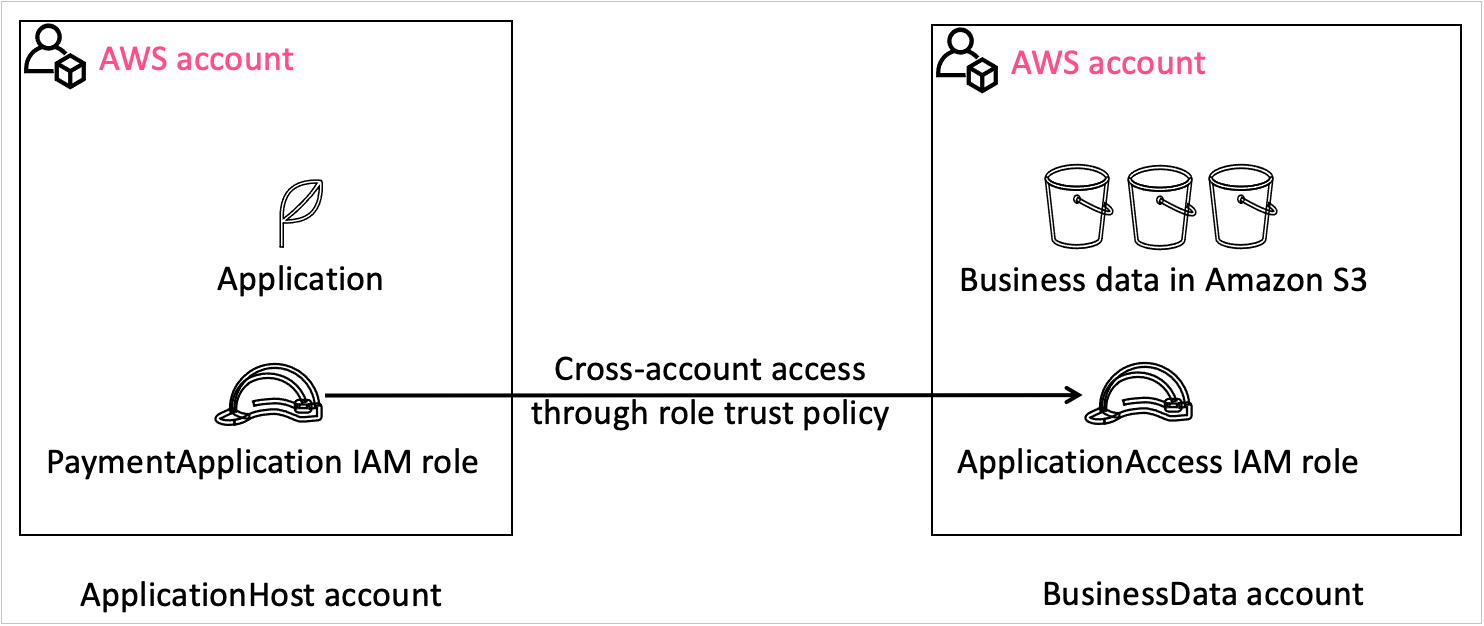

When you build on Amazon Web Services (AWS) across accounts, you might use an AWS Identity and Access Management (IAM) role to allow an authenticated identity from outside your account (such as an IAM entity or a user from an external identity provider) to access the resources in your account. IAM roles have two types of policies attached to them: a trust policy that specifies which entities can assume the role, and a permissions policy that defines what actions the role can take. This blog post focuses on how to use AWS Identity and Access Management Access Analyzer cross-account access findings and IAM action last accessed information to refine the permissions policies of IAM roles whose trust policy grants access to external entities.

IAM Access Analyzer helps you set, verify, and refine permissions. To learn more about how IAM Access Analyzer guides you toward least-privilege permissions, visit Using AWS IAM Access Analyzer. Action last accessed information helps you identify unused permissions and refine the access of your IAM roles to only the actions they use. IAM now provides action last accessed information for more than 140 services such as Amazon Kinesis Data Streams and Data Firehose, Amazon DynamoDB, and Amazon Simple Queue Service (Amazon SQS).

This blog post walks you through how to use IAM Access Analyzer and action last accessed to refine the required permissions for your IAM roles that have a trust policy, which allows entities outside of your account to assume a role and access your resources.

Use IAM roles to grant access to an external entity

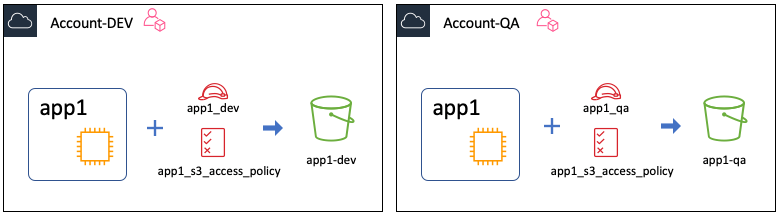

You can create an IAM role that grants permissions for an entity outside your account to access the resources in your account. For example, if you’re an application developer, you might grant cross-account access to your AWS resources by using a role and attaching a trust policy to the role.

To allow an external entity access to your resources by using a role, you first create a role with a role trust policy to grant access to entities outside your account, and then grant permissions that specify which actions the role can take. The external entities can then assume the role in your account and access your resources based on the permissions you granted to the role. See Cross-account access using roles for more information.
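As a rough illustration of the two policy types described above, the following Python sketch builds a trust policy and a permissions policy as plain dictionaries and lists the AWS principals in the trust policy that belong to another account. The account IDs, the policy contents, and the helper `external_aws_principals` are hypothetical and exist only for this example. The check is deliberately simplistic (it ignores conditions and non-AWS principals) and is not a substitute for IAM Access Analyzer.

```python
# Hypothetical account IDs, used only for illustration.
OWN_ACCOUNT_ID = "111122223333"
EXTERNAL_ACCOUNT_ID = "444455556666"

# Trust policy: states who may assume the role.
trust_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{EXTERNAL_ACCOUNT_ID}:root"},
            "Action": "sts:AssumeRole",
        }
    ],
}

# Permissions policy: states what the role may do once assumed.
# The broad "dynamodb:*" grant is the kind of permission this post refines.
permissions_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "dynamodb:*", "Resource": "*"}
    ],
}


def external_aws_principals(policy, own_account_id):
    """Return AWS principals in Allow statements that are outside own_account_id.

    Simplified on purpose: conditions, Service and Federated principals
    are not evaluated.
    """
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be a bare object
        statements = [statements]

    external = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        principal = statement.get("Principal", {})
        if principal == "*":
            external.append("*")
            continue
        aws = principal.get("AWS", [])
        if isinstance(aws, str):
            aws = [aws]
        for entry in aws:
            if entry == "*":
                external.append(entry)
                continue
            # An entry is either a bare account ID or an ARN whose
            # fifth colon-separated field is the account ID.
            account = entry if entry.isdigit() else entry.split(":")[4]
            if account != own_account_id:
                external.append(entry)
    return external


print(external_aws_principals(trust_policy, OWN_ACCOUNT_ID))
```

Keeping the two documents separate mirrors how IAM treats them: the trust policy decides who can assume the role, and the permissions policy decides what the role can do afterward.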

You should restrict the access of roles that grant access outside of your account to just the permissions required to perform a specific task.

Use IAM Access Analyzer cross-account access findings to identify roles that grant access to external entities

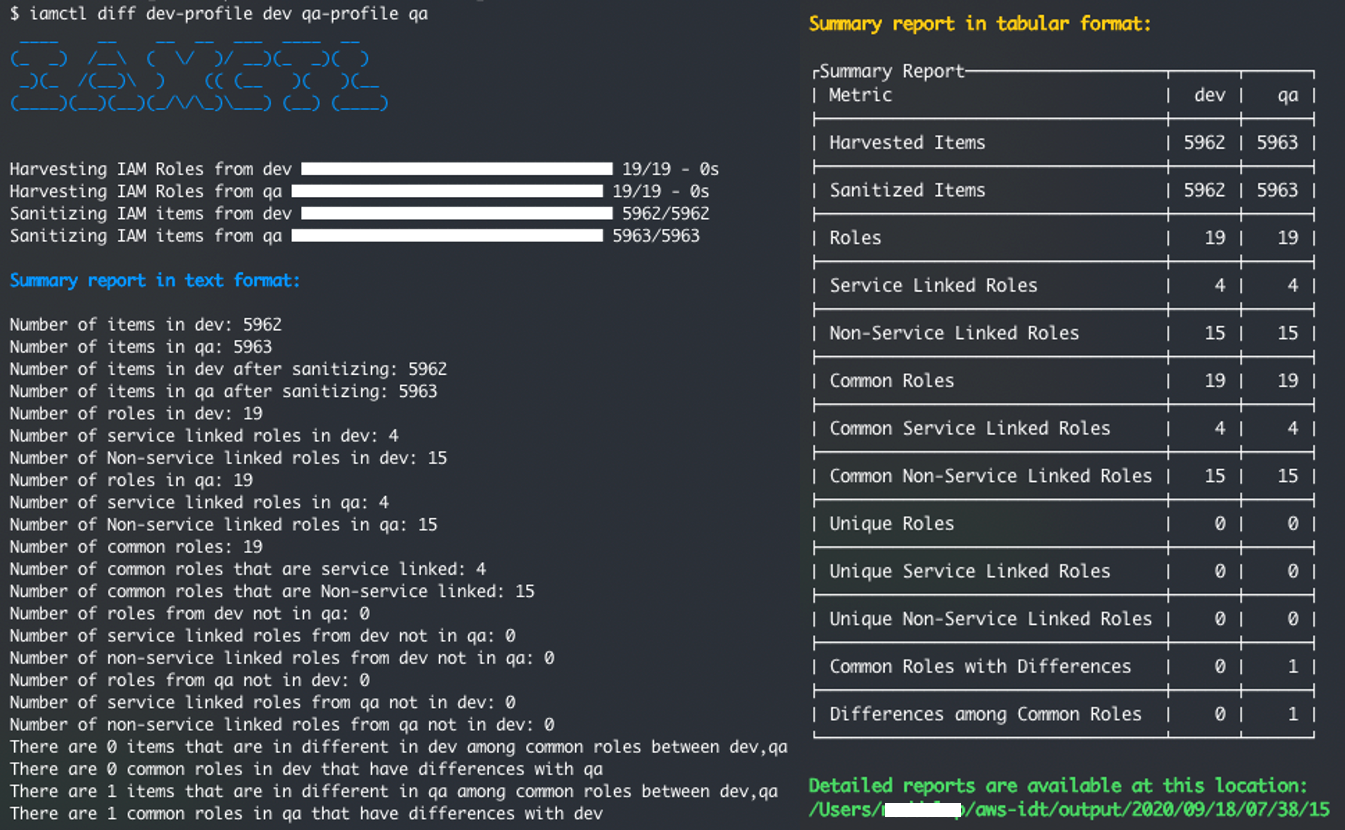

When you use role trust policies to grant account access to entities outside your account, those entities can access and take the allowed actions on your resources. IAM Access Analyzer continuously monitors your account to identify the resources in your account that can be accessed from outside your account and helps you verify whether the access permissions meet your intent. For the example in this post, if you were to add a new trust policy to your ApplicationRole to grant permissions to an external account to access an application in your account, IAM Access Analyzer would let you know that ApplicationRole is accessible by entities from outside your account.
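If you prefer to retrieve these findings programmatically, the following sketch shows one possible approach in Python. It assumes a boto3 `accessanalyzer` client is passed in and calls its `list_findings` operation with a filter on `resourceType` and `status`. The helper name `list_external_role_findings` and the analyzer ARN in the usage comment are placeholders for illustration.

```python
def list_external_role_findings(client, analyzer_arn):
    """Collect active Access Analyzer findings whose resource is an IAM role.

    `client` is expected to behave like boto3.client("accessanalyzer").
    """
    kwargs = {
        "analyzerArn": analyzer_arn,
        "filter": {
            "resourceType": {"eq": ["AWS::IAM::Role"]},
            "status": {"eq": ["ACTIVE"]},
        },
    }
    findings = []
    while True:
        page = client.list_findings(**kwargs)
        findings.extend(page.get("findings", []))
        token = page.get("nextToken")
        if not token:
            return findings
        # Request the next page of results.
        kwargs["nextToken"] = token


# Example usage (requires boto3 and AWS credentials; the ARN is a placeholder):
#
#   import boto3
#   client = boto3.client("accessanalyzer")
#   for finding in list_external_role_findings(client, "<your-analyzer-arn>"):
#       print(finding["id"], finding["resource"])
```

Passing the client in as a parameter keeps the function easy to exercise with a stub, so you can check the filtering and pagination logic without calling AWS.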

Use IAM action last accessed information to identify and remove unused permissions

After you’ve identified the IAM roles that grant access to entities outside your account, review what those roles can do and remove unused permissions. You can use action last accessed to show you the latest timestamp when your IAM role used an action, analyze its access permissions, and remove unused permissions.
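The same information is available through the IAM API. The sketch below assumes you have already obtained a response from the IAM `GetServiceLastAccessedDetails` operation (in boto3, `generate_service_last_accessed_details` with `Granularity="ACTION_LEVEL"` followed by `get_service_last_accessed_details`) and shows one way to pick out tracked actions that have no recorded access. The sample response is hypothetical and trimmed to the fields the helper reads. The helper `unused_actions` is illustrative, and it assumes `ActionName` holds the action name without the service prefix.

```python
from datetime import datetime, timezone

# Hypothetical, trimmed response used only to demonstrate the parsing logic.
sample_details = {
    "JobStatus": "COMPLETED",
    "ServicesLastAccessed": [
        {
            "ServiceName": "Amazon DynamoDB",
            "ServiceNamespace": "dynamodb",
            "TrackedActionsLastAccessed": [
                {
                    "ActionName": "DescribeTable",
                    "LastAccessedTime": datetime(2024, 1, 15, tzinfo=timezone.utc),
                    "LastAccessedRegion": "us-east-1",
                },
                # No LastAccessedTime: no access recorded in the tracking period.
                {"ActionName": "DeleteTable"},
            ],
        }
    ],
}


def unused_actions(details, namespace):
    """Return tracked actions for `namespace` that have no LastAccessedTime."""
    unused = []
    for service in details.get("ServicesLastAccessed", []):
        if service.get("ServiceNamespace") != namespace:
            continue
        for action in service.get("TrackedActionsLastAccessed", []):
            if "LastAccessedTime" not in action:
                unused.append(f"{namespace}:{action['ActionName']}")
    return sorted(unused)


print(unused_actions(sample_details, "dynamodb"))
```

An action that is absent from the tracked list is not necessarily unused, because not every action is reported by action last accessed. Treat the result as a starting point for review, not as a complete list.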

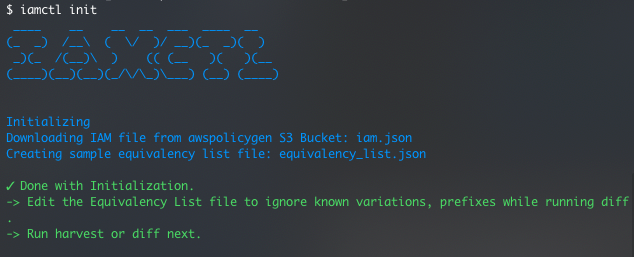

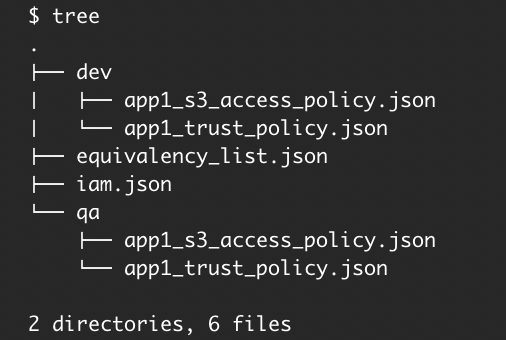

Refine permissions for externally accessible roles by using IAM Access Analyzer cross-account access findings and action last accessed information

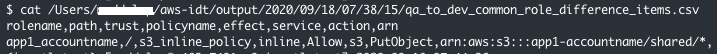

This example demonstrates how you can combine the information from IAM Access Analyzer cross-account access findings and IAM action last accessed information to identify roles that can be assumed from outside your account, review unused and unnecessary actions, and reduce the permissions available to external roles.

To view action last accessed information in the IAM console

1. Open the AWS Management Console and go to the IAM console, and then select Access analyzer in the navigation pane.

2. If you’ve already created an analyzer, go to Step 3. Otherwise, follow Identify Unintended Resource Access with IAM Access Analyzer to create an analyzer.

3. Review your findings on the IAM Access Analyzer tab.

4. Under Active findings, for Filter active findings, enter AWS::IAM::Role. The list of Active findings shows you the roles that can be accessed by entities outside your account.

5. Under the Finding ID column, select a finding for a role (for example, ApplicationRole) that you want to review.

6. A new page for the Finding ID will appear. Choose the resource ARN link in the Resource field under the Details section.

7. A new page for the role will appear. Select the Access Advisor tab to review the last accessed information of your services for this role. This tab displays the AWS services to which the role has permissions. Action last accessed information is reported only for the actions listed in IAM action last accessed information services and actions. The tracking period for services is the last 400 days, or fewer if your AWS Region began tracking within the last 400 days. Learn more about Where AWS tracks last accessed information.

8. In this exercise, we will use DynamoDB as an example. Under Allowed services, for Search, enter Amazon DynamoDB and under the Service column, choose Amazon DynamoDB. This will take you to a new section titled Allowed management actions for Amazon DynamoDB, which displays the action last accessed information of your role for DynamoDB. The Action column displays the action, the Last accessed column displays the timestamp of when access was last attempted, and the Region accessed column displays the Region in which access was last attempted.

9. The resulting Allowed management actions for Amazon DynamoDB section lists the actions to which the role has permissions, when the role last accessed each action, and the Region accessed. You can sort the actions by choosing the arrow next to Last accessed.

10. Because you want to remove unused permissions, filter for all unused actions for the role by selecting Services not accessed from the Last accessed dropdown list. This will show you the actions that haven’t been accessed during the tracking period.

11. To return to the service view, choose Back to Allowed services and then select the Permissions tab. Select the plus sign to the left of DynamoDBAccess to see the JSON of the customer managed policy.

12. Choose Edit, remove dynamodb:*, and replace it with just the actions that have been used recently, such as DescribeTable and DescribeKinesisStreamingDestination. Not all actions are reported by action last accessed. Review the list of actions that action last accessed information reports and when action last accessed started tracking the action for the service in an AWS Region.

13. Choose Next and then Save changes. Return to the Access Advisor tab to confirm that all the retained permissions have been used recently.
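The policy edit in the final steps can also be expressed as a small transformation on the policy document. The following Python sketch, under stated assumptions, replaces a service-wide wildcard such as `dynamodb:*` with an explicit list of recently used actions. The helper `replace_wildcard` and the example policy are hypothetical. The two action names come from the example in the steps above. Review the result before saving it as a new policy version.

```python
import copy

# Hypothetical permissions policy with a service-wide wildcard.
broad_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "dynamodb:*", "Resource": "*"}
    ],
}


def replace_wildcard(policy, service, used_actions):
    """Return a copy of `policy` in which '<service>:*' in Allow statements
    is replaced by the explicitly listed `used_actions`."""
    refined = copy.deepcopy(policy)  # leave the caller's document untouched
    wildcard = f"{service}:*"

    statements = refined.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be a bare object
        statements = [statements]
        refined["Statement"] = statements

    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        if isinstance(actions, str):
            actions = [actions]
        if wildcard in actions:
            kept = {a for a in actions if a != wildcard}
            explicit = {f"{service}:{name}" for name in used_actions}
            statement["Action"] = sorted(kept | explicit)
    return refined


refined_policy = replace_wildcard(
    broad_policy,
    "dynamodb",
    ["DescribeTable", "DescribeKinesisStreamingDestination"],
)
print(refined_policy["Statement"][0]["Action"])
```

The function works on a deep copy so that the original document stays available for comparison or rollback.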

Figure 1: Findings filtered by resource types

Figure 2: Findings page

Figure 3: Last accessed information of allowed services

Figure 4: Action last accessed information for Amazon DynamoDB

Figure 5: Action last accessed information ordered by not accessed

Figure 6: The JSON code of the customer managed policy

Conclusion

In this post, you learned how to use IAM Access Analyzer and action last accessed information to identify and refine permissions for externally accessible roles in your journey toward least privilege. You first used IAM Access Analyzer cross-account access findings to identify IAM roles that can be accessed from outside your account. You then used IAM action last accessed information to review the permissions those roles are using and to remove unused permissions.

For more information about IAM Access Analyzer cross-account findings, see Findings for public and cross-account access. For more information about action last accessed information, see Things to know about last accessed information and the IAM action last accessed information services and actions.

If you have feedback about this post, submit comments in the Comments section below. If you have questions about this post, start a new thread on AWS re:Post or contact AWS Support.