Post Syndicated from Michael Miller original https://aws.amazon.com/blogs/security/build-a-strong-identity-foundation-that-uses-your-existing-on-premises-active-directory/

This blog post outlines how to use your existing Microsoft Active Directory (AD) to reliably authenticate access to your Amazon Web Services (AWS) accounts, infrastructure running on AWS, and third-party applications. The architecture we describe is designed to be highly available and extends access to your existing AD to AWS, enabling your users to use their existing credentials to access authorized AWS resources and applications.

Many customers rely on AD as their single source of truth for IT identity management. HR automation processes are often already in place to automatically add, update, and remove employee access within an organization’s AD as staffing changes occur. Using a single source of truth as the basis for all authentication and authorization, both on-premises and in the cloud, makes it easier to manage access across multiple applications and services, because you are creating, managing, and revoking access from a single location. For example, if someone leaves your organization, you can revoke access for all applications and services (including AWS accounts) from one location. Additionally, this reduces risks associated with stranded or forgotten credentials, or users needing to remember multiple different sets of credentials.

Microsoft Active Directory (AD) is deployed on Microsoft Windows Server servers called domain controllers, which replicate the contents of the directory between the domain controllers that are hosting the AD domain. Multiple domain controllers are deployed within a domain to improve the availability and performance of the directory. The AD infrastructure should be designed to provide sufficiently high levels of availability and performance, because it governs access to your organization’s IT resources. This typically requires the placement of at least one domain controller in every customer hosting location, because the lack of availability of your identity store is likely to cause authentication and authorization failures, which in turn prevent access to resources.

These design principles align with the Security Pillar of the AWS Well-Architected Framework, which is focused on implementing a strong identity foundation. The Security Pillar guidance states that you should centralize identity management and aim to eliminate reliance on long-term static credentials. By using your existing AD, you can benefit from centralized identity management and your existing group-based permissions for access to your AWS accounts. Applications that are running on domain-joined servers can use their AD service account credentials when they access other domain-joined resources, which removes the need for those credentials to be stored in application configuration files. As your AWS usage grows, it is important to give serious consideration to effective identity management, both for access to AWS and AWS resources, and for your instances that are running on AWS.

By extending your existing Active Directory to AWS, you can continue to use your existing Active Directory user credentials and group policies to manage your Microsoft Windows Server servers, whether those servers are running on-premises or on AWS, and extend these capabilities to authenticate and authorize access to the AWS Management Console and third-party applications.

This post covers networking requirements and connectivity setup to enable network connectivity to your on-premises AD; the approach to extending your AD to AWS; integrating AWS Single Sign-On with your AD; and joining Amazon Elastic Compute Cloud (Amazon EC2) instances to AD. As part of the setup, you will add additional domain controllers running on Amazon EC2 instances to your existing AD, for availability and latency reasons. You will also build a resource forest to enable your existing AD identities to access AD-integrated AWS services and resources. This enables you to have a highly available single identity source as the source of truth for your user authentication.

Networking prerequisites to extend your Active Directory to AWS

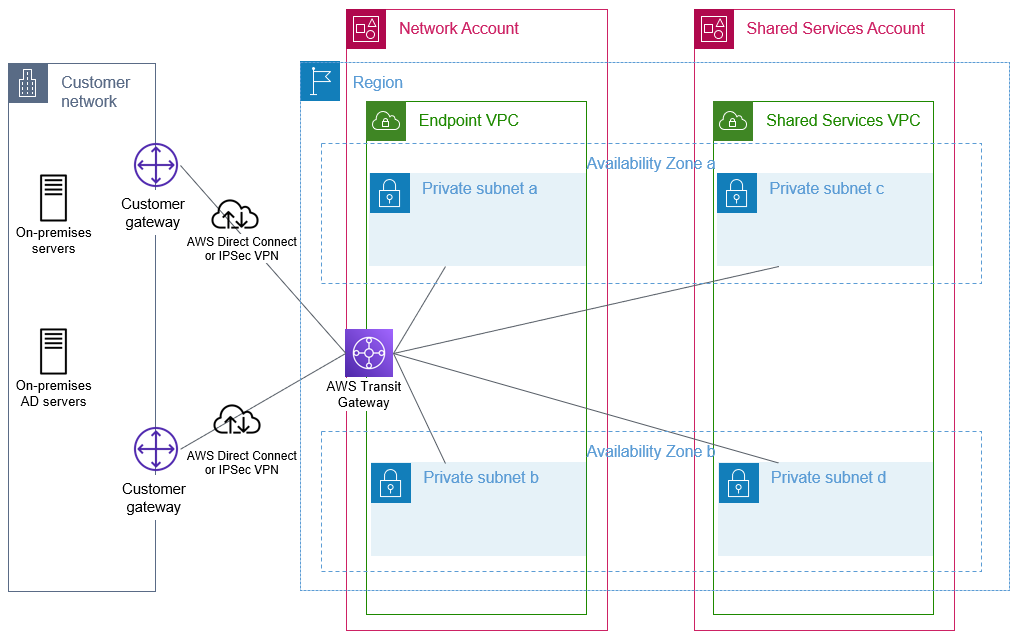

To enable Active Directory–related network communication, you need to establish network connectivity between your on-premises network and your AWS environment. Specifically, there must be connectivity between the on-premises network that hosts your existing domain controllers and the virtual private cloud (VPC), created with Amazon Virtual Private Cloud (Amazon VPC), that will host your AD infrastructure on AWS. Typically, hybrid network connectivity is configured within a network account within your organization, where the multiple AWS accounts within your organization are managed by using AWS Organizations. This network account effectively sits between your on-premises network and the resources, including the AD infrastructure, that are deployed in AWS.

You can provide connectivity between your on-premises network and your network account by using AWS Site-to-Site VPN or AWS Direct Connect connections. For an overview of the options to connect your on-premises network to AWS, refer to Amazon Virtual Private Cloud Connectivity Options. The necessary routing and firewall rules need to be configured to allow connectivity between the subnets that will host your AD infrastructure on AWS and the on-premises network that is hosting your existing domain controllers. AWS recommends that you have highly resilient, fault-tolerant connectivity with dynamic routing between your on-premises network and your AWS network. You can achieve high resiliency through the use of redundant AWS Direct Connect connections, or, for less critical workloads, a VPN connection might offer sufficient resilience.

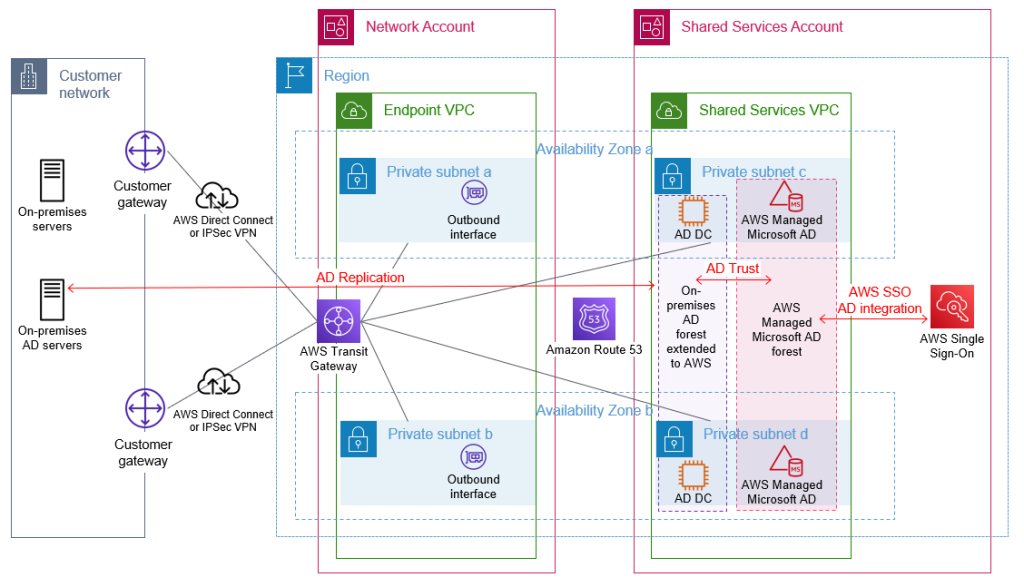

We recommend AWS Transit Gateway to provide connectivity between your AWS accounts. A transit gateway is created in your network account and then shared with your other AWS accounts that have VPCs that require access to on-premises networks or other VPCs. This enables a hub-and-spoke network architecture, which is used to provide connectivity both between your VPCs as needed and between your VPCs and your on-premises network. You will create a VPC within the network account, which we will refer to within this blog as the endpoint VPC, with subnets across two Availability Zones. This endpoint VPC will be used later by Amazon Route 53 outbound endpoints for DNS resolution of AD-hosted DNS zones. Other documentation might refer to this endpoint VPC by alternative names, such as outbound VPC or egress VPC.

Your AD infrastructure that is running on AWS is typically deployed within a shared services account, sometimes referred to as an operations account. Within this shared services account, you will create a shared services VPC with at least two subnets within different Availability Zones to host your domain controller infrastructure on AWS. Your domain controller availability is increased when your architecture is configured to use multiple Availability Zones. You will attach this shared services VPC to the transit gateway that is shared from your network account. This VPC attachment provides connectivity between this VPC and your on-premises network through the transit gateway and network account. You will need to configure the subnet route table(s) and transit gateway route table(s) appropriately to provide IP connectivity between the shared services VPC and your on-premises network.

The sample architecture shown in Figure 1 illustrates the use of a transit gateway with two AWS Direct Connect connections to provide resilient connectivity between an on-premises network, the network account, and a VPC within the shared services account.

Figure 1: Foundational network connectivity between on-premises and AWS VPCs

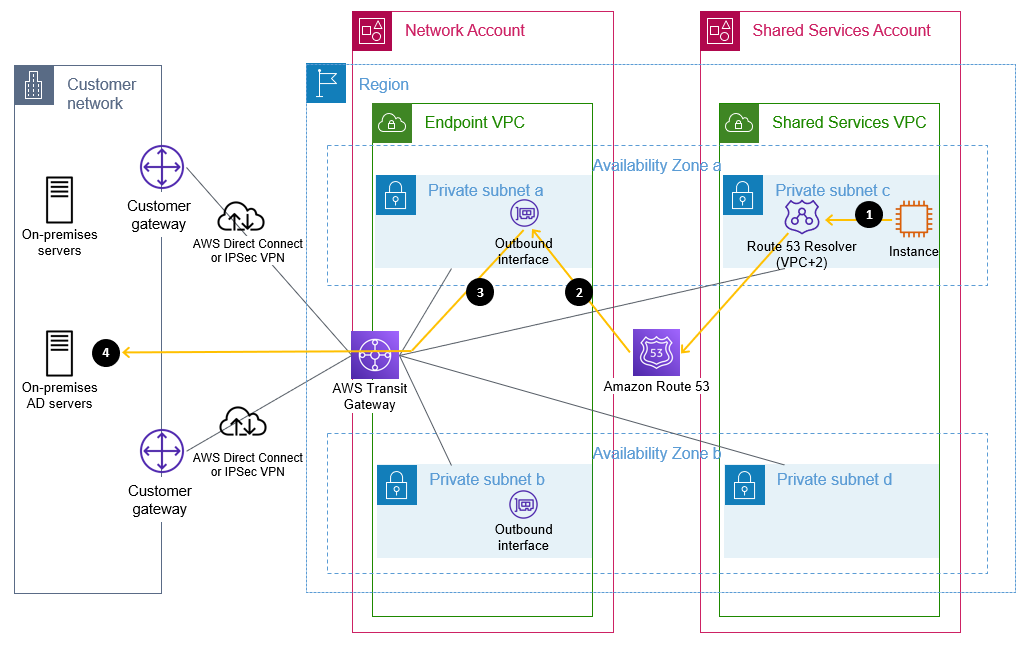

Active Directory relies heavily on Domain Name System (DNS) services and typically hosts its own DNS services on domain controllers. To establish name resolution of your AD-hosted DNS domains from within your VPCs, you should use Route 53 Resolver with outbound resolver endpoints and forwarding rules. Forwarding rules specify the domain name queries to forward from your VPCs to DNS servers that are authoritative for your AD DNS names. The queries will be forwarded through the outbound endpoints. The outbound endpoints will be configured in the network account on the endpoint VPC, and use the previously configured network connectivity to communicate with your existing DNS servers. You will configure your existing DNS servers as targets in the forwarding rules. Configuring Route 53 Resolver with the appropriate forwarding rules will help to enable seamless DNS resolution between your on-premises and AWS hosted resources. You need to share the Route 53 Resolver rules with your organization so that they can be used by your other AWS accounts. These shared rules are then associated with your VPCs, which need to be able to resolve names within AD-hosted DNS domains. Refer to the AWS Hybrid DNS with Active Directory technical guide for detailed step-by-step configuration guidance.
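As a rough illustration of the forwarding rule described above, the following Python sketch builds the parameters for the `create_resolver_rule` operation of the Route 53 Resolver API as exposed by boto3. The domain name, endpoint ID, rule name, and DNS server IP addresses are placeholders chosen for this example, not values from this post, and the actual API call is left as a comment so that the snippet runs without AWS credentials.

```python
import uuid


def build_forward_rule(domain_name, endpoint_id, dns_server_ips, name="ad-forward"):
    """Return keyword arguments for route53resolver.create_resolver_rule."""
    if not dns_server_ips:
        raise ValueError("a forwarding rule needs at least one target DNS server")
    return {
        "CreatorRequestId": str(uuid.uuid4()),  # idempotency token for the request
        "Name": name,
        "RuleType": "FORWARD",
        "DomainName": domain_name,
        # Each target is a DNS server that is authoritative for the AD domain.
        "TargetIps": [{"Ip": ip, "Port": 53} for ip in dns_server_ips],
        "ResolverEndpointId": endpoint_id,
    }


# Hypothetical values for illustration only.
params = build_forward_rule(
    domain_name="ad.example.com",
    endpoint_id="rslvr-out-EXAMPLE",
    dns_server_ips=["10.0.0.10", "10.0.0.11"],
)

# With credentials for the network account, the rule could then be created with:
#   import boto3
#   boto3.client("route53resolver").create_resolver_rule(**params)
```

Sharing the resulting rule with the rest of the organization is a separate step, which is done through AWS Resource Access Manager.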

Figure 2 shows a sample flow of a DNS query from an Amazon Elastic Compute Cloud (Amazon EC2) instance through Route 53 Resolver and an outbound interface when resolving an on-premises domain name that matches a forwarding rule. In this example, the domain controllers are also the DNS servers, but splitting the DNS and AD servers is also fully supported.

Figure 2: Flow of a DNS query matching a forwarding rule through a Route 53 outbound endpoint

The flow is as follows:

- An Amazon EC2 instance sends a DNS request for an internal name, such as ad.example.com, to the Route 53 Resolver address within the VPC.

- Route 53 matches this query against a forwarding rule and directs the query through the configured outbound interface.

- The query is sent from the outbound interface towards the target IP address, configured in the forwarding rule, of a server that is authoritative for the domain name.

- This target DNS server receives the query and responds.
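The matching step in this flow can be sketched in a few lines of Python. When a query matches more than one rule, Route 53 Resolver picks the rule with the most specific domain name. The function below is only a simplified model of that selection, and the rule domains are hypothetical.

```python
def select_rule(query_name, rule_domains):
    """Return the most specific rule domain that matches query_name, or None."""
    labels = query_name.rstrip(".").lower().split(".")
    best = None
    for domain in rule_domains:
        normalized = domain.rstrip(".").lower()
        rule_labels = normalized.split(".")
        # A rule matches when its labels form a suffix of the query's labels.
        if labels[-len(rule_labels):] == rule_labels:
            if best is None or len(rule_labels) > len(best.split(".")):
                best = normalized
    return best


# Hypothetical forwarding rules.
rules = ["example.com", "ad.example.com"]

matched = select_rule("dc1.ad.example.com", rules)
unmatched = select_rule("aws.amazon.com", rules)
```

Here `matched` is `"ad.example.com"`, because that rule is more specific than `example.com`. `unmatched` is `None`, which in this model stands for a query that no forwarding rule covers, so it is not sent through the outbound endpoint.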

Extend your Active Directory to AWS

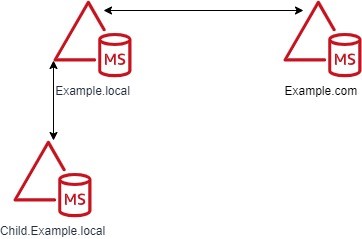

AWS offers multiple options for hosting Active Directory on AWS, which are discussed in detail in the Active Directory Domain Services on AWS Design and Planning Guide. This blog post incorporates both the option of running Active Directory on Amazon EC2 and the AWS Managed Microsoft Active Directory option from that guide. The architecture covered in this post is recommended if:

- You have multiple on-premises Active Directory domains that contain users

- You want a separate resource domain on AWS

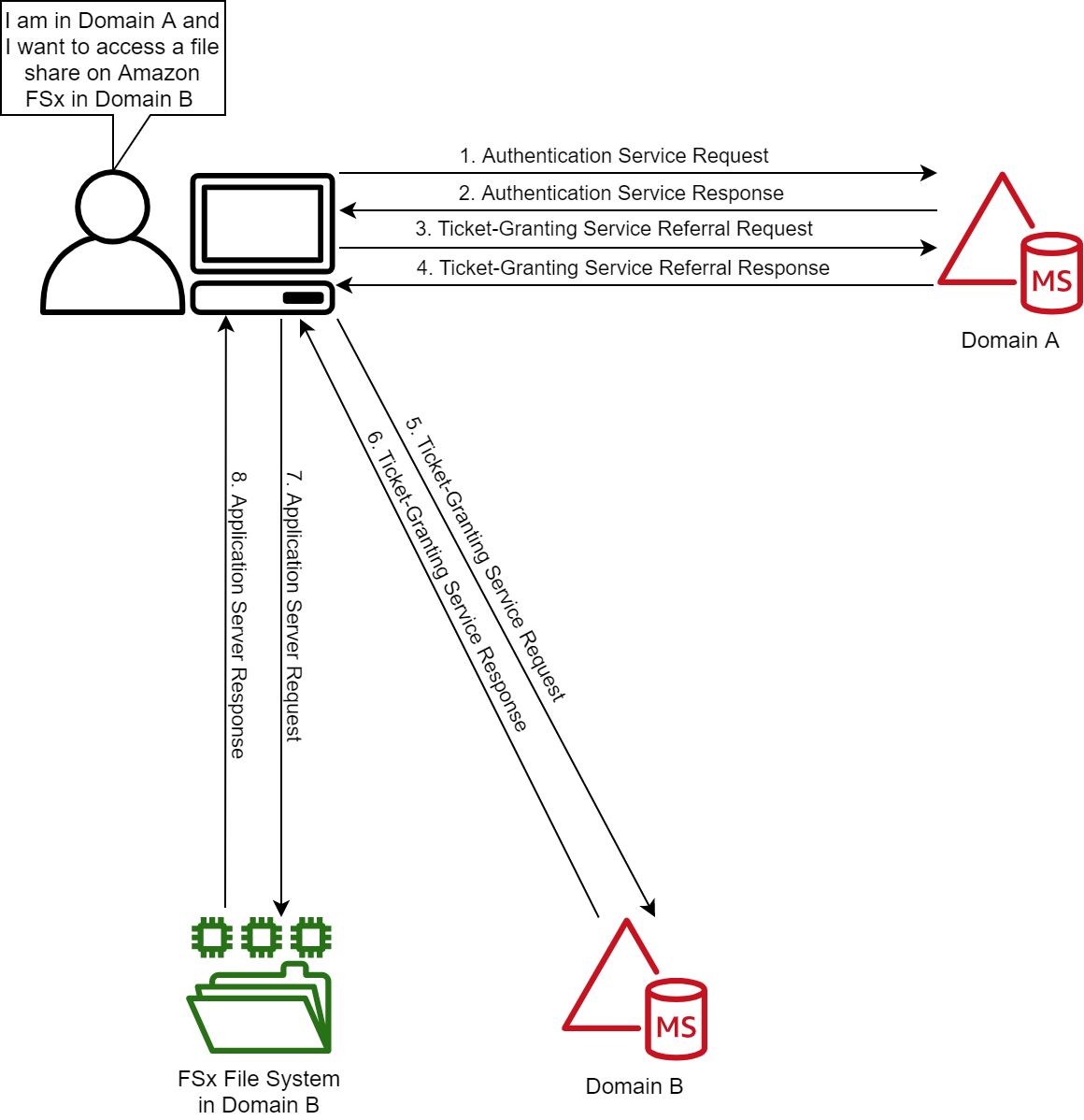

- You want to integrate AD with AWS services such as Amazon Relational Database Service (Amazon RDS) with Active Directory–based authentication, Amazon WorkSpaces, seamless domain joins for Windows and Linux instances, or Amazon FSx for Windows File Server

To extend your existing AD to AWS, domain controllers on Amazon EC2 instances are required, because AWS Managed Microsoft AD does not support being added to an existing forest. An AWS Managed Microsoft AD resource forest is required to enable integration with AWS services that offer AD integration. This is discussed in more detail in the following sections.

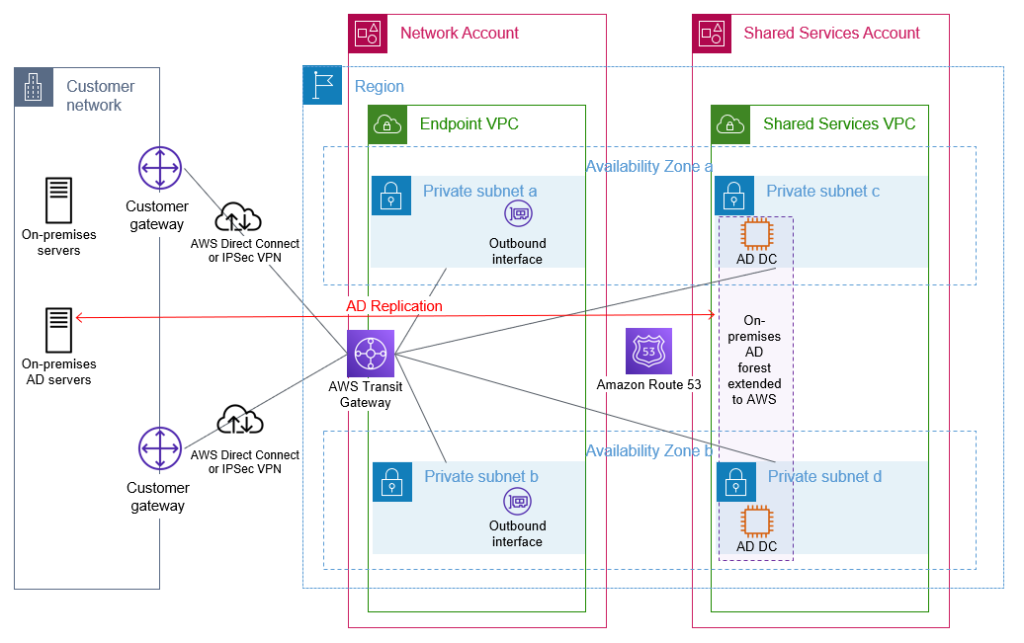

Extend your on-premises AD to AWS

Your first step is to build additional AD domain controllers for your existing AD domain(s) on Amazon EC2 instances that are running Microsoft Windows Server. You would then manage these domain controllers along with your existing domain controllers. By running additional domain controllers within AWS, you remove dependencies on network links and improve reliability and performance of your directory for infrastructure that is running within AWS. Communication between the domain controllers and other domain-joined resources within AWS is designed to remain within the AWS Region. AWS recommends that a minimum of two domain controllers, spread across multiple Availability Zones for resilience, are deployed. You should deploy the domain controllers into the subnets within the shared services VPC.

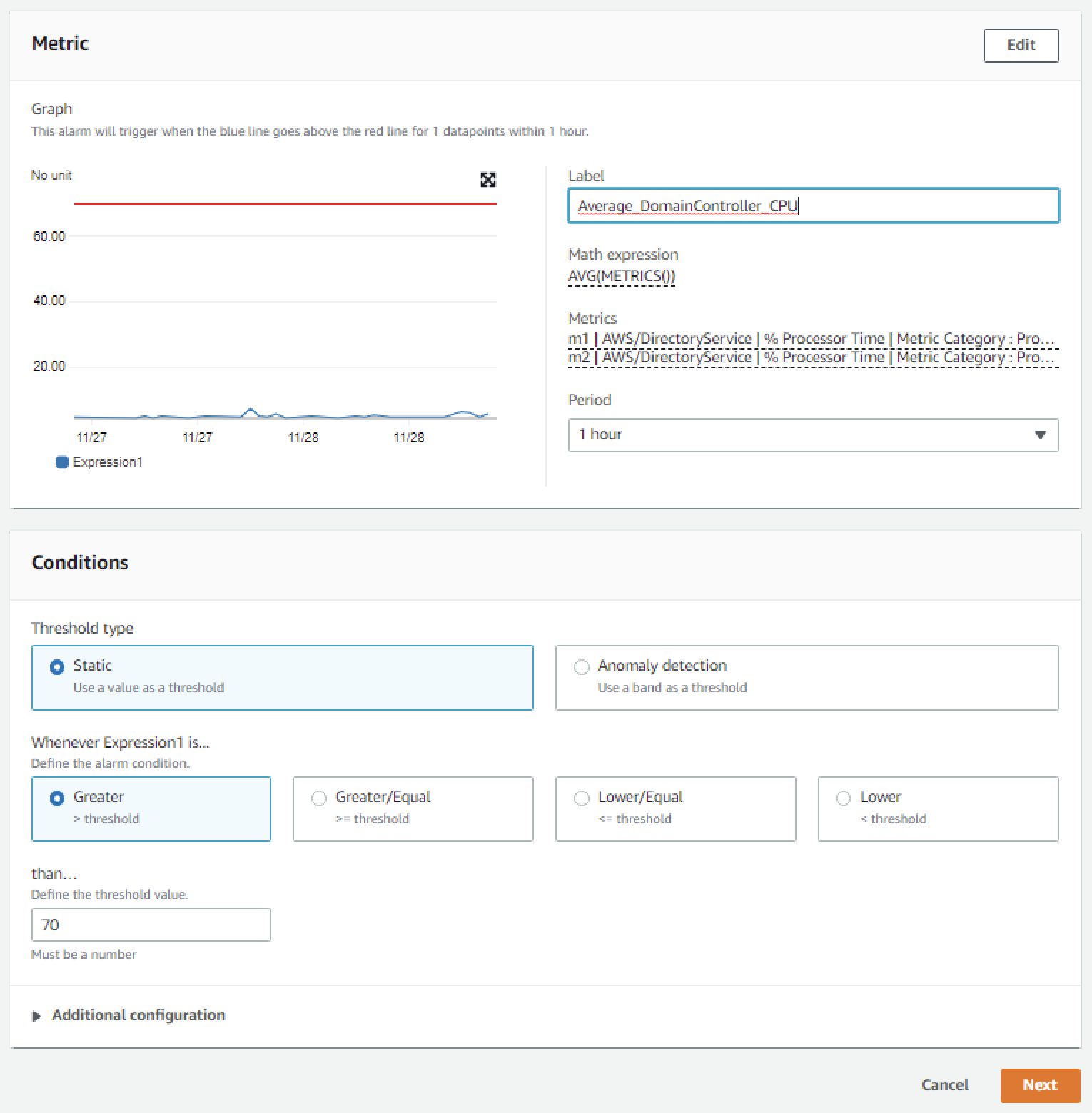

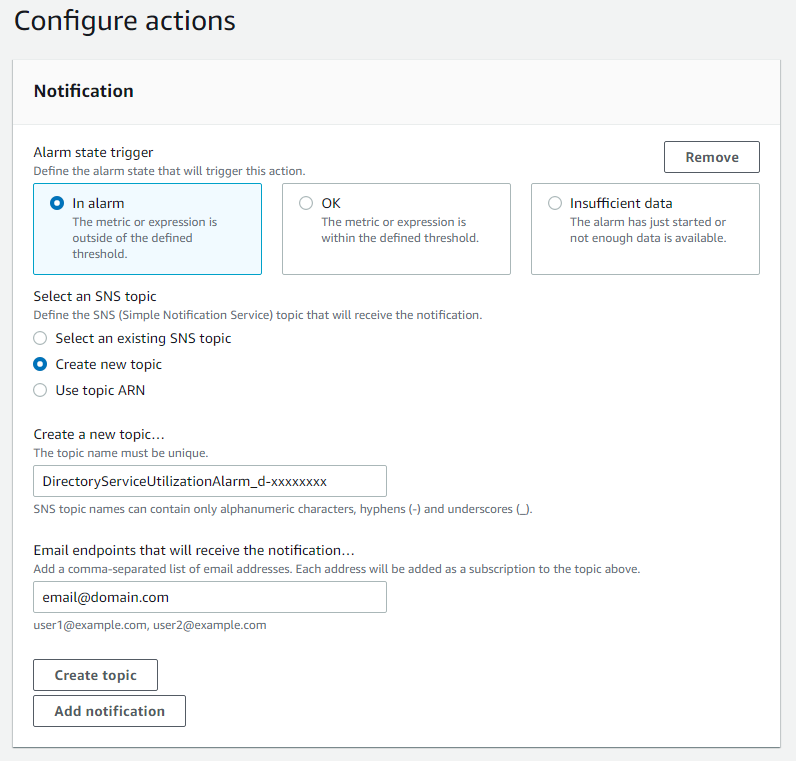

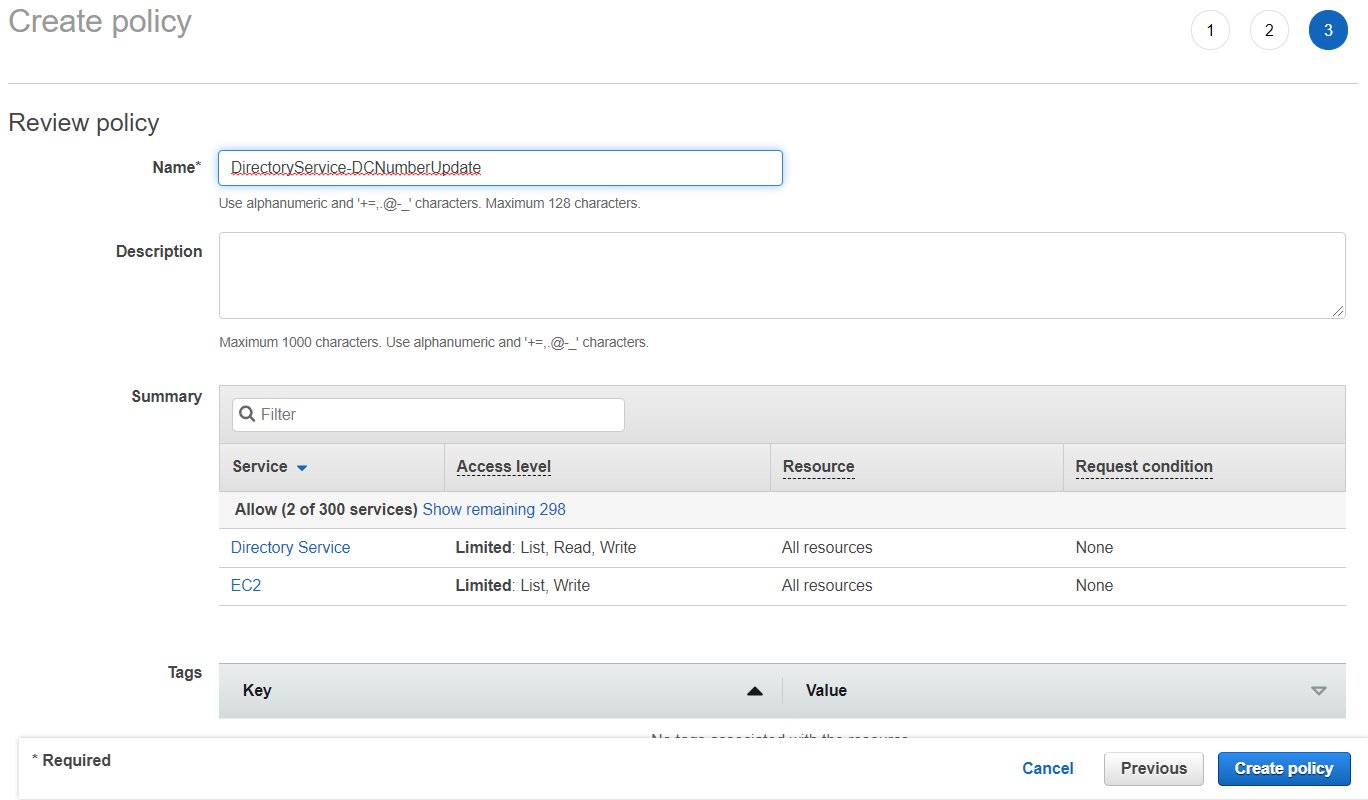

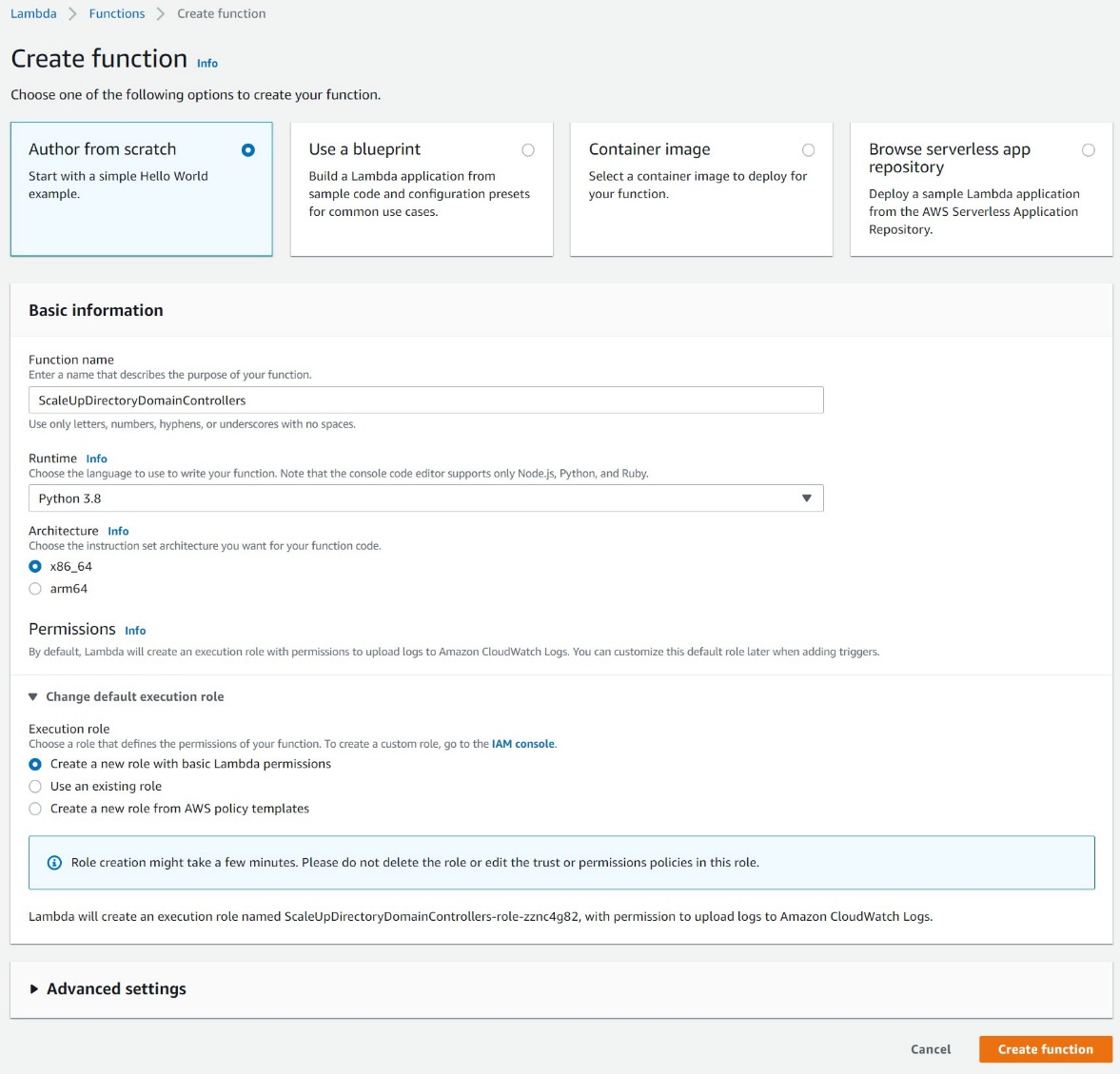

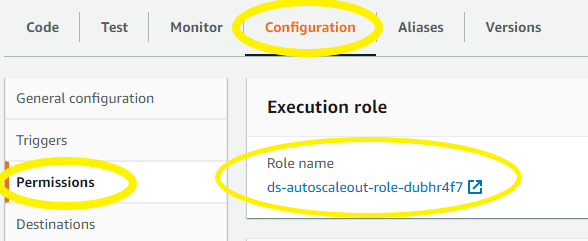

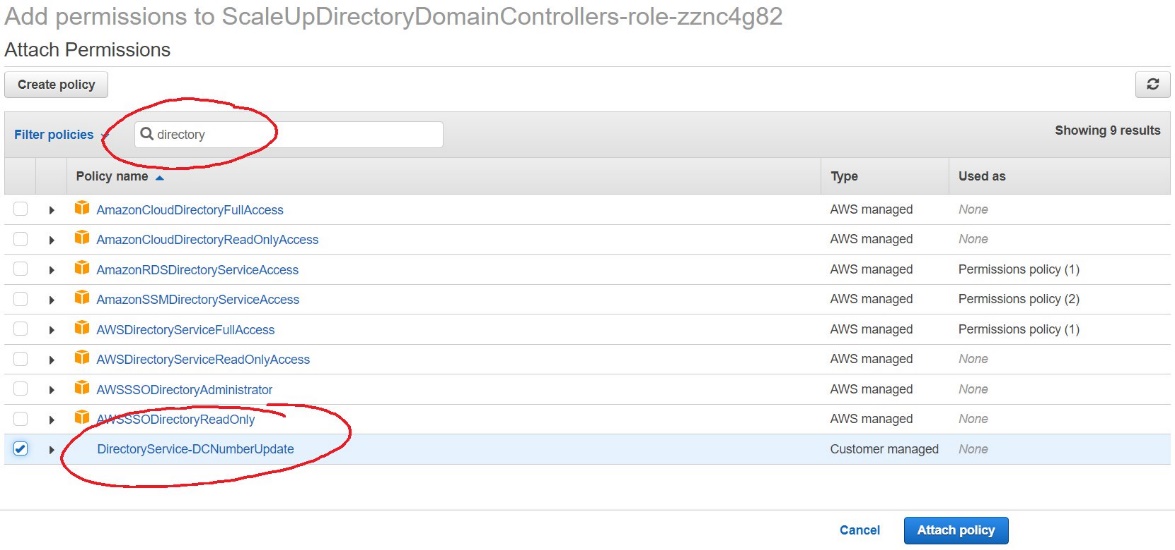

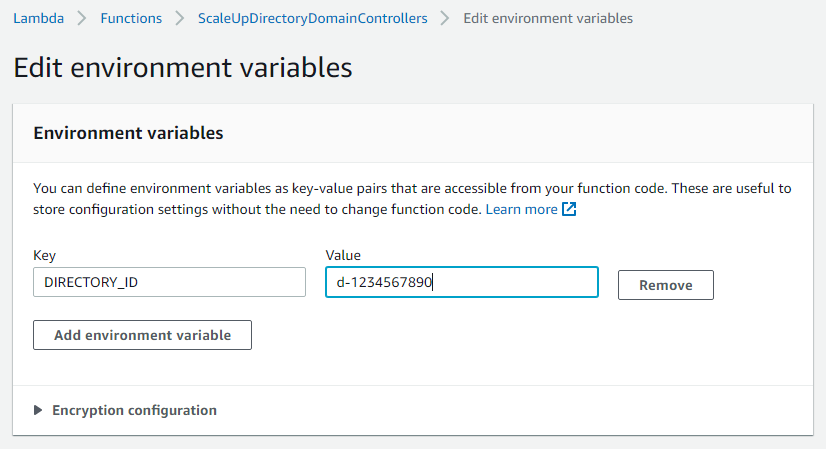

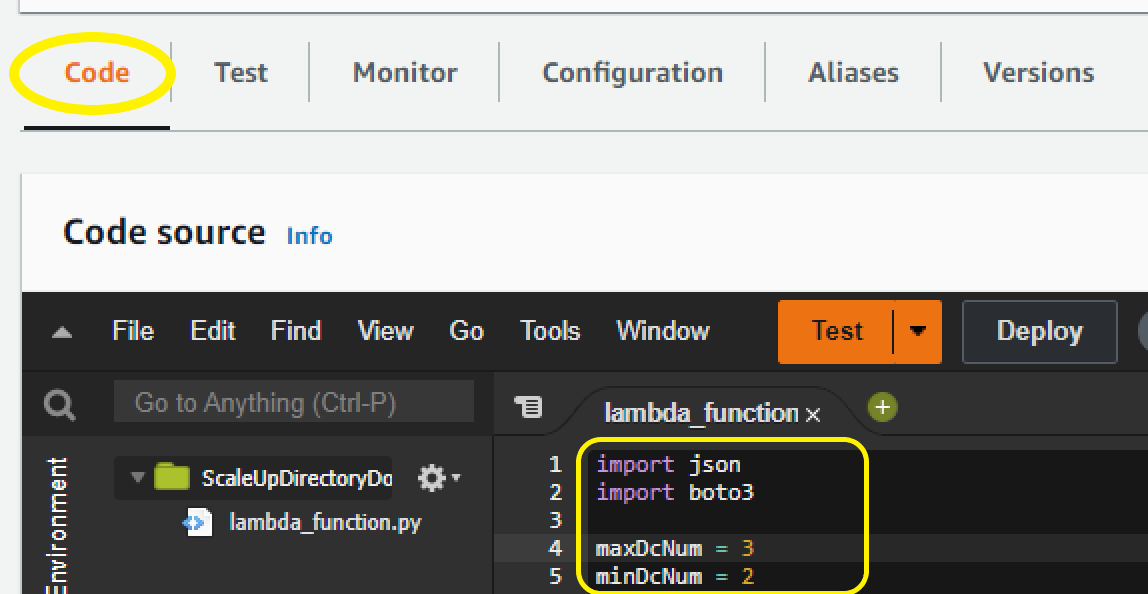

Depending on your capacity planning considerations and availability goals, you may choose to deploy more than two domain controllers. The number of users, servers, and applications that access your directory will influence the required number of domain controllers. Security considerations, including the required TCP/IP ports, and management options are discussed in the blog post Securely extend and access on-premises Active Directory domain controllers in AWS.

These new domain controllers will be in a new AD site, which includes all your VPC CIDR blocks within your chosen AWS Region. In Active Directory, a site represents a group of IP subnets that are connected with fast and highly reliable network connectivity. Site information is used to locate domain controllers closest to the client, to reduce latency and unnecessary network traffic. AWS recommends that your VPCs within an AWS Region belong to the same new Active Directory site, consisting only of your IP ranges within the chosen AWS Region, and that consistent site names are used in all AD forests that are connected by trusts. Further details are available in the section Designing Active Directory sites and services topology in Active Directory Domain Services on AWS and in Designing the Site Topology.
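To make the role of subnet-to-site associations more concrete, here is a minimal Python sketch of how a client IP address maps to an AD site, with the most specific matching subnet taking precedence. The site names and CIDR blocks are invented for this example, and the function is a simplified model rather than the actual domain controller locator process.

```python
import ipaddress


def find_site(client_ip, subnet_to_site):
    """Return the site of the most specific subnet that contains client_ip."""
    ip = ipaddress.ip_address(client_ip)
    matches = []
    for cidr, site in subnet_to_site.items():
        network = ipaddress.ip_network(cidr)
        if ip in network:
            matches.append((network.prefixlen, site))
    if not matches:
        return None  # no subnet association covers this client
    # The longest prefix is the most specific subnet.
    return max(matches)[1]


# Hypothetical subnet associations: one on-premises site, one site per AWS Region.
subnets = {
    "10.0.0.0/8": "OnPrem-HQ",
    "10.64.0.0/16": "AWS-Region-1",
}

aws_client_site = find_site("10.64.3.7", subnets)
onprem_client_site = find_site("10.1.2.3", subnets)
```

A client in a VPC whose CIDR block is associated with the AWS site is directed to the domain controllers in that site, which is why every VPC CIDR block in the Region should be associated with it.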

Update targets in Route 53 Resolver rules

After you have deployed AD-integrated DNS servers to these domain controllers and opened the required TCP/IP ports on the associated security groups, you can update the targets in your Route 53 Resolver forwarding rules to use the IP addresses of these servers. This will improve performance and reliability of DNS resolution, by removing the need for DNS resolution traffic to flow between AWS and on-premises infrastructure.

Figure 3 shows Amazon EC2 instances that are configured as AD domain controllers within a shared services VPC. After they are configured, these domain controllers will replicate with the on-premises domain controllers, using the connectivity that is provided through the transit gateway.

Figure 3: On-premises AD extended to AWS by deploying additional domain controllers

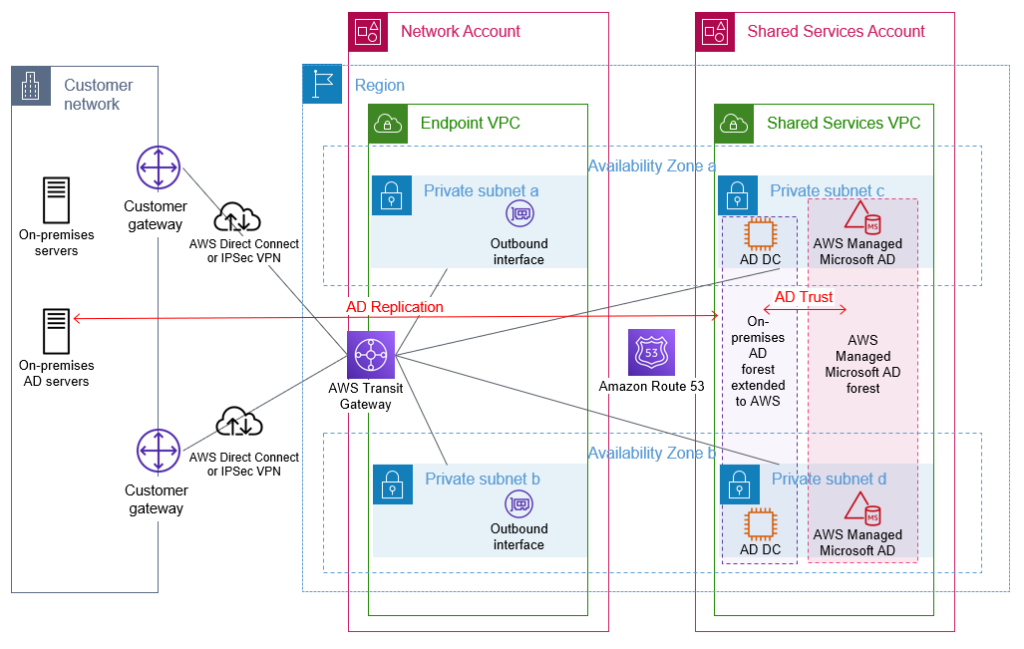

Build a resource forest for AWS hosted infrastructure and applications

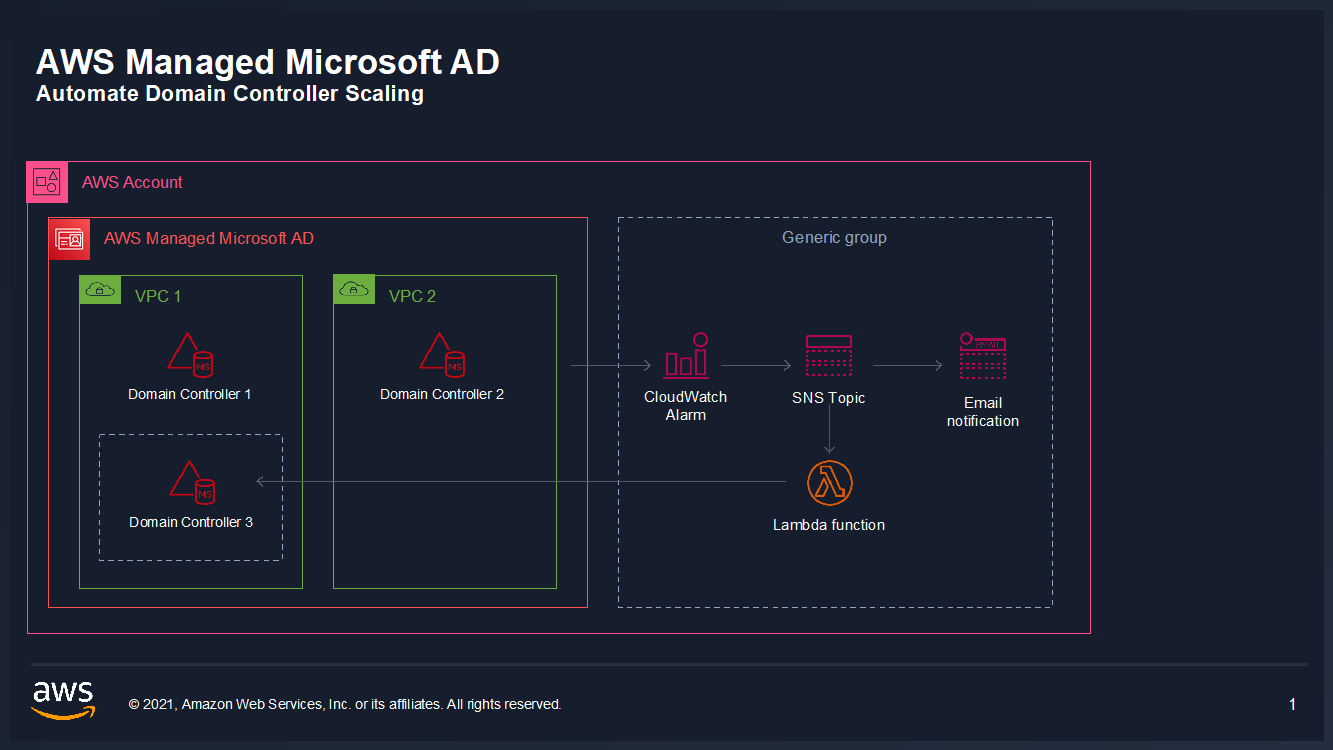

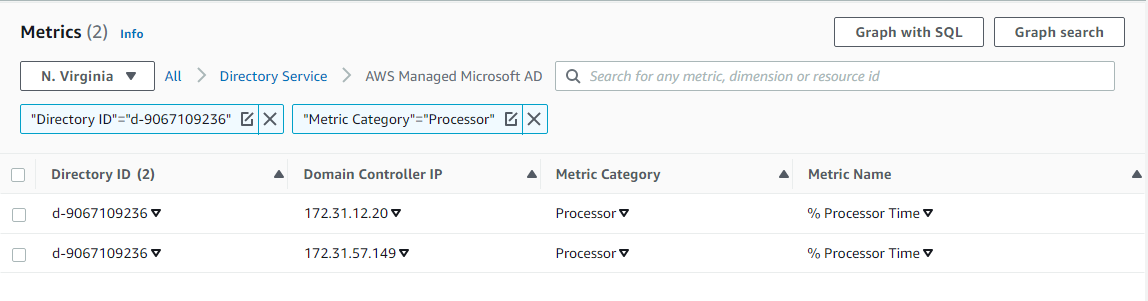

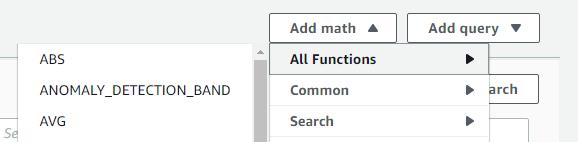

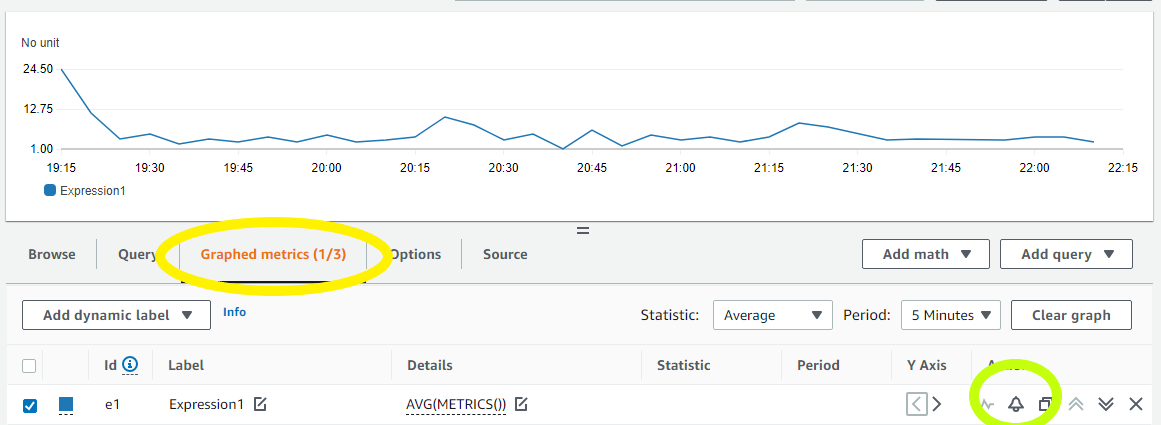

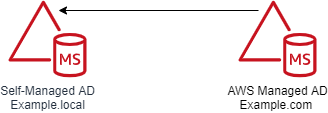

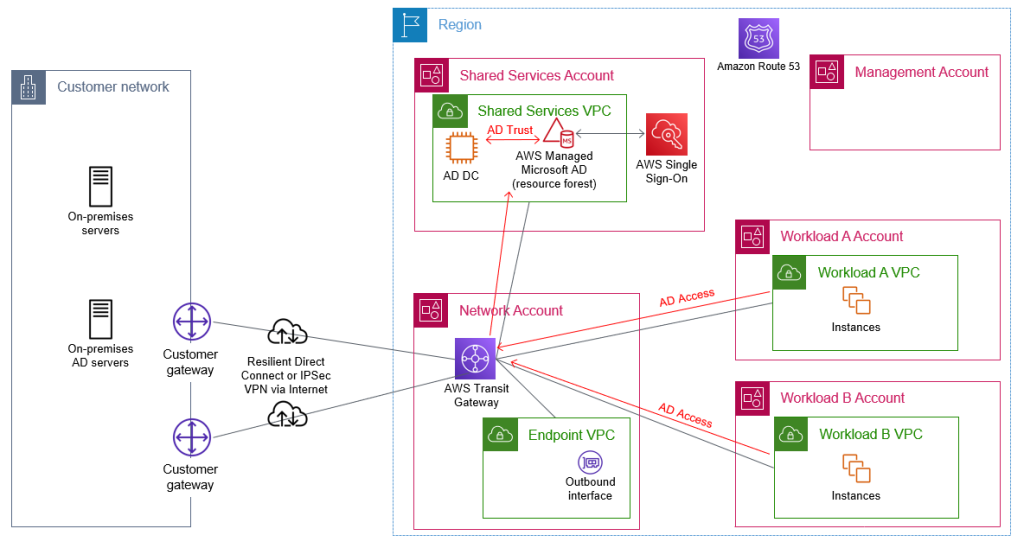

To benefit from seamless domain joins for Windows-based or Linux-based EC2 instances, Amazon RDS Windows-based authentication, and support for AWS services such as Amazon Chime and Amazon WorkSpaces, you must build a resource forest on AWS by using AWS Directory Service for Microsoft Active Directory, also referred to as AWS Managed Microsoft AD. You first set up an AWS Managed Microsoft AD directory as a resource forest, and then configure a trust with your existing on-premises AD forest.

When you select and launch this directory type, it is created as a highly available pair of domain controllers that are connected to your virtual private cloud (VPC). The domain controllers run in different Availability Zones in your choice of AWS Region. Host monitoring and recovery, data replication, snapshots, and software updates are automatically configured and managed for you. AWS Managed Microsoft AD is available in Standard and Enterprise Editions.

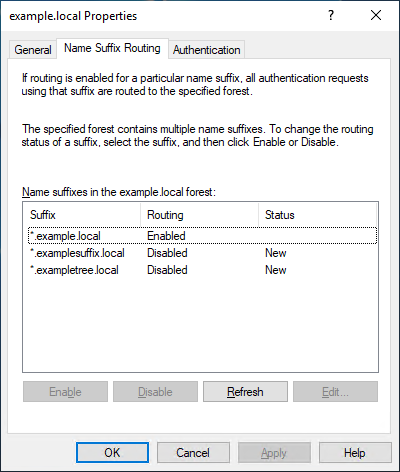

Enterprise Edition is recommended for all but the smallest environments, because the directory can then be shared with a larger number of AWS accounts. Enterprise Edition also allows the AWS Managed Microsoft AD directory to be replicated across multiple AWS Regions if required. This AWS Managed Microsoft AD should be deployed into your shared services account. The domain controllers should be deployed into the subnets within the shared services VPC. After you have deployed your AWS Managed Microsoft AD directory, you create a trust between this new forest and your existing on-premises forest, to enable access by existing AD users to resources within the new directory. Further information about trusts and AWS Managed Microsoft AD is available at Everything you wanted to know about trusts with AWS Managed Microsoft AD, including when to use a one-way or two-way trust. A two-way trust is recommended, because it will allow your AWS accounts to use a wider range of AD-integrated AWS services, such as AWS Single Sign-On, Amazon Chime, Amazon Connect, Amazon QuickSight, Amazon WorkSpaces, and AWS Transfer Family. Ensure that you update the default AD site name to match the name of the site for your AWS Region in your existing forest, and ensure that your sites have the correct site links and subnet associations to enable efficient location of domain controllers.
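The directory and trust setup described above could be scripted along the following lines. This is a sketch that only assembles the parameters for the AWS Directory Service `create_microsoft_ad` and `create_trust` operations as exposed by boto3. All names, IDs, IP addresses, and passwords are placeholders, and the calls themselves are left as comments.

```python
def build_directory_params(domain_name, vpc_id, subnet_ids, admin_password,
                           edition="Enterprise"):
    """Return keyword arguments for ds.create_microsoft_ad."""
    if len(subnet_ids) != 2:
        # The two subnets must also be in different Availability Zones.
        raise ValueError("exactly two subnets are required")
    if edition not in ("Standard", "Enterprise"):
        raise ValueError("edition must be 'Standard' or 'Enterprise'")
    return {
        "Name": domain_name,
        "Password": admin_password,
        "VpcSettings": {"VpcId": vpc_id, "SubnetIds": list(subnet_ids)},
        "Edition": edition,
    }


def build_trust_params(directory_id, remote_domain, trust_password, remote_dns_ips):
    """Return keyword arguments for ds.create_trust (two-way forest trust)."""
    return {
        "DirectoryId": directory_id,
        "RemoteDomainName": remote_domain,
        "TrustPassword": trust_password,
        "TrustDirection": "Two-Way",
        "TrustType": "Forest",
        # DNS servers that can resolve the remote (existing) forest.
        "ConditionalForwarderIpAddrs": list(remote_dns_ips),
    }


# Hypothetical values; in practice, read passwords from a secrets store.
directory = build_directory_params(
    "resource.example.com", "vpc-EXAMPLE", ["subnet-EXAMPLE1", "subnet-EXAMPLE2"],
    admin_password="example-only",
)
trust = build_trust_params(
    "d-EXAMPLE", "corp.example.com", "example-only", ["10.64.0.10", "10.64.1.10"],
)

# In the shared services account:
#   import boto3
#   ds = boto3.client("ds")
#   ds.create_microsoft_ad(**directory)
#   ds.create_trust(**trust)
```

The matching side of the trust still has to be created in your existing forest with the same trust password, and the example does not cover renaming the default AD site.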

The AWS Managed Microsoft AD will be shared with your accounts within your organization to enable your other AWS accounts to access this directory and benefit from the features and services outlined previously.
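Directory sharing is done per target account. The sketch below assembles one parameter set for the AWS Directory Service `share_directory` operation for each workload account. The directory ID and account IDs are placeholders.

```python
def build_share_requests(directory_id, workload_account_ids):
    """Return one ds.share_directory parameter set per workload account."""
    requests = []
    for account_id in workload_account_ids:
        if not (account_id.isdigit() and len(account_id) == 12):
            raise ValueError(f"not a 12-digit AWS account ID: {account_id!r}")
        requests.append({
            "DirectoryId": directory_id,
            "ShareTarget": {"Id": account_id, "Type": "ACCOUNT"},
            # ORGANIZATIONS targets accounts in the same AWS organization;
            # HANDSHAKE is the alternative for accounts outside it.
            "ShareMethod": "ORGANIZATIONS",
        })
    return requests


# Hypothetical directory and workload accounts.
share_requests = build_share_requests("d-EXAMPLE", ["111122223333", "444455556666"])

# In the shared services account:
#   import boto3
#   ds = boto3.client("ds")
#   for request in share_requests:
#       ds.share_directory(**request)
```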

With correct AD site configuration in both forests, communication among the AWS Managed Microsoft AD domain controllers, your existing domain’s domain controllers that run on AWS, and other domain-joined resources within AWS remains within the chosen AWS Region. This is designed to keep your data within AWS in the country of your chosen AWS Region, to help address possible data residency concerns.

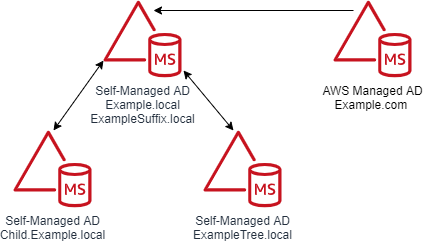

An example of this architecture is depicted in Figure 4.

Figure 4: AWS Managed Microsoft AD resource forest with trust to on-premises AD

Manage access to your AWS accounts

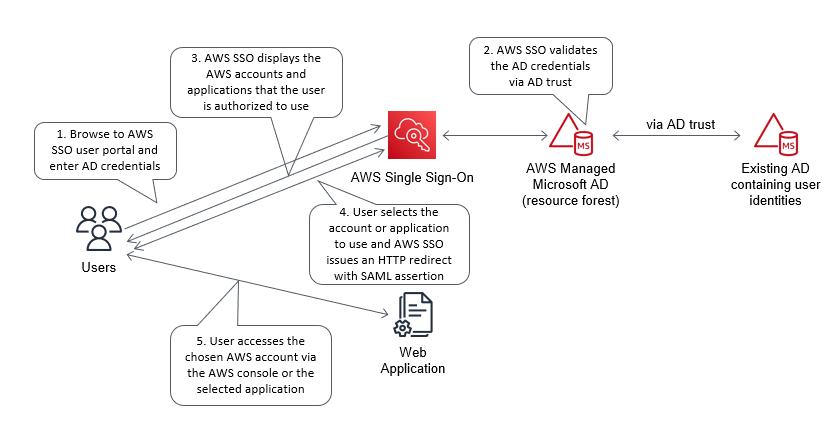

AWS Single Sign-On (AWS SSO) enables you to centrally manage access across your AWS organization. You can choose to manage access just to your AWS accounts, or to your cloud applications as well. You can create user identities directly in AWS SSO, access your existing identities by connecting AWS SSO to your existing Active Directory domain, or federate them from your Active Directory Federation Services (AD FS) or a standards-based identity provider, such as Okta Universal Directory or Azure AD. Your workforce users get a user portal to access all of their assigned AWS accounts or cloud applications. AWS SSO can be flexibly configured to run alongside or replace AWS account access management through AWS Identity and Access Management (IAM).

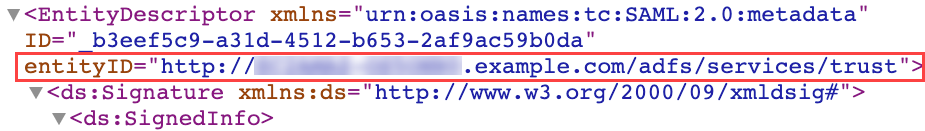

Identity federation is a system of trust between two parties for the purpose of authenticating third parties, such as users, and conveying information that is needed to authorize their access to resources. In this system, an identity provider (IdP) is responsible for user authentication, and a service provider (SP), such as a service or an application, controls access to resources. AWS SSO automates the setup of the identity federation that is used to provide authorized users access to your AWS accounts. When AWS SSO is connected to your AD and used to give access to your AWS accounts, it acts as the IdP.

Although you can create users and groups directly within AWS SSO, a best practice is to use your existing identity single source of truth to simplify user and permission management. Connecting AWS SSO through to your Active Directory, which has been extended to AWS, will allow authentication of users for access to your AWS accounts to take place entirely within the AWS Region. This practice is designed to reduce dependencies on hybrid networking and resources located on-premises or in other hosting locations.

You should enforce secure access to the user portal, AWS SSO integrated apps, and the AWS CLI by enabling multi-factor authentication (MFA). AWS SSO MFA supports various MFA types, including client-side authenticator apps, security keys, and built-in authenticators. Using MFA is recommended as part of configuring strong sign-in mechanisms.

Connect AWS SSO to your Active Directory

You can connect AWS SSO to your Active Directory on AWS by using AD Connector, or through an AWS Managed Microsoft AD. AD Connector is often the first mechanism that customers consider, but it does not support multi-domain environments such as the one used in this post, so we recommend using AWS Managed Microsoft AD.

When you use AWS Managed Microsoft AD with AWS SSO, AWS SSO requires two-way trusts to be in place between this AWS Managed Microsoft AD forest and any other forest that contains the user identities that will authenticate through AWS SSO.

Before AWS SSO supported delegated administration, AWS SSO had to be configured within the management account of your AWS organization, and required the connected AWS Managed Microsoft AD directory to also be within your organization’s management account.

With the announcement of AWS SSO delegated administration support, AWS SSO and the connected AWS Managed Microsoft AD can be configured in an account other than your management account. This post recommends using your shared services account as the AWS SSO delegated administration account. Doing so will enable AWS SSO to use the AWS Managed Microsoft AD that you configured within the shared services account in the preceding Build a resource forest for AWS hosted infrastructure and applications section.

This follows the AWS guidance to avoid deploying workloads to the organization’s management account and to limit access to the management account. Using a delegated administration account for AWS SSO reduces the need for regular access to the management account.

From within your management account, your shared services account needs to be registered as the AWS SSO delegated administration account. You can then configure and manage AWS SSO from within your shared services account. The AWS SSO delegated administration account can manage permissions across your organization, apart from assigning permissions to access the management account. Assignment of permissions to access the management account through AWS SSO needs to be configured from within the management account itself.
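The registration step is a single call to the AWS Organizations `register_delegated_administrator` operation, made with management account credentials. The sketch below wraps that call in a function that takes the client as a parameter, so that it can be exercised here with a stand-in object. The account ID is a placeholder, and `RecordingClient` is a helper invented for this example, not part of boto3.

```python
SSO_SERVICE_PRINCIPAL = "sso.amazonaws.com"


def register_sso_delegated_admin(org_client, account_id):
    """Register account_id as the AWS SSO delegated administration account.

    org_client is expected to behave like boto3.client("organizations")
    created with management account credentials.
    """
    if not (account_id.isdigit() and len(account_id) == 12):
        raise ValueError("AWS account IDs are 12-digit strings")
    org_client.register_delegated_administrator(
        AccountId=account_id,
        ServicePrincipal=SSO_SERVICE_PRINCIPAL,
    )


class RecordingClient:
    """Stand-in client that records calls, so the sketch runs without AWS access."""

    def __init__(self):
        self.calls = []

    def register_delegated_administrator(self, **kwargs):
        self.calls.append(kwargs)


dry_run = RecordingClient()
register_sso_delegated_admin(dry_run, "111122223333")  # hypothetical account ID
```

Against a real organization, you would pass `boto3.client("organizations")` instead of the recording client.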

You should configure AWS SSO to use the AWS Managed Microsoft AD directory that is deployed in the shared services account. If you are using AWS Control Tower, or have previously configured AWS SSO, see Considerations for changing your identity source before you change the default identity source from AWS SSO to Active Directory. After this is complete, you can set up SSO access to your AWS accounts within your organization from the AWS SSO console.

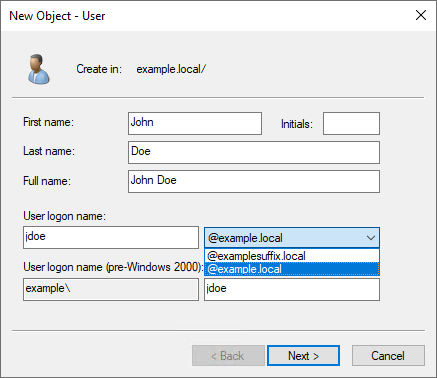

Assign permission sets to Active Directory groups

Permission sets are a way to define permissions centrally in AWS SSO so that they can be applied to all your AWS accounts. After you have created your permission sets, you will assign them to your Active Directory groups to grant access to the respective AWS accounts, using the defined permission set persona. Your users will then use the AWS SSO user portal to authenticate with their AD credentials and can choose which of the assigned AWS accounts and personas they wish to access. Users can configure AWS CLI to use AWS SSO to access the roles they have been assigned.
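Assigning one permission set to several groups across several accounts amounts to one `create_account_assignment` request (AWS SSO admin API) per group and account pair. The following sketch only builds those requests. The ARNs, group IDs, and account IDs are placeholders. Note that `PrincipalId` is the identifier of the group in the AWS SSO identity store, not the AD group name.

```python
from itertools import product


def build_assignments(instance_arn, permission_set_arn, group_ids, account_ids):
    """Return one sso-admin create_account_assignment request per group and account."""
    return [
        {
            "InstanceArn": instance_arn,
            "TargetId": account_id,
            "TargetType": "AWS_ACCOUNT",
            "PermissionSetArn": permission_set_arn,
            "PrincipalType": "GROUP",
            "PrincipalId": group_id,
        }
        for group_id, account_id in product(group_ids, account_ids)
    ]


# Hypothetical identifiers for illustration only.
assignments = build_assignments(
    instance_arn="arn:aws:sso:::instance/ssoins-EXAMPLE",
    permission_set_arn="arn:aws:sso:::permissionSet/ssoins-EXAMPLE/ps-EXAMPLE",
    group_ids=["group-id-admins", "group-id-developers"],
    account_ids=["111122223333", "444455556666"],
)

# In the AWS SSO delegated administration account:
#   import boto3
#   sso_admin = boto3.client("sso-admin")
#   for assignment in assignments:
#       sso_admin.create_account_assignment(**assignment)
```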

Figure 5 shows the complete architecture covered in this blog post. The diagram includes AWS SSO within the shared services account connected to the AWS Managed Microsoft AD that is used to provide access to the forests that contain your user identities.

Figure 5: Complete AD architecture with trusts and AWS SSO using AD as the identity source

Access domain-joined infrastructure resources

By joining your Windows Server servers to your Active Directory resource domain, you can centralize the management of your servers by using native Microsoft tooling. Joining your Amazon EC2 Windows instances to your domain enables you to continue using existing tools, such as group policies, to manage your server estate both on-premises and in AWS.

VPCs with workloads that need to be domain joined, to access on-premises networks, or to access other VPCs will need appropriate network connectivity and DNS configuration in place. You can enable network connectivity between workload VPCs and the shared services VPC and other on-premises networks by attaching your VPCs to the transit gateway shared from the network account. You can enable DNS resolution of your AD domains by associating the Route 53 Resolver rules, shared from the network account, with your workload VPCs.

Join instances to your AD domain

Amazon EC2 Windows instances can be manually or seamlessly joined to your resource domain. Manually joining an instance involves the same steps that you would follow on-premises. Seamlessly joining an instance requires two things on the Amazon EC2 instance: the AWS Systems Manager agent, which is installed by default in AWS-provided Windows AMIs, and an attached instance profile with sufficient permissions. This instance profile should include the AmazonSSMManagedInstanceCore and AmazonSSMDirectoryServiceAccess policies.
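As an illustration of the instance profile requirement, the sketch below assembles the IAM requests for a role that Amazon EC2 can assume and that carries the two AWS managed policies named above. The role name is a placeholder, and the IAM calls (`create_role`, `attach_role_policy`) are shown only as comments.

```python
import json

# Trust policy that lets the EC2 service assume the role.
EC2_TRUST_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"Service": "ec2.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }
    ],
}

DOMAIN_JOIN_POLICY_ARNS = [
    "arn:aws:iam::aws:policy/AmazonSSMManagedInstanceCore",
    "arn:aws:iam::aws:policy/AmazonSSMDirectoryServiceAccess",
]


def build_role_requests(role_name):
    """Return (create_role kwargs, list of attach_role_policy kwargs)."""
    create_role = {
        "RoleName": role_name,
        "AssumeRolePolicyDocument": json.dumps(EC2_TRUST_POLICY),
    }
    attachments = [
        {"RoleName": role_name, "PolicyArn": arn} for arn in DOMAIN_JOIN_POLICY_ARNS
    ]
    return create_role, attachments


create_role, attachments = build_role_requests("ExampleDomainJoinRole")  # hypothetical name

# In the workload account:
#   import boto3
#   iam = boto3.client("iam")
#   iam.create_role(**create_role)
#   for attachment in attachments:
#       iam.attach_role_policy(**attachment)
```

The role still needs to be added to an instance profile, and the profile attached to the instance, before a seamless domain join can use it.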

In order to join the domain, either manually or seamlessly, the Amazon EC2 instance must be able to resolve the DNS name for your AD domain. This DNS resolution was enabled by the attachment of the correctly configured shared Route 53 Resolver rules to the workload VPCs. Seamlessly joining instances to the domain also requires that your shared services account AWS Managed Microsoft AD directory be shared with the workload account that contains the Amazon EC2 instances.

After your instances are joined to the domain, applications running on the servers will be able to access other domain-joined resources, if authorized by AD, through the connectivity that is provided by the transit gateway attachment on the workload VPC.

Applications that need to access AWS resources that are not domain joined, such as objects in Amazon Simple Storage Service (Amazon S3), should make use of temporary credentials associated with the attached instance profile to access AWS resources. By using these IAM temporary credentials, you can avoid using static long-term credentials. When an application requires access to credentials or other secrets, and cannot use AD or IAM temporary credentials, such as for database logins or for third-party API tokens, use a service designed to handle management of secrets, such as AWS Secrets Manager. See the AWS Well-Architected Security Pillar Identity Management documentation for further guidance.
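For the Secrets Manager case, an application might fetch a database login as sketched below. The function takes the client as a parameter. On an EC2 instance, `boto3.client("secretsmanager")` would pick up the temporary credentials of the instance profile automatically, so no static keys appear in the code. The secret name, its JSON layout, and the `FakeSecretsClient` stand-in are assumptions made for this example.

```python
import json


def get_database_login(secrets_client, secret_id):
    """Fetch a secret stored as a JSON string and return it as a dict."""
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


class FakeSecretsClient:
    """Stand-in for boto3.client("secretsmanager") so the sketch runs offline."""

    def get_secret_value(self, SecretId):
        payload = {"username": "app_user", "password": "example-only"}
        return {"Name": SecretId, "SecretString": json.dumps(payload)}


# Hypothetical secret name; a real application would pass a boto3 client instead.
login = get_database_login(FakeSecretsClient(), "prod/app/db")
```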

Figure 6 shows Active Directory access through the transit gateway. The Route 53 forwarding rules, which are shared from the network account, are associated with the workload VPCs to enable DNS resolution of Active Directory–integrated DNS domains. Not shown in the diagram is the sharing of the AWS Managed Microsoft AD for the resource forest with the workload accounts.

Figure 6: Flow of AD network traffic through the transit gateway within the network account

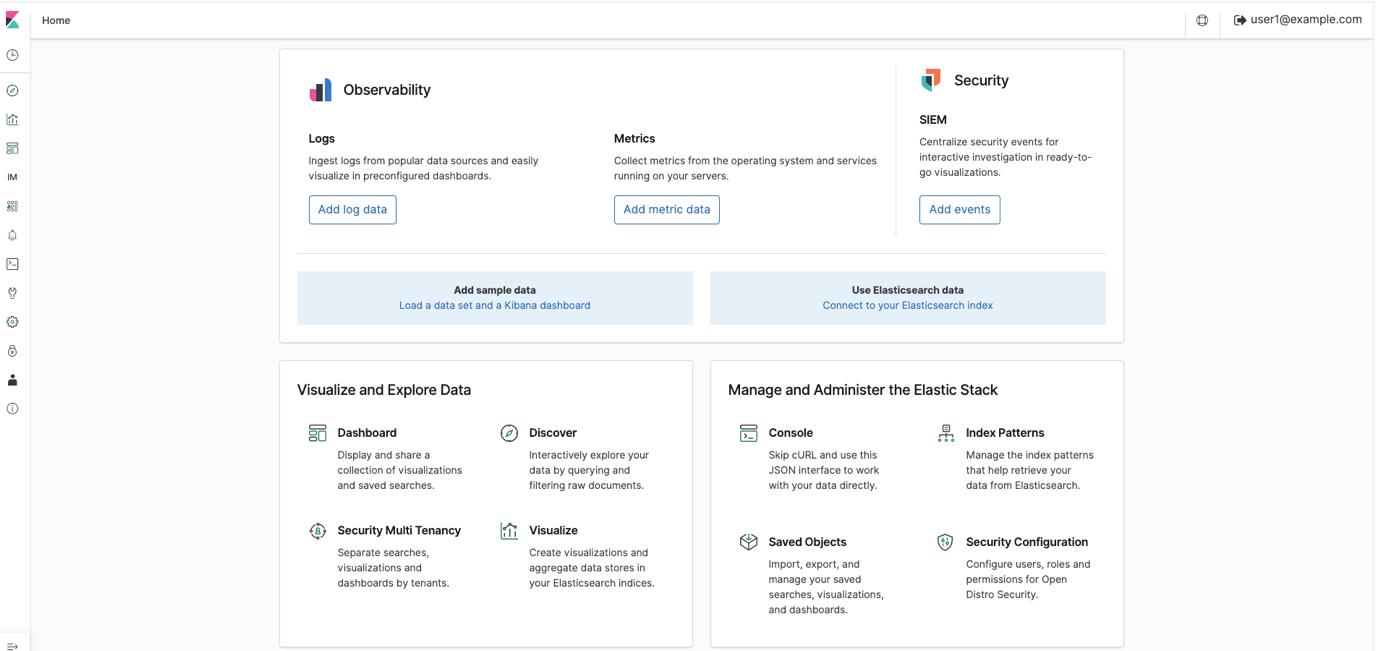

Access applications and third-party services

You might have existing applications that rely on Active Directory or LDAP for user authentication. When you extend your Active Directory environment to AWS, these existing applications can be deployed to your AWS environment, and they will be able to authenticate the users of the application against your AD.

A modern approach for web-based applications is to use identity federation for user authentication. AWS SSO can serve as an identity provider to authenticate users to your AWS SSO-integrated or SAML 2.0 applications. An example of an AWS SSO SAML 2.0 integration is to use AWS SSO to authenticate your VPN users to AWS Client VPN.

You might already be using a third-party identity provider, such as Azure AD or Okta, to provide your users with access to AWS services such as AWS Client VPN or to third-party business applications such as those on the AWS SSO Cloud applications page. These third-party identity providers will typically offer an agent to replicate or synchronize necessary user information from your Active Directory to their service, in order to offer federated authentication for your users. Using these agents to replicate from your existing Active Directory means that you are still using your Active Directory as the single source of truth. To ensure reliable authentication, you should follow the vendor’s recommendations for the high-availability setup of their agent.

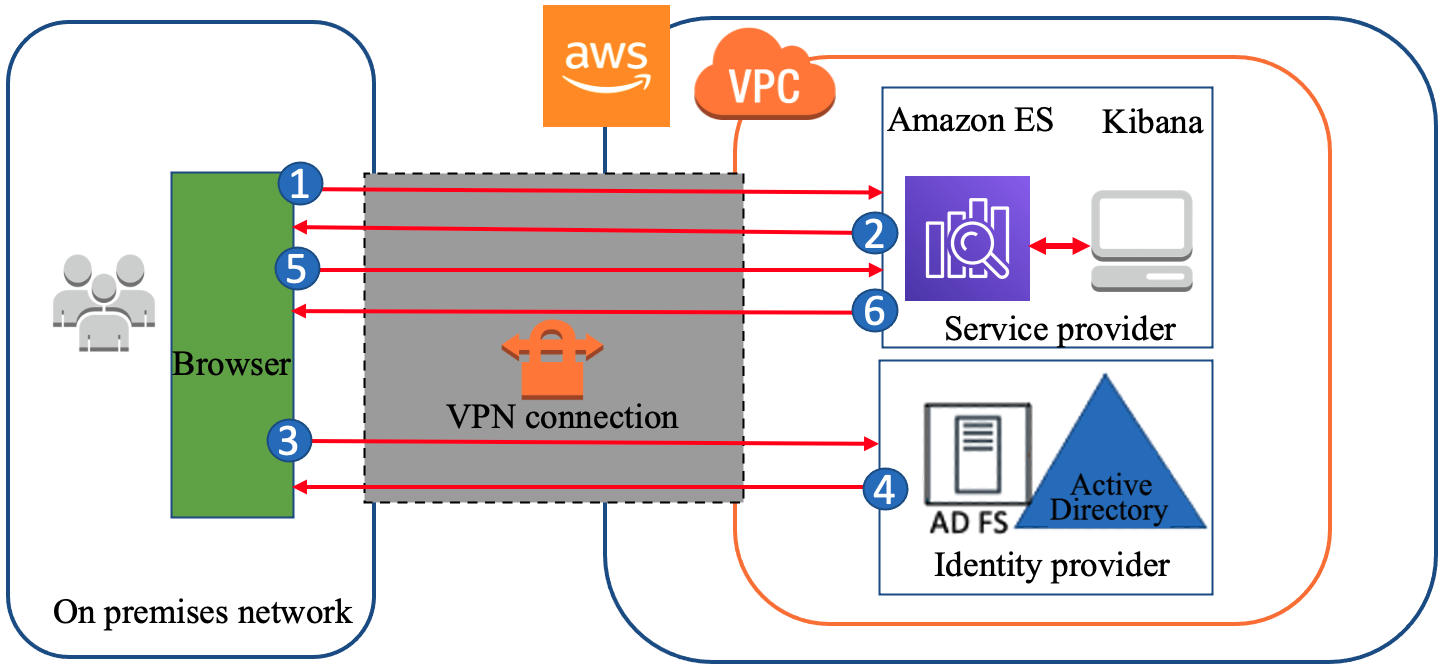

Figure 7 shows the steps that occur when you use AWS SSO to provide identity federation to a web application.

Figure 7: Example flow for identity federation that uses AWS SSO

Conclusion

This post highlights the importance of implementing a cloud authentication and authorization architecture that addresses the variety of requirements for an organization’s AWS Cloud environment. In addition to console access, this post highlights the importance of considering how you will:

- Perform authentication to AWS-based Windows and Linux instances

- Integrate AWS services that need Windows-based authentication capabilities

- Integrate authentication for internal user applications

- Provide a single identity source as the source of truth for all AWS user authentication

- Enable MFA for user authentication

The proposed approach provides a highly available Active Directory (AD) infrastructure, running on AWS and integrated with your existing AD, which addresses these considerations. The approach helps you to attain reduced latencies and higher levels of availability by removing dependencies on on-premises resources, other hosting locations, and external network links. This design stores the identity information that is contained within your existing AD in your chosen AWS Region and country, across multiple Availability Zones, which can also help you meet your data residency requirements.
