Post Syndicated from Dr. Rudolf Potucek original https://aws.amazon.com/blogs/devops/securely-validate-business-application-resilience-with-aws-fis-and-iam/

To avoid high costs of downtime, mission critical applications in the cloud need to achieve resilience against degradation of cloud provider APIs and services.

In 2021, AWS launched AWS Fault Injection Simulator (FIS), a fully managed service for running fault injection experiments on workloads in AWS to improve their reliability and resilience. At the time of writing, FIS lets you simulate degradation of Amazon Elastic Compute Cloud (EC2) APIs using API fault injection actions and thus explore the resilience of workflows where EC2 APIs act as a fault boundary.

In this post we show you how to explore additional fault boundaries in your applications by selectively denying access to any AWS API. This technique is particularly useful for fully managed, “black box” services like Amazon Simple Storage Service (S3) or Amazon Simple Queue Service (SQS) where a failure of read or write operations is sufficient to simulate problems in the service. This technique is also useful for injecting failures in serverless applications without needing to modify code. While similar results could be achieved with network disruption or by modifying code with feature flags, this approach provides fine-grained degradation of an AWS API without the need to redeploy and revalidate code.

Overview

We will explore a common application pattern: user uploads a file, S3 triggers an AWS Lambda function, Lambda transforms the file to a new location and deletes the original:

Figure 1. S3 upload and transform logical workflow: User uploads file to S3, upload triggers AWS Lambda execution, Lambda writes transformed file to a new bucket and deletes original. Workflow can be disrupted at file deletion.

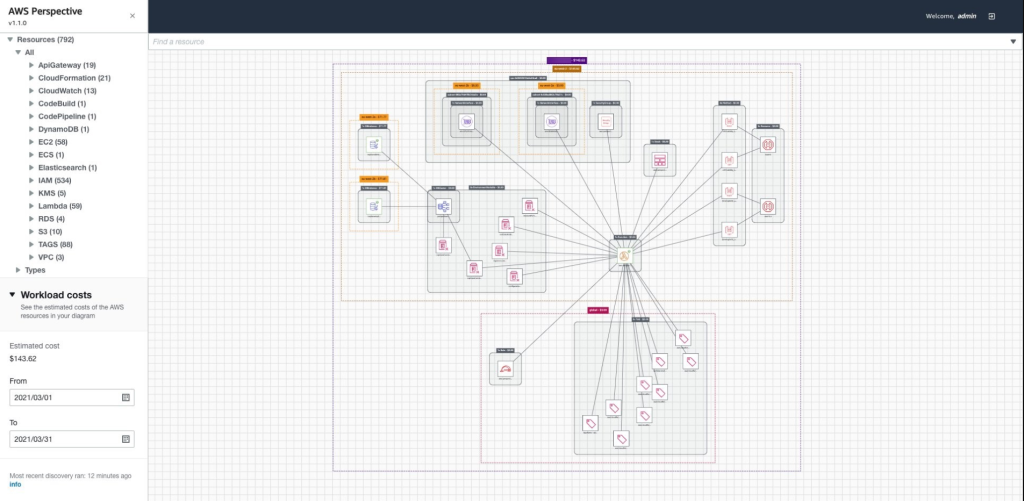

We will simulate the user upload with an Amazon EventBridge rate expression triggering an AWS Lambda function which creates a file in S3:

Figure 2. S3 upload and transform implemented demo workflow: Amazon EventBridge triggers a creator Lambda function, Lambda function creates a file in S3, file creation triggers AWS Lambda execution on transformer function, Lambda writes transformed file to a new bucket and deletes original. Workflow can be disrupted at file deletion.

Using this architecture we can explore the effect of S3 API degradation during file creation and deletion. As shown, the API call to delete a file from S3 is an application fault boundary. The failure could occur, with identical effect, because of S3 degradation or because the AWS IAM role of the Lambda function denies access to the API.

To inject failures we use AWS Systems Manager (AWS SSM) automation documents to attach and detach IAM policies at the API fault boundary and FIS to orchestrate the workflow.

Each Lambda function has its own IAM execution role, allowing S3 write access for the creator function and S3 delete access for the transformer function. If the transformer function fails, the S3 file will remain in the bucket, indicating a failure. Similarly, if the IAM execution role for the transformer function is denied the ability to delete a file after processing, that file will remain in the S3 bucket.

Prerequisites

Following this blog post will incur some costs for AWS services. To explore this test application you will need an AWS account. We will also assume that you are using AWS CloudShell or have the AWS CLI installed and have configured a profile with administrator permissions. With that in place you can create the demo application in your AWS account by cloning the sample repository and deploying an AWS CloudFormation stack:

git clone https://github.com/aws-samples/fis-api-failure-injection-using-iam.git

cd fis-api-failure-injection-using-iam

aws cloudformation deploy --stack-name test-fis-api-faults --template-file template.yaml --capabilities CAPABILITY_NAMED_IAM

Fault injection using IAM

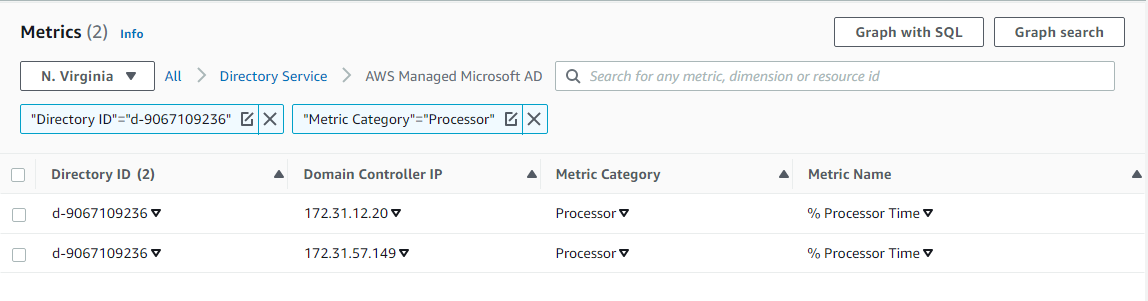

Once the stack has been created, navigate to the Amazon CloudWatch Logs console and filter for /aws/lambda/test-fis-api-faults. Under the EventBridgeTimerHandler log group you should find log events once a minute writing a timestamped file to an S3 bucket named fis-api-failure-ACCOUNT_ID. Under the S3TriggerHandler log group you should find matching deletion events for those files.

Once you have confirmed object creation/deletion, let’s take away the permission of the S3 trigger handler Lambda function to delete files. To do this you will attach the FISAPI-DenyS3DeleteObject policy that was created with the template:

ROLE_NAME=FISAPI-TARGET-S3TriggerHandlerRole

ROLE_ARN=$( aws iam list-roles --query "Roles[?RoleName=='${ROLE_NAME}'].Arn" --output text )

echo Target Role ARN: $ROLE_ARN

POLICY_NAME=FISAPI-DenyS3DeleteObject

POLICY_ARN=$( aws iam list-policies --query "Policies[?PolicyName=='${POLICY_NAME}'].Arn" --output text )

echo Impact Policy ARN: $POLICY_ARN

aws iam attach-role-policy \
  --role-name "${ROLE_NAME}" \
  --policy-arn "${POLICY_ARN}"

With the deny policy in place you should now see object deletion fail and objects should start showing up in the S3 bucket. Navigate to the S3 console and find the bucket starting with fis-api-failure. You should see a new object appearing in this bucket once a minute:

Figure 3. S3 bucket listing showing files not being deleted because IAM permissions DENY file deletion during FIS experiment.
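To watch the backlog grow from a terminal instead of the console, the object count can be sampled with the AWS CLI. The following is a minimal sketch and not part of the sample repository: the function name `count_bucket_objects` is hypothetical, and the bucket name is assumed to follow the fis-api-failure-ACCOUNT_ID pattern described earlier.

```shell
# Hypothetical helper: print the number of objects currently in a bucket.
count_bucket_objects() {
  local bucket_name="$1"
  # "aws s3 ls --recursive" prints one line per object; count the lines.
  aws s3 ls "s3://${bucket_name}" --recursive | wc -l | tr -d ' '
}

# Example usage (uncomment to sample the count once a minute, five times):
# ACCOUNT_ID=$(aws sts get-caller-identity --query Account --output text)
# for i in 1 2 3 4 5; do
#   echo "$(date +%T) objects: $(count_bucket_objects "fis-api-failure-${ACCOUNT_ID}")"
#   sleep 60
# done
```

While the deny policy is attached, the printed count should rise by roughly one per minute.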

If you would like to graph the results you can navigate to Amazon CloudWatch, select “Logs Insights”, select the log group starting with /aws/lambda/test-fis-api-faults-S3CountObjectsHandler, and run this query:

fields @timestamp, @message
| filter NumObjects >= 0
| sort @timestamp desc
| stats max(NumObjects) by bin(1m)
| limit 20

This will show the number of files in the S3 bucket over time:

Figure 4. Amazon CloudWatch Logs Insights graph showing the increase in the number of retained files in S3 bucket over time, demonstrating the effect of the introduced failure.

You can now detach the policy:

ROLE_NAME=FISAPI-TARGET-S3TriggerHandlerRole

ROLE_ARN=$( aws iam list-roles --query "Roles[?RoleName=='${ROLE_NAME}'].Arn" --output text )

echo Target Role ARN: $ROLE_ARN

POLICY_NAME=FISAPI-DenyS3DeleteObject

POLICY_ARN=$( aws iam list-policies --query "Policies[?PolicyName=='${POLICY_NAME}'].Arn" --output text )

echo Impact Policy ARN: $POLICY_ARN

aws iam detach-role-policy \
  --role-name "${ROLE_NAME}" \
  --policy-arn "${POLICY_ARN}"

We see that newly written files will once again be deleted but the un-processed files will remain in the S3 bucket. From the fault injection we learned that our system does not tolerate request failures when deleting files from S3. To address this, we should add a dead letter queue or some other retry mechanism.

Note: if the Lambda function does not return a success state on invocation, EventBridge will retry. In our Lambda functions we are cost conscious and explicitly capture the failure states to avoid excessive retries.
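The manual attach and detach steps can also be combined into a single time-boxed helper so that the deny policy is not left behind by accident. This is a minimal sketch under stated assumptions, not part of the sample repository: the function name `inject_iam_fault` is hypothetical, and it relies on a shell EXIT trap rather than on any AWS-side rollback.

```shell
# Hypothetical helper, not part of the sample repository: attach a deny
# policy to a role for a fixed number of seconds, then detach it again.
inject_iam_fault() {
  local role_name="$1" policy_arn="$2" duration_seconds="$3"
  (
    # Run the detach whenever this subshell exits. INT and TERM are routed
    # to a normal exit so the same cleanup runs on Ctrl-C.
    trap 'aws iam detach-role-policy --role-name "$role_name" --policy-arn "$policy_arn"' EXIT
    trap 'exit 1' INT TERM
    # If the attach fails, the detach in the trap is still attempted and
    # will simply report an error of its own.
    aws iam attach-role-policy --role-name "$role_name" --policy-arn "$policy_arn" || exit 1
    sleep "$duration_seconds"
    echo "Fault window elapsed, detaching policy"
  )
}

# Example usage, reusing the variables defined above:
# inject_iam_fault "${ROLE_NAME}" "${POLICY_ARN}" 120
```

The cleanup only runs while the shell is alive, so a closed terminal or lost session can still leave the policy attached.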

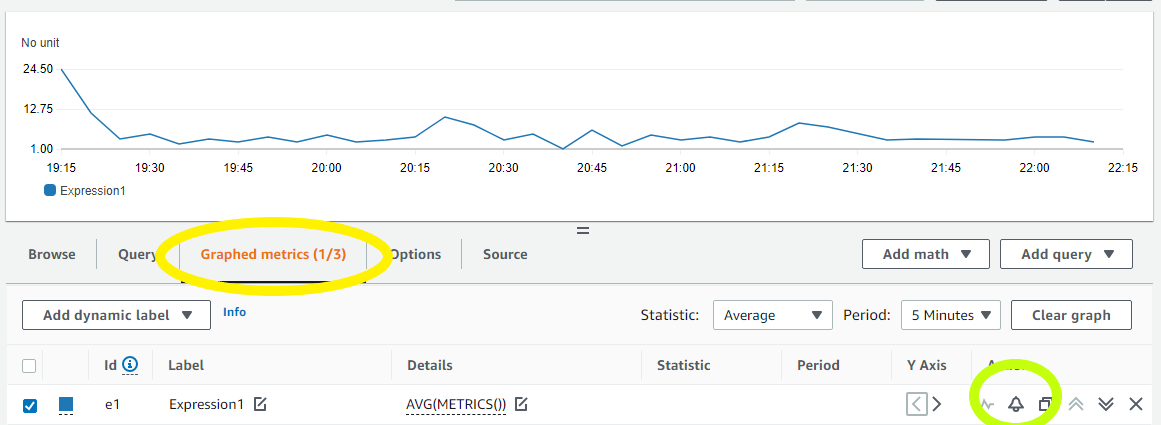

Fault injection using SSM

To use this approach from FIS and to always remove the policy at the end of the experiment, we first create an SSM document to automate adding a policy to a role. To inspect this document, open the SSM console, navigate to the “Documents” section, find the FISAPI-IamAttachDetach document under “Owned by me”, and examine the “Content” tab (make sure to select the correct region). This document takes the name of the Role you want to impact and the Policy you want to attach as parameters. It also requires an IAM execution role that grants it the power to list, attach, and detach specific policies to specific roles.

Let’s run the SSM automation document from the console by selecting “Execute Automation”. Determine the ARN of the FISAPI-DenyS3DeleteObject policy from CloudFormation or by running:

POLICY_NAME=FISAPI-DenyS3DeleteObject

POLICY_ARN=$( aws iam list-policies --query "Policies[?PolicyName=='${POLICY_NAME}'].Arn" --output text )

echo Impact Policy ARN: $POLICY_ARN

Use FISAPI-SSM-Automation-Role, a duration of 2 minutes expressed in ISO8601 format as PT2M, the ARN of the deny policy, and the name of the target role FISAPI-TARGET-S3TriggerHandlerRole:

Figure 5. Image of parameter input field reflecting the instructions in blog text.

Alternatively execute this from a shell:

ASSUME_ROLE_NAME=FISAPI-SSM-Automation-Role

ASSUME_ROLE_ARN=$( aws iam list-roles --query "Roles[?RoleName=='${ASSUME_ROLE_NAME}'].Arn" --output text )

echo Assume Role ARN: $ASSUME_ROLE_ARN

ROLE_NAME=FISAPI-TARGET-S3TriggerHandlerRole

ROLE_ARN=$( aws iam list-roles --query "Roles[?RoleName=='${ROLE_NAME}'].Arn" --output text )

echo Target Role ARN: $ROLE_ARN

POLICY_NAME=FISAPI-DenyS3DeleteObject

POLICY_ARN=$( aws iam list-policies --query "Policies[?PolicyName=='${POLICY_NAME}'].Arn" --output text )

echo Impact Policy ARN: $POLICY_ARN

aws ssm start-automation-execution \
  --document-name FISAPI-IamAttachDetach \
  --parameters "{
    \"AutomationAssumeRole\": [ \"${ASSUME_ROLE_ARN}\" ],
    \"Duration\": [ \"PT2M\" ],
    \"TargetResourceDenyPolicyArn\": [ \"${POLICY_ARN}\" ],
    \"TargetApplicationRoleName\": [ \"${ROLE_NAME}\" ]
  }"

Wait two minutes and then examine the content of the S3 bucket starting with fis-api-failure again. You should now see two additional files in the bucket, showing that the policy was attached for 2 minutes during which files could not be deleted, and confirming that our application is not resilient to S3 API degradation.
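Instead of waiting a fixed two minutes, the automation status can be polled from the shell. The sketch below is an assumption-laden example rather than part of the sample repository: the function name `wait_for_automation`, the default interval, and the poll budget are all hypothetical, and only the most common terminal statuses are handled.

```shell
# Hypothetical helper: poll an SSM automation execution until it succeeds,
# fails, or the poll budget runs out. Prints the last status seen.
wait_for_automation() {
  local execution_id="$1" interval_seconds="${2:-15}" max_polls="${3:-20}"
  local status="" poll=0
  while [ "$poll" -lt "$max_polls" ]; do
    status=$(aws ssm get-automation-execution \
      --automation-execution-id "$execution_id" \
      --query 'AutomationExecution.AutomationExecutionStatus' \
      --output text) || return 2
    case "$status" in
      Success) echo "$status"; return 0 ;;
      Failed|TimedOut|Cancelled) echo "$status"; return 1 ;;
    esac
    poll=$((poll + 1))
    sleep "$interval_seconds"
  done
  # Poll budget exhausted without reaching a handled terminal status.
  echo "$status"
  return 3
}

# Example usage: capture the execution ID by adding
#   --query AutomationExecutionId --output text
# to the start-automation-execution call, then run:
# wait_for_automation "$EXECUTION_ID"
```

A return code of 0 means the document ran to completion, which includes the detach step at the end of the duration.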

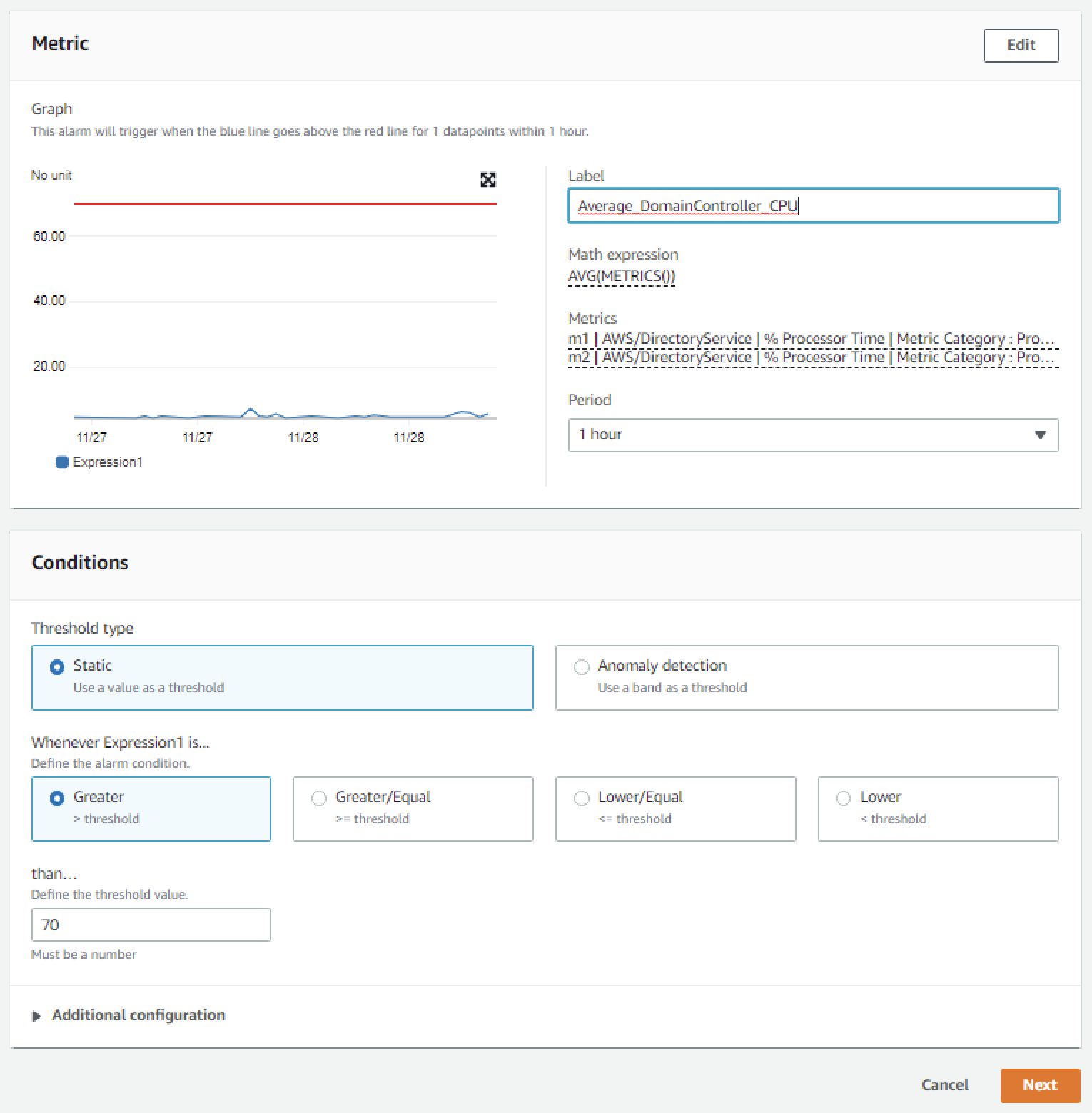

Permissions for injecting failures with SSM

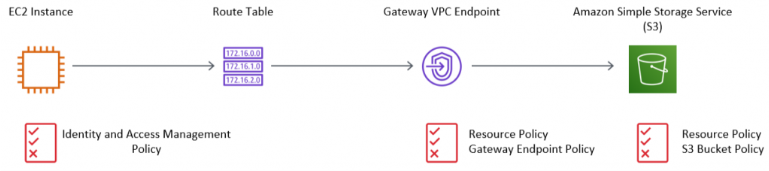

Fault injection with SSM is controlled by IAM, which is why you had to specify the FISAPI-SSM-Automation-Role:

Figure 6. Visual representation of IAM permission used for fault injections with SSM.

This role needs to contain an assume role policy statement for SSM to allow assuming the role:

AssumeRolePolicyDocument:
  Statement:
    - Action:
        - 'sts:AssumeRole'
      Effect: Allow
      Principal:
        Service:
          - "ssm.amazonaws.com"

The role also needs to contain permissions to describe roles and their attached policies with an optional constraint on which roles and policies are visible:

- Sid: GetRoleAndPolicyDetails
  Effect: Allow
  Action:
    - 'iam:GetRole'
    - 'iam:GetPolicy'
    - 'iam:ListAttachedRolePolicies'
  Resource:
    # Roles
    - !GetAtt EventBridgeTimerHandlerRole.Arn
    - !GetAtt S3TriggerHandlerRole.Arn
    # Policies
    - !Ref AwsFisApiPolicyDenyS3DeleteObject

Finally, the SSM role needs to allow attaching and detaching a policy document. This requires:

- an ALLOW statement
- a constraint on the policies that can be attached
- a constraint on the roles that can be attached to

In the role we collapse the first two requirements into an ALLOW statement with a condition constraint for the Policy ARN. We then express the third requirement in a DENY statement that will limit the '*' resource to only the explicit role ARNs we want to modify:

- Sid: AllowOnlyTargetResourcePolicies
  Effect: Allow
  Action:
    - 'iam:DetachRolePolicy'
    - 'iam:AttachRolePolicy'
  Resource: '*'
  Condition:
    ArnEquals:
      'iam:PolicyARN':
        # Policies that can be attached
        - !Ref AwsFisApiPolicyDenyS3DeleteObject
- Sid: DenyAttachDetachAllRolesExceptApplicationRole
  Effect: Deny
  Action:
    - 'iam:DetachRolePolicy'
    - 'iam:AttachRolePolicy'
  NotResource:
    # Roles that can be attached to
    - !GetAtt EventBridgeTimerHandlerRole.Arn
    - !GetAtt S3TriggerHandlerRole.Arn

We will discuss security considerations in more detail at the end of this post.
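To make the interplay of the two statements concrete, the following sketch models the outcome in plain shell. It is an illustration only, since IAM itself performs the real evaluation. The function names `in_list` and `may_attach`, the account ID 123456789012, and the EventBridge role name are placeholders chosen for this example.

```shell
# Illustrative model only: IAM itself performs the real policy evaluation.
# The account ID and the EventBridge role name are placeholders.
ALLOWED_POLICY_ARNS="arn:aws:iam::123456789012:policy/FISAPI-DenyS3DeleteObject"
TARGET_ROLE_ARNS="arn:aws:iam::123456789012:role/FISAPI-TARGET-EventBridgeTimerHandlerRole
arn:aws:iam::123456789012:role/FISAPI-TARGET-S3TriggerHandlerRole"

# Return 0 if the first argument equals any of the remaining arguments.
in_list() {
  local needle="$1" item
  shift
  for item in "$@"; do
    [ "$item" = "$needle" ] && return 0
  done
  return 1
}

# Return 0 only if the Allow condition matches and the Deny does not apply.
may_attach() {
  local role_arn="$1" policy_arn="$2"
  # Allow statement: the policy must be on the allow-list, otherwise the
  # request is implicitly denied. ARNs contain no whitespace, so unquoted
  # word splitting of the list variables is safe here.
  in_list "$policy_arn" $ALLOWED_POLICY_ARNS || return 1
  # Deny statement with NotResource: any role outside the list is denied.
  in_list "$role_arn" $TARGET_ROLE_ARNS || return 1
  return 0
}

# Example usage:
# may_attach "arn:aws:iam::123456789012:role/FISAPI-TARGET-S3TriggerHandlerRole" \
#   "arn:aws:iam::123456789012:policy/FISAPI-DenyS3DeleteObject" && echo permitted
```

Both checks must pass: an allow-listed policy on a non-target role is rejected by the explicit Deny, and any other policy on a target role is rejected because no Allow matches.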

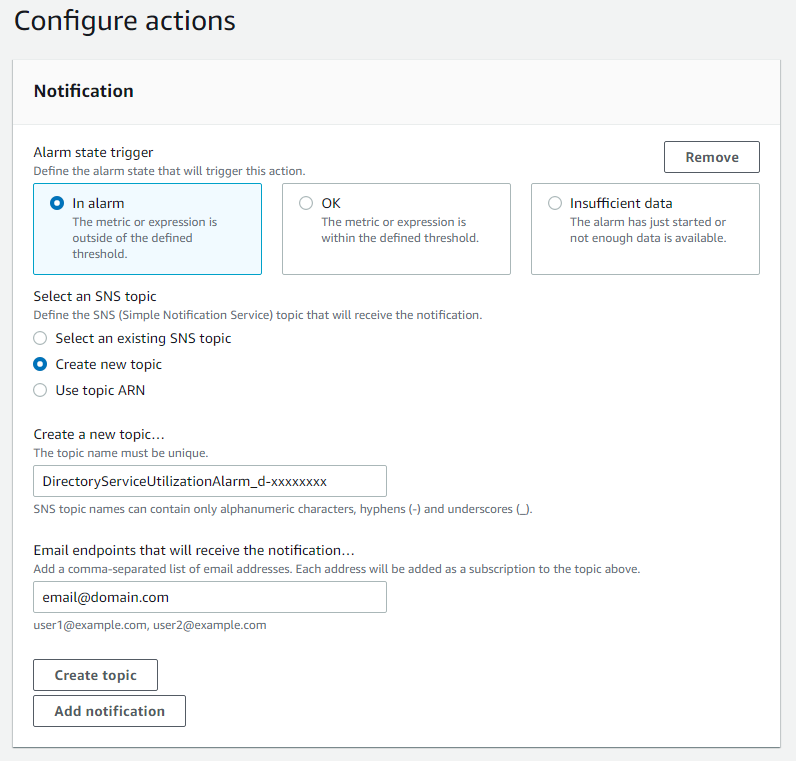

Fault injection using FIS

With the SSM document in place you can now create an FIS template that calls the SSM document. Navigate to the FIS console and filter for FISAPI-DENY-S3PutObject. You should see that the experiment template passes the same parameters that you previously used with SSM:

Figure 7. Image of FIS experiment template action summary. This shows the SSM document ARN to be used for fault injection and the JSON parameters passed to the SSM document specifying the IAM Role to modify and the IAM Policy to use.

You can now run the FIS experiment and after a couple minutes once again see new files in the S3 bucket.
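The experiment can also be started from the shell rather than the console. This is a minimal sketch, assuming you have looked up the experiment template ID yourself (for example in the FIS console). The function names `start_fis_experiment` and `fis_experiment_status` are hypothetical.

```shell
# Hypothetical helpers: start an experiment from a template ID and read
# back the state of an experiment.
start_fis_experiment() {
  local template_id="$1"
  # Prints the ID of the newly created experiment.
  aws fis start-experiment \
    --experiment-template-id "$template_id" \
    --query 'experiment.id' \
    --output text
}

fis_experiment_status() {
  local experiment_id="$1"
  # Prints the current state, for example running or completed.
  aws fis get-experiment \
    --id "$experiment_id" \
    --query 'experiment.state.status' \
    --output text
}

# Example usage (TEMPLATE_ID is the ID shown for the template in the console):
# EXPERIMENT_ID=$(start_fis_experiment "$TEMPLATE_ID")
# fis_experiment_status "$EXPERIMENT_ID"
```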

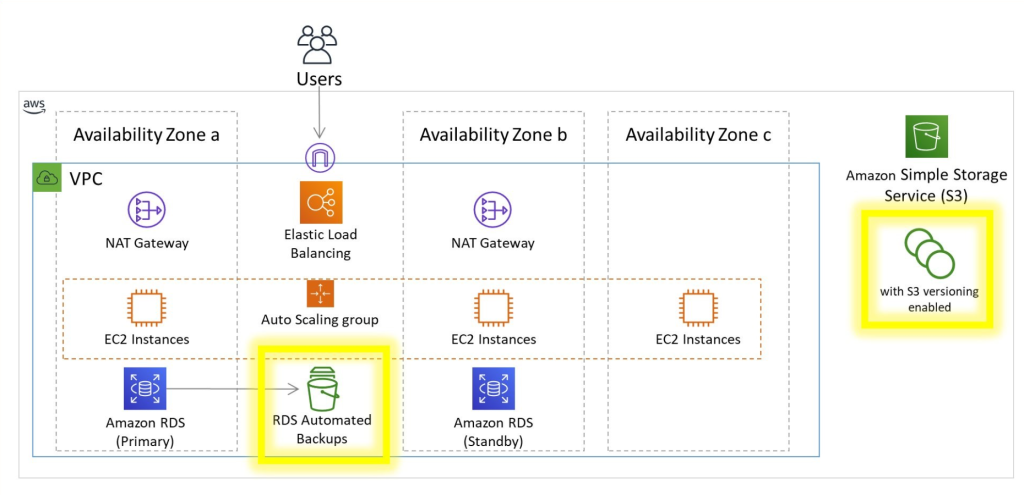

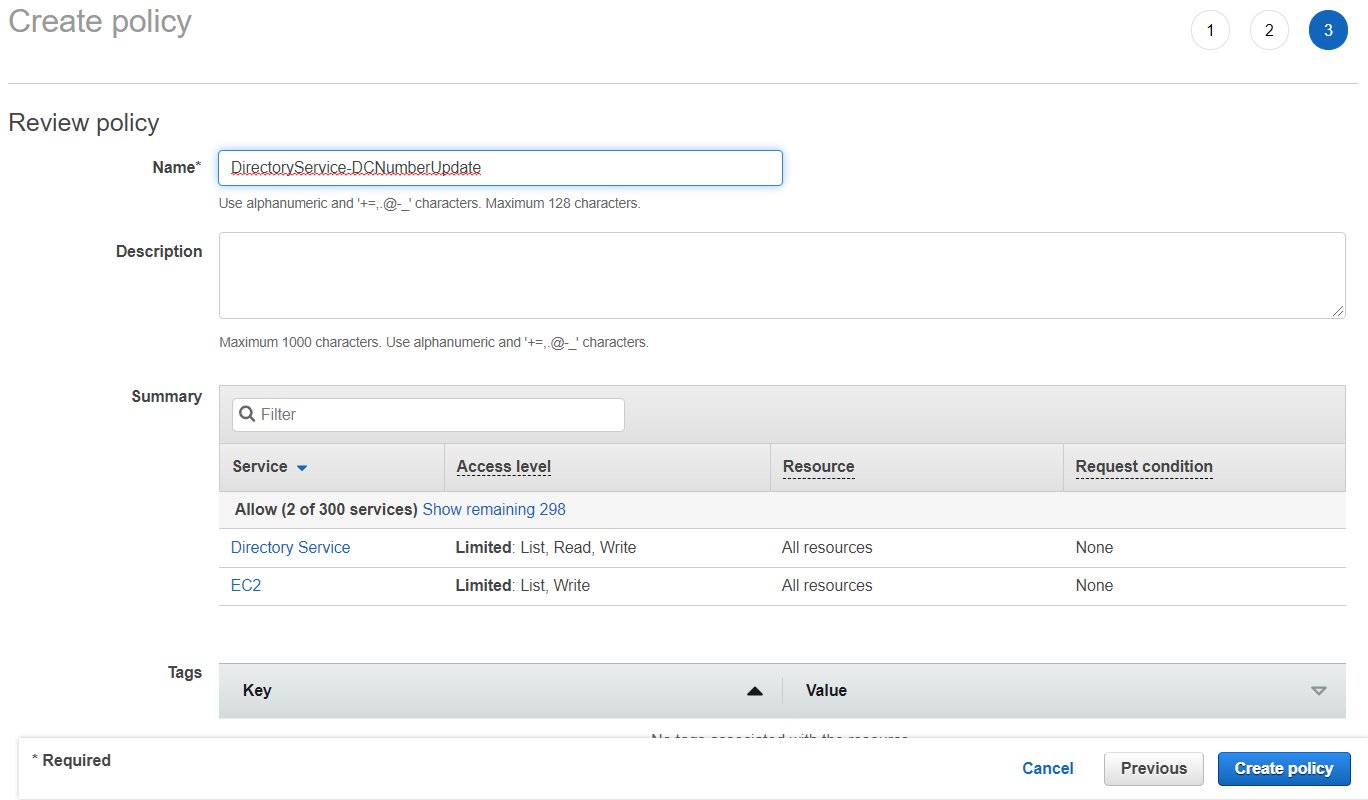

Permissions for injecting failures with FIS and SSM

Fault injection with FIS is controlled by IAM, which is why you had to specify the FISAPI-FIS-Injection-EperimentRole:

Figure 8. Visual representation of IAM permission used for fault injections with FIS and SSM. It shows the SSM execution role permitting access to use SSM automation documents as well as modify IAM roles and policies via the SSM document. It also shows the FIS execution role permitting access to use FIS templates, as well as the pass-role permission to grant the SSM execution role to the SSM service. Finally it shows the FIS user needing to have a pass-role permission to grant the FIS execution role to the FIS service.

This role needs to contain an assume role policy statement for FIS to allow assuming the role:

AssumeRolePolicyDocument:
  Statement:
    - Action:
        - 'sts:AssumeRole'
      Effect: Allow
      Principal:
        Service:
          - "fis.amazonaws.com"

The role also needs permissions to list and execute SSM documents:

- Sid: RequiredReadActionsforAWSFIS
  Effect: Allow
  Action:
    - 'cloudwatch:DescribeAlarms'
    - 'ssm:GetAutomationExecution'
    - 'ssm:ListCommands'
    - 'iam:ListRoles'
  Resource: '*'
- Sid: RequiredSSMStopActionforAWSFIS
  Effect: Allow
  Action:
    - 'ssm:CancelCommand'
  Resource: '*'
- Sid: RequiredSSMWriteActionsforAWSFIS
  Effect: Allow
  Action:
    - 'ssm:StartAutomationExecution'
    - 'ssm:StopAutomationExecution'
  Resource:
    - !Sub 'arn:aws:ssm:${AWS::Region}:${AWS::AccountId}:automation-definition/${SsmAutomationIamAttachDetachDocument}:$DEFAULT'

Finally, remember that the SSM document needs to use a Role of its own to execute the fault injection actions. Because that Role is different from the Role under which we started the FIS experiment, we need to explicitly allow SSM to assume that role with a PassRole statement which will expand to FISAPI-SSM-Automation-Role:

- Sid: RequiredIAMPassRoleforSSMADocuments
  Effect: Allow
  Action: 'iam:PassRole'
  Resource: !Sub 'arn:aws:iam::${AWS::AccountId}:role/${SsmAutomationRole}'

Secure and flexible permissions

So far, we have used explicit ARNs for our guardrails. To expand flexibility, we can use wildcards in our resource matching. For example, we might change the Policy matching from:

Condition:
  ArnEquals:
    'iam:PolicyARN':
      # Explicitly listed policies - secure but inflexible
      - !Ref AwsFisApiPolicyDenyS3DeleteObject

or the equivalent:

Condition:
  ArnEquals:
    'iam:PolicyARN':
      # Explicitly listed policies - secure but inflexible
      - !Sub 'arn:${AWS::Partition}:iam::${AWS::AccountId}:policy/${FullPolicyName}'

to a wildcard notation like this:

Condition:
  ArnEquals:
    'iam:PolicyARN':
      # Wildcard policies - secure and flexible
      - !Sub 'arn:${AWS::Partition}:iam::${AWS::AccountId}:policy/${PolicyNamePrefix}*'

If we set PolicyNamePrefix to FISAPI-DenyS3 this would now allow attaching FISAPI-DenyS3PutObject and FISAPI-DenyS3DeleteObject but would not allow attaching a policy named FISAPI-DenyEc2DescribeInstances.
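The effect of the trailing wildcard can be checked with a plain prefix match. The snippet below is a rough sketch of that matching rule only and does not call IAM. The function name `policy_matches_prefix` is hypothetical.

```shell
# Hypothetical check mirroring the trailing-wildcard condition: does a
# policy name start with the given prefix?
policy_matches_prefix() {
  local policy_name="$1" prefix="$2"
  case "$policy_name" in
    "$prefix"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Report which of the example policy names fall under the prefix.
for name in FISAPI-DenyS3PutObject FISAPI-DenyS3DeleteObject FISAPI-DenyEc2DescribeInstances; do
  if policy_matches_prefix "$name" "FISAPI-DenyS3"; then
    echo "$name: attachable"
  else
    echo "$name: blocked"
  fi
done
```

Choosing a prefix that is too short widens the set of attachable policies, so the prefix itself becomes part of the guardrail.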

Similarly, we could change the Resource matching from:

NotResource:
  # Explicitly listed roles - secure but inflexible
  - !GetAtt EventBridgeTimerHandlerRole.Arn
  - !GetAtt S3TriggerHandlerRole.Arn

to a wildcard equivalent like this:

NotResource:
  # Wildcard roles - secure and flexible
  - !Sub 'arn:${AWS::Partition}:iam::${AWS::AccountId}:role/${RoleNamePrefixEventBridge}*'
  - !Sub 'arn:${AWS::Partition}:iam::${AWS::AccountId}:role/${RoleNamePrefixS3}*'

and setting RoleNamePrefixEventBridge to FISAPI-TARGET-EventBridge and RoleNamePrefixS3 to FISAPI-TARGET-S3.

Finally, we would also change the FIS experiment role to allow SSM documents based on a name prefix by changing the constraint on automation execution from:

- Sid: RequiredSSMWriteActionsforAWSFIS
  Effect: Allow
  Action:
    - 'ssm:StartAutomationExecution'
    - 'ssm:StopAutomationExecution'
  Resource:
    # Explicitly listed resource - secure but inflexible
    # Note: the $DEFAULT at the end could also be an explicit version number
    # Note: the 'automation-definition' is automatically created from 'document' on invocation
    - !Sub 'arn:aws:ssm:${AWS::Region}:${AWS::AccountId}:automation-definition/${SsmAutomationIamAttachDetachDocument}:$DEFAULT'

to

- Sid: RequiredSSMWriteActionsforAWSFIS
  Effect: Allow
  Action:
    - 'ssm:StartAutomationExecution'
    - 'ssm:StopAutomationExecution'
  Resource:
    # Wildcard resources - secure and flexible
    #
    # Note: the 'automation-definition' is automatically created from 'document' on invocation
    - !Sub 'arn:aws:ssm:${AWS::Region}:${AWS::AccountId}:automation-definition/${SsmAutomationDocumentPrefix}*'

and setting SsmAutomationDocumentPrefix to FISAPI-. Test this by updating the CloudFormation stack with a modified template:

aws cloudformation deploy --stack-name test-fis-api-faults --template-file template2.yaml --capabilities CAPABILITY_NAMED_IAM

Permissions governing users

In production you should not be using administrator access to use FIS. Instead we create two roles FISAPI-AssumableRoleWithCreation and FISAPI-AssumableRoleWithoutCreation for you (see this template). These roles require all FIS and SSM resources to have a Name tag that starts with FISAPI-. Try assuming the role without creation privileges and running an experiment. You will notice that you can only start an experiment if you add a Name tag, e.g. FISAPI-secure-1, and you will only be able to get details of experiments and templates that have proper Name tags.
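One way to try the restricted role from the shell is to assume it with AWS STS and export the temporary credentials. This is a minimal sketch: it assumes your current identity is permitted to assume the role, and the function name `assume_role_exports` and the session name are hypothetical.

```shell
# Hypothetical helper: assume a role and print shell export statements for
# the temporary credentials returned by STS.
assume_role_exports() {
  local role_arn="$1" credentials
  credentials=$(aws sts assume-role \
    --role-arn "$role_arn" \
    --role-session-name fisapi-demo-session \
    --query 'Credentials.[AccessKeyId,SecretAccessKey,SessionToken]' \
    --output text) || return 1
  # Text output is whitespace-separated; split it into positional parameters.
  set -- $credentials
  [ "$#" -eq 3 ] || return 1
  printf 'export AWS_ACCESS_KEY_ID=%s\n' "$1"
  printf 'export AWS_SECRET_ACCESS_KEY=%s\n' "$2"
  printf 'export AWS_SESSION_TOKEN=%s\n' "$3"
}

# Example usage (ACCOUNT_ID is your own account number):
# eval "$(assume_role_exports "arn:aws:iam::${ACCOUNT_ID}:role/FISAPI-AssumableRoleWithoutCreation")"
```

After the `eval`, subsequent AWS CLI calls in that shell run with the restricted role until the temporary credentials expire.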

If you are working with AWS Organizations, you can add further guard rails by defining SCPs that control the use of the FISAPI-* tags similar to this blog post.

Caveats

For this solution we are choosing to attach policies instead of permissions boundaries. The benefit of this is that you can attach multiple independent policies and thus simulate multi-step service degradation. However, this means that it is possible to increase the permission level of a role. While there are situations where this might be of interest, e.g. to simulate security breaches, please implement a thorough security review of any fault injection IAM policies you create. Note that modifying IAM roles may trigger events in your security monitoring tools.

The AttachRolePolicy and DetachRolePolicy calls from AWS IAM are eventually consistent, meaning that in some cases permission propagation when starting and stopping fault injection may take up to 5 minutes each.

Cleanup

To avoid additional cost, delete the content of the S3 bucket and delete the CloudFormation stack:

# Clean up policy attachments just in case

CLEANUP_ROLES=$(aws iam list-roles --query "Roles[?starts_with(RoleName,'FISAPI-')].RoleName" --output text)
for role in $CLEANUP_ROLES; do
  # Select the policy ARNs: detach-role-policy expects an ARN, not a policy name
  CLEANUP_POLICIES=$(aws iam list-attached-role-policies --role-name $role --query "AttachedPolicies[?starts_with(PolicyName,'FISAPI-')].PolicyArn" --output text)
  for policy in $CLEANUP_POLICIES; do
    echo Detaching policy $policy from role $role
    aws iam detach-role-policy --role-name $role --policy-arn $policy
  done
done

# Delete S3 bucket content

ACCOUNT_ID=$( aws sts get-caller-identity --query Account --output text )

S3_BUCKET_NAME=fis-api-failure-${ACCOUNT_ID}

aws s3 rm --recursive s3://${S3_BUCKET_NAME}

aws s3 rb s3://${S3_BUCKET_NAME}

# Delete cloudformation stack

aws cloudformation delete-stack --stack-name test-fis-api-faults

aws cloudformation wait stack-delete-complete --stack-name test-fis-api-faults

Conclusion

AWS Fault Injection Simulator provides the ability to simulate various external impacts to your application to validate and improve resilience. We’ve shown how combining FIS with IAM to selectively deny access to AWS APIs provides a generic path to explore fault boundaries across all AWS services. We’ve shown how this can be used to identify and improve a resilience problem in a common S3 upload workflow. To learn about more ways to use FIS, see this workshop.

About the authors:

Raghu Boppanna works as an Enterprise Architect at Vanguard’s Chief Technology Office. Raghu specializes in Data Analytics, Data Migration/Replication including CDC Pipelines, Disaster Recovery and Databases. He has earned several AWS Certifications including AWS Certified Security – Specialty & AWS Certified Data Analytics – Specialty.

Parameswaran V Vaidyanathan is a Senior Cloud Resilience Architect with Amazon Web Services. He helps large enterprises achieve the business goals by architecting and building scalable and resilient solutions on the AWS Cloud.

Mithil Prasad is a Principal Customer Solutions Manager with Amazon Web Services. In his role, Mithil works with Customers to drive cloud value realization, provide thought leadership to help businesses achieve speed, agility, and innovation.
