Post Syndicated from original https://lwn.net/Articles/843169/rss

Stable kernels 5.10.9, 5.4.91, and 4.19.169 have been released with important fixes. Users of those series should upgrade.

Post Syndicated from Cristian Gavazzeni original https://aws.amazon.com/blogs/big-data/part-1-building-a-cost-efficient-petabyte-scale-lake-house-with-amazon-s3-lifecycle-rules-and-amazon-redshift-spectrum/

The continuous growth of data volumes, combined with requirements to implement long-term retention (typically due to specific industry regulations), puts pressure on the storage costs of data warehouse solutions, even for cloud native data warehouse services such as Amazon Redshift. The introduction of the new Amazon Redshift RA3 node types helped in decoupling compute from storage growth. Integration points provided by Amazon Redshift Spectrum, Amazon Simple Storage Service (Amazon S3) storage classes, and other Amazon S3 features allow for compliance with retention policies while keeping costs under control.

An enterprise customer in Italy asked the AWS team to recommend best practices on how to implement a data journey solution for sales data; the objective of part 1 of this series is to provide step-by-step instructions and best practices on how to build an end-to-end data lifecycle management system integrated with a data lake house implemented on Amazon S3 with Amazon Redshift. In part 2, we show some additional best practices to operate the solution: implementing a sustainable monthly ageing process, using Amazon Redshift local tables to troubleshoot common issues, and using Amazon S3 access logs to analyze data access patterns.

At re:Invent 2019, AWS announced new Amazon Redshift RA3 nodes. Even though this introduced new levels of cost efficiency in the cloud data warehouse, we faced customer cases where the data volume to be kept is an order of magnitude higher because specific regulations require historical data to be kept for 10–12 years or more. In addition, this historical cold data must be accessed by other services and applications external to Amazon Redshift (such as Amazon SageMaker for AI and machine learning (ML) training jobs), and occasionally it needs to be queried jointly with Amazon Redshift hot data. In these situations, Redshift Spectrum is a great fit because, among other factors, you can use it in conjunction with Amazon S3 storage classes to further improve TCO.

Redshift Spectrum allows you to query data that resides in S3 buckets using the application code and logic already in place for data warehouse tables, potentially performing joins and unions of Amazon Redshift local tables and data on Amazon S3.

Redshift Spectrum uses a fleet of compute nodes managed by AWS that increases system scalability. To use it, we need to define at least an external schema and an external table (unless an external schema and external database are already defined in the AWS Glue Data Catalog). Data definition language (DDL) statements used to define an external table include a location attribute to address S3 buckets and prefixes containing the dataset, which could be in common file formats like ORC, Parquet, AVRO, CSV, JSON, or plain text. Compressed and columnar file formats like Apache Parquet are preferred because they provide less storage usage and better performance.

For a data catalog, we could use AWS Glue or an external hive metastore. For this post, we use AWS Glue.

Amazon S3 storage classes include S3 Standard, S3 Standard-IA (S3-IA), S3 One Zone-IA, S3 Intelligent-Tiering, S3 Glacier, and S3 Glacier Deep Archive. For our use case, we need to keep data accessible for queries for 5 years and with high durability, so we consider only S3 Standard and S3-IA for this time frame, and S3 Glacier only for the long term (5–12 years). Data access to S3 Glacier requires a retrieval step that takes minutes even with expedited retrieval, which is incompatible with querying the data directly. We can adopt Glacier for very cold data if we implement a manual process to first restore the Glacier archive to a temporary S3 bucket, and then query this data through an external table.

S3 Glacier Select allows you to query on data directly in S3 Glacier, but it only supports uncompressed CSV files. Because the objective of this post is to propose a cost-efficient solution, we didn’t consider it. If for any reason you have constraints for storing in CSV file format (instead of compressed formats like Parquet), Glacier Select might also be a good fit.

Excluding retrieval costs, the cost for storage for S3-IA is typically around 45% cheaper than S3 Standard, and S3 Glacier is 68% cheaper than S3-IA. For updated pricing information, see Amazon S3 pricing.
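To make these percentages concrete, the following sketch normalizes the S3 Standard storage price to 1.0 and derives the relative price of the other two classes. It uses only the two discounts quoted above; the function name and the normalized unit are illustrative assumptions, and retrieval and request costs are ignored.

```javascript
// Relative storage cost per class, with S3 Standard normalized to 1.0.
// Only the two discounts quoted in the text are used; retrieval costs are excluded.
function relativeStorageCosts(iaDiscount = 0.45, glacierDiscountVsIa = 0.68) {
  const standard = 1.0;
  // S3-IA is priced relative to S3 Standard.
  const standardIa = standard * (1 - iaDiscount);
  // S3 Glacier is priced relative to S3-IA, so the discounts compound.
  const glacier = standardIa * (1 - glacierDiscountVsIa);
  return { standard, standardIa, glacier };
}

const relativeCosts = relativeStorageCosts();
console.log(relativeCosts);
```

Under these assumptions, S3-IA costs roughly 0.55 and S3 Glacier roughly 0.18 of the S3 Standard storage price, because the second discount applies on top of the first.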

We don’t use S3 Intelligent-Tiering because it bases storage transitions on the last access time, which resets every time we query the data. Instead, we use S3 Lifecycle rules based on creation time, prefix, or tag matching, which behave consistently regardless of data access patterns.

For our use case, we need to implement the data retention strategy for trip records outlined in the following table.

| Corporate Rule | Dataset Start | Dataset End | Data Storage | Engine |
| --- | --- | --- | --- | --- |
| Last 6 months in Amazon Redshift | December 2019 | May 2020 | Amazon Redshift local tables | Amazon Redshift |
| Months 6–11 in Amazon S3 | June 2019 | November 2019 | S3 Standard | Redshift Spectrum |
| Months 12–14 in S3-IA | March 2019 | May 2019 | S3-IA | Redshift Spectrum |
| After month 15 | January 2019 | February 2019 | Glacier | N/A |

For this post, we create a new table in a new Amazon Redshift cluster and load a public dataset. We use the New York City Taxi and Limousine Commission (TLC) Trip Record Data because it provides the required historical depth.

We use the Green Taxi Trip Records, based on monthly CSV files containing 20 columns with fields like vendor ID, pickup time, drop-off time, fare, and other information.

As a first step, we create an AWS Identity and Access Management (IAM) role for Redshift Spectrum. This is required to allow Amazon Redshift to access Amazon S3 for querying and loading data, and also to allow access to the AWS Glue Data Catalog whenever we create, modify, or delete an external table.

The role is named BlogSpectrumRole and its policy is Glue-Lakehouse-Policy.JSON.

Now you create a single-node Amazon Redshift cluster based on a DC2.large instance type, attaching the newly created IAM role BlogSpectrumRole.

The cluster identifier is redshift-cluster-1; the setup also covers the dbname and ports settings.

To connect to Amazon Redshift, you can use a free client like SQL Workbench/J or the query editor embedded in the AWS console, with the previously created credentials.

The most efficient method to load data into Amazon Redshift is using the COPY command, because it uses the distributed architecture (each slice can ingest one file at the same time).

Now the greentaxi table includes all records starting from January 2019 to June 2020. You’re now ready to leverage Redshift Spectrum and S3 storage classes to save costs.

Extracting data from Amazon Redshift

You perform the next steps using the AWS Command Line Interface (AWS CLI). For download and installation instructions, see Installing, updating, and uninstalling the AWS CLI version 2.

Use the aws configure command to set the access key and secret access key of your IAM user and your selected AWS Region (the same Region as your S3 buckets and your Amazon Redshift cluster).

In this section, we evaluate two different use cases:

The UNLOAD command uses the result of an embedded SQL query to extract data from Amazon Redshift to Amazon S3, producing different file formats such as CSV, text, and Parquet. To extract data from January 2019, complete the following steps:

The destination bucket in this example is rs-lakehouse-blog-post.

The output shows that the UNLOAD statement generated two files of 33 MB each. By default, UNLOAD generates at least one file for each slice in the Amazon Redshift cluster. My cluster is a single DC2 node with two slices. The default file format is text, which is not storage optimized.

To simplify the process, you create a single file for each month so that you can later apply lifecycle rules to each file. In real-world scenarios, extracting data with a single file isn’t the best practice in terms of performance optimization. This is just to simplify the process for the purpose of this post.

The output of the UNLOAD commands is a single file (per month) in Parquet format, which takes 80% less space than the previous unload. This is important to save costs related to both Amazon S3 and Glacier, and also costs associated with Redshift Spectrum queries, which are billed by the amount of data scanned.
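As a rough illustration of the monthly extraction, the sketch below only builds the text of an UNLOAD statement that writes one month to a single Parquet file; it does not run it. The pickup column name `lpep_pickup_datetime`, the S3 key layout, and the account ID in the role ARN are assumptions made for this example, not values taken from the post.

```javascript
// Build a Redshift UNLOAD statement that writes one month of greentaxi data
// to S3 as a single Parquet file (PARALLEL OFF disables per-slice output files).
// The column name, key layout, and role ARN are assumptions for illustration.
function buildMonthlyUnload({ year, month, bucket, prefix, iamRoleArn }) {
  const mm = String(month).padStart(2, "0");
  const start = `${year}-${mm}-01`;
  // First day of the following month, used as an exclusive upper bound.
  const nextYear = month === 12 ? year + 1 : year;
  const nextMm = String(month === 12 ? 1 : month + 1).padStart(2, "0");
  const end = `${nextYear}-${nextMm}-01`;
  // Single quotes inside the UNLOAD query text are escaped by doubling them.
  const query =
    `select * from greentaxi where lpep_pickup_datetime >= ''${start}'' ` +
    `and lpep_pickup_datetime < ''${end}''`;
  return (
    `unload ('${query}') ` +
    `to 's3://${bucket}/${prefix}/${year}-${mm}_' ` +
    `iam_role '${iamRoleArn}' ` +
    `format as parquet parallel off;`
  );
}

console.log(
  buildMonthlyUnload({
    year: 2019,
    month: 1,
    bucket: "rs-lakehouse-blog-post",
    prefix: "archive",
    iamRoleArn: "arn:aws:iam::123456789012:role/BlogSpectrumRole", // placeholder account ID
  })
);
```

Using a half-open date range (first day of the month inclusive, first day of the next month exclusive) avoids having to know how many days each month has.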

The next step is creating a lifecycle rule based on creation date to automate the migration to S3-IA after 12 months and to Glacier after 15 months. The proposed policy name is 12IA-15Glacier and it’s filtered on the prefix archive/.

This lifecycle policy migrates all keys in the archive prefix from Amazon S3 to S3-IA after 12 months and from S3-IA to Glacier after 15 months. For example, if today were 2020-09-12, and you unload the 2020-03 data to Amazon S3, by 2021-09-12, this 2020-03 data is automatically migrated to S3-IA.
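A minimal sketch of what such a policy document might look like follows. The structure (Rules, Filter, Transitions) follows the S3 lifecycle configuration format, but the day counts are assumptions that approximate 12 and 15 months, and the helper name is hypothetical.

```javascript
// Lifecycle configuration for the 12IA-15Glacier rule, scoped to a key prefix.
// Day counts approximate 12 and 15 months; they are assumptions, not values from the post.
function buildPrefixLifecycle(prefix, daysToIa = 365, daysToGlacier = 456) {
  if (daysToGlacier <= daysToIa) {
    throw new Error("The Glacier transition must come after the S3-IA transition");
  }
  return {
    Rules: [
      {
        ID: "12IA-15Glacier",
        Filter: { Prefix: prefix },
        Status: "Enabled",
        Transitions: [
          { Days: daysToIa, StorageClass: "STANDARD_IA" },
          { Days: daysToGlacier, StorageClass: "GLACIER" },
        ],
      },
    ],
  };
}

// Print JSON that could be saved to a file and passed to the CLI.
console.log(JSON.stringify(buildPrefixLifecycle("archive/"), null, 2));
```

The printed JSON could be saved to a file and applied with `aws s3api put-bucket-lifecycle-configuration --bucket rs-lakehouse-blog-post --lifecycle-configuration file://lifecycle.json`.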

If using this basic use case, you can skip the partition steps in the section Defining the external schema and external tables.

In this use case, you extract data with different ageing in the same time frame. You extract all data from January 2019 to February 2019 and, because we assume that you aren’t using this data, archive it to S3 Glacier.

Data from March 2019 to May 2019 is migrated as an external table on S3-IA, and data from June 2019 to November 2019 is migrated as an external table to S3 Standard. With this approach, you comply with customer long-term retention policies and regulations, and reduce TCO.

You implement the retention strategy described in the Simulated use case and retention policy section.

Create three folders, extract_longterm, extract_midterm, and extract_shortterm, in the destination bucket rs-lakehouse-blog-post. The following code is the syntax for creating the extract_longterm folder:

In this section, you manage your data with storage classes and lifecycle policies.

In the next step, you tag every monthly file with a key value named ageing set to the number of months elapsed from the origin date.

In this set of three objects, the oldest file has the tag ageing set to value 14, and the newest is set to 12. In the second post in this series, you discover how to manage the ageing tag as it increases month by month.
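The ageing value can be derived rather than typed by hand. The sketch below assumes a reference month of 2020-05 (chosen here because it reproduces the values 14 and 12 mentioned above) and produces a tag set in the shape used by S3 object tagging; the function names are hypothetical.

```javascript
// Months elapsed between a data month and a reference month, both given as "YYYY-MM".
function ageingInMonths(dataMonth, referenceMonth) {
  const [dy, dm] = dataMonth.split("-").map(Number);
  const [ry, rm] = referenceMonth.split("-").map(Number);
  return (ry - dy) * 12 + (rm - dm);
}

// Tag set in the shape used by S3 object tagging (a list of Key/Value pairs).
function ageingTagSet(dataMonth, referenceMonth) {
  return {
    TagSet: [{ Key: "ageing", Value: String(ageingInMonths(dataMonth, referenceMonth)) }],
  };
}

// The reference month 2020-05 is an assumption for this example.
for (const month of ["2019-03", "2019-04", "2019-05"]) {
  console.log(month, JSON.stringify(ageingTagSet(month, "2020-05")));
}
```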

The next step is to create a lifecycle rule based on this specific tag in order to automate the migration to Glacier at month 15. The proposed policy name is 15IAtoGlacier, and its scope is limited to objects with the tag ageing set to 15 in the specific bucket.

This lifecycle policy migrates all objects with the tag ageing set to 15 from S3-IA to Glacier.

Though I described this process as automating the migration, I actually want to control the process from the application level using the self-managed tag mechanism. I use this approach because otherwise the transition is based on file creation date, and the objective is to be able to delete, update, or create a new file whenever needed (for example, to delete parts of records in order to comply with the GDPR “right to be forgotten” rule).

Now you extract all data from June 2019 to November 2019 (6–11 months old) and keep it in Amazon S3 with a lifecycle policy to automatically migrate it to S3-IA once its ageing reaches 12 months, using the same process as described. These six new objects also inherit the rule created previously to migrate to Glacier after 15 months. Finally, you set the ageing tag as described before.

Use the extract_shortterm prefix for these unload operations.

Set the tag ageing with range 11 to 6 (June 2019 to November 2019), using either the AWS CLI or the console if you prefer.

Create a new lifecycle rule named 12S3toS3IA, which transitions from Amazon S3 to S3-IA.

Put a lifecycle configuration that includes both the existing 15IAtoGlacier and the new 12S3toS3IA, because the s3api command overwrites the current configuration (no incremental approach) with the new policy definition file (JSON). The following code is the new lifecycle.json:

You get on stdout a single JSON document that merges 15IAtoGlacier and 12S3toS3IA.
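A merged configuration with both tag-based rules might be sketched as follows. The rule names and the ageing tag come from the text; the Days values are assumptions (30 is used for the S3-IA rule because S3 generally expects objects to be at least 30 days old before that transition), and the helper names are hypothetical.

```javascript
// One lifecycle rule that transitions objects carrying a given ageing tag value.
function tagRule(id, ageingValue, days, storageClass) {
  return {
    ID: id,
    Filter: { Tag: { Key: "ageing", Value: String(ageingValue) } },
    Status: "Enabled",
    Transitions: [{ Days: days, StorageClass: storageClass }],
  };
}

// Putting a lifecycle configuration replaces the whole existing configuration,
// so both rules must be present in the same document.
function buildMergedLifecycle() {
  return {
    Rules: [
      tagRule("12S3toS3IA", 12, 30, "STANDARD_IA"), // Days value is an assumption
      tagRule("15IAtoGlacier", 15, 1, "GLACIER"), // Days value is an assumption
    ],
  };
}

console.log(JSON.stringify(buildMergedLifecycle(), null, 2));
```

Keeping each rule in a small builder makes it harder to drop an existing rule by accident when the configuration is regenerated.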

Defining the external schema and external tables

Before deleting the records you extracted from Amazon Redshift with the UNLOAD command, we define the external schema and external tables to enable Redshift Spectrum queries for these Parquet files.

Create the external table taxi_archive in the taxispectrum external schema. If you’re walking through the new customer use case, replace the prefix extract_midterm with archive:

The same steps apply with extract_shortterm instead of extract_midterm. You get the number of entries in this external table.
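For the partitioned variant of the external table, partitions on yearmonth can be registered one month at a time. The sketch below only generates the statement text; the table and schema names come from the text, while the S3 key layout (`yearmonth=YYYY-MM/` folders) and the helper name are assumptions for illustration.

```javascript
// Generate one ALTER TABLE ... ADD PARTITION statement per month for the
// taxispectrum.taxi_archive external table. The S3 key layout is hypothetical.
function addPartitionStatements(months, bucket, prefix) {
  return months.map(
    (ym) =>
      `alter table taxispectrum.taxi_archive add if not exists ` +
      `partition (yearmonth='${ym}') ` +
      `location 's3://${bucket}/${prefix}/yearmonth=${ym}/';`
  );
}

const partitionStatements = addPartitionStatements(
  ["2019-03", "2019-04", "2019-05"],
  "rs-lakehouse-blog-post",
  "extract_midterm"
);
partitionStatements.forEach((statement) => console.log(statement));
```

Calling the same helper with the extract_shortterm prefix and the months from June 2019 to November 2019 would cover the short-term data.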

The table is partitioned by yearmonth.

The final step is cleaning all the records extracted from the Amazon Redshift local tables:

Cristian Gavazzeni is a senior solution architect at Amazon Web Services. He has more than 20 years of experience as a pre-sales consultant focusing on Data Management, Infrastructure and Security. During his spare time he likes eating Japanese food and travelling abroad with only fly and drive bookings.

Francesco Marelli is a senior solutions architect at Amazon Web Services. He lived and worked in London for 10 years, and afterward worked in Italy, Switzerland, and other countries in EMEA. He specializes in the design and implementation of Analytics, Data Management, and Big Data systems, mainly for Enterprise and FSI customers. Francesco also has strong experience in systems integration and in the design and implementation of web applications. He loves sharing his professional knowledge, collecting vinyl records, and playing bass.

Post Syndicated from Биволъ original https://bivol.bg/council-of-europe_stoyan-tonchev_bivo-bg.html

Investigative journalist Stoyan Tonchev, a contributor to Bivol.bg and the publisher of Liberta.bg, is being subjected to systematic harassment by Bulgaria's prosecution service and police because of his journalistic work. The Council of Europe,…

Post Syndicated from Al MS original https://aws.amazon.com/blogs/big-data/run-apache-spark-3-0-workloads-1-7-times-faster-with-amazon-emr-runtime-for-apache-spark/

With Amazon EMR release 6.1.0, Amazon EMR runtime for Apache Spark is now available for Spark 3.0.0. EMR runtime for Apache Spark is a performance-optimized runtime for Apache Spark that is 100% API compatible with open-source Apache Spark.

In our benchmark performance tests using TPC-DS benchmark queries at 3 TB scale, we found EMR runtime for Apache Spark 3.0 provides a 1.7 times performance improvement on average, and up to 8 times improved performance for individual queries over open-source Apache Spark 3.0.0. With Amazon EMR 6.1.0, you can now run your Apache Spark 3.0 applications faster and cheaper without requiring any changes to your applications.

To evaluate the performance improvements, we used TPC-DS benchmark queries with 3 TB scale and ran them on a 6-node c4.8xlarge EMR cluster with data in Amazon Simple Storage Service (Amazon S3). We ran the tests with and without the EMR runtime for Apache Spark. The following two graphs compare the total aggregate runtime and geometric mean for all queries in the TPC-DS 3 TB query dataset between the Amazon EMR releases.

The following table shows the total runtime in seconds.

The following table shows the geometric mean of the runtime in seconds.

In our tests, all queries ran successfully on EMR clusters that used the EMR runtime for Apache Spark. However, when using Spark 3.0 without the EMR runtime, 34 out of the 104 benchmark queries failed due to SPARK-32663. To work around these issues, we disabled the spark.shuffle.readHostLocalDisk configuration. However, even after this change, queries 14a and 14b continued to fail. Therefore, we chose to exclude these queries from our benchmark comparison.

The per-query speedup on Amazon EMR 6.1 with and without EMR runtime is illustrated in the following chart. The horizontal axis shows each query in the TPC-DS 3 TB benchmark. The vertical axis shows the speedup of each query due to the EMR runtime. We found a 1.7 times performance improvement as measured by the geometric mean of the per-query speedups, with all queries showing a performance improvement with the EMR Runtime.
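For readers unfamiliar with the metric, the following sketch shows one common way to compute a geometric mean of per-query speedups, where each speedup is the baseline runtime divided by the optimized runtime. The runtimes in the example are made up for illustration and are not benchmark data; the function name is hypothetical.

```javascript
// Geometric mean of per-query speedups, where speedup = baseline runtime / optimized runtime.
// Computed in log space to avoid overflow when multiplying many ratios.
function geometricMeanSpeedup(baselineSeconds, optimizedSeconds) {
  if (baselineSeconds.length !== optimizedSeconds.length || baselineSeconds.length === 0) {
    throw new Error("Both arrays must be non-empty and of equal length");
  }
  const logSum = baselineSeconds.reduce(
    (sum, base, i) => sum + Math.log(base / optimizedSeconds[i]),
    0
  );
  return Math.exp(logSum / baselineSeconds.length);
}

// Made-up runtimes for three queries, not benchmark data.
const baselineRuntimes = [40, 90, 10];
const optimizedRuntimes = [20, 30, 10];
console.log(geometricMeanSpeedup(baselineRuntimes, optimizedRuntimes).toFixed(3));
```

In this toy example the speedups are 2, 3, and 1, so the geometric mean is the cube root of 6, about 1.82. Unlike an arithmetic mean, it is not dominated by a few queries with very large speedups.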

You can run your Apache Spark 3.0 workloads faster and cheaper without making any changes to your applications by using Amazon EMR 6.1. To keep up to date, subscribe to the Big Data blog’s RSS feed to learn about more great Apache Spark optimizations, configuration best practices, and tuning advice.

Al MS is a product manager for Amazon EMR at Amazon Web Services.

Peter Gvozdjak is a senior engineering manager for EMR at Amazon Web Services.

Post Syndicated from Margaret Zonay original https://blog.rapid7.com/2021/01/19/insightidr-2020-highlights-and-whats-ahead-in-2021/

As we kick off 2021 here at Rapid7, we wanted to take a minute to reflect on 2020, highlight some key InsightIDR product investments we don’t want you to miss, and take a look ahead at where our team sees detection and response going this year.

Whenever we engage with customers or industry professionals, one theme that we hear on repeat is complexity. It can often feel like the cards are stacked against security teams as environments sprawl and security needs outpace the number of experienced professionals we have to address them. This dynamic was further amplified by the pandemic over the past year. Our focus over the past 12 months has been on enabling teams to work smarter, get the most out of our software and services, and accelerate their security maturity as efficiently as possible. Here are some highlights from our journey over 2020:

In 2020, we made continuous enhancements to our Log Search feature to make it more efficient and customizable to customers’ needs. Now, you can:

For a look at the most up-to-date list of Log Search capabilities, check out our help documentation here.

With Rapid7’s lightweight Insight Network Sensor, customers can monitor, capture, and assess end-to-end network traffic across their physical and virtual environments (including AWS environments) with curated IDS alerts, plus DNS and DHCP data. For maximum visibility, customers can add on the network flow data module to further investigations, deepen forensic activities, and enable custom rule creation.

The real-time visibility provided by InsightIDR’s Network Traffic Analysis has been especially helpful for organizations working remotely over the past year. Many customers are building custom InsightIDR dashboards to improve real-time monitoring of activity within their networks and at the edge to maintain optimal security as teams work from home.

Learn about how to leverage NTA and more by checking out our top Network Traffic blogs of 2020:

InsightIDR’s latest add-on module, enhanced endpoint telemetry (EET), brings the enhanced endpoint data that’s currently used by Rapid7’s Managed Detection and Response (MDR) Services team in almost all of their investigations into InsightIDR.

Get a full picture of endpoint activity, create custom detections, and see the full scope of an attack with EET’s process start activity data in Log Search. These logs give visibility into all endpoint activity to tell a story around what triggered a particular detection and to help inform remediation efforts. As remote working has increased for many organizations, so has the number of remote endpoints security teams have to monitor—the level of detail provided by EET helps teams detect and proactively hunt for custom threats across their expanding environments.

Learn more about the benefits of EET in our blog post and how to get started in our help documentation.

Automation is critical for accelerating and streamlining incident response, especially as the threat landscape continues to evolve in 2021 and beyond. This is why we have built-in automation powered by InsightConnect, Rapid7’s Security Orchestration Automation and Response (SOAR) tool, at the heart of InsightIDR. SOC automation with InsightIDR and InsightConnect allows customers to auto-enrich alerts, customize alerting and escalation pathways, and auto-contain threats.

In 2020, we furthered the integration between InsightIDR and InsightConnect—in addition to kicking off workflows from User Behavior Analytics (UBA) alerts, joint customers can now trigger custom workflows to automatically initiate predefined actions each time a Custom Alert is triggered in InsightIDR.

Learn more about the benefits of leveraging SIEM and SOAR by checking out the blogs below:

Only Rapid7 MDR with Active Response can reduce attacker dwell time and save your team time and money with unrivaled response capabilities on both endpoint and user threats. Whether it’s a suspicious authentication while you’re buried in other security initiatives or an attacker executing malicious documents at 3 a.m., you can be confident that Rapid7 MDR is watching and responding to attacks in your environment.

With MDR Elite with Active Response, our team of SOC experts provides 24×7 end-to-end detection and response to immediately limit an attacker’s ability to execute, giving you and your team peace of mind that Rapid7 will take action to protect your business and return the time normally spent investigating and responding to threats back to your analysts.

At Rapid7, we’re grateful to have received multiple recognitions from analysts and customers alike for our Detection and Response portfolio throughout 2020, including:

We’re so thankful to our customers for your continued partnership and feedback throughout the years. As we move into 2021, we’re excited to continue to invest in driving effective and efficient detection and response for teams.

As we move forward in 2021, it’s clear that things aren’t going to jump back to “normal” anytime soon. Many companies continue to work remotely, increasing the already present need for security tools that can keep teams safe and secure.

In 2020, a big theme for InsightIDR was giving teams advanced visibility into their environments. What’s ahead in 2021? More capabilities that help security teams do their jobs faster and more effectively.

Sam Adams, VP of Engineering for Detection and Response at Rapid7 reflected, “In 2020, InsightIDR added a breadth of new ways to detect attacks in your environment, from endpoint to network to cloud. In 2021, we want to add depth to all of these capabilities, by allowing our customers fine-grained tuning and customization of our analytics engine and an even more robust set of tools to investigate alerts faster than ever before.”

When speaking about the detection and response landscape overall, Jeffrey Gardner, a former healthcare company Information Security Officer and recently appointed Practice Advisor for Detection and Response at Rapid7, said, “I think the broader detection industry is at this place where there’s an overabundance of data—security professionals have this feeling of ‘I need these log sources and I want this telemetry collected,’ but most solutions don’t make it easy to pull actionable intelligence from this data. I call out ‘actionable’ because most of the products provide a lot of intel but really leave the ‘what should I do next?’ completely up to the end user without guidance.”

InsightIDR targets this specific issue by providing teams with visibility across their entire environment while simultaneously enabling action from within the solution with curated built-in expertise through out-of-the-box detections, pre-built automation, and high-context investigation and response tools.

When speaking about projected 2021 cybersecurity trends, Bob Rudis, Chief Data Scientist at Rapid7, noted, “We can be fairly certain ransomware tactics and techniques will continue to be commoditized and industrialized, and criminals will continue to exploit organizations that are strapped for resources and distracted by attempting to survive in these chaotic times.”

To stay ahead of these new attacker tactics and techniques, visibility into logs, network traffic, and endpoint data will be crucial. These data sources contain the strongest and earliest indicators of potential compromise (as well as form the three pillars of Gartner’s SOC Visibility Triad). Having all of this critical data in a single solution like InsightIDR will help teams work more efficiently and effectively, as well as stay on top of potential new threats and tactics.

See more of Rapid7’s 2021 cybersecurity predictions in our recent blog post here, and keep an eye on our blog and release notes as we continue to highlight the latest in detection and response at Rapid7 throughout the year.

Post Syndicated from original https://lwn.net/Articles/842964/rss

SciPy is a collection of Python libraries for scientific and numerical computing. Nearly every serious user of Python for scientific research uses SciPy. Since Python is popular across all fields of science, and continues to be a prominent language in some areas of research, such as data science, SciPy has a large user base. On New Year’s Eve, SciPy announced version 1.6 of the scipy library, which is the central component in the SciPy stack. That release gives us a good opportunity to delve into this software and give some examples of its use.

Post Syndicated from Geographics original https://www.youtube.com/watch?v=0ZzK0P2mEm4

Post Syndicated from original https://lwn.net/Articles/843142/rss

Security updates have been issued by Debian (gst-plugins-bad1.0), Fedora (flatpak), Red Hat (dnsmasq, kernel, kpatch-patch, libpq, linux-firmware, postgresql:10, postgresql:9.6, and thunderbird), SUSE (dnsmasq), and Ubuntu (dnsmasq, htmldoc, log4net, and pillow).

Post Syndicated from Marius Cealera original https://aws.amazon.com/blogs/architecture/field-notes-improving-call-center-experiences-with-iterative-bot-training-using-amazon-connect-and-amazon-lex/

This post was co-written by Abdullah Sahin, senior technology architect at Accenture, and Muhammad Qasim, software engineer at Accenture.

Organizations deploying call-center chat bots are interested in evolving their solutions continuously, in response to changing customer demands. When developing a smart chat bot, some requests can be predicted (for example, following a new product launch or a new marketing campaign). There are, however, instances where this is not possible (following market shifts, natural disasters, etc.).

While voice and chat bots are becoming more and more ubiquitous, keeping the bots up-to-date with the ever-changing demands remains a challenge. It is clear that a build>deploy>forget approach quickly leads to outdated AI that lacks the ability to adapt to dynamic customer requirements.

Call-center solutions that create ongoing feedback mechanisms between incoming calls or chat messages and the chatbot’s AI allow for a programmatic approach to predicting and catering to a customer’s intent.

This is achieved by doing the following:

This post provides a technical overview of one of Accenture’s Advanced Customer Engagement (ACE+) solutions, explaining how it integrates multiple AWS services to continuously and quickly improve chatbots and stay ahead of customer demands.

Call center solution architects and administrators can use this architecture as a starting point for an iterative bot improvement solution. The goal is to lead to an increase in call deflection and drive better customer experiences.

The goal of the solution is to extract missed intents and utterances from a conversation and present them to the call center agent at the end of a conversation, as part of the after-work flow. A simple UI was designed for the agent to select the most relevant missed phrases and forward them to an Analytics/Operations Team for final approval.

Figure 1 – Architecture Diagram

Amazon Connect serves as the contact center platform and handles incoming calls, manages the IVR flows and the escalations to the human agent. Amazon Connect is also used to gather call metadata, call analytics and handle call center user management. It is the platform from which other AWS services are called: Amazon Lex, Amazon DynamoDB and AWS Lambda.

Lex is the AI service used to build the bot. Lambda serves as the main integration tool and is used to push bot transcripts to DynamoDB, deploy updates to Lex and to populate the agent dashboard which is used to flag relevant intents missed by the bot. A generic CRM app is used to integrate the agent client and provide a single, integrated, dashboard. For example, this addition to the agent’s UI, used to review intents, could be implemented as a custom page in Salesforce (Figure 2).

Figure 2 – Agent feedback dashboard in Salesforce. The section allows the agent to select parts of the conversation that should be captured as intents by the bot.

A separate, stand-alone, dashboard is used by an Analytics and Operations Team to approve the new intents, which triggers the bot update process.

The typical use case for this solution (Figure 3) shows how missing intents in the bot configuration are captured from customer conversations. These intents are then validated and used to automatically build and deploy an updated version of a chatbot. During the process, the following steps are performed:

Figure 3 – Use case: the bot is unable to resolve the first call (bottom flow). Post-call analysis results in a new version of the bot being built and deployed. The new bot is able to handle the issue in subsequent calls (top flow)

During the first call (bottom flow) the bot fails to fulfil the request and the customer is escalated to a Live Agent. The agent resolves the query and, post call, analyzes the transcript between the chatbot and the customer, identifies conversation parts that the chatbot should have understood and sends a ‘missed intent/utterance’ report to the Analytics/Ops Team. The team approves and triggers the process that updates the bot.

For the second call, the customer asks the same question. This time, the (trained) bot is able to answer the query and end the conversation.

Ideally, the post-call analysis should be performed, at least in part, by the agent handling the call. Involving the agent in the process is critical for delivering quality results. Any given conversation can have multiple missed intents, some of them irrelevant when looking to generalize a customer’s question.

A call center agent is in the best position to judge what is or is not useful and mark the missed intents to be used for bot training. This is the important logical triage step. Of course, this will result in the occasional increase in the average handling time (AHT). This should be seen as a time investment with the potential to reduce future call times and increase deflection rates.

One alternative to this setup would be to have a dedicated analytics team review the conversations, offloading this work from the agent. This approach avoids the increase in AHT, but also introduces delay and, possibly, inaccuracies in the feedback loop.

The approval from the Analytics/Ops Team is a sign-off on the agent’s work and the trigger for the bot building process.

The following section focuses on the sequence required to programmatically update intents in existing Lex bots. It assumes a Connect instance is configured and a Lex bot is already integrated with it. Navigate to this page for more information on adding Lex to your Connect flows.

It also does not cover the CRM application, where the conversation transcript is displayed and presented to the agent for intent selection. The implementation details can vary significantly depending on the CRM solution used. Conceptually, most solutions will follow the architecture presented in Figure 1: store the conversation data in a database (DynamoDB here) and expose it through an API (API Gateway here) to be consumed by the CRM application.

Lex bot update sequence

The core logic for updating the bot is contained in a Lambda function that triggers the Lex update. This adds new utterances to an existing bot, builds it and then publishes a new version of the bot. The Lambda function is associated with an API Gateway endpoint which is called with the following body:

{
  "intent": "INTENT_NAME",
  "utterances": ["UTTERANCE_TO_ADD_1", "UTTERANCE_TO_ADD_2" …]
}
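As a minimal sketch of how the Lambda function might handle this body, the helpers below validate the request and merge the new utterances into an intent's existing sample utterances without creating duplicates. The sketch assumes the intent object carries the `sampleUtterances` string array returned by the Lex (V1) getIntent API. The function names `parseUpdateRequest` and `mergeUtterances` are hypothetical and are not part of the original solution.

```javascript
// Hypothetical helpers; names are illustrative, not from the original solution.

// Validate the API Gateway request body and return its fields.
function parseUpdateRequest(body) {
  const payload = typeof body === "string" ? JSON.parse(body) : body;
  if (!payload || typeof payload.intent !== "string" || payload.intent.length === 0) {
    throw new Error("Request must contain a non-empty 'intent' name");
  }
  if (!Array.isArray(payload.utterances) || payload.utterances.length === 0) {
    throw new Error("Request must contain a non-empty 'utterances' array");
  }
  return { intent: payload.intent, utterances: payload.utterances };
}

// Return a copy of the intent with the new utterances appended,
// skipping blanks and case-insensitive duplicates.
function mergeUtterances(intent, newUtterances) {
  const merged = [...(intent.sampleUtterances || [])];
  const seen = new Set(merged.map((u) => u.trim().toLowerCase()));
  for (const utterance of newUtterances) {
    const cleaned = String(utterance).trim();
    const key = cleaned.toLowerCase();
    if (cleaned.length > 0 && !seen.has(key)) {
      seen.add(key);
      merged.push(cleaned);
    }
  }
  return { ...intent, sampleUtterances: merged };
}
```

The merged object could then be handed to the intent update call. Deduplicating first keeps repeated agent reports from adding the same utterance to the intent more than once.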

Steps to follow:

Figure 4 – Representation of Lex Update Sequence

7. The updated bot information is passed to the putBot API to update Lex, and processBehavior is set to “BUILD” to trigger a build. The following code snippet shows how this would be done in JavaScript:

// Update the bot definition and trigger a build
const updatedBot = await lexModel
  .putBot({
    ...bot,
    processBehavior: "BUILD"
  })
  .promise()

9. The last step is to publish the bot. For this, we fetch the bot alias information and then call the putBotAlias API.

// Fetch the current alias to obtain its checksum
const oldBotAlias = await lexModel
  .getBotAlias({
    name: config.botAlias,
    botName: updatedBot.name
  })
  .promise()

// Point the alias at the new bot version; the checksum identifies the alias revision being updated
return lexModel
  .putBotAlias({
    name: config.botAlias,
    botName: updatedBot.name,
    botVersion: updatedBot.version,
    checksum: oldBotAlias.checksum
  })
  .promise()

In this post, we showed how a programmatic bot improvement process can be implemented around Amazon Lex and Amazon Connect. Continuously improving call center bots is a fundamental requirement for increased customer satisfaction. The feedback loop, agent validation and automated bot deployment pipeline should be considered integral parts of any chatbot implementation.

Finally, the concept of a feedback-loop is not specific to call-center chatbots. The idea of adding an iterative improvement process in the bot lifecycle can also be applied in other areas where chatbots are used.

Accelerating Innovation with the Accenture AWS Business Group (AABG)

By working with the Accenture AWS Business Group (AABG), you can learn from the resources, technical expertise, and industry knowledge of two leading innovators, helping you accelerate the pace of innovation to deliver disruptive products and services. The AABG helps customers ideate and innovate cloud solutions through rapid prototype development.

Connect with our team at [email protected] to learn and accelerate how to use machine learning in your products and services.

Post Syndicated from James Beswick original https://aws.amazon.com/blogs/compute/operating-lambda-design-principles-in-event-driven-architectures-part-2/

In the Operating Lambda series, I cover important topics for developers, architects, and systems administrators who are managing AWS Lambda-based applications. This three-part section discusses event-driven architectures and how these relate to Lambda-based applications.

Part 1 covers the benefits of the event-driven paradigm and how it can improve throughput, scale and extensibility. This post explains some of the design principles and best practices that can help developers gain the benefits of building Lambda-based applications.

Many of the best practices that apply to software development and distributed systems also apply to serverless application development. The broad principles are consistent with the Well-Architected Framework. The overall goal is to develop workloads that are:

When you develop Lambda-based applications, there are several important design principles that can help you build workloads that meet these goals. You may not apply every principle to every architecture and you have considerable flexibility in how you approach building with Lambda. However, they should guide you in general architecture decisions.

Serverless applications usually comprise several AWS services, integrated with custom code run in Lambda functions. While Lambda can be integrated with most AWS services, the services most commonly used in serverless applications are:

| Category | AWS service |
| --- | --- |
| Compute | AWS Lambda |
| Data storage | Amazon S3, Amazon DynamoDB, Amazon RDS |
| API | Amazon API Gateway |
| Application integration | Amazon EventBridge, Amazon SNS, Amazon SQS |
| Orchestration | AWS Step Functions |
| Streaming data and analytics | Amazon Kinesis Data Firehose |

There are many well-established, common patterns in distributed architectures that you can build yourself or implement using AWS services. For most customers, there is little commercial value in investing time to develop these patterns from scratch. When your application needs one of these patterns, use the corresponding AWS service:

| Pattern | AWS service |
| --- | --- |
| Queue | Amazon SQS |
| Event bus | Amazon EventBridge |
| Publish/subscribe (fan-out) | Amazon SNS |
| Orchestration | AWS Step Functions |
| API | Amazon API Gateway |
| Event streams | Amazon Kinesis |

These services are designed to integrate with Lambda and you can use infrastructure as code (IaC) to create and discard resources in the services. You can use any of these services via the AWS SDK without needing to install applications or configure servers. Becoming proficient with using these services via code in your Lambda functions is an important step to producing well-designed serverless applications.

The Lambda service limits your access to the underlying operating systems, hypervisors, and hardware running your Lambda functions. The service continuously improves and changes infrastructure to add features, reduce cost and make the service more performant. Your code should assume no knowledge of how Lambda is architected and assume no hardware affinity.

Similarly, the integration of other services with Lambda is managed by AWS with only a small number of configuration options exposed. For example, when API Gateway and Lambda interact, there is no concept of load balancing available since it is entirely managed by the services. You also have no direct control over which Availability Zones the services use when invoking functions at any point in time, or how and when Lambda execution environments are scaled up or destroyed.

This abstraction allows you to focus on the integration aspects of your application, the flow of data, and the business logic where your workload provides value to your end users. Allowing the services to manage the underlying mechanics helps you develop applications more quickly with less custom code to maintain.

When building Lambda functions, you should assume that the environment exists only for a single invocation. The function should initialize any required state when it is first started – for example, fetching a shopping cart from a DynamoDB table. It should commit any permanent data changes before exiting to a durable store such as S3, DynamoDB, or SQS. It should not rely on any existing data structures or temporary files, or any internal state that would be managed by multiple invocations (such as counters or other calculated, aggregate values).

Lambda provides an initializer before the handler where you can initialize database connections, libraries, and other resources. Since execution environments are reused where possible to improve performance, you can amortize the time taken to initialize these resources over multiple invocations. However, you should not store any variables or data used in the function within this global scope.
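The split between initialization code and handler code can be sketched as follows. This is an illustrative example only: `createClient` is a hypothetical stand-in for an expensive resource such as a database client, and `initCount` exists purely to show that initialization runs once per execution environment rather than once per invocation.

```javascript
// Hypothetical stand-in for an expensive resource such as a database client.
let initCount = 0;
function createClient() {
  initCount += 1;
  return { query: async (id) => ({ id, status: "ok" }) };
}

// Initialization code: runs once per execution environment, outside the handler.
const client = createClient();

// Handler code: runs on every invocation. All per-request data stays in
// local variables, so nothing leaks from one invocation to the next.
// In a deployed function this would be exported, for example as exports.handler.
const handler = async (event) => {
  const result = await client.query(event.orderId);
  return { statusCode: 200, body: JSON.stringify(result) };
};
```

Calling `handler` twice in the same environment reuses the same `client`, which is the amortization described above, while each request's data lives only inside the handler.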

Most architectures should prefer many, shorter functions over fewer, larger ones. Making Lambda functions highly specialized for your workload means that they are concise and generally result in shorter executions. The purpose of each function should be to handle the event passed into the function, with no knowledge or expectations of the overall workflow or volume of transactions. This makes the function agnostic to the source of the event with minimal coupling to other services.

Any global-scope constants that change infrequently should be implemented as environment variables to allow updates without deployments. Any secrets or sensitive information should be stored in AWS Systems Manager Parameter Store or AWS Secrets Manager and loaded by the function. Since these resources are account-specific, this allows you to create build pipelines across multiple accounts. The pipelines load the appropriate secrets per environment, without exposing these to developers or requiring any code changes.

Many traditional systems are designed to run periodically and process batches of transactions that have built up over time. For example, a banking application may run every hour to process ATM transactions into central ledgers. In Lambda-based applications, the custom processing should be triggered by every event, allowing the service to scale up concurrency as needed, to provide near-real time processing of transactions.

While you can run cron tasks in serverless applications by using scheduled expressions for rules in Amazon EventBridge, these should be used sparingly or as a last resort. In any scheduled task that processes a batch, there is the potential for the volume of transactions to grow beyond what can be processed within the 15-minute Lambda timeout. If the limitations of external systems force you to use a scheduler, you should generally schedule for the shortest reasonable recurring time period.

For example, it’s not best practice to use a batch process that triggers a Lambda function to fetch a list of new S3 objects. This is because the service may receive more new objects in between batches than can be processed within a 15-minute Lambda function.

Instead, the Lambda function should be invoked by the S3 service each time a new object is put into the S3 bucket. This approach is significantly more scalable and also invokes processing in near-real time.
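A minimal sketch of such an event-driven handler is shown below. It reads the bucket name and object key from each record of the S3 event notification; keys arrive URL-encoded, with spaces encoded as "+". The `processObject` function is a hypothetical placeholder for the actual business logic.

```javascript
// Extract the bucket and key from each record of an S3 event notification.
// Object keys arrive URL-encoded, with spaces encoded as "+".
function parseS3Event(event) {
  return (event.Records || []).map((record) => ({
    bucket: record.s3.bucket.name,
    key: decodeURIComponent(record.s3.object.key.replace(/\+/g, " ")),
  }));
}

// Hypothetical placeholder for the business logic applied to each object.
async function processObject({ bucket, key }) {
  return `processed s3://${bucket}/${key}`;
}

// Each invocation handles only the objects named in its own event.
const handler = async (event) =>
  Promise.all(parseS3Event(event).map(processObject));
```

Because each invocation only sees the objects in its own event, the amount of work per invocation stays small regardless of how many objects arrive overall.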

Workflows that involve branching logic, different types of failure models and retry logic typically use an orchestrator to keep track of the state of the overall execution. Avoid using Lambda functions for this purpose, since it results in tightly coupled groups of functions and services and complex code handling routing and exceptions.

With AWS Step Functions, you use state machines to manage orchestration. This extracts the error handling, routing, and branching logic from your code, replacing it with state machines declared using JSON. Apart from making workflows more robust and observable, it allows you to add versioning to workflows and make the state machine a codified resource that you can add to a code repository.
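To illustrate, the sketch below builds a small state machine definition in Amazon States Language as a JavaScript object. The workflow, state names, and Lambda ARNs are hypothetical placeholders. It shows retry, error routing, and branching declared in the definition rather than written in function code, plus a small helper (also hypothetical) that checks every transition points at a defined state.

```javascript
// Hypothetical order workflow in Amazon States Language (ASL).
// State names and Lambda ARNs are placeholders for illustration.
const lambdaArn = (name) => `arn:aws:lambda:REGION:ACCOUNT_ID:function:${name}`;

const definition = {
  Comment: "Illustrative sketch: retry, error routing, and branching",
  StartAt: "ChargeCard",
  States: {
    ChargeCard: {
      Type: "Task",
      Resource: lambdaArn("charge-card"),
      // Retry logic lives in the state machine, not in function code.
      Retry: [
        { ErrorEquals: ["States.TaskFailed"], IntervalSeconds: 2, MaxAttempts: 3, BackoffRate: 2.0 },
      ],
      // Any remaining error is routed to a failure-handling state.
      Catch: [{ ErrorEquals: ["States.ALL"], Next: "NotifyFailure" }],
      Next: "CheckResult",
    },
    CheckResult: {
      Type: "Choice",
      Choices: [{ Variable: "$.status", StringEquals: "APPROVED", Next: "ShipOrder" }],
      Default: "NotifyFailure",
    },
    ShipOrder: { Type: "Task", Resource: lambdaArn("ship-order"), End: true },
    NotifyFailure: { Type: "Task", Resource: lambdaArn("notify-failure"), End: true },
  },
};

// Return every transition target that does not name a defined state.
function findDanglingTransitions(def) {
  const names = new Set(Object.keys(def.States));
  const targets = [def.StartAt];
  for (const state of Object.values(def.States)) {
    if (state.Next) targets.push(state.Next);
    if (state.Default) targets.push(state.Default);
    for (const rule of [...(state.Choices || []), ...(state.Catch || [])]) {
      targets.push(rule.Next);
    }
  }
  return targets.filter((target) => !names.has(target));
}

// The JSON form is what would be stored in a code repository.
const definitionJson = JSON.stringify(definition, null, 2);
```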

It’s common for simpler workflows in Lambda functions to become more complex over time, and for developers to use a Lambda function to orchestrate the flow. When operating a production serverless application, it’s important to identify when this is happening, so you can migrate this logic to a state machine.

AWS serverless services, including Lambda, are fault-tolerant and designed to handle failures. In the case of Lambda, if a service invokes a Lambda function and there is a service disruption, Lambda invokes your function in a different Availability Zone. If your function throws an error, the Lambda service retries your function.

Since the same event may be received more than once, functions should be designed to be idempotent. This means that receiving the same event multiple times does not change the result beyond the first time the event was received.

For example, if a credit card transaction is attempted twice due to a retry, the Lambda function should process the payment on the first receipt. On the second retry, either the Lambda function should discard the event or the downstream service it uses should be idempotent.

A Lambda function implements idempotency typically by using a DynamoDB table to track recently processed identifiers to determine if the transaction has been handled previously. The DynamoDB table usually implements a Time To Live (TTL) value to expire items to limit the storage space used.
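The pattern can be sketched as below. To keep the example self-contained, an in-memory `Map` stands in for the DynamoDB table and its TTL attribute. This is an assumption for illustration only: a real function would need a durable, shared store and an atomic conditional write, since in-memory state is not shared across execution environments. The names `IdempotencyStore` and `handlePayment` are hypothetical.

```javascript
// In-memory stand-in for a DynamoDB table with a TTL attribute.
// Illustration only: a Map is not durable or shared between environments.
class IdempotencyStore {
  constructor(ttlSeconds) {
    this.ttlMs = ttlSeconds * 1000;
    this.items = new Map(); // transaction id -> expiry timestamp (ms)
  }

  // Record the id if it is absent or expired.
  // Returns true when recorded now, false when it was already present.
  putIfAbsent(id, now = Date.now()) {
    const expiresAt = this.items.get(id);
    if (expiresAt !== undefined && expiresAt > now) {
      return false;
    }
    this.items.set(id, now + this.ttlMs);
    return true;
  }
}

const store = new IdempotencyStore(3600); // remember ids for one hour
const charges = []; // hypothetical side effect: payments actually taken

// Process a payment event once; repeated deliveries are discarded.
function handlePayment(event, now = Date.now()) {
  if (!store.putIfAbsent(event.transactionId, now)) {
    return { status: "duplicate_ignored" };
  }
  charges.push(event.transactionId);
  return { status: "processed" };
}
```

A retried event with the same `transactionId` returns `duplicate_ignored` and takes no second payment, while the expiry keeps the set of tracked identifiers from growing without bound.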

For failures within the custom code of a Lambda function, the service offers a number of features to help preserve and retry the event, and provide monitoring to capture that the failure has occurred. Using these approaches can help you develop workloads that are resilient to failure and improve the durability of events as they are processed by Lambda functions.

This post discusses the design principles that can help you develop well-architected serverless applications. I explain why using services instead of code can help improve your application’s agility and scalability. I also show how statelessness and function design contribute to good application architecture. I cover how using events instead of batches helps serverless development, and how to plan for retries and failures in your Lambda-based applications.

Part 3 of this series will look at common anti-patterns in event-driven architectures and how to avoid building these into your microservices.

For more serverless learning resources, visit Serverless Land.

Post Syndicated from Matt Granger original https://www.youtube.com/watch?v=Ei-4rZiAL3Y

Post Syndicated from Tatjana Dunce original https://blog.zabbix.com/saf-products-integration-into-zabbix/12978/

Top of the line point-to-point microwave equipment manufacturer SAF Tehnika has partnered with Zabbix to provide NMS capabilities to its end customers. SAF Tehnika appreciates Zabbix’s customizability, scalability, ease of template design, and SAF products integration.

I. SAF Tehnika (1:14)

II. SAF point-to-point microwave systems (3:20)

III. SAF product lines (5:37)

√ Integra (5:54)

√ PhoeniX-G2 (6:41)

IV. SAF services (7:21)

V. SAF partnership with Zabbix (8:48)

√ Zabbix templates for SAF equipment (10:50)

√ Zabbix Maps view for Phoenix G2 (15:00)

VI. Zabbix services provided by SAF (17:56)

VII. Questions & Answers (20:00)

SAF Tehnika, like Zabbix, comes from a really small country: Latvia.

SAF Tehnika:

✓ has been around for over 20 years,

✓ has been profitable and has a debt-free balance sheet,

✓ is present in 130+ countries,

✓ has manufacturing facilities in the European Union,

✓ is ISO 9001 certified,

✓ has been a Zabbix Certified Partner since August 2020,

✓ is publicly traded on NASDAQ Riga Stock Exchange,

✓ has flexible R&D, and is able to provide custom solutions based on customer requirements.

SAF Tehnika is primarily manufacturing:

SAF Tehnika main product groups

Point-to-point microwave systems are an alternative to a fiber line. Instead of a fiber line, we have two radio systems with the antenna installed on two towers. The distance between those towers could be anywhere from a few km up to 50 or even 100 km. The data is transmitted from one point to another wirelessly.

SAF Tehnika point-to-point MW system technology provides:

√ WISPs,

√ TV and broadcasting (No.1 in the USA),

√ public safety,

√ utilities & mining,

√ enterprise networks,

√ local government & military,

√ low-latency/HFT (No.1 globally).

The primary PTP microwave product series manufactured by SAF Tehnika are Integra and Phoenix G2.

SAF Tehnika main radio products

Integra is a full-outdoor radio, which can be attached directly to the antenna, so there is nothing indoors besides the power supply.

Integra-E — wireless fiber solution specifically tailored for dense urban deployment:

Integra-X is a powerful dual-core system for network backbone deployment. It incorporates two radios and two modem chains in a single enclosure, which allows the system to operate with built-in 2+0 XPIC and reach a data transmission capacity of up to 2.2 gigabits per second.

The PhoeniX G2 product line can be deployed either as a split-mount solution, with the modem installed indoors and the radio outdoors, or as a full-indoor solution. For instance, the broadcast market mostly uses full-indoor solutions, as broadcasters prefer to keep all the equipment indoors and run a long elliptical line up to the antenna. The Phoenix G2 product line features native ASI transmission in parallel with IP traffic, a crucial requirement of our broadcast customers.

SAF has also been offering different sets of services:

✓ product training.

✓ link planning.

✓ technical support.

✓ staging and configuration: all the equipment is labeled and configured before it reaches the customer.

✓ FCC coordination — recently added to the SAF portfolio and offered only to the customers in the USA. This provides an opportunity to save time on link planning, FCC coordination, pre-configuration, and hardware purchase from the one-stop-shop – SAF Tehnika.

✓ Zabbix deployment and support.

Before partnering with Zabbix, SAF Tehnika had developed its own network management system and used it for many years. Unfortunately, this software was limited to SAF products. Adding other vendors’ products was a difficult and complicated process.

More and more SAF customers were inquiring about the possibility of adding other vendors’ products to the network management system. That is where Zabbix came in handy, as besides monitoring SAF products, Zabbix can also monitor other vendors’ products just by adding appropriate templates.

Zabbix is an open-source, advanced and robust platform with high customizability and scalability – there are virtually no limits to the number of devices Zabbix can monitor. I am confident our customers will appreciate all of these benefits and enjoy the ability to add SAF and other vendor products to the list of monitored devices.

Finally, SAF Tehnika and Zabbix are located in the same small town, so the partnership was easy and natural.

Following training at Zabbix, SAF engineers obtained the certified specialist status and developed Zabbix SNMP-based templates for main product lines:

SAF main product line templates are available free of charge to all SAF customers on the SAF webpage (registration required): https://www.saftehnika.com/en/downloads

Users proficient in Linux and familiar with Zabbix can definitely install and deploy these templates themselves. Otherwise, SAF specialists are ready to assist in the deployment and integration of Zabbix templates and tuning of the required parameters.

We have an Integra-X link Zabbix dashboard shown as an example below. As Integra-X is a dual-core radio, we provide the monitoring parameters for two radios in a single enclosure.

Zabbix dashboard for Integra-X link

At the top, we display the main health parameters of the link: the current received signal level, the MSE (the so-called noise level), the transmit power, and the IP address. Together they give a small summary of the link.

On the left, we display the parameters of the radio and the graphs for the last couple of minutes — the live graphs of the received signal level and MSE, the noise level of the RF link.

On the right, we have the same parameters for the remote side. In the middle, we have added a few parameters, which should be monitored, such as CPU load percentage, the current traffic over the link, and diagnostic parameters, such as the temperature of each of the modems.

At the bottom, we have added the alarm widget. In this example, an alarm for a received signal level that is too low is shown. The alarms are colored by severity: red alarms indicate disaster-level issues, and blue alarms are informational.

From this dashboard, the customers are able to estimate the current status of the link and any issues that have appeared in the past. Note that Zabbix graphs can be easily customized to display the widgets or graphs of customer choice.

Zabbix Maps view for Phoenix G2 1+1 system

In the map, our full-indoor Phoenix G2 system is displayed in duplicate, as this is a 1+1 protected link. Each of the IDU, ASI module, and radio module is protected by the second respective module.

Zabbix allows for naming each of these modules and for monitoring every module’s performance individually. In this example, the ASI module is colored in red as one of the ASI ports has lost the connection, while the radio unit’s red color shows that the received signal is lower than expected.

Zabbix dashboard for Phoenix G2 1+1 system

Besides the maps view, the dashboard for the Phoenix G2 1+1 system shows historical data such as the alarm log and graphs. Data in red indicates that an issue has not been cleared yet. Data in green indicates that an issue was resolved, for instance, that a low signal level was restored after going down for a short period of time.

In the middle we see a summary graph of all four radios’ performance — two on the local side and two on the remote side. Here, we are monitoring the most important parameters — the received signal level and MSE i.e., the noise level.

The graph at the bottom is important for broadcast customers as the majority of them transmit ASI traffic besides Ethernet and IP traffic. Here they’re able to monitor how much traffic was going through this link in the past.

Since SAF Tehnika has experienced Zabbix-certified specialists, who have developed these multiple templates, we are ready to provide Zabbix-related services to our end customers, such as:

NOTE. SAF Zabbix support services are limited to SAF products.

SAF Tehnika is ready and eager to provide Zabbix related services to our customers. If you already have a SAF network and would be willing to integrate it into Zabbix or plan to deploy a new SAF network integrated with Zabbix, you can contact our offices.

SAF contact details

Question. You told us that you provide Zabbix for your customers and create templates to pass to them, and so on. But do you use Zabbix in your own environment, for instance, in your offices, to monitor your own infrastructure?

Answer. SAF has been using Zabbix for almost 10 years and we use it to monitor our internal infrastructure. Currently, SAF has three separate Zabbix networks: one for SAF IT system monitoring, the other for Kubernetes system monitoring (Aranet Cloud services), and a separate Zabbix server for testing purposes, where we are able to test SAF equipment as well as experiment with Zabbix server deployments, templates, etc.

Question. You have passed the specialist courses. Do you have any plans on becoming certified professionals?

Answer. Our specialists are definitely interested in Zabbix certified professionals’ courses. We will make the decision about that based on the revenue Zabbix will bring us and interest of our customers.

Question. You have already provided a couple of templates for Zabbix and for your customers. Do you have any interesting templates you are working on? Do you have plans to create or upgrade some existing templates?

Answer. So far, we have released the templates for the main product lines, Integra and Phoenix G2. We have a few product lines that are more specialized, such as low-latency products, and some older products, such as CFIP Lumina. If any of our customers are interested in integrating these older products or low-latency products, we might create more templates.

Question. Do you plan to refine the templates to make them a part of the Zabbix out-of-the-box solution?

Answer. If Zabbix is going to approve our templates to make them a part of the out-of-the-box solution, it will benefit our customers in using and monitoring our products. We’ll be delighted to provide the templates for this purpose.

Post Syndicated from The Atlantic original https://www.youtube.com/watch?v=voQ4MSpX9Dg

Post Syndicated from Bruce Schneier original https://www.schneier.com/blog/archives/2021/01/injecting-a-backdoor-into-solarwinds-orion.html

CrowdStrike is reporting on a sophisticated piece of malware that was able to inject malware into the SolarWinds build process:

Key Points

- SUNSPOT is StellarParticle’s malware used to insert the SUNBURST backdoor into software builds of the SolarWinds Orion IT management product.

- SUNSPOT monitors running processes for those involved in compilation of the Orion product and replaces one of the source files to include the SUNBURST backdoor code.

- Several safeguards were added to SUNSPOT to avoid the Orion builds from failing, potentially alerting developers to the adversary’s presence.

Analysis of a SolarWinds software build server provided insights into how the process was hijacked by StellarParticle in order to insert SUNBURST into the update packages. The design of SUNSPOT suggests StellarParticle developers invested a lot of effort to ensure the code was properly inserted and remained undetected, and prioritized operational security to avoid revealing their presence in the build environment to SolarWinds developers.

This, of course, reminds many of us of Ken Thompson’s thought experiment from his 1984 Turing Award lecture, “Reflections on Trusting Trust.” In that talk, he suggested that a malicious C compiler might add a backdoor into programs it compiles.

The moral is obvious. You can’t trust code that you did not totally create yourself. (Especially code from companies that employ people like me.) No amount of source-level verification or scrutiny will protect you from using untrusted code. In demonstrating the possibility of this kind of attack, I picked on the C compiler. I could have picked on any program-handling program such as an assembler, a loader, or even hardware microcode. As the level of program gets lower, these bugs will be harder and harder to detect. A well-installed microcode bug will be almost impossible to detect.

That’s all still true today.

Post Syndicated from Ashley Whittaker original https://www.raspberrypi.org/blog/raspberry-pi-lego-sorter/

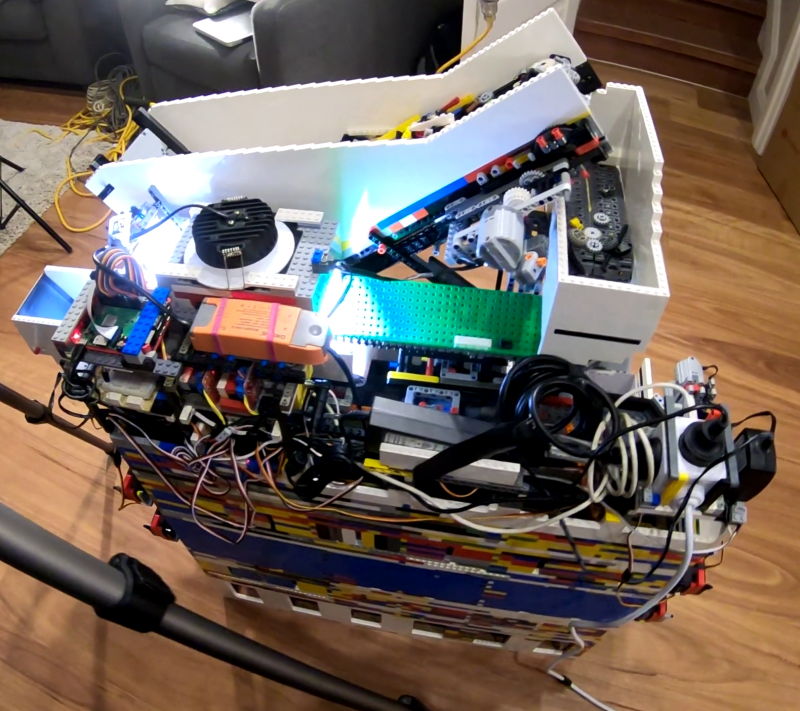

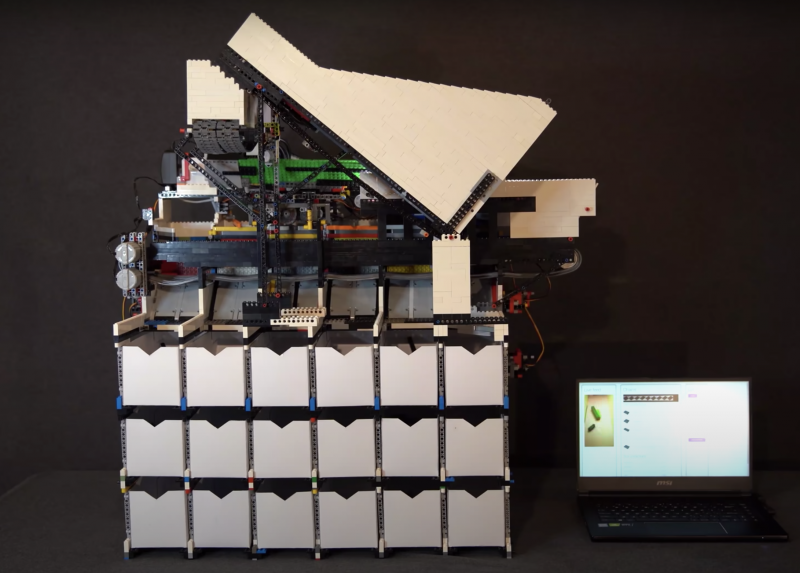

Raspberry Pi is at the heart of this AI–powered, automated sorting machine that is capable of recognising and sorting any LEGO brick.

And its maker Daniel West believes it to be the first of its kind in the world!

This mega-machine was two years in the making and is a LEGO creation itself, built from over 10,000 LEGO bricks.

It can sort any LEGO brick you place in its input bucket into one of 18 output buckets, at the rate of one brick every two seconds.

While Daniel was inspired by previous LEGO sorters, his creation is a huge step up from them: it can recognise absolutely every LEGO brick ever created, even bricks it has never seen before. Hence the ‘universal’ in the name ‘universal LEGO sorting machine’.

The artificial intelligence algorithm behind the LEGO sorting is a convolutional neural network, the go-to for image classification.

What makes Daniel’s project a ‘world first’ is that he trained his classifier using 3D model images of LEGO bricks, which is how the machine can classify absolutely any LEGO brick it’s faced with, even if it has never seen it in real life before.

Daniel has made a whole extra video (above) explaining how the AI in this project works. He shouts out all the open source software he used to run the Raspberry Pi Camera Module and access 3D training images etc. at this point in the video.

Daniel needed the input bucket to carefully pick out a single LEGO brick from the mass he chucks in at once.

This is achieved with a primary and secondary belt slowly pushing parts onto a vibration plate. The vibration plate uses a super fast LEGO motor to shake the bricks around so they aren’t sitting on top of each other when they reach the scanner.

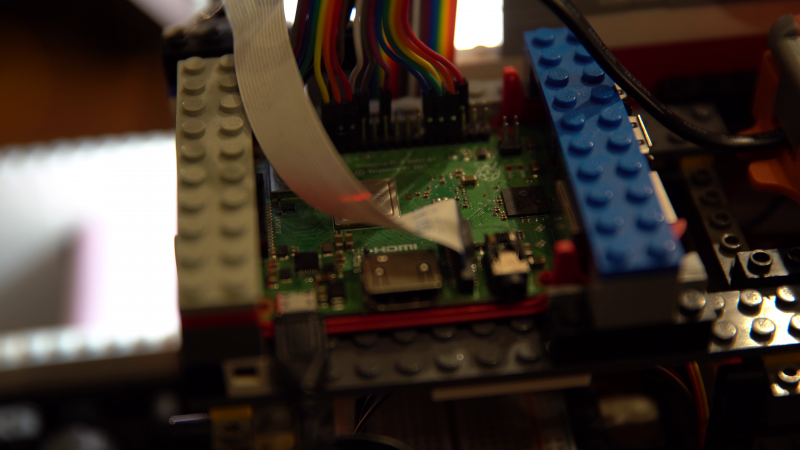

A Raspberry Pi Camera Module captures video of each brick, which Raspberry Pi 3 Model B+ then processes and wirelessly sends to a more powerful computer able to run the neural network that classifies the parts.

The classification decision is then sent back to the sorting machine so it can spit the brick, using a series of servo-controlled gates, into the right output bucket.

Daniel is such a boss maker that he wrote not one, but two further reading articles for those of you who want to deep-dive into this mega LEGO creation:

The post Raspberry Pi LEGO sorter appeared first on Raspberry Pi.

Post Syndicated from Explosm.net original http://explosm.net/comics/5772/

New Cyanide and Happiness Comic

Post Syndicated from original https://lwn.net/Articles/842842/rss

User namespaces provide a number of interesting challenges for the kernel. They give a user the illusion of owning the system, but must still operate within the restrictions that apply outside of the namespace. Resource limits represent one type of restriction that, it seems, is proving too restrictive for some users. This patch set from Alexey Gladkov attempts to address the problem by way of a not-entirely-obvious approach.

Post Syndicated from original https://lwn.net/Articles/843081/rss

Version 3.9.0.0 of the GNU Radio software-defined radio system has been released. “All in all, the main breaking change for pure GRC users will consist in a few changed blocks – an incredible feat, considering the amount of shift under the hood.”

Post Syndicated from original https://lwn.net/Articles/843054/rss

Security updates have been issued by Arch Linux (atftp, coturn, gitlab, mdbook, mediawiki, nodejs, nodejs-lts-dubnium, nodejs-lts-erbium, nodejs-lts-fermium, nvidia-utils, opensmtpd, php, python-cairosvg, python-pillow, thunderbird, vivaldi, and wavpack), CentOS (firefox and thunderbird), Debian (chromium and snapd), Fedora (chromium, flatpak, glibc, kernel, kernel-headers, nodejs, php, and python-cairosvg), Mageia (bind, caribou, chromium-browser-stable, dom4j, edk2, opensc, p11-kit, policycoreutils, python-lxml, resteasy, sudo, synergy, and unzip), openSUSE (ceph, crmsh, dovecot23, hawk2, kernel, nodejs10, open-iscsi, openldap2, php7, python-jupyter_notebook, slurm_18_08, tcmu-runner, thunderbird, tomcat, viewvc, and vlc), Oracle (dotnet3.1 and thunderbird), Red Hat (postgresql:10, postgresql:12, postgresql:9.6, and xstream), SUSE (ImageMagick, openldap2, slurm, and tcmu-runner), and Ubuntu (icoutils).
