Post Syndicated from Abhishek Panday original https://aws.amazon.com/blogs/devops/manage-roles-and-entitlements-with-pbac-using-amazon-verified-permissions/

Traditionally, customers have used role-based access control (RBAC) to manage entitlements within their applications. The application controls what users can do, based on the roles they are assigned. However, the drive for least privilege has led to exponential growth in the number of roles. Customers can address this role explosion by moving authorization logic out of the application code and implementing a policy-based access control (PBAC) model that augments RBAC with attribute-based access control (ABAC).

In this blog post, we cover roles and entitlements, how they apply to an app’s authorization decisions, how customers implement roles and authorization in their apps today, and how to shift to a centralized PBAC model by using Amazon Verified Permissions.

Describing roles and entitlements, approaches and challenges of current implementations

In RBAC models, a user’s entitlements are assigned based on job role. This role could be that of a developer, which might grant permissions to affect code in the pipeline of an app. Entitlements represent the features, functions, and resources a user has permissions to access. For example, a customer might be able to place orders or view pets in a pet store application, or a store owner might be entitled to review orders made from their store.

The combination of roles assigned to a user and entitlements granted to these roles determines what a human user can do within your application. Traditionally, application access has been handled in code by hard-coding the roles that users can be assigned and mapping those roles directly to a set of actions on resources. However, as the need for more granular access control grows (as with least privilege), so does the number of hard-coded roles required to achieve that granularity. This problem is frequently called role explosion: role definitions grow exponentially, which requires additional overhead from your teams to manage and audit roles effectively. For example, the code to authorize a request to get the details of an order has multiple if/else statements, as shown in the following sample.

    boolean userAuthorizedForOrder(Order order, User user) {
        if (order.storeId.equals(user.storeId)) {
            if (user.roles.contains("store-owner-roles")) {
                // Store owners can only access orders for their own stores.
                return true;
            } else if (user.roles.contains("store-employee")) {
                // Store employees can access the order only while the store is open.
                if (isStoreOpen(current_time)) {
                    return true;
                }
            }
        } else {
            // Customer service associates can access an order only for a case
            // they are assigned, and only during their assigned shift.
            if (user.roles.contains("customer-service-associate")
                    && user.assignedShift(current_time)
                    && user.currentCase.order.orderId.equals(order.orderId)) {
                return true;
            }
        }
        return false;
    }

This problem introduces several challenges. First, figuring out why a permission was granted or denied requires a closer look at the code. Second, adding a permission requires code changes. Third, audits can be difficult because you either have to run a battery of tests or explore code across multiple files to demonstrate access controls to auditors. Though there might be additional considerations, these three challenges have led many app owners to begin looking at PBAC methods to address the granularity problem. You can read more about the foundations of PBAC models in Policy-based access control in application development with Amazon Verified Permissions. By shifting to a PBAC model, you can reduce role growth to meet your fine-grained permissions needs. You can also externalize authorization logic from code, develop granular permissions based on roles and attributes, and reduce the time that you spend refactoring code for changes to authorization decisions or reading through the code to audit authorization logic.
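
As a rough illustration of what externalizing the logic could look like, the sketch below replaces the nested if/else with a single call to a decision component that also reports which rule allowed the request. The `Authorizer` interface, the `Decision` and `NamedRule` classes, the in-memory rule list, and the `GetOrder` action and `store-owner` role names are all hypothetical stand-ins invented for this example; they are not the Verified Permissions API.

```java
import java.util.List;
import java.util.Optional;
import java.util.function.BiPredicate;

// Hypothetical stand-ins for illustration only; not the Verified Permissions API.
final class ExternalizedAuthSketch {

    /** Result of a decision: allow or deny, plus the ID of the rule that allowed it. */
    static final class Decision {
        final boolean allowed;
        final Optional<String> determiningRuleId;

        Decision(boolean allowed, Optional<String> determiningRuleId) {
            this.allowed = allowed;
            this.determiningRuleId = determiningRuleId;
        }
    }

    /** A named permit rule that lives outside the application logic. */
    static final class NamedRule {
        final String id;
        final BiPredicate<List<String>, String> permits; // (roles, action) -> permitted?

        NamedRule(String id, BiPredicate<List<String>, String> permits) {
            this.id = id;
            this.permits = permits;
        }
    }

    /** The only authorization dependency the application code has. */
    interface Authorizer {
        Decision isAuthorized(List<String> roles, String action);
    }

    /** In-memory authorizer: deny by default, allow when any rule permits. */
    static Authorizer inMemory(List<NamedRule> rules) {
        return (roles, action) -> {
            for (NamedRule rule : rules) {
                if (rule.permits.test(roles, action)) {
                    return new Decision(true, Optional.of(rule.id));
                }
            }
            return new Decision(false, Optional.empty());
        };
    }

    /** Application code no longer names roles or branches on them. */
    static boolean userAuthorizedForOrder(Authorizer authorizer, List<String> roles) {
        return authorizer.isAuthorized(roles, "GetOrder").allowed;
    }

    public static void main(String[] args) {
        Authorizer authorizer = inMemory(List.of(
            new NamedRule("store-owner-can-read-orders",
                (roles, action) -> roles.contains("store-owner") && action.equals("GetOrder"))));

        Decision decision = authorizer.isAuthorized(List.of("store-owner"), "GetOrder");
        System.out.println(decision.allowed + " via " + decision.determiningRuleId.orElse("none"));
    }
}
```

In this model, adding a permission means adding a rule to the list rather than editing `userAuthorizedForOrder`, and the rule ID attached to each decision gives an auditor a starting point without reading the application code.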

In this blog post, we demonstrate implementing permissions in a PBAC model through a demo application. The demo application uses Amazon Cognito groups to manage role assignment and Verified Permissions to implement entitlements for the roles. The approach restricts the resources that a role can access by using attribute-based conditions. This approach works well in use cases where you already have a system in place to manage role assignment and you can define the resources that a user may access by matching attributes of the user with attributes of the resource.

Demo app

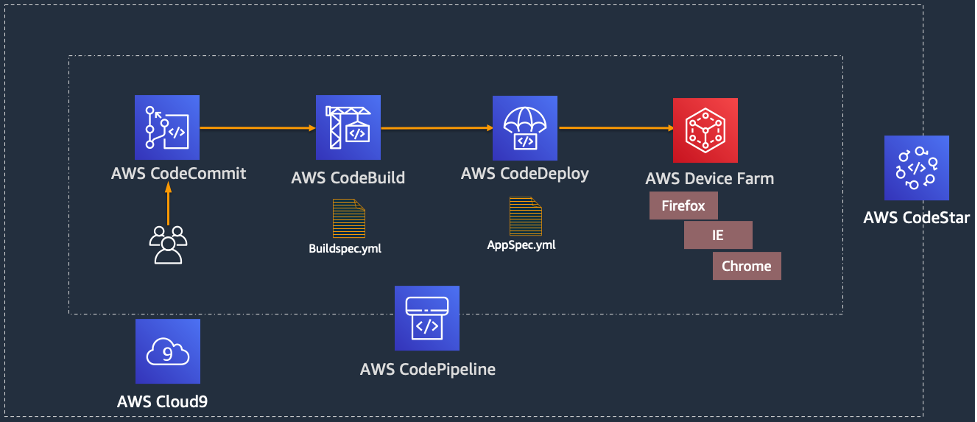

Let’s look at a sample pet store app. The app is used by 2 types of users – end users and store owners. The app enables end users to search and order pets. The app allows store owners to list orders for the store. This sample app is available for download and local testing on the aws-samples/avp-petstore-sample Github repository. The app is a web app built by using AWS Amplify, Amazon API-Gateway, Amazon Cognito, and Amazon Verified Permissions. The following diagram is a high-level illustration of the app’s architecture.

Steps

- The user logs in to the application and is redirected to Amazon Cognito to sign in and obtain a JSON Web Token (JWT).

- When the user takes an action (for example, ListOrders) in the application, the application calls Amazon API Gateway to process the request.

- Amazon API Gateway forwards the request to an AWS Lambda function, which calls Amazon Verified Permissions to authorize the action. If the authorization result is deny, the Lambda function returns Unauthorized to the application.

- If the authorization succeeds, the application continues to execute the action.
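
The last two steps can be sketched as a handler that consults a decision point before running any business logic. This is a minimal local model, not the sample app’s Lambda code: the `AuthRequest` and `Response` classes, the `Predicate` standing in for the call to the authorization service, the user bob, and the choice of HTTP status 403 for the Unauthorized response are all assumptions made for illustration.

```java
import java.util.List;
import java.util.function.Predicate;

// Illustrative model of the request flow; all names here are hypothetical.
final class RequestFlowSketch {

    /** What the function knows about the caller once the token has been validated. */
    static final class AuthRequest {
        final String userId;
        final List<String> groups;
        final String action;
        final String storeId;

        AuthRequest(String userId, List<String> groups, String action, String storeId) {
            this.userId = userId;
            this.groups = groups;
            this.action = action;
            this.storeId = storeId;
        }
    }

    /** Minimal HTTP-style response. */
    static final class Response {
        final int status;
        final String body;

        Response(int status, String body) {
            this.status = status;
            this.body = body;
        }
    }

    /** Ask the decision point first; run the action only when the answer is allow. */
    static Response handle(AuthRequest request, Predicate<AuthRequest> decisionPoint) {
        if (!decisionPoint.test(request)) {
            return new Response(403, "Unauthorized"); // status code is an assumption
        }
        return new Response(200, "Orders for " + request.storeId);
    }

    public static void main(String[] args) {
        // Stand-in for the call to the authorization service.
        Predicate<AuthRequest> decisionPoint =
            r -> r.groups.contains("Store-Owner-Role") && r.action.equals("ListOrders");

        Response allowed = handle(
            new AuthRequest("eve", List.of("Store-Owner-Role"), "ListOrders", "petstore-london"),
            decisionPoint);
        Response denied = handle(
            new AuthRequest("bob", List.of(), "ListOrders", "petstore-london"),
            decisionPoint);
        System.out.println(allowed.status + " " + denied.status);
    }
}
```

Keeping the decision behind a single call means the handler stays the same when the policies change.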

RBAC policies in action

In this section, we focus on building RBAC permissions for the sample pet store app. We will guide you through building RBAC by using Verified Permissions and by focusing on a role for store owners, who are allowed to view all orders for a store. We use Verified Permissions to manage the permissions granted to this role and Amazon Cognito to manage role assignments.

We model the store owner role in Amazon Cognito as a user group called Store-Owner-Role. When a user is assigned the store owner role, the user is added to the Store-Owner-Role user group. You can create the users and user groups required to follow along with the sample application by following the Amazon Cognito documentation on managing users and groups.

After users are assigned to the store owner role, you can enforce that they can list all orders in the store by using an RBAC policy. The policy permits any user in the Store-Owner-Role group to perform the ListOrders and GetStoreInventory actions on any resource; the full Cedar policy appears in the walkthrough later in this post.

Based on this policy, the store owner receives a Success! response when they attempt to list existing orders.

This example further demonstrates the division of responsibility between the identity provider (Amazon Cognito) and Verified Permissions. The identity provider (IdP) is responsible for managing roles and memberships in roles. Verified Permissions is responsible for managing policies that describe what those roles are permitted to do. As demonstrated above, you can use this process to add roles without needing to change code.

Using PBAC to help reduce role explosion

Up until the point of role explosion, RBAC has worked well as the sole authorization model. Unfortunately, we have heard from customers that this model does not scale well because of the challenge of role explosion. Role explosion happens when you have hundreds or thousands of roles, and managing and auditing those roles becomes challenging. In extreme cases, you might have more roles than the number of users in your organization. This happens primarily because organizations keep creating more roles, with each role granting access to a smaller set of resources in an effort to follow the principle of least privilege.

Let’s understand the problem of role explosion through our sample pet store app. The pet store app is now being sold as a SaaS product to pet stores in other locations. As a result, the app needs additional access controls to ensure that each store owner can view only the orders from their own store. The most intuitive way to implement these access controls is to create an additional role for each location, which restricts the scope of access for a store owner to their respective store’s orders. For example, a role named petstore-austin would allow access only to resources in the Austin, Texas store. RBAC models allow developers to predefine sets of permissions that can be used in an application, and ABAC models allow developers to adapt those permissions to the context of the request (such as the client, the resource, and the method used). Adopting RBAC and ABAC as separate models leads to an explosion of either roles or attribute-based rules as the number of store locations increases.

To solve this problem, you can combine RBAC and ABAC policies into a PBAC model. RBAC policies determine the actions the user can take. Augmenting these policies with ABAC policies allows you to control the resources they can take those actions on. For example, you can scope down the resources a user can access based on identity attributes, such as department or business unit, region, and management level. This approach mitigates role explosion because you need to have only a small number of predefined roles, and access is controlled based on attributes. You can use Verified Permissions to combine RBAC and ABAC models in the form of Cedar policies to build this PBAC solution.
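
To make the difference in role counts concrete, the following back-of-the-envelope sketch compares per-location roles (such as petstore-austin) with a fixed set of roles plus a store attribute. The figures of three job functions and 500 stores are assumed for illustration and do not come from the sample app.

```java
// Back-of-the-envelope arithmetic; the figures are illustrative assumptions.
final class RoleCountSketch {

    /** One role per (job function, store) pair, as with per-location roles. */
    static long rolesWithPerStoreRoles(int jobFunctions, int stores) {
        return (long) jobFunctions * stores;
    }

    /** One role per job function; the store is matched through an attribute. */
    static long rolesWithAttributes(int jobFunctions) {
        return jobFunctions;
    }

    public static void main(String[] args) {
        int jobFunctions = 3; // assumed: owner, employee, customer service
        int stores = 500;     // assumed number of store locations

        System.out.println(rolesWithPerStoreRoles(jobFunctions, stores)); // 1500
        System.out.println(rolesWithAttributes(jobFunctions));            // 3
    }
}
```

Under these assumptions, each new store adds three roles in the per-location scheme and none in the attribute-based scheme; only the store’s identifier needs to be set on its users and resources.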

We can demonstrate this solution in the sample pet store app by modifying the policy we created earlier and adding ABAC conditions. The conditions specify that users can list orders only for the store they own. The store that a store owner owns is represented in Amazon Cognito by the employmentStoreCode attribute. This policy now increases the granularity of access of the original RBAC policy without leading to numerous RBAC policies.

    permit (
        principal in MyApplication::Group::"Store-Owner-Role",
        action in [
            MyApplication::Action::"GetStoreInventory",
            MyApplication::Action::"ListOrders"
        ],
        resource
    ) when {
        principal.employmentStoreCode == resource.storeId
    };

We demonstrate that our policy restricts access for store owners to the store they own by creating a user, Eve, who is assigned the Store-Owner-Role and owns petstore-london. When Eve lists orders for the petstore-london store, she gets a success response, indicating that she has permissions to list orders.

Next, when Eve tries to list orders for the petstore-seattle store, she gets a Not Authorized response. She is denied access because she does not own petstore-seattle.
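
The logic of the policy above can be mirrored in a few lines of ordinary code, which may help when reasoning about why Eve is allowed in one case and denied in the other. The class below is a local model written for this explanation; it is not the Cedar evaluator, and the method and parameter names are hypothetical.

```java
import java.util.Set;

// Local model of the policy's logic for illustration; this is not the Cedar evaluator.
final class StoreOwnerPolicySketch {

    static final Set<String> PERMITTED_ACTIONS = Set.of("GetStoreInventory", "ListOrders");

    /**
     * Mirrors the policy: the principal must be in Store-Owner-Role (the RBAC part),
     * the action must be in the permitted list, and the principal's
     * employmentStoreCode must equal the resource's storeId (the ABAC part).
     */
    static boolean isPermitted(Set<String> principalGroups, String employmentStoreCode,
                               String action, String resourceStoreId) {
        boolean inRole = principalGroups.contains("Store-Owner-Role");
        boolean actionInScope = PERMITTED_ACTIONS.contains(action);
        boolean ownsStore = employmentStoreCode != null
                && employmentStoreCode.equals(resourceStoreId);
        return inRole && actionInScope && ownsStore;
    }

    public static void main(String[] args) {
        Set<String> eveGroups = Set.of("Store-Owner-Role");

        // Eve owns petstore-london, so listing its orders is permitted.
        System.out.println(isPermitted(eveGroups, "petstore-london", "ListOrders", "petstore-london"));
        // The attribute condition fails for petstore-seattle, so the request is denied.
        System.out.println(isPermitted(eveGroups, "petstore-london", "ListOrders", "petstore-seattle"));
    }
}
```

The role check and the attribute check are independent: a user outside Store-Owner-Role is denied even when the store codes match, and a store owner is denied for any store whose identifier differs from their employmentStoreCode.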

Step-by-step walkthrough of trying the Demo App

If you want to go through the demo of our sample pet store app, we recommend forking it from the aws-samples/avp-petstore-sample GitHub repository and following the process in README.md to gain hands-on familiarity.

We will first walk through setting up permissions using only RBAC for the sample pet store application. Next, we will see how you can use PBAC to implement least privilege as the application scales.

Implement RBAC-based Permissions

We describe setting up policies to implement entitlements for the store owner role in Verified Permissions.

- Navigate to the AWS Management Console, search for Verified Permissions, and select the service to go to the service page.

- Create a new policy store to serve as a container for your policies. You can create an Empty Policy Store for the purpose of the walkthrough.

- Navigate to Policies in the navigation pane and choose Create static policy.

- Select Next, paste in the following Cedar policy, and select Save.

      permit (
          principal in MyApplication::Group::"Store-Owner-Role",
          action in [
              MyApplication::Action::"GetStoreInventory",
              MyApplication::Action::"ListOrders"
          ],
          resource
      );

- Next, you need users who are assigned the Store-Owner-Role. In this case, you use Amazon Cognito as the IdP, and the role is assigned there. You can create users and groups in Amazon Cognito by following these steps.

- Navigate to Amazon Cognito from the AWS Management Console, and select the user pool created for the pet store app.

- Create a user by choosing Create user, and set the user name to eve.

- Navigate to the Groups section and create a group called Store-Owner-Role.

- Add eve to the Store-Owner-Role group by choosing Add user to Group, selecting eve, and choosing Add.

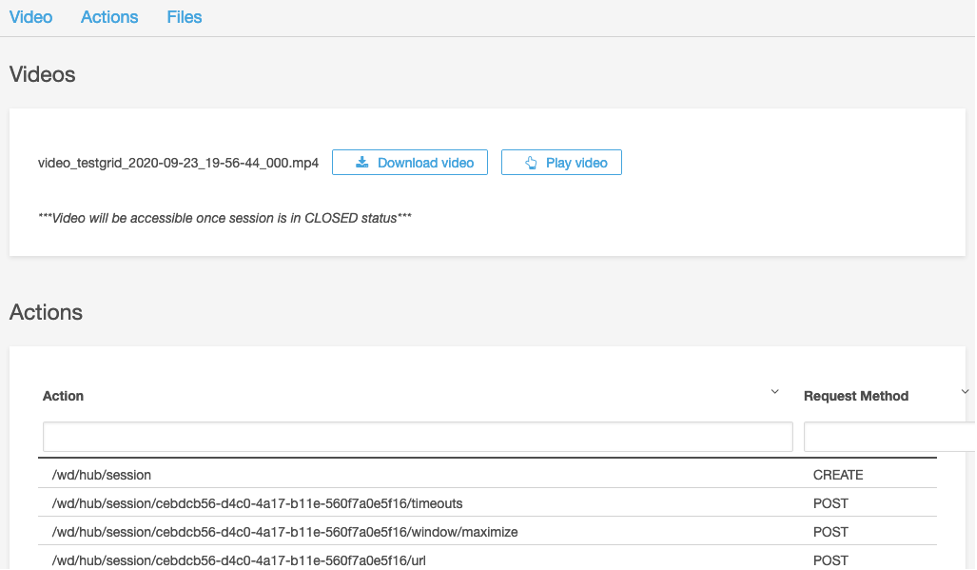

- Now that you have assigned the Store-Owner-Role to the user, and Verified Permissions has a permit policy granting entitlements based on role membership, you can log in to the application as the user eve to test functionality. When you choose List All Orders, you can see the approval result in the app’s output.

Implement PBAC-based Permissions

As the company grows, you want to be able to limit ListOrders access to a specific store location so that you can follow least privilege. You can update your policy to PBAC by adding an ABAC condition to the existing permit policy. The condition restricts listing orders to only those stores the user owns.

The following walkthrough updates the application accordingly.

- Navigate to the Verified Permissions console and update the policy to the following.

      permit (
          principal in MyApplication::Group::"Store-Owner-Role",
          action in [
              MyApplication::Action::"GetStoreInventory",
              MyApplication::Action::"ListOrders"
          ],
          resource
      ) when {
          principal.employmentStoreCode == resource.storeId
      };

- Navigate to the Amazon Cognito console, select the user eve, and choose “Edit” in the user attributes section to update “custom:employmentStoreCode”. Set the attribute value to “petstore-london”, because eve owns the petstore-london location.

- You can demonstrate that eve can list orders only for “petstore-london” by following these steps.

- We want to make sure that the latest changes to the user attributes are passed to the application in the identity token. To refresh the identity token, navigate back to the application, log out, and log in again as eve.

- In the application, set the Pet Store Identifier to petstore-london and choose List All Orders. The result is Success!, because Eve is authorized to list orders for the store she owns.

- Next, change the Pet Store Identifier to petstore-seattle and choose List All Orders. The result is Not Authorized, because Eve is not authorized to list orders for stores she does not own.
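
The token refresh step matters because the attribute value travels inside the identity token, so a token issued before the attribute was edited still carries the old claims. As a hedged sketch, the snippet below decodes the payload segment of a JWT so that you can inspect which claims a token carries. It builds an unsigned sample token locally rather than contacting a user pool, it does not verify signatures, and the `TokenClaimsSketch` class and its helpers are hypothetical names; a real application should validate the token with a JWT library before trusting any claim.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Illustration only: decodes a token's payload without verifying its signature.
final class TokenClaimsSketch {

    /** Returns the JSON payload (the second dot-separated segment) of a JWT. */
    static String payloadJson(String jwt) {
        String[] parts = jwt.split("\\.");
        if (parts.length < 2) {
            throw new IllegalArgumentException("not a JWT: expected header.payload.signature");
        }
        byte[] decoded = Base64.getUrlDecoder().decode(parts[1]);
        return new String(decoded, StandardCharsets.UTF_8);
    }

    /** Builds an unsigned sample token so the example runs without a real user pool. */
    static String sampleToken(String payloadJson) {
        Base64.Encoder encoder = Base64.getUrlEncoder().withoutPadding();
        String header = encoder.encodeToString(
            "{\"alg\":\"none\"}".getBytes(StandardCharsets.UTF_8));
        String payload = encoder.encodeToString(
            payloadJson.getBytes(StandardCharsets.UTF_8));
        return header + "." + payload + ".";
    }

    public static void main(String[] args) {
        // Sample claims modeled on the walkthrough; the values are made up.
        String token = sampleToken(
            "{\"cognito:username\":\"eve\",\"custom:employmentStoreCode\":\"petstore-london\"}");
        System.out.println(payloadJson(token));
    }
}
```

If the decoded payload of the token your app is using does not show the updated custom:employmentStoreCode value, log out and log in again before retrying the request.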

Clean Up section

You can clean up the resources that were created in this blog post by following these steps.

Conclusion

In this post, we reviewed what roles and entitlements are and how they are used to manage user authorization in your app. We also covered RBAC and ABAC policy examples for the demo application, avp-petstore-sample, which is available through AWS Samples for hands-on testing. The walkthrough also covered our example architecture, which uses Amazon Cognito as the IdP and Verified Permissions as the centralized policy store that evaluates authorization requests against the policies set for the app. By using Verified Permissions, we could use a PBAC model to define fine-grained access while preventing role explosion. For more information about Verified Permissions, see the Amazon Verified Permissions product details page and Resources page.