Post Syndicated from Anuj Gupta original https://aws.amazon.com/blogs/architecture/journey-to-adopt-cloud-native-architecture-series-4-governing-security-at-scale-and-iam-baselining/

In Part 3 of this series, Improved Resiliency and Standardized Observability, we talked about design patterns that you can adopt to improve resiliency, achieve minimum business continuity, and scale applications with lengthy transactions (more than 3 minutes).

As a refresher from previous blogs in this series, our example ecommerce company’s “Shoppers” application runs in the cloud. The company experienced hypergrowth, which posed a number of platform and technology challenges. Most notably, the company needed to scale the backend without impacting users.

Because of this hypergrowth, distributed denial of service (DDoS) attacks on the ecommerce company’s services increased 10 times in 6 months. Some of these attacks led to downtime and loss of revenue. This blog post shows you how we addressed these threats by implementing a multi-account strategy and applying AWS Identity and Access Management (IAM) best practices.

A multi-account strategy ensures security at scale

Originally, the company’s production and non-production services ran in a single account. This meant that non-production risks, such as frequently changing code or privileged access, could affect the production environment. Additionally, the application experienced issues when it unexpectedly reached service quotas. These include (but are not limited to) the number of read replicas per master and total storage for all DB instances in Amazon Relational Database Service (Amazon RDS), and Auto Scaling service quotas for Amazon Elastic Compute Cloud (Amazon EC2).

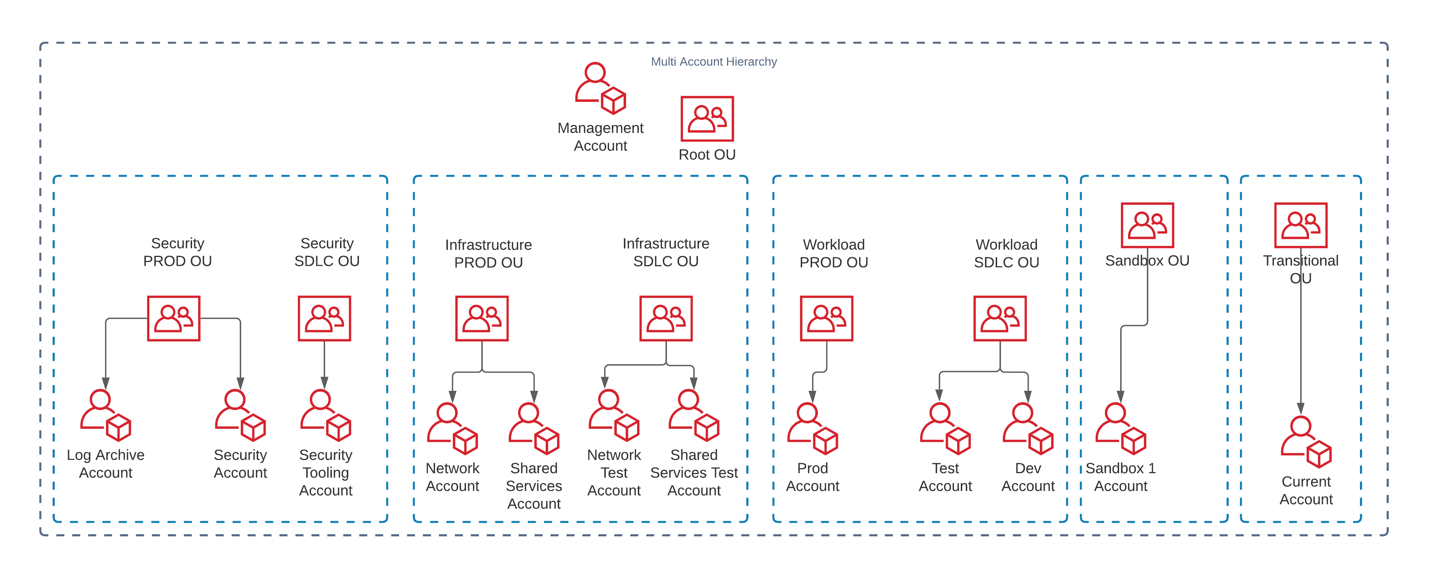

To address these issues, we followed multi-account strategy best practices. We established the multi-account hierarchy shown in Figure 1 that includes the following eight organizational units (OUs) to meet business requirements:

- Security PROD OU

- Security SDLC OU

- Infrastructure PROD OU

- Infrastructure SDLC OU

- Workload PROD OU

- Workload SDLC OU

- Sandbox OU

- Transitional OU

To identify the right fit for our needs, we evaluated AWS Landing Zone and AWS Control Tower. To reduce the operational overhead of maintaining a solution, we used AWS Control Tower to deploy guardrails as service control policies (SCPs). These guardrails were then separated into production and non-production environments, creating the hierarchy shown in Figure 1.
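A guardrail of this kind is ultimately a JSON policy document attached to an OU. The following is a minimal sketch, assuming a hypothetical guardrail that denies requests outside a list of approved Regions. The function name, the Region list, and the exempted global services are illustrative assumptions, not the company's actual configuration and not a guardrail copied from AWS Control Tower.

```python
import json


def build_region_guardrail(allowed_regions, exempt_actions):
    """Return an SCP document (as a dict) that denies requests outside allowed_regions.

    exempt_actions lists global-service actions that must keep working in any Region.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideApprovedRegions",
                "Effect": "Deny",
                "NotAction": sorted(exempt_actions),
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": sorted(allowed_regions)
                    }
                },
            }
        ],
    }


# Hypothetical values chosen only for illustration.
scp = build_region_guardrail(
    allowed_regions=["us-east-1", "us-west-2"],
    exempt_actions=["iam:*", "organizations:*", "sts:*", "support:*"],
)
print(json.dumps(scp, indent=2))
```

Generating the document from parameters makes it possible to keep separate Region or action lists for production and non-production OUs while sharing one template.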

We created a new Payer (or Management) Account with Sandbox OU and Transitional OU under Root OU. We then moved existing AWS accounts under the Transitional OU and Sandbox OU. We provisioned new accounts with Account Factory and gradually migrated services from existing AWS accounts into the newly formed Log Archive Account, Security Account, Network Account, and Shared Services Account and applied appropriate guardrails. We then registered Sandbox OU with Control Tower. Additionally, we migrated the centralized logging solution from Part 3 of this blog series to the Security Account. We moved non-production applications into the Dev and Test Accounts, respectively, to isolate workloads. We then moved existing accounts that had production services from the Transitional OU to Workload PROD OU.

Implementing a multi-account strategy alleviated service quota challenges. It isolated variable-demand non-production environments from more consistent production environments, which reduced the downtime caused by unplanned scaling events. The multi-account strategy enforces governance at scale, but it also promotes innovation by allocating separate accounts with distinct security requirements for proofs of concept and experimentation. This reduces the risk of impact on production accounts and allows the required guardrails to be applied automatically.

Improving access management and least privilege access

When the company experienced hypergrowth, they not only had to scale their application’s infrastructure, but they also had to release code more often. They also hired and onboarded new internal teams.

To strengthen the credentials of new and existing employees, we used the AWS Trusted Advisor check for IAM Access Key Rotation. This check identifies IAM users whose access keys have not been rotated for more than 90 days. We then created an automated way to rotate those keys. Next, we generated an IAM credential report to identify IAM users that don’t need console access or that don’t need access keys. We gradually moved these users to role-based access instead of IAM access keys.
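The same 90-day check can also be run against the IAM credential report, which is a CSV file. The following is a minimal sketch that uses only the standard library and assumes the report has already been downloaded as text. The column names follow the credential report format; the sample rows, user names, and the `stale_access_keys` helper are made up for illustration.

```python
import csv
import io
from datetime import datetime, timedelta, timezone

MAX_KEY_AGE = timedelta(days=90)


def parse_time(value):
    """Parse a report timestamp; return None for placeholders such as 'N/A'."""
    try:
        parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    except ValueError:
        return None
    # Treat a timestamp without an offset as UTC so comparisons stay valid.
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def stale_access_keys(report_csv, now):
    """Return (user, key_slot) pairs for active keys rotated more than 90 days ago."""
    findings = []
    for row in csv.DictReader(io.StringIO(report_csv)):
        for slot in ("1", "2"):
            if row.get(f"access_key_{slot}_active", "").lower() != "true":
                continue
            rotated = parse_time(row.get(f"access_key_{slot}_last_rotated", ""))
            if rotated is not None and now - rotated > MAX_KEY_AGE:
                findings.append((row["user"], f"access_key_{slot}"))
    return findings


# Hypothetical report excerpt with only the columns this check needs.
SAMPLE = """user,password_enabled,access_key_1_active,access_key_1_last_rotated,access_key_2_active,access_key_2_last_rotated
alice,true,true,2023-01-01T00:00:00+00:00,false,N/A
bob,false,true,2023-05-20T00:00:00+00:00,true,2022-11-01T00:00:00+00:00
"""

now = datetime(2023, 6, 1, tzinfo=timezone.utc)
stale = stale_access_keys(SAMPLE, now)
print(stale)
```

Passing `now` as a parameter keeps the check deterministic, which makes it easier to test before wiring it into any automated rotation.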

During a Well-Architected Security Pillar review, we identified some applications that used hardcoded passwords that hadn’t been updated for more than 90 days. We refactored these applications to retrieve passwords from AWS Secrets Manager and followed best practices for performance, such as caching secrets.
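Caching is the main performance practice for secrets retrieval: the application keeps a secret in memory for a limited time instead of requesting it on every use. The following is a minimal sketch of that idea, not the company's implementation. The `SecretCache` class, the `fake_fetch` stub, and the secret name are hypothetical; in a real application the fetch callable would wrap a Secrets Manager lookup, and AWS documents client-side caching components for this purpose.

```python
import time


class SecretCache:
    """Keep secret values in memory for ttl_seconds to avoid a lookup on every use."""

    def __init__(self, fetch, ttl_seconds=3600, clock=time.monotonic):
        self._fetch = fetch      # callable: secret_id -> secret string
        self._ttl = ttl_seconds
        self._clock = clock      # injectable so the TTL can be tested
        self._entries = {}       # secret_id -> (value, expiry time)

    def get(self, secret_id):
        now = self._clock()
        entry = self._entries.get(secret_id)
        if entry is not None and now < entry[1]:
            return entry[0]      # still fresh, no remote call
        value = self._fetch(secret_id)
        self._entries[secret_id] = (value, now + self._ttl)
        return value


# Stub standing in for the remote lookup; it records how often it is called.
calls = []


def fake_fetch(secret_id):
    calls.append(secret_id)
    return f"value-for-{secret_id}-v{len(calls)}"


fake_now = [0.0]
cache = SecretCache(fake_fetch, ttl_seconds=60, clock=lambda: fake_now[0])

first = cache.get("shoppers/db-password")
second = cache.get("shoppers/db-password")   # served from the cache
fake_now[0] = 61.0
third = cache.get("shoppers/db-password")    # TTL elapsed, fetched again
```

The TTL should stay shorter than the rotation interval so that a rotated password is picked up without restarting the application.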

Additionally, we set up a system to automatically change passwords for RDS databases and wrote an AWS Lambda function to update passwords for third-party integrations. Some applications on Amazon EC2 were using IAM access keys to access AWS services. We refactored them to get permissions from the IAM role attached to the EC2 instances, which removed the operational burden of rotating access keys.
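The post does not describe how the Lambda function is structured. As a sketch, assuming it follows the Secrets Manager rotation contract, the function is invoked once per step (`createSecret`, `setSecret`, `testSecret`, `finishSecret`) with the secret ID and a request token in the event. The `make_handler` helper, the recording stubs, and the secret name below are hypothetical.

```python
ROTATION_STEPS = ("createSecret", "setSecret", "testSecret", "finishSecret")


def make_handler(step_functions):
    """Build a Lambda-style handler that dispatches on the rotation step.

    step_functions maps each step name to a callable(secret_id, token).
    """
    missing = [step for step in ROTATION_STEPS if step not in step_functions]
    if missing:
        raise ValueError(f"missing step implementations: {missing}")

    def handler(event, context=None):
        step = event["Step"]
        if step not in ROTATION_STEPS:
            raise ValueError(f"unknown rotation step: {step}")
        return step_functions[step](event["SecretId"], event["ClientRequestToken"])

    return handler


# Stubs that only record the call; real steps would create the new password,
# set it on the third-party system, verify it, and mark it as current.
log = []


def record(name):
    return lambda secret_id, token: log.append((name, secret_id, token))


handler = make_handler({step: record(step) for step in ROTATION_STEPS})

for step in ROTATION_STEPS:
    handler({
        "Step": step,
        "SecretId": "third-party/api-password",
        "ClientRequestToken": "token-123",
    })
```

Keeping each step in its own function makes it easier to retry a failed rotation, because the same step can be invoked again with the same token.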

Using IAM Access Analyzer, we analyzed AWS CloudTrail logs and generated policies for IAM roles. This helped us determine the least privilege permissions required for the roles, as described in the blog post “IAM Access Analyzer makes it easier to implement least privilege permissions by generating IAM policies based on access activity.”
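The idea behind activity-based policy generation can be illustrated with a deliberately simplified sketch: collect the actions a role actually used and allow only those. This is not how IAM Access Analyzer is implemented. The `policy_from_events` helper and the sample records are hypothetical, and the sketch assumes that the prefix of `eventSource` matches the IAM service prefix, which does not hold for every service.

```python
import json


def policy_from_events(events):
    """Collapse CloudTrail-style records into one Allow statement of observed actions."""
    actions = set()
    for event in events:
        # Simplifying assumption: "s3.amazonaws.com" -> "s3"
        service = event["eventSource"].split(".")[0]
        actions.add(f"{service}:{event['eventName']}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": sorted(actions),
                # A real policy should narrow this to specific resource ARNs.
                "Resource": "*",
            }
        ],
    }


# Hypothetical activity for one role, including a repeated call.
observed = [
    {"eventSource": "s3.amazonaws.com", "eventName": "GetObject"},
    {"eventSource": "secretsmanager.amazonaws.com", "eventName": "GetSecretValue"},
    {"eventSource": "kms.amazonaws.com", "eventName": "Decrypt"},
    {"eventSource": "s3.amazonaws.com", "eventName": "GetObject"},
]

policy = policy_from_events(observed)
print(json.dumps(policy, indent=2))
```

A generated policy is a starting point for review: actions that the role needs only occasionally may not appear in the analyzed period.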

To streamline access for internal users, we migrated users to AWS Single Sign-On (AWS SSO) federated access. We enabled all features in AWS Organizations to use AWS SSO and created permission sets to define access boundaries for different functions. We assigned permission sets to different user groups and assigned users to user groups based on their job function. This allowed us to reduce the number of IAM policies and use tag-based control when defining AWS SSO permissions policies.

We followed the guidance in the Attribute-based Access Control with AWS SSO blog post to map user attributes and use tags to define permissions boundaries for user groups. This allowed us to provide access to users based on specific teams, projects, and departments. We enforced multi-factor authentication (MFA) for all AWS SSO users by configuring MFA settings to allow sign in only when an MFA device has been registered.
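With attribute-based access control, one policy can serve many teams because the condition compares a tag on the resource with a tag on the caller's session. The following is a minimal sketch of such a policy document. The `abac_statement` helper, the `Team` tag key, and the chosen EC2 actions are illustrative assumptions, not the company's actual permission sets.

```python
import json


def abac_statement(actions, tag_key):
    """Allow actions only when the resource tag equals the caller's principal tag."""
    return {
        "Effect": "Allow",
        "Action": list(actions),
        "Resource": "*",
        "Condition": {
            "StringEquals": {
                # The policy variable is resolved by IAM at request time.
                "aws:ResourceTag/" + tag_key: "${aws:PrincipalTag/" + tag_key + "}"
            }
        },
    }


abac_policy = {
    "Version": "2012-10-17",
    "Statement": [
        abac_statement(["ec2:StartInstances", "ec2:StopInstances"], "Team")
    ],
}
print(json.dumps(abac_policy, indent=2))
```

When a user moves to another team, only the user attribute changes; the policy itself stays the same.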

These improvements ensure that only the right people have access to the required resources, and only for as long as they need it. They reduce the risk of compromised security credentials by using AWS Security Token Service (AWS STS) to generate temporary credentials when needed. System passwords are better protected from unwanted access and are rotated automatically. AWS SSO also allows us to enforce permissions at scale when people’s job functions change within or across teams.

Conclusion

In this blog post, we described design patterns we used to implement security governance at scale using a multi-account strategy and AWS SSO integrations. We also talked about patterns you can adopt for IAM baselining that enable least privilege access, check for IAM best practices, and proactively detect unwanted access.

This blog post also covers why you need to refresh your threat model during hyperscale growth and how different services can make it easier to enforce security controls. In the next blog, we will talk about more security design patterns to improve infrastructure security and incident response during hyperscale.

Find out more

Other blogs in this series

- Journey to Adopt Cloud-Native Architecture Series: #1 – Preparing your Applications for Hypergrowth

- Journey to Adopt Cloud-Native Architecture Series: #2 – Maximizing System Throughput

- Journey to Adopt Cloud-Native Architecture Series: #3 – Improved Resilience and Standardized Observability

Related information

- Blog: How to use AWS Organizations to simplify security at enormous scale

- Blog: IAM Access Analyzer makes it easier to implement least privilege permissions by generating IAM policies based on access activity

- User guide: Getting started with AWS Control Tower

- User guide: AWS Secrets Manager, Cache secrets to improve performance

- Whitepaper: Organizing Your AWS Environment Using Multiple Accounts