Post Syndicated from Esra Kayabali original https://aws.amazon.com/blogs/aws/unify-dns-management-using-amazon-route-53-profiles-with-multiple-vpcs-and-aws-accounts/

If you are managing lots of accounts and Amazon Virtual Private Cloud (Amazon VPC) resources, sharing and then associating many DNS resources to each VPC can present a significant burden. You often hit limits around sharing and association, and you may have gone as far as building your own orchestration layers to propagate DNS configuration across your accounts and VPCs.

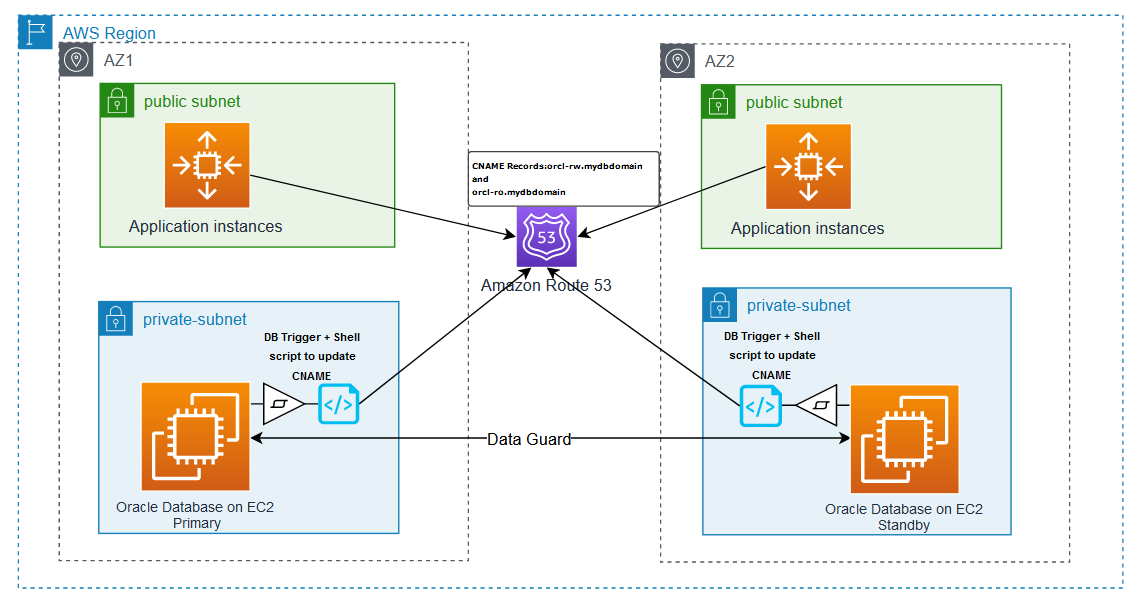

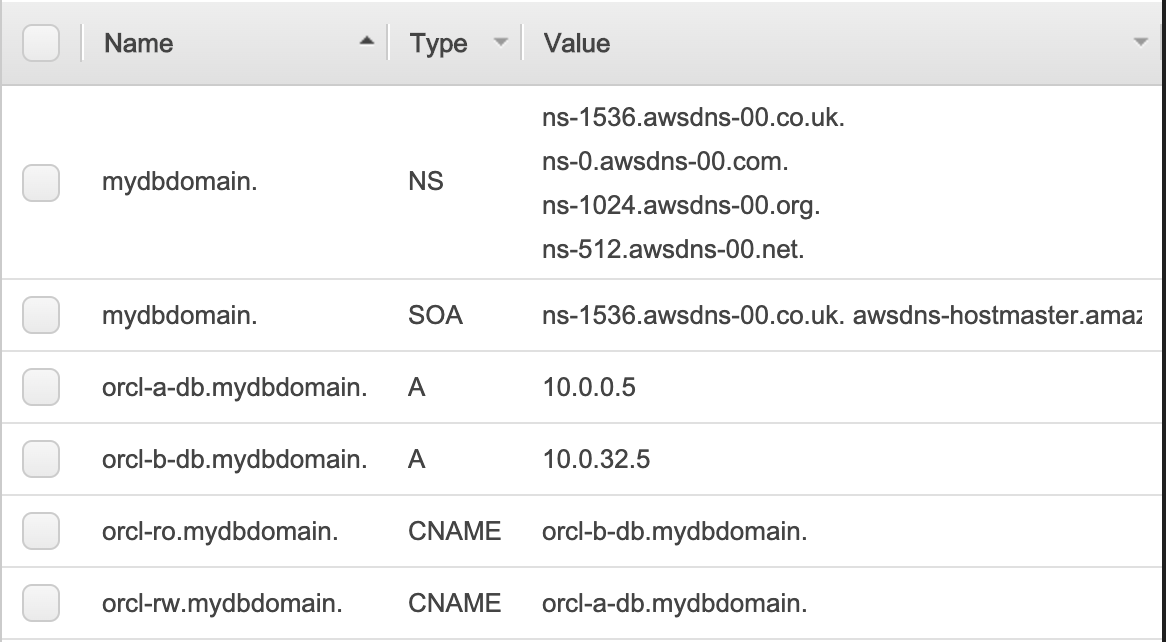

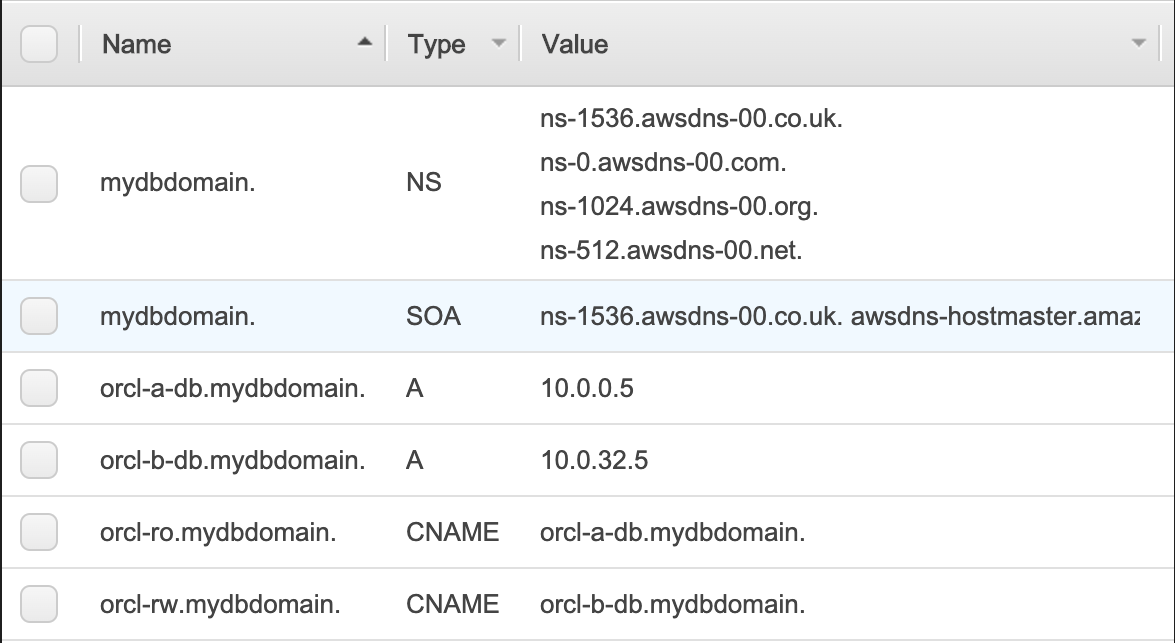

Today, I’m happy to announce Amazon Route 53 Profiles, which provide the ability to unify management of DNS across all of your organization’s accounts and VPCs. Route 53 Profiles let you define a standard DNS configuration, including Route 53 private hosted zone (PHZ) associations, Resolver forwarding rules, and Route 53 Resolver DNS Firewall rule groups, and apply that configuration to multiple VPCs in the same AWS Region. With Profiles, you have an easy way to ensure all of your VPCs have the same DNS configuration without the complexity of handling separate Route 53 resources. Managing DNS across many VPCs is now as simple as managing those same settings for a single VPC.

Profiles are natively integrated with AWS Resource Access Manager (RAM) allowing you to share your Profiles across accounts or with your AWS Organizations account. Profiles integrates seamlessly with Route 53 private hosted zones by allowing you to create and add existing private hosted zones to your Profile so that your organizations have access to these same settings when the Profile is shared across accounts. AWS CloudFormation allows you to use Profiles to set DNS settings consistently for VPCs as accounts are newly provisioned. With today’s release, you can better govern DNS settings for your multi-account environments.

How Route 53 Profiles works

To start using the Route 53 Profiles, I go to the AWS Management Console for Route 53, where I can create Profiles, add resources to them, and associate them to their VPCs. Then, I share the Profile I created across another account using AWS RAM.

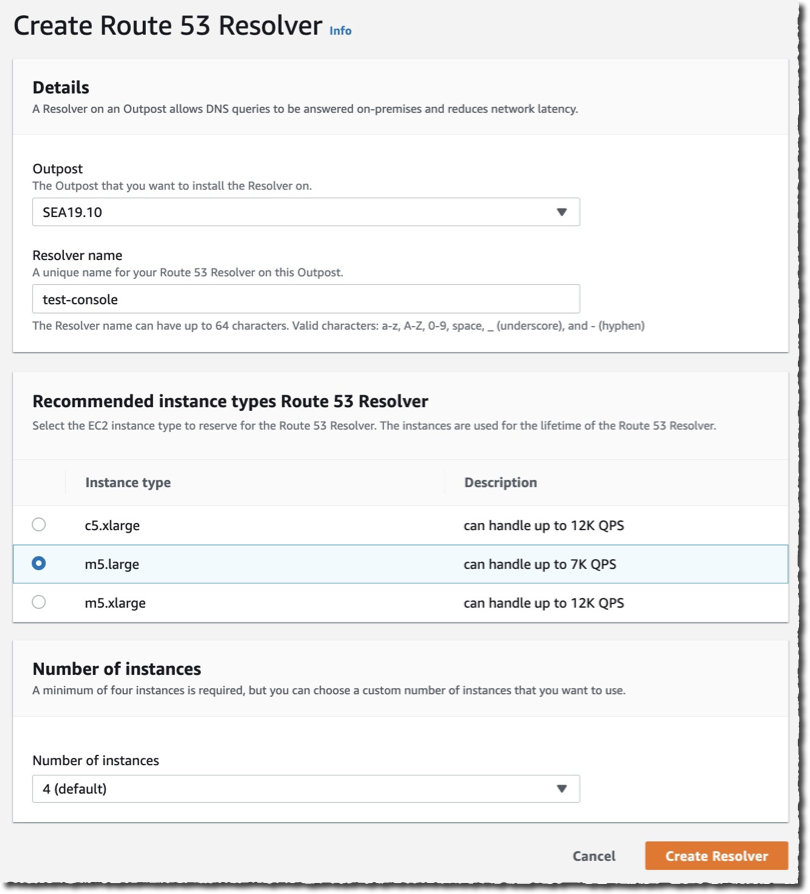

In the navigation pane in the Route 53 console, I choose Profiles and then I choose Create profile to set up my Profile.

I give my Profile configuration a friendly name such as MyFirstRoute53Profile and optionally add tags.

I can configure settings for DNS Firewall rule groups, private hosted zones and Resolver rules or add existing ones within my account all within the Profile console page.

I choose VPCs to associate my VPCs to the Profile. I can add tags as well as do configurations for recursive DNSSEC validation, the failure mode for the DNS Firewalls associated to my VPCs. I can also control the order of DNS evaluation: First VPC DNS then Profile DNS, or first Profile DNS then VPC DNS.

I can associate one Profile per VPC and can associate up to 5,000 VPCs to a single Profile.

Profiles gives me the ability to manage settings for VPCs across accounts in my organization. I am able to disable reverse DNS rules for each of the VPCs the Profile is associated with rather than configuring these on a per-VPC basis. The Route 53 Resolver automatically creates rules for reverse DNS lookups for me so that different services can easily resolve hostnames from IP addresses. If I use DNS Firewall, I am able to select the failure mode for my firewall via settings, to fail open or fail closed. I am also able to specify if I wish for the VPCs associated to the Profile to have recursive DNSSEC validation enabled without having to use DNSSEC signing in Route 53 (or any other provider).

Let’s say I associate a Profile to a VPC. What happens when a query exactly matches both a resolver rule or PHZ associated directly to the VPC and a resolver rule or PHZ associated to the VPC’s Profile? Which DNS settings take precedence, the Profile’s or the local VPC’s? For example, if the VPC is associated to a PHZ for example.com and the Profile contains a PHZ for example.com, that VPC’s local DNS settings will take precedence over the Profile. When a query is made for a name for a conflicting domain name (for example, the Profile contains a PHZ for infra.example.com and the VPC is associated to a PHZ that has the name account1.infra.example.com), the most specific name wins.

Sharing Route 53 Profiles across accounts using AWS RAM

I use AWS Resource Access Manager (RAM) to share the Profile I created in the previous section with my other account.

I choose the Share profile option in the Profiles detail page or I can go to the AWS RAM console page and choose Create resource share.

I provide a name for my resource share and then I search for the ‘Route 53 Profiles’ in the Resources section. I select the Profile in Selected resources. I can choose to add tags. Then, I choose Next.

Profiles utilize RAM managed permissions, which allow me to attach different permissions to each resource type. By default, only the owner (the network admin) of the Profile will be able to modify the resources within the Profile. Recipients of the Profile (the VPC owners) will only be able to view the contents of the Profile (the ReadOnly mode). To allow a recipient of the Profile to add PHZs or other resources to it, the Profile’s owner will have to attach the necessary permissions to the resource. Recipients will not be able to edit or delete any resources added by the Profile owner to the shared resource.

I leave the default selections and choose Next to grant access to my other account.

On the next page, I choose Allow sharing with anyone, enter my other account’s ID and then choose Add. After that, I choose that account ID in the Selected principals section and choose Next.

In the Review and create page, I choose Create resource share. Resource share is successfully created.

Now, I switch to my other account that I share my Profile with and go to the RAM console. In the navigation menu, I go to the Resource shares and choose the resource name I created in the first account. I choose Accept resource share to accept the invitation.

That’s it! Now, I go to my Route 53 Profiles page and I choose the Profile shared with me.

I have access to the shared Profile’s DNS Firewall rule groups, private hosted zones, and Resolver rules. I can associate this account’s VPCs to this Profile. I am not able to edit or delete any resources. Profiles are Regional resources and cannot be shared across Regions.

Available now

You can easily get started with Route 53 Profiles using the AWS Management Console, Route 53 API, AWS Command Line Interface (AWS CLI), AWS CloudFormation, and AWS SDKs.

Route 53 Profiles will be available in all AWS Regions, except in Canada West (Calgary), the AWS GovCloud (US) Regions and the Amazon Web Services China Regions.

For more details about the pricing, visit the Route 53 pricing page.

Get started with Profiles today and please let us know your feedback either through your usual AWS Support contacts or the AWS re:Post for Amazon Route 53.

Happy New Year! Cloud technologies, machine learning, and generative AI have become more accessible, impacting nearly every aspect of our lives. Amazon CTO Dr. Werner Vogels offers four tech predictions for 2024 and beyond:

Happy New Year! Cloud technologies, machine learning, and generative AI have become more accessible, impacting nearly every aspect of our lives. Amazon CTO Dr. Werner Vogels offers four tech predictions for 2024 and beyond: