Post Syndicated from David Boyne original https://aws.amazon.com/blogs/compute/introducing-advanced-logging-controls-for-aws-lambda-functions/

This post is written by Nati Goldberg, Senior Solutions Architect and Shridhar Pandey, Senior Product Manager, AWS Lambda

Today, AWS is launching advanced logging controls for AWS Lambda, giving developers and operators greater control over how function logs are captured, processed, and consumed.

This launch introduces three new capabilities to provide a simplified and enhanced default logging experience on Lambda.

First, you can capture Lambda function logs in JSON structured format without having to use your own logging libraries. JSON structured logs make it easier to search, filter, and analyze large volumes of log entries.

Second, you can control the log level granularity of Lambda function logs without making any code changes, enabling more effective debugging and troubleshooting.

Third, you can also set which Amazon CloudWatch log group Lambda sends logs to, making it easier to aggregate and manage logs at scale.

Overview

Being able to identify and filter relevant log messages is essential to troubleshoot and fix critical issues. To help developers and operators monitor and troubleshoot failures, the Lambda service automatically captures and sends logs to CloudWatch Logs.

Previously, Lambda emitted logs in plaintext format, also known as unstructured log format. This unstructured format could make the logs challenging to query or filter. For example, you had to search and correlate logs manually using well-known string identifiers such as “START”, “END”, “REPORT” or the request id of the function invocation. Without a native way to enrich application logs, you needed custom work to extract data from logs for automated analysis or to build analytics dashboards.

Previously, operators could not control the level of log detail generated by functions. They relied on application development teams to make code changes to emit logs with the required granularity level, such as INFO, DEBUG, or ERROR.

Lambda-based applications often comprise microservices, where a single microservice is composed of multiple single-purpose Lambda functions. Before this launch, Lambda sent logs to a default CloudWatch log group created with the Lambda function with no option to select a log group. Now you can aggregate logs from multiple functions in one place so you can uniformly apply security, governance, and retention policies to your logs.

Capturing Lambda logs in JSON structured format

Lambda now natively supports capturing structured logs in JSON format as a series of key-value pairs, making it easier to search and filter logs more easily.

JSON also enables you to add custom tags and contextual information to logs, enabling automated analysis of large volumes of logs to help understand the function performance. The format adheres to the OpenTelemetry (OTel) Logs Data Model, a popular open-source logging standard, enabling you to use open-source tools to monitor functions.

To set the log format in the Lambda console, select the Configuration tab, choose Monitoring and operations tools on the left pane, then change the log format property:

Currently, Lambda natively supports capturing application logs (logs generated by the function code) and system logs (logs generated by the Lambda service) in JSON structured format.

This is for functions that use non-deprecated versions of Python, Node.js, and Java Lambda managed runtimes, when using Lambda recommended logging methods such as using logging library for Python, console object for Node.js, and LambdaLogger or Log4j for Java.

For other managed runtimes, Lambda currently only supports capturing system logs in JSON structured format. However, you can still capture application logs in JSON structured format for these runtimes by manually configuring logging libraries. See configuring advanced logging controls section in the Lambda Developer Guide to learn more. You can also use Powertools for AWS Lambda to capture logs in JSON structured format.

Changing log format from text to JSON can be a breaking change if you parse logs in a telemetry pipeline. AWS recommends testing any existing telemetry pipelines after switching log format to JSON.

Working with JSON structured format for Node.js Lambda functions

You can use JSON structured format with CloudWatch Embedded Metric Format (EMF) to embed custom metrics alongside JSON structured log messages, and CloudWatch automatically extracts the custom metrics for visualization and alarming. However, to use JSON log format along with EMF libraries for Node.js Lambda functions, you must use the latest version of the EMF client library for Node.js or the latest version of Powertools for AWS Lambda (TypeScript) library.

Configuring log level granularity for Lambda function

You can now filter Lambda logs by log level, such as ERROR, DEBUG, or INFO, without code changes. Simplified log level filtering enables you to choose the required logging granularity level for Lambda functions, without sifting through large volumes of logs to debug errors.

You can specify separate log level filters for application logs (which are logs generated by the function code) and system logs (which are logs generated by the Lambda service, such as START and REPORT log messages). Note that log level controls are only available if the log format of the function is set to JSON.

The Lambda console allows setting both the Application log level and System log level properties:

You can define the granularity level of each log event in your function code. The following statement prints out the event input of the function, emitted as a DEBUG log message:

console.debug(event);

Once configured, log events emitted with a lower log level than the one selected are not published to the function’s CloudWatch log stream. For example, setting the function’s log level to INFO results in DEBUG log events being ignored.

This capability allows you to choose the appropriate amount of logs emitted by functions. For example, you can set a higher log level to improve the signal-to-noise ratio in production logs, or set a lower log level to capture detailed log events for testing or troubleshooting purposes.

Customizing Lambda function’s CloudWatch log group

Previously, you could not specify a custom CloudWatch log group for functions, so you could not stream logs from multiple functions into a shared log group. Also, to set custom retention policy for multiple log groups, you had to create each log group separately using a pre-defined name (for example, /aws/lambda/<function name>).

Now you can select a custom CloudWatch log group to aggregate logs from multiple functions automatically within an application in one place. You can apply security, governance, and retention policies at the application level instead of individually to every function.

To distinguish between logs from different functions in a shared log group, each log stream contains the Lambda function name and version.

You can share the same log group between multiple functions to aggregate logs together. The function’s IAM policy must include the logs:CreateLogStream and logs:PutLogEvents permissions for Lambda to create logs in the specified log group. The Lambda service can optionally create these permissions, when you configure functions in the Lambda console.

You can set the custom log group in the Lambda console by entering the destination log group name. If the entered log group does not exist, Lambda creates it automatically.

Advanced logging controls for Lambda can be configured using Lambda API, AWS Management Console, AWS Command Line Interface (CLI), and infrastructure as code (IaC) tools such as AWS Serverless Application Model (AWS SAM) and AWS CloudFormation.

Example of Lambda advanced logging controls

This section demonstrates how to use the new advanced logging controls for Lambda using AWS SAM to build and deploy the resources in your AWS account.

Overview

The following diagram shows Lambda functions processing newly created objects inside an Amazon S3 bucket, where both functions emit logs into the same CloudWatch log group:

The architecture includes the following steps:

- A new object is created inside an S3 bucket.

- S3 publishes an event using S3 Event Notifications to Amazon EventBridge.

- EventBridge triggers two Lambda functions asynchronously.

- Each function processes the object to extract labels and text, using Amazon Rekognition and Amazon Textract.

- Both functions then emit logs into the same CloudWatch log group.

This uses AWS SAM to define the Lambda functions and configure the required logging controls. The IAM policy allows the function to create a log stream and emit logs to the selected log group:

DetectLabelsFunction:

Type: AWS::Serverless::Function

Properties:

CodeUri: detect-labels/

Handler: app.lambdaHandler

Runtime: nodejs18.x

Policies:

...

- Version: 2012-10-17

Statement:

- Sid: CloudWatchLogGroup

Action:

- logs:CreateLogStream

- logs:PutLogEvents

Resource: !GetAtt CloudWatchLogGroup.Arn

Effect: Allow

LoggingConfig:

LogFormat: JSON

ApplicationLogLevel: DEBUG

SystemLogLevel: INFO

LogGroup: !Ref CloudWatchLogGroup

Deploying the example

To deploy the example:

- Clone the GitHub repository and explore the application.

git clone https://github.com/aws-samples/advanced-logging-controls-lambda/

cd advanced-logging-controls-lambda

- Use AWS SAM to build and deploy the resources to your AWS account. This compiles and builds the application using npm, and then populate the template required to deploy the resources:

sam build

- Deploy the solution to your AWS account with a guided deployment, using AWS SAM CLI interactive flow:

sam deploy --guided

- Enter the following values:

- Stack Name:

advanced-logging-controls-lambda

- Region: your preferred Region (for example,

us-east-1)

- Parameter UploadsBucketName: enter a unique bucket name.

- Accept the rest of the initial defaults.

- To test the application, use the AWS CLI to copy the sample image into the S3 bucket you created.

aws s3 cp samples/skateboard.jpg s3://example-s3-images-bucket

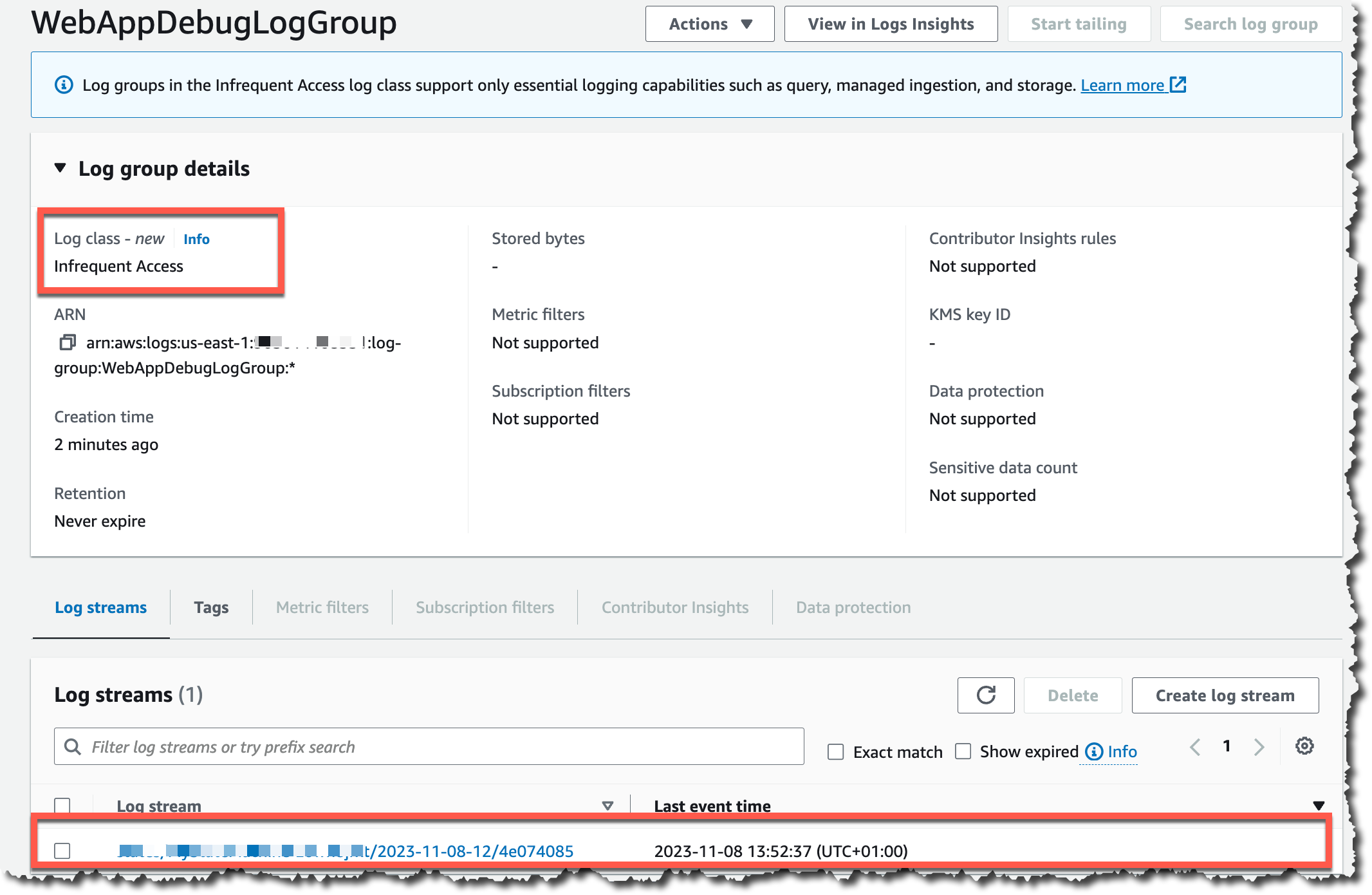

Explore CloudWatch Logs to view the logs emitted into the log group created, AggregatedLabelsLogGroup:

The DetectLabels Lambda function emits DEBUG log events in JSON format to the log stream. Log events with the same log level from the ExtractText Lambda function are omitted. This is a result of the different application log level settings for each function (DEBUG and INFO).

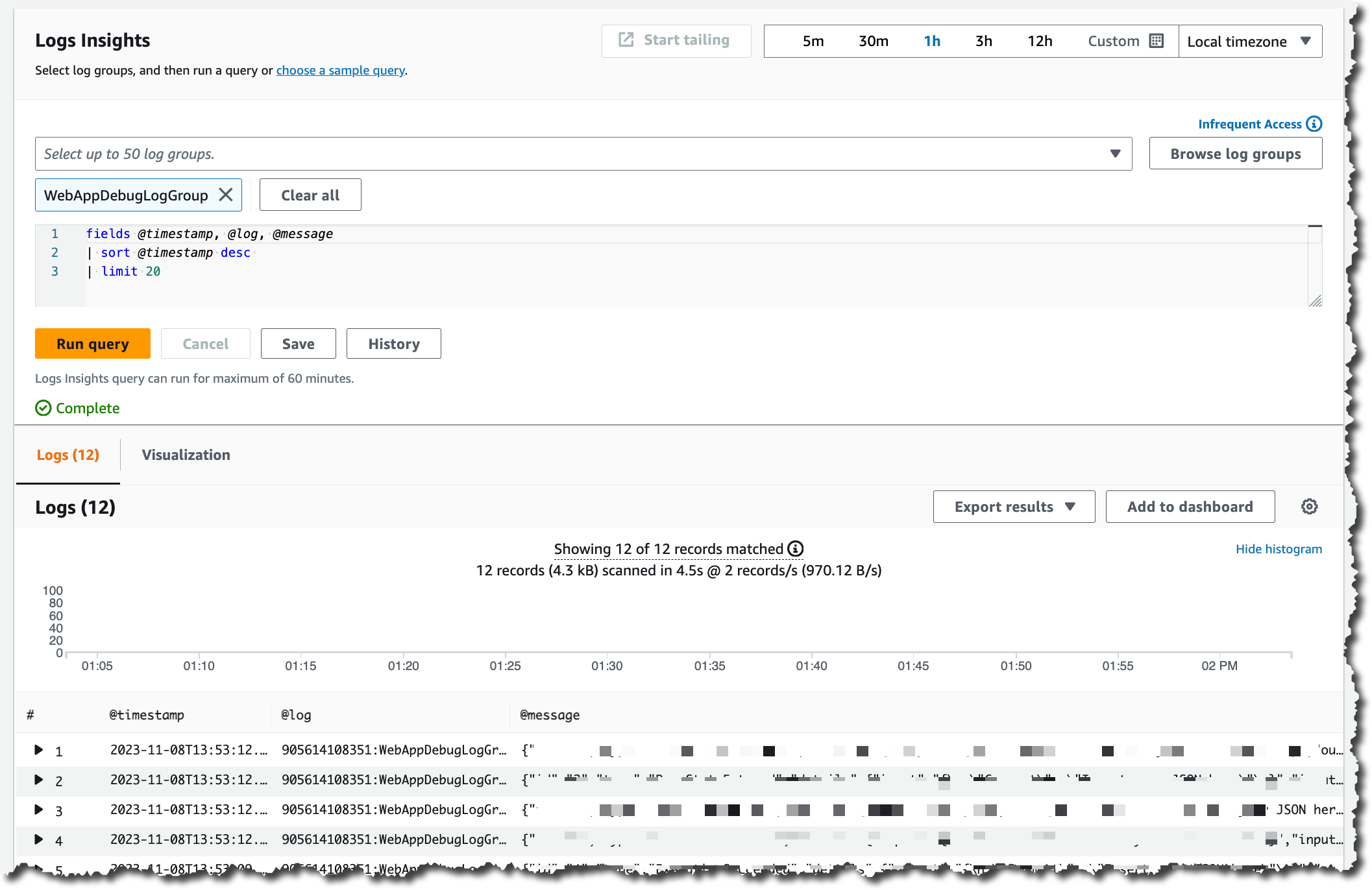

You can also use CloudWatch Logs Insights to search, filter, and analyze the logs in JSON format using this sample query:

You can see the results:

Conclusion

Advanced logging controls for Lambda give you greater control over logging. Use advanced logging controls to control your Lambda function’s log level and format, allowing you to search, query, and filter logs to troubleshoot issues more effectively.

You can also choose the CloudWatch log group where Lambda sends your logs. This enables you to aggregate logs from multiple functions into a single log group, apply retention, security, governance policies, and easily manage logs at scale.

To get started, specify the required settings in the Logging Configuration for any new or existing Lambda functions.

Advanced logging controls for Lambda are available in all AWS Regions where Lambda is available at no additional cost. Learn more about AWS Lambda Advanced Logging Controls.

For more serverless learning resources, visit Serverless Land.

The Internet has a plethora of moving parts: routers, switches, hubs, terrestrial and submarine cables, and connectors on the hardware side, and complex protocol stacks and configurations on the software side. When something goes wrong that slows or disrupts the Internet in a way that affects your customers, you want to be able to localize and understand the issue as quickly as possible.

The Internet has a plethora of moving parts: routers, switches, hubs, terrestrial and submarine cables, and connectors on the hardware side, and complex protocol stacks and configurations on the software side. When something goes wrong that slows or disrupts the Internet in a way that affects your customers, you want to be able to localize and understand the issue as quickly as possible.

Parnab Basak is a Senior Solutions Architect and a Serverless Specialist at AWS. He specializes in creating new solutions that are cloud native using modern software development practices like serverless, DevOps, and analytics. Parnab works closely in the analytics and integration services space helping customers adopt AWS services for their workflow orchestration needs.

Parnab Basak is a Senior Solutions Architect and a Serverless Specialist at AWS. He specializes in creating new solutions that are cloud native using modern software development practices like serverless, DevOps, and analytics. Parnab works closely in the analytics and integration services space helping customers adopt AWS services for their workflow orchestration needs. Chandan Rupakheti is a Solutions Architect and a Serverless Specialist at AWS. He is a passionate technical leader, researcher, and mentor with a knack for building innovative solutions in the cloud and bringing stakeholders together in their cloud journey. Outside his professional life, he loves spending time with his family and friends besides listening and playing music.

Chandan Rupakheti is a Solutions Architect and a Serverless Specialist at AWS. He is a passionate technical leader, researcher, and mentor with a knack for building innovative solutions in the cloud and bringing stakeholders together in their cloud journey. Outside his professional life, he loves spending time with his family and friends besides listening and playing music. Vinod Jayendra is a Enterprise Support Lead in ISV accounts at Amazon Web Services, where he helps customers in solving their architectural, operational, and cost optimization challenges. With a particular focus on Serverless technologies, he draws from his extensive background in application development to deliver top-tier solutions. Beyond work, he finds joy in quality family time, embarking on biking adventures, and coaching youth sports team.

Vinod Jayendra is a Enterprise Support Lead in ISV accounts at Amazon Web Services, where he helps customers in solving their architectural, operational, and cost optimization challenges. With a particular focus on Serverless technologies, he draws from his extensive background in application development to deliver top-tier solutions. Beyond work, he finds joy in quality family time, embarking on biking adventures, and coaching youth sports team. Rupesh Tiwari is a Senior Solutions Architect at AWS in New York City, with a focus on Financial Services. He has over 18 years of IT experience in the finance, insurance, and education domains, and specializes in architecting large-scale applications and cloud-native big data workloads. In his spare time, Rupesh enjoys singing karaoke, watching comedy TV series, and creating joyful moments with his family.

Rupesh Tiwari is a Senior Solutions Architect at AWS in New York City, with a focus on Financial Services. He has over 18 years of IT experience in the finance, insurance, and education domains, and specializes in architecting large-scale applications and cloud-native big data workloads. In his spare time, Rupesh enjoys singing karaoke, watching comedy TV series, and creating joyful moments with his family.

Deepthi Mohan is a Principal PMT on the Amazon Managed Service for Apache Flink team.

Deepthi Mohan is a Principal PMT on the Amazon Managed Service for Apache Flink team. Francisco Morillo is a Streaming Solutions Architect at AWS. Francisco works with AWS customers, helping them design real-time analytics architectures using AWS services, supporting Amazon Managed Streaming for Apache Kafka (Amazon MSK) and Amazon Managed Service for Apache Flink.

Francisco Morillo is a Streaming Solutions Architect at AWS. Francisco works with AWS customers, helping them design real-time analytics architectures using AWS services, supporting Amazon Managed Streaming for Apache Kafka (Amazon MSK) and Amazon Managed Service for Apache Flink. Happy New Year! Cloud technologies, machine learning, and generative AI have become more accessible, impacting nearly every aspect of our lives. Amazon CTO Dr. Werner Vogels offers four tech predictions for 2024 and beyond:

Happy New Year! Cloud technologies, machine learning, and generative AI have become more accessible, impacting nearly every aspect of our lives. Amazon CTO Dr. Werner Vogels offers four tech predictions for 2024 and beyond:

DescribeGetMetricData – This handler returns a string that includes the name of the connector, default values for the arguments to the other handler, and a text description in

DescribeGetMetricData – This handler returns a string that includes the name of the connector, default values for the arguments to the other handler, and a text description in