Post Syndicated from Ibukun Oyewumi original https://aws.amazon.com/blogs/security/top-10-security-best-practices-for-securing-backups-in-aws/

Security is a shared responsibility between AWS and the customer. Customers have asked for ways to secure their backups in AWS. This post guides you through a curated list of the top ten security best practices to secure your backup data and operations in AWS. While this blog post focuses on backup data and operations in the AWS Backup service, the recommended security best practices also apply to organizations that use other backup solutions, such as backup tools from the AWS Marketplace.

Since security practices constantly evolve to mitigate new risks, it’s important that you conduct regular risk assessments to determine the applicability of security controls, and implement multiple layers of controls to mitigate risks to your data.

#1 – Implement a backup strategy

A comprehensive backup strategy is an essential part of an organization’s data protection plan to withstand, recover from, and reduce any impact that might be sustained due to a security event. You should create an extensive backup strategy that defines which data must be backed up, how often it must be backed up, and how backup and recovery tasks are monitored. When you develop a comprehensive strategy for backing up and restoring data, you should first identify interruptions that may occur, and their potential business impact.

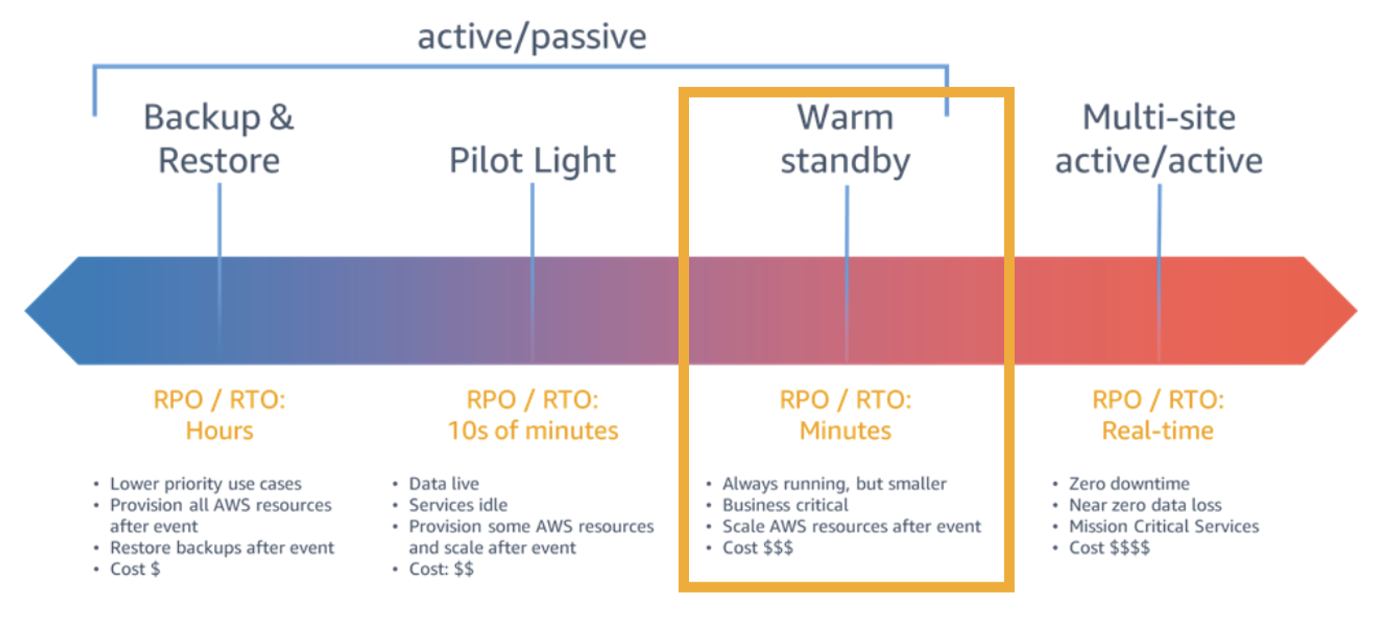

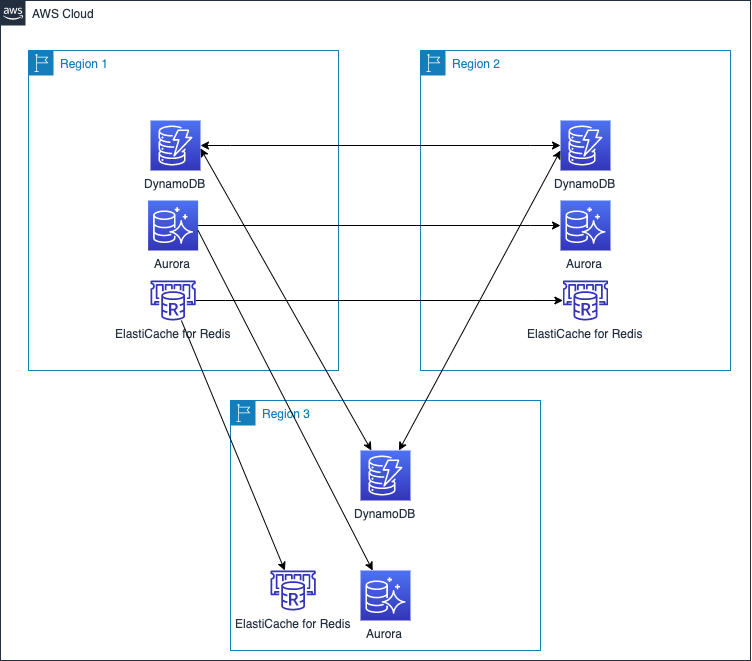

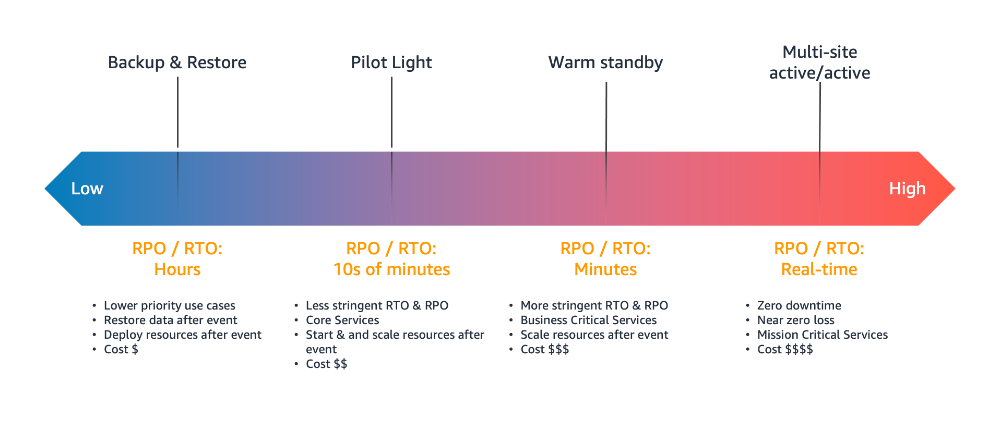

Your objective should be to build a recovery strategy that brings your workload back up or avoids downtime within the acceptable Recovery Time Objective (RTO) and Recovery Point Objective (RPO). RTO is the acceptable delay between the interruption of service and restoration of service, and RPO is the acceptable amount of time since the last data recovery point. You should consider a granular backup strategy that covers continuous backup cadence, Point-in-Time Recovery (PITR), file-level recovery, application data–level recovery, volume-level recovery, and instance-level recovery.
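To make the RPO definition concrete, the following sketch estimates the worst-case data loss window for a fixed backup schedule and compares it with a target RPO. The function names and the simplifying model (a failure that occurs just before the next backup completes) are illustrative assumptions, not part of AWS Backup.

```python
from datetime import timedelta


def worst_case_data_loss(backup_interval: timedelta, backup_duration: timedelta) -> timedelta:
    """Upper bound on data lost if a failure hits just before the next backup completes.

    Simplifying assumption: each recovery point reflects the data as of the
    moment its backup job started.
    """
    return backup_interval + backup_duration


def meets_rpo(backup_interval: timedelta, backup_duration: timedelta, rpo: timedelta) -> bool:
    """Return True when the worst-case loss window fits within the target RPO."""
    return worst_case_data_loss(backup_interval, backup_duration) <= rpo


# A daily backup that takes one hour cannot satisfy a 24-hour RPO under this model.
daily_ok = meets_rpo(timedelta(hours=24), timedelta(hours=1), timedelta(hours=24))
# An hourly backup that takes ten minutes fits inside a 4-hour RPO.
hourly_ok = meets_rpo(timedelta(hours=1), timedelta(minutes=10), timedelta(hours=4))
```

A check like this is only a planning aid; the RPO you can actually achieve has to be confirmed through restore testing.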

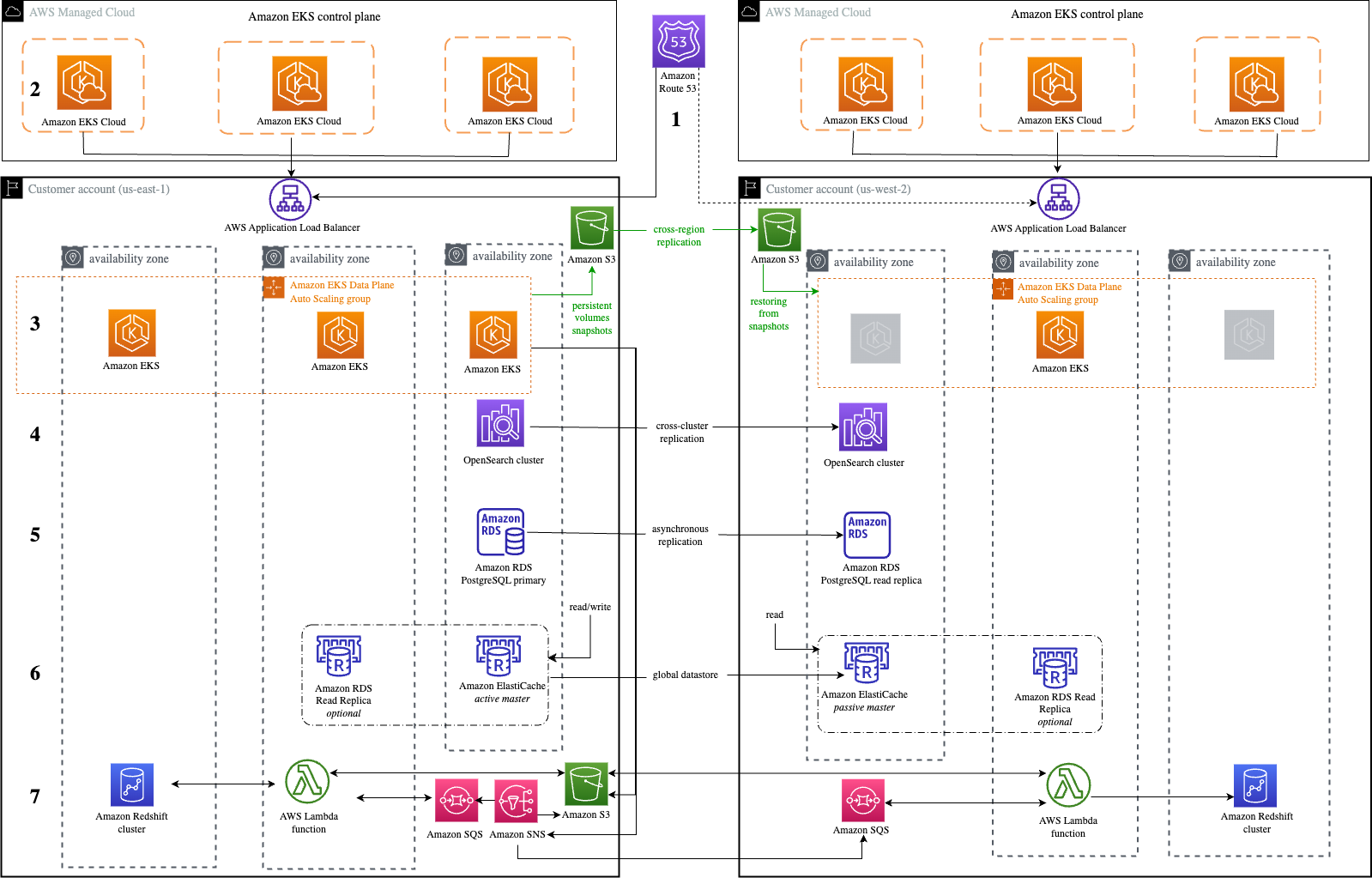

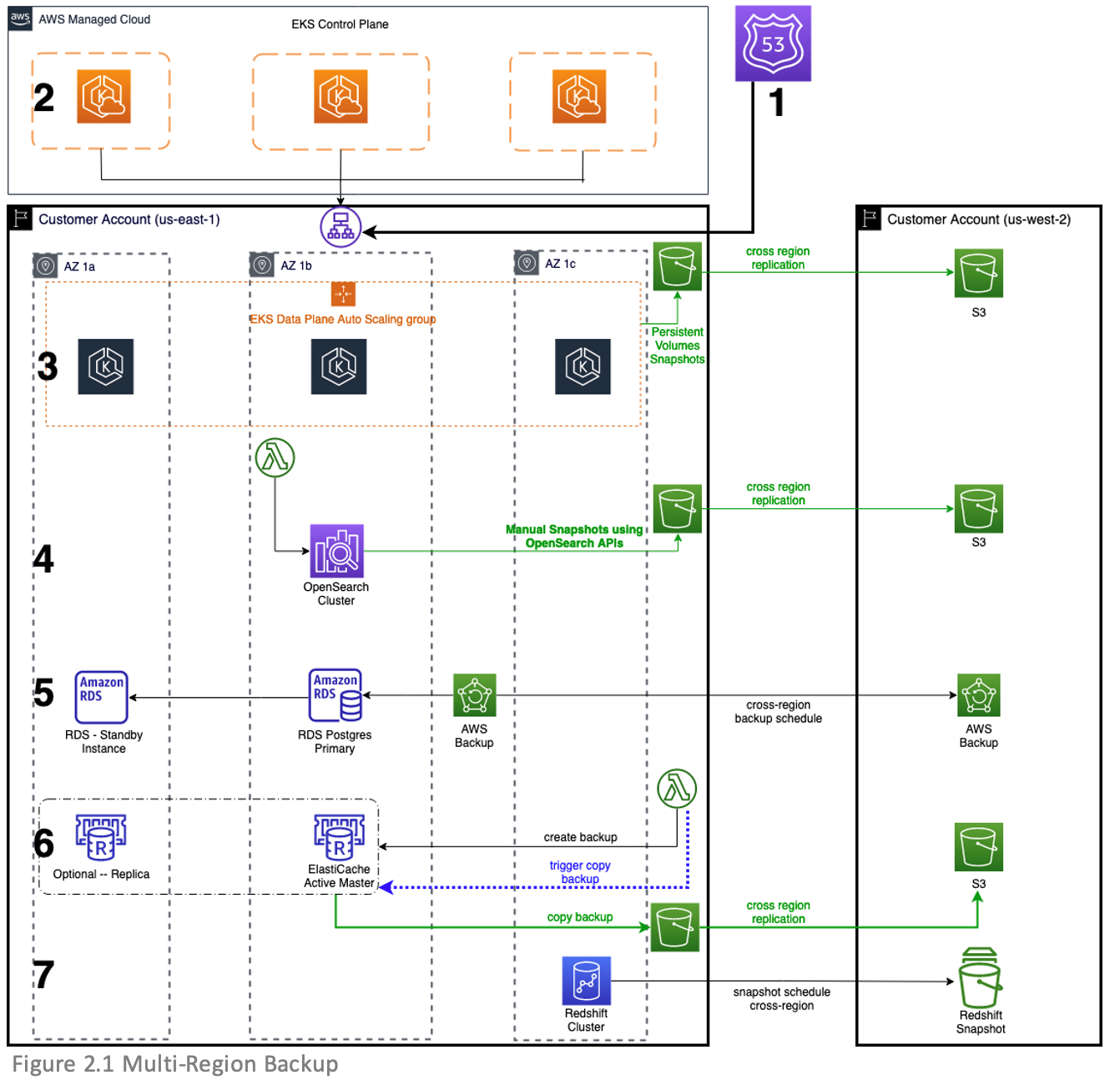

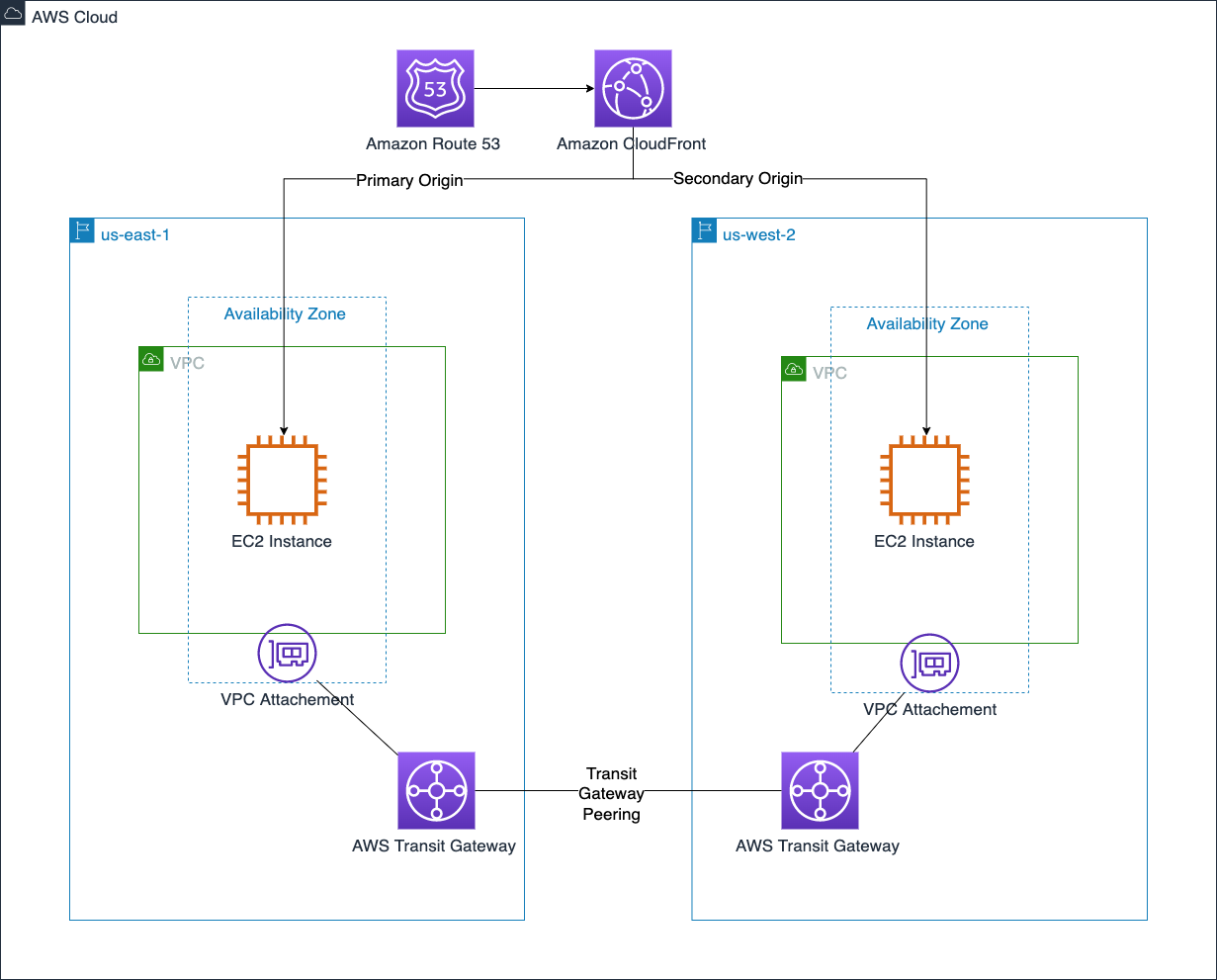

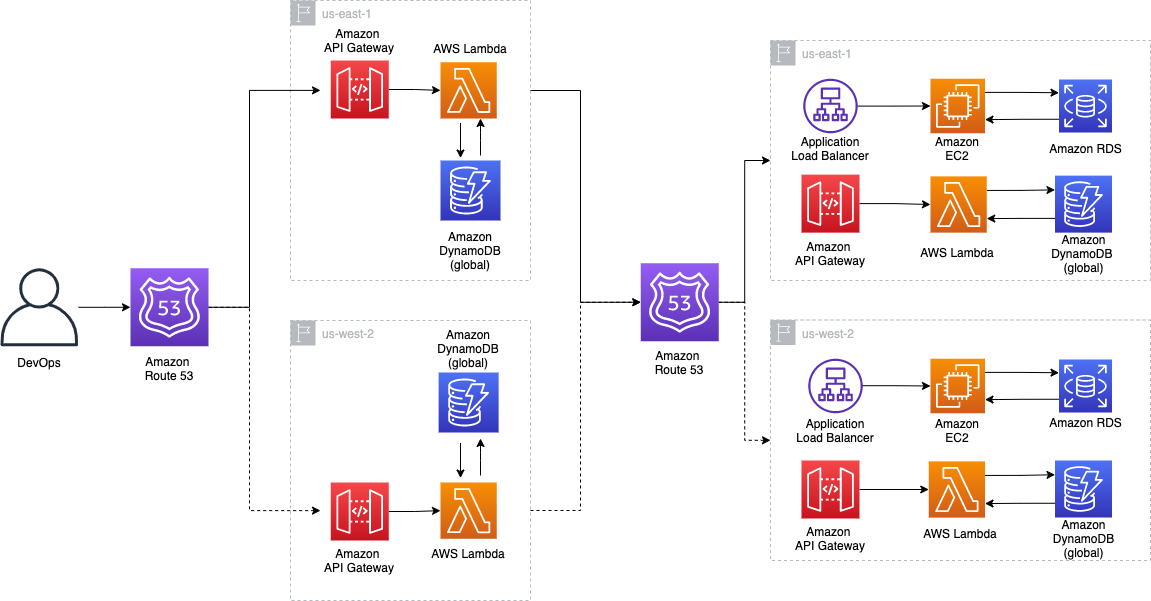

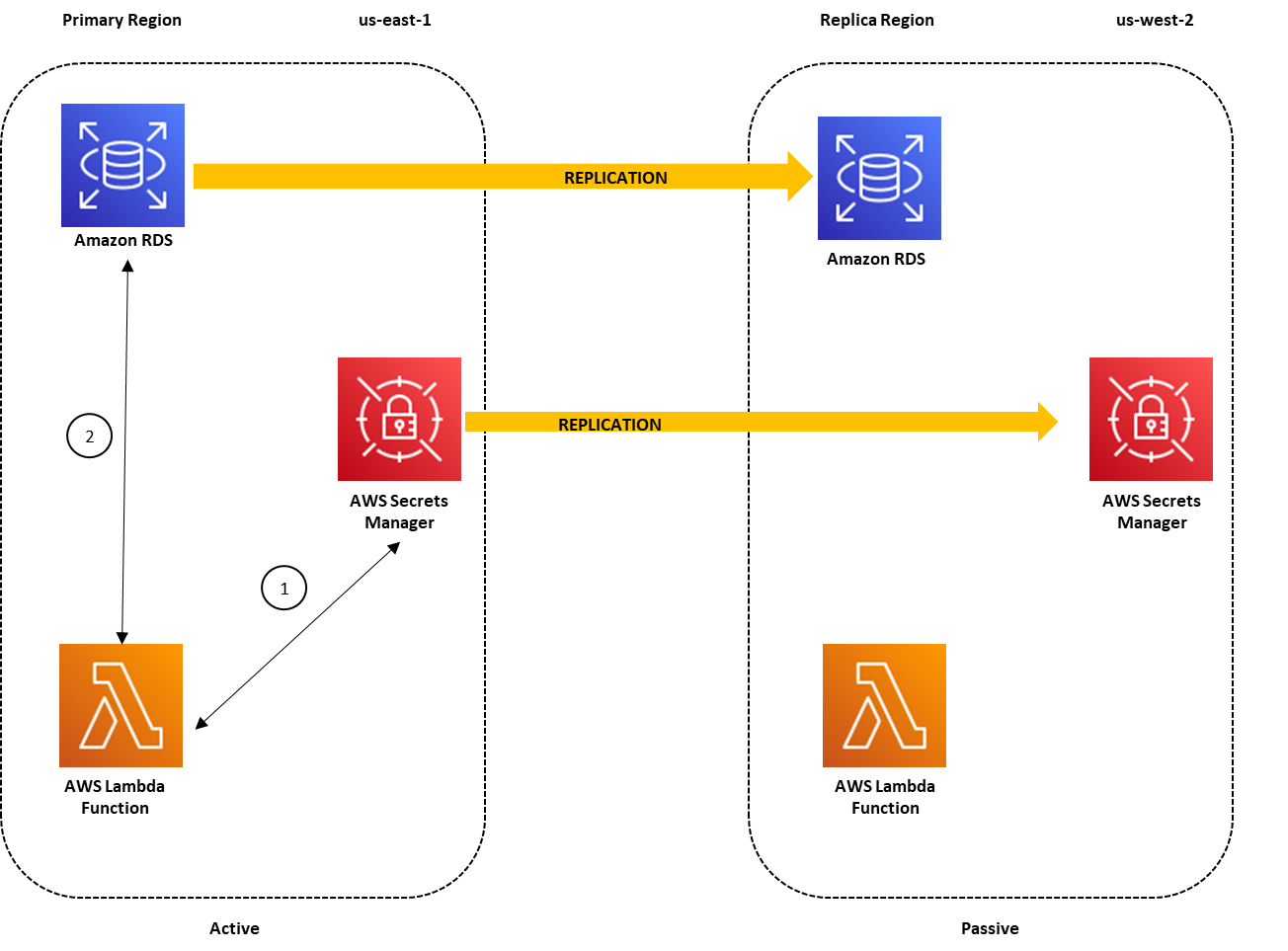

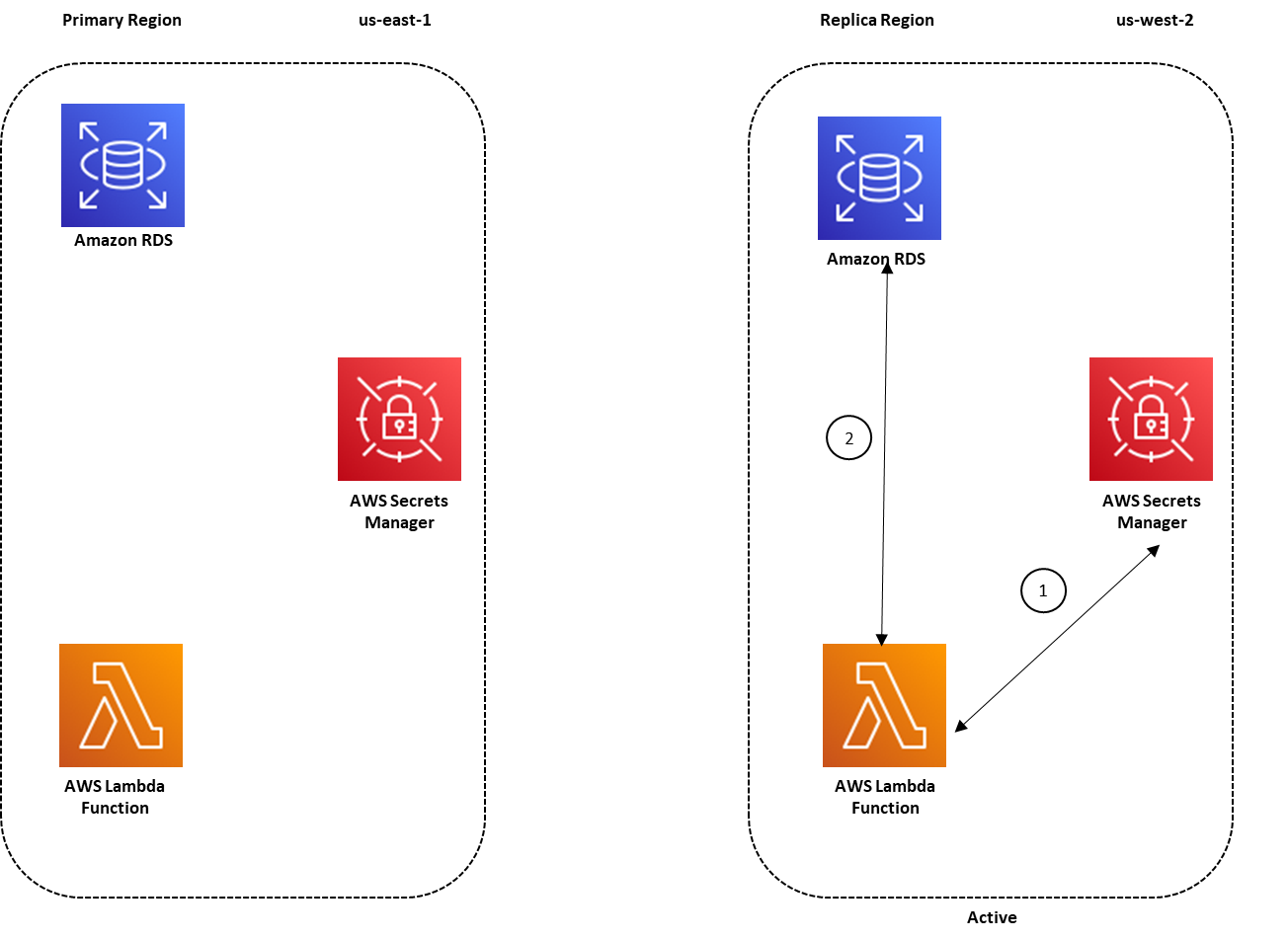

A well-designed backup strategy should include actions that can protect and recover your resources from ransomware, with detailed recovery requirements for your applications and their data dependencies. For example, while you establish preventive and detective controls to mitigate the risk of ransomware, you should also design the appropriate level of granularity for cross-Region and cross-account copy and restore patterns, so that administrators do not restore corrupt backup data during a security event.

In some industries, when developing a backup strategy, you must also consider the regulations for data retention requirements. You should make sure your backup strategy is designed with the necessary retention requirements (per data classification level and/or resource type) sufficient to meet your regulatory needs.

Consult your security compliance teams to validate whether your backup resources and operations should be included in, or segmented from, the scope of your compliance programs. In my experience as a PCI DSS Qualified Security Assessor (QSA), I’ve seen more mature customers include backup and recovery as critical parts of their security program. This helps them understand where data is across their environment and appropriately define compliance scope.

Refer to Backup and Recovery Approaches Using AWS and the Reliability Pillar of the AWS Well-Architected Framework for architectural best practices for designing and operating reliable, secure, efficient, and cost-effective workloads in the cloud.

#2 – Incorporate backup in DR and BCP

Disaster recovery (DR) is the process of preparing for, responding to, and recovering from a disaster. It is an important part of your resiliency strategy, and concerns how your workload responds when a disaster strikes. A disaster could be a technical failure, human action, or natural event. A Business Continuity Plan (BCP) outlines how an organization intends to continue normal business operations during an unplanned disruption.

Your disaster recovery plan should be a subset of your organization’s business continuity plan (BCP) and you should incorporate AWS Backup procedures in your enterprise business continuity plan. For example, a security event that affects production data might require you to invoke a disaster recovery plan that fails over to backup data from another AWS Region. You should ensure that your employees are familiar with and have practiced using AWS Backup along with your organizational procedures, so that if disaster strikes, your organization can continue its normal operations with little or no service disruption.

#3 – Automate backup operations

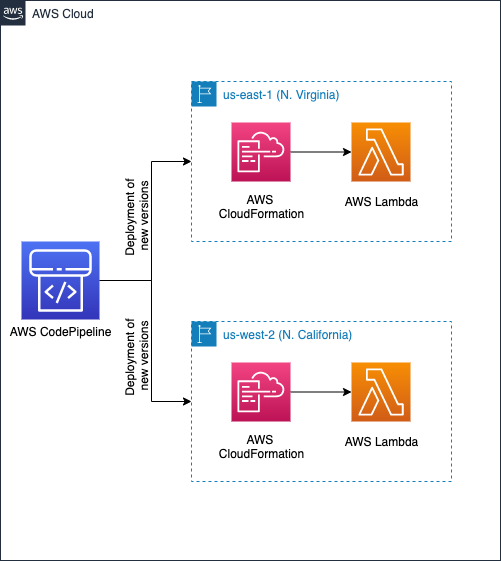

Organizations should configure their backup plans and resource assignments to reflect their enterprise data protection policies. Automating and deploying backup policies or organization-wide backup plans allows you to standardize and scale your backup strategy. You can leverage AWS Organizations to centrally automate backup policies to implement, configure, manage, and govern backup activity across supported AWS resources by scheduling backup operations.

You should consider implementing infrastructure as code (IaC) and event-driven architecture as essential parts of your digital transformation and backup strategy, to improve productivity and govern infrastructure operations across multi-account environments. Automating backups allows you to reduce manual overhead from time-consuming configuration of your backups, minimizes the risk for errors, provides visibility on drift detection, and enhances backup policy compliance across multiple AWS workloads or accounts.

Implementing backup policies as code can help you meet data protection regulations, by configuring different requirements for your resource types, scaling your enterprise data protection strategy, and implementing lifecycle rules to specify how long before a recovery point either transitions to cold storage or is deleted, which can help optimize your costs.
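As a minimal sketch of a backup policy expressed as code, the snippet below builds the dictionary that the boto3 `create_backup_plan` call accepts and checks the lifecycle settings before anything is sent to AWS. The plan name, vault name, and retention values are hypothetical; the check reflects the AWS Backup requirement that recovery points stay in cold storage for at least 90 days.

```python
def build_backup_plan(name, vault_name, schedule, cold_after_days, delete_after_days):
    """Return a BackupPlan document with one rule and a lifecycle policy."""
    # Recovery points moved to cold storage must remain there for at least
    # 90 days, so deletion must come 90 or more days after the transition.
    if delete_after_days < cold_after_days + 90:
        raise ValueError("DeleteAfterDays must be at least MoveToColdStorageAfterDays + 90")
    return {
        "BackupPlanName": name,
        "Rules": [
            {
                "RuleName": f"{name}-rule",
                "TargetBackupVaultName": vault_name,
                "ScheduleExpression": schedule,  # AWS cron syntax, evaluated in UTC
                "Lifecycle": {
                    "MoveToColdStorageAfterDays": cold_after_days,
                    "DeleteAfterDays": delete_after_days,
                },
            }
        ],
    }


# Hypothetical example: daily backup at 05:00 UTC, cold after 30 days, deleted after one year.
plan = build_backup_plan("daily-critical", "central-vault", "cron(0 5 ? * * *)", 30, 365)

# With boto3 installed and credentials configured, the plan could be created with:
#   boto3.client("backup").create_backup_plan(BackupPlan=plan)
```

Keeping the plan as a plain data structure makes it easy to review in version control and to validate in a pipeline before deployment.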

When automating your backup operations, you can scale resource assignment using tags and resource IDs to automatically identify the AWS resources that store data for your business-critical applications, and protect that data using immutable backups. This can help you prioritize security controls, such as access permissions and backup plans or policies.
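Tag-based resource assignment can be sketched as follows. The function builds the `BackupSelection` document used by the boto3 `create_backup_selection` call; the selection name, role ARN, and tag key and value are hypothetical placeholders.

```python
def build_tag_selection(selection_name, iam_role_arn, tag_key, tag_value):
    """Return a BackupSelection that assigns every resource carrying the given tag."""
    return {
        "SelectionName": selection_name,
        "IamRoleArn": iam_role_arn,  # role that AWS Backup assumes to run the jobs
        "ListOfTags": [
            {
                "ConditionType": "STRINGEQUALS",
                "ConditionKey": tag_key,
                "ConditionValue": tag_value,
            }
        ],
    }


# Hypothetical values for illustration only.
selection = build_tag_selection(
    "business-critical",
    "arn:aws:iam::111122223333:role/ExampleBackupRole",
    "backup-tier",
    "critical",
)

# With boto3 and a plan ID from an existing backup plan:
#   boto3.client("backup").create_backup_selection(
#       BackupPlanId=plan_id, BackupSelection=selection)
```

Any supported resource that is later tagged `backup-tier=critical` is then picked up by the plan without further changes.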

#4 – Implement access control mechanisms

When thinking about security in the cloud, your foundational strategy should begin with a strong identity foundation to ensure a user has the right permissions to access data. Appropriate authentication and authorization can mitigate the risk of security events. The shared responsibility model requires AWS customers to implement access control policies. You can use the AWS Identity and Access Management (IAM) service to create and manage access policies at scale.

When configuring access rights and permissions, you should implement the principle of least privilege by ensuring each user or system accessing your backup data or vault is given only the permissions necessary to fulfill their job duties. Using AWS Backup, you should implement access control policies by setting access policies on backup vaults to protect your cloud workloads.

For example, implementing access control policies allows you to grant users access to create backup plans and on-demand backups, but still limit their ability to delete recovery points once they’ve been created. Using vault access policies, you can share a destination backup vault with a source AWS account, user, or IAM role, as required by your business needs. A vault access policy can also let you share a backup vault with one or multiple accounts, or with your entire organization in AWS Organizations.
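A vault access policy of this kind might look like the sketch below, which denies a given role the ability to delete recovery points or change their lifecycle. The role ARN and vault name are hypothetical, and you should adapt the action list to your own requirements.

```python
import json


def deny_recovery_point_deletion(role_arn):
    """Return a vault access policy that blocks one role from removing recovery points."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyRecoveryPointDeletion",
                "Effect": "Deny",
                "Principal": {"AWS": role_arn},
                "Action": [
                    "backup:DeleteRecoveryPoint",
                    "backup:UpdateRecoveryPointLifecycle",
                ],
                "Resource": "*",
            }
        ],
    }


# Hypothetical role used by backup operators.
vault_policy = deny_recovery_point_deletion("arn:aws:iam::111122223333:role/ExampleBackupOperator")
vault_policy_json = json.dumps(vault_policy)

# With boto3, the policy could be attached to a vault named "central-vault" with:
#   boto3.client("backup").put_backup_vault_access_policy(
#       BackupVaultName="central-vault", Policy=vault_policy_json)
```

The role can still hold IAM permissions to create backup plans and start on-demand backups; the explicit deny on the vault only removes the destructive actions.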

As you scale your workloads or migrate into AWS, you may need to centrally manage permissions to your backup vaults and operations. You should use service control policies (SCPs) to implement centralized control over the maximum available permissions for all accounts in your organization. This offers defense in depth, and ensures your users stay within the defined access control guidelines. To learn more, read how you can secure your AWS Backup data and operations using service control policies (SCPs).
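As an illustration, an SCP that protects backup vaults across an organization could be generated like this. The exempted role pattern is a hypothetical break-glass role, and the action list is one possible selection rather than a complete recommendation.

```python
import json


def build_backup_protection_scp(exempt_role_arn_pattern):
    """Return an SCP that denies destructive AWS Backup actions to everyone except one role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ProtectBackupVaults",
                "Effect": "Deny",
                # SCP statements have no Principal element; they apply to the
                # accounts or organizational units the policy is attached to.
                "Action": [
                    "backup:DeleteBackupVault",
                    "backup:DeleteRecoveryPoint",
                    "backup:PutBackupVaultAccessPolicy",
                    "backup:DeleteBackupVaultAccessPolicy",
                    "backup:UpdateRecoveryPointLifecycle",
                ],
                "Resource": "*",
                "Condition": {
                    "ArnNotLike": {"aws:PrincipalARN": exempt_role_arn_pattern}
                },
            }
        ],
    }


# Hypothetical break-glass role that is allowed to manage vaults in every account.
scp = build_backup_protection_scp("arn:aws:iam::*:role/ExampleBackupAdmin")
scp_json = json.dumps(scp, indent=2)

# With boto3, run from the organization's management account:
#   boto3.client("organizations").create_policy(
#       Name="protect-backups", Description="Deny destructive backup actions",
#       Type="SERVICE_CONTROL_POLICY", Content=scp_json)
```

Because an SCP only sets the maximum available permissions, it complements vault access policies and IAM policies rather than replacing them.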

To mitigate security risks such as unintended access to your backup resources and data, use AWS IAM Access Analyzer to identify any AWS Backup IAM role that is shared with an external entity, such as an AWS account, a root user, an IAM user or role, a federated user, an AWS service, an anonymous user, or another entity that you can use to create a filter.

#5 – Encrypt backup data and vault

Organizations increasingly need to improve their data security strategy, and may be required to meet data protection regulations as they scale in the cloud. Correctly implemented encryption provides an additional layer of protection on top of foundational access control mechanisms, and acts as a mitigation if your primary access control policies fail.

For example, if you configure overly permissive access control policies on your backup data, your key management system or process can limit the impact of a security event. Because access to your data and access to your encryption key are authorized separately, the backup data is viewable only as ciphertext without access to the key.

To get the most from AWS cloud encryption, you should encrypt data both in transit and at rest. To protect data in transit, calls to the published AWS Backup API travel over the network using the Transport Layer Security (TLS) protocol, which provides encryption between you, your application, and the AWS Backup service. To protect data at rest, AWS offers cloud-native options such as AWS Key Management Service (AWS KMS) and AWS CloudHSM, which use the Advanced Encryption Standard with 256-bit keys (AES-256), a strong industry-adopted algorithm for encrypting data. You should evaluate your data governance and regulatory requirements, and select the appropriate encryption service to encrypt your cloud data and backup vaults.

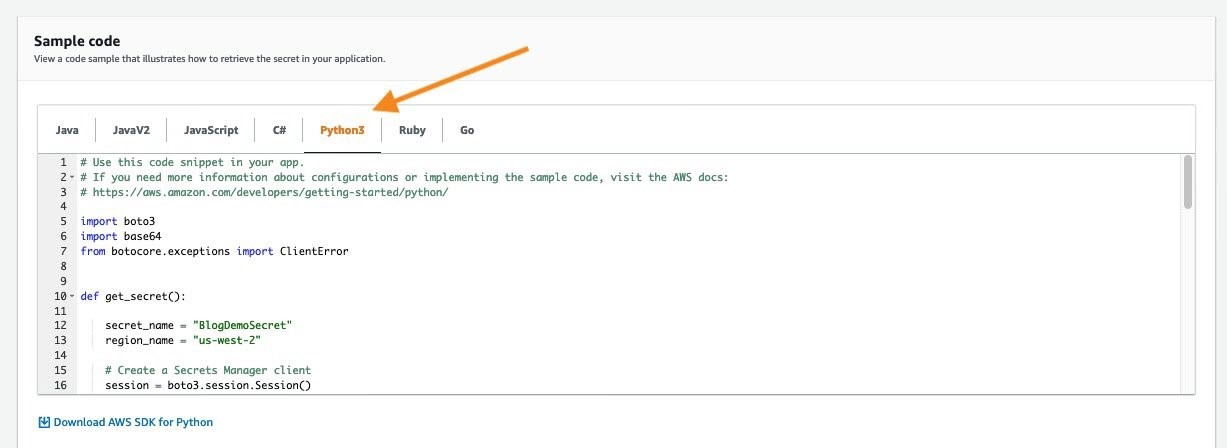

Encryption configuration differs depending on the resource type and on whether backup operations span accounts or Regions. Certain resource types support the ability to encrypt your backups using a separate encryption key from the key used to encrypt the source resource. Since you are responsible for managing access controls to determine who can access your backup data or vault encryption keys and under which conditions, you should use the policy language offered by AWS KMS to define access controls on keys. You can also use AWS Backup Audit Manager to confirm that your backup is properly encrypted.
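For a quick spot check outside of AWS Backup Audit Manager, the sketch below filters recovery point records for any that are not encrypted. It assumes the records have the shape returned by the boto3 `list_recovery_points_by_backup_vault` call, which includes an `IsEncrypted` flag; the sample ARNs are made up.

```python
def find_unencrypted(recovery_points):
    """Return the ARNs of recovery points whose IsEncrypted flag is missing or False."""
    return [
        rp["RecoveryPointArn"]
        for rp in recovery_points
        if not rp.get("IsEncrypted", False)
    ]


# Made-up sample records in the shape of the RecoveryPoints list from the API response.
sample_points = [
    {"RecoveryPointArn": "arn:aws:ec2:us-east-1::snapshot/snap-aaa", "IsEncrypted": True},
    {"RecoveryPointArn": "arn:aws:ec2:us-east-1::snapshot/snap-bbb", "IsEncrypted": False},
    {"RecoveryPointArn": "arn:aws:ec2:us-east-1::snapshot/snap-ccc"},
]
unencrypted = find_unencrypted(sample_points)

# With boto3, the input could come from a paginated call such as:
#   boto3.client("backup").list_recovery_points_by_backup_vault(
#       BackupVaultName="central-vault")["RecoveryPoints"]
```

Treating a missing flag as unencrypted is a deliberately conservative choice, so that incomplete records are flagged for review rather than silently passed.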

To learn more, refer to the documentation on encryption for backups and backup copies.

AWS KMS multi-Region keys allow you to replicate keys from one Region into another. Multi-Region keys are designed to simplify encryption management when your encrypted data has to be copied into other Regions for disaster recovery. You should evaluate the need to implement multi-Region KMS keys as part of your overall backup strategy.

#6 – Safeguard backups using immutable storage

Immutable storage allows organizations to write data in a Write Once Read Many (WORM) state. While in a WORM state, data can be written once, and then read and used as often as needed after it has been committed to the storage medium. Immutable storage helps maintain data integrity and protects against deletions, overwrites, inadvertent or unauthorized access, and ransomware compromise. Immutable storage offers an efficient mechanism to address potential security events with real impacts on your business operations.

Immutable storage can be used for better governance when paired with strong SCP restrictions, or in a compliance WORM mode when legal requirements (such as a legal hold) call for access to immutable data.

You can maintain data availability and integrity with AWS Backup Vault Lock to protect your backups* such that unauthorized entities cannot erase, alter or corrupt your customer or business data during the required retention period. AWS Backup Vault Lock helps you meet your organization’s data protection policies by preventing deletions by privileged users (including the AWS account root user), changes to your backup lifecycle settings, and updates that alter your defined retention period.

AWS Backup Vault Lock ensures immutability and adds an additional layer of defense that protects backups (recovery points) in your backup vaults, especially in highly regulated industries with stringent integrity needs for backups and archives. AWS Backup Vault Lock makes sure your data is preserved along with a backup to recover from in case of unintended or malicious actions.
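A Vault Lock configuration can be prepared and validated in code before it is applied. The sketch below builds the parameters for the boto3 `put_backup_vault_lock_configuration` call; the vault name and retention values are hypothetical. Supplying `ChangeableForDays` starts a grace period of at least three days, after which the lock can no longer be changed or removed, so test with care.

```python
def build_vault_lock_config(vault_name, min_retention_days, max_retention_days,
                            changeable_for_days=None):
    """Return keyword arguments for put_backup_vault_lock_configuration."""
    if min_retention_days > max_retention_days:
        raise ValueError("MinRetentionDays cannot exceed MaxRetentionDays")
    if changeable_for_days is not None and changeable_for_days < 3:
        raise ValueError("ChangeableForDays must be at least 3")
    params = {
        "BackupVaultName": vault_name,
        "MinRetentionDays": min_retention_days,
        "MaxRetentionDays": max_retention_days,
    }
    if changeable_for_days is not None:
        # After this many days the lock becomes immutable.
        params["ChangeableForDays"] = changeable_for_days
    return params


# Hypothetical example: keep recovery points between 30 days and 7 years,
# with a 3-day window to revert the lock.
lock_params = build_vault_lock_config("central-vault", 30, 2555, changeable_for_days=3)

# With boto3:
#   boto3.client("backup").put_backup_vault_lock_configuration(**lock_params)
```

Backup plan lifecycle rules that target the vault need retention periods that fall inside the configured minimum and maximum, otherwise backup and copy jobs to that vault fail.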

*The feature has not yet been assessed for compliance with the Securities and Exchange Commission (SEC) rule 17a-4(f) and the Commodity Futures Trading Commission (CFTC) in regulation 17 C.F.R. 1.31(b)-(c).

#7 – Implement backup monitoring and alerting

Backup jobs can fail. A failed job, such as a backup, restore, or copy task, can affect subsequent steps in a process. When the initial backup job fails, there’s a high probability that succeeding tasks will also fail. In such a scenario, you can best understand the course of events through monitoring and notification.

Enabling and configuring notifications to generate emails to monitor AWS Backup jobs gives you awareness of your backup activities, ensures you meet critical service-level agreements (SLAs), enhances your business-as-usual monitoring, and helps you meet compliance obligations. You can implement backup monitoring for your workloads by integrating AWS Backup with other AWS services and ticketing systems to perform automated investigation and escalation flows.

For example, use Amazon CloudWatch to track metrics, create alarms, and view dashboards, Amazon EventBridge to monitor AWS Backup processes and events, AWS CloudTrail to monitor AWS Backup API calls with detailed information on the time, source IP, users, and accounts making those calls, and Amazon Simple Notification Service (Amazon SNS) to subscribe to AWS Backup-related topics such as backup, restore, and copy events. Monitoring and alerting can provide organizational awareness for your backup jobs, which helps you respond to backup failures.
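For instance, an Amazon EventBridge rule for unsuccessful backup jobs could use an event pattern like the one below. The pattern assumes that AWS Backup job events carry a `state` field under `detail`; confirm the field names against a sample event in your own account. The `matches` helper is a simplified local stand-in for EventBridge matching, intended only for unit-testing the pattern.

```python
FAILED_BACKUP_JOB_PATTERN = {
    "source": ["aws.backup"],
    "detail-type": ["Backup Job State Change"],
    "detail": {"state": ["FAILED", "ABORTED", "EXPIRED"]},
}


def matches(pattern, event):
    """Simplified matcher: supports only exact-value lists and nested objects."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        if isinstance(expected, dict):
            if not isinstance(event[key], dict) or not matches(expected, event[key]):
                return False
        elif event[key] not in expected:
            return False
    return True


# Made-up events for a local check of the pattern.
failed_event = {
    "source": "aws.backup",
    "detail-type": "Backup Job State Change",
    "detail": {"state": "FAILED", "backupVaultName": "central-vault"},
}
completed_event = {
    "source": "aws.backup",
    "detail-type": "Backup Job State Change",
    "detail": {"state": "COMPLETED", "backupVaultName": "central-vault"},
}

# With boto3, the pattern could back a rule that targets an SNS topic:
#   boto3.client("events").put_rule(
#       Name="backup-job-failed", EventPattern=json.dumps(FAILED_BACKUP_JOB_PATTERN))
```

Routing only the unsuccessful states keeps notification volume low enough that each alert can be investigated.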

You can use AWS Backup Audit Manager to automatically generate evidence of your daily backup audit reports per account and Region. You can also scale your backup monitoring across multiple accounts by using a set of automation templates and dashboards (known as the backup observer solution) to obtain aggregated daily cross-account multi-Region AWS Backup reporting.

#8 – Audit backup configuration

Organizations should audit the compliance of AWS Backup policies against defined controls such as defined backup frequency. You should continuously and automatically track your backup activity and generate automatic reports to find and investigate backup operations or resources which are not compliant with your business requirements.

AWS Backup Audit Manager provides built-in, customizable compliance controls that align with your business compliance and regulatory requirements. AWS Backup Audit Manager provides five backup governance control templates, such as backup resources protected by a backup plan, and backup plan minimum frequency and minimum retention. If you leverage infrastructure-as-code automation, you can use AWS Backup Audit Manager with AWS CloudFormation.
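A framework that uses two of these controls might be defined as in the following sketch, which builds the arguments for the boto3 `create_framework` call. The framework name and threshold values are hypothetical, and the control and parameter names should be checked against the current AWS Backup Audit Manager documentation before use.

```python
def build_audit_framework(name, frequency_hours, retention_days):
    """Return keyword arguments for create_framework with two governance controls."""
    return {
        "FrameworkName": name,  # letters, digits, and underscores only
        "FrameworkDescription": "Baseline backup governance checks",
        "FrameworkControls": [
            {"ControlName": "BACKUP_RESOURCES_PROTECTED_BY_BACKUP_PLAN"},
            {
                "ControlName": "BACKUP_PLAN_MIN_FREQUENCY_AND_MIN_RETENTION_CHECK",
                # Parameter values are passed as strings.
                "ControlInputParameters": [
                    {"ParameterName": "requiredFrequencyUnit", "ParameterValue": "hours"},
                    {"ParameterName": "requiredFrequencyValue",
                     "ParameterValue": str(frequency_hours)},
                    {"ParameterName": "requiredRetentionDays",
                     "ParameterValue": str(retention_days)},
                ],
            },
        ],
    }


# Hypothetical thresholds: at least one backup every 24 hours, kept for 35 days or more.
framework = build_audit_framework("backup_governance", 24, 35)

# With boto3:
#   boto3.client("backup").create_framework(**framework)
```

AWS Backup Audit Manager relies on AWS Config resource tracking, so resource recording needs to be enabled in each account and Region where the framework is deployed.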

AWS Security Hub provides you with a comprehensive view of your security state in AWS and helps you check your environment against security best practices and industry standards such as AWS Foundational Security Best Practices controls. If you leverage AWS Security Hub within your cloud environment, we recommend you enable the AWS Foundational Security Best Practices, as it includes detective controls that can help with securing backups in AWS. The detective controls in AWS Backup Audit Manager and Security Hub are also mostly available as AWS managed rules in AWS Config.

#9 – Test data recovery capabilities

Any data stored as a backup must be restorable when required, so your backup strategy must include testing your backups. A backup strategy is not effective if backed-up data cannot be restored. You should regularly test your ability to find certain recovery points and restore them. While AWS Backup automatically copies tags from the resources it protects to the recovery points, tags are not copied from recovery points to the corresponding restored resources. To scale your inventory management and locate recovery points, you should consider retaining your tags on resources created by AWS Backup restore jobs, using AWS Backup events to trigger a tag replication process.
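The core of such a tag replication process is deciding which tags to carry over. The sketch below shows one way to do that in an event handler; the function name is hypothetical, and how you read tags from the recovery point and write them to the restored resource depends on the resource type. Tag keys that begin with `aws:` are reserved by AWS and cannot be set by users, so they are skipped.

```python
def tags_to_replicate(recovery_point_tags, restored_resource_tags):
    """Return recovery point tags that should be added to the restored resource.

    Skips reserved "aws:" keys and never overwrites a tag that the restored
    resource already has.
    """
    return {
        key: value
        for key, value in recovery_point_tags.items()
        if not key.lower().startswith("aws:") and key not in restored_resource_tags
    }


# Made-up tag sets for illustration.
recovery_point_tags = {
    "aws:backup:source-resource": "vol-0123456789abcdef0",
    "backup-tier": "critical",
    "owner": "payments",
}
restored_resource_tags = {"owner": "dr-test"}

new_tags = tags_to_replicate(recovery_point_tags, restored_resource_tags)
```

Leaving existing tags untouched is a design choice for this sketch: it lets a restore test label its resources (for example, with a different owner) without the replication step overwriting them.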

You can start your data recovery workflow by establishing data recovery patterns and then regularly testing them. You should create a simple and repeatable process that allows you to perform continuous data recovery testing to increase confidence in your ability to recover backup data. For example, you can create a pattern to test a cross-account, cross-Region restore operation from a central DR backup vault encrypted with a customer-managed KMS key to a source account backup vault encrypted with a different customer-managed KMS key.

If you don’t frequently test such restore operations, you may find that your assumptions on KMS encryption for cross-account, cross-Region operations are incorrect. Oftentimes, the only backup recovery pattern that actually works is the path you test frequently. Through routine testing of supported backup resource types, you can spot early warnings of issues that could cause future disruption and loss of critical data. If possible, maintain a limited but feasible number of recovery paths and patterns to prevent wasted storage space, optimize costs, and save time. It’s easier to fix a problem when a recovery test fails than to lose valuable or critical data.

#10 – Incorporate backup in incident response plan

Security Incident Response Simulations (SIRS) are internal events that provide a structured opportunity to practice your incident response plan and procedures during a realistic scenario. It’s valuable to test your backup data and operations in creative SIRS activities to test yourself against the unexpected. This helps you validate your organizational readiness and develop comfort with the rare and unexpected. Your simulations must be realistic, and should involve cross-functional organizational teams required to respond to events.

Start with basic and easy simulation exercises, and work towards a full, complex event. For example, you can build a realistic model that consists of an Amazon Virtual Private Cloud and associated resources that simulate inadvertent overexposure of information or a potential data breach due to changes to policies and access control lists. Document lessons learned to evaluate how well your incident response plan worked, and to identify improvements that need to be made to future response procedures.

You can use AWS Backup to set up automated instance-level backups as AMIs and volume-level backups as snapshots across multiple AWS accounts. This can help your incident response team enhance their forensic process such as automated forensic disk collection, by providing a restore point that could reduce the scope and impact of potential security events such as ransomware.

Conclusion

In this blog post, I showed you the top ten security best practices and controls to protect your backup data in AWS. I encourage you to use these best practices to design and implement a backup and recovery strategy and architecture with multiple layers of controls that scales and achieves your business needs. To learn more about AWS Backup, refer to the AWS Backup documentation.

If you have feedback about this post, submit comments in the Comments section below. If you have questions about this post, start a new thread on the AWS Backup forum or contact AWS Support.

Further reading

Additional resources to consider:

Prescriptive Guidance: Backup and recovery approaches on AWS

Blog: Automate centralized backup at scale across AWS services using AWS Backup

Blog: Disaster Recovery (DR) Architecture on AWS, Part I: Strategies for Recovery in the Cloud

Blog: The importance of encryption and how AWS can help

Blog: Enhance the security posture of your backups with AWS Backup Vault Lock

Blog: Monitor, Evaluate, and Demonstrate Backup Compliance with AWS Backup Audit Manager

Blog: Create and share encrypted backups across accounts and Regions using AWS Backup

Blog: Simplify auditing your data protection policies with AWS Backup Audit Manager

Blog: Managing access to backups using service control policies with AWS Backup

Blog: Obtain aggregated daily cross-account multi-Region AWS Backup reporting
