Post Syndicated from Channy Yun original https://aws.amazon.com/blogs/aws/new-amazon-lightsail-for-research-with-all-in-one-research-environments/

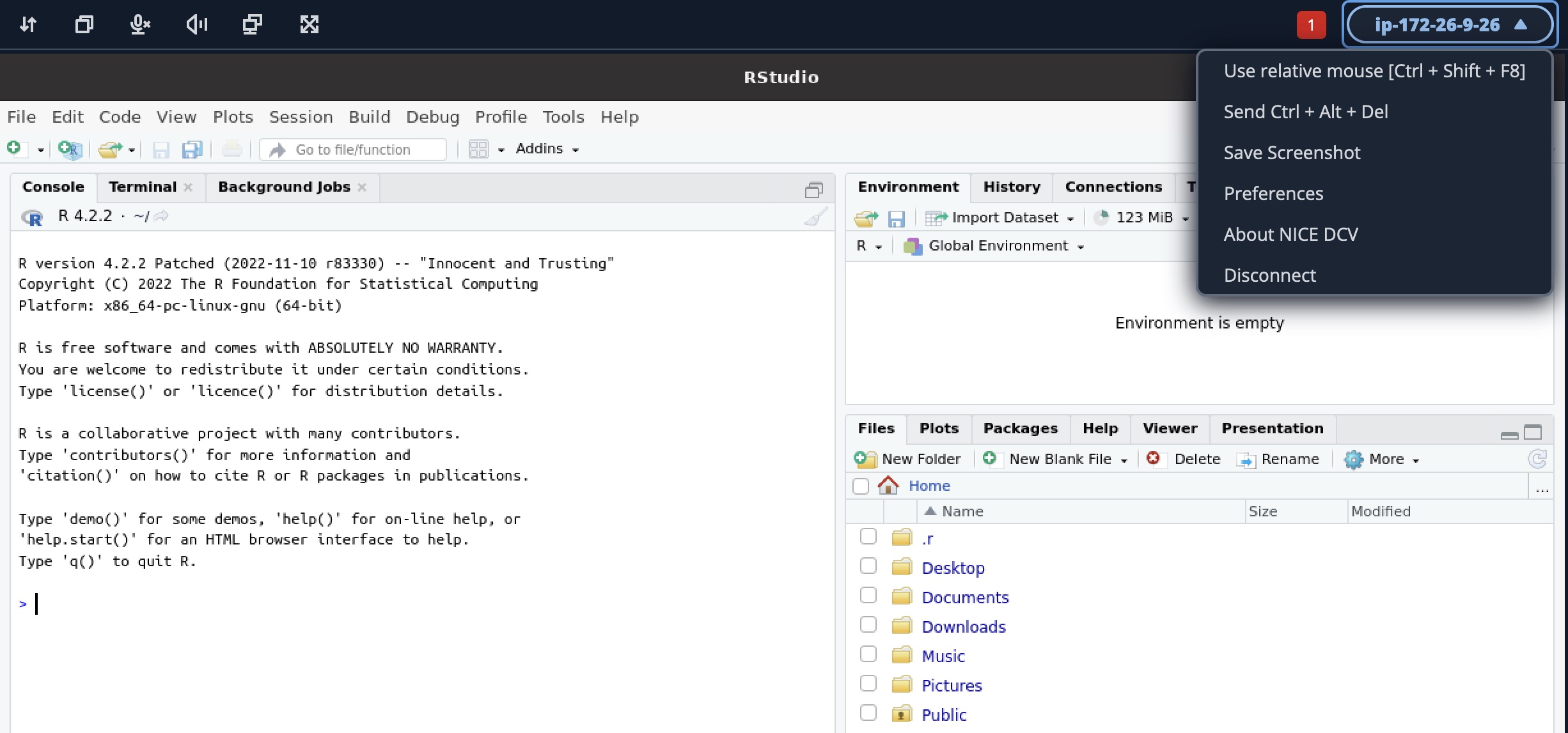

Today we are announcing the general availability of Amazon Lightsail for Research, a new offering that makes it easy for researchers and students to create and manage a high-performance CPU or GPU research computer in the cloud in just a few clicks. You can use your preferred integrated development environment (IDE), such as preinstalled Jupyter, RStudio, Scilab, or VSCodium, or the native Ubuntu operating system on your research computer.

You no longer need to use your own research laptop or shared school computers to analyze larger datasets or run complex simulations. You can create your own research environments and access the applications running on your research computer remotely through a web browser. You can also easily upload data to and download data from your research computer through a simple web interface.

You pay only for the time your computers are in use and can delete them at any time. You can also use budgeting controls that automatically stop your computer when it’s not in use. Lightsail for Research uses all-inclusive pricing for compute, storage, and data transfer, so you know exactly how much you will pay for the time you use the research computer.

Get Started with Amazon Lightsail for Research

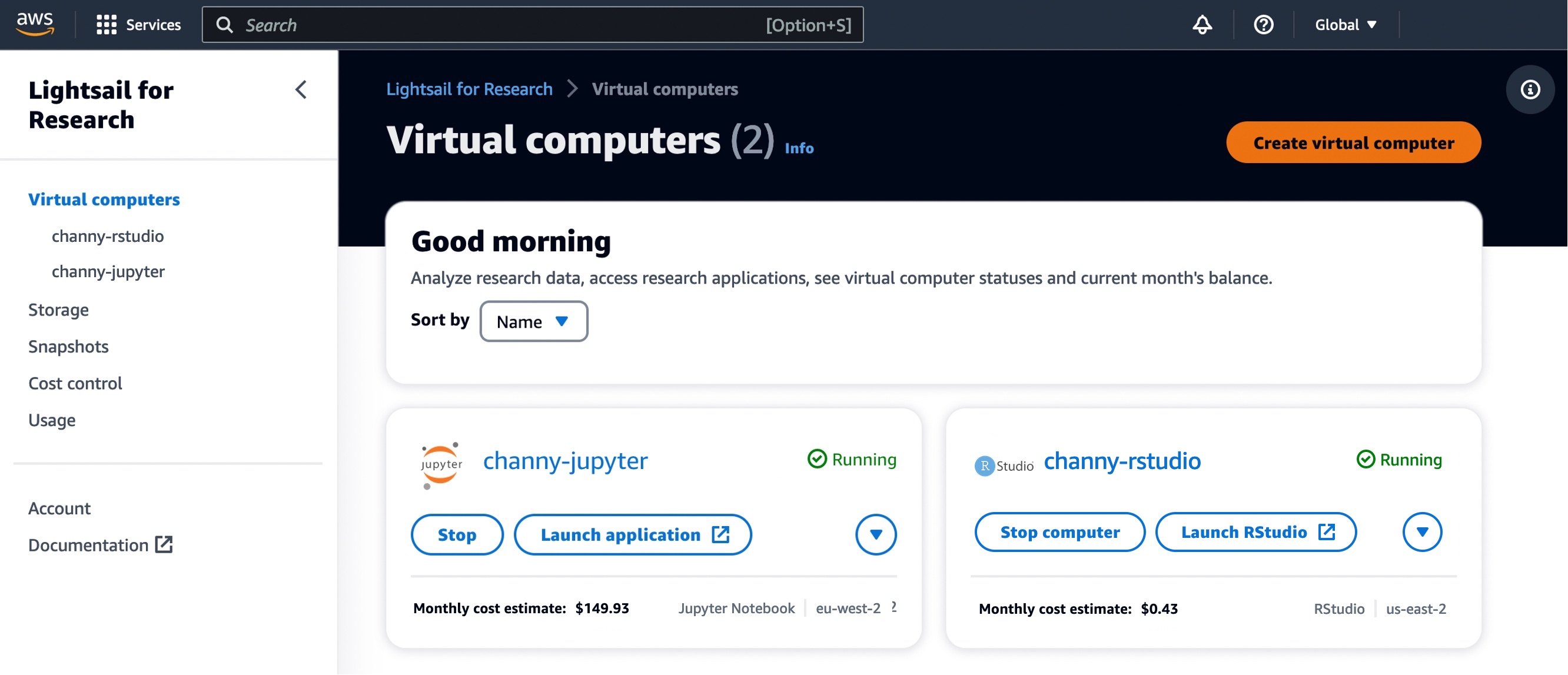

To get started, navigate to the Lightsail for Research console and choose Virtual computers in the left menu. You can see that my research computers, named “channy-jupyter” and “channy-rstudio”, have already been created.

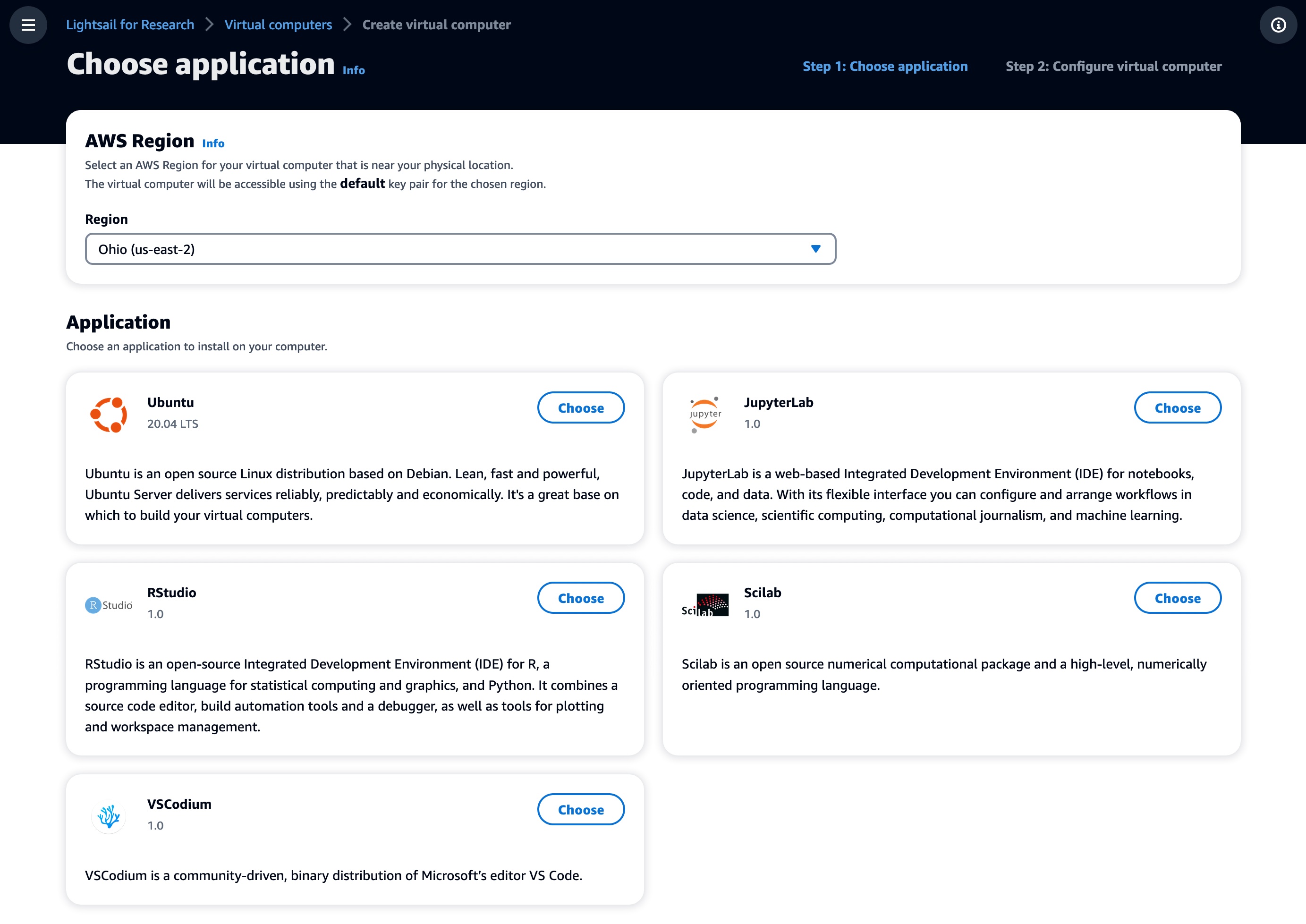

Choose Create virtual computer to create a new research computer, and select which software you’d like preinstalled on your computer and what type of research computer you’d like to create.

In the first step, choose the application you want installed on your computer and the AWS Region where the computer will be located. We support Jupyter, RStudio, Scilab, and VSCodium. You can install additional packages and extensions through the interface of these IDE applications.

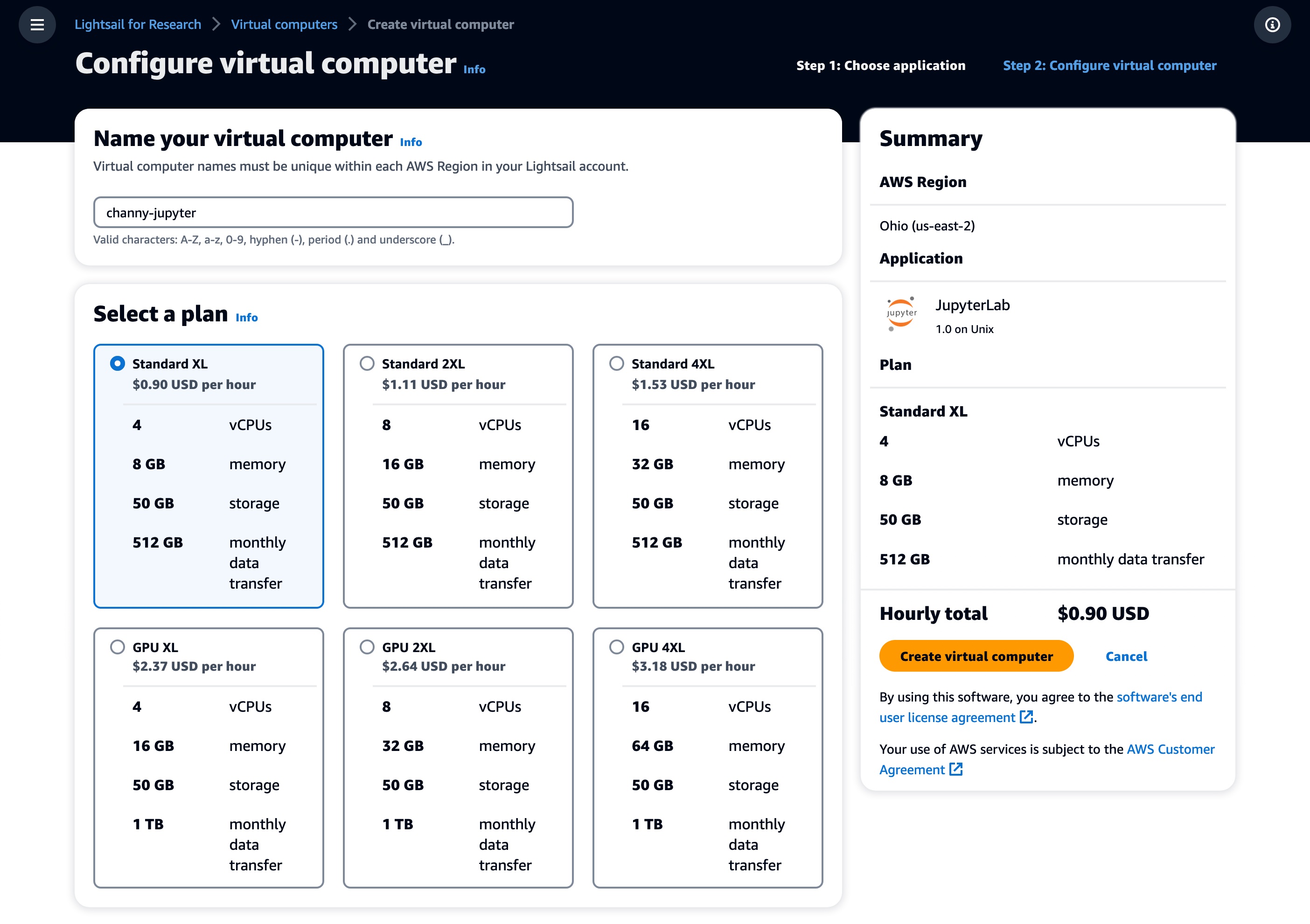

Next, choose the desired virtual hardware type, including a fixed amount of compute (vCPUs or GPUs), memory (RAM), SSD-based storage volume (disk) space, and a monthly data transfer allowance. Bundles are charged on an hourly and on-demand basis.

Standard types are compute-optimized and ideal for compute-bound applications that benefit from high-performance processors.

| Name | vCPUs | Memory | Storage | Monthly data transfer allowance* |
|---|---|---|---|---|
| Standard XL | 4 | 8 GB | 50 GB | 0.5 TB |
| Standard 2XL | 8 | 16 GB | 50 GB | 0.5 TB |
| Standard 4XL | 16 | 32 GB | 50 GB | 0.5 TB |

GPU types provide a high-performance platform for general-purpose GPU computing. You can use these bundles to accelerate scientific, engineering, and rendering applications and workloads.

| Name | GPU | vCPUs | Memory | Storage | Monthly data transfer allowance* |
|---|---|---|---|---|---|
| GPU XL | 1 | 4 | 16 GB | 50 GB | 1 TB |
| GPU 2XL | 1 | 8 | 32 GB | 50 GB | 1 TB |
| GPU 4XL | 1 | 16 | 64 GB | 50 GB | 1 TB |
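To make the two bundle tables easier to compare, here is a minimal Python sketch that encodes them as data and picks the smallest bundle that meets a workload’s vCPU, memory, and GPU needs. The `Bundle` class and `smallest_bundle` helper are hypothetical illustrations, not part of any Lightsail API, and treating “smallest” as fewest vCPUs, then least memory, is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bundle:
    name: str
    gpus: int
    vcpus: int
    memory_gb: int
    storage_gb: int
    transfer_tb: float  # monthly data transfer allowance


# Values transcribed from the Standard and GPU bundle tables.
BUNDLES = [
    Bundle("Standard XL", 0, 4, 8, 50, 0.5),
    Bundle("Standard 2XL", 0, 8, 16, 50, 0.5),
    Bundle("Standard 4XL", 0, 16, 32, 50, 0.5),
    Bundle("GPU XL", 1, 4, 16, 50, 1.0),
    Bundle("GPU 2XL", 1, 8, 32, 50, 1.0),
    Bundle("GPU 4XL", 1, 16, 64, 50, 1.0),
]


def smallest_bundle(vcpus: int, memory_gb: int, need_gpu: bool = False) -> Optional[Bundle]:
    """Hypothetical helper: return the smallest bundle meeting the requirements.

    This sketch only considers GPU bundles when a GPU is requested, and only
    Standard bundles otherwise. Returns None if nothing is large enough.
    """
    candidates = [
        b for b in BUNDLES
        if (b.gpus > 0) == need_gpu and b.vcpus >= vcpus and b.memory_gb >= memory_gb
    ]
    # "Smallest" = fewest vCPUs, then least memory (an assumption).
    return min(candidates, key=lambda b: (b.vcpus, b.memory_gb), default=None)


if __name__ == "__main__":
    print(smallest_bundle(6, 12).name)                 # Standard 2XL
    print(smallest_bundle(4, 20, need_gpu=True).name)  # GPU 2XL (GPU XL has only 16 GB)
```

Because every bundle in both tables has the same 50 GB of storage, the sketch does not filter on disk size; additional storage comes from attached disks.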

* AWS created the Global Data Egress Waiver (GDEW) program to help eligible researchers and academic institutions use AWS services by waiving data egress fees. To learn more, see the blog post.

After making your selections, name your computer and choose Create virtual computer to create your research computer. Once your computer is created and running, choose the Launch application button to open a new window that will display the preinstalled application you selected.

Lightsail for Research Features

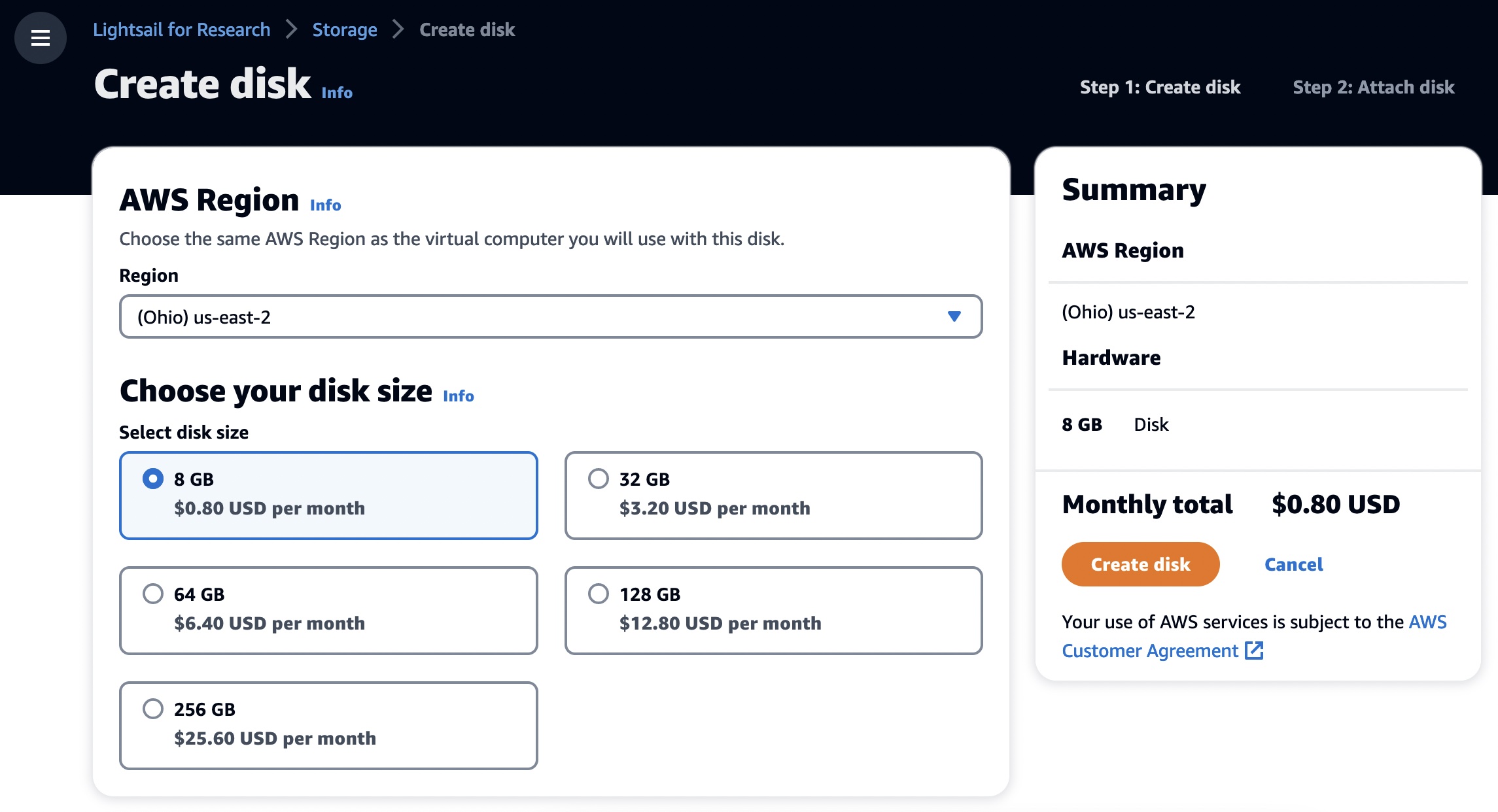

As with existing Lightsail instances, you can create additional block-level storage volumes (disks) and attach them to a running Lightsail for Research virtual computer. You can use a disk as a primary storage device for data that requires frequent and granular updates. To create a disk, choose Storage and then Create disk.

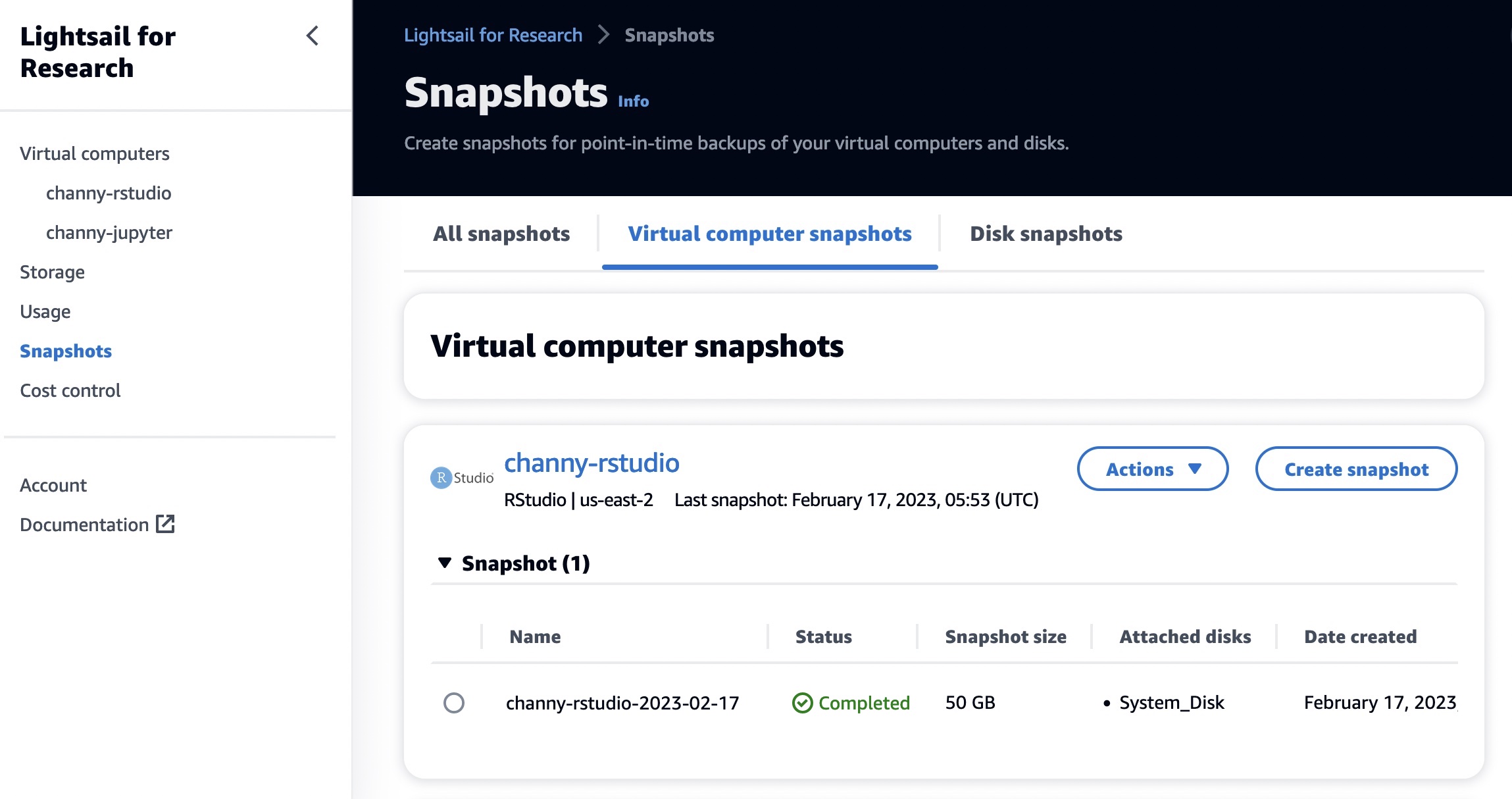

You can also create snapshots, which are point-in-time copies of your data. You can create a snapshot of a Lightsail for Research virtual computer and use it as a baseline for creating new computers or as a data backup. A snapshot contains all of the data needed to restore your computer to the moment the snapshot was taken.

By creating a new computer from a snapshot, you can restore your work or upgrade to a larger computer size. Create snapshots frequently to protect your data from corrupted applications or user errors.
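The point-in-time behavior described above can be illustrated with a toy Python model. This is a hypothetical sketch, not how Lightsail implements snapshots: `ResearchComputer`, `take_snapshot`, and `restore` are invented names, and a snapshot is modeled simply as a deep copy of the computer’s files. It shows two properties from the text: later changes (for example, a corrupted file) do not affect an existing snapshot, and restoring creates a new computer that can use a larger bundle.

```python
import copy
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ResearchComputer:
    # Toy model of a research computer: a name, a bundle, and its files.
    name: str
    bundle: str
    files: Dict[str, str] = field(default_factory=dict)


@dataclass(frozen=True)
class Snapshot:
    source: str
    files: Dict[str, str]


def take_snapshot(computer: ResearchComputer) -> Snapshot:
    # Deep-copy the data so later changes to the computer cannot alter the snapshot.
    return Snapshot(source=computer.name, files=copy.deepcopy(computer.files))


def restore(snapshot: Snapshot, new_name: str, bundle: str) -> ResearchComputer:
    # Restoring always produces a *new* computer; its bundle may be larger.
    return ResearchComputer(name=new_name, bundle=bundle, files=copy.deepcopy(snapshot.files))


pc = ResearchComputer("channy-jupyter", "Standard XL", {"notebook.ipynb": "v1"})
snap = take_snapshot(pc)
pc.files["notebook.ipynb"] = "corrupted"  # a bad change after the snapshot
bigger = restore(snap, "channy-jupyter-2xl", "Standard 2XL")
print(bigger.files["notebook.ipynb"])  # v1
```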

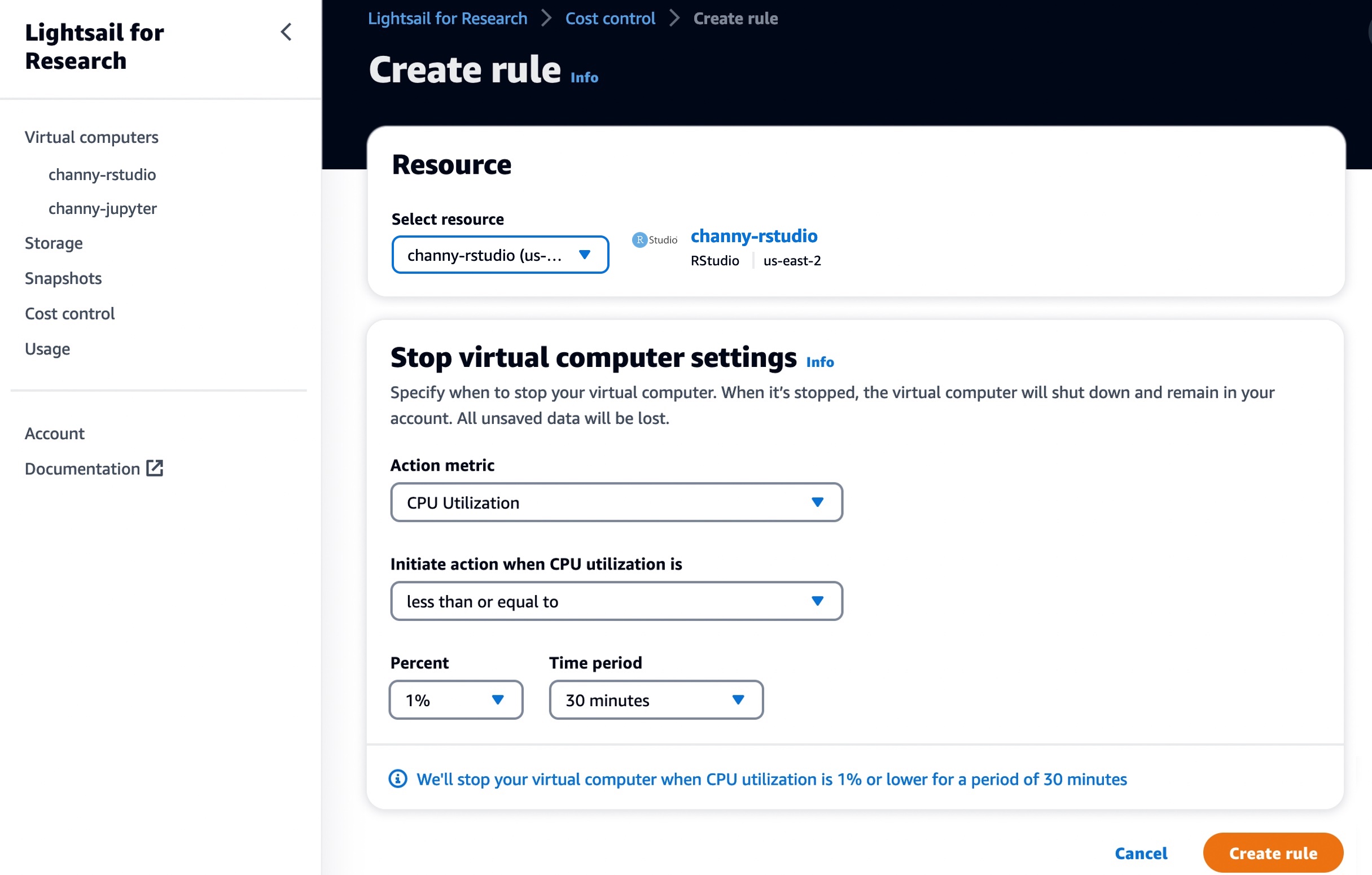

You can define cost control rules to help manage the usage and cost of your Lightsail for Research virtual computers. You can create rules that stop a running computer when its average CPU utilization over a selected time period falls below a prescribed level.

For example, you can configure a rule that stops a specific computer when its CPU utilization is equal to or less than 1 percent for a 30-minute period. Lightsail for Research then stops the computer automatically so that you don’t incur charges for a computer that is sitting idle.
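The example rule (average CPU utilization at or below 1 percent over 30 minutes) can be expressed as a small Python predicate. This sketches the rule’s logic only, not the Lightsail for Research implementation: it assumes CPU utilization arrives as evenly spaced percentage samples (every 5 minutes in the usage example), and the `should_stop` name and its parameters are hypothetical.

```python
from statistics import mean
from typing import Sequence


def should_stop(cpu_samples: Sequence[float], sample_minutes: int,
                threshold_pct: float = 1.0, window_minutes: int = 30) -> bool:
    """Hypothetical rule check: True if average CPU over the latest window <= threshold.

    cpu_samples holds utilization percentages, oldest first, one every sample_minutes.
    """
    needed = window_minutes // sample_minutes  # samples covering the window
    if needed == 0 or len(cpu_samples) < needed:
        return False  # not enough data to cover the whole window yet
    return mean(cpu_samples[-needed:]) <= threshold_pct


# Busy at first, then idle for the last 30 minutes (six 5-minute samples).
idle_tail = [85.0, 60.0, 0.5, 0.8, 1.0, 0.4, 0.2, 0.9]
print(should_stop(idle_tail, sample_minutes=5))  # True
```

Returning `False` until the window is fully covered is a deliberate choice in this sketch, so a freshly started computer is not stopped before 30 minutes of data exist.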

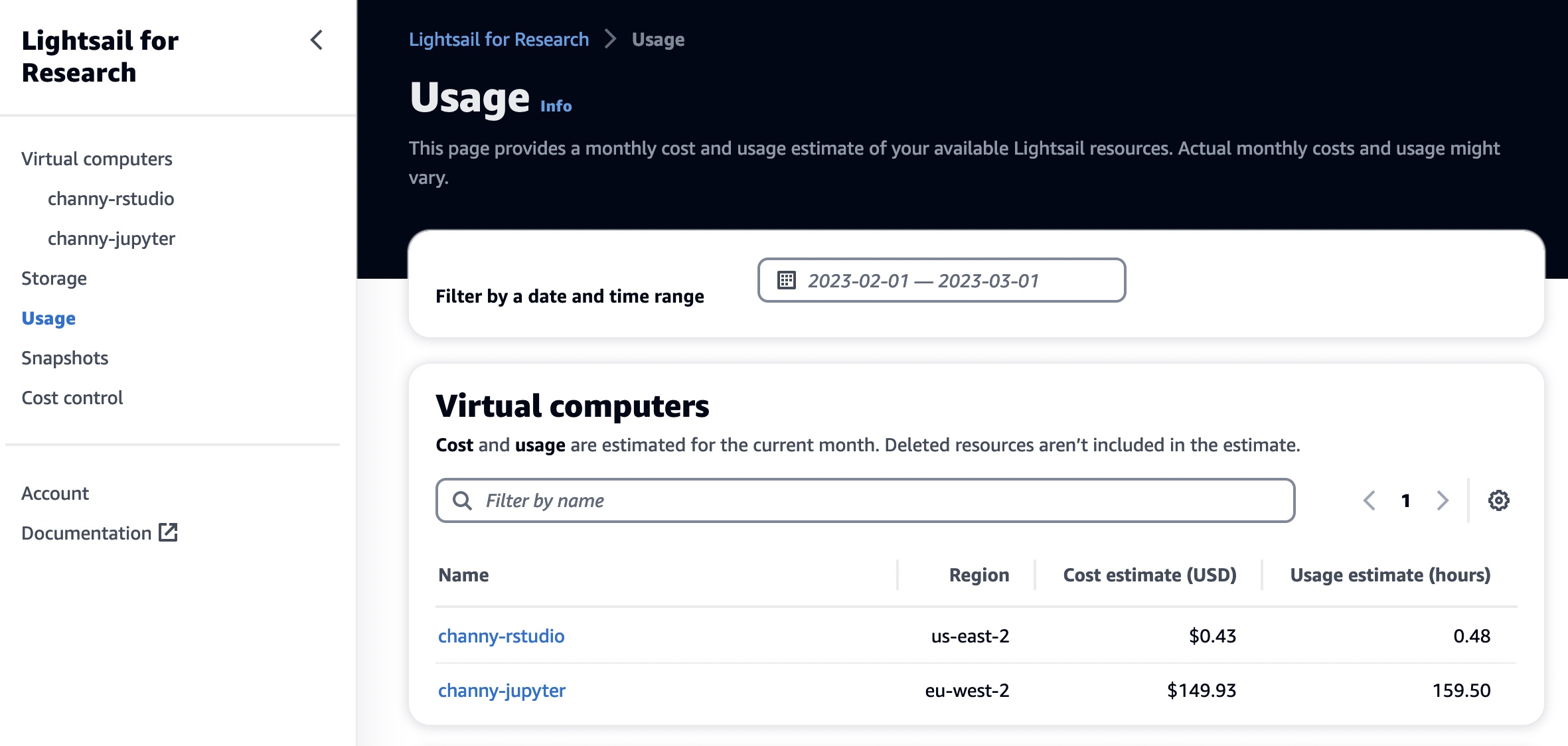

In the Usage menu, you can view the cost estimate and usage hours for your resources during a specified time period.
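The Usage page does this aggregation for you. As a hedged illustration of the arithmetic behind such an estimate, the sketch below sums running hours per resource within a reporting period and multiplies them by an hourly rate. The rates are made-up placeholders rather than real Lightsail for Research prices, the `RunInterval` type and helper names are hypothetical, and prorating partial hours is an assumption about billing granularity.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List


@dataclass
class RunInterval:
    resource: str
    start: datetime
    end: datetime


def usage_hours(intervals: List[RunInterval], period_start: datetime,
                period_end: datetime) -> Dict[str, float]:
    """Sum running hours per resource, clipped to [period_start, period_end)."""
    totals: Dict[str, float] = {}
    for iv in intervals:
        start = max(iv.start, period_start)
        end = min(iv.end, period_end)
        if end > start:  # skip intervals entirely outside the period
            hours = (end - start).total_seconds() / 3600
            totals[iv.resource] = totals.get(iv.resource, 0.0) + hours
    return totals


def estimate_cost(hours: Dict[str, float], hourly_rate: Dict[str, float]) -> float:
    # hourly_rate values are placeholders; check the official pricing page for real rates.
    return sum(h * hourly_rate[r] for r, h in hours.items())


intervals = [
    RunInterval("channy-jupyter", datetime(2023, 2, 1, 9), datetime(2023, 2, 1, 13)),
    RunInterval("channy-jupyter", datetime(2023, 2, 2, 10), datetime(2023, 2, 2, 12, 30)),
    # Started before the period; only the 2 hours inside it count.
    RunInterval("channy-rstudio", datetime(2023, 1, 31, 22), datetime(2023, 2, 1, 2)),
]
rates = {"channy-jupyter": 0.50, "channy-rstudio": 0.25}  # hypothetical USD/hour

hours = usage_hours(intervals, datetime(2023, 2, 1), datetime(2023, 2, 8))
print(hours)                                # {'channy-jupyter': 6.5, 'channy-rstudio': 2.0}
print(f"{estimate_cost(hours, rates):.2f}")  # 3.75
```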

Now Available

Amazon Lightsail for Research is now available in the US East (Ohio), US West (Oregon), Asia Pacific (Mumbai), Asia Pacific (Seoul), Asia Pacific (Singapore), Asia Pacific (Sydney), Asia Pacific (Tokyo), Canada (Central), Europe (Frankfurt), Europe (Ireland), Europe (London), Europe (Paris), and Europe (Stockholm) Regions.

You can start using it today. To learn more, see the Amazon Lightsail for Research User Guide, and please send feedback to AWS re:Post for Amazon Lightsail or through your usual AWS support contacts.

– Channy

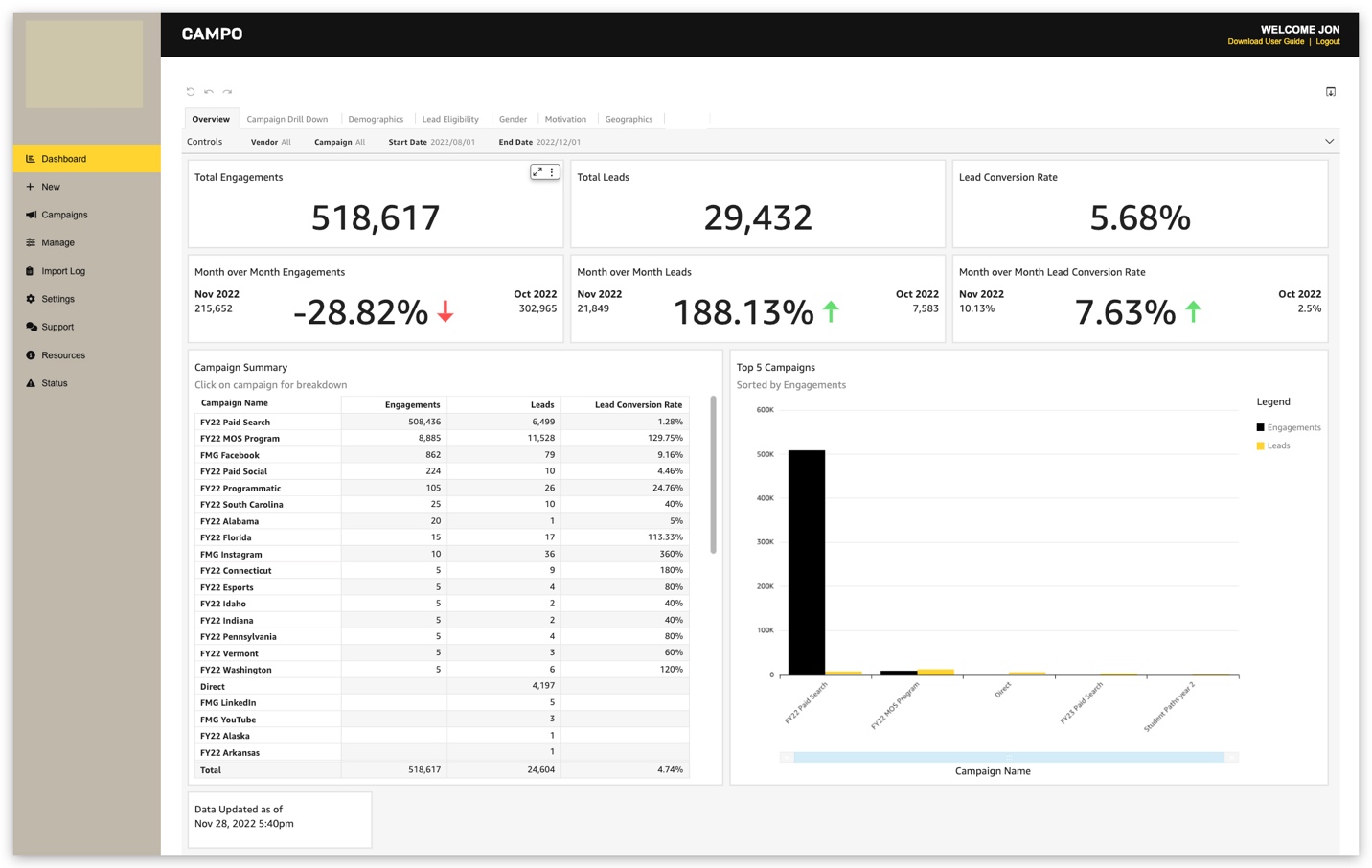

Jon Walker, Senior Director of Engineering, is a native Nashvillian who has been in the technology field for over 19 years. He oversees enterprise-wide system engineering, development, and technology programs for large federal and DoD clients, as well as iostudio’s commercial clients.

Ari Orlinsky, Director of Information Services, leads iostudio’s Information Systems Department, responsible for AWS Cloud, SaaS applications, on-premises technology, risk assessment, compliance, budgeting, and human resource management. With nearly 20 years’ experience in strategic IS and technology operations, Ari has developed a keen enthusiasm for emerging technologies, DOD security and compliance, large format interactive experiences, and customer service communication technologies. As iostudio’s Technical Product Owner across internal and client-facing applications including a cloud-based omni-channel contact center platform, he advocates for secure deployment of applicable technologies to the cloud while ensuring resilient on-premises data center solutions.

Sumitha AP is a Sr. Solutions Architect at AWS. Sumitha works with SMB customers to help them design secure, scalable, reliable, and cost-effective solutions in the AWS Cloud. She has a focus on data and analytics and provides guidance on building analytics solutions on AWS.