Post Syndicated from Григор original http://www.gatchev.info/blog/?p=2672

Here is a heavily condensed account of one of the many Russian groups that produce propaganda lies, which Microsoft researchers have named “Storm-1516”. (The number is a sequence number.)

---

It began as an offshoot of the Internet Research Agency, a Russian propaganda group created by Yevgeny Prigozhin and registered as a “private company” to conceal the fact that it was run by the KGB's department for active measures abroad. Storm-1516's separate existence was first noticed by a group of media disinformation researchers at Clemson University.

The group's main distribution channel is a set of accounts created on YouTube and X that pose as investigative journalists or whistleblowers. Through them it spreads propaganda lies in English, Russian, Finnish, Arabic, and French. The same lie is often released through several accounts to create an impression of credibility.

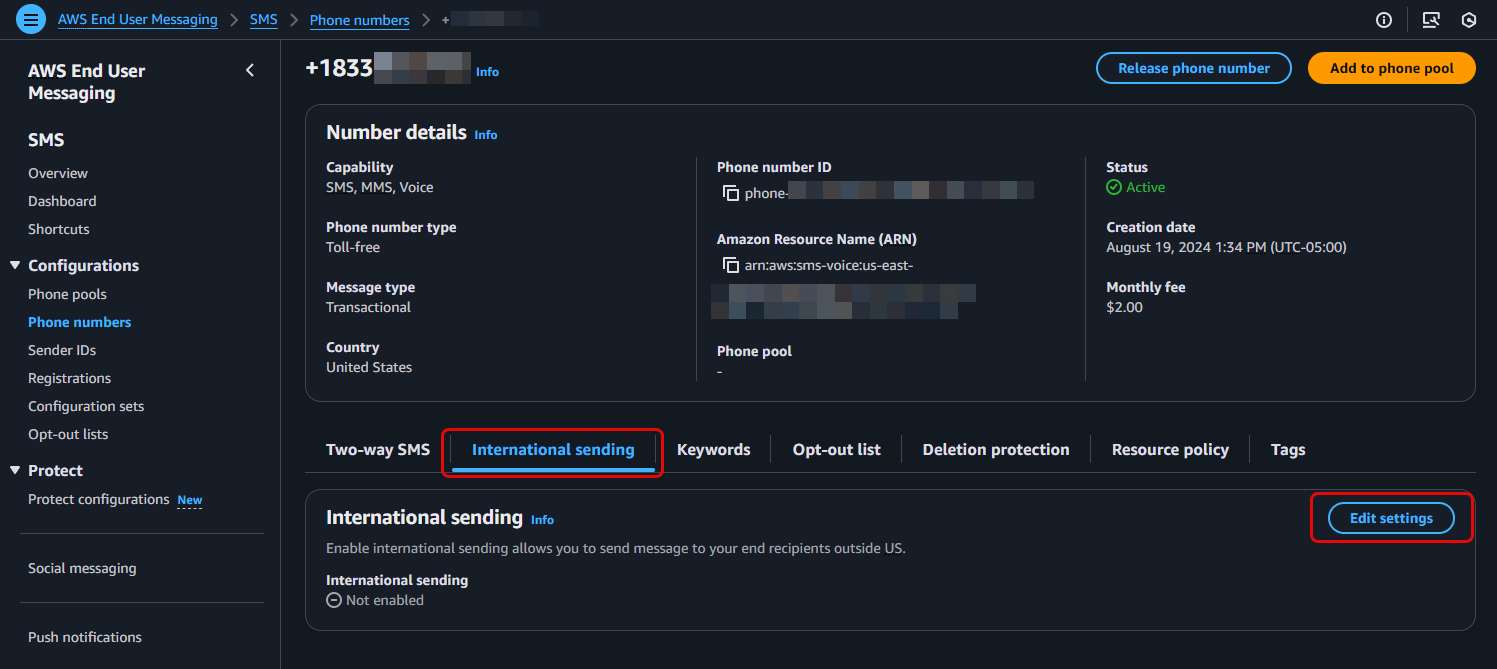

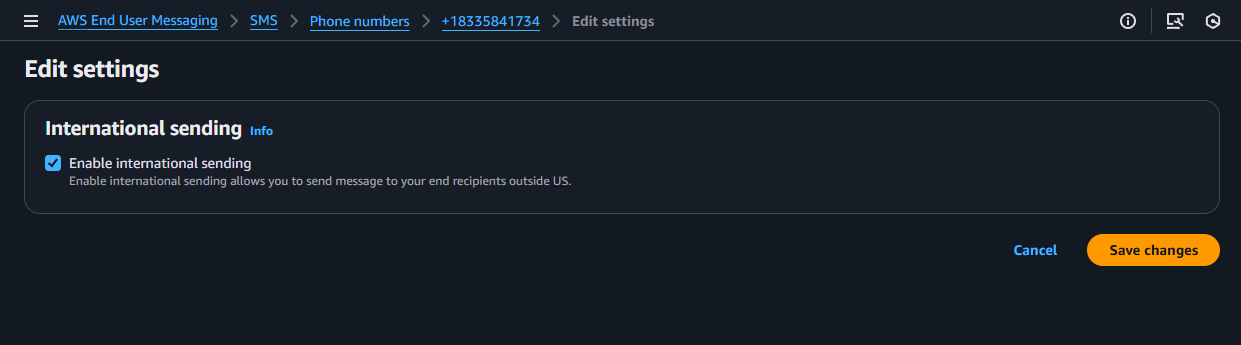

Its main method is shooting fake videos with actors or generating them with AI (earlier, with deepfakes). The team that films the videos with actors is probably located in Saint Petersburg. The videos often cite sources that do not actually exist. The videos the group releases, along with its other propaganda lies, are then shared by GRU-controlled botnets of echo accounts.

Probably taking part in the group's command, or operating under a common command with it, are the anti-liberal “Центр геополитических экспертиз” (Center for Geopolitical Expertise) and another propaganda group known as “Фондация для борьбы с несправедливости” (Foundation to Battle Injustice). Closely tied to it are:

– the Storm-1099 group, which specializes in creating fake or imitation propaganda websites

– the Storm-1679 group, which specializes in creating fake documents and news reports in the name of credible-looking but fake organizations

– Ruza Flood, Volga Flood, and Rybar: organizations that also spread false information, often under the name of a real person (e.g. Rybar: Mikhail Zvinchuk) or of a fake persona played by an actor (e.g. “Sanya from Florida”).

The Russian media company Tenet Media, shut down in 2024, also appears to be connected to it. Its main activity was paying right-wing bloggers in the US to shoot videos with content it commissioned.

Some of the main topics on which Storm-1516 generates fake content are:

– Reducing support for Western aid to Ukraine against the Russian aggression

In May 2024 they released a fake video showing Ukrainian soldiers burning an effigy of Donald Trump.

Many of their fakes falsely claim that Ukrainian leaders are misusing Western aid: buying themselves yachts, estates, luxury cars, drugs, and so on. There have been cases in which American politicians fell for these lies.

– Support for Donald Trump

A frequent claim of theirs is that Ukrainian troll farms are working against the election of Donald Trump. Its purpose is to obscure the thoroughly documented fact that all Russian troll farms were engaged in supporting Donald Trump. One of the fake videos shows an “employee of a Ukrainian troll farm” who “confesses” that the CIA is carrying out a plot to keep Trump from becoming president.

– Linking Ukraine to Hamas

In October 2023 they released a fake video in which a “Hamas leader” (his face is hidden) “thanks Zelensky for the deliveries of weapons and ammunition”. In reality there are no ties between Ukraine and Hamas. In another video, released before the Olympic Games in Paris, the same actor warns that “Hamas will attack the Olympic Games”. That video was spread widely through Russian troll networks. The French and Israeli services confirmed that it was fake.

– Support for Donald Trump

From the start of summer 2024 right up to the US presidential election, the group, like all of the GRU's other troll resources, concentrated on supporting Donald Trump. Storm-1516 mainly produced fake videos and news stories that accused the Democratic candidates of crimes and spread conspiracy theories about them. During this period the group operated more than 100 fake websites that smeared the Democrats and praised Trump and Russia. The part of the group that maintains these sites is led by John Mark Dougan, a former Florida police officer and conspiracy theorist who has been exposed as a paid pro-Russian propagandist and has sought political asylum in Russia.

One of the fabrications the group spread is that the Secret Service found listening devices in Trump's estate, installed there by the FBI. Trump and Vance also shared this lie on social media.

Another claims that during a visit to Zambia Kamala Harris illegally shot Kasuba, a popular female black rhinoceros (the species is threatened with extinction). The fabrication was shared by more than a million fake accounts on X, as well as by Western propagandists working for Russia (Chay Bowes and others).

Another of their fake videos, spread by John Dougan, “shows” Kamala Harris supporters attacking a gathering of Trump supporters and beating up one of the attendees. Attached to it are the usual talking points about “aggressive left-wing extremists”, along with comments meant to inflame interracial tensions in the US.

In another of their fake videos, an “illegal immigrant from Haiti” describes “how he was paid to vote for Harris multiple times using different driver's licenses”.

In another of their fake videos, released on election day, an “American voter” describes how two Harris supporters attacked and beat up a Trump supporter to keep him from voting.

In a very aggressively promoted video of theirs, seen by almost 3 million people, a woman claims that as a teenager in 2011 she was hit by a car driven by Kamala Harris and left to die. She says her body was shattered, that she underwent 11 operations before she could walk again, that she is still in constant pain, and so on… The woman, named in different sources as “Alicia Brown”, “Alisha Brown”, and so on, is a paid actress. The X-ray presented in the video as hers was taken from a medical journal. The crash photo in the video is from a crash in Guam in 2018. The news agency named in the video as the source of the material does not exist (apart from a fake website created and maintained by Storm-1516). The part of the video in which Kamala Harris leaves the scene of the crash is a deepfake… The video was shared by many Republican members of Congress, including J. D. Vance.

In October 2024, just before the election, Storm-1516 ran a joint campaign with QAnon to paint Tim Walz (the Democrats' vice-presidential candidate) as a pedophile. A radio outlet close to QAnon aired “interviews” with the “parent” (played by John Dougan) of a “pupil” of Walz's, and with a “student of Walz's from Kazakhstan”. (A check shows that the school where Walz taught has had no students from Kazakhstan in the last 20 years.) Parts of the broadcast were shared en masse by Russian troll botnets and were seen by more than 800,000 people in the US.

One of the accounts that shared the broadcast is “BlackInsurrectionist”, created and run by Storm-1516. It is present on all the major social networks and is followed by leading Republican politicians (including Trump Jr. and Roger Stone). With their help the broadcast reached about 33 million people.

In mid-October 2024 this account released a video of an email that it claimed came from a minor sexually pursued by Walz. Many viewers immediately pointed out numerous signs that the video was a fabrication, but Google searches for “Tim Waltz pedophile” still jumped a hundredfold. A few days later another conspiracy theory account, also run by Storm-1516, released another video with similar claims. A third such video, evidently the work of the same group, was released by a QAnon account. An analysis by experts from Wired showed that all of these videos are deepfakes.

On 19 October almost all the sites run by John Dougan published a long report that cites these videos (and presents them as genuine). The report was cited by right-wing influencers, among them Candace Owens and Jack Posobiec. On 21 October experts from Wired managed to prove that the videos were the work of Storm-1516.

Another fake video, created by the same group and released through a QAnon account 2 days before the election, shows a member of an election commission checking mail-in ballots and tearing up those cast for Donald Trump. Experts noticed immediately that the materials and ballots shown in the video were not genuine; evidently the group's prop master slipped up.

… I could write several times as much again about them. And when you look at their sequence number, you can guess how many more groups like them there are.

Draw your own conclusions.