Post Syndicated from Explosm.net original http://explosm.net/comics/5926/

New Cyanide and Happiness Comic

Post Syndicated from Oglaf! -- Comics. Often dirty. original https://www.oglaf.com/closingceremony/

Post Syndicated from Talks at Google original https://www.youtube.com/watch?v=B8KmuNq7FSY

Post Syndicated from Techmoan original https://www.youtube.com/watch?v=nJq30FR2GN8

Post Syndicated from Explosm.net original http://explosm.net/comics/5925/

New Cyanide and Happiness Comic

Post Syndicated from Matt Granger original https://www.youtube.com/watch?v=XaB1yemkuPI

Post Syndicated from Емилия Милчева original https://toest.bg/zhutva-e-sega-dey-karadzhata/

When everyone in Bulgaria, voters and non-voters alike, expects Change from the winner of the elections, it is unacceptable for the candidate for prime minister to have been a minister in two previous governments. Not only from the standpoint of image but also from that of political logic, a new person is needed to symbolize this change and to be its flagship. Just as, through energetic efforts to drag skeletons out of the administration's closet…

Post Syndicated from Светла Енчева original https://toest.bg/naistina-li-se-oturvahme-ot-natsionalizma/

After the snap elections on July 11, many breathed a sigh of relief: for the second parliament in a row, there will be no nationalist parties. Even if the life of the 46th National Assembly turns out to be no longer than that of the 45th, at first glance the results outline at least three important trends. The first is that for our far-right parties, two plus two is evidently less than four. In other words…

Post Syndicated from Венелина Попова original https://toest.bg/golemite-gubeshti-na-poslednite-izbori/

Bulgaria is in a political crisis that risked deepening had Slavi Trifonov submitted his proposal for a cabinet led by Nikolay Vasilev as prime minister to the new National Assembly. By the way he announced it, on the first day after the elections and before the Central Election Commission had even issued its final ruling on which winner holds the first right to form a government, the leader of "There Is Such a People" reduced politics to a show.

Post Syndicated from Иван Радев original https://toest.bg/bg-leaders-vaccine-covid-19/

Within six months, from December 2020 to June 2021, the leaders of all European Union countries announced that they had been vaccinated against COVID-19. All but one: Bulgaria. Unlike his European counterparts, President Rumen Radev has not yet decided to take this step. At least not publicly. The same goes for former Prime Minister Boyko Borisov, as well as for caretaker Prime Minister Stefan…

Post Syndicated from Йоанна Елми original https://toest.bg/kak-se-uchim-na-mediyna-gramotnost/

"School of the 21st Century" is a joint column by Toest and Zaedno v Chas in which we present successful practices in Bulgarian education and look for workable solutions for improving it. One of students' biggest problems is that they cannot distinguish fact from opinion, says Sava Tashev, a teacher at the 97th Secondary School "Bratya Miladinovi" in Sofia. He teaches media literacy "through the subject…

Post Syndicated from original https://toest.bg/kreshtim-na-sveta-che-imame-nuzhda-ot-pomosht/

Tuesday, July 13, 2021. "Hi! I'm writing to you from a secret WiFi network." "Why secret?" "They cut off our internet. No one has a connection through mobile data on their phone, because they don't want what is happening right now to be published. This is my cry in the streets of Havana from today." "Is that you in the audio?" "Yes. We can't take it anymore. They are starving us to death. I can't go on like this."

Post Syndicated from Стефан Иванов original https://toest.bg/na-vtoro-chetene-prazninata/

None of us reads only the newest books. So why are they the only ones that get written about? "On Second Reading" is a column in which we open the lists of books published at least a year ago, read them, and recommend our favorites among them. The column is part of the Toest Readers' Club partner program. The choice of titles, however, belongs solely to the authors, Stefan Ivanov and Sevda Semer, who would recommend to you…

Post Syndicated from Тоест original https://toest.bg/editorial-12-16-july-2021/

The second parliamentary elections of 2021 are now history as well. Given the events of the week, the likelihood that we will have to vote for parliament again before the end of the year cannot be ruled out entirely. "There Is Such a People" evidently does not realize that, although it formally won the elections, its victory is Pyrrhic and it cannot afford the comfort of single-handedly setting the direction. Or it simply does not want to…

Post Syndicated from Bruce Schneier original https://www.schneier.com/blog/archives/2021/07/friday-squid-blogging-giant-squid-model.html

Pretty wooden model.

As usual, you can also use this squid post to talk about the security stories in the news that I haven’t covered.

Read my blog posting guidelines here.

Post Syndicated from Dhiraj Thakur original https://aws.amazon.com/blogs/big-data/part-1-query-an-apache-hudi-dataset-in-an-amazon-s3-data-lake-with-amazon-athena-part-1-read-optimized-queries/

On July 16, 2021, Amazon Athena upgraded its Apache Hudi integration with new features and support for Hudi’s latest 0.8.0 release. Hudi is an open-source storage management framework that provides incremental data processing primitives for Hadoop-compatible data lakes. This upgraded integration adds the latest community improvements to Hudi along with important new features including snapshot queries, which provide near real-time views of table data, and reading bootstrapped tables which provide efficient migration of existing table data.

In this series of posts on Athena and Hudi, we will provide a short overview of key Hudi capabilities along with detailed procedures for using read-optimized queries, snapshot queries, and bootstrapped tables.

With Apache Hudi, you can perform record-level inserts, updates, and deletes on Amazon S3, allowing you to comply with data privacy laws, consume real-time streams and change data captures, reinstate late-arriving data, and track history and rollbacks in an open, vendor-neutral format. Apache Hudi uses Apache Parquet and Apache Avro storage formats for data storage, and includes built-in integrations with Apache Spark, Apache Hive, and Apache Presto, which enables you to query Apache Hudi datasets using the same tools that you use today with near-real-time access to fresh data.

An Apache Hudi dataset can be one of the following table types:

Apache Hudi provides three logical views for accessing data:

As of this writing, Athena supports read-optimized and real-time views.

In this post, you will use Athena to query an Apache Hudi read-optimized view on data residing in Amazon S3. The walkthrough includes the following high-level steps:

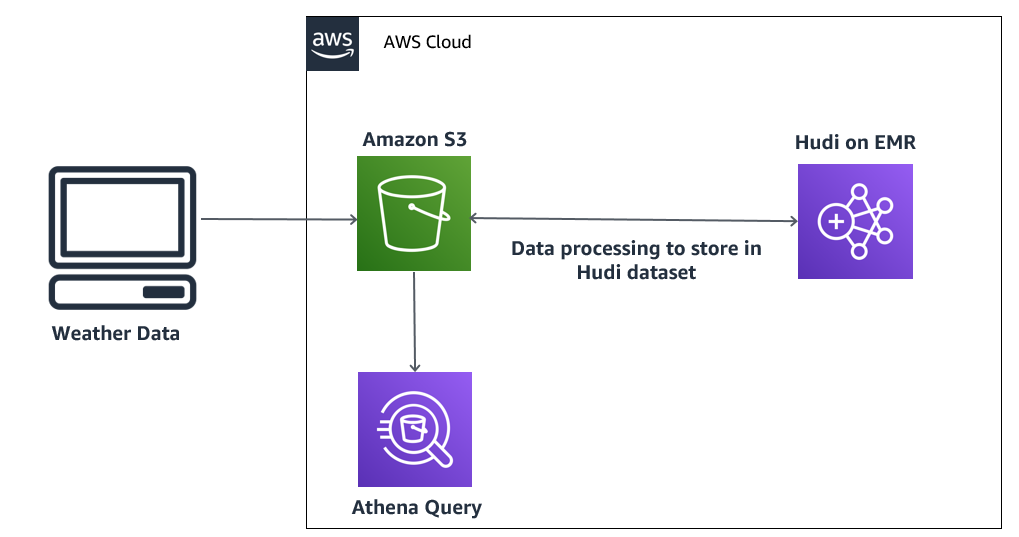

The following diagram illustrates our solution architecture.

In this architecture, you have high-velocity weather data stored in an S3 data lake. This raw dataset is processed on Amazon EMR and stored in an Apache Hudi dataset in Amazon S3 for further analysis by Athena. If the data is updated, Apache Hudi performs an update on the existing record, and these updates are reflected in the results fetched by the Athena query.
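To make the update behavior concrete, here is a minimal Python sketch of record-level upsert semantics. It is not Hudi's implementation or API: the `upsert` helper, the `city_id` key, the `ts` ordering field, and the second sample record are assumptions made for illustration. Only the humidity change (81 to 50) and temperature change (6.4 to 10) mirror the values used later in the walkthrough.

```python
from typing import Any, Dict, Iterable

Record = Dict[str, Any]


def upsert(table: Dict[str, Record], batch: Iterable[Record],
           key_field: str = "city_id", order_field: str = "ts") -> Dict[str, Record]:
    """Merge a batch into a keyed table.

    New keys are inserted; an existing key is overwritten only when the
    incoming record is at least as recent as the stored one.
    """
    merged = dict(table)  # leave the caller's table untouched
    for record in batch:
        key = record[key_field]
        current = merged.get(key)
        if current is None or record[order_field] >= current[order_field]:
            merged[key] = record
    return merged


# Hypothetical records; the field names are illustrative only.
initial = [
    {"city_id": "1", "ts": 1, "relative_humidity": 81, "temperature": 6.4},
    {"city_id": "2", "ts": 1, "relative_humidity": 70, "temperature": 12.0},
]
delta = [{"city_id": "1", "ts": 2, "relative_humidity": 50, "temperature": 10.0}]

table = upsert({}, initial)   # initial load: two records
table = upsert(table, delta)  # delta load: record "1" is updated in place
```

After the second call the table still holds two records, and a reader querying record `"1"` sees the updated humidity and temperature, which is the behavior the Athena query relies on.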

Let’s build this architecture.

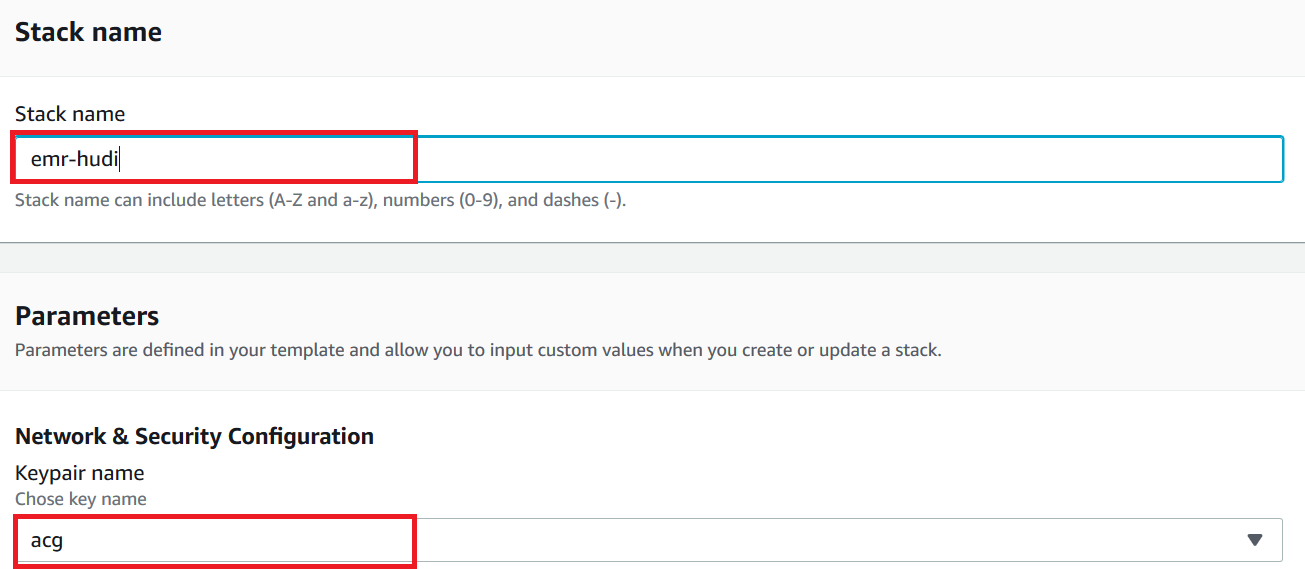

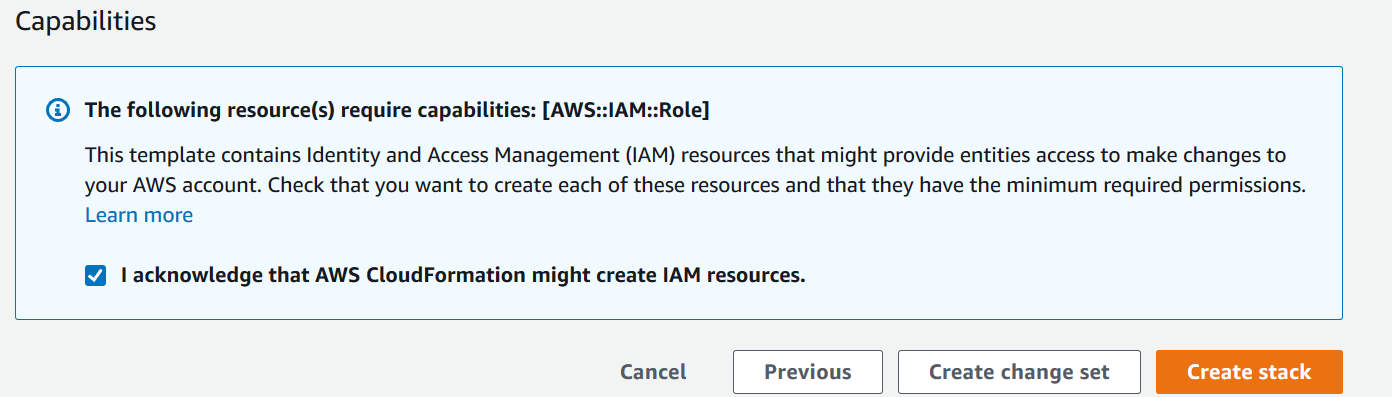

Before getting started, we set up our resources. For this post, we use the us-east-1 Region.

weather-raw-bucket).

parquet_file and delta_parquet.

data_insertion_cow_delta_script, data_insertion_cow_script, data_insertion_mor_delta_script, and data_insertion_mor_script), and Athena DDL code (athena_weather_hudi_cow.sql and athena_weather_hudi_mor.sql) from the GitHub repo.

weather_oct_2020.parquet file to weather-raw-bucket/parquet_file.

weather_delta.parquet to weather-raw-bucket/delta_parquet. We update an existing weather record from a relative_humidity of 81 to 50 and a temperature of 6.4 to 10.

athena-hudi-bucket/hudi_weather.

This is required to connect to the EMR cluster nodes. For more information, see Connect to the Master Node Using SSH.

When the cluster is ready, you can use the provided key pair to SSH into the primary node.

spark-shell to work with Apache Hudi:

spark-shell, run the following Scala code in the script data_insertion_cow_script to import weather data from the S3 data lake to an Apache Hudi dataset using the CoW storage type:

Replace the S3 bucket path for inputDataPath and hudiTablePath in the preceding code with your S3 bucket.

For more information about DataSourceWriteOptions, see Work with a Hudi Dataset.

spark-shell, count the total number of records in the Apache Hudi dataset:

data_insertion_mor_script (the default is COPY_ON_WRITE).

spark.sql("show tables").show(); query to list three tables, one for CoW and two queries, _rt and _ro, for MoR.

The following screenshot shows our output.

Let’s check the processed Apache Hudi dataset in the S3 data lake.

weather_hudi_cow and weather_hudi_mor are in athena-hudi-bucket.

weather_hudi_cow subfolder to see the Apache Hudi dataset that is partitioned using the date key—one for each date in our dataset.

hudi_athena_test database using the following command:

You use this database to create all your tables.

athena_weather_hudi_cow.sql script:

Replace the S3 bucket path in the preceding code with your S3 bucket (Hudi table path) in LOCATION.

athena_weather_hudi_cow.sql script on the Athena console:

Replace the S3 bucket path in the preceding code with your S3 bucket (Hudi table path) in LOCATION.

It should return a single row with a count of 1,000.

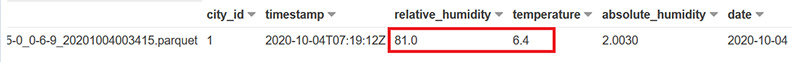

Now let’s check the record that we want to update.

The output should look like the following screenshot. Note the value of relative_humidity and temperature.

data_insertion_cow_delta_script script on the spark-shell prompt to update the data:

Replace the S3 bucket path for inputDataPath and hudiTablePath in the preceding code with your S3 bucket.

The following screenshot shows our query results.

The relative_humidity and temperature values for the relevant record are updated.

You must clean up the resources you created earlier to avoid ongoing charges.

weather-raw-bucket and athena-hudi-bucket and delete the buckets.

In this post, we used Apache Hudi support in Amazon EMR to develop a data pipeline that simplifies incremental data management use cases requiring record-level insert and update operations. We used Athena to read the read-optimized view of an Apache Hudi dataset in an S3 data lake.

Dhiraj Thakur is a Solutions Architect with Amazon Web Services. He works with AWS customers and partners to provide guidance on enterprise cloud adoption, migration and strategy. He is passionate about technology and enjoys building and experimenting in Analytics and AI/ML space.

Sameer Goel is a Solutions Architect in The Netherlands, who drives customer success by building prototypes on cutting-edge initiatives. Prior to joining AWS, Sameer graduated with a master’s degree from NEU Boston, with a Data Science concentration. He enjoys building and experimenting with creative projects and applications.

Imtiaz (Taz) Sayed is the WW Tech Master for Analytics at AWS. He enjoys engaging with the community on all things data and analytics.

Post Syndicated from Bruce Schneier original https://www.schneier.com/blog/archives/2021/07/revil-is-off-line.html

This is an interesting development:

Just days after President Biden demanded that President Vladimir V. Putin of Russia shut down ransomware groups attacking American targets, the most aggressive of the groups suddenly went off-line early Tuesday.

[…]

Gone was the publicly available “happy blog” the group maintained, listing some of its victims and the group’s earnings from its digital extortion schemes. Internet security groups said the custom-made sites - think of them as virtual conference rooms — where victims negotiated with REvil over how much ransom they would pay to get their data unlocked also disappeared. So did the infrastructure for making payments.

Okay. So either the US took them down, Russia took them down, or they took themselves down.

Post Syndicated from Aaron Wells original https://blog.rapid7.com/2021/07/16/insightvm-release-roundup-q2-2021/

The world is changing rapidly. We hear that phrase a lot. Throughout Q2, though, it really has been true. Vaccines have been rolling out, with varying success depending on the part of the world, but there is optimism.

As Rapid7 offices begin to open up to our hard-working team members around the globe, we want to infuse some of that optimism into the latest and greatest new features and updates now available to InsightVM customers. The back half of the year will no doubt bring new threats (will ransomware attacks keep going bigger?), so let’s dive into what’s new so you can prepare and prosper.

In our Q1 recap, we covered 2 releases that can each have significant positive impact on your operations, so they bear repeating here.

In InsightVM, you can now navigate directly to the new Kubernetes tab to initiate the Kubernetes monitor in DockerHub. Then, deploy it to your clusters to see data in Container VRM within InsightVM. You can also see monitor health and connection details via the Data Collection Management page.

The Executive Summary Report in InsightVM has expanded its functionality so users can filter the report for at-a-glance views of priority items. Shape the report to access key metrics and communicate progress to desired goals and outcomes.

The new releases and updates for the second quarter of 2021 were aimed at quick-look features that bolster our goal of providing customers with evolving ease-of-use functionalities and products that increasingly focus on at-a-glance convenience.

Featuring new cards as well as new ways to filter cards, these features solve 3 distinct issues:

Rapid7’s Patch Tuesday dashboard template now provides an easy way to stay up to date on information associated with deployment of new Microsoft patches and cycles. Why search around for news or insights when you can get them in the one-stop-shop where your team already receives updates and kicks off remediation efforts on the latest vulnerabilities?

Featuring new cards detailing the assets affected as well as trends, assessments, and biggest risks, you can now learn about and prioritize remediation efforts on all Microsoft vulnerabilities within this expanded InsightVM dashboard.

Hunting down fine-grained vulnerability-and-remediation details

If this were about finding the best way to navigate your way past a big city, we would say this new feature is the loop that takes you around the traffic vs taking the surface streets that often put you in the traffic.

You can now quickly filter all of your cards by applying a single query to your dashboard. Gone are the days of manually filtering each and every card just to focus your view on a group of assets or vulnerabilities. Long story short: You save more time by quickly filtering to your desired view.

To continue the traffic analogy, getting somewhere faster than you’re used to is always a great thing. The latest InsightVM improvements help you do just that by addressing 3 issues:

Now you can simply deploy a check, load it into the Security Console, then the console does the rest. Just load the check, start the scan, and the console will automatically push that check to whichever Scan Engine(s) you specify.

Peek. Panel. Proof. What that actually means is InsightVM now offers at-a-glance context about a specific vulnerability via a “peek panel.” When a user clicks on an affected asset from the vulnerability details page, the panel opens to the right and displays the proof details.

Teams assessing container image builds in their CI/CD pipeline can now see results in the InsightVM Container Security feature Builds tab.

We hope you have a successful quarter and a great season, wherever your business takes you. Until next time…

Post Syndicated from Alan David Foster original https://blog.rapid7.com/2021/07/16/metasploit-wrap-up-121/

Prior to this release Metasploit offered two separate exploit modules for targeting MS17-010, dubbed Eternal Blue. The Ruby module previously only supported Windows 7, and a separate ms17_010_eternalblue_win8 Python module would target Windows 8 and above.

Now Metasploit provides a single Ruby exploit module exploits/windows/smb/ms17_010_eternalblue.rb which has the capability to target Windows 7, Windows 8.1, Windows 2012 R2, and Windows 10. This change removes the need for users to have Python and impacket installed on their host machine, and the automatic targeting functionality will now also make this module easier to run and exploit targets.

The Anti-Malware Scan Interface (AMSI) integrated into Windows poses a lot of challenges for offensive security testing. While bypasses exist and one such technique is integrated directly into Metasploit, the stub itself is identified as malicious. This creates a chicken-and-egg problem: the stub cannot execute to bypass AMSI, so the payload is never permitted to run. To address this, Metasploit now randomizes the AMSI bypass stub itself. The randomization both obfuscates literal string values that are known qualifiers for AMSI, such as amsiInitFailed, and shuffles the placement of PowerShell expressions. With these improvements in place, PowerShell payloads are now much more likely to be successfully executed. While the bypass stub is now prepended by default for all exploit modules, it can be explicitly disabled by setting Powershell::prepend_protections_bypass to false.

Our very own Will Vu has added a new exploit module targeting VMware vCenter Server CVE-2021-21985. This module exploits Java unsafe reflection and SSRF in the VMware vCenter Server Virtual SAN Health Check plugin’s ProxygenController class to execute code as the vsphere-ui user. See the vendor advisory for affected and patched versions. This module has been tested against VMware vCenter Server 6.7 Update 3m (Linux appliance). For testing in your own lab environment, full details are in the module documentation.

root permissions, which can then be used to gain a shell as root. Note that exploitation requires that users have a non-interactive session on some systems so users may need to gain an SSH session first before exploiting this vulnerability.

ms17_010_eternalblue_win8.py and consolidates the functionality into exploits/windows/smb/ms17_010_eternalblue.rb – which as a result can now target Windows 7, Windows 8.1, Windows 2012 R2, and Windows 10. This change now removes the need to have Python installed on the host machine, and the automatic targeting functionality will now make this module easier to run.

post/multi/manage/shell_to_meterpreter, and other interactions with command shell based sessions

auxiliary/scanner/ssh/eaton_xpert_backdoor failed to load correctly

As always, you can update to the latest Metasploit Framework with msfupdate and you can get more details on the changes since the last blog post from GitHub:

If you are a git user, you can clone the Metasploit Framework repo (master branch) for the latest.

To install fresh without using git, you can use the open-source-only Nightly Installers or the binary installers (which also include the commercial edition).

Post Syndicated from Bill Magee original https://aws.amazon.com/blogs/architecture/using-amazon-macie-to-validate-s3-bucket-data-classification/

Securing sensitive information is a high priority for organizations for many reasons. At the same time, organizations are looking for ways to empower development teams to stay agile and innovative. Centralized security teams strive to create systems that align to the needs of the development teams, rather than mandating how those teams must operate.

Security teams who create automation for the discovery of sensitive data have some issues to consider. If development teams are able to self-provision data storage, how does the security team protect that data? If teams have a business need to store sensitive data, they must consider how, where, and with what safeguards that data is stored.

Let’s look at how we can set up Amazon Macie to validate data classifications provided by decentralized software development teams. Macie is a fully managed service that uses machine learning (ML) to discover sensitive data in AWS. If you are not familiar with Macie, read New – Enhanced Amazon Macie Now Available with Substantially Reduced Pricing.

Data classification is part of the security pillar of a Well-Architected application. Following the guidelines provided in the AWS Well-Architected Framework, we can develop a resource-tagging scheme that fits our needs.

In our example, we have multiple levels of data classification that represent different levels of risk associated with each classification. When a software development team creates a new Amazon Simple Storage Service (S3) bucket, they are responsible for labeling that bucket with a tag. This tag represents the classification of data stored in that bucket. The security team must maintain a system to validate that the data in those buckets meets the classification specified by the development teams.

This separation of roles and responsibilities for development and security teams who work independently requires a validation system that’s decoupled from S3 bucket creation. It should automatically detect new buckets or data in the existing buckets, and validate the data against the assigned classification tags. It should also notify the appropriate development teams of misclassified or unclassified buckets in a timely manner. These notifications can be through standard notification channels, such as email or Slack channel notifications.

Figure 1. Validation system for data classification

We assume that teams are permitted to create S3 buckets and we will use AWS Config to enforce the following required tags: DataClassification and SupportSNSTopic. The DataClassification tag indicates what type of data is allowed in the bucket. The SupportSNSTopic tag indicates an Amazon Simple Notification Service (SNS) topic. If there are issues found with the data in the bucket, a message is published to the topic, and Amazon SNS will deliver an alert. For example, if there is personally identifiable information (PII) data in a bucket that is classified as non-sensitive, the system will alert the owners of the bucket.
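As a rough illustration of the tagging rule, the following Python sketch checks a bucket's tags for the two required keys. In the architecture described here AWS Config performs this enforcement; the `missing_required_tags` helper is a hypothetical stand-in that only shows the logic and calls no AWS API.

```python
from typing import Dict, List

# The two tag keys named in the post.
REQUIRED_TAGS = ("DataClassification", "SupportSNSTopic")


def missing_required_tags(bucket_tags: Dict[str, str]) -> List[str]:
    """Return the required tag keys that are absent or blank on a bucket."""
    return [key for key in REQUIRED_TAGS if not bucket_tags.get(key, "").strip()]
```

A bucket tagged only with `DataClassification` would be reported as missing `SupportSNSTopic`, while a bucket carrying both tags with non-empty values yields an empty list.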

Macie is configured to scan all S3 buckets on a scheduled basis. This configuration ensures that any new bucket and data placed in the buckets is analyzed the next time the Macie job runs.

Macie provides several managed data identifiers for discovering and classifying the data. These include bank account numbers, credit card information, authentication credentials, PII, and more. You can also create custom identifiers (or rules) to gather information not covered by the managed identifiers.

Macie integrates with Amazon EventBridge to allow us to capture data classification events and route them to one or more destinations for reporting and alerting needs. In our configuration, the event initiates an AWS Lambda function. The Lambda function validates the data classification inferred by Macie against the classification specified in the DataClassification tag using custom business logic. If a data classification violation is found, the function sends a message to the Amazon SNS topic specified in the SupportSNSTopic tag.
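The custom business logic is not shown in the post, so the following is only a sketch of what such a comparison might look like. The tag values (`non-sensitive`, `internal`, `restricted`), the category names, and the `find_violation` helper are all assumptions for illustration, not Macie's finding schema; publishing to Amazon SNS is left out so that the example stays self-contained.

```python
from typing import Dict, Optional, Set

# Hypothetical mapping from a DataClassification tag value to the sensitive
# data categories that the tag permits. Neither the tag values nor the
# category names are taken from the post.
ALLOWED_CATEGORIES: Dict[str, Set[str]] = {
    "non-sensitive": set(),
    "internal": {"CREDENTIALS"},
    "restricted": {"CREDENTIALS", "FINANCIAL_INFORMATION", "PERSONAL_INFORMATION"},
}


def find_violation(tag_value: Optional[str], detected: Set[str]) -> Optional[str]:
    """Return a human-readable violation message, or None if the bucket conforms."""
    if tag_value is None:
        return "bucket has no DataClassification tag"
    allowed = ALLOWED_CATEGORIES.get(tag_value)
    if allowed is None:
        return f"unknown classification '{tag_value}'"
    # Anything detected that the tag does not permit is a violation.
    unexpected = sorted(detected - allowed)
    if unexpected:
        return f"'{tag_value}' bucket contains: {', '.join(unexpected)}"
    return None
```

In a real function, a non-`None` result would be the message body published to the topic named in the bucket's SupportSNSTopic tag.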

The Lambda function also creates custom metrics and sends those to Amazon CloudWatch. The metrics are organized by engineering team and severity. This allows the security team to create a dashboard of metrics based on the Macie findings. The findings can also be filtered per engineering team and severity to determine which teams need to be contacted to ensure remediation.
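The grouping by team and severity can be pictured with a small Python sketch. The finding shape (`team` and `severity` keys), the sample team names, and both helper functions are hypothetical; the example only aggregates in memory and does not send anything to Amazon CloudWatch.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def count_findings(findings: Iterable[Dict[str, str]]) -> "Counter[Tuple[str, str]]":
    """Count findings per (team, severity) pair, one counter per metric dimension set."""
    return Counter((f["team"], f["severity"]) for f in findings)


def teams_to_contact(counts: "Counter[Tuple[str, str]]", severity: str = "High") -> List[str]:
    """List the teams that have at least one finding at the given severity."""
    return sorted({team for (team, sev), n in counts.items() if sev == severity and n > 0})


# Hypothetical findings; team names and severity labels are illustrative.
sample = [
    {"team": "payments", "severity": "High"},
    {"team": "payments", "severity": "High"},
    {"team": "search", "severity": "Low"},
]
counts = count_findings(sample)
```

Each `(team, severity)` pair corresponds to one metric series on a dashboard, and `teams_to_contact(counts)` gives the security team a short list of who to follow up with first.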

This solution provides a centralized security team with the tools it needs. The team can validate the data classification of an Amazon S3 bucket that is self-provisioned by a development team. New Amazon S3 buckets are automatically included in the Macie jobs, and alerts are sent only if the data in a bucket does not conform to the classification specified by the development team. The data auditing process is loosely coupled with the Amazon S3 bucket creation process, enabling self-service capabilities for development teams while ensuring proper data classification. Your teams can stay agile and innovative, while maintaining a strong security posture.

Learn more about Amazon Macie and Data Classification.
