Post Syndicated from Sébastien Stormacq original https://aws.amazon.com/blogs/aws/aws-weekly-roundup-amazon-api-gateway-aws-step-functions-amazon-ecs-amazon-eks-amazon-lightsail-amazon-vpc-and-more-january-29-2024/

This past week our service teams continue to innovate on your behalf, and a lot has happened in the Amazon Web Services (AWS) universe. I’ll also share about all the AWS Community events and initiatives that are happening around the world.

Let’s dive in!

Last week’s launches

Here are some launches that got my attention:

AWS Step Functions adds integration for 33 services including Amazon Q – AWS Step Functions is a visual workflow service capable of orchestrating over 11,000+ API actions from over 220 AWS services to help customers build distributed applications at scale. This week, AWS Step Functions expands its AWS SDK integrations with support for 33 additional AWS services, including Amazon Q, AWS B2B Data Interchange, and Amazon CloudFront KeyValueStore.

Amazon Elastic Container Service (Amazon ECS) Service Connect introduces support for automatic traffic encryption with TLS Certificates – Amazon ECS launches support for automatic traffic encryption with Transport Layer Security (TLS) certificates for its networking capability called ECS Service Connect. With this support, ECS Service Connect allows your applications to establish a secure connection by encrypting your network traffic.

Amazon Elastic Kubernetes Service (Amazon EKS) and Amazon EKS Distro support Kubernetes version 1.29 – Kubernetes version 1.29 introduced several new features and bug fixes. You can create new EKS clusters using v1.29 and upgrade your existing clusters to v1.29 using the Amazon EKS console, the eksctl command line interface, or through an infrastructure-as-code (IaC) tool.

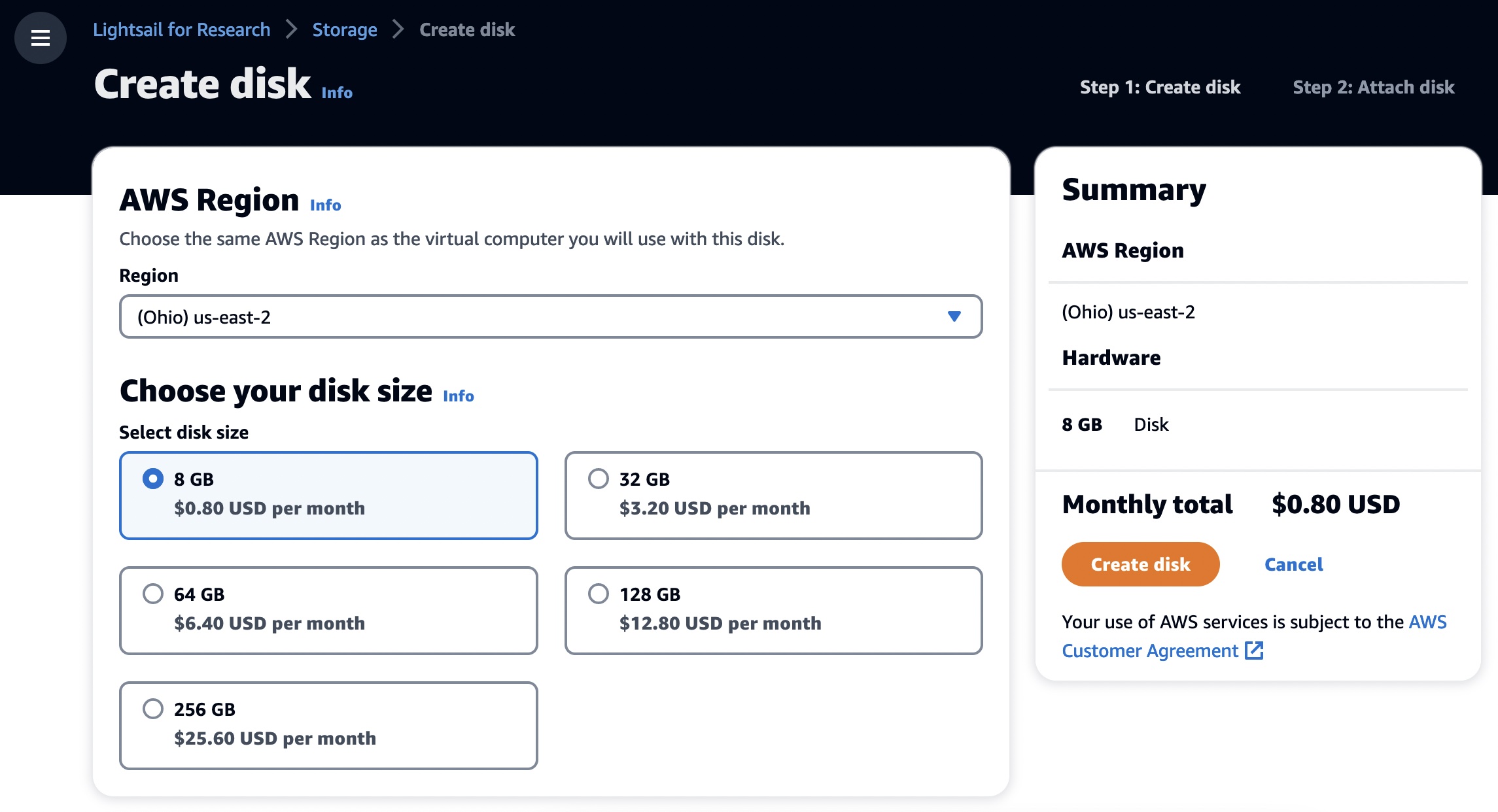

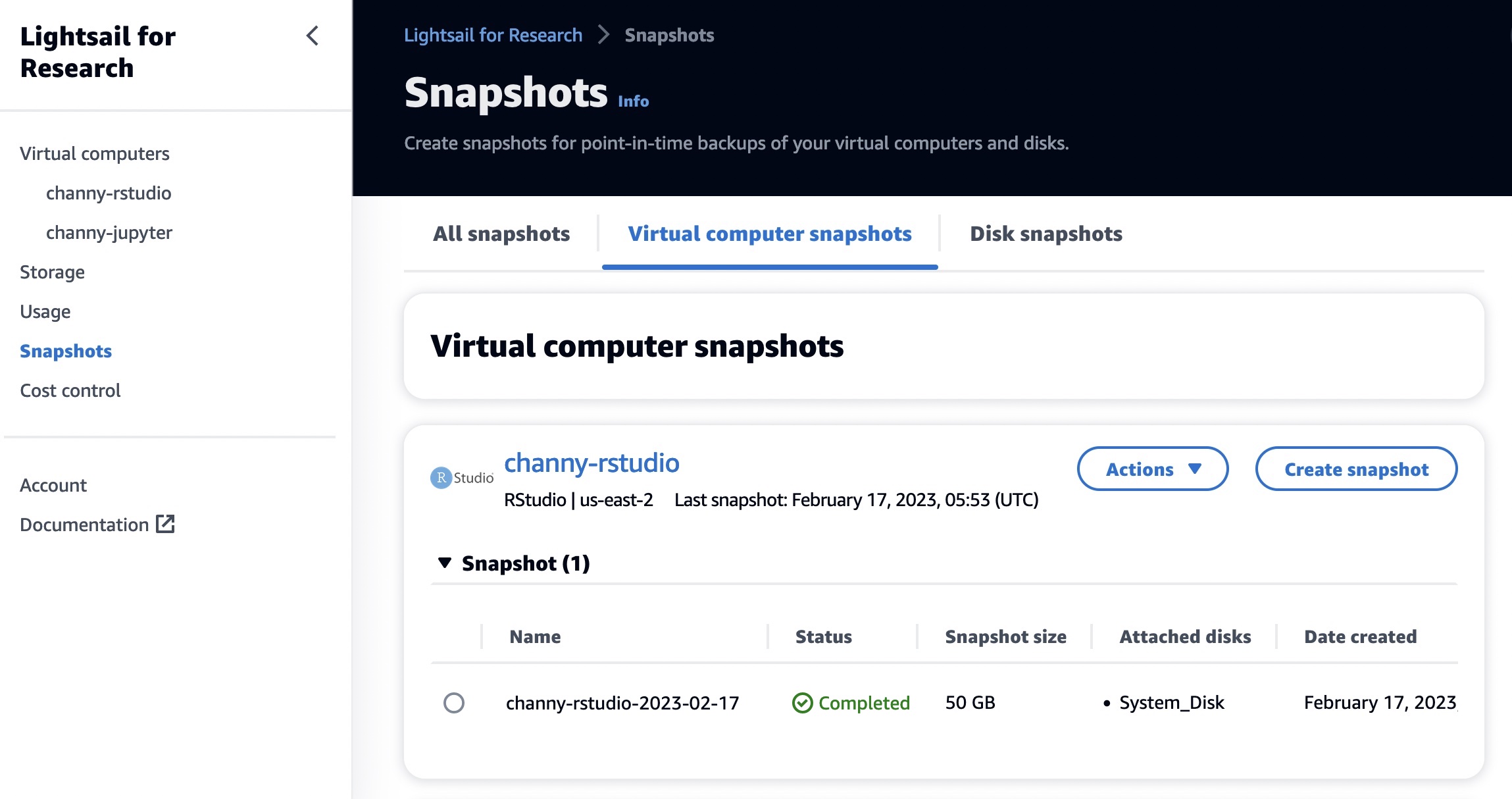

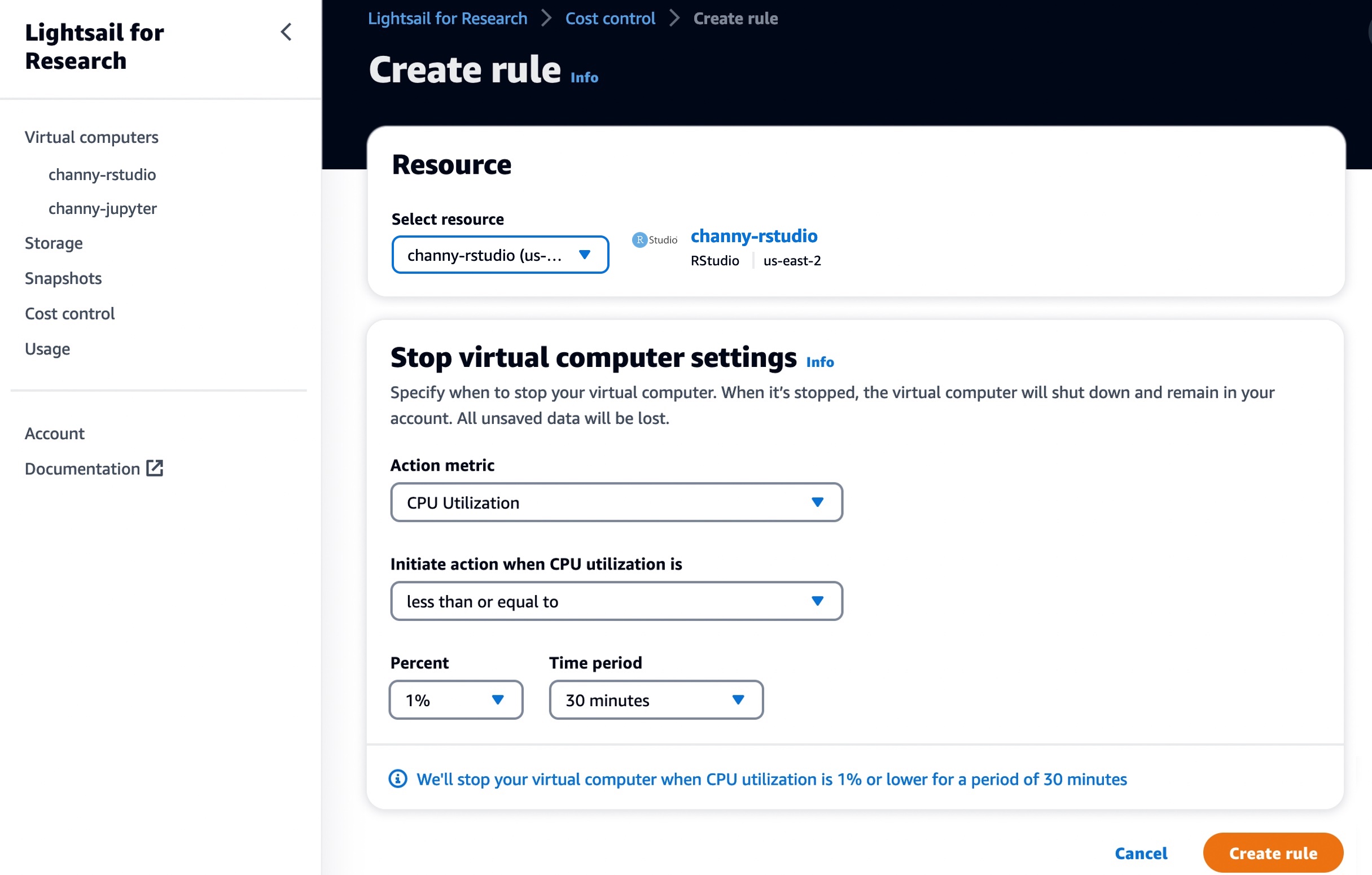

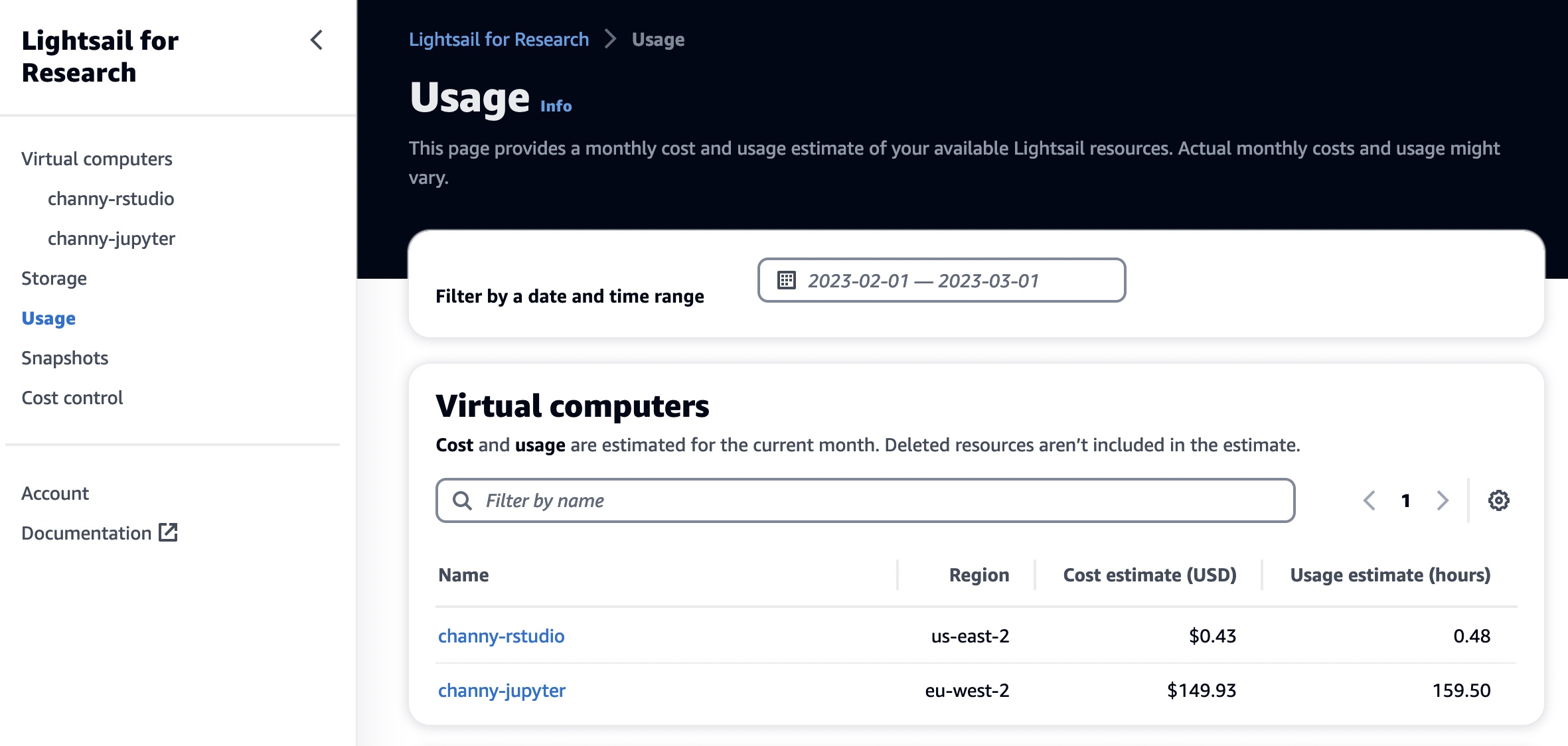

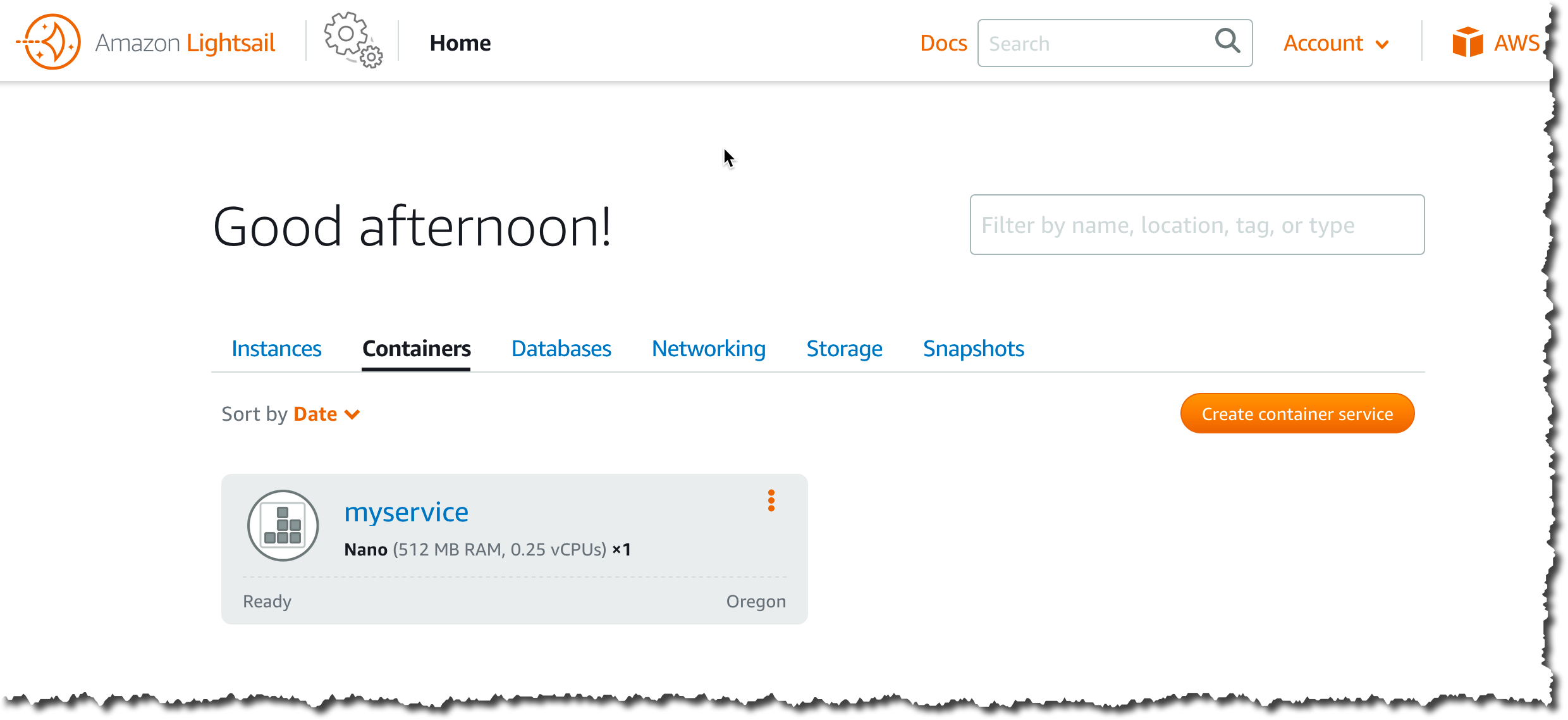

IPv6 instance bundles on Amazon Lightsail – With these new instance bundles, you can get up and running quickly on IPv6-only without the need for a public IPv4 address with the ease of use and simplicity of Amazon Lightsail. If you have existing Lightsail instances with a public IPv4 address, you can migrate your instances to IPv6-only in a few simple steps.

Amazon Virtual Private Cloud (Amazon VPC) supports idempotency for route table and network ACL creation – Idempotent creation of route tables and network ACLs is intended for customers that use network orchestration systems or automation scripts that create route tables and network ACLs as part of a workflow. It allows you to safely retry creation without additional side effects.

Amazon Interactive Video Service (Amazon IVS) announces audio-only pricing for Low-Latency Streaming – Amazon IVS is a managed live streaming solution that is designed to make low-latency or real-time video available to viewers around the world. It now offers audio-only pricing for its Low-Latency Streaming capability at 1/10th of the existing HD video rate.

Sellers can resell third-party professional services in AWS Marketplace – AWS Marketplace sellers, including independent software vendors (ISVs), consulting partners, and channel partners, can now resell third-party professional services in AWS Marketplace. Services can include implementation, assessments, managed services, training, or premium support.

Introducing the AWS Small and Medium Business (SMB) Competency – This is the first go-to-market AWS Specialization designed for partners who deliver to small and medium-sized customers. The SMB Competency provides enhanced benefits for AWS Partners to invest and focus on SMB customer business, such as becoming the go-to standard for participation in new pilots and sales initiatives and receiving unique access to scale demand generation engines.

For a full list of AWS announcements, be sure to keep an eye on the What’s New at AWS page.

X in Y – We launched existing services and instance types in additional Regions:

- Amazon Q in QuickSight is now available in Europe (Frankfurt). With Amazon Q in QuickSight, business users can generate compelling data stories that provide narratives of data, create dashboard summaries to share key insights from data in seconds, and confidently answer questions not answered by dashboards and reports.

- Amazon Launch Wizard is now available in Asia Pacific (Melbourne) and Europe (Spain, Zurich). AWS Launch Wizard provides a step-by-step guide to help size, configure, and deploy AWS resources for SAP HANA and SAP NetWeaver systems built on SAP HANA and Adaptive Server Enterprise (ASE) using application programming interfaces (APIs) or a console-based experience.

- Amazon RDS Custom for Oracle is now available in Europe (Paris). By using Amazon RDS Custom for Oracle, you can benefit from the agility of a managed database service, with features such as automated backups and point-in-time recovery, and also help meet your database application’s customization requirements.

- Amazon EC2 M7a, R7a instances now available in Asia Pacific (Tokyo). M7a and R7a instances, powered by 4th Gen AMD EPYC processors (code-named Genoa) with a maximum frequency of 3.7 GHz, deliver up to 50 percent higher performance compared to M6a and R6a instances, respectively.

- Amazon EC2 C7i instances are now available in Europe (Frankfurt) and South America (São Paulo). C7i instances are powered by custom 4th Gen Intel Xeon Scalable processors only available on AWS. They offer up to 15 percent better performance over comparable x86-based Intel processors utilized by other cloud providers.

- Amazon EC2 High Memory instances now available in Europe (Stockholm). Amazon EC2 High Memory instances are certified by SAP for running Business Suite on HANA, SAP S/4HANA, Data Mart Solutions on HANA, Business Warehouse on HANA, and SAP BW/4HANA in production environments.

- Amazon Connect SMS is now available in Asia Pacific (Seoul, Sydney). Amazon Connect SMS makes it easy for you to resolve customer issues via text messaging.

Other AWS news

Here are some additional projects, programs, and news items that you might find interesting:

Export a Software Bill of Materials using Amazon Inspector – Generating an SBOM gives you critical security information that offers you visibility into specifics about your software supply chain, including the packages you use the most frequently and the related vulnerabilities that might affect your whole company. My colleague Varun Sharma in South Africa shows how to export a consolidated SBOM for the resources monitored by Amazon Inspector across your organization in industry standard formats, including CycloneDx and SPDX. It also shares insights and approaches for analyzing SBOM artifacts using Amazon Athena.

Export a Software Bill of Materials using Amazon Inspector – Generating an SBOM gives you critical security information that offers you visibility into specifics about your software supply chain, including the packages you use the most frequently and the related vulnerabilities that might affect your whole company. My colleague Varun Sharma in South Africa shows how to export a consolidated SBOM for the resources monitored by Amazon Inspector across your organization in industry standard formats, including CycloneDx and SPDX. It also shares insights and approaches for analyzing SBOM artifacts using Amazon Athena.

AWS open source news and updates – My colleague Ricardo writes this weekly open source newsletter in which he highlights new open source projects, tools, and demos from the AWS Community.

Upcoming AWS events

Check your calendars and sign up for these AWS events:

AWS Innovate: AI/ML and Data Edition – Register now for the Asia Pacific & Japan AWS Innovate online conference on February 22, 2024, to explore, discover, and learn how to innovate with artificial intelligence (AI) and machine learning (ML). Choose from over 50 sessions in three languages and get hands-on with technical demos aimed at generative AI builders.

AWS Innovate: AI/ML and Data Edition – Register now for the Asia Pacific & Japan AWS Innovate online conference on February 22, 2024, to explore, discover, and learn how to innovate with artificial intelligence (AI) and machine learning (ML). Choose from over 50 sessions in three languages and get hands-on with technical demos aimed at generative AI builders.

AWS Summit Paris – The AWS Summit Paris is an annual event that is held in Paris, France. It is a great opportunity for cloud computing professionals from all over the world to learn about the latest AWS technologies, network with other professionals, and collaborate on projects. The Summit is free to attend and features keynote presentations, breakout sessions, and hands-on labs. Registrations are open!

AWS Summit Paris – The AWS Summit Paris is an annual event that is held in Paris, France. It is a great opportunity for cloud computing professionals from all over the world to learn about the latest AWS technologies, network with other professionals, and collaborate on projects. The Summit is free to attend and features keynote presentations, breakout sessions, and hands-on labs. Registrations are open!

AWS Community re:Invent re:Caps – Join a Community re:Cap event organized by volunteers from AWS User Groups and AWS Cloud Clubs around the world to learn about the latest announcements from AWS re:Invent.

AWS Community re:Invent re:Caps – Join a Community re:Cap event organized by volunteers from AWS User Groups and AWS Cloud Clubs around the world to learn about the latest announcements from AWS re:Invent.

You can browse all upcoming in-person and virtual events.

That’s all for this week. Check back next Monday for another Weekly Roundup!

This post is part of our Weekly Roundup series. Check back each week for a quick roundup of interesting news and announcements from AWS!