Post Syndicated from Macey Neff original https://aws.amazon.com/blogs/compute/introducing-instance-maintenance-policy-for-amazon-ec2-auto-scaling/

This post is written by Ahmed Nada, Principal Solutions Architect, Flexible Compute and Kevin OConnor, Principal Product Manager, Amazon EC2 Auto Scaling.

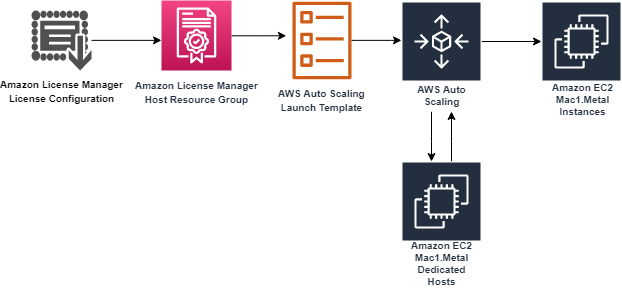

Amazon Web Services (AWS) customers around the world trust Amazon EC2 Auto Scaling to provision, scale, and manage Amazon Elastic Compute Cloud (Amazon EC2) capacity for their workloads. Customers have come to rely on Amazon EC2 Auto Scaling instance refresh capabilities to drive deployments of new EC2 Amazon Machine Images (AMIs), change EC2 instance types, and make sure their code is up-to-date.

Currently, EC2 Auto Scaling uses a combination of ‘launch before terminate’ and ‘terminate and launch’ behaviors depending on the replacement cause. Customers have asked for more control over when new instances are launched, so they can minimize any potential disruptions created by replacing instances that are actively in use. This is why we’re excited to introduce instance maintenance policy for Amazon EC2 Auto Scaling, an enhancement that provides customers with greater control over the EC2 instance replacement processes to make sure instances are replaced in a way that aligns with performance priorities and operational efficiencies while minimizing Amazon EC2 costs.

This post dives into varying ways to configure an instance maintenance policy and gives you tools to use it in your Amazon EC2 Auto Scaling groups.

Background

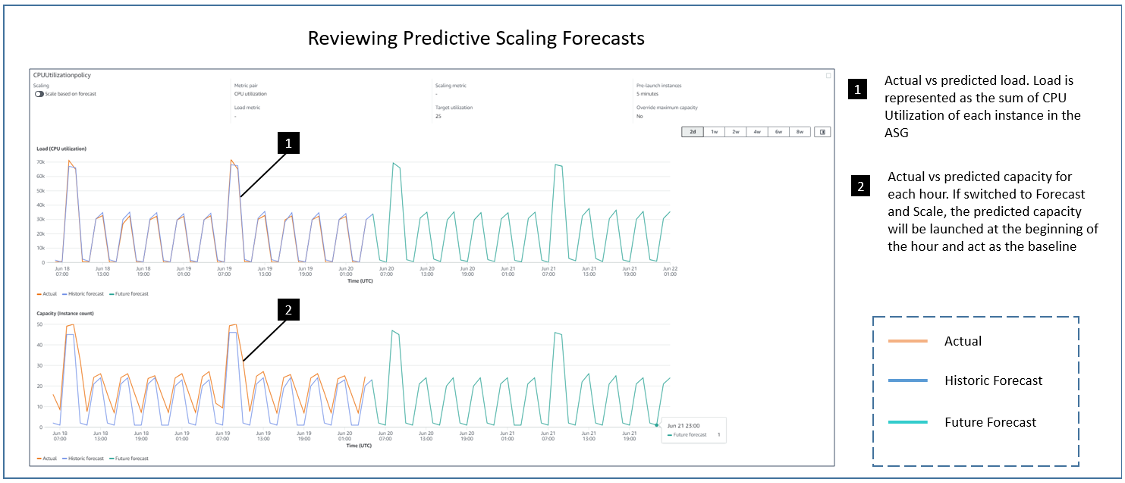

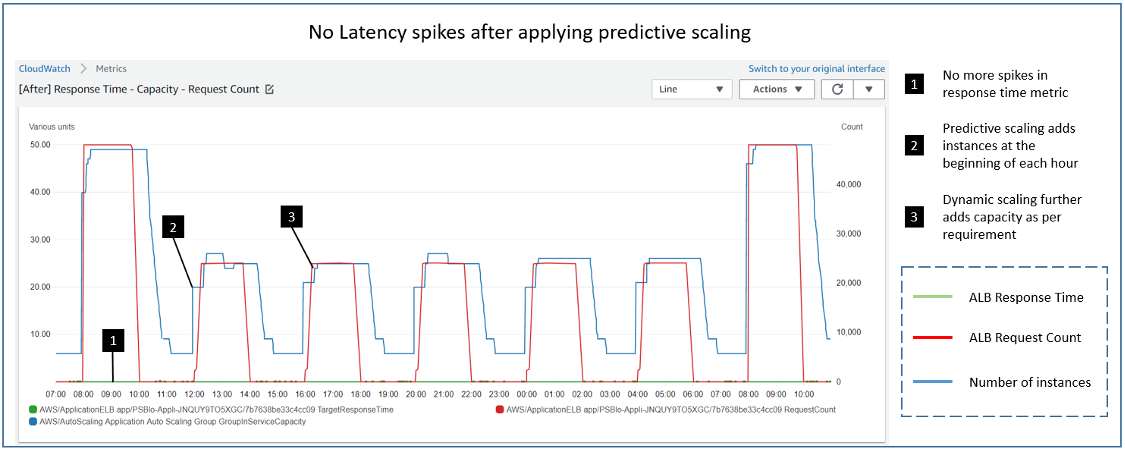

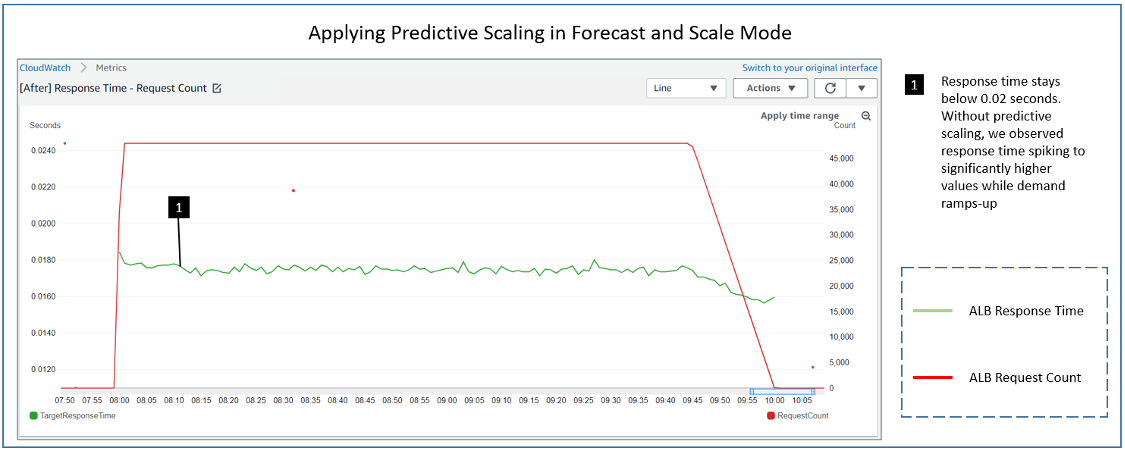

AWS launched Amazon EC2 Auto Scaling in 2009 with the goal of simplifying the process of managing Amazon EC2 capacity. Since then, we’ve continued to innovate with advanced features like predictive scaling, attribute-based instance selection, and warm pools.

A fundamental Amazon EC2 Auto Scaling capability is replacing instances based on instance health, due to Amazon EC2 Spot Instance interruptions, or in response to an instance refresh operation. The instance refresh capability allows you to maintain a fleet of healthy and high-performing EC2 instances in your Amazon EC2 Auto Scaling group. In some situations, it’s possible that terminating instances before launching a replacement can impact performance, or in the worst case, cause downtime for your applications. No matter what your requirements are, instance maintenance policy allows you to fine-tune the instance replacement process to match your specific needs.

Overview

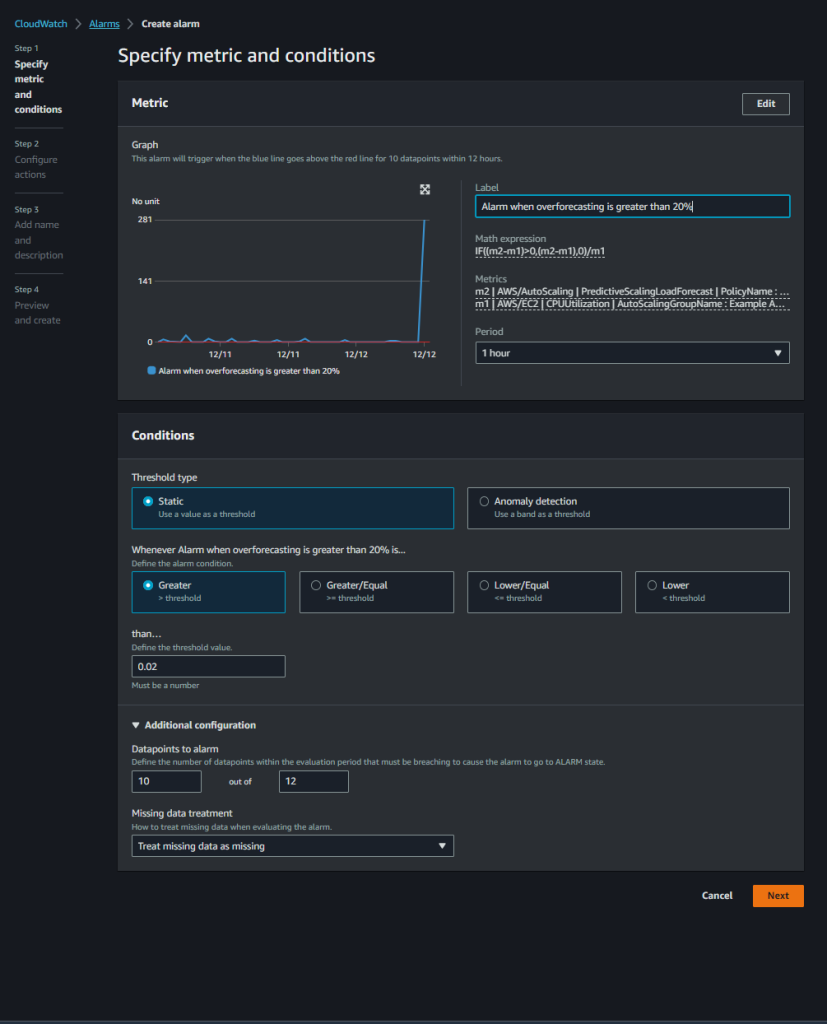

Instance maintenance policy adds two new Amazon EC2 Auto Scaling group settings: minimum healthy percentage (MinHealthyPercentage) and maximum healthy percentage (MaxHealthyPercentage). These values represent the percentage of the group’s desired capacity that must be in a healthy and running state during instance replacement. Values for MinHealthyPercentage can range from 0 to 100 percent and from 100 to 200 percent for MaxHealthyPercentage. These settings are applied to all events that lead to instance replacement, such as Health-check based replacement, Max Instance Lifetime, EC2 Spot Capacity Rebalancing, Availability Zone rebalancing, Instance Purchase Option Rebalancing, and Instance refresh. You can also override the group-level instance maintenance policy during instance refresh operations to meet specific deployment use cases.

Before launching instance maintenance policy, an Amazon EC2 Auto Scaling group would use the previously described behaviors when replacing instances. By setting the MinHealthyPercentage of the instance maintenance policy to 100% and the MaxHealthyPercentage to a value greater than 100%, the Amazon EC2 Auto Scaling group first launches replacement instances and waits for them to become available before terminating the instances being replaced.

Setting up instance maintenance policy

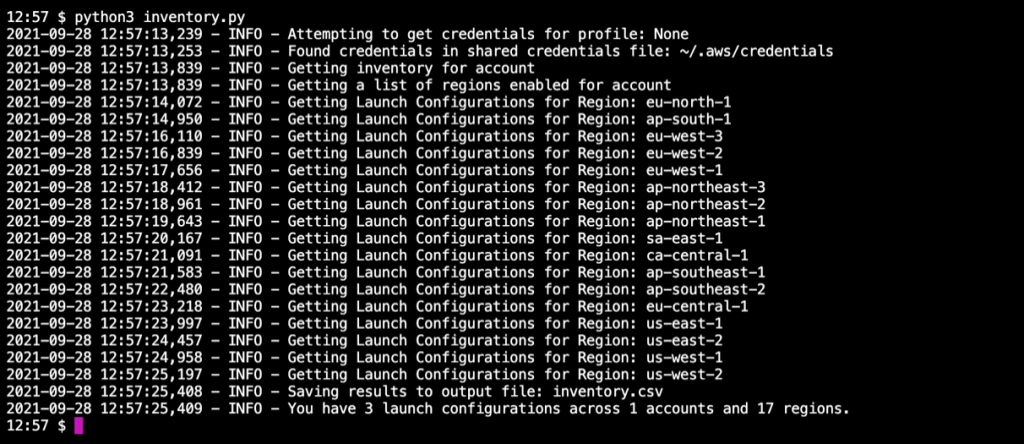

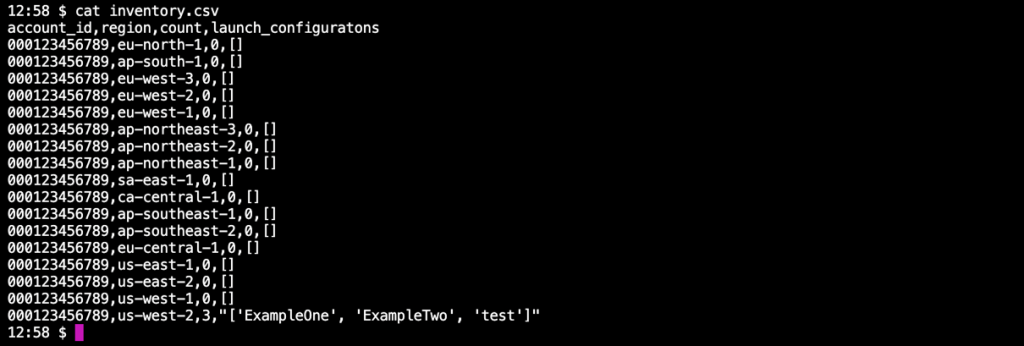

You can add an instance maintenance policy to new or existing Amazon EC2 Auto Scaling groups using the AWS Management Console, AWS Command Line Interface (AWS CLI), AWS SDK, AWS CloudFormation, and Terraform.

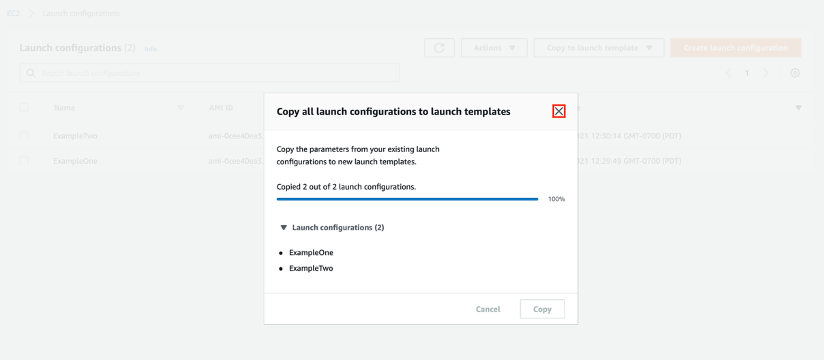

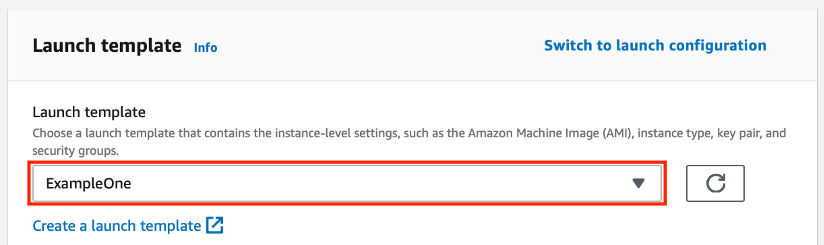

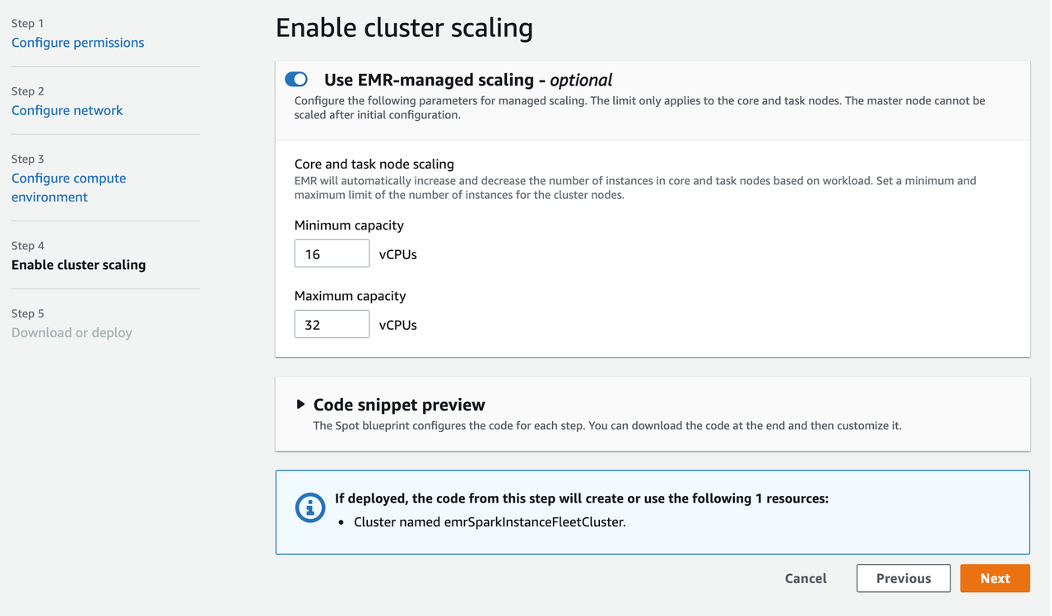

When creating or editing Amazon EC2 Auto Scaling groups in the Console, you are presented with four options to define the replacement behavior of your instance maintenance policy. These options include the No policy option, which allows you to maintain the default instance replacement settings that the Amazon EC2 Auto Scaling service uses today.

Image 1: The GUI for the instance maintenance policy feature within the “Create Auto Scaling group” wizard.

Using instance maintenance policy to increase application availability

The Launch before terminating policy is the right selection when you want to favor availability of your Amazon EC2 Auto Scaling group capacity. This policy setting temporarily increases the group’s capacity by launching new instances during replacement operations. In the Amazon EC2 console, you select the Launch before terminating replacement behavior, and then set your desired MaxHealthyPercentage value to determine how many more instances should be launched during instance replacement.

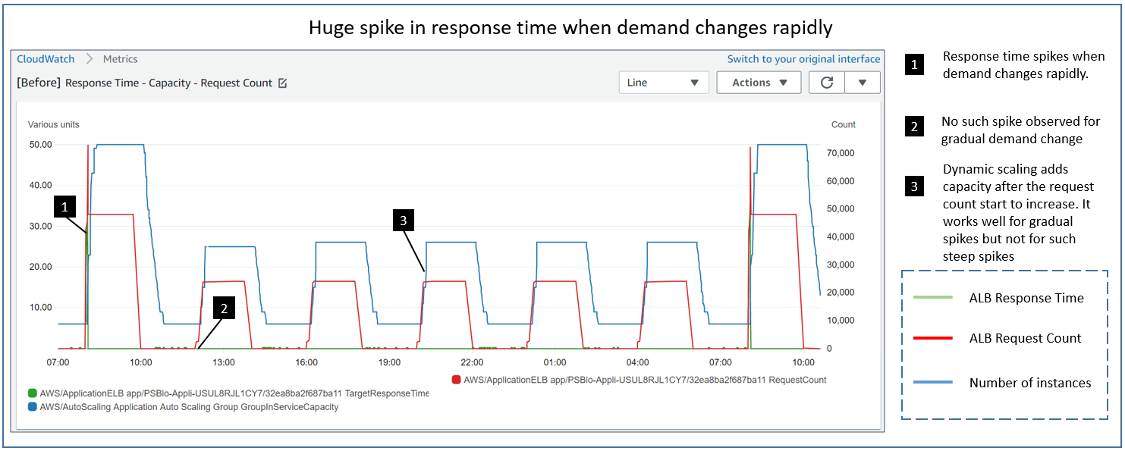

For example, if you are managing a workload that requires optimal availability during instance replacements, choose the Launch before terminating policy type with a MinHealthyPercentage set to 100%. If you set your MaxHealthyPercentage to 150%, then Amazon EC2 Auto Scaling launches replacement instances before terminating instances to be replaced. You should see the desired capacity increase by 50%, exceeding the group maximum capacity during the operation to provide you with the needed availability. The chart in the following figure illustrates what an instance refresh operation would behave like with a Launch before terminating policy.

Figure 1: A graph simulating the instance replacement process with a policy configured to launch before terminating.

Overriding a group’s instance maintenance policy during instance refresh

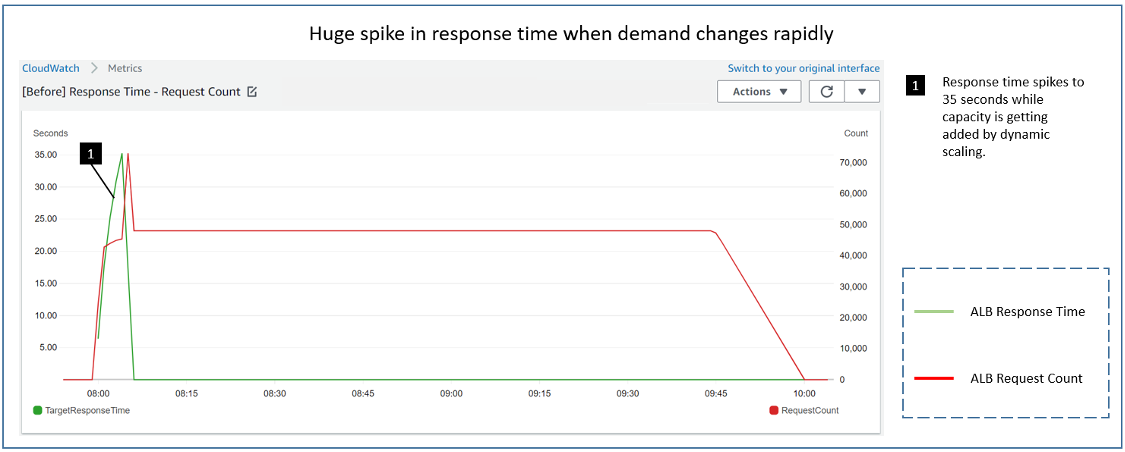

Instance maintenance policy settings apply to all instance replacement operations, but they can be overridden at the start of a new instance refresh operation. Overriding instance maintenance policy is helpful in situations like a bad code deployment that needs replacing without downtime. You could configure an instance maintenance policy to bring an entirely new group’s worth of instances into service before terminating the instances with the problematic code. In this situation, you set the MaxHealthyPercentage to 200% for the instance refresh operation and the replacement happens in a single cycle to promptly address the bad code issue. Setting the MaxHealthyPercentage to 200% will allow the replacement settings to breach the Auto Scaling Group’s Max capacity value, but would be constrained by any account level quotas, so be sure to factor these into application of this feature. See the following figure for a visualization of how this operation would behave.

Figure 2: A graph simulating the instance replacement process with a policy configured to accelerate a new deployment.

Controlling costs during replacements and deployments

The Terminate and launch policy option allows you to favor cost control during instance replacement. By configuring this policy type, Amazon EC2 Auto Scaling terminates existing instances and then launches new instances during the replacement process. To set a Terminate and launch policy, you must specify a MinHealthyPercentage to establish how low the capacity can drop, and keep your MaxHealthyPercentage set to 100%. This configuration keeps the Auto Scaling group’s capacity at or below the desired capacity setting.

The following figure shows behavior with the MinHealthyPercentage set to 80%. During the instance replacement process, the Auto Scaling group first terminates 20% of the instances and immediately launches replacement instances, temporarily reducing the group’s healthy capacity to 80%. The group waits for the new instances to pass its configured health checks and complete warm up before it moves on to replacing the remaining batches of instances.

Figure 3: A graph simulating the instance replacement process with a policy configured to terminate and launch.

Note that the difference between MinHealthyPercentage and MaxHealthyPercentage values impacts the speed of the instance replacement process. In the preceding figure, the Amazon EC2 Auto Scaling group replaces 20% of the instances in each cycle. The larger the gap between the MinHealthyPercentage and MaxHealthyPercentage, the faster the replacement process.

Using a custom policy for maximum flexibility

You can also choose to adopt a Custom behavior option, where you have the flexibility to set the MinHealthyPercentage and MinHealthyPercentage values to whatever you choose. Using this policy type allows you to fine-tune the replacement behavior and control the capacity of your instances within the Amazon EC2 Auto Scaling group to tailor the instance maintenance policy to meet your unique needs.

What about fractional replacement calculations?

Amazon EC2 Auto Scaling always favors availability when performing instance replacements. When instance maintenance policy is configured, Amazon EC2 Auto Scaling also prioritizes launching a new instance rather than going below the MinHealthyPercentage. For example, in an Amazon EC2 Auto Scaling group with a desired capacity of 10 instances and an instance maintenance policy with MinHealthyPercentage set to 99% and MaxHealthyPercentage set to 100%, your settings do not allow for a reduction in capacity of at least one instance. Therefore, Amazon EC2 Auto Scaling biases toward launch before terminating and launches one new instance before terminating any instances that need replacing.

Configuring an instance maintenance policy is not mandatory. If you don’t configure your Amazon EC2 Auto Scaling groups to use an instance maintenance policy, then there is no change in the behavior of your Amazon EC2 Auto Scaling groups’ existing instance replacement process.

You can set a group-level instance maintenance policy through your CloudFormation or Terraform templates. Within your templates, you must set values for both the MinHealthyPercentage and MaxHealthyPercentage settings to determine the instance replacement behavior that aligns with the specific requirements of your Amazon EC2 Auto Scaling group.

Conclusion

In this post, we introduced the new instance maintenance policy feature for Amazon EC2 Auto Scaling groups, explored its capabilities, and provided examples of how to use this new feature. Instance maintenance policy settings apply to all instance replacement processes with the option to override the settings on a per instance refresh basis. By configuring instance maintenance policies, you can control the launch and lifecycle of instances in your Amazon EC2 Auto Scaling groups, increase application availability, reduce manual intervention, and improve cost control for your Amazon EC2 usage.

To learn more about the feature and how to get started, refer to the Amazon EC2 Auto Scaling User Guide.