Post Syndicated from Nikunj Vaidya original https://aws.amazon.com/blogs/devops/configure-devops-guru-multiple-accounts-regions-using-cfn-stacksets/

As applications become increasingly distributed and complex, operators need more automated practices to maintain application availability and reduce the time and effort spent on detecting, debugging, and resolving operational issues.

Enter Amazon DevOps Guru (preview).

Amazon DevOps Guru is a machine learning (ML) powered service that gives you a simpler way to improve an application’s availability and reduce expensive downtime. Without involving any complex configuration setup, DevOps Guru automatically ingests operational data in your AWS Cloud. When DevOps Guru identifies a critical issue, it automatically alerts you with a summary of related anomalies, the likely root cause, and context on when and where the issue occurred. DevOps Guru also, when possible, provides prescriptive recommendations on how to remediate the issue.

Using Amazon DevOps Guru is easy and doesn’t require you to have any ML expertise. To get started, you need to configure DevOps Guru and specify which AWS resources to analyze. If your applications are distributed across multiple AWS accounts and AWS Regions, you need to configure DevOps Guru for each account-Region combination. Though this may sound complex, it’s in fact very simple to do so using AWS CloudFormation StackSets. This post walks you through the steps to configure DevOps Guru across multiple AWS accounts or organizational units, using AWS CloudFormation StackSets.

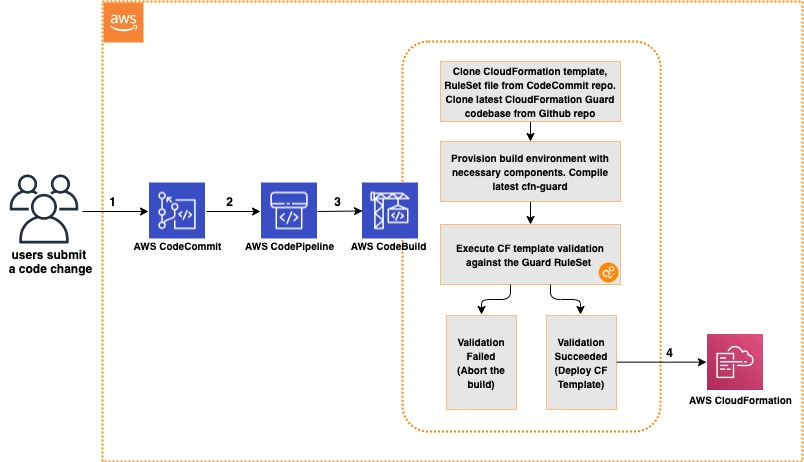

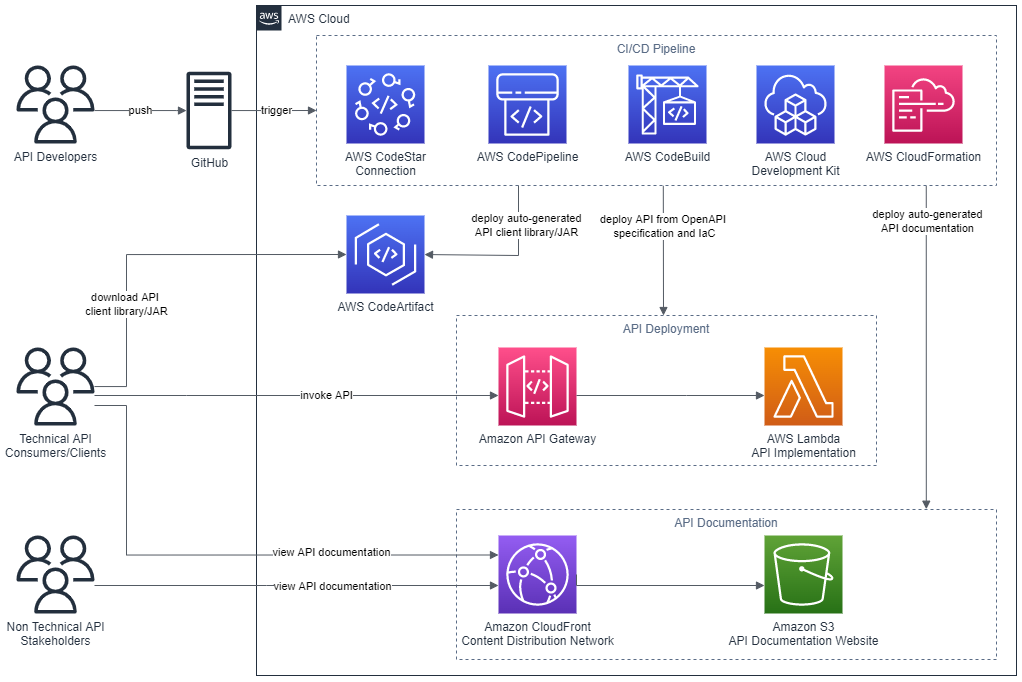

Solution overview

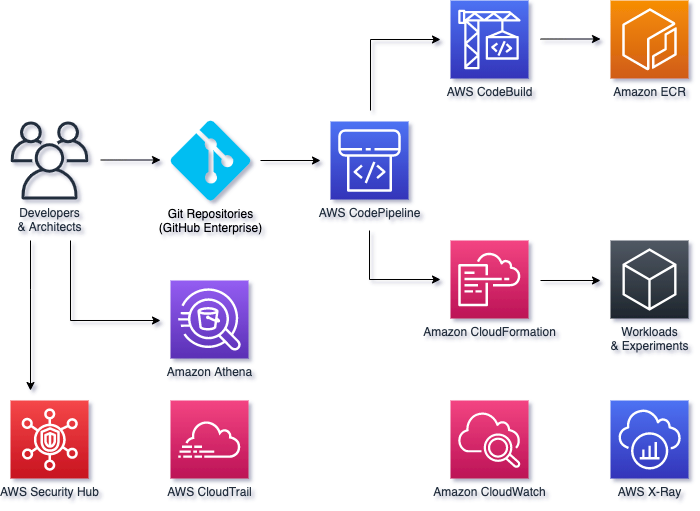

The goal of this post is to provide you with sample templates to facilitate onboarding Amazon DevOps Guru across multiple AWS accounts. Instead of logging into each account and enabling DevOps Guru, you use AWS CloudFormation StackSets from the primary account to enable DevOps Guru across multiple accounts in a single AWS CloudFormation operation. When it’s enabled, DevOps Guru monitors your associated resources and provides you with detailed insights for anomalous behavior along with intelligent recommendations to mitigate and incorporate preventive measures.

We consider various options in this post for enabling Amazon DevOps Guru across multiple accounts and Regions:

- All resources across multiple accounts and Regions

- Resources from specific CloudFormation stacks across multiple accounts and Regions

- All resources in an organizational unit

In the following diagram, we launch the AWS CloudFormation StackSet from a primary account to enable Amazon DevOps Guru across two AWS accounts and carry out operations to generate insights. The StackSet uses a single CloudFormation template to configure DevOps Guru and deploys it across multiple accounts and Regions, as specified in the command.

Figure: Shows enabling of DevOps Guru using CloudFormation StackSets

When Amazon DevOps Guru is enabled to monitor your resources within the account, it uses a combination of vended Amazon CloudWatch metrics, AWS CloudTrail logs, and specific patterns from its ML models to detect anomalies. When it detects an anomaly, it generates an insight with recommendations.

Figure: Shows DevOps Guru monitoring the resources and generating insights for anomalies detected

Prerequisites

To complete this post, you should have the following prerequisites:

- Two AWS accounts. For this post, we use the account numbers 111111111111 (primary account) and 222222222222. We will carry out the CloudFormation operations and monitoring of the stacks from this primary account.

- To use organizations instead of individual accounts, identify the ID of an organizational unit (OU) that contains at least one AWS account.

- Access to a bash environment, either using an AWS Cloud9 environment or your local terminal with the AWS Command Line Interface (AWS CLI) installed.

- AWS Identity and Access Management (IAM) roles for AWS CloudFormation StackSets.

- Knowledge of CloudFormation StackSets.

(a) Using an AWS Cloud9 environment or AWS CLI terminal

We recommend using AWS Cloud9 to create an environment to get access to the AWS CLI from a bash terminal. Make sure you select Amazon Linux 2 as the operating system for the AWS Cloud9 environment.

Alternatively, you may use your bash terminal in your favorite IDE and configure your AWS credentials in your terminal.

(b) Creating IAM roles

If you use AWS Organizations for account management, you don't need to create the IAM roles manually; StackSets instead relies on Organizations-based trusted access and service-linked roles (SLRs). In that case, you may skip sections (b), (c), and (d). If you don't use Organizations, read on.

Before you can deploy AWS CloudFormation StackSets, you must have the following IAM roles:

- AWSCloudFormationStackSetAdministrationRole

- AWSCloudFormationStackSetExecutionRole

Create the IAM role AWSCloudFormationStackSetAdministrationRole in the primary account, and create the AWSCloudFormationStackSetExecutionRole role in every account where you want to run the StackSets.

If you’re already using AWS CloudFormation StackSets, you should already have these roles in place. If not, complete the following steps to provision these roles.

(c) Creating the AWSCloudFormationStackSetAdministrationRole role

To create the AWSCloudFormationStackSetAdministrationRole role, sign in to your primary AWS account and go to the AWS Cloud9 terminal.

Execute the following command to download the file:

curl -O https://s3.amazonaws.com/cloudformation-stackset-sample-templates-us-east-1/AWSCloudFormationStackSetAdministrationRole.yml

Execute the following command to create the stack:

aws cloudformation create-stack \
  --stack-name AdminRole \
  --template-body file:///$PWD/AWSCloudFormationStackSetAdministrationRole.yml \
  --capabilities CAPABILITY_NAMED_IAM \
  --region us-east-1

(d) Creating the AWSCloudFormationStackSetExecutionRole role

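You now create the role AWSCloudFormationStackSetExecutionRole in the primary account and in every other target account where you want to enable DevOps Guru. For this post, we create it in our two accounts. Because IAM roles are global, creating the stack once per account (here in us-east-1) covers both Regions we deploy to (us-east-1 and us-east-2). Run the following commands in each account.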

Execute the following command to download the file:

curl -O https://s3.amazonaws.com/cloudformation-stackset-sample-templates-us-east-1/AWSCloudFormationStackSetExecutionRole.yml

Execute the following command to create the stack:

aws cloudformation create-stack \
  --stack-name ExecutionRole \
  --template-body file:///$PWD/AWSCloudFormationStackSetExecutionRole.yml \
  --parameters ParameterKey=AdministratorAccountId,ParameterValue=111111111111 \
  --capabilities CAPABILITY_NAMED_IAM \
  --region us-east-1

Now that the roles are provisioned, you can use AWS CloudFormation StackSets in the next section.

Running AWS CloudFormation StackSets to enable DevOps Guru

With the required IAM roles in place, now you can deploy the stack sets to enable DevOps Guru across multiple accounts.

As a first step, go to your bash terminal and clone the GitHub repository to access the CloudFormation templates:

git clone https://github.com/aws-samples/amazon-devopsguru-samples

cd amazon-devopsguru-samples/enable-devopsguru-stacksets

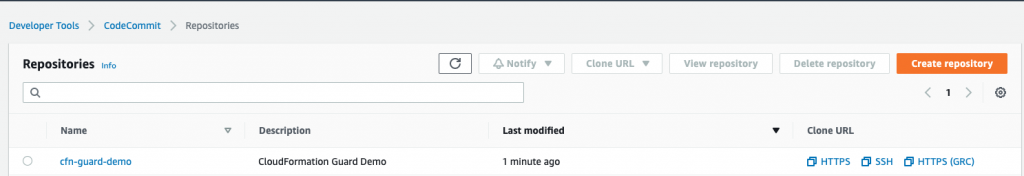

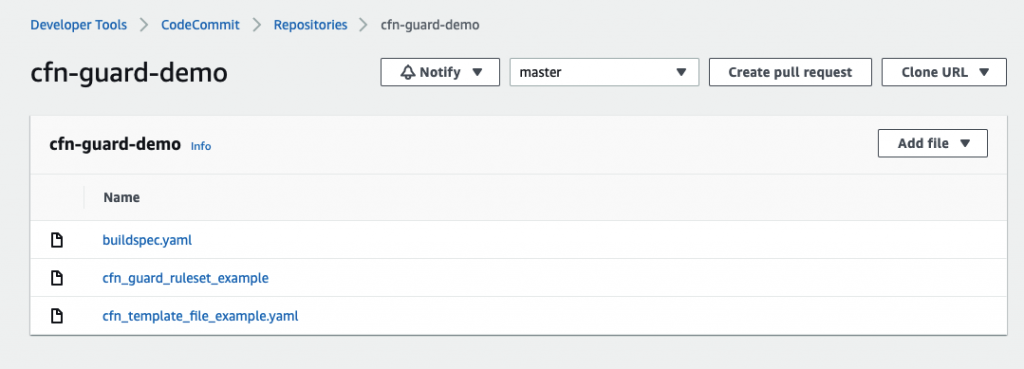

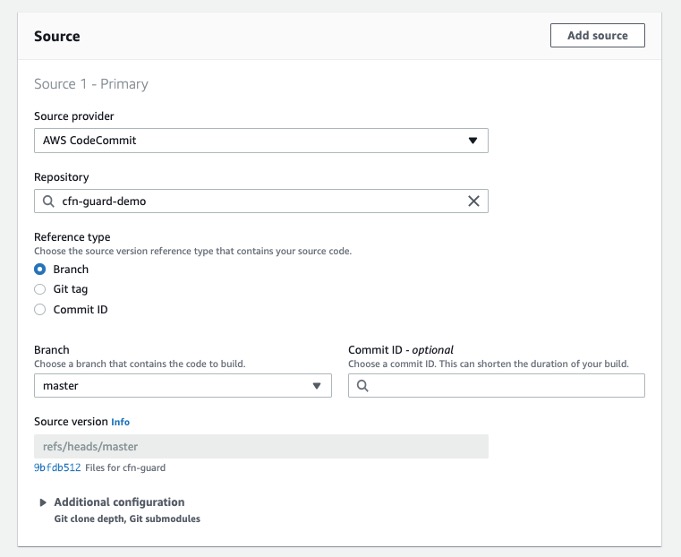

(a) Configuring Amazon SNS topics for DevOps Guru to send notifications for operational insights

If you want to receive notifications for operational insights generated by Amazon DevOps Guru, you need to configure an Amazon Simple Notification Service (Amazon SNS) topic across multiple accounts. If you have already configured SNS topics and want to use them, identify the topic name and directly skip to the step to enable DevOps Guru.

Note for Central notification target: You may prefer to configure an SNS Topic in the central AWS account so that all Insight notifications are sent to a single target. In such a case, you would need to modify the central account SNS topic policy to allow other accounts to send notifications.

To create your stack set, enter the following command (provide an email for receiving insights):

aws cloudformation create-stack-set \
  --stack-set-name CreateDevOpsGuruTopic \
  --template-body file:///$PWD/CreateSNSTopic.yml \
  --parameters ParameterKey=EmailAddress,ParameterValue=<your-email-address> \
  --region us-east-1

Instantiate AWS CloudFormation StackSets instances across multiple accounts and multiple Regions (provide your account numbers and Regions as needed):

aws cloudformation create-stack-instances \
  --stack-set-name CreateDevOpsGuruTopic \
  --accounts '["111111111111","222222222222"]' \
  --regions '["us-east-1","us-east-2"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1

After you run this command, the SNS topic devops-guru is created in each specified Region of both accounts. Go to the email address you specified and confirm each subscription by choosing the Confirm subscription link in every email that you receive. Your SNS topic is now fully configured for DevOps Guru to use.
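As a rough illustration of what to expect, the following sketch lists one topic ARN per account and Region combination used above. It assumes the topic name devops-guru from this post and the standard SNS ARN layout; the helper name expected_topic_arns is hypothetical and not part of the sample repository.

```shell
# Hypothetical helper: print the SNS topic ARN expected in every
# account-Region combination targeted by the stack set.
#   $1 = topic name, $2 = space-separated account IDs, $3 = space-separated Regions
expected_topic_arns() (
  topic="$1"
  for account in $2; do
    for region in $3; do
      printf 'arn:aws:sns:%s:%s:%s\n' "$region" "$account" "$topic"
    done
  done
)

expected_topic_arns devops-guru "111111111111 222222222222" "us-east-1 us-east-2"
# Prints:
# arn:aws:sns:us-east-1:111111111111:devops-guru
# arn:aws:sns:us-east-2:111111111111:devops-guru
# arn:aws:sns:us-east-1:222222222222:devops-guru
# arn:aws:sns:us-east-2:222222222222:devops-guru
```

With two accounts and two Regions, that is four topics, so you would likely see one confirmation email per topic.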

Figure: Shows creation of SNS topic to receive insights from DevOps Guru

(b) Enabling DevOps Guru

Let us first examine the CloudFormation template format to enable DevOps Guru and configure it to send notifications over SNS topics. See the following code snippet:

Resources:
  DevOpsGuruMonitoring:
    Type: AWS::DevOpsGuru::ResourceCollection
    Properties:
      ResourceCollectionFilter:
        CloudFormation:
          StackNames:
            - "*"
  DevOpsGuruNotification:
    Type: AWS::DevOpsGuru::NotificationChannel
    Properties:
      Config:
        Sns:
          TopicArn: arn:aws:sns:us-east-1:111111111111:SnsTopic

When the StackNames property is fed with a value of *, it enables DevOps Guru for all CloudFormation stacks. However, you can enable DevOps Guru for only specific CloudFormation stacks by providing the desired stack names as shown in the following code:

Resources:
  DevOpsGuruMonitoring:
    Type: AWS::DevOpsGuru::ResourceCollection
    Properties:
      ResourceCollectionFilter:
        CloudFormation:
          StackNames:
            - StackA
            - StackB

For the CloudFormation template in this post, we provide the names of the stacks using the parameter inputs. To enable the AWS CLI to accept a list of inputs, we need to configure the input type as CommaDelimitedList, instead of a base string. We also provide the parameter SnsTopicName, which the template substitutes into the TopicArn property.

See the following code:

AWSTemplateFormatVersion: 2010-09-09
Description: Enable Amazon DevOps Guru

Parameters:
  CfnStackNames:
    Type: CommaDelimitedList
    Description: Comma separated names of the CloudFormation Stacks for DevOps Guru to analyze.
    Default: "*"
  SnsTopicName:
    Type: String
    Description: Name of SNS Topic

Resources:
  DevOpsGuruMonitoring:
    Type: AWS::DevOpsGuru::ResourceCollection
    Properties:
      ResourceCollectionFilter:
        CloudFormation:
          StackNames: !Ref CfnStackNames
  DevOpsGuruNotification:
    Type: AWS::DevOpsGuru::NotificationChannel
    Properties:
      Config:
        Sns:
          TopicArn: !Sub arn:aws:sns:${AWS::Region}:${AWS::AccountId}:${SnsTopicName}
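To make the CommaDelimitedList behavior more concrete, the following shell sketch mimics how a comma-separated parameter value turns into a list of stack names, with surrounding whitespace trimmed from each entry. It only approximates CloudFormation's parsing for illustration; split_comma_list is a hypothetical helper, not an AWS tool.

```shell
# Hypothetical helper: split a comma-separated value into one entry per line,
# roughly the way a CommaDelimitedList parameter yields a list of strings.
split_comma_list() (
  IFS=','
  set -f   # disable globbing so a literal "*" is not expanded to file names
  for item in $1; do
    # Trim leading, then trailing, whitespace from each entry.
    item="${item#"${item%%[![:space:]]*}"}"
    item="${item%"${item##*[![:space:]]}"}"
    printf '%s\n' "$item"
  done
)

split_comma_list "StackA, StackB"   # two entries: StackA and StackB
split_comma_list "*"                # one entry: the wildcard for all stacks
```

Each printed line corresponds to one element that !Ref CfnStackNames would pass to the StackNames property.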

Now that we reviewed the CloudFormation syntax, we will use this template to implement the solution. For this post, we will consider three use cases for enabling Amazon DevOps Guru:

(i) For all resources across multiple accounts and Regions

(ii) For all resources from specific CloudFormation stacks across multiple accounts and Regions

(iii) For all resources in an organization

Let us review each of the above points in detail.

(i) Enabling DevOps Guru for all resources across multiple accounts and Regions

Note: Carry out the following steps in your primary AWS account.

You can use the CloudFormation template (EnableDevOpsGuruForAccount.yml) from the current directory, create a stack set, and then instantiate AWS CloudFormation StackSets instances across desired accounts and Regions.

The following command creates the stack set:

aws cloudformation create-stack-set \
  --stack-set-name EnableDevOpsGuruForAccount \
  --template-body file:///$PWD/EnableDevOpsGuruForAccount.yml \
  --parameters "ParameterKey=CfnStackNames,ParameterValue=*" \
    ParameterKey=SnsTopicName,ParameterValue=devops-guru \
  --region us-east-1

The following command instantiates AWS CloudFormation StackSets instances across multiple accounts and Regions:

aws cloudformation create-stack-instances \
  --stack-set-name EnableDevOpsGuruForAccount \
  --accounts '["111111111111","222222222222"]' \
  --regions '["us-east-1","us-east-2"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1
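Because create-stack-instances returns before the deployment finishes, it can help to check which stack instances have not yet reached the CURRENT status. The following is a minimal sketch: pending_instances is a hypothetical filter that assumes its input is the text output of list-stack-instances restricted to the Account, Region, and Status fields, as shown in the usage comment.

```shell
# Hypothetical filter: read "ACCOUNT REGION STATUS" lines on stdin and
# print only the stack instances whose status is not CURRENT.
pending_instances() {
  awk '$3 != "CURRENT" { print $1, $2, $3 }'
}

# Usage against a real stack set (requires AWS credentials, so left as a comment):
#   aws cloudformation list-stack-instances \
#     --stack-set-name EnableDevOpsGuruForAccount \
#     --region us-east-1 \
#     --query 'Summaries[].[Account,Region,Status]' \
#     --output text | pending_instances

# Offline example with sample data:
printf '111111111111\tus-east-1\tCURRENT\n222222222222\tus-east-2\tOUTDATED\n' | pending_instances
# Prints: 222222222222 us-east-2 OUTDATED
```

An empty result suggests every instance is up to date; any remaining lines point to the account and Region to inspect in the CloudFormation console.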

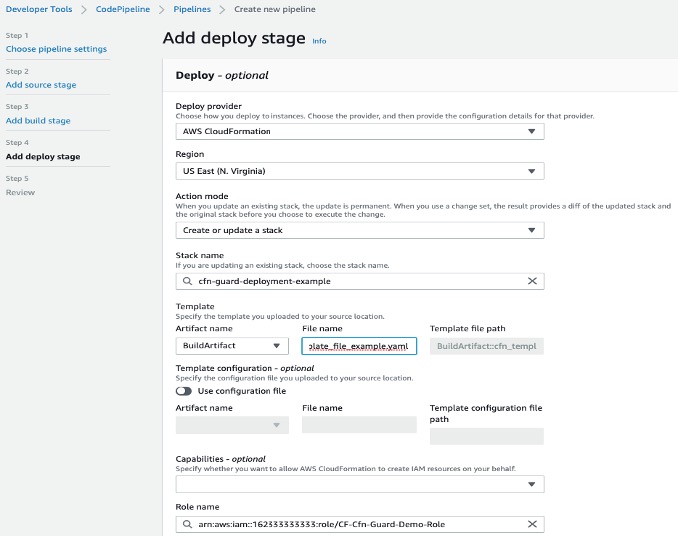

The following screenshot of the AWS CloudFormation console in the primary account shows the stack set deployed in both accounts.

Figure: Screenshot for deployed StackSet and Stack instances

The following screenshot of the Amazon DevOps Guru console shows DevOps Guru is enabled to monitor all CloudFormation stacks.

Figure: Screenshot of DevOps Guru dashboard showing DevOps Guru enabled for all CloudFormation stacks

(ii) Enabling DevOps Guru for specific CloudFormation stacks for individual accounts

Note: Carry out the following steps in your primary AWS account

In this use case, we want to enable Amazon DevOps Guru only for specific CloudFormation stacks for individual accounts. We use the AWS CloudFormation StackSets override parameters feature to rerun the stack set with specific values for CloudFormation stack names as parameter inputs. For more information, see Override parameters on stack instances.

If you haven’t created the stack instances for individual accounts, use the create-stack-instances AWS CLI command and pass the parameter overrides. If you have already created stack instances, update the existing stack instances using update-stack-instances and pass the parameter overrides. Replace the required account number, Regions, and stack names as needed.

In account 111111111111, create instances with the parameter override using the following command, where the CloudFormation stacks STACK-NAME-1 and STACK-NAME-2 belong to this account in the us-east-1 Region:

aws cloudformation create-stack-instances \
  --stack-set-name EnableDevOpsGuruForAccount \
  --accounts '["111111111111"]' \
  --parameter-overrides ParameterKey=CfnStackNames,ParameterValue=\"<STACK-NAME-1>,<STACK-NAME-2>\" \
  --regions '["us-east-1"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1

If the stack instances already exist, update them instead with the following command:

aws cloudformation update-stack-instances \
  --stack-set-name EnableDevOpsGuruForAccount \
  --accounts '["111111111111"]' \
  --parameter-overrides ParameterKey=CfnStackNames,ParameterValue=\"<STACK-NAME-1>,<STACK-NAME-2>\" \
  --regions '["us-east-1"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1

In account 222222222222, create instances with the parameter override with the following command, where CloudFormation stacks STACK-NAME-A and STACK-NAME-B belong to this account in the us-east-1 Region:

aws cloudformation create-stack-instances \
  --stack-set-name EnableDevOpsGuruForAccount \
  --accounts '["222222222222"]' \
  --parameter-overrides ParameterKey=CfnStackNames,ParameterValue=\"<STACK-NAME-A>,<STACK-NAME-B>\" \
  --regions '["us-east-1"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1

If the stack instances already exist, update them instead with the following command:

aws cloudformation update-stack-instances \
  --stack-set-name EnableDevOpsGuruForAccount \
  --accounts '["222222222222"]' \
  --parameter-overrides ParameterKey=CfnStackNames,ParameterValue=\"<STACK-NAME-A>,<STACK-NAME-B>\" \
  --regions '["us-east-1"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1
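The choice between create-stack-instances and update-stack-instances depends on whether an instance already exists for the account and Region. As a small sketch of that decision, the hypothetical helper choose_instance_command below reads existing "ACCOUNT REGION" pairs on stdin (for example, from list-stack-instances text output) and prints the subcommand to use. The helper name and input format are assumptions for illustration.

```shell
# Hypothetical helper: decide which AWS CLI subcommand applies for a given
# account and Region, based on existing "ACCOUNT REGION" pairs read from stdin.
#   $1 = account ID, $2 = Region
choose_instance_command() {
  # Appending "" forces string comparison so account IDs with leading zeros
  # are not compared as numbers.
  if awk -v a="$1" -v r="$2" \
       '($1 "") == (a "") && ($2 "") == (r "") { found = 1 } END { exit !found }'; then
    echo "update-stack-instances"
  else
    echo "create-stack-instances"
  fi
}

# Offline example: only account 111111111111 has an instance in us-east-1.
printf '111111111111 us-east-1\n' | choose_instance_command 111111111111 us-east-1
# Prints: update-stack-instances
printf '111111111111 us-east-1\n' | choose_instance_command 222222222222 us-east-1
# Prints: create-stack-instances
```

The printed subcommand can then be used with the same --parameter-overrides arguments shown in the commands above.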

The following screenshot of the DevOps Guru console shows that DevOps Guru is enabled for only two CloudFormation stacks.

Figure: Screenshot of DevOps Guru dashboard with DevOps Guru enabled for two CloudFormation stacks

(iii) Enabling DevOps Guru for all resources in an organization

Note: Carry out the following steps in your primary AWS account

If you’re using AWS Organizations, you can take advantage of the AWS CloudFormation StackSets feature support for Organizations. This way, you don’t need to add or remove stacks when you add or remove accounts from the organization. For more information, see New: Use AWS CloudFormation StackSets for Multiple Accounts in an AWS Organization.

The following example shows the command line using multiple Regions to demonstrate the use. Update the OU as needed. If you need to use additional Regions, you may have to create an SNS topic in those Regions too.

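To create a stack set for an OU and across multiple Regions, enter the following command. If you already created a stack set named EnableDevOpsGuruForAccount in use case (i), delete it first or use a different name here, because a stack set name can't be reused within the same account and Region.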

aws cloudformation create-stack-set \
  --stack-set-name EnableDevOpsGuruForAccount \
  --template-body file:///$PWD/EnableDevOpsGuruForAccount.yml \
  --parameters "ParameterKey=CfnStackNames,ParameterValue=*" \
    ParameterKey=SnsTopicName,ParameterValue=devops-guru \
  --region us-east-1 \
  --permission-model SERVICE_MANAGED \
  --auto-deployment Enabled=true,RetainStacksOnAccountRemoval=true

Instantiate AWS CloudFormation StackSets instances for an OU and across multiple Regions with the following command:

aws cloudformation create-stack-instances \
  --stack-set-name EnableDevOpsGuruForAccount \
  --deployment-targets OrganizationalUnitIds='["<organizational-unit-id>"]' \
  --regions '["us-east-1","us-east-2"]' \
  --operation-preferences FailureToleranceCount=0,MaxConcurrentCount=1

In this way, you can run CloudFormation StackSets to enable and configure DevOps Guru across multiple accounts and Regions in a few simple steps.

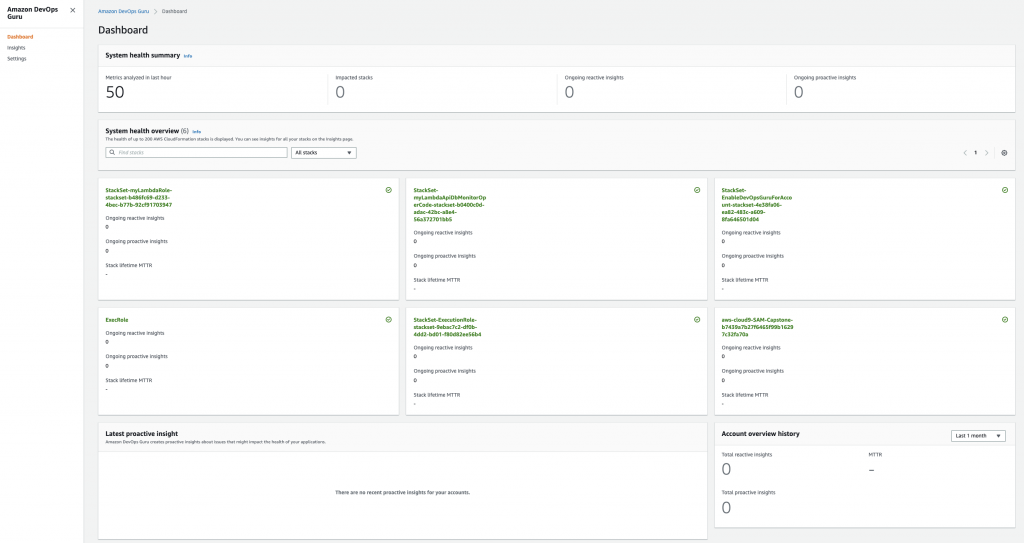

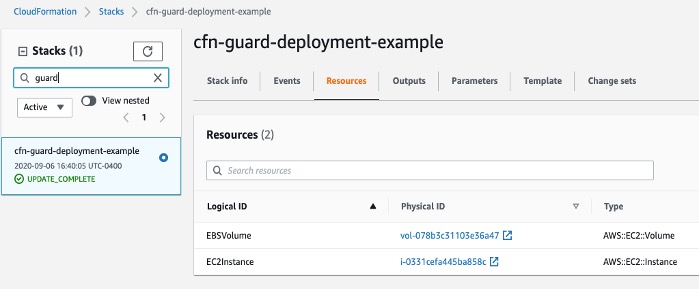

Reviewing DevOps Guru insights

Amazon DevOps Guru monitors for anomalies in the resources in the CloudFormation stacks that are enabled for monitoring. The following screenshot shows the initial dashboard.

Figure: Screenshot of DevOps Guru dashboard

After you enable DevOps Guru, it may take up to 24 hours to analyze the resources and baseline their normal behavior. When it detects an anomaly, it highlights the impacted CloudFormation stack, logs insights that provide details about the metrics indicating the anomaly, and provides actionable recommendations to mitigate it.

Figure: Screenshot of DevOps Guru dashboard showing ongoing reactive insight

The following screenshot shows an example of an insight (which now has been resolved) that was generated for the increased latency for an ELB. The insight provides various sections in which it provides details about the metrics, the graphed anomaly along with the time duration, potential related events, and recommendations to mitigate and implement preventive measures.

Figure: Screenshot for an Insight generated about ELB Latency

Cleaning up

When you’re finished walking through this post, you should clean up or un-provision the resources to avoid incurring any further charges.

- On the AWS CloudFormation StackSets console, choose the stack set to delete.

- On the Actions menu, choose Delete stacks from StackSets.

- After you delete the stacks from individual accounts, delete the stack set by choosing Delete StackSet.

- Un-provision the environment for AWS Cloud9.
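If you prefer the AWS CLI over the console for the stack set cleanup, the steps above roughly correspond to delete-stack-instances followed by delete-stack-set. The following sketch only prints the commands for review rather than running them; print_cleanup_commands is a hypothetical helper, and it assumes a self-managed stack set created in us-east-1 as in this post.

```shell
# Hypothetical dry-run helper: print the AWS CLI commands that remove the
# stack instances and then the stack set itself. Nothing is executed.
#   $1 = stack set name, $2 = JSON list of accounts, $3 = JSON list of Regions
print_cleanup_commands() (
  stack_set="$1"; accounts="$2"; regions="$3"
  # Step 1: delete the stack instances (and their stacks) from each account and Region.
  printf "aws cloudformation delete-stack-instances --stack-set-name %s --accounts '%s' --regions '%s' --no-retain-stacks --region us-east-1\n" \
    "$stack_set" "$accounts" "$regions"
  # Step 2: delete the now-empty stack set. Run this only after step 1 has completed.
  printf "aws cloudformation delete-stack-set --stack-set-name %s --region us-east-1\n" \
    "$stack_set"
)

print_cleanup_commands EnableDevOpsGuruForAccount \
  '["111111111111","222222222222"]' '["us-east-1","us-east-2"]'
```

The same pattern would apply to the CreateDevOpsGuruTopic stack set. Because delete-stack-instances runs asynchronously, wait for that operation to finish before deleting the stack set.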

Conclusion

This post reviewed how to enable Amazon DevOps Guru using AWS CloudFormation StackSets across multiple AWS accounts or organizations to monitor the resources in existing CloudFormation stacks. Upon detecting an anomaly, DevOps Guru generates an insight that includes the vended CloudWatch metric, the CloudFormation stack that contains the affected resource, and actionable recommendations.

We hope this post was useful to you to onboard DevOps Guru and that you try using it for your production needs.

About the Authors

Nikunj Vaidya is a Sr. Solutions Architect with Amazon Web Services, focusing in the area of DevOps services. He builds technical content for the field enablement and offers technical guidance to the customers on AWS DevOps solutions and services that would streamline the application development process, accelerate application delivery, and enable maintaining a high bar of software quality.

Nuatu Tseggai is a Cloud Infrastructure Architect at Amazon Web Services. He enjoys working with customers to design and build event-driven distributed systems that span multiple services.


Mike is a Principal Solutions Architect with the Startup Team at Amazon Web Services. He is a former founder, current mentor, and enjoys helping startups live their best cloud life.


Sean is a Senior Startup Solutions Architect at AWS. Before AWS, he was Director of Scientific Computing at the Howard Hughes Medical Institute.