Post Syndicated from Michael Kammer original https://blog.zabbix.com/what-is-network-monitoring-everything-you-need-to-know/26539/

Your company’s network is the glue that bonds your enterprise together. Networking technology is growing more stable and reliable all the time, but that doesn’t mean you can leave your network unattended – quality network monitoring is an absolute must-have.

What are network monitoring systems?

At its most basic, network monitoring is a critical IT process where all networking components (as well as key performance indicators like network hardware CPU utilization and network bandwidth) are continuously and proactively monitored to improve performance, eliminate bottlenecks, and prevent network congestion and downtime.

Put more simply, it’s the act of keeping an eye on all the connected elements that are relevant to your business. That means all your hardware and software resources, including routers, switches, firewalls, servers, PCs, printers, phones, and tablets.

A network monitoring system is a set of software tools that lets you automate this task. It allows you to constantly monitor your network infrastructure by running systematic tests to look for issues and notifying you if any are found. A good system makes monitoring your network easy by:

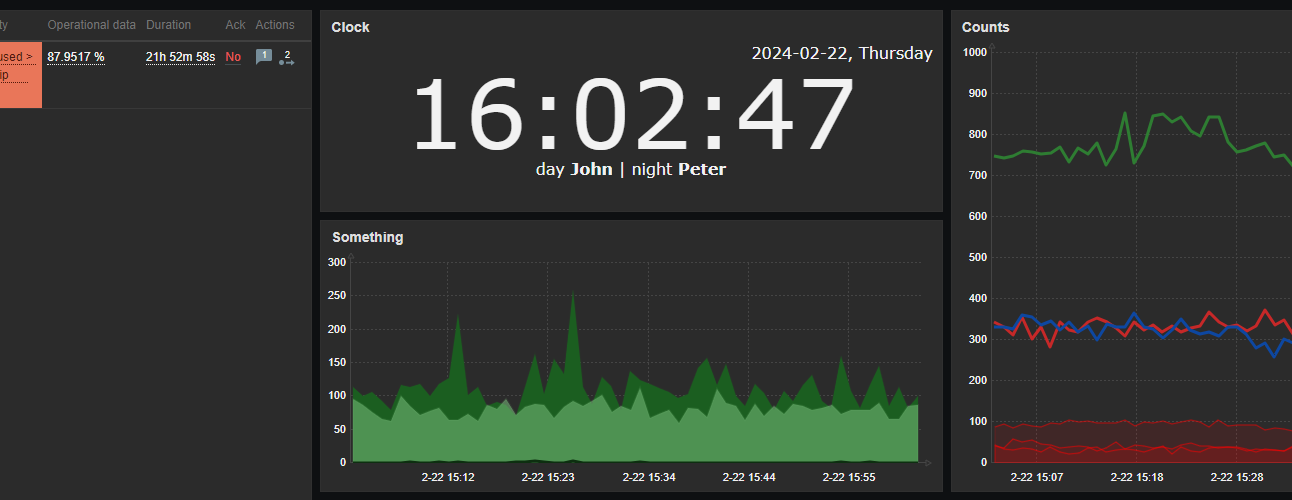

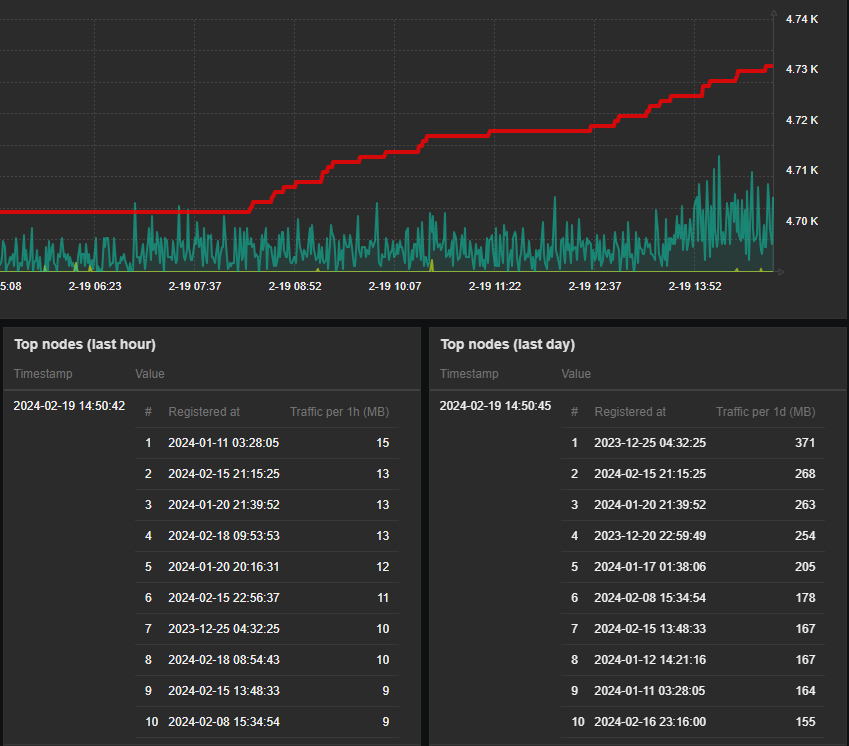

- Allowing you to see all information in dashboards

- Generating reports on demand

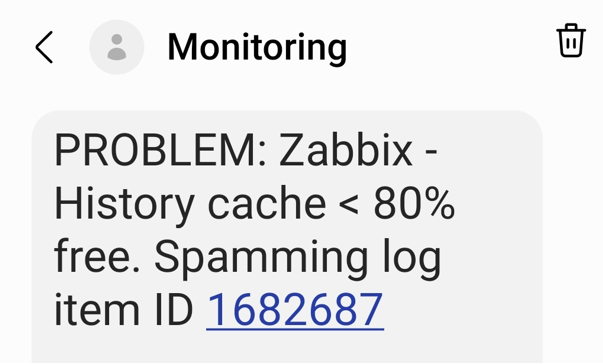

- Sending alerts

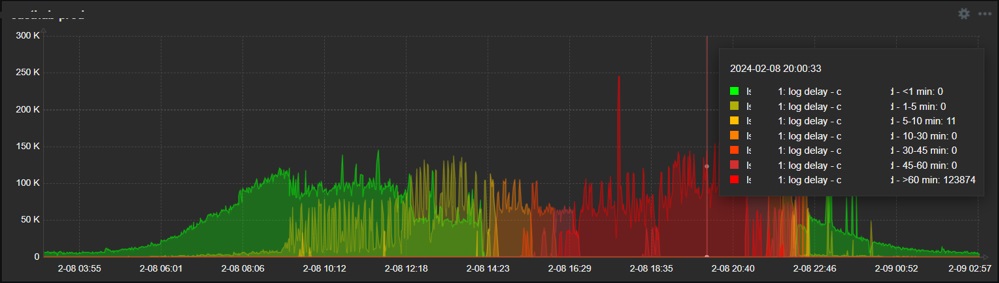

- Displaying the monitoring data you need in easy-to-read graphs

What are some key benefits of network monitoring?

A quality network monitoring solution allows you to:

Benchmark everyday performance

Monitoring gives you the visibility to benchmark your network’s everyday performance. It also makes it easy to spot any fluctuations in performance, which in turn allows you to identify any unwanted changes.
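As a rough illustration of spotting fluctuations against a benchmark, here is a minimal Python sketch. It assumes you already have a list of "normal" latency readings to use as the baseline. The function name `find_fluctuations`, the three-standard-deviation cutoff, and the sample numbers are all hypothetical choices for this example, not something a particular monitoring product prescribes.

```python
from statistics import mean, stdev


def find_fluctuations(baseline, recent, max_sigma=3.0):
    """Return the recent samples that sit more than max_sigma
    standard deviations away from the baseline average."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is a fluctuation.
        return [x for x in recent if x != mu]
    return [x for x in recent if abs(x - mu) / sigma > max_sigma]


# Hypothetical latency samples in milliseconds.
baseline_ms = [20.0, 22.0, 19.0, 21.0, 20.0, 23.0, 18.0, 21.0]
recent_ms = [21.0, 19.5, 48.0, 22.0]

print(find_fluctuations(baseline_ms, recent_ms))  # [48.0]
```

A fixed cutoff like this is only a starting point. Real networks often have daily or weekly patterns, so a baseline built per time of day would likely behave better.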

Effectively allocate resources

IT teams need a clear understanding of the source of problems. They also need the ability to minimize tedious troubleshooting and put in place proactive measures to stay ahead of IT outages. To use a plumbing analogy, monitoring lets them fix cracks before a leak happens.

Identify security threats

Preventing security breaches is a major challenge for any organization. As attacks become increasingly sophisticated and difficult to trace, detecting and mitigating any form of network threat before it escalates is critical. Network monitoring makes it easier to protect data and systems by providing early warning of any suspicious anomalies.

Manage a changing IT environment

New technologies like internet-enabled sensors, wireless devices, and cloud technologies make it harder for IT teams to track performance fluctuations or suspicious activity. A network monitoring solution can:

- Give IT teams a comprehensive inventory of wired and wireless devices

- Make it easy to analyze long-term trends

- Help you get the most out of your available assets

Proactively detect and resolve issues before they affect users

Monitoring a network closely allows an organization to quickly resolve issues and prevent major disruptions. This means fewer interruptions to operations and better utilization of IT resources.

Deploy new technology and system upgrades successfully

Thanks to monitoring, IT teams can learn how equipment has performed over time and use trend analysis to see whether current technology can scale to meet business needs. This can:

- Give a clear picture of whether a network is able to support the launch of a new technology

- Mitigate any risks associated with a major change

- Easily demonstrate ROI by providing comprehensive metrics

What are some different types of network monitoring?

Different types of monitoring exist depending on what exactly needs to be monitored. Some of the most common include fault monitoring, log monitoring, network performance monitoring, configuration monitoring, and availability monitoring.

Fault monitoring

As the name suggests, fault monitoring involves finding and reporting faults in a computer network. It is crucial for maintaining uninterrupted network uptime and is essential to keeping all programs and services running smoothly.

Log monitoring

Resources such as servers, applications, and websites continuously generate logs. Monitoring those logs can:

- Provide valuable insights into user activity

- Help a business comply with regulations

- Help resolve incidents promptly

- Boost network security

Network performance monitoring

Network performance monitoring (NPM) tracks parameters like latency, network traffic, bandwidth usage, and throughput, with the goal of optimizing user experience. NPM tools provide valuable information that can be used to minimize downtime and troubleshoot network issues.

Configuration monitoring

Monitoring network configuration involves keeping track of the software and firmware in use on the network and making sure that any inconsistencies are identified and addressed. This prevents any gaps in visibility or security.

Network availability monitoring

Availability monitoring is the monitoring of all IT infrastructure to determine the uptime of devices. By consistently monitoring devices and servers, organizations can receive alerts when there is a network crash or when a device becomes unavailable. ICMP, SNMP, and Syslog are the most commonly used availability monitoring techniques.
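To make "uptime" concrete, here is a small Python sketch that turns a series of poll results into an availability percentage. It assumes each poll has already been recorded as `True` (the device answered) or `False` (it did not). The function name and the one-minute polling schedule are hypothetical.

```python
def availability_percent(checks):
    """Share of polls in which the device answered, as a percentage."""
    if not checks:
        raise ValueError("no checks recorded")
    return 100.0 * sum(checks) / len(checks)


# Hypothetical day of one-minute polls: 1440 checks, 3 of them failed.
polls = [True] * 1437 + [False] * 3

print(f"{availability_percent(polls):.2f}%")  # 99.79%
```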

How does it work?

Network monitoring uses multiple techniques to test the availability and functionality of a network. Here are a few of the most common techniques used to collect data for monitoring software:

Ping

A ping is the simplest technique that monitoring software uses to test hosts within a network. The monitoring system sends out a signal (an ICMP echo request) and records:

- Whether the signal was received

- How long the signal took to reach the host and return

- Whether any signal data was lost

That data is then used to determine:

- Whether the host is active

- How efficient the host is

- The transmission time and packet loss experienced when communicating with the host

- Any other vital information
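The way raw ping results turn into those conclusions can be sketched in a few lines of Python. This example does not send any packets. It assumes the round-trip times have already been collected, with `None` standing in for a probe that got no reply. The function name `summarize_ping` and the sample values are made up for illustration.

```python
from typing import Optional, Sequence


def summarize_ping(rtts_ms: Sequence[Optional[float]]) -> dict:
    """Summarize probe results; None marks a probe with no reply."""
    if not rtts_ms:
        raise ValueError("at least one probe is required")
    replies = [r for r in rtts_ms if r is not None]
    sent = len(rtts_ms)
    return {
        # The host counts as active if at least one probe was answered.
        "host_up": bool(replies),
        "packet_loss_pct": 100.0 * (sent - len(replies)) / sent,
        "avg_rtt_ms": sum(replies) / len(replies) if replies else None,
    }


# Hypothetical results of four probes; the third one timed out.
probes = [12.0, 14.0, None, 16.0]
summary = summarize_ping(probes)

print(summary)
# {'host_up': True, 'packet_loss_pct': 25.0, 'avg_rtt_ms': 14.0}
```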

Simple network management protocol (SNMP)

SNMP is the most widely used protocol for modern network management systems. Each monitored device runs an SNMP agent, a small piece of software that reports information about the device’s performance to the monitoring solution. The monitoring solution collects this information in a database and then analyzes it for errors.
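One common piece of that analysis is turning raw SNMP counters into a bandwidth figure. The Python sketch below assumes two readings of an interface's `ifInOctets` counter (a 32-bit counter from the standard IF-MIB) and its `ifSpeed` value in bits per second have already been fetched by whatever SNMP library you use. The polling itself is not shown, and the function name and sample numbers are hypothetical.

```python
COUNTER32_MAX = 2**32  # ifInOctets is a 32-bit counter that wraps to zero


def utilization_percent(prev_octets, curr_octets, interval_s, if_speed_bps):
    """Inbound link utilization between two counter readings."""
    # The modulo handles a single counter wrap between the two polls.
    delta_octets = (curr_octets - prev_octets) % COUNTER32_MAX
    bits_per_second = delta_octets * 8 / interval_s
    return 100.0 * bits_per_second / if_speed_bps


# Hypothetical readings taken 60 seconds apart on a 100 Mbit/s link.
usage = utilization_percent(
    prev_octets=1_000_000,
    curr_octets=76_000_000,
    interval_s=60,
    if_speed_bps=100_000_000,
)

print(f"{usage:.1f}%")  # 10.0%
```

On fast links a 32-bit counter can wrap more than once between polls, which this sketch cannot detect. Shorter polling intervals or the 64-bit `ifHCInOctets` counter are the usual ways around that.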

Syslog

Syslog is an automated messaging system that sends messages when an event affects a network device. Technicians can set up devices to send out messages when the device encounters an error, shuts down unexpectedly, encounters a configuration failure, and more. These messages often contain information that can be used for system management as well as security systems.
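Every syslog message starts with a priority value in angle brackets that encodes both where the message came from (the facility) and how serious it is (the severity). The Python sketch below decodes that value using the standard rule of priority = facility × 8 + severity. The sample message, including the device name, is invented for illustration.

```python
import re

# Severity names in order of their numeric codes, 0 through 7.
SEVERITIES = [
    "emergency", "alert", "critical", "error",
    "warning", "notice", "informational", "debug",
]


def parse_priority(message: str):
    """Return (facility number, severity name) from a syslog line."""
    match = re.match(r"<(\d{1,3})>", message)
    if match is None:
        raise ValueError("message does not start with a <PRI> field")
    facility, severity = divmod(int(match.group(1)), 8)
    if facility > 23:
        raise ValueError("priority value out of range")
    return facility, SEVERITIES[severity]


# Hypothetical message from a switch reporting a link going down.
sample = "<187>Oct 11 22:14:15 switch01 Interface Gi0/1 changed state to down"

print(parse_priority(sample))  # (23, 'error')
```

Facility 23 is `local7`, which many network devices use by default. A monitoring system could use the severity to decide which messages deserve an alert.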

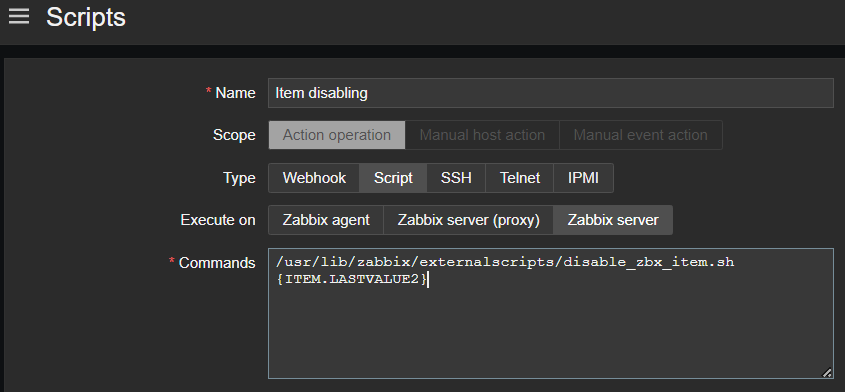

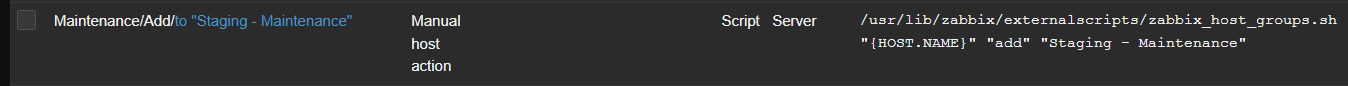

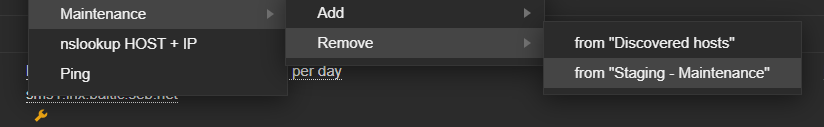

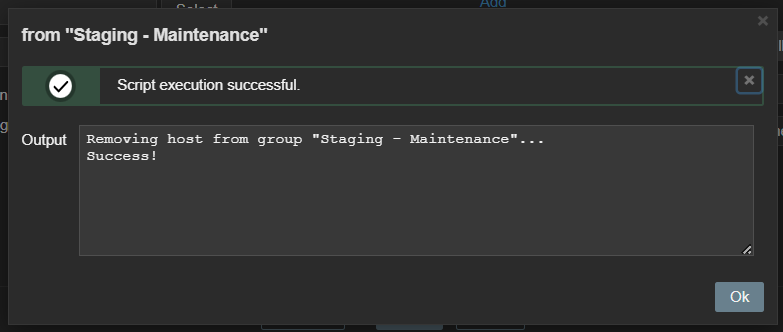

Scripts

Scripts are simple programs that collect basic information and instruct the network to perform an action under certain conditions. They can fill gaps in monitoring software functionality, performing scheduled tasks such as resetting and reconfiguring a public access computer every night.

Scripts can also be used to collect data, sending out an alert if results don’t fall within certain thresholds. Network managers will usually set these thresholds, programming the network software to send out an alert if data indicates issues, including:

- Slow throughput

- High error rates

- Unavailable devices

- Slower-than-usual response times
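A threshold check of this kind can be sketched in Python as follows. The metric names, the limits, and the `check_thresholds` function are all hypothetical. In practice the thresholds would come from your network manager and the readings from your monitoring data.

```python
# Hypothetical thresholds: "min" means alert when the value drops below
# the limit, "max" means alert when it rises above the limit.
THRESHOLDS = {
    "throughput_mbps": ("min", 50.0),
    "error_rate_pct": ("max", 1.0),
    "response_time_ms": ("max", 200.0),
}


def check_thresholds(metrics, thresholds=THRESHOLDS):
    """Return one alert string per metric that is missing or out of range."""
    alerts = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            alerts.append(f"{name}: no data (device may be unavailable)")
        elif kind == "min" and value < limit:
            alerts.append(f"{name}: {value} is below {limit}")
        elif kind == "max" and value > limit:
            alerts.append(f"{name}: {value} is above {limit}")
    return alerts


# Hypothetical readings: slow throughput and no response time reported.
readings = {"throughput_mbps": 32.5, "error_rate_pct": 0.2}

for alert in check_thresholds(readings):
    print(alert)
# throughput_mbps: 32.5 is below 50.0
# response_time_ms: no data (device may be unavailable)
```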

How can businesses benefit from network monitoring?

Here are 5 ways that quality network monitoring can benefit any business:

Increased reliability

The main function of any monitoring solution is to show whether a device is working or not. A proactive approach to maintaining a healthy network will keep tech support requests and downtime to an absolute minimum.

Improved visibility

Having complete visibility of all your hardware and software assets allows you to easily monitor the health of your network. Monitoring tracks the data moving along cables and through servers, switches, connections, and routers. In the event of a problem, your IT team can identify the root cause and fix the issue quickly.

Better resource utilization

Network monitoring software lets you know which parts of your network are being properly used, overused, or underused. You can also uncover unnecessary costs that can be eliminated or identify a network component that needs upgrading.

Stricter compliance

Today’s IT teams need to meet strict regulatory and protection standards in increasingly complex networks. The latest compliance guidelines recommend actively watching for changes in normal system behavior and unusual data flow. The data provided by monitoring tools makes it easy to assess your entire system and deliver a service that meets all required standards.

Greater profitability

Network monitoring makes businesses more productive by saving network management time and lowering operating costs. If your team is aware of current and impending issues, you can reduce downtime and increase productivity and efficiency.

The Zabbix advantage

At Zabbix, we’ve perfected enterprise IT infrastructure monitoring software that can be deployed anywhere and monitor any device, system, or app in any environment while providing comprehensive data protection, easy integration, and unlimited visualization options.

You can also count on complete transparency, a predictable release cycle, a vibrant and active user community, and an outstanding user experience.

Everything we do scales easily, so we’re able to grow right along with you. What’s more, we offer a comprehensive range of professional services, including implementation, integration, custom development, consulting services, technical support, and a full suite of training programs.

The best part? Because Zabbix is open-source, it’s not just affordable – it’s free. Get in touch with us to find out more and get started on the path to maximum network efficiency today.

FAQ

What is an example of basic network monitoring?

An example of basic network monitoring is a network engineer collecting real-time data from a data center and setting up alerts when a problem (such as a device failure, a temperature spike, a power outage, or a network capacity issue) appears.

What is network monitoring used for?

Network monitoring can:

- Determine whether a network is running optimally in real time

- Proactively identify deficiencies and optimize efficiency

- Catch and repair problems before they impact operations

- Reduce downtime and make sure employees have access to the resources they need

- Boost the availability of APIs and webpages

- Optimize network performance and availability

What is the most popular network monitoring program?

Some of the most popular network monitoring programs available on the market include:

- Zabbix

- SolarWinds Network Performance Monitor

- Auvik

- Datadog

- ManageEngine OpManager

- Site24x7

- Checkmk

- Progress WhatsUp Gold

- Microsoft Resource Monitor

- Wireshark

- Nagios

- Ntop

- Cacti

- FreeNATS

- Icinga

What are the key steps in network monitoring?

A network monitoring process includes all phases involved in executing efficient network monitoring. These phases include:

- Locating all key network components

- Actively monitoring the components

- Creating alerts for component health and metrics

- Making a plan for managing issues

- Analyzing generated reports

- Adjusting the process as necessary

The post What is Network Monitoring? Everything You Need to Know appeared first on Zabbix Blog.