Post Syndicated from Sushanth Kothapally original https://aws.amazon.com/blogs/big-data/scale-aws-glue-jobs-by-optimizing-ip-address-consumption-and-expanding-network-capacity-using-a-private-nat-gateway/

As businesses expand, the demand for IP addresses within the corporate network often exceeds the supply. An organization’s network is often designed with some anticipation of future requirements, but as enterprises evolve, their information technology (IT) needs surpass the previously designed network. Companies may find themselves challenged to manage the limited pool of IP addresses.

When AWS Glue runs data engineering workloads in such a constrained network, your team may struggle to run many jobs simultaneously because there are not enough IP addresses to support the required connections to databases. To overcome this shortage, the team can obtain more IP addresses from your corporate network pool. These IP addresses can be unique (non-overlapping) or overlapping, meaning they are reused elsewhere in your corporate network.

When you use overlapping IP addresses, you need additional network management to establish connectivity. Networking solutions can include options like private Network Address Translation (NAT) gateways, AWS PrivateLink, or self-managed NAT appliances to translate IP addresses.

In this post, we will discuss two strategies to scale AWS Glue jobs:

- Optimizing the IP address consumption by right-sizing Data Processing Units (DPUs), using the Auto Scaling feature of AWS Glue, and fine-tuning of the jobs.

- Expanding the network capacity using additional non-routable Classless Inter-Domain Routing (CIDR) range with a private NAT gateway.

Before we dive deep into these solutions, let us understand how AWS Glue uses elastic network interfaces (ENIs) to establish connectivity. To enable access to data stores inside a VPC, you need to create an AWS Glue connection that is attached to your VPC. When an AWS Glue job runs in your VPC, the job creates an ENI inside the configured VPC for each data connection, and that ENI uses an IP address in the specified VPC. These ENIs are short-lived and remain active only until the job is complete.

Now let us look at the first solution that explains optimizing the AWS Glue IP address consumption.

Strategies for efficient IP address consumption

In AWS Glue, the number of workers a job uses determines the count of IP addresses used from your VPC subnet. This is because each worker requires one IP address that maps to one ENI. When you don’t have enough CIDR range allocated to the AWS Glue subnet, you may observe IP address exhaustion errors. The following are some best practices to optimize AWS Glue IP address consumption:

- Right-sizing the job’s DPUs – AWS Glue is a distributed processing engine that works efficiently when it can run tasks in parallel. A job with more DPUs than it needs doesn’t necessarily run faster, so finding the right number of DPUs helps you use IP addresses optimally. By building observability into the system and analyzing job performance, you can get insights into ENI consumption trends and then configure the appropriate capacity on the job. For more details, refer to Monitoring for DPU capacity planning. The Spark UI is a helpful tool to monitor the worker usage of AWS Glue jobs. For more details, refer to Monitoring jobs using the Apache Spark web UI.

- AWS Glue Auto Scaling – It’s often difficult to predict a job’s capacity requirements upfront. Enabling the Auto Scaling feature of AWS Glue offloads some of this responsibility to AWS. At runtime, the job automatically scales worker nodes up to the defined maximum based on the workload requirements. If there is no additional need, AWS Glue does not overprovision workers, thereby saving resources and reducing cost. The Auto Scaling feature is available in AWS Glue 3.0 and later. For more information, refer to Introducing AWS Glue Auto Scaling: Automatically resize serverless computing resources for lower cost with optimized Apache Spark.

- Job-level optimization – Identify job-level optimizations by using AWS Glue job metrics, and apply best practices from Best practices for performance tuning AWS Glue for Apache Spark jobs.
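To make the relationship between workers and IP addresses concrete, the following minimal sketch estimates how many jobs of a given size could run at the same time in one subnet. It assumes one IP address per worker, as described above, and that the subnet is used only by AWS Glue. The function name and the example CIDR are illustrative and do not come from the solution itself.

```python
import ipaddress

# Amazon VPC reserves the first four addresses and the last address of every subnet.
AWS_RESERVED_IPS_PER_SUBNET = 5


def max_concurrent_jobs(subnet_cidr: str, workers_per_job: int) -> int:
    """Estimate how many jobs fit in a subnet, assuming one IP address per worker."""
    if workers_per_job < 1:
        raise ValueError("workers_per_job must be at least 1")
    subnet = ipaddress.ip_network(subnet_cidr)
    usable = max(subnet.num_addresses - AWS_RESERVED_IPS_PER_SUBNET, 0)
    return usable // workers_per_job


if __name__ == "__main__":
    # Hypothetical /24 subnet: 256 addresses, 251 of them usable.
    for workers in (10, 50, 100):
        jobs = max_concurrent_jobs("172.33.0.0/24", workers)
        print(f"{workers} workers per job -> {jobs} concurrent jobs")
```

Treat the result as an upper bound: any other resource that shares the subnet reduces the number of addresses left for AWS Glue workers.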

Next let us look at the second solution that elaborates network capacity expansion.

Solutions for network size (IP address) expansion

In this section, we will discuss two possible solutions to expand network size in more detail.

Expand VPC CIDR ranges with routable addresses

One solution is to add more private IPv4 CIDR ranges from RFC 1918 to your VPC. Theoretically, each AWS account can be assigned some or all of these IP address CIDRs. Your IP Address Management (IPAM) team often manages the allocation of RFC 1918 IP addresses that each business unit can use, to avoid overlapping IP addresses across multiple AWS accounts or business units. If the routable IP address quota currently allocated by the IPAM team is not sufficient, you can request more.

If your IPAM team issues you an additional non-overlapping CIDR range, then you can either add it as a secondary CIDR to your existing VPC or create a new VPC with it. If you are planning to create a new VPC, then you can inter-connect the VPCs via VPC peering or AWS Transit Gateway.

If this additional capacity is sufficient to run all your jobs within the defined timeframe, then it is a simple and cost-effective solution. Otherwise, you can consider adopting overlapping IP addresses with a private NAT gateway, as described in the following section. With that solution, you must use Transit Gateway to connect the VPCs, because VPC peering is not possible when the two VPCs have overlapping CIDR ranges.
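Before you add a new range as a secondary CIDR or peer two VPCs, it can help to confirm that the range really is non-overlapping. The following is a minimal sketch of such a check using Python's standard ipaddress module. The function name and the list of allocated ranges are hypothetical; in practice the list would come from your IPAM team.

```python
import ipaddress


def overlapping_ranges(candidate: str, existing: list[str]) -> list[str]:
    """Return the CIDR ranges in `existing` that overlap `candidate`."""
    new_net = ipaddress.ip_network(candidate)
    return [cidr for cidr in existing if new_net.overlaps(ipaddress.ip_network(cidr))]


if __name__ == "__main__":
    # Hypothetical ranges already allocated elsewhere in the corporate network.
    allocated = ["172.30.0.0/24", "172.33.0.0/24", "10.0.0.0/16"]
    for candidate in ("172.33.0.128/25", "172.34.0.0/24"):
        conflicts = overlapping_ranges(candidate, allocated)
        print(candidate, "conflicts with", conflicts if conflicts else "nothing")
```

An empty result means the candidate range can be treated as routable in this simplified model. A non-empty result points toward the private NAT gateway approach.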

Configure non-routable CIDR with a private NAT gateway

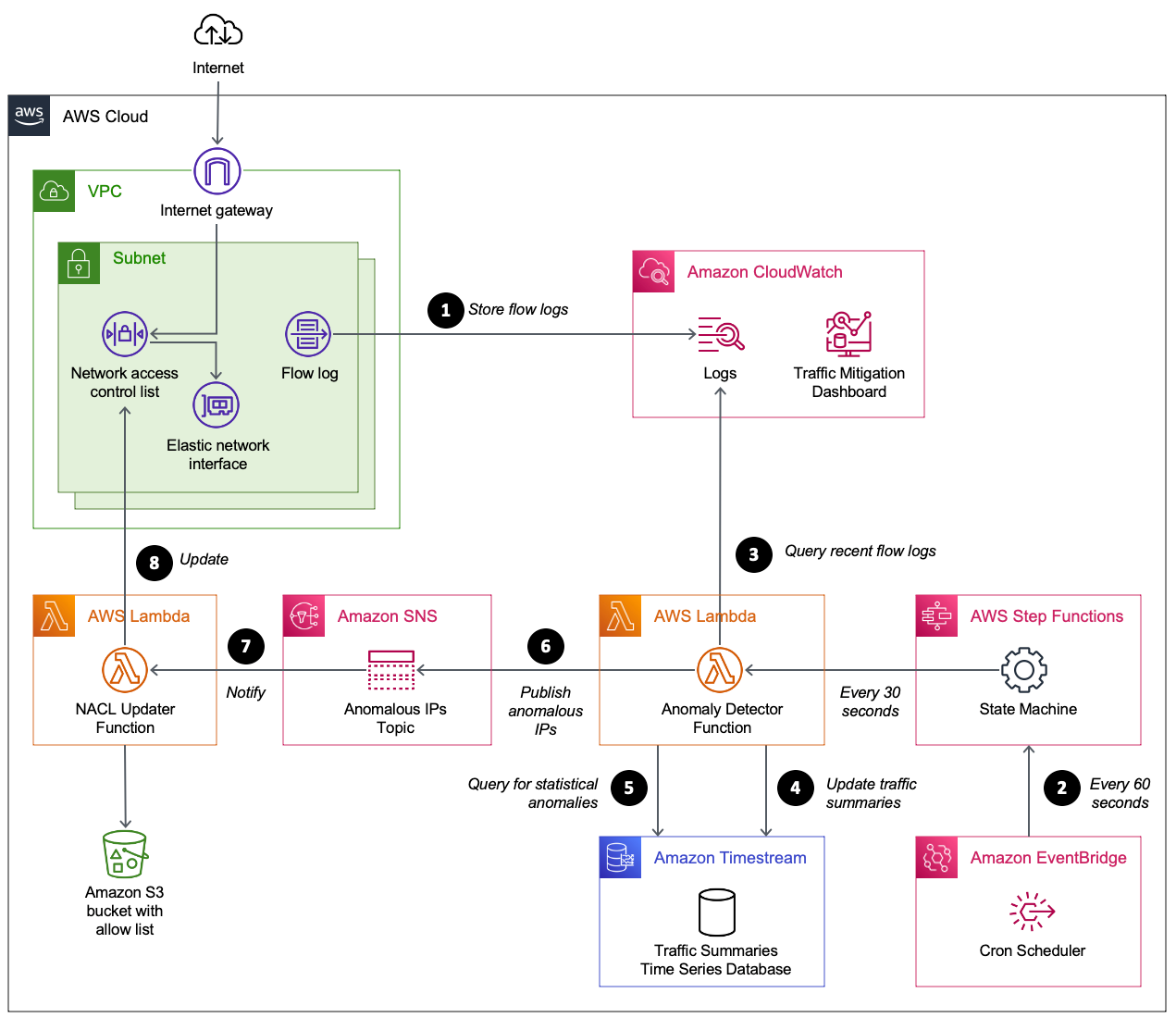

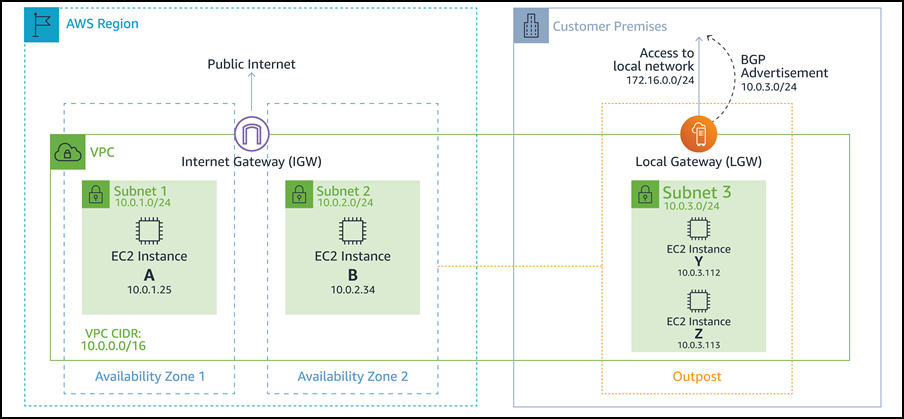

As described in the AWS whitepaper Building a Scalable and Secure Multi-VPC AWS Network Infrastructure, you can expand your network capacity by creating a non-routable IP address subnet and using a private NAT gateway that is located in a routable IP address space (non-overlapping) to route traffic. A private NAT gateway translates and routes traffic between non-routable IP addresses and routable IP addresses. The following diagram demonstrates the solution with reference to AWS Glue.

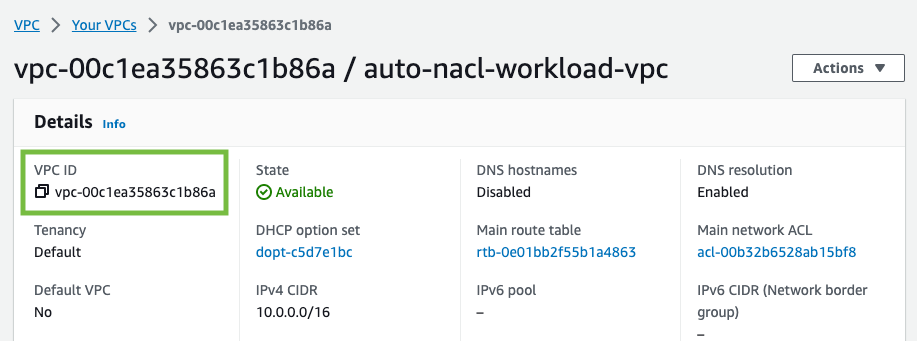

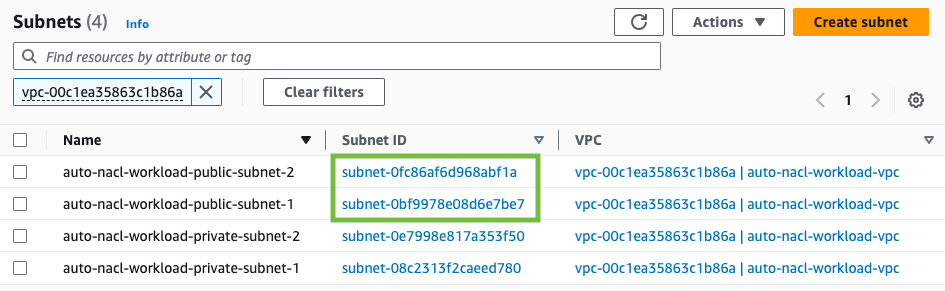

As you can see in the above diagram, VPC A (ETL) has two CIDR ranges attached. The smaller CIDR range 172.33.0.0/24 is routable because it is not reused anywhere, whereas the larger CIDR range 100.64.0.0/16 is non-routable because it is reused in the database VPC.

In VPC B (Database), we have hosted two databases in routable subnets 172.30.0.0/26 and 172.30.0.64/26. These two subnets are in two separate Availability Zones for high availability. We also have two additional unused subnets, 100.64.0.0/24 and 100.64.1.0/24, to simulate a non-routable setup.

You can choose the size of the non-routable CIDR range based on your capacity requirements. Since you can reuse IP addresses, you can create a very large subnet as needed. For example, a CIDR mask of /16 would give you approximately 65,000 IPv4 addresses. You can work with your network engineering team and size the subnets.
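As a rough aid for the sizing discussion, the sketch below picks the smallest subnet, expressed as a prefix length, that still holds a required number of usable addresses. It assumes the five addresses that Amazon VPC reserves in every subnet and the /16 to /28 limits on subnet size. The function name is illustrative.

```python
import ipaddress

AWS_RESERVED_IPS_PER_SUBNET = 5  # addresses Amazon VPC reserves in each subnet


def smallest_prefix_for(required_ips: int) -> int:
    """Return the prefix length of the smallest subnet with `required_ips` usable addresses."""
    if required_ips < 1:
        raise ValueError("required_ips must be at least 1")
    total = required_ips + AWS_RESERVED_IPS_PER_SUBNET
    host_bits = (total - 1).bit_length()  # smallest n such that 2**n >= total
    prefix = min(32 - host_bits, 28)  # Amazon VPC subnets can be no smaller than /28
    if prefix < 16:
        raise ValueError("a single Amazon VPC subnet cannot be larger than /16")
    return prefix


if __name__ == "__main__":
    print("Addresses in 100.64.0.0/16:", ipaddress.ip_network("100.64.0.0/16").num_addresses)
    for needed in (100, 1000, 20000):
        print(f"{needed} usable addresses -> /{smallest_prefix_for(needed)}")
```

The reserved-address count is why a /24 offers 251 usable addresses rather than 256, which matters more for small subnets than for a /16.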

In short, you can configure AWS Glue jobs to use both routable and non-routable subnets in your VPC to maximize the available IP address pool.

Now let us understand how Glue ENIs that are in a non-routable subnet communicate with data sources in another VPC.

The data flow for the use case demonstrated here is as follows (referring to the numbered steps in the figure above):

- When an AWS Glue job needs to access a data source, it first uses the AWS Glue connection on the job and creates the ENIs in the non-routable subnet 100.64.0.0/24 in VPC A. AWS Glue then uses the database connection configuration and attempts to connect to the database in VPC B (172.30.0.0/24).

- As per the route table VPCA-Non-Routable-RouteTable, the destination 172.30.0.0/24 is configured for a private NAT gateway. The request is sent to the NAT gateway, which then translates the source IP address from a non-routable IP address to a routable IP address. Traffic is then sent to the transit gateway attachment in VPC A because it’s associated with the VPCA-Routable-RouteTable route table in VPC A.

- Transit Gateway uses the 172.30.0.0/24 route and sends the traffic to the VPC B transit gateway attachment.

- The transit gateway ENI in VPC B uses VPC B’s local route to connect to the database endpoint and query the data.

- When the query is complete, the response is sent back to VPC A. The response traffic is routed to the transit gateway attachment in VPC B, then Transit Gateway uses the 172.33.0.0/24 route and sends traffic to the VPC A transit gateway attachment.

- The transit gateway ENI in VPC A uses the local route to forward the traffic to the private NAT gateway, which translates the destination IP address to that of the ENIs in the non-routable subnet.

- Finally, the AWS Glue job receives the data and continues processing.
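The routing decisions in the steps above can be modeled as a longest-prefix route lookup. The following is an illustrative simulation only, not the actual configuration deployed by the solution. The "local" entries, the target labels, the table name TransitGateway-RouteTable, and the two example IP addresses are assumptions added to keep the sketch self-contained.

```python
import ipaddress

# Route tables loosely modeled on the walkthrough above. The "local" entries and
# the target labels are assumptions added for illustration.
ROUTE_TABLES = {
    "VPCA-Non-Routable-RouteTable": {
        "100.64.0.0/16": "local",
        "172.33.0.0/24": "local",
        "172.30.0.0/24": "private-nat-gateway",
    },
    "VPCA-Routable-RouteTable": {
        "100.64.0.0/16": "local",
        "172.33.0.0/24": "local",
        "172.30.0.0/24": "transit-gateway",
    },
    "TransitGateway-RouteTable": {  # hypothetical name
        "172.30.0.0/24": "vpc-b-attachment",
        "172.33.0.0/24": "vpc-a-attachment",
    },
}


def next_hop(table_name: str, destination_ip: str) -> str:
    """Pick the most specific (longest-prefix) route that matches the destination."""
    destination = ipaddress.ip_address(destination_ip)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in ROUTE_TABLES[table_name].items()
        if destination in ipaddress.ip_network(cidr)
    ]
    if not matches:
        raise LookupError(f"no route to {destination_ip} in {table_name}")
    return max(matches, key=lambda match: match[0].prefixlen)[1]


if __name__ == "__main__":
    database_ip = "172.30.0.10"    # hypothetical database address in VPC B
    translated_ip = "172.33.0.20"  # hypothetical routable address of the NAT gateway
    print("Glue ENI subnet    ->", next_hop("VPCA-Non-Routable-RouteTable", database_ip))
    print("NAT gateway subnet ->", next_hop("VPCA-Routable-RouteTable", database_ip))
    print("Transit gateway    ->", next_hop("TransitGateway-RouteTable", database_ip))
    print("Return path        ->", next_hop("TransitGateway-RouteTable", translated_ip))
```

Because the transit gateway only ever sees the routable 172.33.0.0/24 source address, the overlapping 100.64.0.0/16 range never needs a route outside VPC A.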

The private NAT gateway solution is an option if you need extra IP addresses and can’t obtain them from a routable network in your organization. Each additional service can incur additional cost, a trade-off that may be necessary to meet your goals. Refer to the NAT Gateway pricing section on the Amazon VPC pricing page for more information.

Prerequisites

To complete the walk-through of the private NAT gateway solution, you need the following:

- An AWS account with a role that has sufficient access to provision the required resources.

- An AWS Identity and Access Management (IAM) user with access to AWS resources, including Amazon Virtual Private Cloud (Amazon VPC), Transit Gateway, a private NAT gateway, and an Amazon Relational Database Service (Amazon RDS) for MySQL database.

Deploy the solution

To implement the solution, complete the following steps:

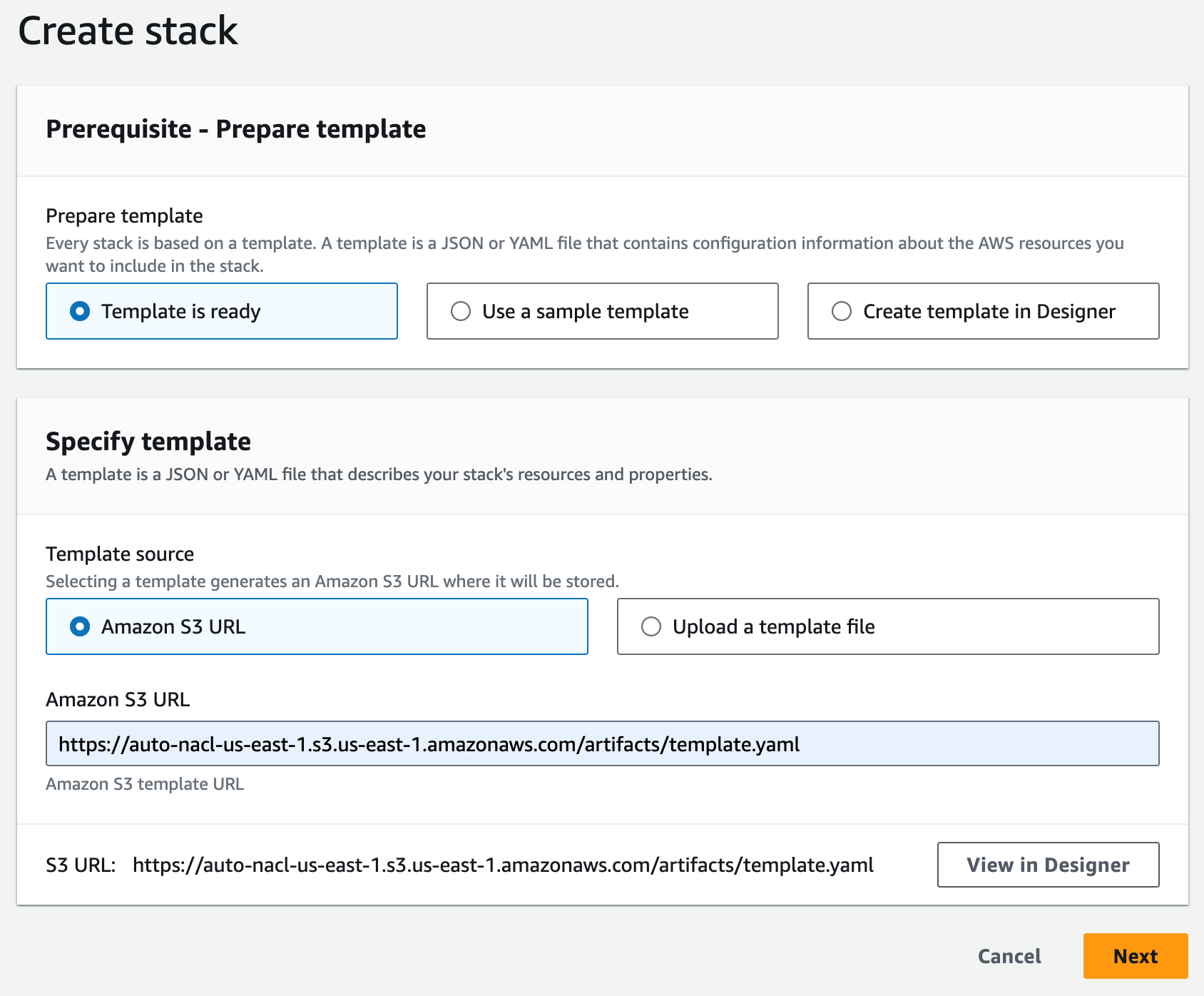

- Sign in to your AWS Management Console.

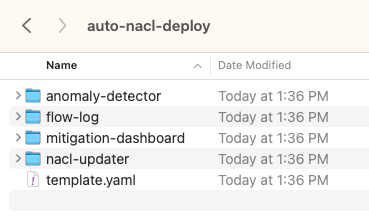

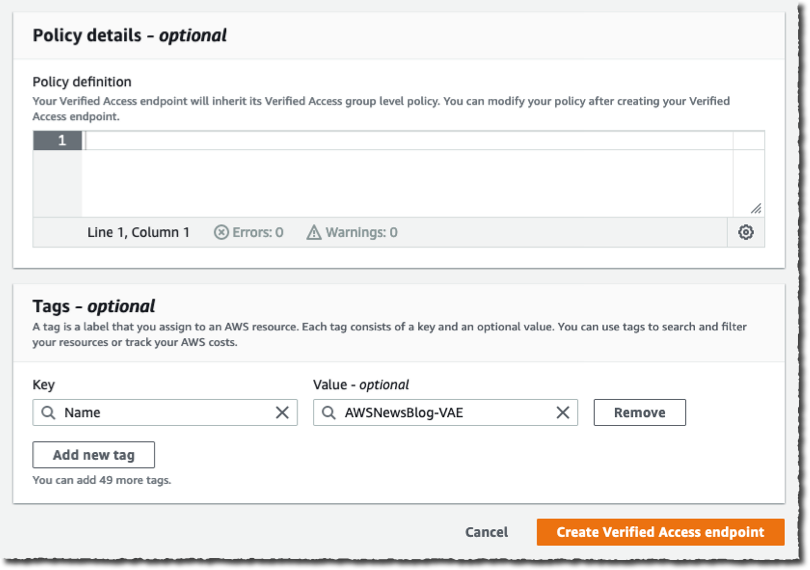

- Deploy the solution by clicking

. This stack defaults to

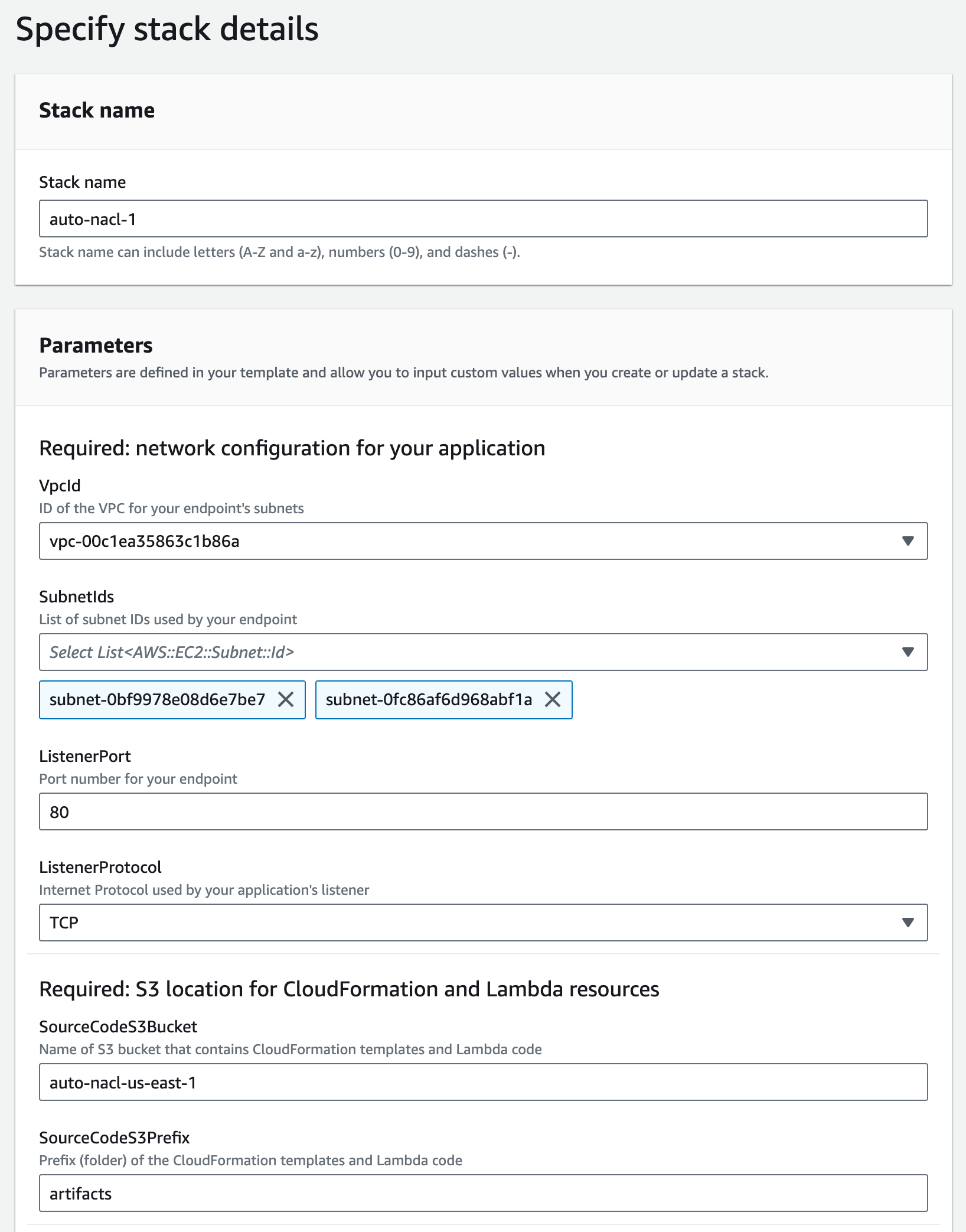

. This stack defaults to us-east-1, you can select your desired Region. - Click next and then specify the stack details. You can retain the input parameters to the prepopulated default values or change them as needed.

- For

DatabaseUserPassword, enter an alphanumeric password of your choice and ensure to note it down for further use. - For

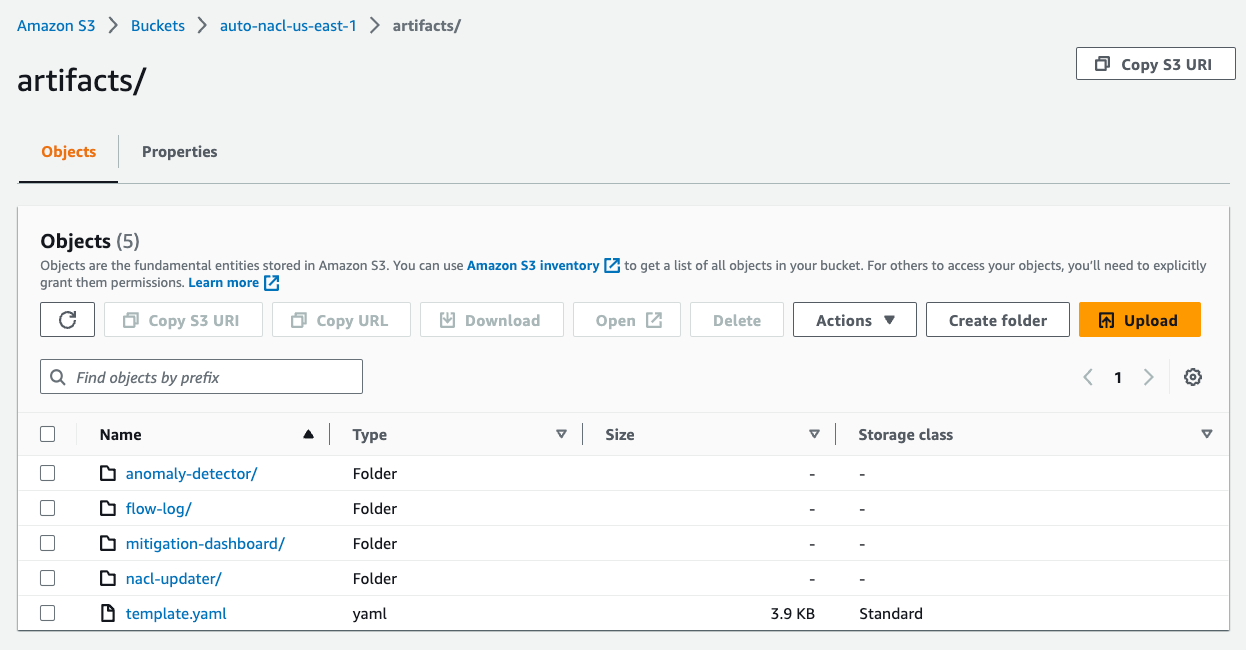

S3BucketName, enter a unique Amazon Simple Storage Service (Amazon S3) bucket name. This bucket stores the AWS Glue job script that will be copied from an AWS public code repository.

- Click next.

- Leave the default values and click next again.

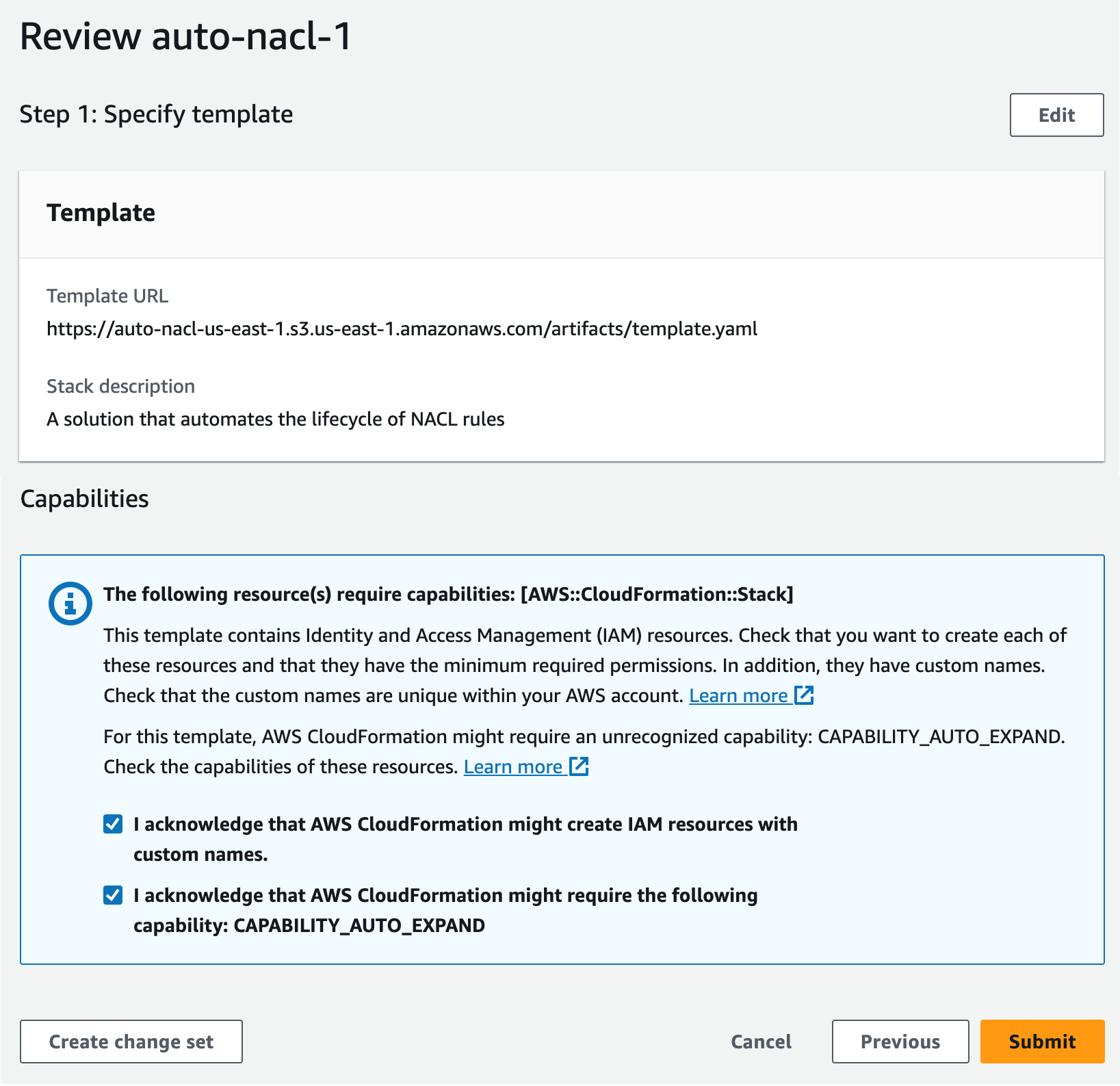

- Review the details, acknowledge the creation of IAM resources, and click submit to start the deployment.

You can monitor the events to see resources being created on the AWS CloudFormation console. It may take around 20 minutes for the stack resources to be created.

After the stack creation is complete, go to the Outputs tab on the AWS CloudFormation console and note the following values for later use:

- DBSource

- DBTarget

- SourceCrawler

- TargetCrawler
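If you prefer to read these values programmatically, a minimal sketch using boto3 might look like the following. It assumes boto3 is installed, AWS credentials and a Region are configured, and the stack is named AWSGluePrivateNATStack. The helper names are illustrative, and the sample values in the demo are placeholders rather than real outputs.

```python
def outputs_to_dict(stack_description: dict) -> dict:
    """Flatten a CloudFormation stack description's Outputs list into a key/value dict."""
    return {
        output["OutputKey"]: output["OutputValue"]
        for output in stack_description.get("Outputs", [])
    }


def fetch_stack_outputs(stack_name: str = "AWSGluePrivateNATStack") -> dict:
    """Look up the stack with boto3 and return its outputs (requires AWS credentials)."""
    import boto3  # imported lazily so the helper above works without boto3 installed

    cloudformation = boto3.client("cloudformation")
    stacks = cloudformation.describe_stacks(StackName=stack_name)["Stacks"]
    return outputs_to_dict(stacks[0])


if __name__ == "__main__":
    # Sample response fragment with placeholder values, not real stack outputs.
    sample = {
        "Outputs": [
            {"OutputKey": "DBSource", "OutputValue": "source.example.internal"},
            {"OutputKey": "SourceCrawler", "OutputValue": "example-source-crawler"},
        ]
    }
    print(outputs_to_dict(sample))
```

Calling fetch_stack_outputs() after the stack is created would return a dictionary keyed by the output names listed above.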

Connect to an AWS Cloud9 instance

Next, we need to prepare the source and target Amazon RDS for MySQL tables using an AWS Cloud9 instance. Complete the following steps:

- On the AWS Cloud9 console page, locate the aws-glue-cloud9 environment.

- In the Cloud9 IDE column, click on Open to launch your AWS Cloud9 instance in a new web browser.

Prepare the source MySQL table

Complete the following steps to prepare your source table:

- From the AWS Cloud9 terminal, install the MySQL client using the following command:

      sudo yum update -y && sudo yum install -y mysql

- Connect to the source database using the following command. Replace the source hostname with the DBSource value you captured earlier. When prompted, enter the database password that you specified during the stack creation.

      mysql -h <Source Hostname> -P 3306 -u admin -p

- Run the following scripts to create the source emp table and load the test data:

      -- connect to source database
      USE srcdb;

      -- Drop emp table if it exists
      DROP TABLE IF EXISTS emp;

      -- Create the emp table
      CREATE TABLE emp (
          empid INT AUTO_INCREMENT,
          ename VARCHAR(100) NOT NULL,
          edept VARCHAR(100) NOT NULL,
          PRIMARY KEY (empid)
      );

      -- Create a stored procedure to load sample records into emp table
      DELIMITER $$
      CREATE PROCEDURE sp_load_emp_source_data()
      BEGIN
          DECLARE empid INT;
          DECLARE ename VARCHAR(100);
          DECLARE edept VARCHAR(50);
          DECLARE cnt INT DEFAULT 1; -- Initialize counter to 1 to auto-increment the PK
          DECLARE rec_count INT DEFAULT 1000; -- Initialize sample records counter
          TRUNCATE TABLE emp; -- Truncate the emp table
          WHILE cnt <= rec_count DO -- Loop and load the required number of sample records
              SET ename = CONCAT('Employee_', FLOOR(RAND() * 100) + 1); -- Generate random employee name
              SET edept = CONCAT('Dept_', FLOOR(RAND() * 100) + 1); -- Generate random employee department
              -- Insert record with auto-incrementing empid
              INSERT INTO emp (ename, edept) VALUES (ename, edept);
              -- Increment counter for next record
              SET cnt = cnt + 1;
          END WHILE;
          COMMIT;
      END$$
      DELIMITER ;

      -- Call the above stored procedure to load sample records into emp table
      CALL sp_load_emp_source_data();

- Check the source emp table’s count using the following SQL query (you need this in a later step for verification):

      select count(*) from emp;

- Run the following command to exit from the MySQL client utility and return to the AWS Cloud9 instance’s terminal:

      quit;

Prepare the target MySQL table

Complete the following steps to prepare the target table:

- Connect to the target database using the following command. Replace the target hostname with the DBTarget value you captured earlier. When prompted, enter the database password that you specified during the stack creation.

      mysql -h <Target Hostname> -P 3306 -u admin -p

- Run the following scripts to create the target emp table. This table will be loaded by the AWS Glue job in the subsequent step.

      -- connect to the target database
      USE targetdb;

      -- Drop emp table if it exists
      DROP TABLE IF EXISTS emp;

      -- Create the emp table
      CREATE TABLE emp (
          empid INT AUTO_INCREMENT,
          ename VARCHAR(100) NOT NULL,
          edept VARCHAR(100) NOT NULL,
          PRIMARY KEY (empid)
      );

Verify the networking setup (Optional)

The following steps are useful to understand NAT gateway, route tables, and the transit gateway configurations of private NAT gateway solution. These components were created during the CloudFormation stack creation.

- On the Amazon VPC console page, navigate to Virtual private cloud section and locate NAT gateways.

- Search for NAT Gateway with name

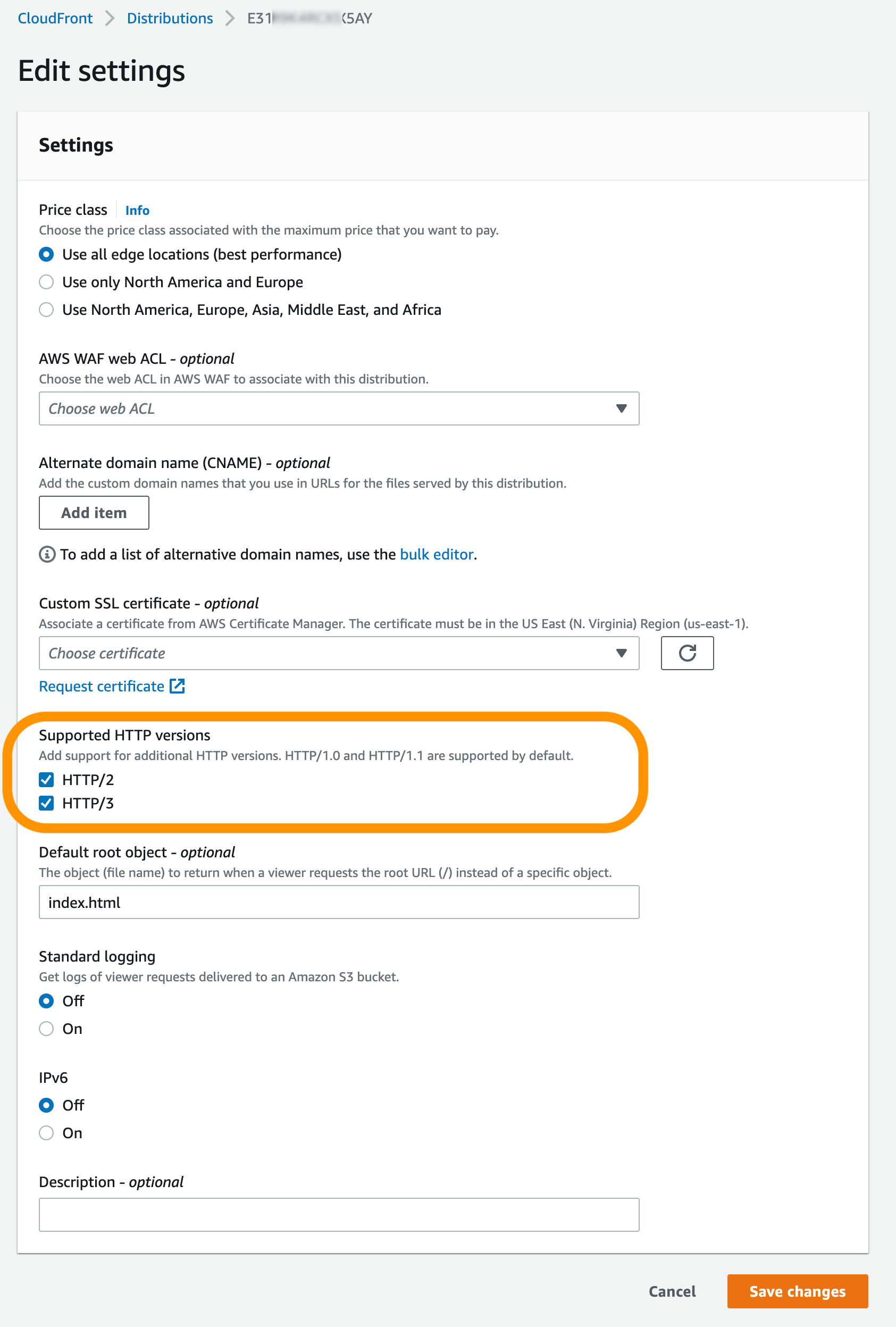

Glue-OverlappingCIDR-NATGWand explore it further. As you can see in the following screenshot, the NAT gateway was created in VPC A (ETL) on the routable subnet.

- In the left side navigation pane, navigate to Route tables under virtual private cloud section.

- Search for

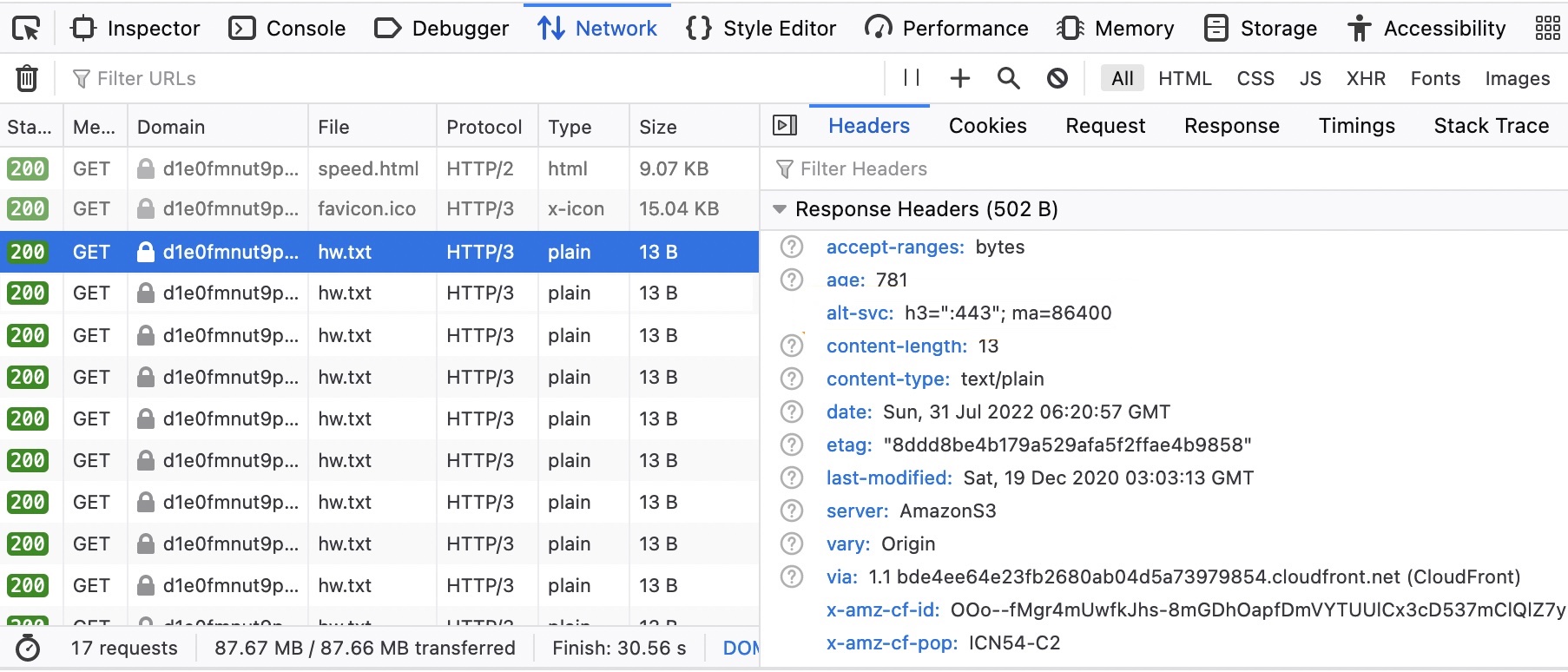

VPCA-Non-Routable-RouteTableand explore it further. You can see that the route table is configured to translate traffic from overlapping CIDR using the NAT gateway.

- In the left side navigation pane, navigate to Transit gateways section and click on Transit gateway attachments. Enter

VPC-in the search box and locate the two newly created transit gateway attachments. - You can explore these attachments further to learn their configurations.

Run the AWS Glue crawlers

Complete the following steps to run the AWS Glue crawlers that are required to catalog the source and target emp tables. This is a prerequisite step for running the AWS Glue job.

- On the AWS Glue Console page, under Data Catalog section in the navigation pane, click on Crawlers.

- Locate the source and target crawlers that you noted earlier.

- Select these crawlers and click Run to create the respective AWS Glue Data Catalog tables.

- You can monitor the AWS Glue crawlers until they complete successfully. It may take around 3–4 minutes for both crawlers to finish. When they’re done, the last run status of each crawler changes to Succeeded, and you can see the two AWS Glue Data Catalog tables created from this run.
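As an alternative to the console, the crawlers could also be started and monitored from a script. The following is a minimal sketch that takes a boto3 AWS Glue client as a parameter. The function name and polling interval are illustrative. It assumes that a crawler reports the RUNNING state immediately after it is started; if it does not, the reported status could belong to an earlier run.

```python
import time


def run_crawlers(glue_client, crawler_names, poll_seconds=15):
    """Start each crawler, wait until all are READY again, and return their last crawl status."""
    for name in crawler_names:
        glue_client.start_crawler(Name=name)

    results = {}
    pending = set(crawler_names)
    while pending:
        for name in sorted(pending):
            crawler = glue_client.get_crawler(Name=name)["Crawler"]
            if crawler["State"] == "READY":  # the crawler is idle again
                results[name] = crawler.get("LastCrawl", {}).get("Status", "UNKNOWN")
                pending.discard(name)
        if pending:
            time.sleep(poll_seconds)
    return results
```

With credentials configured, you might call it as run_crawlers(boto3.client("glue"), [source_crawler, target_crawler]), passing the SourceCrawler and TargetCrawler values from the stack outputs. A status of SUCCEEDED for both crawlers corresponds to the Succeeded status shown on the console.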

Run the AWS Glue ETL job

After you set up the tables and complete the prerequisite steps, you are ready to run the AWS Glue job that you created using the CloudFormation template. This job connects to the source RDS for MySQL database, extracts the data from the source table, and loads it into the target RDS for MySQL table using the private NAT gateway solution. To run the AWS Glue job, complete the following steps:

- On the AWS Glue console, click on ETL jobs in the navigation pane.

- Click on the job glue-private-nat-job.

- Click Run to start it.

The following is the PySpark script for this ETL job:

    import sys

    from awsglue.transforms import *
    from awsglue.utils import getResolvedOptions
    from pyspark.context import SparkContext
    from awsglue.context import GlueContext
    from awsglue.job import Job

    args = getResolvedOptions(sys.argv, ["JOB_NAME"])
    sc = SparkContext()
    glueContext = GlueContext(sc)
    spark = glueContext.spark_session
    job = Job(glueContext)
    job.init(args["JOB_NAME"], args)

    # Script generated for node AWS Glue Data Catalog
    AWSGlueDataCatalog_node = glueContext.create_dynamic_frame.from_catalog(
        database="glue_cat_db_source",
        table_name="srcdb_emp",
        transformation_ctx="AWSGlueDataCatalog_node",
    )

    # Script generated for node Change Schema
    ChangeSchema_node = ApplyMapping.apply(
        frame=AWSGlueDataCatalog_node,
        mappings=[
            ("empid", "int", "empid", "int"),
            ("ename", "string", "ename", "string"),
            ("edept", "string", "edept", "string"),
        ],
        transformation_ctx="ChangeSchema_node",
    )

    # Script generated for node AWS Glue Data Catalog
    AWSGlueDataCatalog_node = glueContext.write_dynamic_frame.from_catalog(
        frame=ChangeSchema_node,
        database="glue_cat_db_target",
        table_name="targetdb_emp",
        transformation_ctx="AWSGlueDataCatalog_node",
    )

    job.commit()

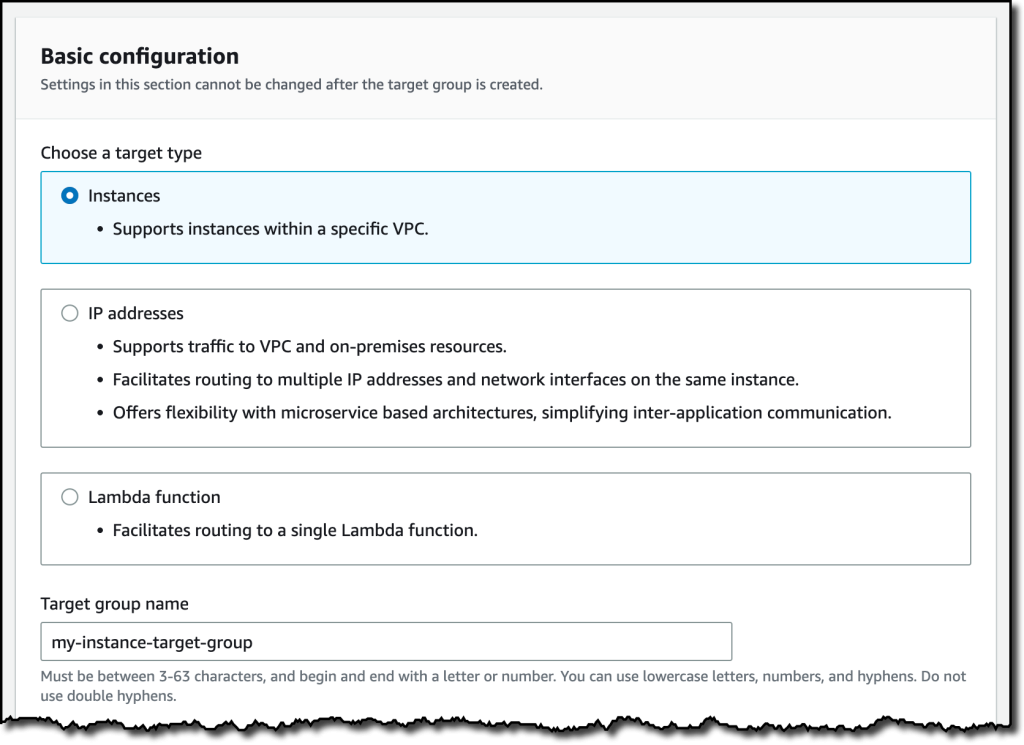

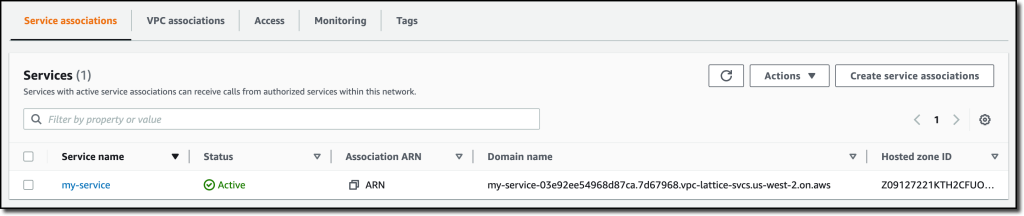

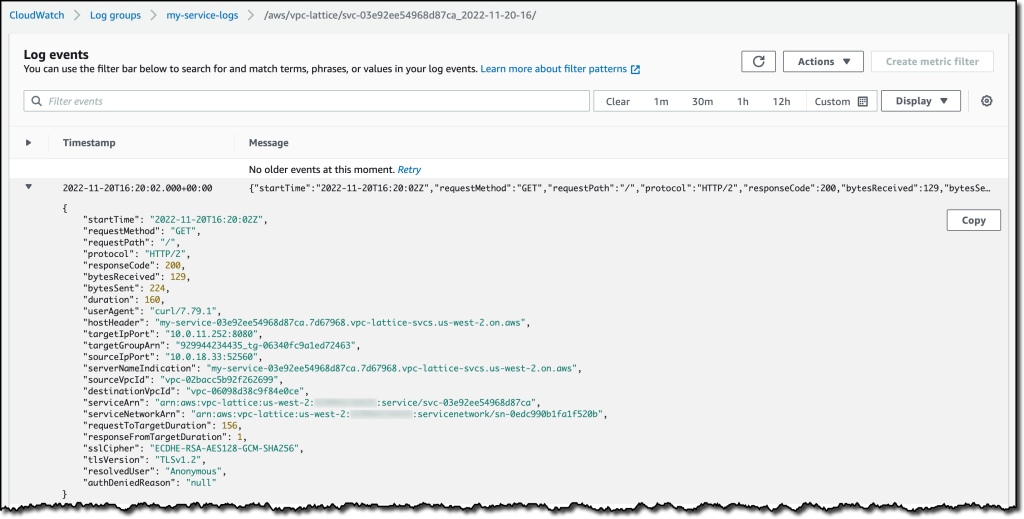

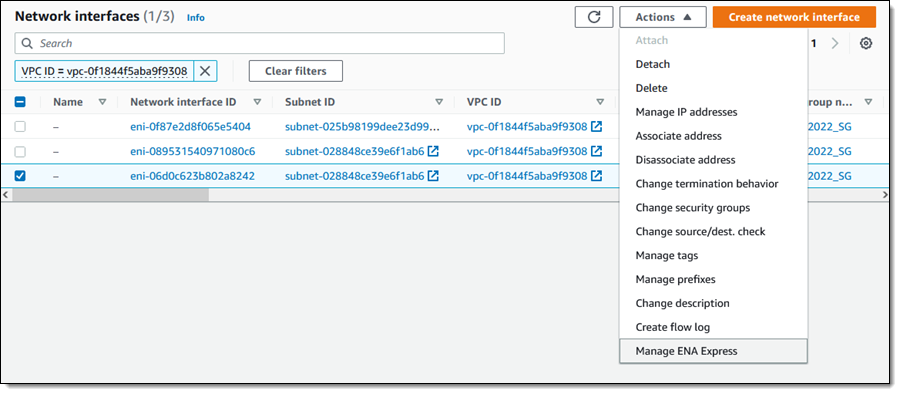

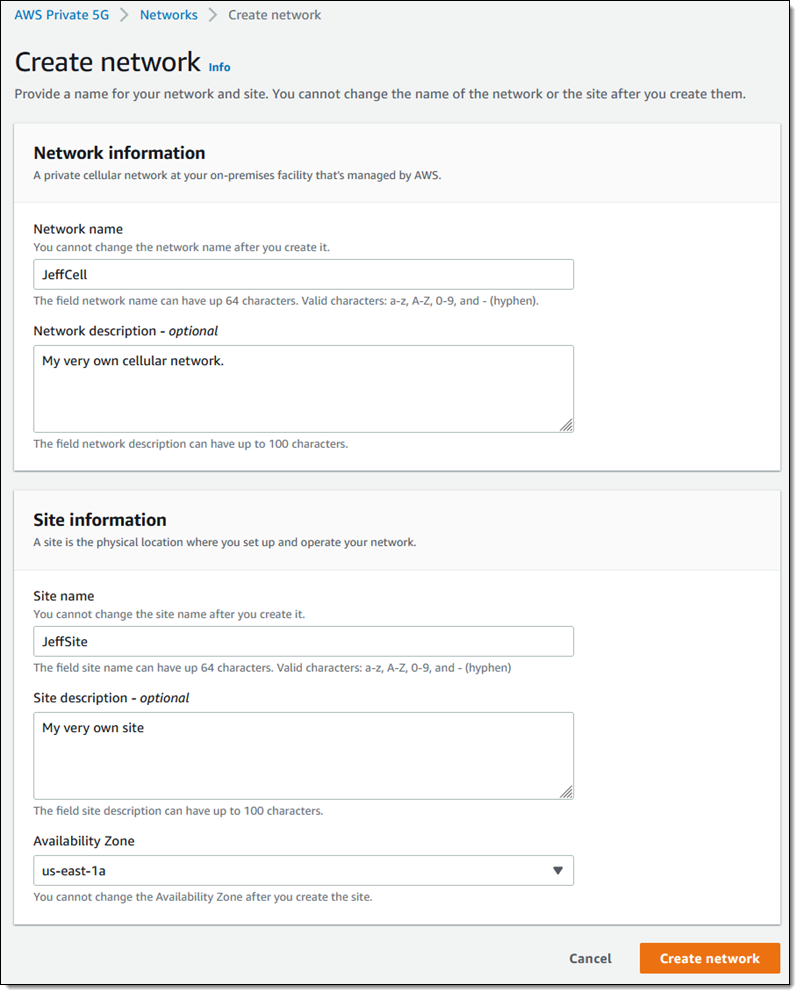

Based on the job’s DPU configuration, AWS Glue creates a set of ENIs in the non-routable subnet that is configured on the AWS Glue connection. You can monitor these ENIs on the Network Interfaces page of the Amazon Elastic Compute Cloud (Amazon EC2) console.

The below screenshot shows the 10 ENIs that were created for the job run to match the requested number of workers configured on the job parameters. As expected, the ENIs were created in the non-routable subnet of VPC A, enabling scalability of IP addresses. After the job is complete, these ENIs will be automatically released by AWS Glue.
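If you want to check ENI usage from a script rather than the console, a minimal sketch along the following lines could count the network interfaces in a subnet. It takes a boto3 Amazon EC2 client as a parameter. The function name is illustrative, and the subnet ID you pass in would be that of your non-routable subnet.

```python
def count_enis_in_subnet(ec2_client, subnet_id):
    """Count the network interfaces that currently exist in one subnet."""
    paginator = ec2_client.get_paginator("describe_network_interfaces")
    pages = paginator.paginate(Filters=[{"Name": "subnet-id", "Values": [subnet_id]}])
    return sum(len(page["NetworkInterfaces"]) for page in pages)
```

With credentials configured, you might call count_enis_in_subnet(boto3.client("ec2"), "<subnet ID>") while the job is running and again after it finishes. The count includes every ENI in the subnet, not only those created by AWS Glue.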

When the AWS Glue job is running, you can monitor its status. Upon successful completion, the job’s status changes to Succeeded.
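The same run-and-monitor flow can be sketched with boto3. The function below takes an AWS Glue client as a parameter and defaults to the job name used in this walkthrough. The function name, the polling interval, and the set of states treated as terminal are choices made for this sketch.

```python
import time

# Job run states that this sketch treats as final.
TERMINAL_STATES = {"SUCCEEDED", "FAILED", "STOPPED", "TIMEOUT", "ERROR"}


def run_job_and_wait(glue_client, job_name="glue-private-nat-job", poll_seconds=30):
    """Start a job run and poll until it reaches a terminal state; return that state."""
    run_id = glue_client.start_job_run(JobName=job_name)["JobRunId"]
    while True:
        run = glue_client.get_job_run(JobName=job_name, RunId=run_id)["JobRun"]
        state = run["JobRunState"]
        if state in TERMINAL_STATES:
            return state
        time.sleep(poll_seconds)
```

With credentials configured, run_job_and_wait(boto3.client("glue")) returns SUCCEEDED when the run completes successfully, matching the Succeeded status on the console.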

Verify the results

After the AWS Glue job is complete, connect to the target MySQL database and verify that the target record count matches the source. You can use the following SQL query in the AWS Cloud9 terminal:

    USE targetdb;
    SELECT count(*) from emp;

Finally, exit from the MySQL client utility using the following command and return to the AWS Cloud9 terminal:

    quit;

You can now confirm that AWS Glue has successfully completed a job to load data to a target database using the IP addresses from a non-routable subnet. This concludes the end-to-end testing of the private NAT gateway solution.

Clean up

To avoid incurring future charges, delete the resources created by the CloudFormation stack by completing the following steps:

- On the AWS CloudFormation console, click Stacks in the navigation pane.

- Select the stack AWSGluePrivateNATStack.

- Click on Delete to delete the stack. When prompted, confirm the stack deletion.

Conclusion

In this post, we demonstrated how you can scale AWS Glue jobs by optimizing IP address consumption and expanding your network capacity by using a private NAT gateway solution. This two-fold approach helps you get unblocked in an environment that has IP address capacity constraints. The options discussed in the AWS Glue IP address optimization section are complementary to the IP address expansion solutions, and you can iteratively build on them to mature your data platform.

Learn more about AWS Glue job optimization techniques from Monitor and optimize cost on AWS Glue for Apache Spark and Best practices to scale Apache Spark jobs and partition data with AWS Glue.

About the authors

Sushanth Kothapally is a Solutions Architect at Amazon Web Services supporting Automotive and Manufacturing customers. He is passionate about designing technology solutions to meet business goals and has keen interest in serverless and event-driven architectures.

Senthil Kamala Rathinam is a Solutions Architect at Amazon Web Services specializing in Data and Analytics. He is passionate about helping customers to design and build modern data platforms. In his free time, Senthil loves to spend time with his family and play badminton.
