Post Syndicated from Brendan Jenkins original https://aws.amazon.com/blogs/devops/quickly-go-from-idea-to-pr-with-codecatalyst-using-amazon-q/

Amazon Q feature development enables teams using Amazon CodeCatalyst to scale with AI to assist developers in completing everyday software development tasks. Developers can now go from an idea in an issue to a fully tested, merge-ready, running application code in a Pull Request (PR) with natural language inputs in a few clicks. Developers can also provide feedback to Amazon Q directly on the published pull request and ask it to generate a new revision. If the code change falls short of expectations, a new development environment can be created directly from the pull request, necessary adjustments can be made manually, a new revision published, and proceed with the merge upon approval.

In this blog, we will walk through a use case leveraging the Modern three-tier web application blueprint, and adding a feature to the web application. We’ll leverage Amazon Q feature development to quickly go from Idea to PR. We also suggest following the steps outlined below in this blog in your own application so you can gain a better understanding of how you can use this feature in your daily work.

Solution Overview

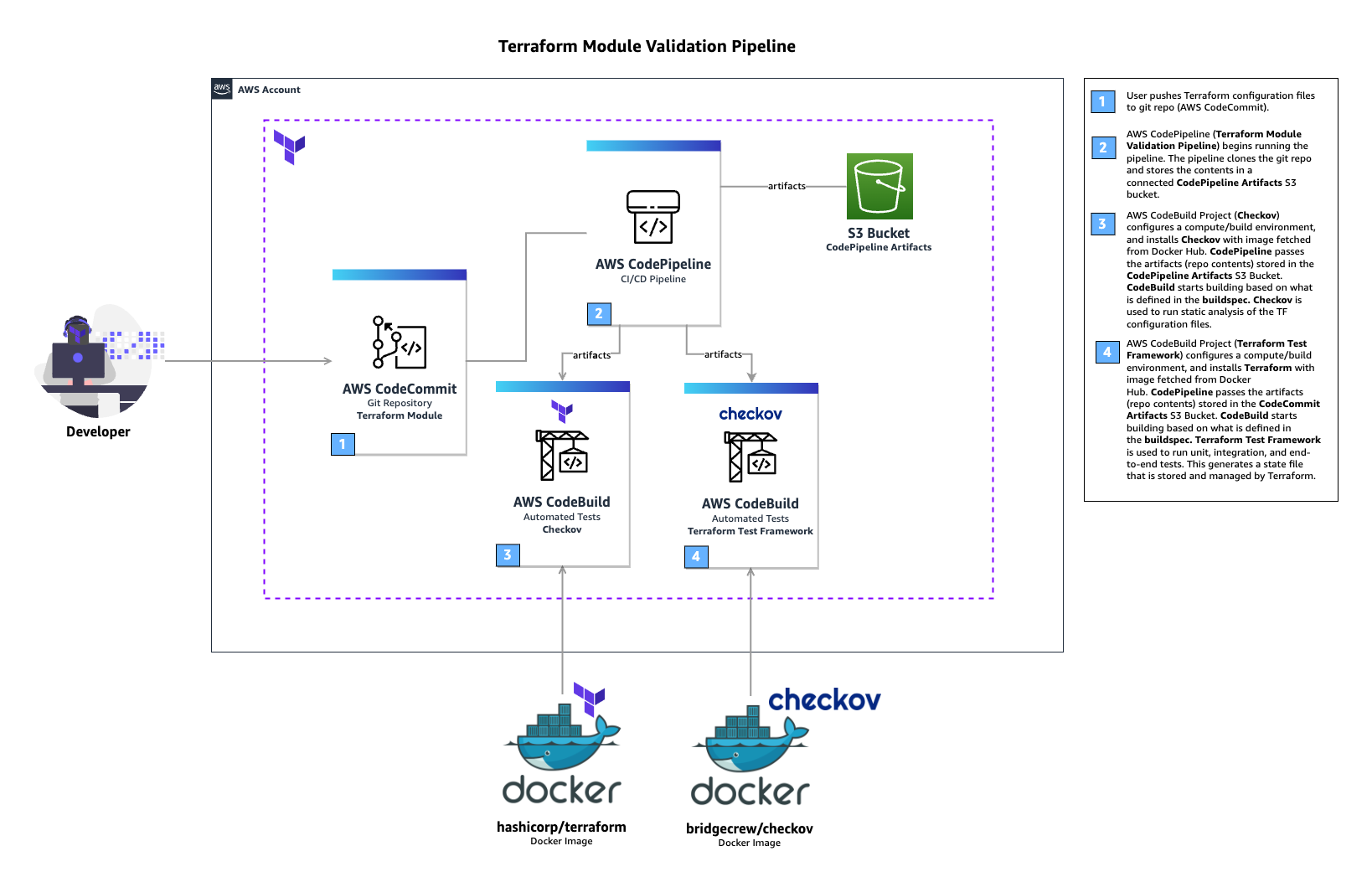

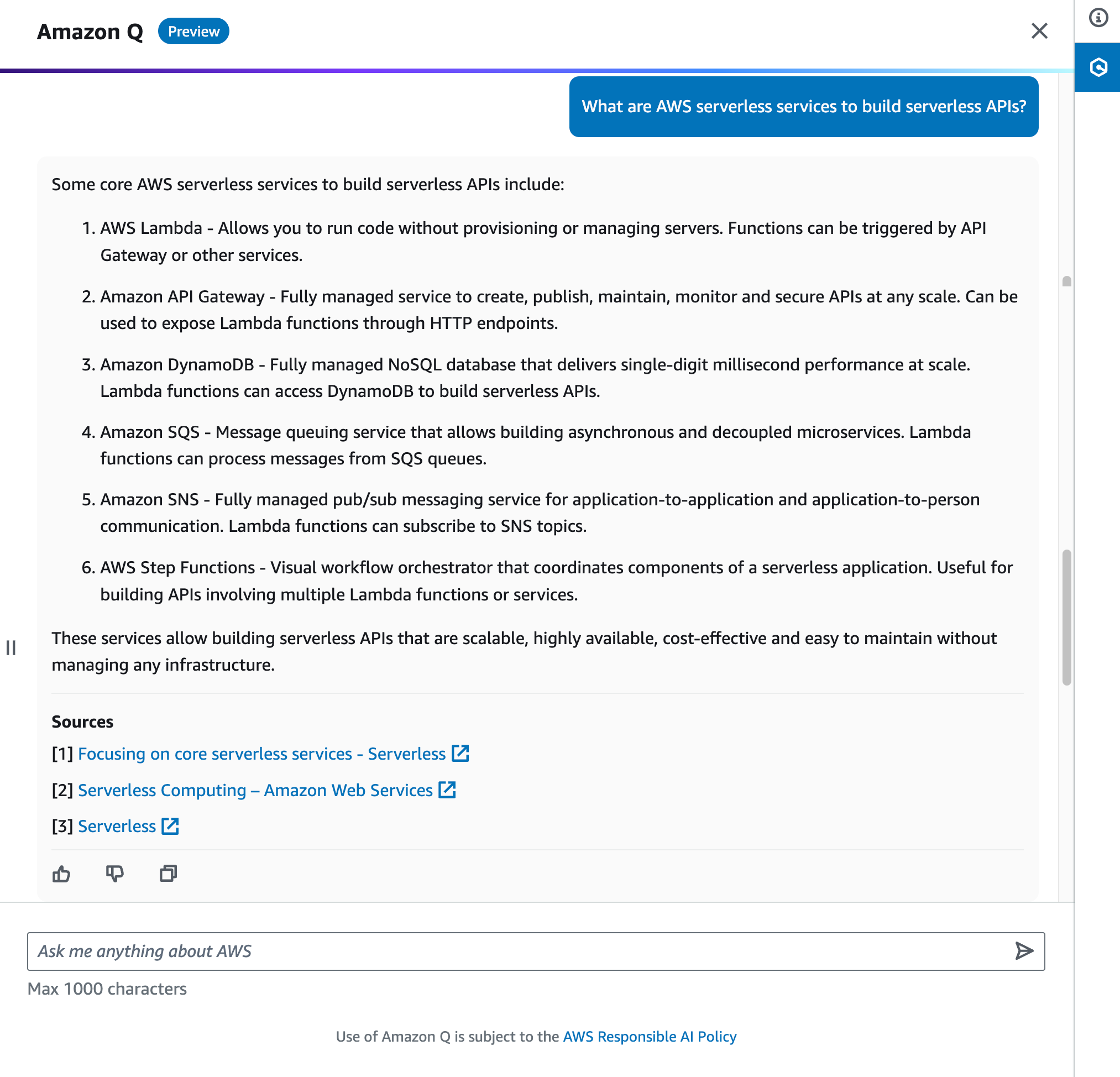

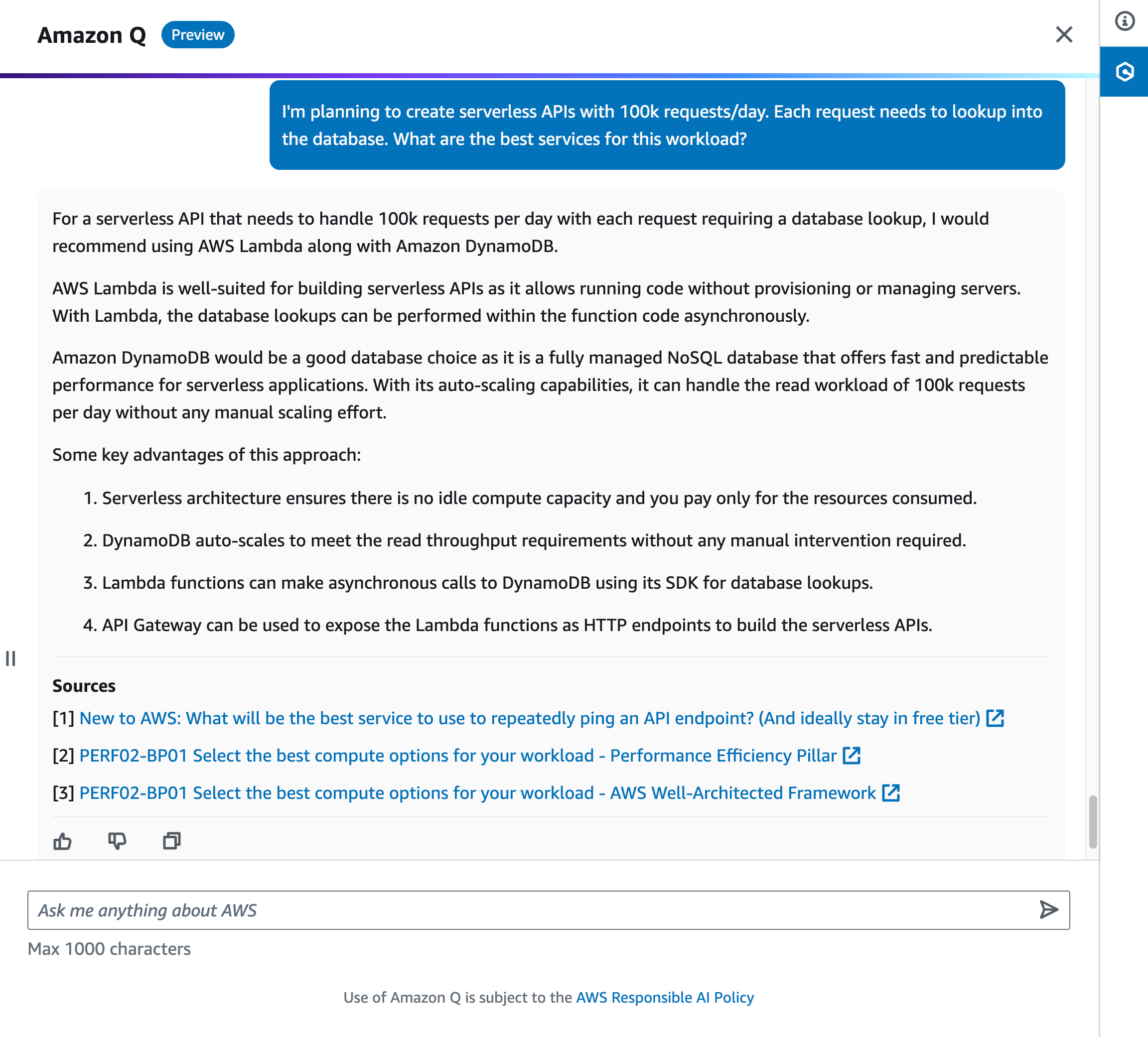

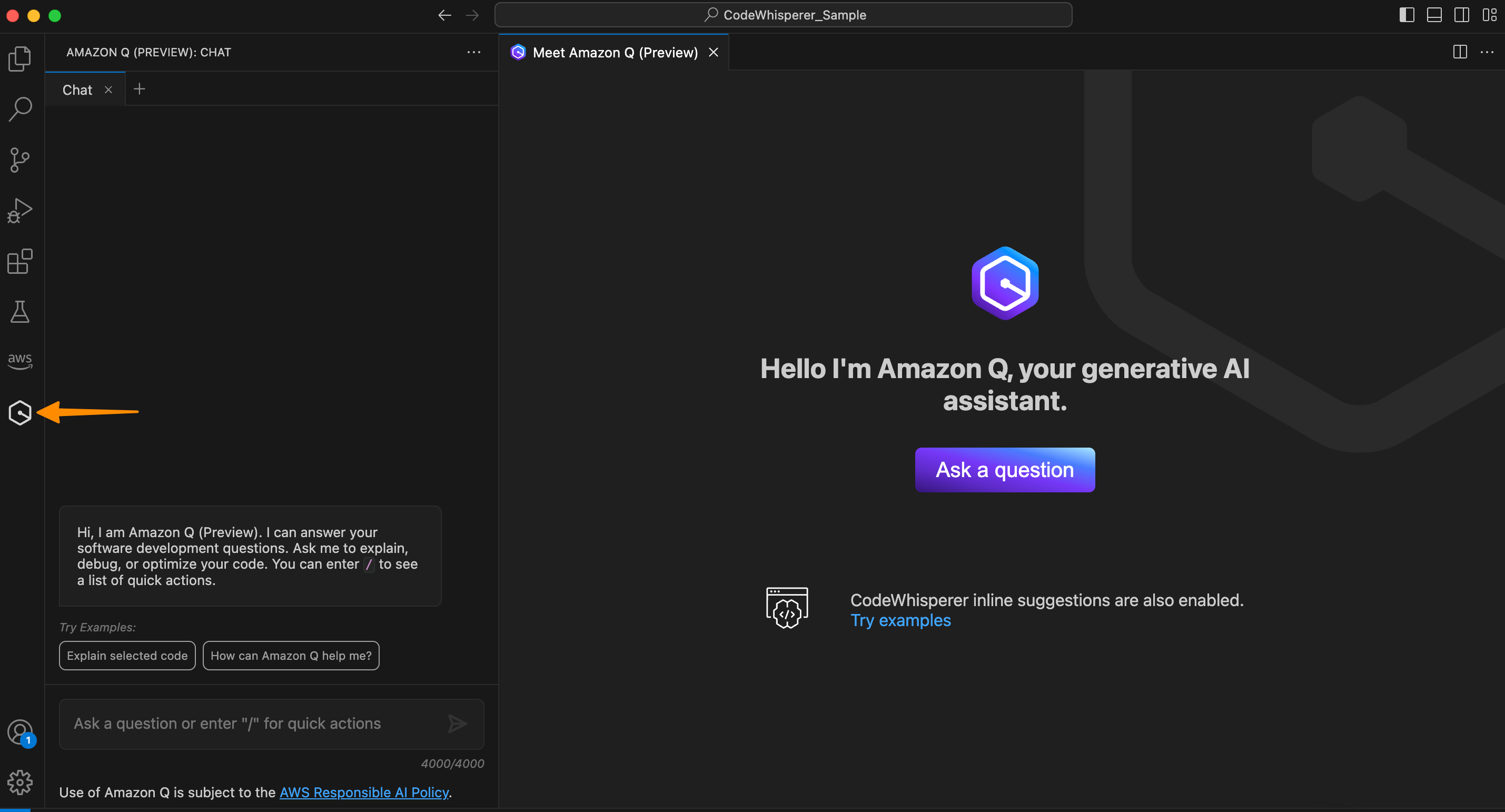

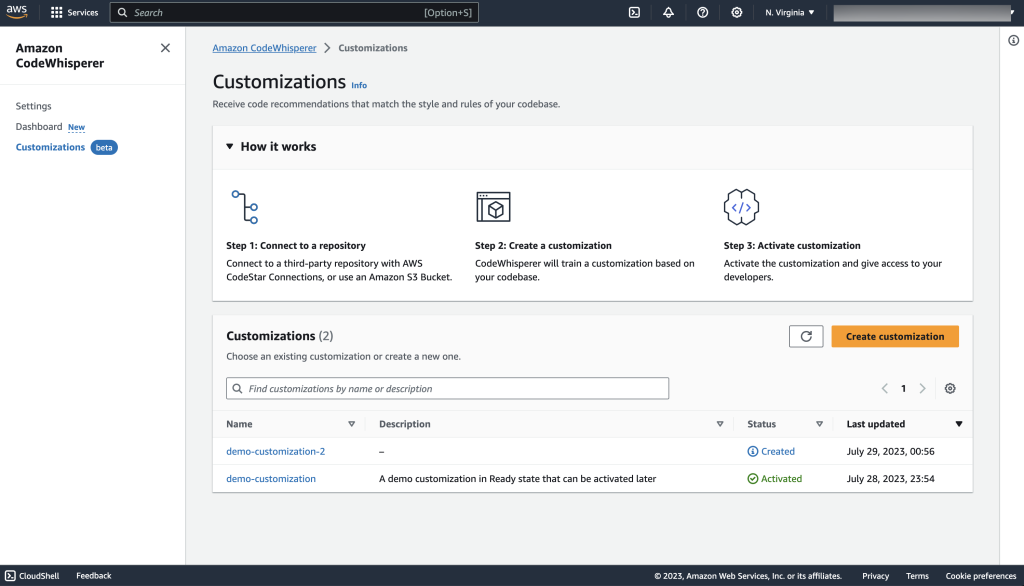

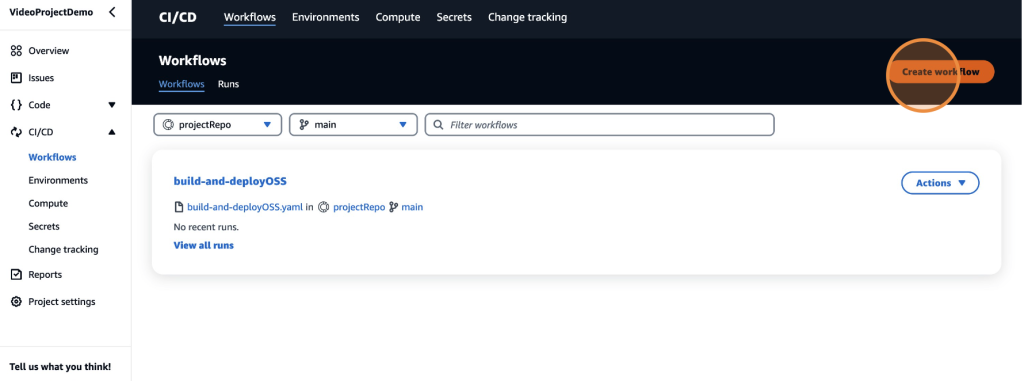

Amazon Q feature development is integrated into CodeCatalyst. Figure 1 details how users can assign Amazon Q an issue. When assigning the issue, users answer a few preliminary questions and Amazon Q outputs the proposed approach, where users can either approve or provide additional feedback to Amazon Q. Once approved, Amazon Q will generate a PR where users can review, revise, and merge the PR into the repository.

Figure 1: Amazon Q feature development workflow

Prerequisites

Although we will walk through a sample use case in this blog using a Blueprint from CodeCatalyst, after, we encourage you to try this with your own application so you can gain hands-on experience with utilizing this feature. If you are using CodeCatalyst for the first time, you’ll need:

- A CodeCatalyst space

- Amazon Q feature development in CodeCatalyst is currently in preview. To access this feature, ensure that you are using the Standard or Enterprise tier and the generative AI feature is enabled in your Space

Walkthrough

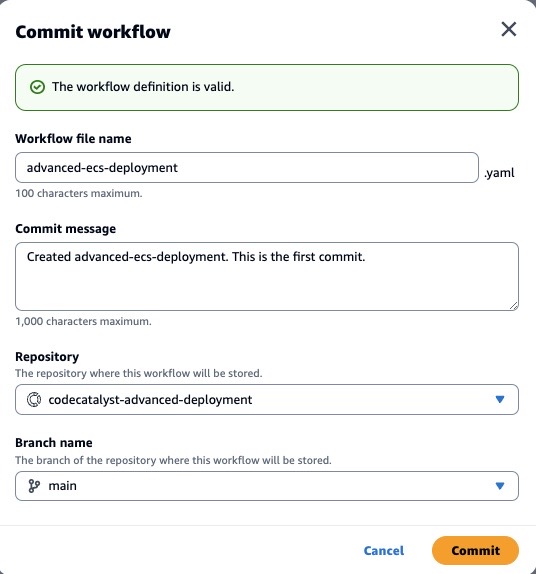

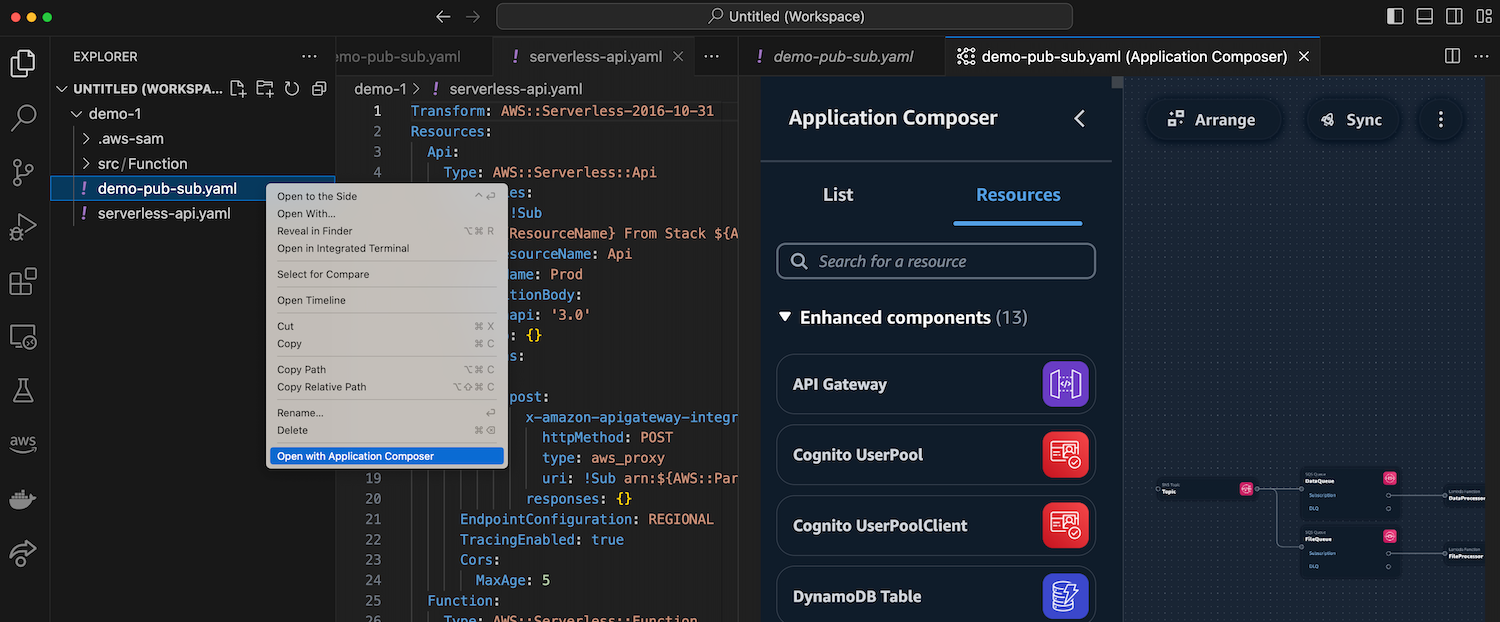

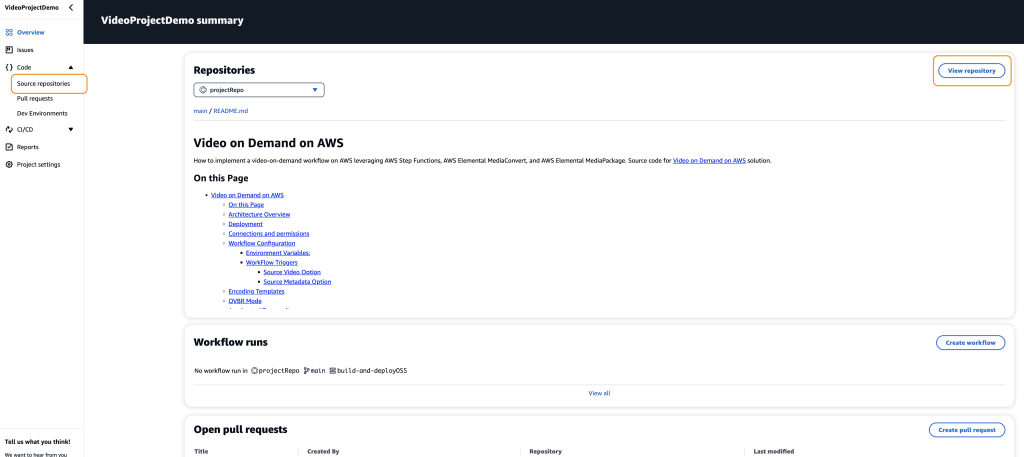

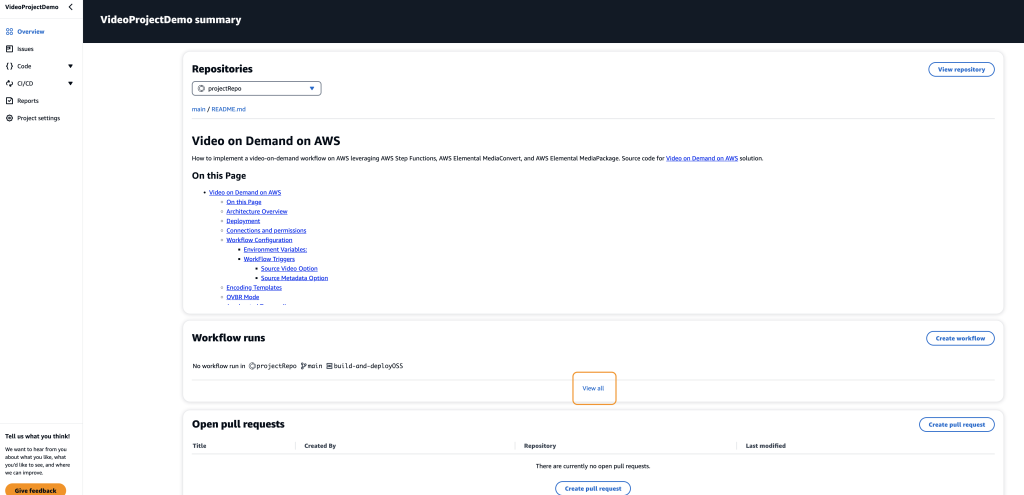

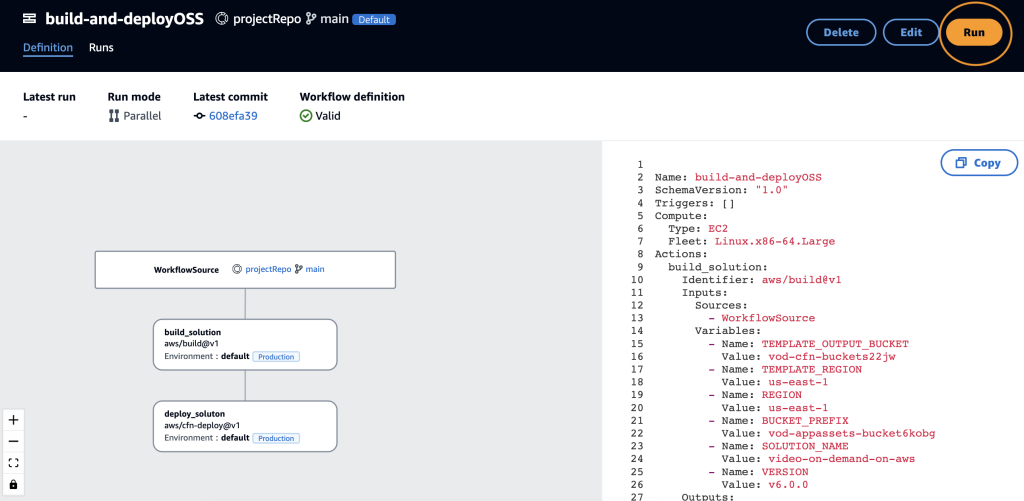

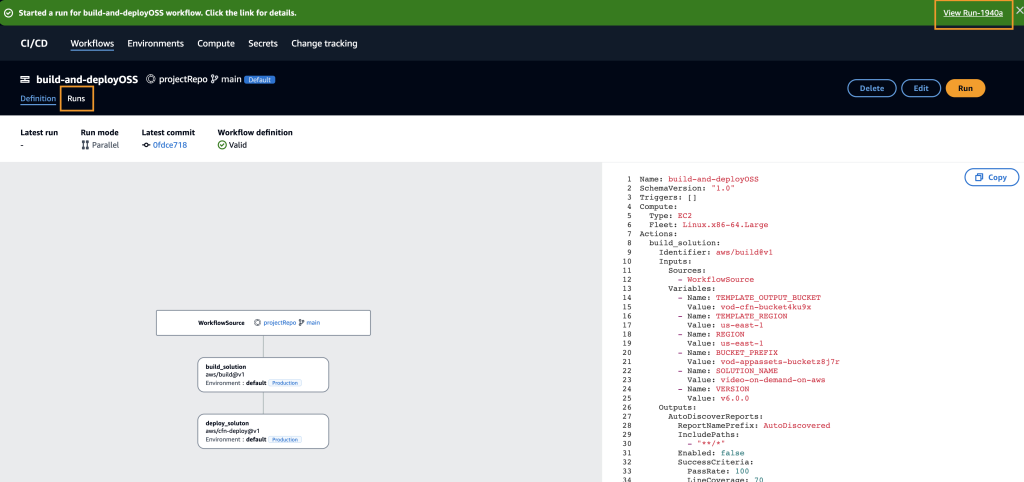

Step 1: Creating the blueprint

In this blog, we’ll leverage the Modern three-tier web application blueprint to walk through a sample use case. This blueprint creates a Mythical Mysfits three-tier web application with modular presentation, application, and data layers.

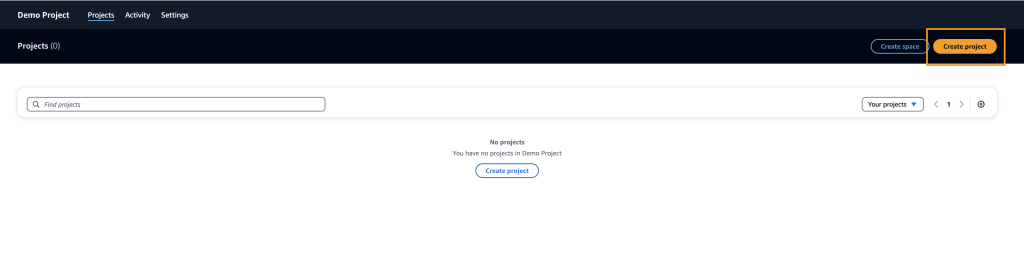

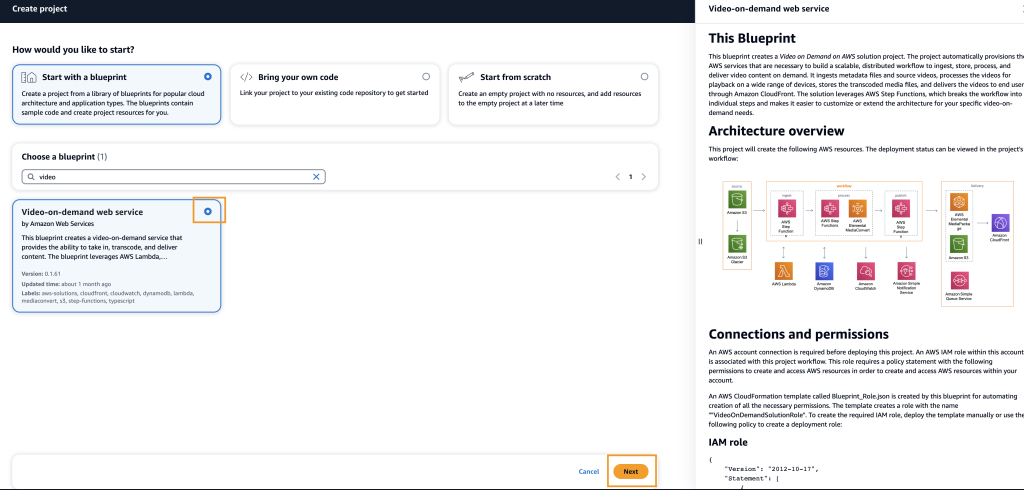

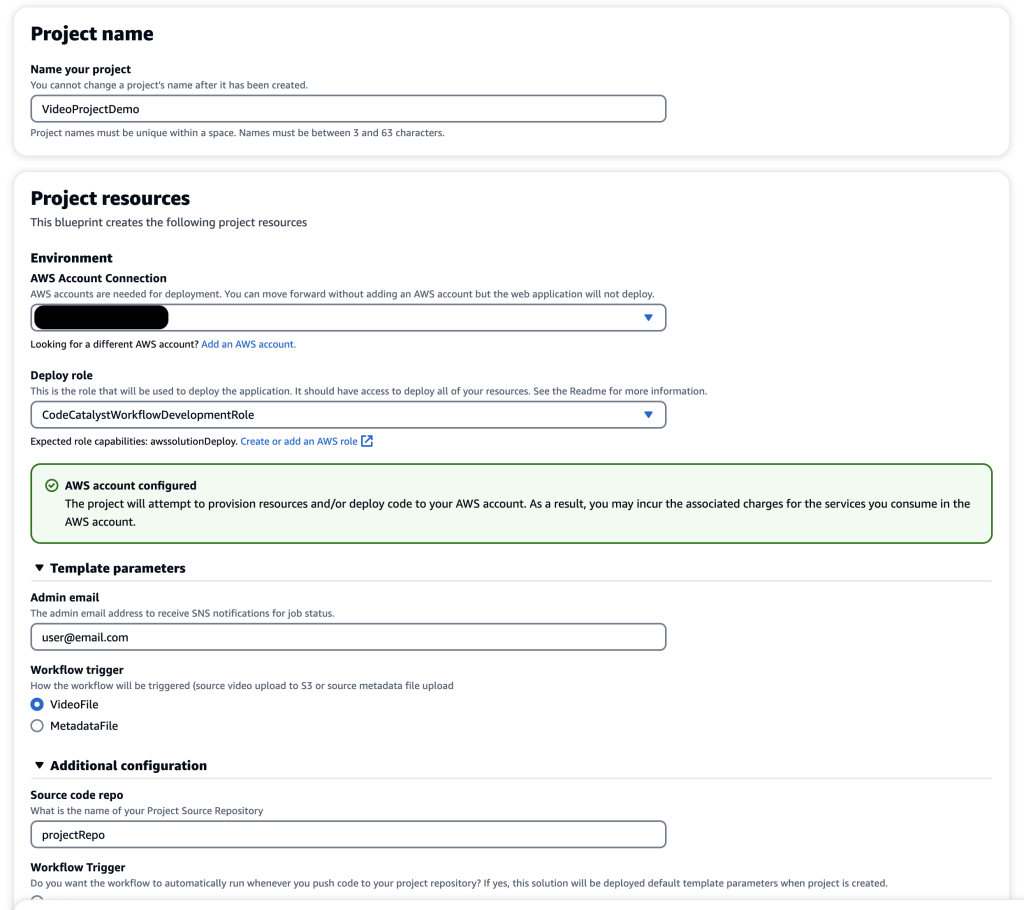

Figure 2: Creating a new Modern three-tier application blueprint

First, within your space click “Create Project” and select the Modern three-tier web application CodeCatalyst Blueprint as shown above in Figure 2.

Enter a Project name and select: Lambda for the Compute Platform and Amplify Hosting for Frontend Hosting Options. Additionally, ensure your AWS account is selected along with creating a new IAM Role.

Once the project is finished creating, the application will deploy via a CodeCatalyst workflow, assuming the AWS account and IAM role were setup correctly. The deployed application will be similar to the Mythical Mysfits website.

Step 2: Create a new issue

The Product Manager (PM) has asked us to add a feature to the newly created application, which entails creating the ability to add new mythical creatures. The PM has provided a detailed description to get started.

In the Issues section of our new project, click Create Issue

For the Issue title, enter “Ability to add a new mythical creature” and for the Description enter “Users should be able to add a new mythical creature to the website. There should be a new Add button on the UI, when prompted should allow the user to fill in Name, Age, Description, Good/Evil, Lawful/Chaotic, Species, Profile Image URI and thumbnail Image URI for the new creature. When the user clicks save, the application should leverage the existing API in app.py to save the new creature to the DynamoDB table.”

Furthermore, click Assign to Amazon Q as shown below in Figure 3.

Figure 3: Assigning a new issue to Amazon Q

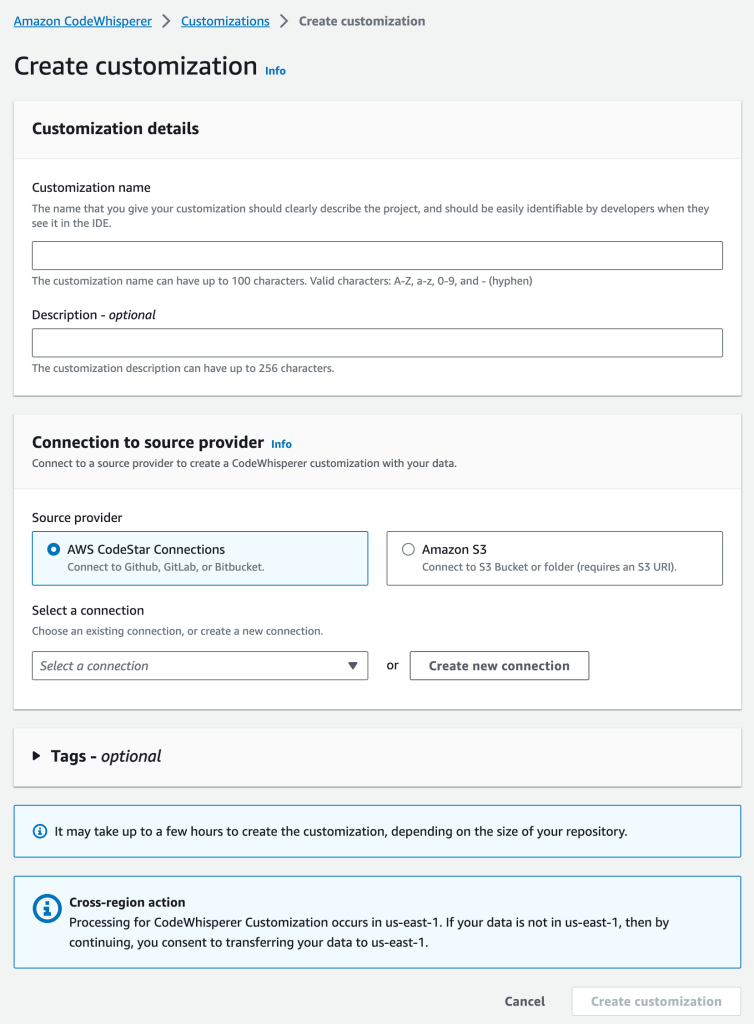

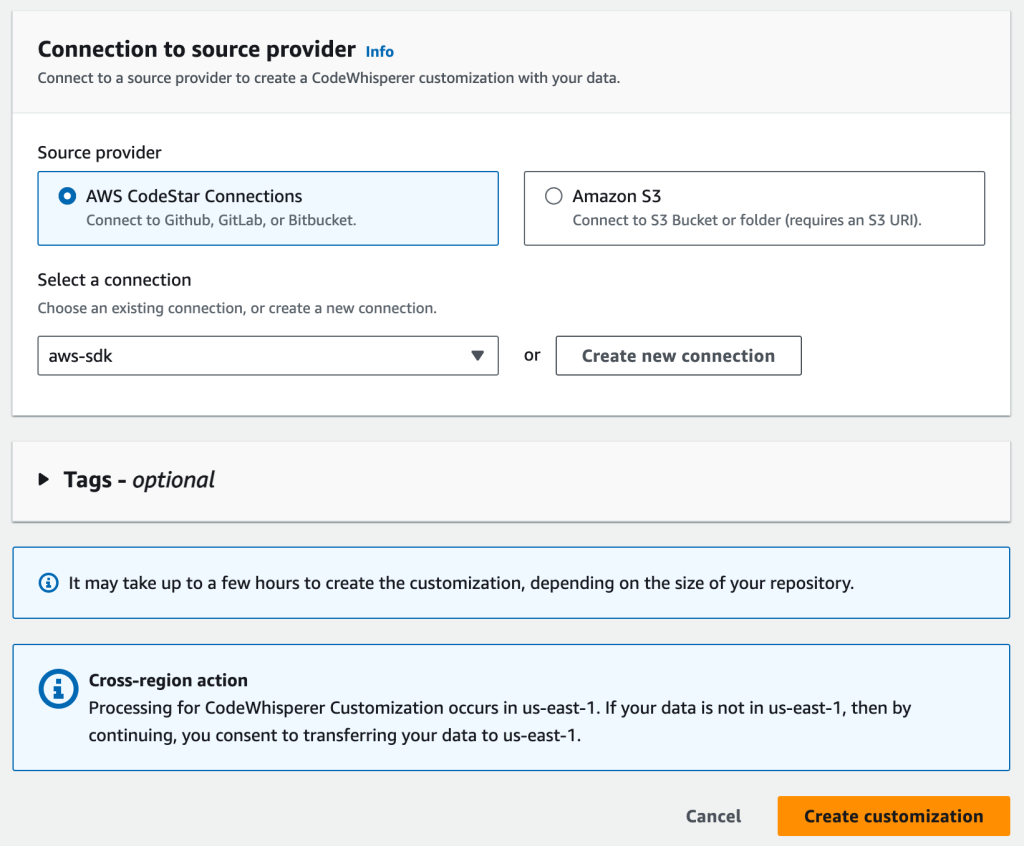

Lastly, enable the Require Amazon Q to stop after each step and await review of its work. In this use case, we do not anticipate having any changes to our workflow files to support this new feature so we will leave the Allow Amazon Q to modify workflow files disabled as shown below in Figure 4. Click Create Issue and Amazon Q will get started.

Figure 4: Configurations for assigning Amazon Q

Step 3: Review Amazon Qs Approach

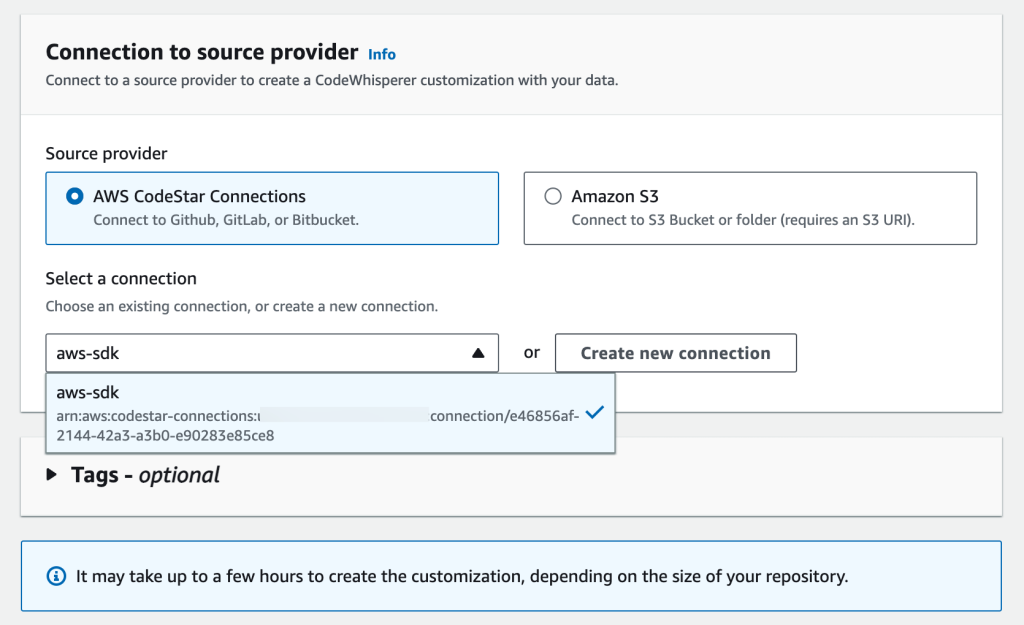

After a few minutes, Amazon Q will generate its understanding of the project in the Background section as well as an Approach to make the changes for the issue you created as show in Figure 5 below

(**Note: The Background and Approach generated for you may be different than what is shown in Figure 5 below).

We have the option to proceed as is or can reply to the Approach via a Comment to provide feedback so Amazon Q can refine it to align better with the use case.

Figure 5: Reviewing Amazon Qs Background and Approach

In the approach, we notice Amazon Q is suggesting it will create a new method to create and save the new item to the table, but we already have an existing method. We decide to leave feedback as show in Figure 6 letting Amazon Q know the existing method should be leveraged.

Figure 6: Provide feedback to Approach

Amazon Q will now refine the approach based on the feedback provided. The refined approach generated by Amazon Q meets our requirements, including unit tests, so we decide to click Proceed as shown in Figure 7 below.

Figure 7: Confirm approach and click Proceed

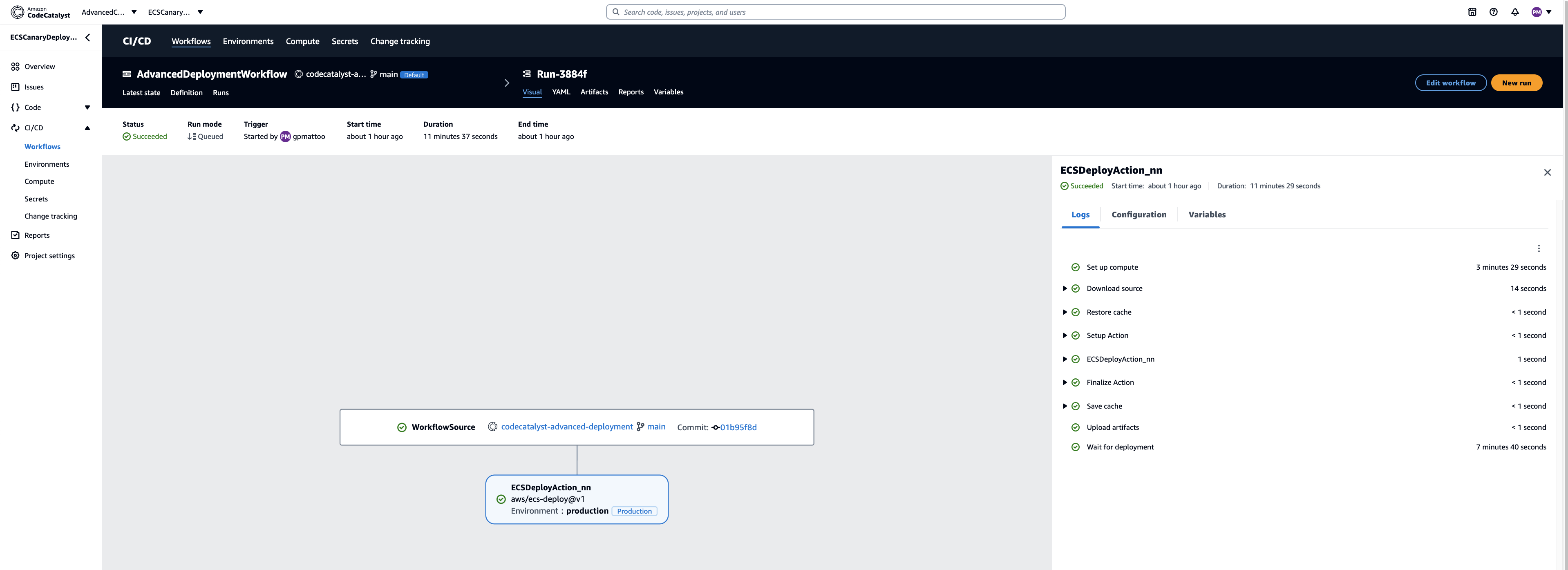

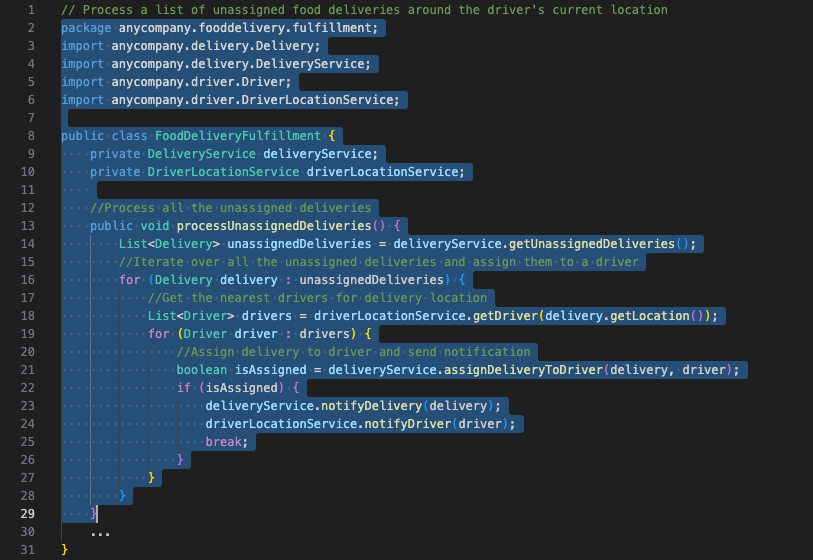

Now, Amazon Q will generate the code for implementation & create a PR with code changes that can be reviewed.

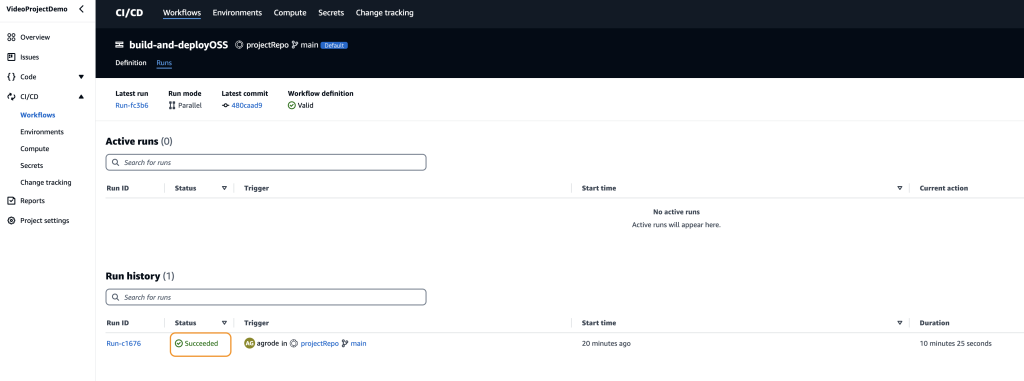

Step 4: Review the PR

Within our project, under Code on the left panel click on Pull requests. You should see the new PR created by Amazon Q.

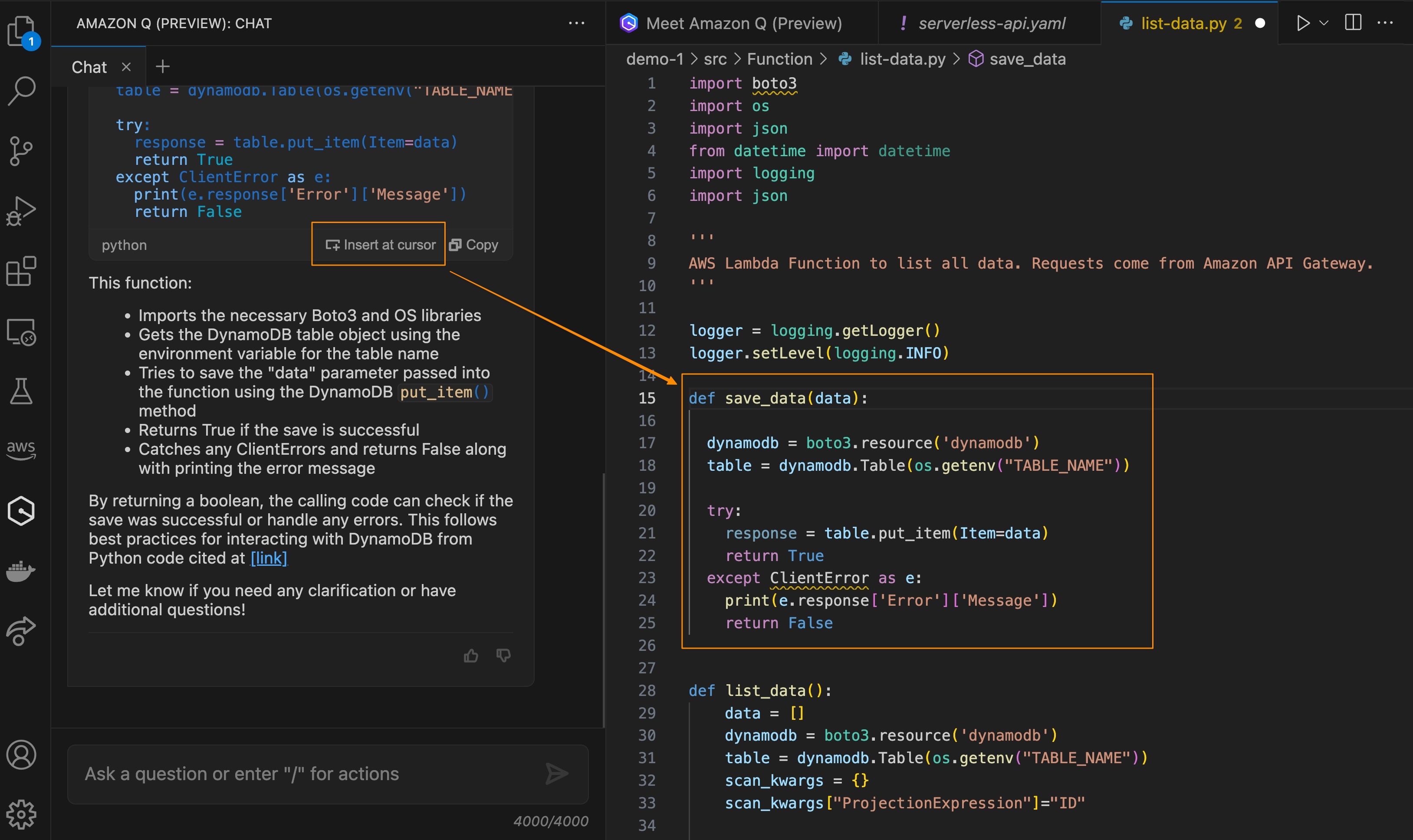

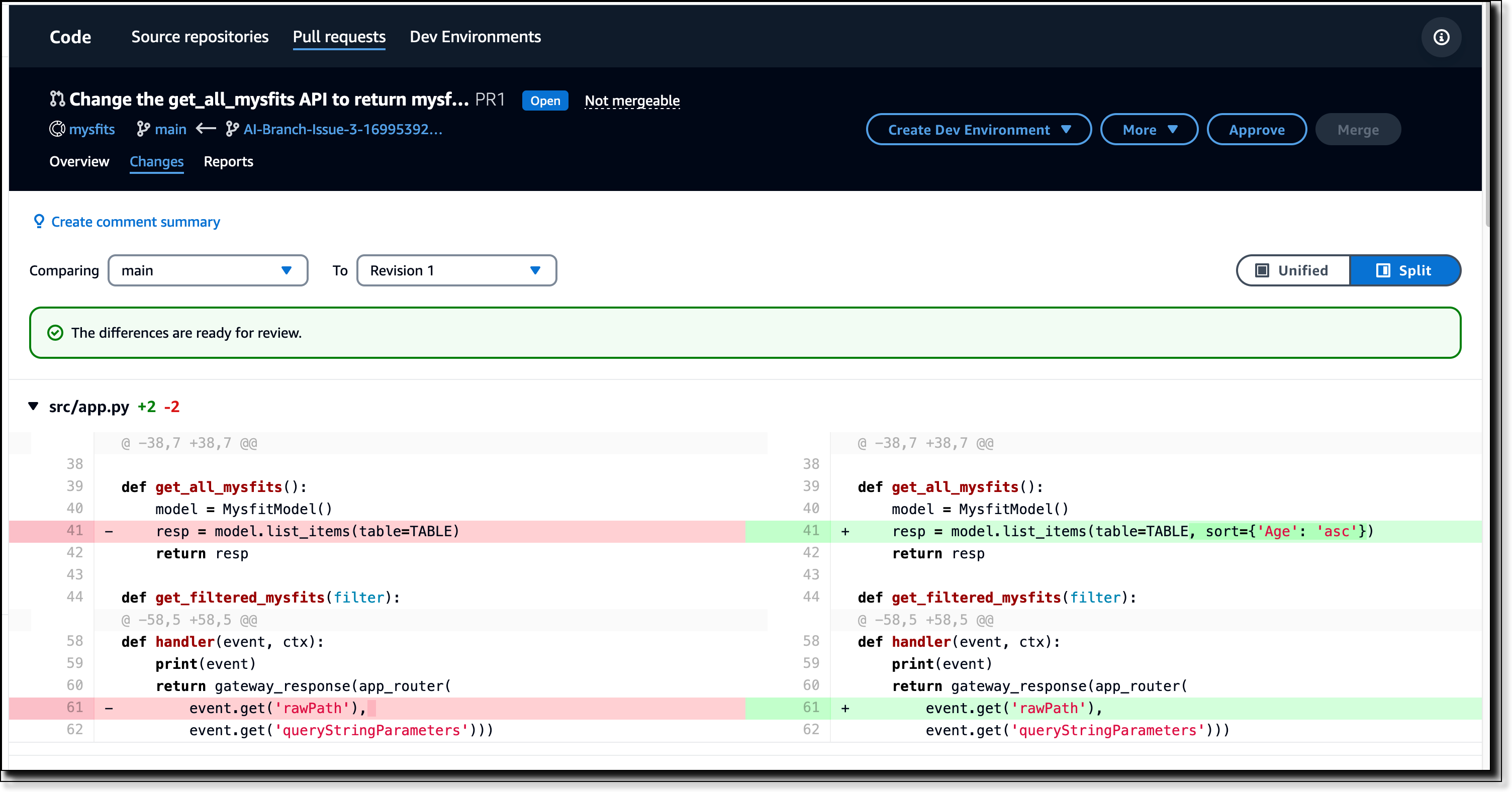

The PR description contains the approach that Amazon Q took to generate the code. This is helpful to reviewers who want to gain a high-level understanding of the changes included in the PR before diving into the details. You will also be able to review all changes made to the code as shown below in Figure 8.

Figure 8: Changes within PR

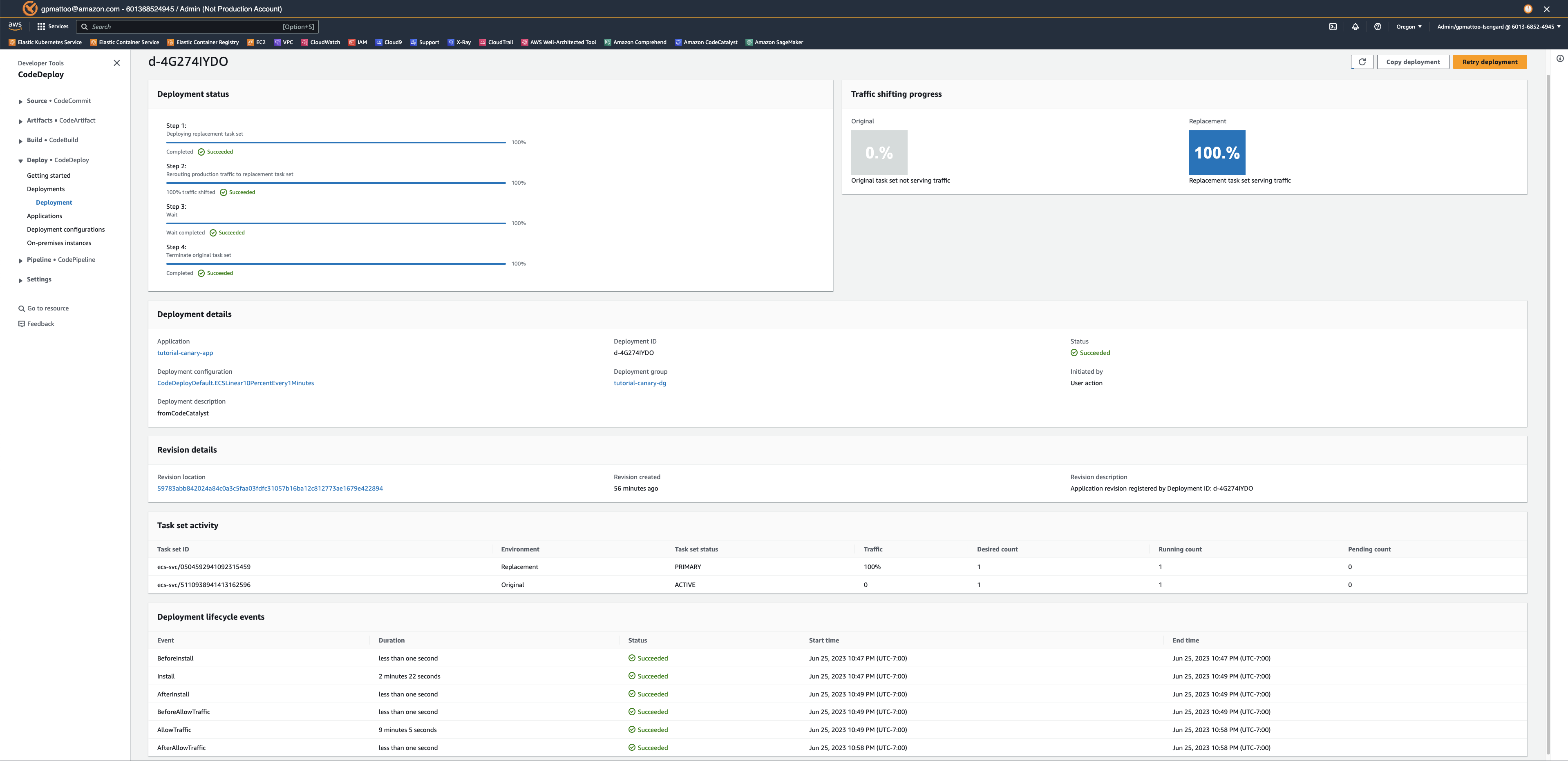

Step 5 (Optional): Provide feedback on PR

After reviewing the changes in the PR, I leave comments on a few items that can be improved. Notably, all fields on the new input form for creating a new creature should be required. After I complete leaving comments, I hit the Create Revision button. Amazon Q will take my comments, update the code accordingly and create a new revision of the PR as shown in Figure 9 below.

Figure 9: PR Revision created.

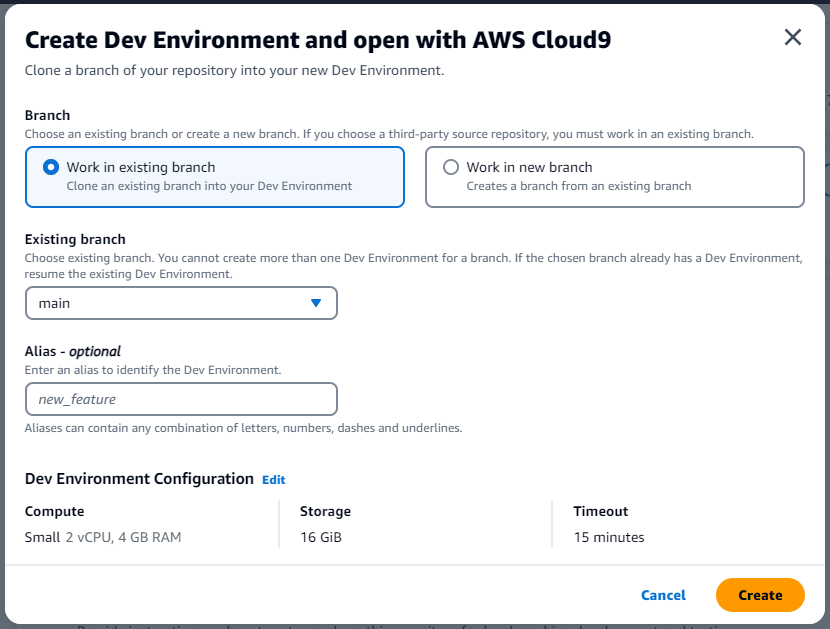

After reviewing the latest revision created by Amazon Q, I am happy with the changes and proceed with testing the changes directly from CodeCatalyst by utilizing Dev Environments. Once I have completed testing of the new feature and everything works as expected, we will let our peers review the PR to provide feedback and approve the pull request.

As part of following the steps in this blog post, if you upgraded your Space to Standard or Enterprise tier, please ensure you downgrade to the Free tier to avoid any unwanted additional charges. Additionally, delete the project and any associated resources deployed in the walkthrough.

Unassign Amazon Q from any issues no longer being worked on. If Amazon Q has finished its work on an issue or could not find a solution, make sure to unassign Amazon Q to avoid reaching the maximum quota for generative AI features. For more information, see Managing generative AI features and Pricing.

Best Practices for using Amazon Q Feature Development

You can follow a few best practices to ensure you experience the best results when using Amazon Q feature development:

- When describing your feature or issue, provide as much context as possible to get the best result from Amazon Q. Being too vague or unclear may not produce ideal results for your use case.

- Changes and new features should be as focused as possible. You will likely not experience the best results when making large and complex changes in a single issue. Instead, break the changes or feature up into smaller, more manageable issues where you will see better results.

- Leverage the feedback feature to practice giving input on approaches Amazon Q takes to ensure it gets to a similar outcome as highlighted in the blog.

Conclusion

In this post, you’ve seen how you can quickly go from Idea to PR using the Amazon Q Feature development capability in CodeCatalyst. You can leverage this new feature to start building new features in your applications. Check out Amazon CodeCatalyst feature development today.