Post Syndicated from Suresh Samuel original https://aws.amazon.com/blogs/security/access-accounts-with-aws-management-console-private-access/

AWS Management Console Private Access is an advanced security feature to help you control access to the AWS Management Console. In this post, I will show you how this feature works, share current limitations, and provide AWS CloudFormation templates that you can use to automate the deployment. AWS Management Console Private Access is useful when you want to restrict users from signing in to unknown AWS accounts from within your network. With this feature, you can limit access to the console only to a specified set of known accounts when the traffic originates from within your network.

For enterprise customers, users typically access the console from devices that are connected to a corporate network, either directly or through a virtual private network (VPN). With network connectivity to the console, users can sign in to any account for which they have valid credentials, including third-party and personal accounts. For enterprise customers with stringent network access controls, this feature provides a way to control which accounts can be accessed from on-premises networks.

How AWS Management Console Private Access works

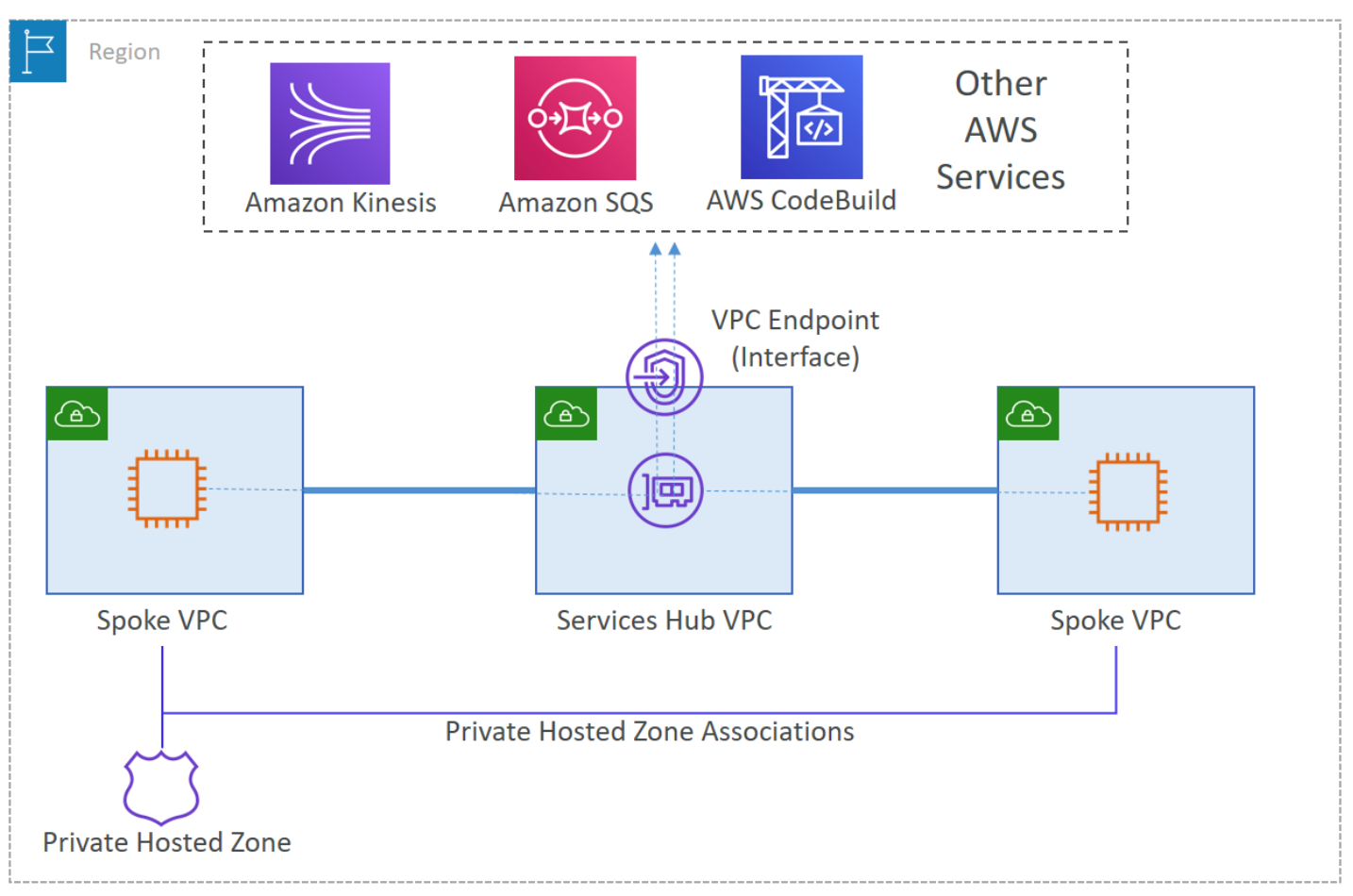

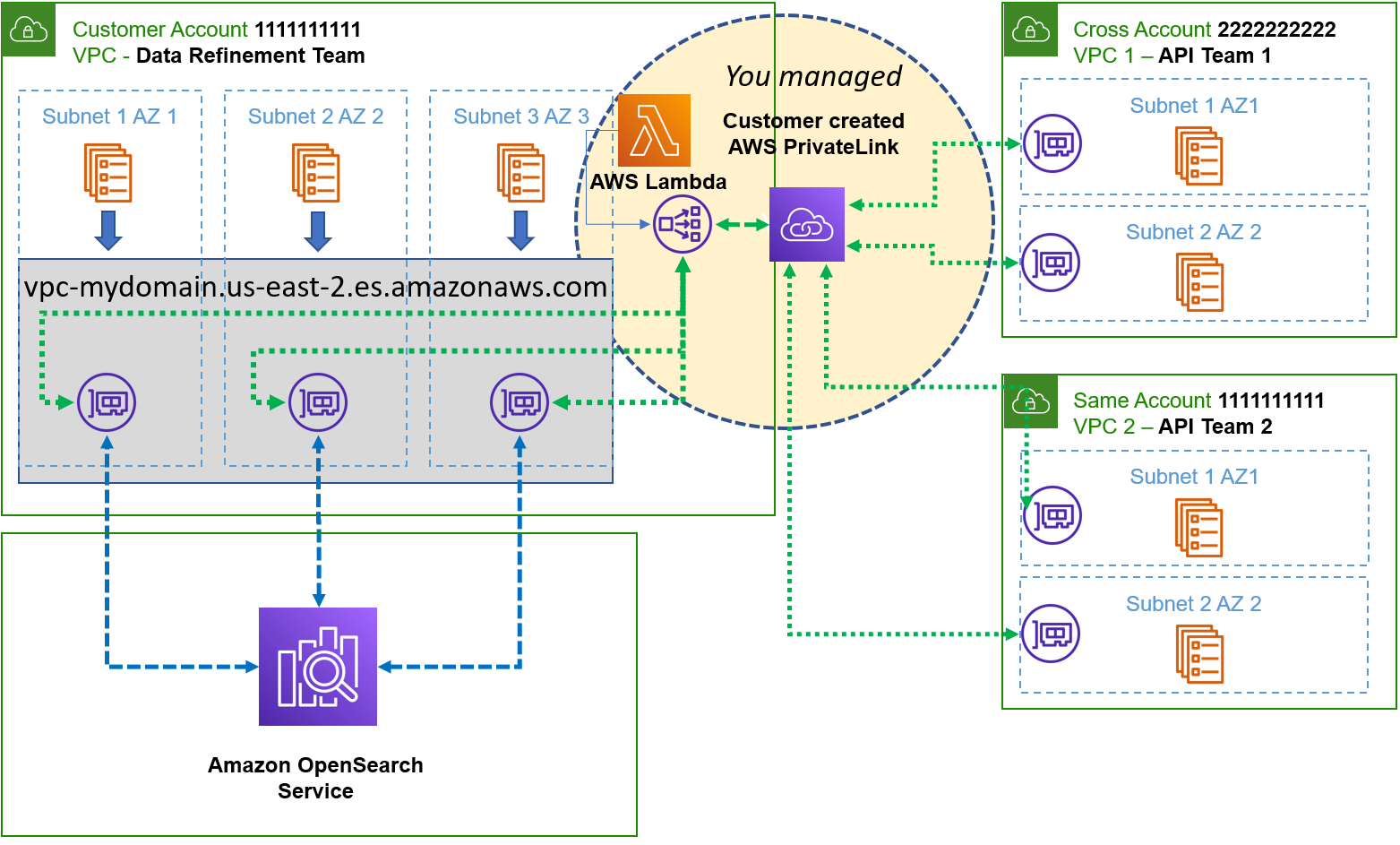

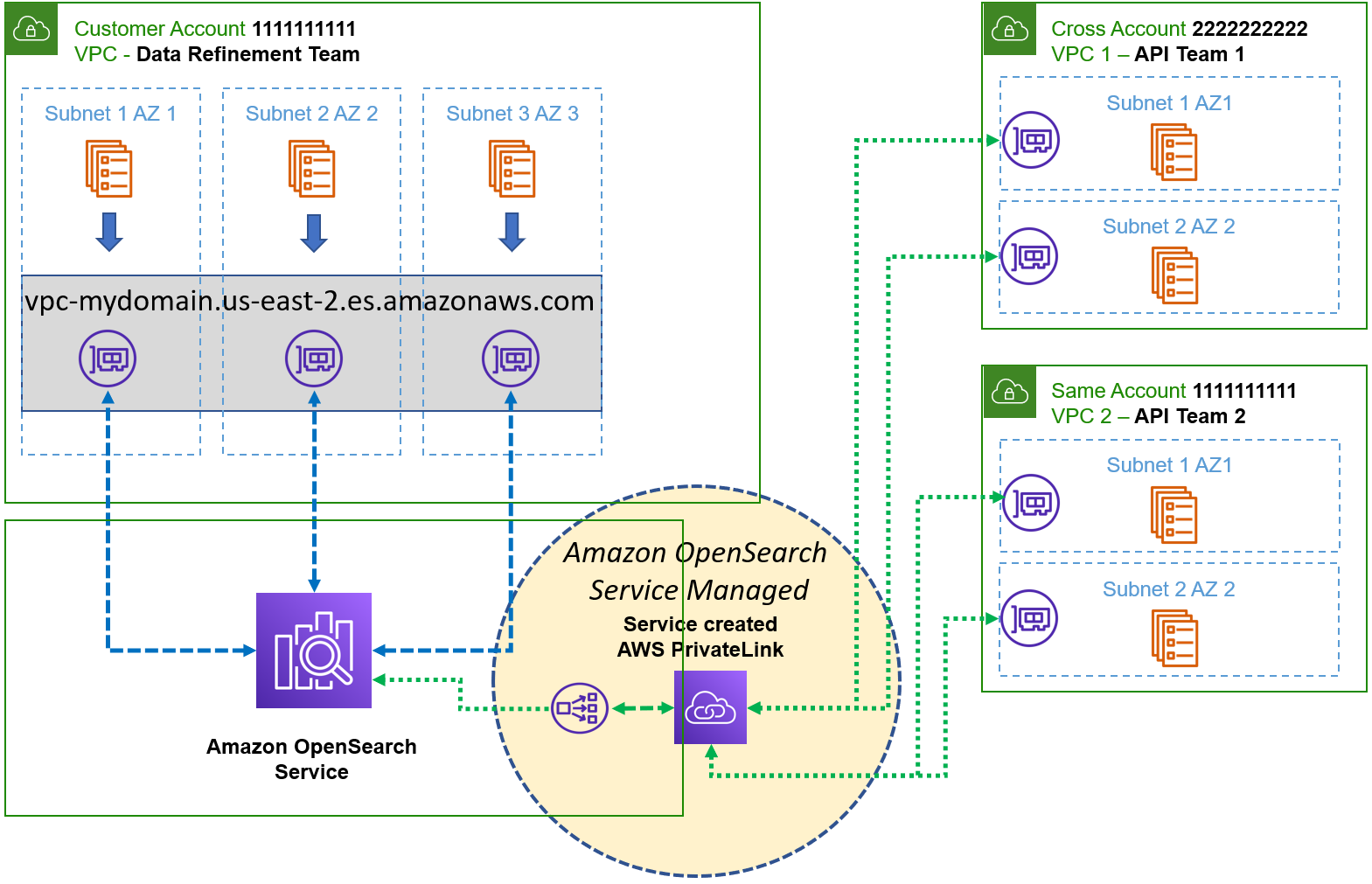

AWS PrivateLink now supports the AWS Management Console, which means that you can create Virtual Private Cloud (VPC) endpoints in your VPC for the console. You can then use DNS forwarding to conditionally route users’ browser traffic to the VPC endpoints from on-premises and define endpoint policies that allow or deny access to specific accounts, organizations, or organizational units (OUs). To privately reach the endpoints, you must have a hybrid network connection between on-premises and AWS over AWS Direct Connect or AWS Site-to-Site VPN.

When you conditionally forward DNS queries for the zone aws.amazon.com from on-premises to an Amazon Route 53 Resolver inbound endpoint within the VPC, Route 53 will prefer the private hosted zone for aws.amazon.com to resolve the queries. The private hosted zone makes it simple to centrally manage records for the console in the AWS US East (N. Virginia) Region (us-east-1) as well as other Regions.
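To make the forwarding decision concrete, here is a minimal Python sketch (Python 3.9 or later) of the choice an on-premises DNS server makes for each query: names at or below aws.amazon.com go to the Resolver inbound endpoint, and everything else keeps its normal path. The IP addresses and the names `pick_upstreams` and `in_zone` are hypothetical placeholders for illustration, not part of any AWS API.

```python
# Hypothetical addresses: replace with your Resolver inbound endpoint IPs
# and your existing upstream resolver.
INBOUND_ENDPOINT_IPS = ["10.0.1.10", "10.0.2.10"]
DEFAULT_RESOLVERS = ["192.0.2.53"]

# The zone that is conditionally forwarded to the inbound endpoint.
FORWARDED_ZONE = "aws.amazon.com"


def in_zone(qname: str, zone: str) -> bool:
    """Return True if qname is the zone apex or any name below it.

    Matching is label-aware: "evilaws.amazon.com" is NOT inside
    "aws.amazon.com", but "us-west-2.console.aws.amazon.com" is.
    """
    q = qname.rstrip(".").lower()
    z = zone.rstrip(".").lower()
    return q == z or q.endswith("." + z)


def pick_upstreams(qname: str) -> list[str]:
    """Choose where an on-premises DNS server sends a query for qname."""
    if in_zone(qname, FORWARDED_ZONE):
        # Resolved inside the VPC, where the private hosted zone applies.
        return INBOUND_ENDPOINT_IPS
    # Everything else keeps its normal resolution path.
    return DEFAULT_RESOLVERS
```

For example, `pick_upstreams("us-west-2.console.aws.amazon.com")` returns the inbound endpoint addresses. Service API endpoints such as ec2.us-east-1.amazonaws.com fall outside aws.amazon.com, so this forwarding rule does not cover them.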

Configure a VPC endpoint for the console

To configure VPC endpoints for the console, you must complete the following steps:

- Create interface VPC endpoints in a VPC in the US East (N. Virginia) Region for the console and sign-in services. Repeat for other desired Regions. You must create VPC endpoints in the US East (N. Virginia) Region because the default DNS name for the console resolves to this Region. Specify the accounts, organizations, or OUs that should be allowed or denied in the endpoint policies. For instructions on how to create interface VPC endpoints, see Access an AWS service using an interface VPC endpoint.

- Create a Route 53 Resolver inbound endpoint in a VPC and note the IP addresses for the elastic network interfaces of the endpoint. Forward DNS queries for the console from on-premises to these IP addresses. For instructions on how to configure Route 53 Resolver, see Getting started with Route 53 Resolver.

- Create a Route 53 private hosted zone with records for the console and sign-in subdomains. For the full list of records needed, see DNS configuration for AWS Management Console and AWS Sign-In. Then associate the private hosted zone with the same VPC that has the Resolver inbound endpoint. For instructions on how to create a private hosted zone, see Creating a private hosted zone.

- Conditionally forward DNS queries for aws.amazon.com to the IP addresses of the Resolver inbound endpoint.
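How you configure the final step above depends on your on-premises DNS software. As one illustration, assuming your resolvers run BIND 9, this Python sketch (Python 3.9 or later) renders a forward zone stanza for named.conf. The function name `bind_forward_zone` and the IP addresses are hypothetical; substitute the IP addresses you noted for your Resolver inbound endpoint.

```python
import ipaddress


def bind_forward_zone(zone: str, forwarders: list[str]) -> str:
    """Render a BIND 9 forward zone stanza for named.conf.

    "forward only" stops BIND from falling back to its own recursion if the
    forwarders do not answer, so console lookups are not silently resolved
    through the public path instead.
    """
    if not forwarders:
        raise ValueError("at least one forwarder IP address is required")
    # ip_address() raises ValueError on a malformed address, catching typos early.
    ips = [str(ipaddress.ip_address(ip)) for ip in forwarders]
    return "\n".join([
        f'zone "{zone}" {{',
        "    type forward;",
        "    forward only;",
        "    forwarders { " + "; ".join(ips) + "; };",
        "};",
    ])


# Hypothetical inbound endpoint IP addresses.
print(bind_forward_zone("aws.amazon.com", ["10.0.1.10", "10.0.2.10"]))
```

Choosing `forward only` rather than BIND's default `forward first` is a design choice: it fails closed if the inbound endpoint is unreachable. If you deploy a second inbound endpoint in another Region for resilience, you could add its IP addresses to the same forwarders list.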

How to access Regions other than US East (N. Virginia)

To access the console for another supported Region using AWS Management Console Private Access, complete the following steps:

- Create the console and sign-in VPC endpoints in a VPC in that Region.

- Create resource records for <region>.console.aws.amazon.com and <region>.signin.aws.amazon.com in the private hosted zone, with values that target the respective VPC endpoints in that Region. Replace <region> with the region code (for example, us-west-2).
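The per-Region records described above follow a fixed naming pattern, so they are easy to generate. This minimal Python sketch (Python 3.9 or later) builds only those two record names per Region and maps them to VPC endpoint DNS names; it is not the full record list (see DNS configuration for AWS Management Console and AWS Sign-In for that). The function names, the Region-code regex, and the endpoint DNS names are hypothetical placeholders.

```python
import re

ZONE = "aws.amazon.com"
# Loose shape check for Region codes such as us-west-2 or us-gov-west-1.
REGION_RE = re.compile(r"^[a-z]{2}(-[a-z]+)+-\d+$")


def regional_records(region: str, console_vpce_dns: str, signin_vpce_dns: str) -> dict[str, str]:
    """Return the two per-Region record names and the VPC endpoint DNS names they target."""
    if not REGION_RE.match(region):
        raise ValueError(f"unexpected Region code: {region!r}")
    return {
        f"{region}.console.{ZONE}": console_vpce_dns,
        f"{region}.signin.{ZONE}": signin_vpce_dns,
    }


def zone_plan(endpoints: dict[str, tuple[str, str]]) -> dict[str, str]:
    """Merge per-Region records into one plan for the single private hosted zone."""
    plan: dict[str, str] = {}
    for region in sorted(endpoints):
        console_dns, signin_dns = endpoints[region]
        plan.update(regional_records(region, console_dns, signin_dns))
    return plan


# Hypothetical VPC endpoint DNS names; use the values from your own endpoints.
plan = zone_plan({
    "us-west-2": ("vpce-EXAMPLE-console.us-west-2.vpce.amazonaws.com",
                  "vpce-EXAMPLE-signin.us-west-2.vpce.amazonaws.com"),
})
for name, target in plan.items():
    print(f"{name} -> {target}")
```

Keeping every Region in one plan mirrors the fact that all of these records live in the same private hosted zone for aws.amazon.com.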

For increased resiliency, you can also configure a second Resolver inbound endpoint in a Region other than US East (N. Virginia) (us-east-1), and associate the private hosted zone with that endpoint's VPC as well. On-premises DNS resolvers can then use both endpoints for resilient DNS resolution of the private hosted zone.

Automate deployment of AWS Management Console Private Access

I created an AWS CloudFormation template that you can use to deploy the required resources in the US East (N. Virginia) Region (us-east-1). To get the template, go to console-endpoint-use1.yaml. The CloudFormation stack deploys the required VPC endpoints, Route 53 Resolver inbound endpoint, and private hosted zone with required records.

Note: The default endpoint policy allows all accounts. For sample policies with conditions to restrict access, see Allow AWS Management Console use for expected accounts and organizations only (trusted identities).
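As a sketch of what a restrictive endpoint policy might look like, the following Python function builds a policy document that allows only listed account IDs or organization IDs, using the global IAM condition keys aws:PrincipalAccount and aws:PrincipalOrgId. Treat it as illustrative: the function name and ID-format checks are assumptions, and you should compare the output against the AWS sample policies referenced above before applying it to a VPC endpoint.

```python
import json
import re

ACCOUNT_RE = re.compile(r"^\d{12}$")
ORG_RE = re.compile(r"^o-[a-z0-9]{10,32}$")


def console_endpoint_policy(account_ids=(), org_ids=()) -> str:
    """Build an endpoint policy allowing only the listed accounts or organizations.

    Each list gets its own Allow statement. Separate statements are OR'ed,
    whereas multiple condition keys inside one statement would all have to
    match. Anything not allowed is implicitly denied.
    """
    for acct in account_ids:
        if not ACCOUNT_RE.match(acct):
            raise ValueError(f"not a 12-digit account ID: {acct!r}")
    for org in org_ids:
        if not ORG_RE.match(org):
            raise ValueError(f"not an organization ID: {org!r}")

    def allow(sid, key, values):
        return {
            "Sid": sid,
            "Effect": "Allow",
            "Principal": "*",
            "Action": "*",
            "Resource": "*",
            "Condition": {"StringEquals": {key: list(values)}},
        }

    statements = []
    if account_ids:
        statements.append(allow("AllowExpectedAccounts", "aws:PrincipalAccount", account_ids))
    if org_ids:
        statements.append(allow("AllowExpectedOrganizations", "aws:PrincipalOrgId", org_ids))
    if not statements:
        raise ValueError("specify at least one account ID or organization ID")
    return json.dumps({"Version": "2012-10-17", "Statement": statements}, indent=2)


# Placeholder IDs in the style of the AWS documentation.
print(console_endpoint_policy(account_ids=["111122223333"], org_ids=["o-exampleorgid"]))
```

Refusing to emit an empty policy is deliberate: an endpoint with no Allow statements would block all console sign-ins from the network.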

I also created a CloudFormation template that you can use to deploy the required resources in other Regions where private access to the console is required. To get the template, go to console-endpoint-non-use1.yaml.

Cost considerations

When you configure AWS Management Console Private Access, you will incur charges. You can use the following information to estimate these charges:

- PrivateLink pricing is based on the number of hours that the VPC endpoints remain provisioned. In the US East (N. Virginia) Region, this is $0.01 per VPC endpoint per Availability Zone per hour.

- Data processed through the VPC endpoints is charged at $0.01 per gigabyte (GB) in the US East (N. Virginia) Region.

- The Route 53 Resolver inbound endpoint is charged per IP (elastic network interface) per hour. In the US East (N. Virginia) Region, this is $0.125 per IP address per hour. See Route 53 pricing.

- DNS queries to the inbound endpoint are charged at $0.40 per million queries.

- The Route 53 hosted zone is charged at $0.50 per hosted zone per month. To allow testing, AWS won’t charge you for a hosted zone that you delete within 12 hours of creation.

Based on this pricing model, deploying AWS Management Console Private Access in the US East (N. Virginia) Region across two Availability Zones (console and sign-in VPC endpoints in each Availability Zone, a Resolver inbound endpoint with two IP addresses, and one private hosted zone) costs approximately $212.20 per month for the deployed resources. DNS query and data processing charges are additional, based on actual usage. You can apply the same pricing model to estimate the cost in additional supported Regions. Route 53 is a global service, so you only have to create the private hosted zone once, along with the resources in the US East (N. Virginia) Region.
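The $212.20 figure can be reproduced from the list prices above. This Python sketch assumes a 730-hour month, two VPC endpoints (console and sign-in) in two Availability Zones each, and a Resolver inbound endpoint with two IP addresses; those counts and the function names are assumptions chosen to match the estimate, and prices can change, so check current pricing pages before relying on the numbers.

```python
from decimal import Decimal, ROUND_HALF_UP

HOURS_PER_MONTH = 730  # assumption: a common monthly-hours convention

# US East (N. Virginia) prices quoted in this post.
ENDPOINT_AZ_HOUR = Decimal("0.01")      # per VPC endpoint per AZ per hour
RESOLVER_IP_HOUR = Decimal("0.125")     # per inbound endpoint IP per hour
HOSTED_ZONE_MONTH = Decimal("0.50")     # per hosted zone per month
PER_GB_PROCESSED = Decimal("0.01")      # per GB through the VPC endpoints
PER_MILLION_QUERIES = Decimal("0.40")   # per million inbound endpoint queries

CENT = Decimal("0.01")


def monthly_fixed_cost(endpoints=2, azs=2, resolver_ips=2, hosted_zones=1) -> Decimal:
    """Provisioned-resource cost for one month, before usage charges."""
    endpoint_cost = ENDPOINT_AZ_HOUR * endpoints * azs * HOURS_PER_MONTH
    resolver_cost = RESOLVER_IP_HOUR * resolver_ips * HOURS_PER_MONTH
    zone_cost = HOSTED_ZONE_MONTH * hosted_zones
    return (endpoint_cost + resolver_cost + zone_cost).quantize(CENT, rounding=ROUND_HALF_UP)


def monthly_usage_cost(gb_processed, dns_queries: int) -> Decimal:
    """Usage-based charges: data processing plus DNS queries."""
    data_cost = PER_GB_PROCESSED * Decimal(str(gb_processed))
    query_cost = PER_MILLION_QUERIES * Decimal(dns_queries) / Decimal(1_000_000)
    return (data_cost + query_cost).quantize(CENT, rounding=ROUND_HALF_UP)


print(monthly_fixed_cost())  # 29.20 endpoints + 182.50 resolver + 0.50 zone
```

Most of the fixed cost comes from the Resolver inbound endpoint IP addresses ($182.50), not the VPC endpoints ($29.20). For an additional Region that reuses the existing private hosted zone, you could call `monthly_fixed_cost(hosted_zones=0)`.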

Limitations and considerations

Before you get started with AWS Management Console Private Access, make sure to review the following limitations and considerations:

- For a list of supported Regions and services, see Supported AWS Regions, service consoles, and features.

- You can use this feature to restrict access to specific accounts from customer networks by forwarding DNS queries to the VPC endpoints. This feature doesn’t prevent users from accessing the console directly from the internet by using the console’s public endpoints from devices that aren’t on the corporate network.

- The following subdomains aren’t currently supported by this feature and won’t be accessible through private access:

  - docs.aws.amazon.com

  - health.aws.amazon.com

  - status.aws.amazon.com

- After a user authenticates and accesses the console through private access, navigating to an individual service console, such as the Amazon Elastic Compute Cloud (Amazon EC2) console, requires network connectivity to that service’s API endpoint, such as ec2.amazonaws.com. The console needs this connectivity to make API calls such as ec2:DescribeInstances to display resource details.
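To check the service API connectivity described in the last item, you could test TCP reachability from a client on the corporate network. This minimal Python sketch assumes the common regional endpoint form service.region.amazonaws.com, which most but not all services use (some global services, such as IAM, differ); the function names are hypothetical, and a successful TCP connection shows only network reachability, not that API calls will be authorized.

```python
import socket


def regional_api_hostname(service: str, region: str) -> str:
    """Common regional endpoint form, for example ec2.us-east-1.amazonaws.com."""
    return f"{service}.{region}.amazonaws.com"


def can_reach(host: str, port: int = 443, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    DNS failures (socket.gaierror), refusals, and timeouts are all OSError
    subclasses, so any of them yields False.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    for svc in ("ec2", "s3"):
        host = regional_api_hostname(svc, "us-east-1")
        print(host, "reachable" if can_reach(host) else "NOT reachable")
```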

Conclusion

In this blog post, I outlined how AWS Management Console Private Access works, how you can use it to restrict which AWS accounts can be accessed from on-premises networks, and how to configure it for multiple Regions. I also provided CloudFormation templates that you can use to automate the configuration of this feature. Finally, I shared information on costs and some limitations that you should consider before you configure private access to the console.

For more information about how to set up and test AWS Management Console Private Access and reference architectures, see Try AWS Management Console Private Access. For the latest CloudFormation templates, see the aws-management-console-private-access-automation GitHub repository.

If you have feedback about this post, submit comments in the Comments section below. If you have questions about this post, start a new thread at re:Post.

Want more AWS Security news? Follow us on Twitter.


Lotfi Mouhib is a Senior Solutions Architect working for the Public Sector team with Amazon Web Services. He helps public sector customers across EMEA realize their ideas, build new services, and innovate for citizens. In his spare time, Lotfi enjoys cycling and running.
