Post Syndicated from David Boyne original https://aws.amazon.com/blogs/compute/sending-and-receiving-cloudevents-with-amazon-eventbridge/

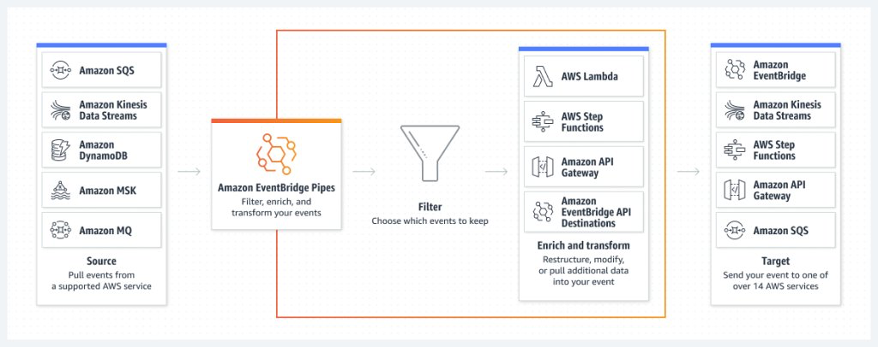

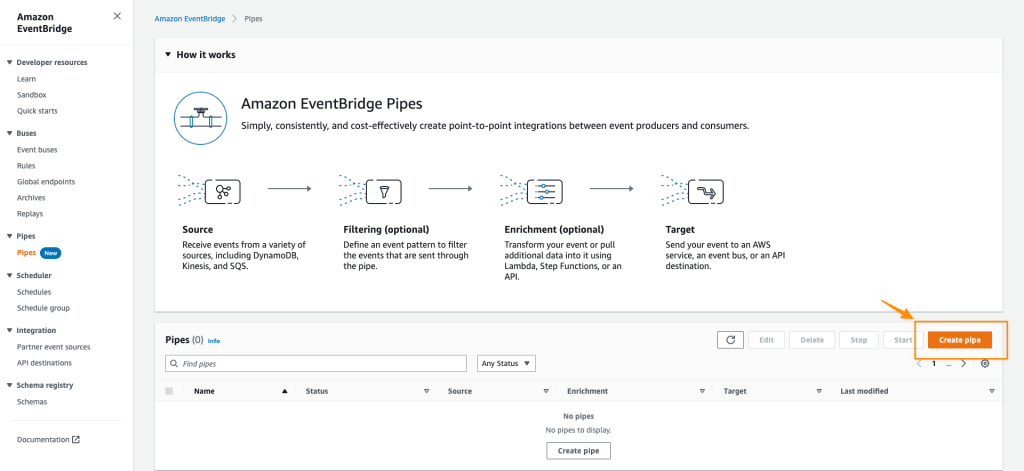

Amazon EventBridge helps developers build event-driven architectures (EDA) by connecting loosely coupled publishers and consumers using event routing, filtering, and transformation. CloudEvents is an open-source specification for describing event data in a common way. Developers can publish CloudEvents directly to EventBridge, filter and route them, and use input transformers and API destinations to send CloudEvents to downstream AWS services and third-party APIs.

Overview

Event design is an important aspect of any event-driven architecture, yet developers often overlook it when building their systems. This can lead to unwanted side effects such as exposed implementation details, a lack of standards, and version incompatibilities.

Without event standards, it can be difficult to integrate events or streams of messages between systems, brokers, and organizations. Each system has to understand the event structure or rely on custom-built solutions for versioning or validation.

CloudEvents is a Cloud Native Computing Foundation (CNCF) specification for describing event data in a common format, providing interoperability across services, platforms, and systems. Because CloudEvents is a CNCF graduated project, many third-party brokers and systems have adopted the specification.

Using CloudEvents as a standard format to describe events makes integration easier, lets you use open-source tooling to help build event-driven architectures, and helps future-proof your integrations. EventBridge can route and filter CloudEvents based on common metadata, without needing to understand the business logic within the event itself.

CloudEvents supports two modes, structured mode and binary mode, and a range of protocol bindings including HTTP, MQTT, AMQP, and Kafka. When publishing events to an EventBridge bus, you can structure events as CloudEvents and route them to downstream consumers. You can also use input transformers to transform any event into the CloudEvents format. Events can be forwarded to public APIs using EventBridge API destinations, which support both structured-mode and binary-mode encodings, enhancing interoperability with external systems.

Standardizing events using Amazon EventBridge

When publishing events to an EventBridge bus, EventBridge uses its own event envelope and represents events as JSON objects. EventBridge requires that you define top-level fields such as detail-type and source. You can use any JSON object as the payload in the detail field.
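As a minimal sketch, the following Python helper assembles one entry for the PutEvents API. Source, DetailType, Detail, and EventBusName are fields of a PutEvents request entry, and Detail is passed as a JSON-encoded string. The helper name `build_put_events_entry` and its validation are hypothetical, not part of any SDK.

```python
import json
from typing import Any


def build_put_events_entry(source: str, detail_type: str, detail: dict[str, Any],
                           event_bus_name: str = "default") -> dict[str, str]:
    """Build one PutEvents request entry (hypothetical helper)."""
    # The publisher supplies Source, DetailType, and Detail; EventBridge adds
    # envelope fields such as id, account, and region itself.
    if not source or not detail_type:
        raise ValueError("source and detail_type are required")
    return {
        "EventBusName": event_bus_name,
        "Source": source,
        "DetailType": detail_type,
        # Detail is sent as a JSON-encoded string, not as a nested object.
        "Detail": json.dumps(detail),
    }


order_placed = build_put_events_entry(
    "myapp.orders-service",
    "OrderPlaced",
    {"data": {"order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
              "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
              "order_total": "120.00"}},
)
```

With the AWS SDK for Python installed and credentials configured, the entry could be published with `boto3.client("events").put_events(Entries=[order_placed])`.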

This example shows an OrderPlaced event from the orders-service that does not follow any event standard. The data within the event contains the order_id, customer_id, and order_total.

```json
{
  "version": "0",
  "id": "dbc1c73a-c51d-0c0e-ca61-ab9278974c57",
  "account": "1234567890",
  "time": "2023-05-23T11:38:46Z",
  "region": "us-east-1",
  "detail-type": "OrderPlaced",
  "source": "myapp.orders-service",
  "resources": [],
  "detail": {
    "data": {
      "order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
      "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
      "order_total": "120.00"
    }
  }
}
```

Publishers may also add a metadata field alongside the data field within detail to define a set of standards for their events.

```json
{
  "version": "0",
  "id": "dbc1c73a-c51d-0c0e-ca61-ab9278974c58",
  "account": "1234567890",
  "time": "2023-05-23T12:38:46Z",
  "region": "us-east-1",
  "detail-type": "OrderPlaced",
  "source": "myapp.orders-service",
  "resources": [],
  "detail": {
    "metadata": {
      "idempotency_key": "29d2b068-f9c7-42a0-91e3-5ba515de5dbe",
      "correlation_id": "dddd9340-135a-c8c6-95c2-41fb8f492222",
      "domain": "ORDERS",
      "time": "1707908605"
    },
    "data": {
      "order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
      "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
      "order_total": "120.00"
    }
  }
}
```

This additional information helps downstream consumers, improves debugging, and can be used to manage idempotency. While this approach offers practical benefits, it reinvents solutions that the CloudEvents specification already provides.

Publishing CloudEvents using Amazon EventBridge

When publishing events to EventBridge, you can use CloudEvents structured mode. In a structured-mode message, the entire event (attributes and data) is encoded in the message body according to a specific event format. In a binary-mode message, the event data is stored in the message body and the event attributes are carried as message metadata, such as HTTP headers.

CloudEvents defines a small set of required attributes (id, source, specversion, and type) and offers flexibility through optional attributes and extensions. It also helps with idempotency: producers must ensure that the combination of id and source uniquely identifies each distinct event, so downstream consumers can use that pair as an idempotency key.
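The following Python sketch shows both ideas: it checks that the four required attributes are present and uses the (source, id) pair as an idempotency key. The names `missing_attributes` and `IdempotentConsumer` are hypothetical, and the in-memory set is an assumption standing in for durable storage such as a database table with conditional writes.

```python
from typing import Any

# Attributes the CloudEvents 1.0 specification marks as required.
REQUIRED_ATTRIBUTES = ("id", "source", "specversion", "type")


def missing_attributes(event: dict[str, Any]) -> list[str]:
    """Return the required CloudEvents attributes that are absent or empty."""
    return [name for name in REQUIRED_ATTRIBUTES if not event.get(name)]


class IdempotentConsumer:
    """Process each CloudEvent at most once, keyed on (source, id)."""

    def __init__(self) -> None:
        self._seen: set[tuple[str, str]] = set()  # stand-in for a durable store
        self.processed: list[dict[str, Any]] = []

    def handle(self, event: dict[str, Any]) -> bool:
        missing = missing_attributes(event)
        if missing:
            raise ValueError(f"not a valid CloudEvent, missing: {missing}")
        key = (event["source"], event["id"])
        if key in self._seen:
            return False  # duplicate delivery: skip side effects
        self._seen.add(key)
        self.processed.append(event)
        return True


consumer = IdempotentConsumer()
order_placed = {"specversion": "1.0", "id": "bba4379f-b764-4d90-9fb2-9f572b2b0b61",
                "source": "myapp.orders-service", "type": "OrderPlaced",
                "data": {"order_id": "c172a984-3ae5-43dc-8c3f-be080141845a"}}
first = consumer.handle(order_placed)   # True: first delivery is processed
second = consumer.handle(order_placed)  # False: duplicate delivery is skipped
```

Keying on the pair rather than on id alone matters because two different sources may legitimately reuse the same id.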

The following example shows the OrderPlaced event with CloudEvents attributes in the detail field:

```json
{
  "version": "0",
  "id": "dbc1c73a-c51d-0c0e-ca61-ab9278974c58",
  "account": "1234567890",
  "time": "2023-05-23T12:38:46Z",
  "region": "us-east-1",
  "detail-type": "OrderPlaced",
  "source": "myapp.orders-service",
  "resources": [],
  "detail": {
    "specversion": "1.0",
    "id": "bba4379f-b764-4d90-9fb2-9f572b2b0b61",
    "source": "myapp.orders-service",
    "type": "OrderPlaced",
    "data": {
      "order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
      "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
      "order_total": "120.00"
    },
    "time": "2024-01-01T17:31:00Z",
    "dataschema": "https://us-west-2.console.aws.amazon.com/events/home?region=us-west-2#/registries/discovered-schemas/schemas/myapp.orders-service%40OrderPlaced",
    "correlationid": "dddd9340-135a-c8c6-95c2-41fb8f492222",
    "domain": "ORDERS"
  }
}
```

By incorporating the required attributes, the OrderPlaced event is now CloudEvents compliant. The event also contains optional and extension attributes for additional information: dataschema is an optional attribute, while correlationid and domain are extensions. Optional attributes such as dataschema give brokers and consumers a URI that identifies the schema of the published event. This example event references the schema in the EventBridge schema registry, so downstream consumers can fetch the schema to validate the payload.
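A minimal Python sketch of publishing such an event might build the CloudEvent first and then place the whole event in Detail. It assumes the convention shown in the example above, where the CloudEvents type and source are mirrored into the envelope's DetailType and Source so that rules can match on them. The helpers `make_cloudevent` and `to_put_events_entry` are hypothetical; extension names passed as keyword arguments should follow the specification's lowercase alphanumeric naming.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Any


def make_cloudevent(source: str, event_type: str, data: dict[str, Any],
                    **extensions: str) -> dict[str, Any]:
    """Create a structured-mode CloudEvent as a dict (hypothetical helper)."""
    event: dict[str, Any] = {
        "specversion": "1.0",
        "id": str(uuid.uuid4()),  # source + id must be unique per event
        "source": source,
        "type": event_type,
        # RFC 3339 timestamp in UTC, for example 2024-01-01T17:31:00Z
        "time": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "data": data,
    }
    clash = event.keys() & extensions.keys()
    if clash:
        raise ValueError(f"extensions may not override attributes: {sorted(clash)}")
    event.update(extensions)  # e.g. correlationid, domain
    return event


def to_put_events_entry(event: dict[str, Any], event_bus_name: str = "default") -> dict[str, str]:
    """Put the whole CloudEvent in Detail, mirroring source and type in the envelope."""
    return {
        "EventBusName": event_bus_name,
        "Source": event["source"],
        "DetailType": event["type"],
        "Detail": json.dumps(event),
    }


cloudevent = make_cloudevent(
    "myapp.orders-service",
    "OrderPlaced",
    {"order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
     "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
     "order_total": "120.00"},
    correlationid="dddd9340-135a-c8c6-95c2-41fb8f492222",
    domain="ORDERS",
)
entry = to_put_events_entry(cloudevent)
```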

Mapping existing events into CloudEvents using input transformers

When you define a target in EventBridge, input transformations allow you to modify the event before it reaches its destination. Input transformers are configured per target, allowing you to convert events when your downstream consumer requires the CloudEvents format and you want to avoid duplicating information.

Input transformers allow you to map EventBridge fields, such as id, region, detail-type, and source, into corresponding CloudEvents attributes.
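To make the field correspondence concrete, this Python sketch reproduces the mapping locally for the fields used in this section's example (id, source, detail-type, time, and detail). It is only an illustration under that assumption; it does not call EventBridge and is not how EventBridge implements input transformers. The function name `eventbridge_to_cloudevent` is hypothetical.

```python
from typing import Any

# EventBridge envelope field -> CloudEvents attribute.
FIELD_MAP = {
    "id": "id",
    "source": "source",
    "detail-type": "type",
    "time": "time",
}


def eventbridge_to_cloudevent(eb_event: dict[str, Any]) -> dict[str, Any]:
    """Local illustration of the mapping an input transformer performs."""
    cloudevent: dict[str, Any] = {"specversion": "1.0"}
    for eb_field, ce_attribute in FIELD_MAP.items():
        cloudevent[ce_attribute] = eb_event[eb_field]
    # The business payload in detail becomes the CloudEvents data.
    cloudevent["data"] = eb_event["detail"]
    return cloudevent
```

Envelope fields that have no counterpart here, such as account and region, are simply dropped by this sketch.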

This example shows how to transform any EventBridge event into CloudEvents format using input transformers, so the target receives the required structure.

```json
{
  "version": "0",
  "id": "dbc1c73a-c51d-0c0e-ca61-ab9278974c58",
  "account": "1234567890",
  "time": "2024-01-23T12:38:46Z",
  "region": "us-east-1",
  "detail-type": "OrderPlaced",
  "source": "myapp.orders-service",
  "resources": [],
  "detail": {
    "order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
    "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
    "order_total": "120.00"
  }
}
```

Using the following input path map and input template, EventBridge transforms the event into the CloudEvents format for downstream consumers.

Input path for CloudEvents:

```json
{
  "id": "$.id",
  "source": "$.source",
  "type": "$.detail-type",
  "time": "$.time",
  "data": "$.detail"
}
```

Input template for CloudEvents:

```
{
  "specversion": "1.0",
  "id": "<id>",
  "source": "<source>",
  "type": "<type>",
  "time": "<time>",
  "data": <data>
}
```

This example shows the event payload that downstream targets receive, mapped to the CloudEvents specification.

{

"specversion": "1.0",

"id": "dbc1c73a-c51d-0c0e-ca61-ab9278974c58",

"source": "myapp.orders-service",

"type": "OrderPlaced",

"time": "2024-01-23T12:38:46Z",

"data": {

"order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",

"customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",

"order_total": "120.00"

}

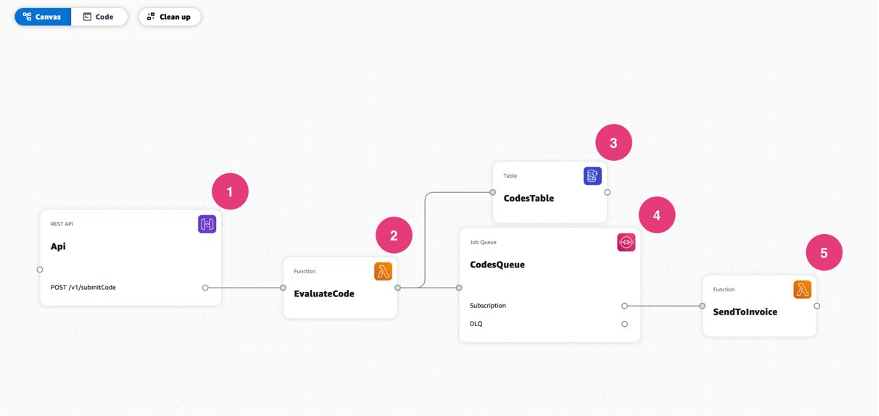

}For more information on using input transformers with CloudEvents, see this pattern on Serverless Land.
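As a hedged sketch of wiring this up programmatically, the Python snippet below expresses the input path map and template from this section as the InputTransformer of a PutTargets target entry (the InputPathsMap and InputTemplate fields). The helper `cloudevents_target` is hypothetical, and the target ID and ARN are placeholders you would replace.

```python
from typing import Any

# Input path map and template from this section, as PutTargets expects them.
INPUT_PATHS_MAP = {
    "id": "$.id",
    "source": "$.source",
    "type": "$.detail-type",
    "time": "$.time",
    "data": "$.detail",
}

# <data> is left unquoted so the detail object is inserted as JSON.
INPUT_TEMPLATE = (
    '{"specversion": "1.0", "id": "<id>", "source": "<source>", '
    '"type": "<type>", "time": "<time>", "data": <data>}'
)


def cloudevents_target(target_id: str, target_arn: str) -> dict[str, Any]:
    """Build one entry for the Targets list of PutTargets (hypothetical helper)."""
    return {
        "Id": target_id,
        "Arn": target_arn,
        "InputTransformer": {
            "InputPathsMap": INPUT_PATHS_MAP,
            "InputTemplate": INPUT_TEMPLATE,
        },
    }
```

For example, `boto3.client("events").put_targets(Rule="orders-to-cloudevents", Targets=[cloudevents_target("ce-target", queue_arn)])`, where the rule name and `queue_arn` are placeholders for your own resources.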

Transforming events into CloudEvents using API destinations

EventBridge API destinations let you invoke HTTP endpoints when a rule matches, so you can integrate with third-party systems through public APIs. You can route events to APIs that accept the CloudEvents format by using input transformations and custom HTTP headers to convert EventBridge events to CloudEvents. API destinations now support custom Content-Type headers, which lets you send structured or binary CloudEvents to downstream consumers.

Sending binary CloudEvents using API destinations

When sending binary CloudEvents over HTTP, you must follow the HTTP protocol binding and set the necessary CloudEvents headers. These headers tell the downstream consumer that the incoming request carries a CloudEvent. The body of the request contains the event data.

Each CloudEvents attribute, including extension attributes, is carried in an HTTP header prefixed with ce-. You can find the full mapping in the HTTP protocol binding documentation.
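The header mapping can be sketched in Python as follows. This is a simplified illustration, not a full implementation of the binding: it assumes ASCII attribute values (the binding requires percent-encoding for some characters, which is skipped here) and JSON data. The function name `to_binary_http` is hypothetical.

```python
import json
from typing import Any


def to_binary_http(event: dict[str, Any]) -> tuple[dict[str, str], bytes]:
    """Split a CloudEvent into HTTP binary-mode headers and a body."""
    headers: dict[str, str] = {}
    for name, value in event.items():
        if name in ("data", "datacontenttype"):
            continue  # carried by the body and Content-Type instead
        # Every other attribute, extensions included, gets the ce- prefix.
        headers[f"ce-{name}"] = str(value)
    headers["Content-Type"] = event.get("datacontenttype", "application/json; charset=utf-8")
    body = json.dumps(event["data"]).encode("utf-8") if "data" in event else b""
    return headers, body
```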

This example shows the headers for a binary event:

```http
POST /order HTTP/1.1
Host: webhook.example.com
ce-specversion: 1.0
ce-type: OrderPlaced
ce-source: myapp.orders-service
ce-id: bba4379f-b764-4d90-9fb2-9f572b2b0b61
ce-time: 2024-01-01T17:31:00Z
ce-dataschema: https://us-west-2.console.aws.amazon.com/events/home?region=us-west-2#/registries/discovered-schemas/schemas/myapp.orders-service%40OrderPlaced
ce-correlationid: dddd9340-135a-c8c6-95c2-41fb8f492222
ce-domain: ORDERS
Content-Type: application/json; charset=utf-8
```

This example shows the body for a binary event:

```json
{
  "order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
  "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
  "order_total": "120.00"
}
```
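On the receiving side, a consumer rebuilds the event from the ce- headers and the body. The Python sketch below assumes headers arrive as a simple name-to-value mapping and that a JSON Content-Type means a JSON body; the function name `from_binary_http` is hypothetical. Header names are lowercased first because HTTP header names are case-insensitive.

```python
import json
from typing import Any, Mapping


def from_binary_http(headers: Mapping[str, str], body: bytes) -> dict[str, Any]:
    """Rebuild a CloudEvent from a binary-mode HTTP request."""
    lowered = {name.lower(): value for name, value in headers.items()}
    # Strip the ce- prefix to recover attribute names; ignore other headers.
    event: dict[str, Any] = {
        name[len("ce-"):]: value
        for name, value in lowered.items()
        if name.startswith("ce-")
    }
    for required in ("specversion", "id", "source", "type"):
        if required not in event:
            raise ValueError(f"missing ce-{required} header")
    content_type = lowered.get("content-type", "")
    if content_type:
        event["datacontenttype"] = content_type
    if body:
        # Parse JSON bodies; keep anything else as raw bytes.
        event["data"] = json.loads(body) if "json" in content_type else body
    return event
```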

For more information about sending binary CloudEvents with API destinations, see this pattern on Serverless Land.
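As a hedged sketch of the EventBridge side, the Python snippet below builds a PutTargets entry for an API destination that sends only the detail as the HTTP body (InputPath) and sets the ce- headers through the target's HttpParameters.HeaderParameters, where values may be static strings or JSONPath expressions resolved against the matched event. Supplying the headers on the target rather than on the connection is an assumption of this sketch, and the helper `binary_cloudevents_target` and all ARNs are hypothetical placeholders.

```python
from typing import Any

# Static values and JSONPath expressions for binary-mode CloudEvents headers.
BINARY_HEADERS = {
    "ce-specversion": "1.0",
    "ce-id": "$.id",
    "ce-source": "$.source",
    "ce-type": "$.detail-type",
    "ce-time": "$.time",
    "Content-Type": "application/json; charset=utf-8",
}


def binary_cloudevents_target(target_id: str, api_destination_arn: str,
                              role_arn: str) -> dict[str, Any]:
    """Build a PutTargets entry that sends the detail as the request body."""
    return {
        "Id": target_id,
        "Arn": api_destination_arn,
        "RoleArn": role_arn,  # role allowed to invoke the API destination
        "InputPath": "$.detail",  # body carries only the event data
        "HttpParameters": {"HeaderParameters": BINARY_HEADERS},
    }
```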

Sending structured CloudEvents using API destinations

To send structured-mode CloudEvents, set the Content-Type header to application/cloudevents+json; charset=UTF-8. This tells the API consumer that the request body is a complete event that adheres to the CloudEvents specification.

```http
POST /order HTTP/1.1
Host: webhook.example.com
Content-Type: application/cloudevents+json; charset=utf-8

{
  "specversion": "1.0",
  "id": "bba4379f-b764-4d90-9fb2-9f572b2b0b61",
  "source": "myapp.orders-service",
  "type": "OrderPlaced",
  "data": {
    "order_id": "c172a984-3ae5-43dc-8c3f-be080141845a",
    "customer_id": "dda98122-b511-4aaf-9465-77ca4a115ee6",
    "order_total": "120.00"
  },
  "time": "2024-01-01T17:31:00Z",
  "dataschema": "https://us-west-2.console.aws.amazon.com/events/home?region=us-west-2#/registries/discovered-schemas/schemas/myapp.orders-service%40OrderPlaced",
  "correlationid": "dddd9340-135a-c8c6-95c2-41fb8f492222",
  "domain": "ORDERS"
}
```
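A minimal Python sketch of both sides of structured mode: the producer serializes the whole event into the body with the CloudEvents media type, and a consumer can tell structured from binary requests by checking that media type. The function names are hypothetical, and the sketch assumes a JSON event format.

```python
import json
from typing import Any, Mapping

STRUCTURED_CONTENT_TYPE = "application/cloudevents+json; charset=utf-8"


def to_structured_http(event: dict[str, Any]) -> tuple[dict[str, str], bytes]:
    """Encode the whole CloudEvent (attributes and data) in the request body."""
    headers = {"Content-Type": STRUCTURED_CONTENT_TYPE}
    return headers, json.dumps(event).encode("utf-8")


def is_structured(headers: Mapping[str, str]) -> bool:
    """Structured mode is signalled by the application/cloudevents+json media type."""
    for name, value in headers.items():
        if name.lower() == "content-type":
            # Ignore parameters such as charset and compare case-insensitively.
            media_type = value.split(";", 1)[0].strip().lower()
            return media_type == "application/cloudevents+json"
    return False
```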

Conclusion

Carefully designing events plays an important role when building event-driven architectures to integrate producers and consumers effectively. The open-source CloudEvents specification helps developers to standardize integration processes, simplifying interactions between internal systems and external partners.

EventBridge allows you to use a flexible payload structure within an event’s detail property to standardize events. You can publish structured CloudEvents directly onto an event bus in the detail field and use payload transformations to allow downstream consumers to receive events in the CloudEvents format.

EventBridge simplifies integration with third-party systems using API destinations. Using the new custom content-type headers with input transformers to modify the event structure, you can send structured or binary CloudEvents to integrate with public APIs.

For more serverless learning resources, visit Serverless Land.

Florian Mair is a Senior Solutions Architect and data streaming expert at AWS. He is a technologist that helps customers in Germany succeed and innovate by solving business challenges using AWS Cloud services. Besides working as a Solutions Architect, Florian is a passionate mountaineer, and has climbed some of the highest mountains across Europe.

Florian Mair is a Senior Solutions Architect and data streaming expert at AWS. He is a technologist that helps customers in Germany succeed and innovate by solving business challenges using AWS Cloud services. Besides working as a Solutions Architect, Florian is a passionate mountaineer, and has climbed some of the highest mountains across Europe. Benjamin Meyer is a Senior Solutions Architect at AWS, focused on Games businesses in Germany to solve business challenges by using AWS Cloud services. Benjamin has been an avid technologist for 7 years, and when he’s not helping customers, he can be found developing mobile apps, building electronics, or tending to his cacti.

Benjamin Meyer is a Senior Solutions Architect at AWS, focused on Games businesses in Germany to solve business challenges by using AWS Cloud services. Benjamin has been an avid technologist for 7 years, and when he’s not helping customers, he can be found developing mobile apps, building electronics, or tending to his cacti.

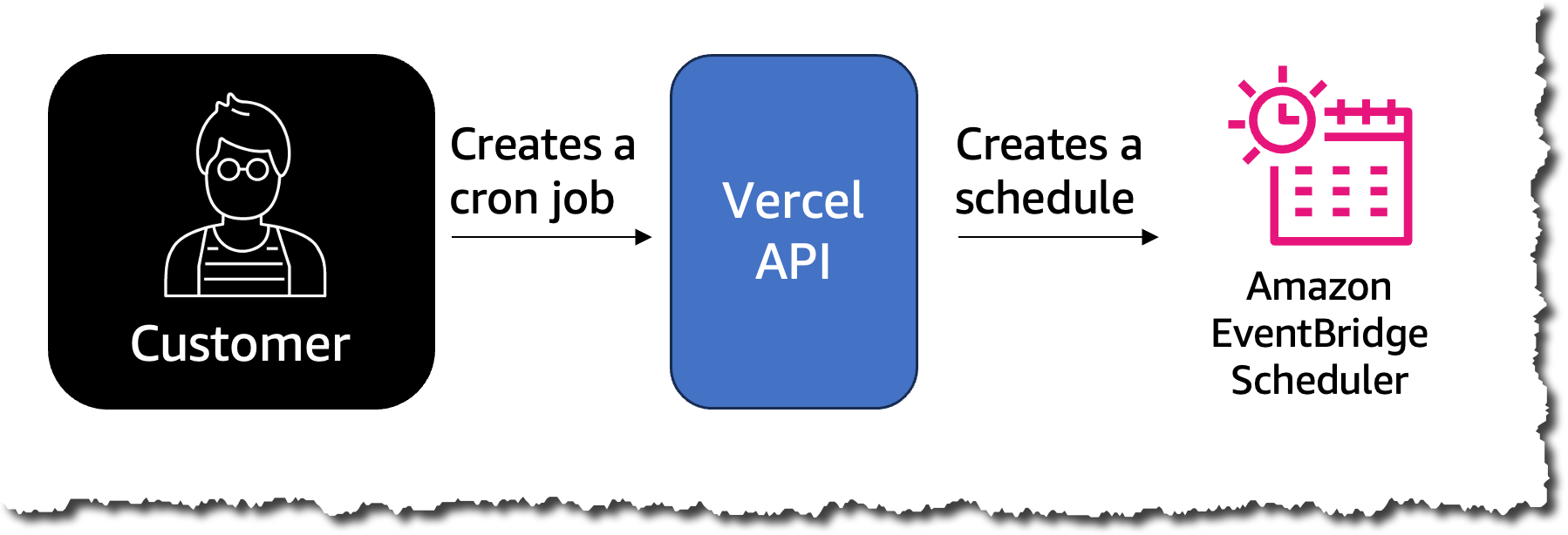

Amazon EventBridge Scheduler | Schedule tasks over +200 targets!

Amazon EventBridge Scheduler | Schedule tasks over +200 targets!

Ninad Phatak is a Principal Data Architect at Amazon Development Center India. He specializes in data engineering and datawarehousing technologies and helps customers architect their analytics use cases and platforms on AWS.

Ninad Phatak is a Principal Data Architect at Amazon Development Center India. He specializes in data engineering and datawarehousing technologies and helps customers architect their analytics use cases and platforms on AWS. Vinay Kondapi is Head of product for Amazon AppFlow. He specializes in Application and data integration with SaaS products at AWS.

Vinay Kondapi is Head of product for Amazon AppFlow. He specializes in Application and data integration with SaaS products at AWS.

Gopalakrishnan Ramaswamy is a Solutions Architect at AWS based out of India with extensive background in database, analytics, and machine learning. He helps customers of all sizes solve complex challenges by providing solutions using AWS products and services. Outside of work, he likes the outdoors, physical activities and spending time with friends and family.

Gopalakrishnan Ramaswamy is a Solutions Architect at AWS based out of India with extensive background in database, analytics, and machine learning. He helps customers of all sizes solve complex challenges by providing solutions using AWS products and services. Outside of work, he likes the outdoors, physical activities and spending time with friends and family.