Post Syndicated from Julian Wood original https://aws.amazon.com/blogs/compute/building-aws-lambda-governance-and-guardrails/

When building serverless applications using AWS Lambda, there are a number of considerations regarding security, governance, and compliance. This post highlights how Lambda, as a serverless service, simplifies cloud security and compliance so you can concentrate on your business logic. It covers controls that you can implement for your Lambda workloads to ensure that your applications conform to your organizational requirements.

The Shared Responsibility Model

The AWS Shared Responsibility Model distinguishes between what AWS is responsible for and what customers are responsible for with cloud workloads. AWS is responsible for “Security of the Cloud” where AWS protects the infrastructure that runs all the services offered in the AWS Cloud. Customers are responsible for “Security in the Cloud”, managing and securing their workloads. When building traditional applications, you take on responsibility for many infrastructure services, including operating systems and network configuration.

Traditional application shared responsibility

One major benefit when building serverless applications is shifting more responsibility to AWS so you can concentrate on your business applications. AWS handles managing and patching the underlying servers, operating systems, and networking as part of running the services.

Serverless application shared responsibility

For Lambda, AWS manages the application platform where your code runs, which includes patching and updating the managed language runtimes. This reduces the attack surface while making cloud security simpler. You are responsible for the security of your code and AWS Identity and Access Management (IAM) to the Lambda service and within your function.

Lambda is SOC, HIPAA, PCI, and ISO-compliant. For more information, see Compliance validation for AWS Lambda and the latest Lambda certification and compliance readiness services in scope.

Lambda isolation

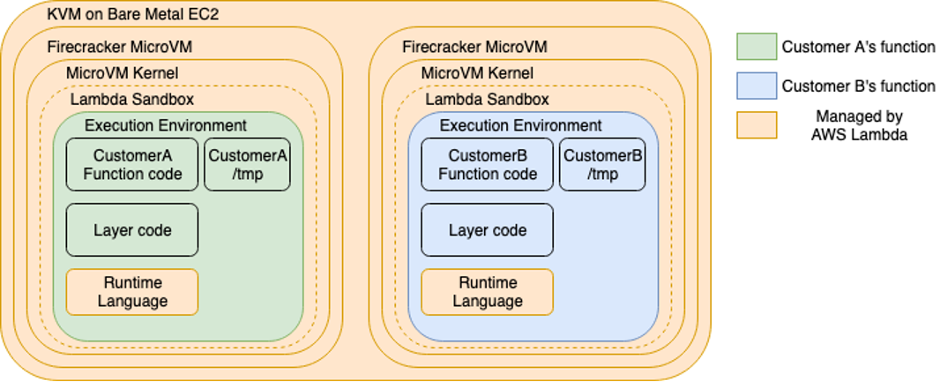

Lambda functions run in separate isolated AWS accounts that are dedicated to the Lambda service. Lambda invokes your code in a secure and isolated runtime environment within the Lambda service account. A runtime environment is a collection of resources running in a dedicated hardware-virtualized Micro Virtual Machines (MVM) on a Lambda worker node.

Lambda workers are bare metalEC2 Nitro instances, which are managed and patched by the Lambda service team. They have a maximum lease lifetime of 14 hours to keep the underlying infrastructure secure and fresh. MVMs are created by Firecracker, an open source virtual machine monitor (VMM) that uses Linux’s Kernel-based Virtual Machine (KVM) to create and manage MVMs securely at scale.

MVMs maintain a strong separation between runtime environments at the virtual machine hardware level, which increases security. Runtime environments are never reused across functions, function versions, or AWS accounts.

Isolation model for AWS Lambda workers

Network security

Lambda functions always run inside secure Amazon Virtual Private Cloud (Amazon VPCs) owned by the Lambda service. This gives the Lambda function access to AWS services and the public internet. There is no direct network inbound access to Lambda workers, runtime environments, or Lambda functions. All inbound access to a Lambda function only comes via the Lambda Invoke API, which sends the event object to the function handler.

You can configure a Lambda function to connect to private subnets in a VPC in your account if necessary, which you can control with IAM condition keys . The Lambda function still runs inside the Lambda service VPC but sends all network traffic through your VPC. Function outbound traffic comes from your own network address space.

AWS Lambda service VPC with VPC-to-VPC NAT to customer VPC

To give your VPC-connected function access to the internet, route outbound traffic to a NAT gateway in a public subnet. Connecting a function to a public subnet doesn’t give it internet access or a public IP address, as the function is still running in the Lambda service VPC and then routing network traffic into your VPC.

All internal AWS traffic uses the AWS Global Backbone rather than traversing the internet. You do not need to connect your functions to a VPC to avoid connectivity to AWS services over the internet. VPC connected functions allow you to control and audit outbound network access.

You can use security groups to control outbound traffic for VPC-connected functions and network ACLs to block access to CIDR IP ranges or ports. VPC endpoints allow you to enable private communications with supported AWS services without internet access.

You can use VPC Flow Logs to audit traffic going to and from network interfaces in your VPC.

Runtime environment re-use

Each runtime environment processes a single request at a time. After Lambda finishes processing the request, the runtime environment is ready to process an additional request for the same function version. For more information on how Lambda manages runtime environments, see Understanding AWS Lambda scaling and throughput.

Data can persist in the local temporary filesystem path, in globally scoped variables, and in environment variables across subsequent invocations of the same function version. Ensure that you only handle sensitive information within individual invocations of the function by processing it in the function handler, or using local variables. Do not re-use files in the local temporary filesystem to process unencrypted sensitive data. Do not put sensitive or confidential information into Lambda environment variables, tags, or other freeform fields such as Name fields.

For more Lambda security information, see the Lambda security whitepaper.

Multiple accounts

AWS recommends using multiple accounts to isolate your resources because they provide natural boundaries for security, access, and billing. Use AWS Organizations to manage and govern individual member accounts centrally. You can use AWS Control Tower to automate many of the account build steps and apply managed guardrails to govern your environment. These include preventative guardrails to limit actions and detective guardrails to detect and alert on non-compliance resources for remediation.

Lambda access controls

Lambda permissions define what a Lambda function can do, and who or what can invoke the function. Consider the following areas when applying access controls to your Lambda functions to ensure least privilege:

Execution role

Lambda functions have permission to access other AWS resources using execution roles. This is an AWS principal that the Lambda service assumes which grants permissions using identity policy statements assigned to the role. The Lambda service uses this role to fetch and cache temporary security credentials, which are then available as environment variables during a function’s invocation. It may re-use them across different runtime environments that use the same execution role.

Ensure that each function has its own unique role with the minimum set of permissions..

Identity/user policies

IAM identity policies are attached to IAM users, groups, or roles. These policies allow users or callers to perform operations on Lambda functions. You can restrict who can create functions, or control what functions particular users can manage.

Resource policies

Resource policies define what identities have fine-grained inbound access to managed services. For example, you can restrict which Lambda function versions can add events to a specific Amazon EventBridge event bus. You can use resource-based policies on Lambda resources to control what AWS IAM identities and event sources can invoke a specific version or alias of your function. You also use a resource-based policy to allow an AWS service to invoke your function on your behalf. To see which services support resource-based policies, see “AWS services that work with IAM”.

Attribute-based access control (ABAC)

With attribute-based access control (ABAC), you can use tags to control access to your Lambda functions. With ABAC, you can scale an access control strategy by setting granular permissions with tags without requiring permissions updates for every new user or resource as your organization scales. You can also use tag policies with AWS Organizations to standardize tags across resources.

Permissions boundaries

Permissions boundaries are a way to delegate permission management safely. The boundary places a limit on the maximum permissions that a policy can grant. For example, you can use boundary permissions to limit the scope of the execution role to allow only read access to databases. A builder with permission to manage a function or with write access to the applications code repository cannot escalate the permissions beyond the boundary to allow write access.

Service control policies

When using AWS Organizations, you can use Service control policies (SCPs) to manage permissions in your organization. These provide guardrails for what actions IAM users and roles within the organization root or OUs can do. For more information, see the AWS Organizations documentation, which includes example service control policies.

Code signing

As you are responsible for the code that runs in your Lambda functions, you can ensure that only trusted code runs by using code signing with the AWS Signer service. AWS Signer digitally signs your code packages and Lambda validates the code package before accepting the deployment, which can be part of your automated software deployment process.

Auditing Lambda configuration, permissions and access

You should audit access and permissions regularly to ensure that your workloads are secure. Use the IAM console to view when an IAM role was last used.

IAM last used

IAM access advisor

Use IAM access advisor on the Access Advisor tab in the IAM console to review when was the last time an AWS service was used from a specific IAM user or role. You can use this to remove IAM policies and access from your IAM roles.

IAM access advisor

AWS CloudTrail

AWS CloudTrail helps you monitor, log, and retain account activity to provide a complete event history of actions across your AWS infrastructure. You can monitor Lambda API actions to ensure that only appropriate actions are made against your Lambda functions. These include CreateFunction, DeleteFunction, CreateEventSourceMapping, AddPermission, UpdateEventSourceMapping, UpdateFunctionConfiguration, and UpdateFunctionCode.

AWS CloudTrail

IAM Access Analyzer

You can validate policies using IAM Access Analyzer, which provides over 100 policy checks with security warnings for overly permissive policies. To learn more about policy checks provided by IAM Access Analyzer, see “IAM Access Analyzer policy validation”.

You can also generate IAM policies based on access activity from CloudTrail logs, which contain the permissions that the role used in your specified date range.

IAM Access Analyzer

AWS Config

AWS Config provides you with a record of the configuration history of your AWS resources. AWS Config monitors the resource configuration and includes rules to alert when they fall into a non-compliant state.

For Lambda, you can track and alert on changes to your function configuration, along with the IAM execution role. This allows you to gather Lambda function lifecycle data for potential audit and compliance requirements. For more information, see the Lambda Operators Guide.

AWS Config includes Lambda managed config rules such as lambda-concurrency-check, lambda-dlq-check, lambda-function-public-access-prohibited, lambda-function-settings-check, and lambda-inside-vpc. You can also write your own rules.

There are a number of other AWS services to help with security compliance.

- AWS Audit Manager: Collect evidence to help you audit your use of cloud services.

- Amazon GuardDuty: Detect unexpected and potentially unauthorized activity in your AWS environment.

- Amazon Macie: Evaluates your content to identify business-critical or potentially confidential data.

- AWS Trusted Advisor: Identify opportunities to improve stability, save money, or help close security gaps.

- AWS Security Hub: Provides security checks and recommendations across your organization.

Conclusion

Lambda makes cloud security simpler by taking on more responsibility using the AWS Shared Responsibility Model. Lambda implements strict workload security at scale to isolate your code and prevent network intrusion to your functions. This post provides guidance on assessing and implementing best practices and tools for Lambda to improve your security, governance, and compliance controls. These include permissions, access controls, multiple accounts, and code security. Learn how to audit your function permissions, configuration, and access to ensure that your applications conform to your organizational requirements.

For more serverless learning resources, visit Serverless Land.